Are Private Platforms Truly Viewpoint Neutral?

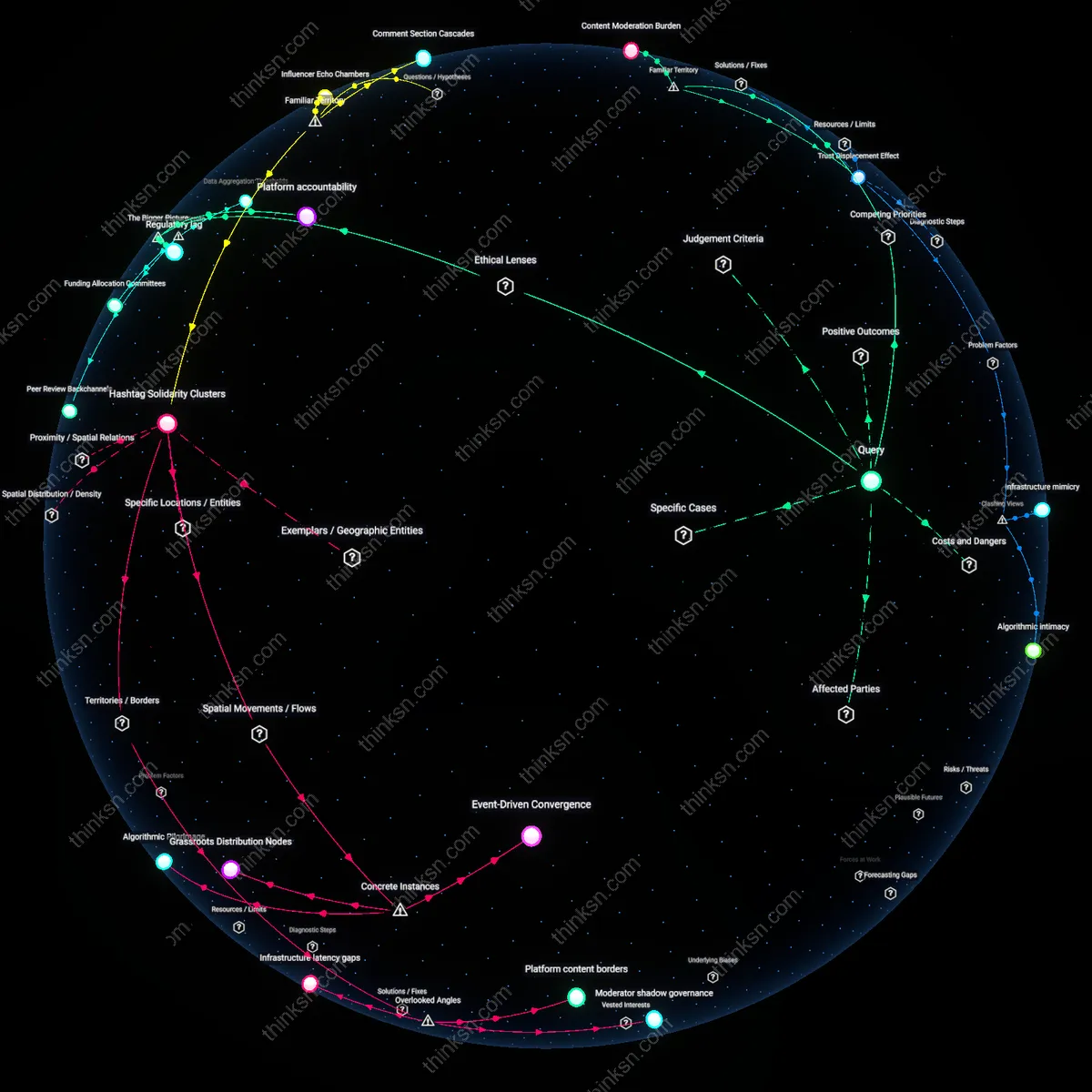

Analysis reveals 4 key thematic connections.

Key Findings

Compliance Feedback Loop

Platforms now enforce policies in ways that appear more neutral, because post-2018 transparency reporting created competitive incentives to demonstrate fairness across parties. Before Germany’s NetzDG law and the U.S. congressional hearings, platforms avoided publishing granular data; afterward, companies like YouTube and TikTok began releasing detailed takedown statistics by political category to preempt accusations of bias, and internal teams learned over time to optimize for patterns they could defend. The underappreciated result of this regulatory-turned-market mechanism is that accountability metrics themselves became a steering force, producing not true neutrality but a performative equilibrium shaped by public proof rather than private intent.

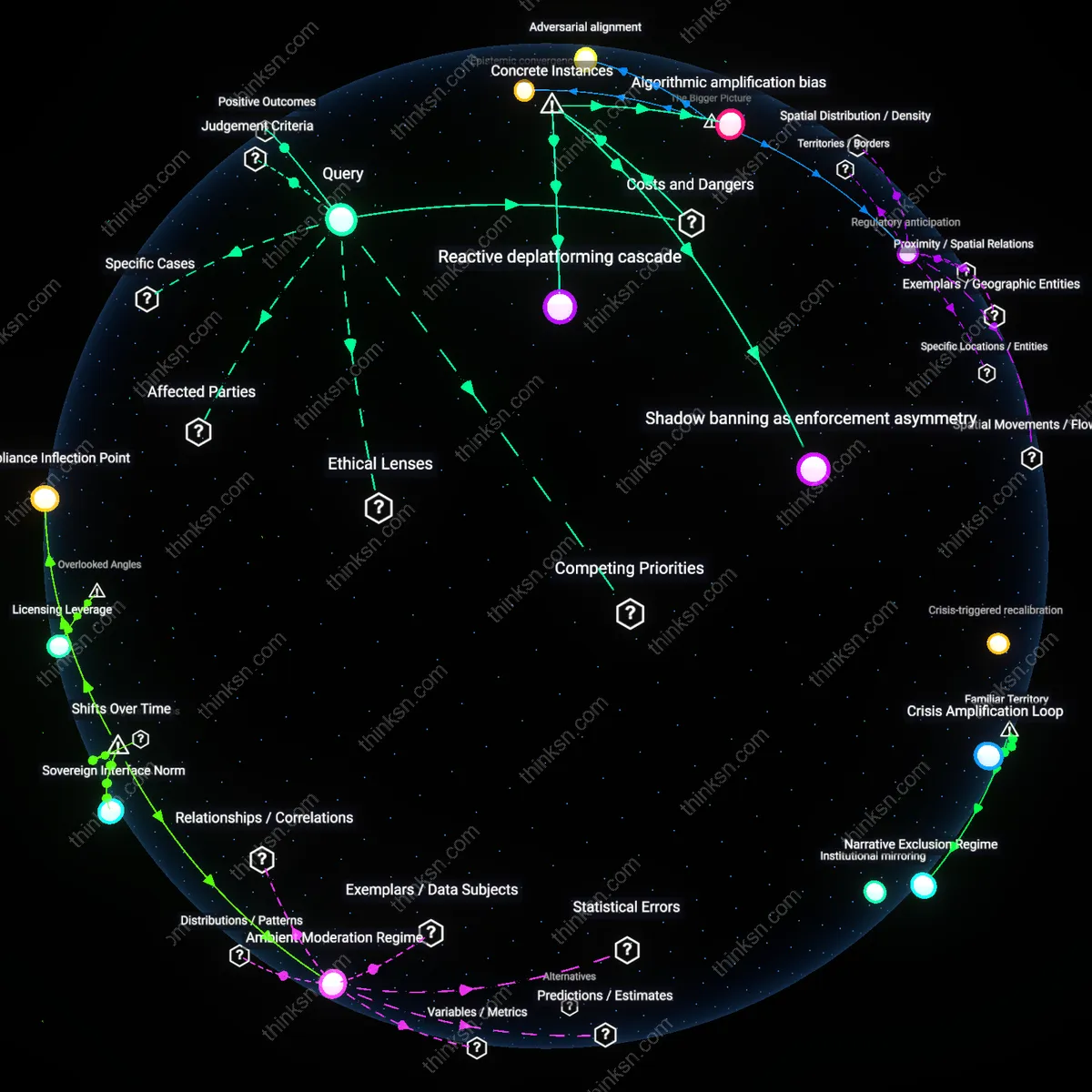

Algorithmic Amplification Bias

During the 2020 U.S. election, Facebook's content algorithms elevated right-wing misinformation more often than left-wing equivalents, even when both violated the same policies. The cause was structural: engagement-based ranking rewarded outrage-driven content, and right-leaning actors produced such content at higher volumes during the period observed. Neutral enforcement rules therefore do not neutralize outcome imbalances when platform architecture favors specific emotional and rhetorical styles, a danger that compliance with formal policy parity obscures.
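The gap between rule parity and outcome parity can be illustrated with a toy ranking model. The sketch below is not any platform's actual system: the `Post` fields, the group labels "A" and "B", and every engagement score are synthetic values chosen for illustration. It applies one identical removal rule to both groups, then ranks the survivors by predicted engagement alone.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Post:
    post_id: str
    group: str                   # hypothetical label: "A" or "B"
    violates_policy: bool
    predicted_engagement: float  # synthetic score, not real data


def enforce(posts):
    """Viewpoint-neutral rule: drop every violating post, whatever its group."""
    return [p for p in posts if not p.violates_policy]


def rank_by_engagement(posts, k):
    """Return the k posts with the highest predicted engagement."""
    return sorted(posts, key=lambda p: p.predicted_engagement, reverse=True)[:k]


def group_share(posts, group):
    """Fraction of posts that belong to the given group."""
    if not posts:
        return 0.0
    return sum(p.group == group for p in posts) / len(posts)


# Synthetic feed: each group has four posts and exactly one violation,
# but group A's posts are assumed to draw more engagement.
POSTS = [
    Post("a1", "A", False, 0.90),
    Post("a2", "A", False, 0.80),
    Post("a3", "A", True, 0.95),
    Post("a4", "A", False, 0.70),
    Post("b1", "B", False, 0.60),
    Post("b2", "B", True, 0.85),
    Post("b3", "B", False, 0.40),
    Post("b4", "B", False, 0.30),
]

eligible = enforce(POSTS)
top = rank_by_engagement(eligible, k=4)

print("share of A after enforcement:", group_share(eligible, "A"))
print("share of A in top-4 feed:", group_share(top, "A"))
```

Under these assumed numbers, enforcement removes one post from each group and leaves the eligible pool evenly split, yet three of the four top-ranked slots go to group A. The skew comes entirely from the ranking objective, not from the removal rule.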

Shadow Banning as Enforcement Asymmetry

In late 2022, the Twitter Files revealed that internal teams had applied 'visibility filtering' to limit the reach of specific conservative accounts, including journalists and politicians, in some cases allegedly in coordination with external actors. Analogous progressive networks exhibiting similar content patterns reportedly faced no such restrictions. Viewpoint-neutral enforcement is structurally compromised when opaque moderation tools enable covert suppression that evades audit, creating asymmetric political chilling effects under the guise of technical administration.

Reactive Deplatforming Cascade

The coordinated removal of accounts like InfoWars from multiple platforms in 2018 came only after sustained media pressure and advertiser backlash, whereas comparably extreme far-left actors were not subjected to equivalent enforcement during the same period. This suggests that the timing and scope of enforcement are driven by public relations risk rather than consistent ideological calibration. The systemic danger is that content moderation becomes a reputational compliance mechanism, incentivizing reactive overreach against politically vulnerable targets while shielded actors accumulate unchecked influence.

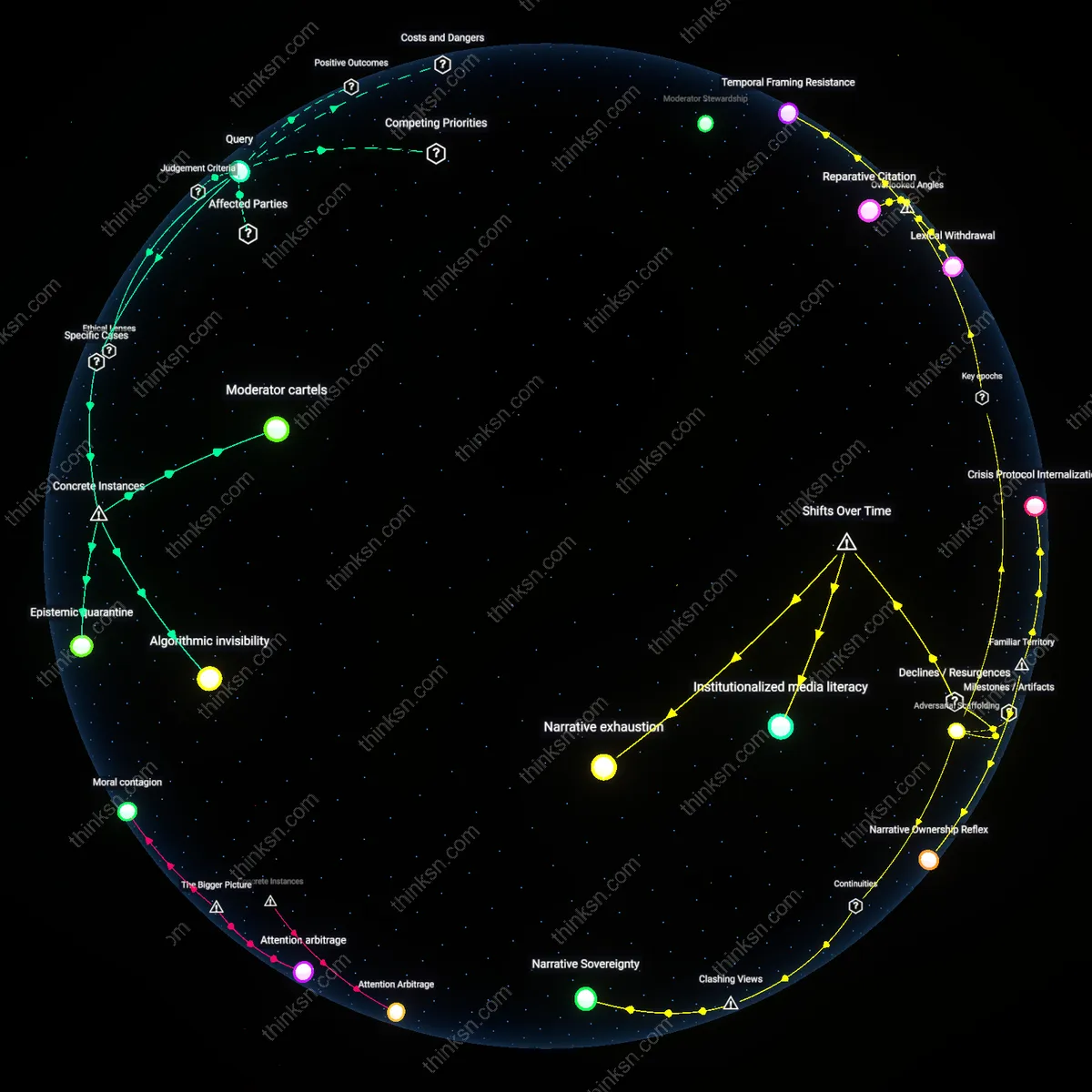

Deeper Analysis

Where are the political categories in platform takedown reports actually coming from, and how do they map onto real-world beliefs?

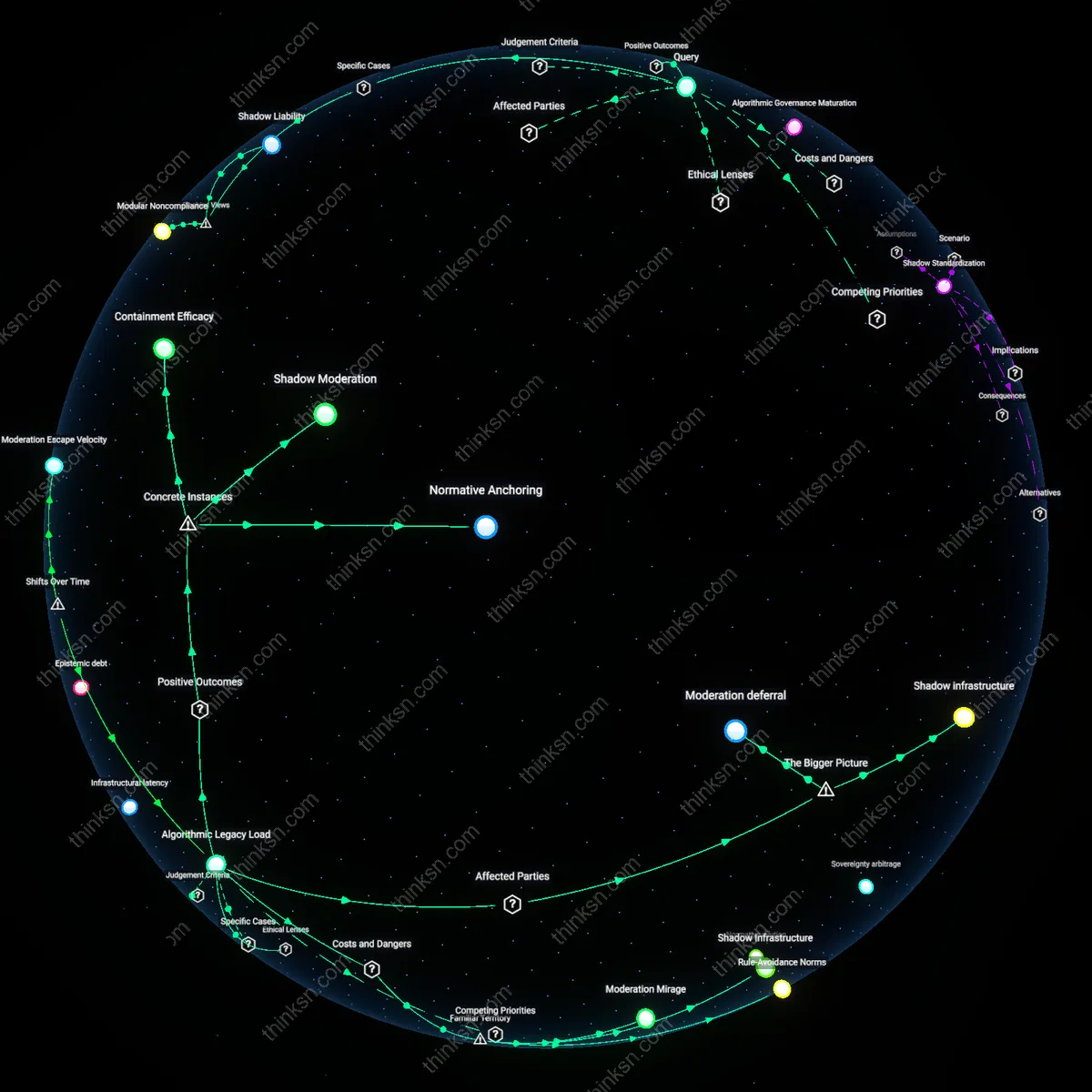

Policy Lagerstätte

Twitter’s 2020 takedown reports used categories like 'state-affiliated misinformation' that originated from internal threat assessments co-developed with U.S. Cyber Command analysts during election integrity task forces. This fusion of military-intelligence classification systems with platform governance reveals how national security doctrines sediment into corporate content policies, making ostensibly neutral categories artifacts of intergovernmental threat frameworks rather than ideologically neutral taxonomies. The non-obvious outcome is that takedown categories often pre-stage geopolitical alignments as technical metadata.

Category Arbitrage

Facebook’s classification of Hindu nationalist posts as 'hate speech' in India during the 2020 Delhi riots, while it simultaneously accepted similar rhetoric from Western far-right actors as 'free political discourse,' exposed how regional moderation teams apply politically asymmetric thresholds calibrated to avoid government penalties. The categories are not ideologically consistent; they emerge from cumulative legal threats and market compliance trade-offs, making enforcement a spatialized risk calculation rather than a value-based system. Platform labels thus often conceal jurisdictional bargaining more than coherent belief-mapping.

Echo Taxonomy

YouTube’s 2021 ban on anti-vaccination content under its 'medical misinformation' rules, after years of permitting such videos (it had demonetized them in 2019), followed internal whistleblower testimony linking recommendation algorithms to real-world measles outbreaks in Oregon and Samoa. The category emerged retroactively from forensic externalities, meaning user harm documented by epidemiologists and lawmakers, not from ideological classification systems. Platform-defined beliefs are often crystallized only after societal damage forces retroactive labeling, which makes the categories epiphenomenal to crisis.

Regulatory Anticipation

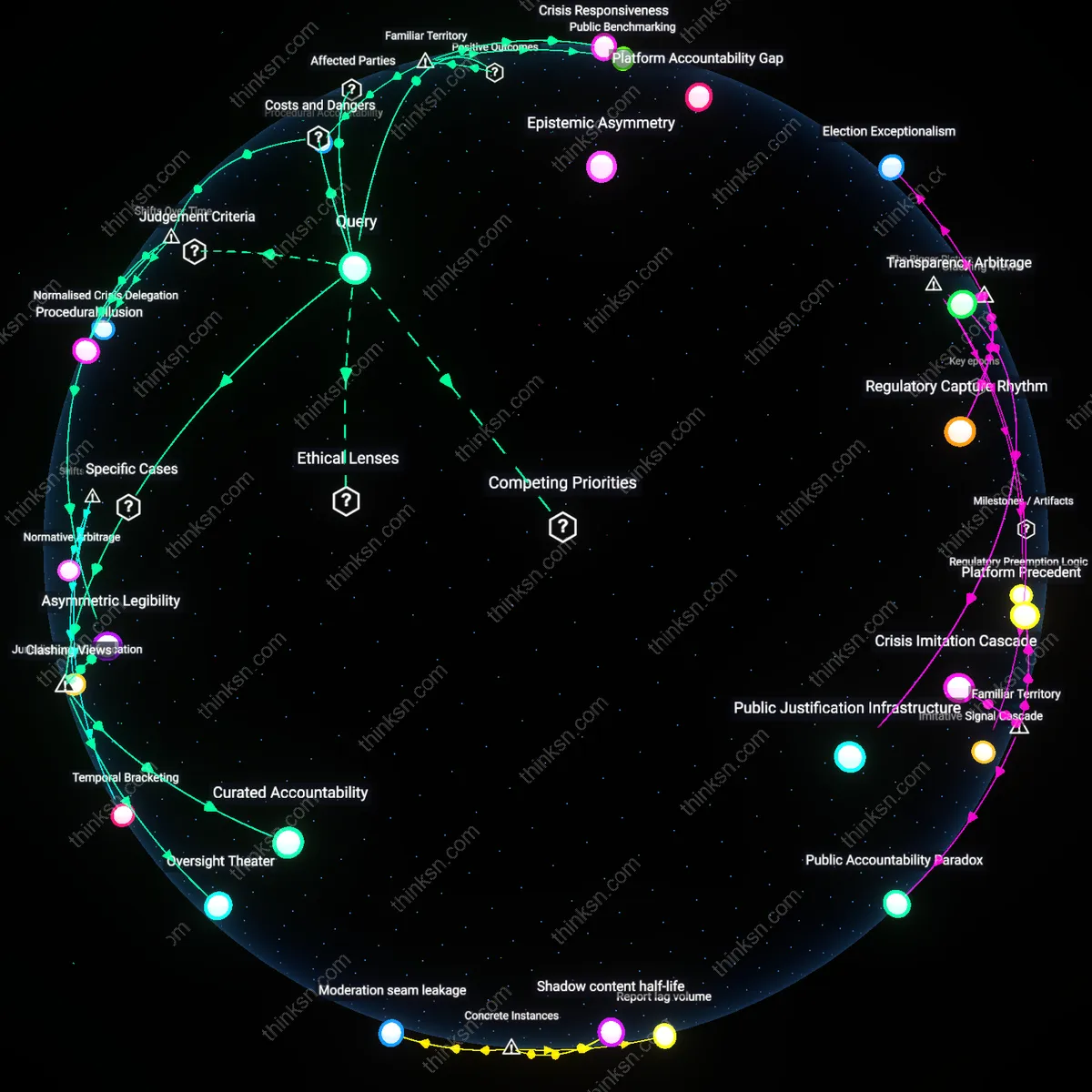

Platform takedown categories emerge from anticipated legal obligations rather than ideological classification, as companies like Meta and Twitter align internal enforcement frameworks with emerging legislative standards such as the EU’s Digital Services Act. This mechanism involves compliance teams translating vague regulatory signals into operational taxonomies, often prior to formal rule enactment, thereby preempting liability by institutionalizing categories that mirror jurisdictional risk profiles. The non-obvious consequence is that political speech boundaries are effectively codified by corporate risk assessment units, not content policy experts, making de facto regulation a function of predictive legal compliance.

Epistemic Convergence

Political categories in takedown reports reflect a narrowing consensus among third-party fact-checkers, academic advisors, and platform trust organizations that jointly define what constitutes harmful political content, particularly around elections and civic integrity. These actors—such as the Election Integrity Partnership or Global Network on Extremism and Technology—produce shared threat models that platforms then operationalize into enforcement labels, creating a feedback loop where academic research and NGO advocacy become embedded in algorithmic governance. This convergence is significant because it reveals how decentralized expert networks, not state actors or internal platform teams, serve as the primary epistemic source for categorizing political harm.

Adversarial Alignment

The structure of political categories in takedown reports is shaped by repeated interaction with coordinated inauthentic behavior: state-linked actors and extremist groups exploit platform features in predictable ways, forcing platforms to categorize political speech by behavioral patterns rather than ideological content. Signal-sharing programs like Facebook’s ThreatExchange and teams like YouTube’s anti-abuse unit develop typologies grounded in forensic signals, such as bot-like sharing, IP clustering, or cross-platform synchronization, rather than in manifestos or rhetoric, which leads to categories defined by adversarial tactics. Platform political taxonomy is therefore less about belief systems and more about operational security logics adapted from counterintelligence practices.
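A behavior-based category of this kind can be sketched without any reference to what a post says. The example below is a deliberately simplified illustration, not a description of any platform's detector: the `ShareEvent` fields, the 60-second window, and the three-account threshold are all assumptions chosen for the demo. It flags groups of distinct accounts that share the same URL from the same IP address within a short time window, one crude proxy for the IP clustering and synchronized sharing mentioned above.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ShareEvent:
    account: str
    ip: str
    url: str
    timestamp: int  # seconds since an arbitrary start


def coordinated_clusters(events, window_seconds=60, min_accounts=3):
    """Flag (ip, url) pairs shared by enough distinct accounts in one window.

    No field describing ideology or content is consulted; the category is
    defined purely by behavior. Thresholds are illustrative assumptions.
    """
    by_key = defaultdict(list)
    for event in events:
        by_key[(event.ip, event.url)].append(event)

    flagged = {}
    for key, group in by_key.items():
        group.sort(key=lambda e: e.timestamp)
        start = 0
        for end in range(len(group)):
            # Shrink the window until it spans at most window_seconds.
            while group[end].timestamp - group[start].timestamp > window_seconds:
                start += 1
            accounts = {e.account for e in group[start:end + 1]}
            if len(accounts) >= min_accounts:
                flagged[key] = sorted(accounts)
                break
    return flagged


# Synthetic events for illustration only.
EVENTS = [
    ShareEvent("u1", "10.0.0.1", "https://example.org/x", 0),
    ShareEvent("u2", "10.0.0.1", "https://example.org/x", 10),
    ShareEvent("u3", "10.0.0.1", "https://example.org/x", 25),
    ShareEvent("u4", "10.0.0.2", "https://example.org/x", 30),
    ShareEvent("u5", "10.0.0.1", "https://example.org/x", 500),
]

clusters = coordinated_clusters(EVENTS)
print(clusters)
```

The design point is that the function would return the same result whatever political position the shared URL expressed, which is why categories built this way describe tactics rather than beliefs.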

Regulatory Proxies

Platform takedown categories originate as simplified proxies for state-defined legal violations, particularly those tied to national security and electoral integrity, allowing platforms to align with government expectations without adopting official legal standards. This mechanism operates through legal compliance teams at major platforms like Meta and YouTube, who translate ambiguous regulatory pressures—such as U.S. CISA advisories or EU DSA obligations—into discrete content labels like 'foreign interference' or 'coordinated inauthentic behavior.' The non-obvious significance is that these categories do not reflect grassroots political typologies but are instead risk-mitigation constructs designed to anticipate state scrutiny, making them structural echoes of governance rather than belief systems.

Moderation Heuristics

Political labels in takedown reports emerge from internal operational heuristics that trust and safety teams develop to make content review scalable, reducing complex ideologies to recognizable behavioral patterns such as hashtag clusters, network topology, or posting tempo. Labels like 'anti-system ideology' or 'institutional distrust' are derived from prior incident databases and handed to third-party vendors like Moderation.AI, which codify them into classifier models. The underappreciated reality is that these categories resemble medical symptoms more than political philosophies, capturing surface-level signals of harm rather than ideological substance, despite public assumptions that they map to coherent worldviews.

Crisis Epistemes

The political taxonomy in platform reports is retroactively shaped by high-profile crisis events—such as the January 6 Capitol riot or Russia’s 2016 interference—which become defining templates for what counts as politically dangerous speech. Post-crisis, platforms institutionalize the specific threat logic of those moments into evergreen categories like 'election denial' or 'paramilitary organizing,' embedding crisis-born assumptions into routine moderation logic. The overlooked implication is that these categories are not derived from systematic political theory but are fossilized emergency responses, making the classification system an archive of past panics rather than a mirror of current belief distribution.

Regulatory Forging

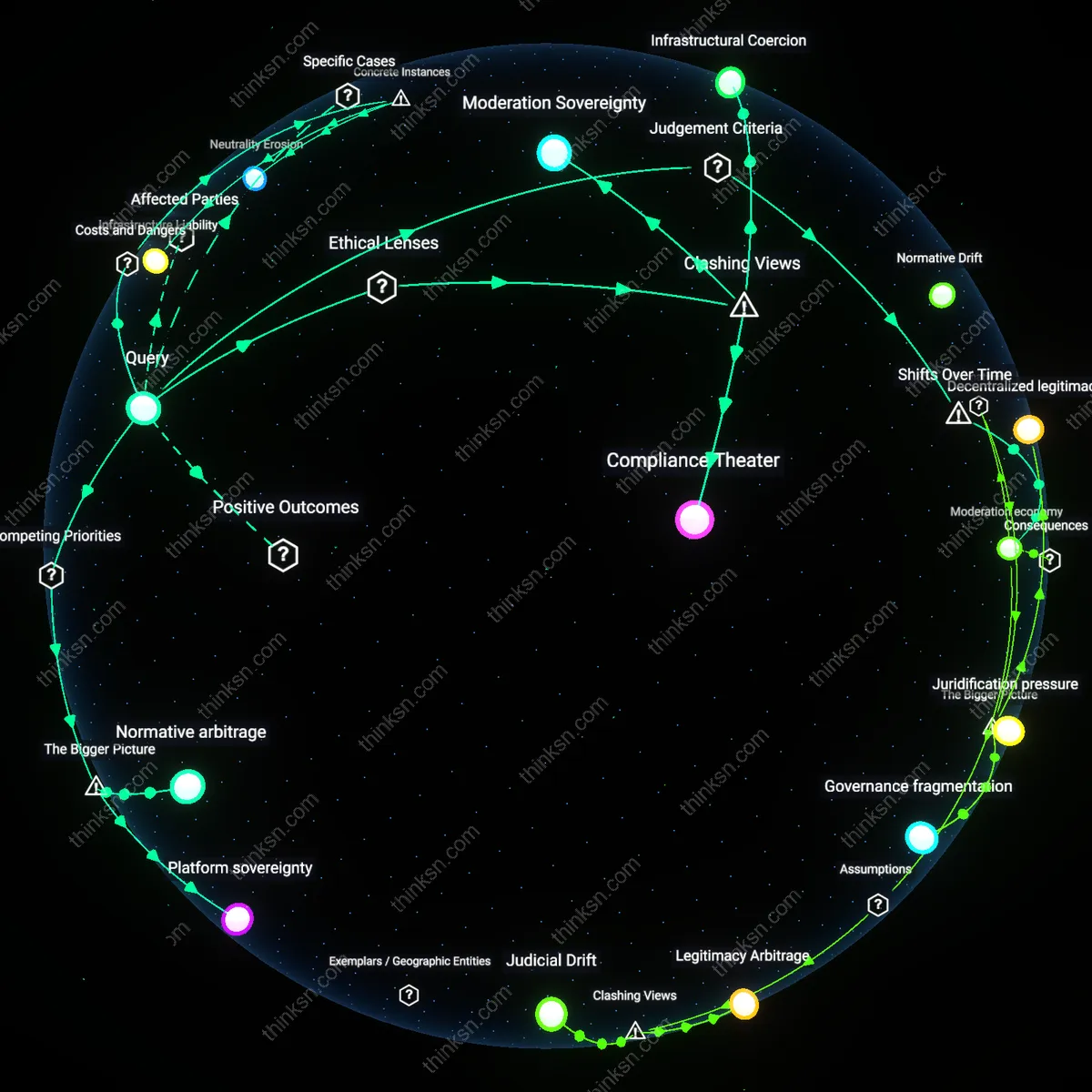

Platform takedown categories emerged from post-9/11 EU counterterrorism mandates that pressured hosting providers to classify content by ideological intent, transforming vague legal obligations into codified political taxonomies. After the liability exemptions of the 2000 E-Commerce Directive were tested by far-right extremist material on German-hosted platforms, national regulators interpreted ‘notice and takedown’ clauses as requiring proactive classification systems. Firms like Deutsche Telekom and later Facebook then internalized state-defined labels such as ‘neo-Nazi revisionism’ or ‘jihadist incitement,’ categories absent from U.S. platforms at the time. Legal friction between member states and the European Commission during 2005–2010, particularly around xenophobic speech in France and hate speech laws in Austria, crystallized an expectation that platforms adjudicate ideology, not just legality. The non-obvious outcome of this shift was not censorship alignment but the delegation of sovereign interpretive power to private compliance teams, who now map beliefs through regulatory templates originally designed for criminal liability shielding.

Crisis Calibration

Political categories in platform takedowns began mirroring real-world belief systems only after the 2016 U.S. election, when intelligence disclosures revealed Russian IRA operations weaponizing identity politics on Facebook and Twitter, forcing a pivot from behavior-based moderation to ideology-labeled response frameworks. Before this moment, content reviewers relied on neutral terms like ‘spam’ or ‘inauthentic behavior,’ but post-2016, internal escalation protocols at Facebook introduced labels such as ‘Black Identity Disinformation’ or ‘MAGA Expansionism’ to track foreign interference patterns, effectively translating electoral crisis data into permanent classification schemas. This recalibration reframed belief systems not as protected expression but as attack vectors, with regional enforcement teams in Dublin and Menlo Park deploying these categories unevenly—amplifying scrutiny on U.S.-based movements while under-moderating similar dynamics in Brazil or India. The overlooked consequence was that crisis-driven labeling became institutionalized, making temporary frameworks appear as ontological distinctions rather than artifacts of emergency governance.

Modular Orthodoxy

By 2020, platform-defined political categories had evolved into modular, exportable rule-sets shaped less by local belief structures and more by global content moderation vendors like Besedo and Sama, whose workforce in Kenya and the Philippines applied standardized belief taxonomies derived from Western civil society templates. A pivotal shift occurred between 2018 and 2021 when Meta and YouTube outsourced Tier 2 moderation to third-party contractors required to use belief classification matrices originally prototyped for EU compliance but redeployed across Southeast Asia and Latin America, leading to mislabeling of indigenous land protests in the Philippines as ‘left-wing extremism’ and anti-mining movements in Colombia as ‘eco-terrorism.’ This standardization emerged not from ideological consensus but from procurement logic—contract incentives favored uniformity over contextual nuance, generating a de facto orthodoxy in classification detached from situated political discourse. The underappreciated effect was the emergence of belief-as-risk, where a category’s validity stems not from cultural presence but from its recurrence across audit logs and compliance reports.


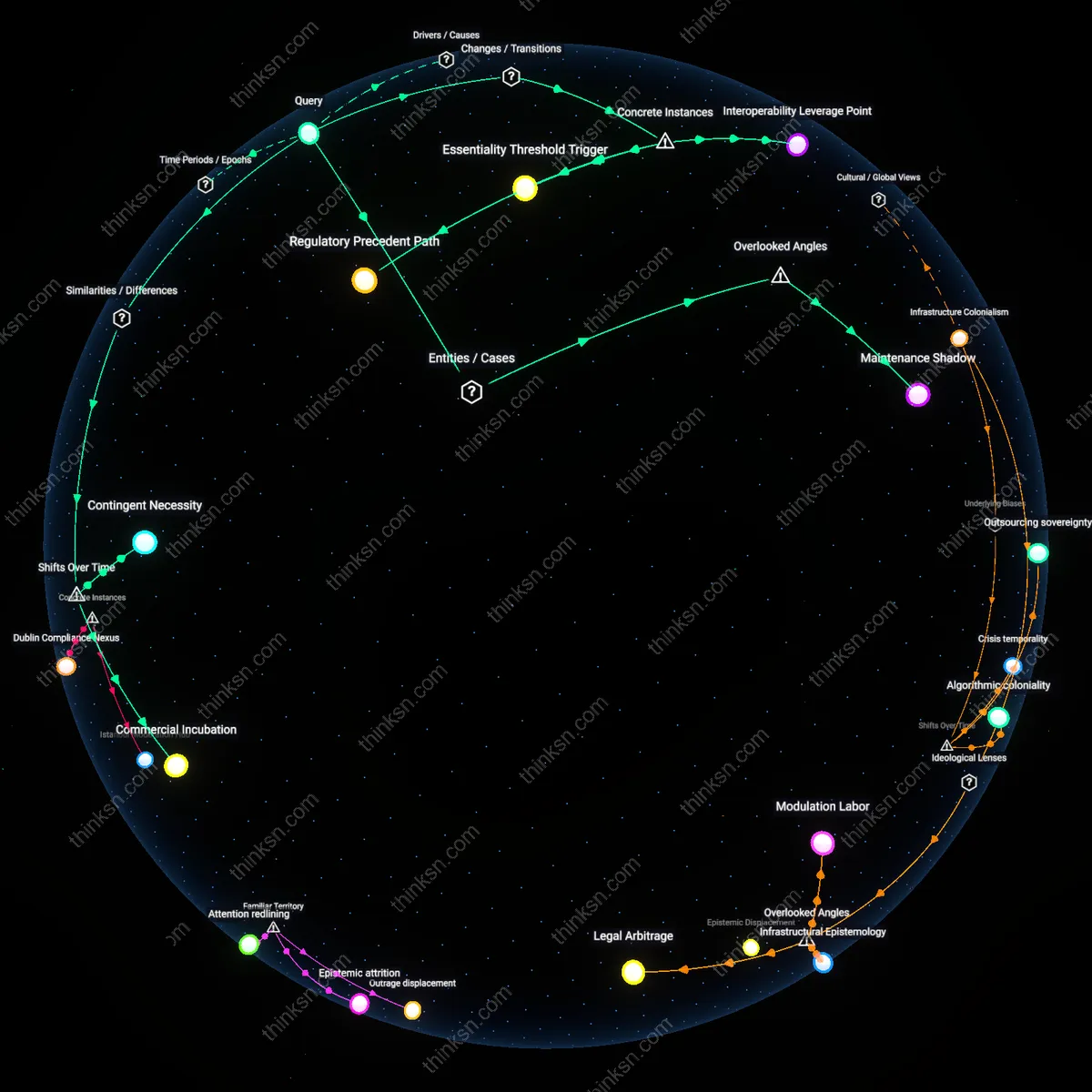

Where else have platform content rules clearly bent under government pressure, and what kinds of speech got suppressed or amplified as a result?

Compliance Inflection Point

In Turkey after 2014, social media platforms bent content rules following court-ordered removals tied to national security, marking a shift from reactive legal compliance to preemptive censorship as governments weaponized judicial processes to institutionalize takedown demands. The mechanism—using binding court orders under Law No. 5651—transformed platforms’ legal defense strategies into systemic cooperation, especially during Erdoğan's consolidation of media control post-2016 coup attempt. This shift reveals how temporary emergency postures hardened into permanent infrastructure for speech governance, normalizing rapid content suppression of Kurdish political expression and dissent under the guise of judicial legitimacy. What is underappreciated is that the turning point wasn’t state violence or direct coercion, but the strategic judicialization of content demands, which reframed political censorship as rule-of-law adherence.

Ambient Moderation Regime

In India after 2020, platform content moderation shifted from user-report-driven takedowns to algorithmic amplification of state-aligned narratives during moments of civil unrest, particularly evident during the farmers’ protests when URLs linked to protest coordination were silently throttled across multiple platforms. The mechanism—government-mandated ‘fake news’ directives under Section 69A of the IT Act—triggered automated demotion of content without formal removal, embedding state priorities within engagement algorithms rather than visible censorship logs. This marks a departure from pre-2017 patterns where takedowns were discrete and logged, toward an ambient system where speech is suppressed through invisibility rather than deletion, making resistance untraceable. The non-obvious consequence is that the historical pivot wasn’t increased removals, but the operational fusion of platform engagement logic with state crisis management protocols.

Sovereign Interface Norm

In Russia following the 2022 invasion of Ukraine, foreign platforms that wanted to remain accessible came under pressure to move from resisting local takedown requests to maintaining dedicated governmental liaison channels that preemptively aligned content policies with Kremlin war narratives, a rupture from earlier efforts to uphold global standards. Facebook, by contrast, was blocked in the country in March 2022. The mechanism, criminal liability for 'discrediting the armed forces,' pushed platforms toward insulated teams interacting directly with Roskomnadzor, creating a procedural norm in which content rules were adjusted not through code or policy but through private bureaucratic interfacing. This represents a structural shift from 2019, when noncompliance was tenable, to a new phase where continued market presence required internalized self-restriction calibrated to regime survival needs. What is rarely acknowledged is that the decisive change was not technical censorship but the emergence of institutionalized back-channel negotiation as standard operating procedure for global platforms under authoritarian pressure.

Infrastructure Chokepoints

State control over submarine cable landing stations has forced platforms to preemptively moderate content to maintain connectivity in politically sensitive regions. In countries like Indonesia and Nigeria, governments leverage physical control over international bandwidth—where national providers manage the sole terrestrial access to undersea internet cables—to compel platforms to comply with takedown demands, as disconnection risks disrupting not only user access but also local revenue streams and data localization requirements. This mechanism is rarely acknowledged in free speech debates, which focus on legal compulsion rather than technical dependency, revealing how backbone infrastructure creates a silent veto over content moderation outcomes—particularly suppressing dissent in regions where connection fragility is high. The non-obvious insight is that data transit geography, not just law or policy, determines what speech survives online.

Licensing Leverage

Telecom regulators in India have used spectrum licensing conditions to indirectly bind foreign platforms to local content rules by tying internet service provider (ISP) cooperation to content moderation performance. Because platforms rely on Indian ISPs to deliver services efficiently, and those ISPs depend on government-renewed spectrum licenses, regulatory threats—such as renewal delays or coverage restrictions—create a coercive channel where content moderation becomes a barter good in licensing negotiations. This dynamic suppresses digital mobilization around agrarian unrest in Punjab and caste-based protests in Tamil Nadu, not through direct platform regulation but via upstream pressure on intermediaries the platforms depend on. What's overlooked is how communication infrastructure governance, normally seen as separate from speech policy, silently enforces compliance through economic entanglement rather than legal mandate.

How did the way platforms categorize political speech change after major crises like the Capitol riot, and what kinds of speech ended up being treated as dangerous over time?

Crisis-Triggered Recalibration

After the Capitol riot, platforms rapidly reclassified politically charged speech that referenced election fraud or incited mobilization as high-risk, bypassing earlier moderation frameworks in favor of emergency protocols. This shift was driven by real-time coordination between platform trust-and-safety teams, federal law enforcement, and internal red-team simulations that identified offline mobilization potential as an immediate systemic threat. Unlike prior content moderation cycles, which responded incrementally to public pressure, this change occurred within days due to the unprecedented convergence of physical insurrection and algorithmic amplification on platform infrastructures. The non-obvious consequence was the temporary suspension of content policy formalism—moderation became anticipatory rather than reactive, treating certain speech as dangerous not for its past effects but for its potential to synchronize violence.

Institutional Mirroring

Content classification systems began to reflect the definitional frameworks of federal investigative bodies, particularly the FBI and DHS, after the Capitol riot, treating markers associated with domestic terrorism, such as the white supremacist code '1488' or references to the 'boogaloo,' with the same severity as internationally recognized extremist codes. This alignment emerged from formalized data-sharing agreements and informal liaison channels established post-2016 between Silicon Valley policy teams and national security agencies, which repositioned platforms as auxiliary arms of state surveillance infrastructure. The underappreciated dynamic is that platforms did not merely adopt government labels but actively refined them using behavioral metadata, thereby blurring the line between state-defined threats and platform-defined harms. This institutional convergence transformed speech evaluation from a community standards exercise into a risk-intelligence operation.

Crisis Amplification Loop

Platforms intensified downranking and removal of political speech that echoed insurrectionist themes after the Capitol riot. Moderation systems operated through automated flagging and human review teams scaling enforcement around election-related content, particularly targeting speech that fused conspiracy narratives with calls for disruption. This accelerated an existing pattern where crisis events become pretexts to expand the scope of what platforms classify as dangerous, reinforcing a feedback loop in which rare but shocking events justify permanent expansions of risk categories long after the emergency fades—something rarely acknowledged even as users experience broadened takedown regimes.

Narrative Exclusion Regime

Platforms formalized policies to isolate narratives that challenge the procedural legitimacy of democratic transitions, such as claims of systemic electoral fraud, by embedding them within prohibited behavior categories like civic integrity violations. This operated through persistent label infrastructures—warning banners, recommendation throttling, account suspensions—that elevated procedural loyalty over expressive latitude, particularly for elected officials. What remains hidden in plain sight is that the criteria for dangerousness now pivot on adherence to consensus narratives about democratic process, making speech alignment a proxy for safety, a framework widely accepted even among users who distrust authorities.