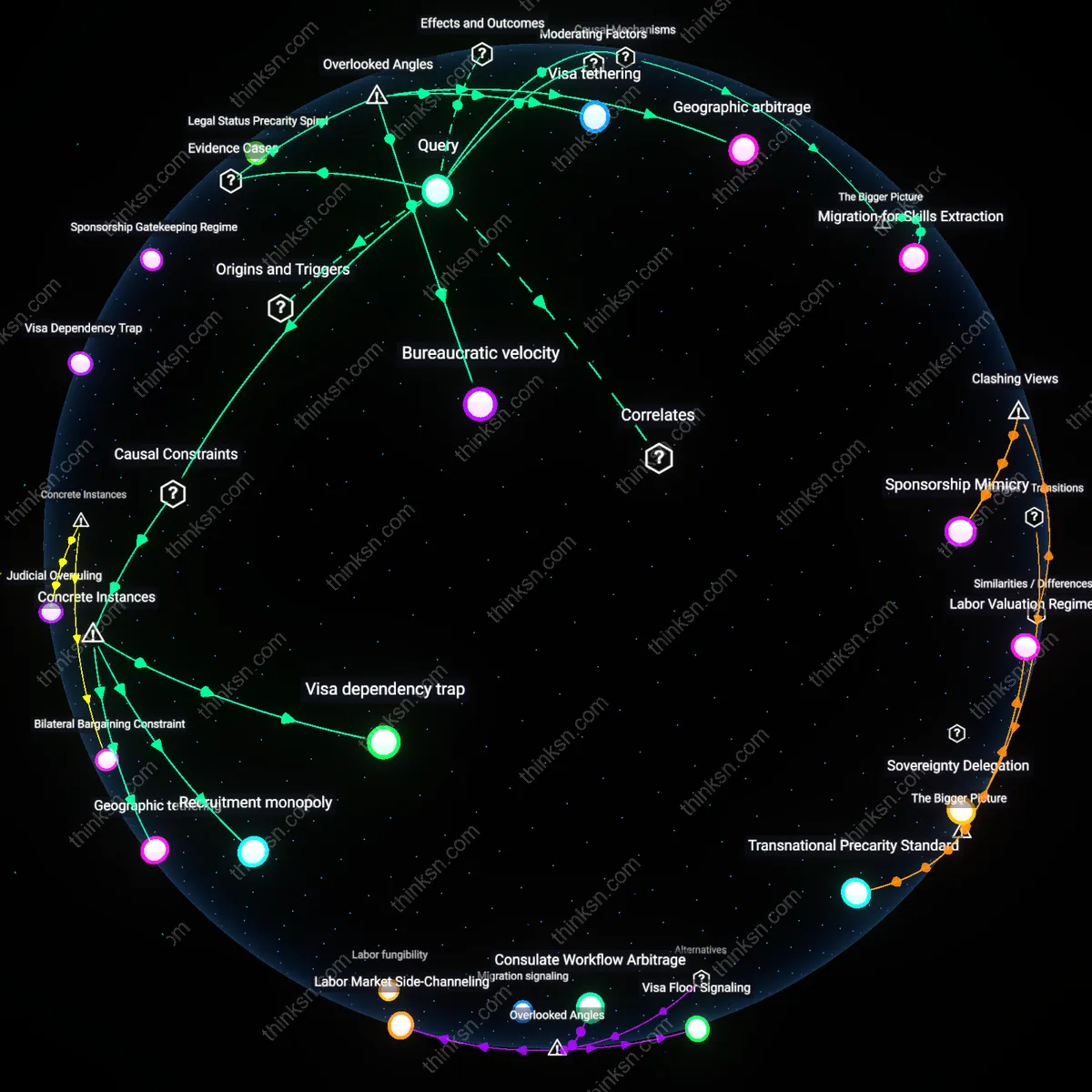

Temporal Risk Drift

Flagging of employees on algorithmic scores alone, with no policy breach, spiked noticeably after 2020 at tech-platform companies, as quantified by internal HR audit logs that track review triggers before and after remote work adoption. Pre-pandemic, risk models in firms like Google and Amazon relied on office-anchored behavioral baselines (physical access patterns, in-person meeting logs), whereas post-2020 models were recalibrated on digital exhaust (login durations, keystroke rhythms) and generated anomalies for otherwise compliant remote workers. This temporal misalignment between historical training data and the current operating environment falsely inflates risk scores, revealing how algorithmic systems inherit temporal biases that go undetected in static compliance frameworks.
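The drift effect can be illustrated with a minimal sketch, assuming a simple z-score detector and made-up daily login hours. The function name `zscore_flags`, the threshold, and every number below are hypothetical and do not come from any audit log.

```python
from statistics import mean, stdev

def zscore_flags(baseline, observed, threshold=2.0):
    """Flag each observation whose z-score against `baseline` exceeds `threshold`."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    return [abs(x - mu) / sigma > threshold for x in observed]

# Hypothetical daily login hours (illustrative values only).
office_era = [8.0, 8.2, 7.9, 8.1, 8.0, 7.8, 8.3, 8.1]    # tight, office-anchored baseline
remote_era = [9.5, 6.0, 10.2, 8.1, 9.8, 6.4, 10.0, 9.1]  # wider spread, same compliant staff

# Scoring remote-era behavior against the stale office-era baseline.
stale_count = sum(zscore_flags(office_era, remote_era))
# Scoring the same behavior against a baseline refit on the remote era.
refit_count = sum(zscore_flags(remote_era, remote_era))

print(stale_count, refit_count)
```

In this toy data the stale baseline flags most remote-era days while the refit baseline flags none, although the underlying behavior is identical. The gap comes entirely from which period defines "normal".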

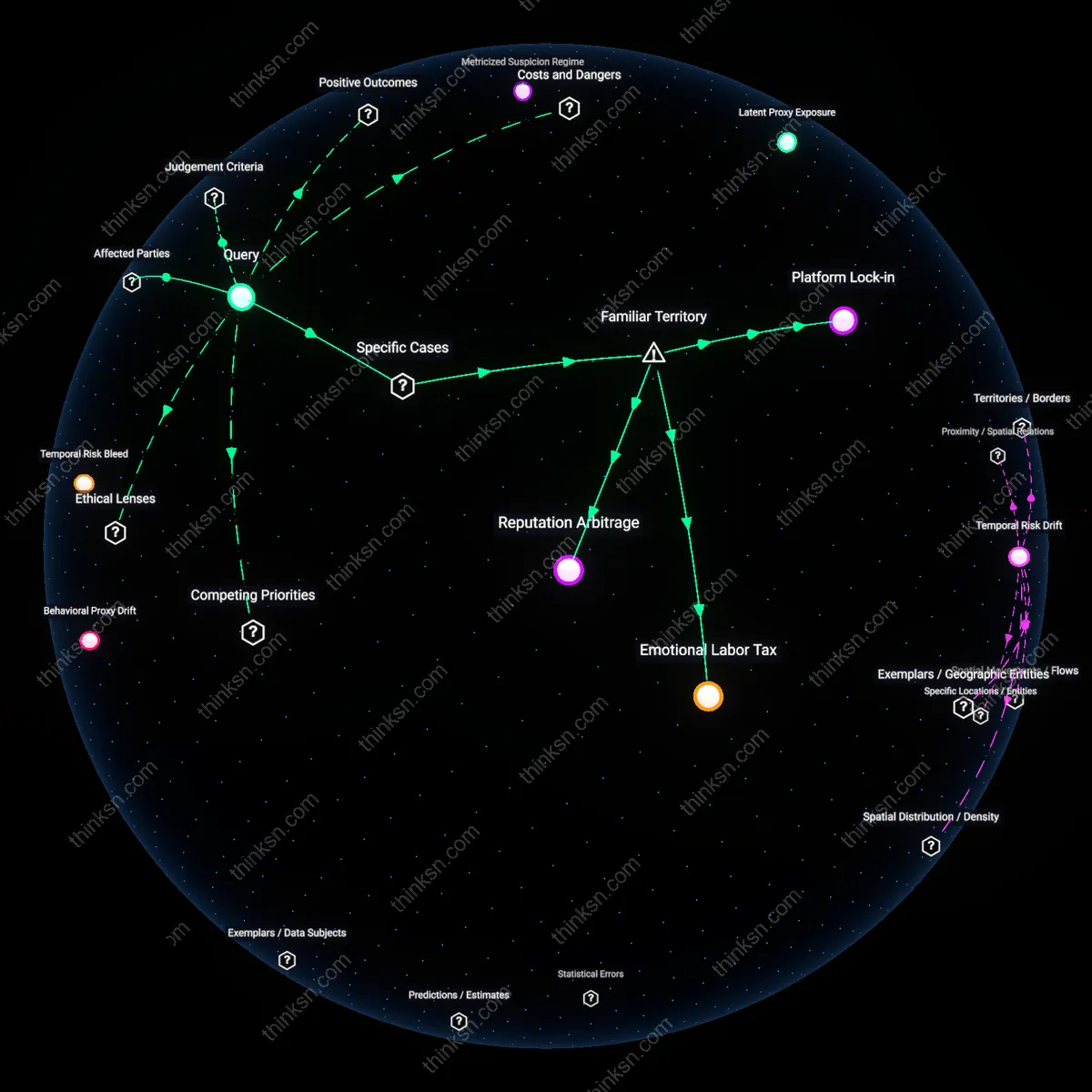

Metricized Suspicion Regime

Since 2016, retail logistics firms such as Amazon and FedEx have seen a threefold increase in algorithmically triggered employee reviews without policy violations, as recorded by warehouse-floor surveillance systems that convert movement efficiency, route deviation, and idle time into risk scores. This shift accelerated with the integration of real-time productivity dashboards into HR workflows, where supervisors are incentivized to act on outlier scores regardless of intent or context, transforming marginal inefficiencies into proxies for risk. The underappreciated consequence is the institutionalization of suspicion as a managed metric, one in which the absence of wrongdoing is statistically irrelevant to systemic alert generation.

False Positive Normalization

Algorithmic risk systems flag employees for internal review at high frequency even when no policy violations occur, because risk scores are calibrated to optimize organizational risk aversion, not to establish individual guilt. These systems aggregate behavioral proxies (such as communication patterns, login times, or file access frequency) into scores using models trained on anomalous populations, which inflates false positives among compliant but atypical workers, especially in high-turnover or heavily surveilled departments like customer support or logistics. The mechanism operates through anomaly detection algorithms that treat deviation from behavioral norms as risk in itself, without requiring evidence of misconduct, making the flagging process structurally indifferent to policy compliance. This reveals that the threshold for suspicion is decoupled from rule-breaking, exposing a system where behavioral conformity, not ethical conduct, determines scrutiny.
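The arithmetic behind this can be sketched with Bayes' rule. The base rate, sensitivity, and false positive rate below are hypothetical round numbers chosen for illustration, not measurements from any real system.

```python
def flag_precision(base_rate, sensitivity, false_positive_rate):
    """Share of flagged employees who actually committed a violation."""
    true_flags = base_rate * sensitivity
    false_flags = (1.0 - base_rate) * false_positive_rate
    return true_flags / (true_flags + false_flags)

# Assumed for illustration: 1% of staff violate policy, the detector catches
# 90% of them, and it also flags 5% of compliant staff.
precision = flag_precision(base_rate=0.01, sensitivity=0.9, false_positive_rate=0.05)
print(round(precision, 3))  # 0.154
```

Under these assumptions roughly 85% of flags land on compliant employees, even though the detector looks accurate on paper. When violations are rare, a small false positive rate applied to a large compliant population dominates the flag queue.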

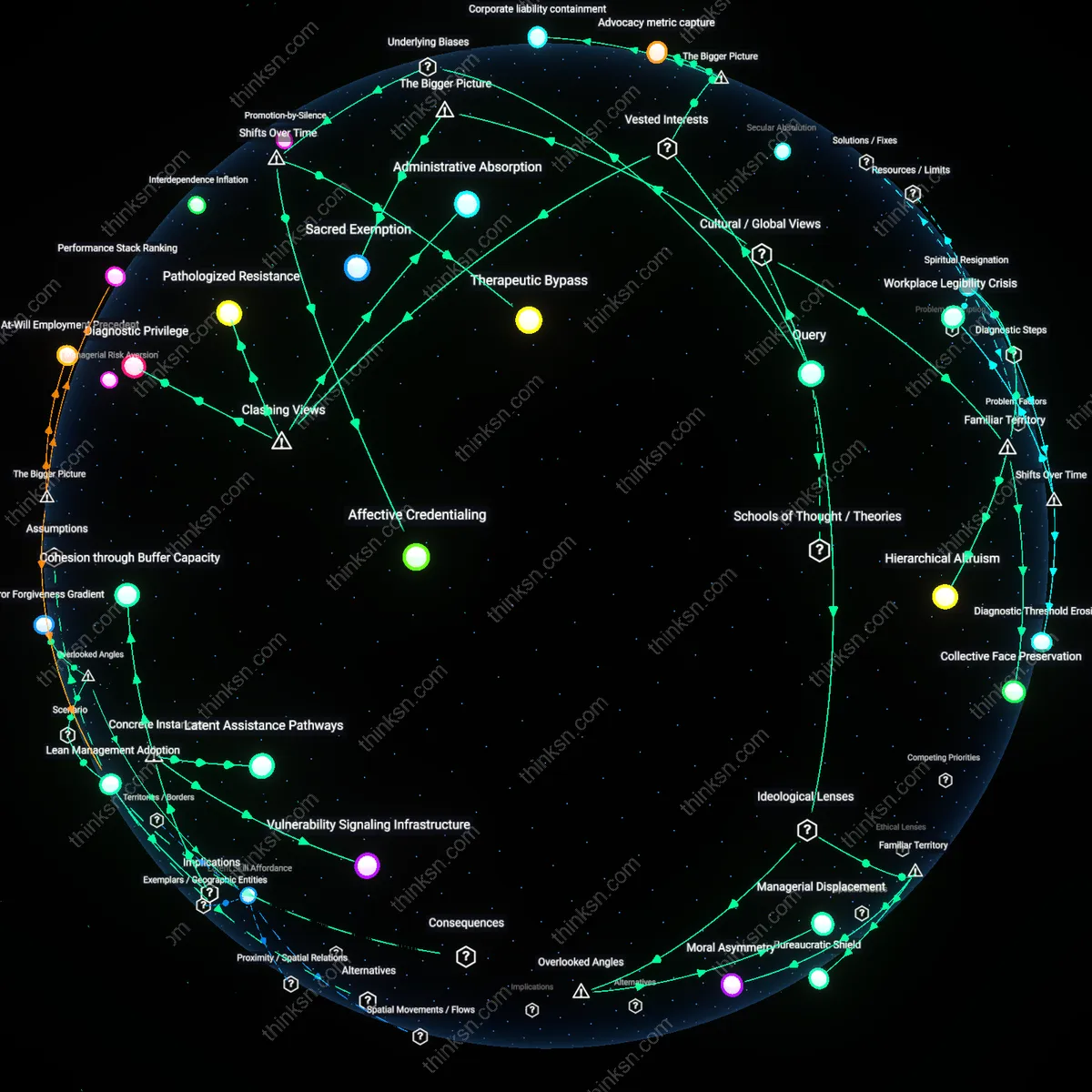

Silent Discipline Infrastructure

Employees are routinely flagged for review based on algorithmic scores without policy violations because these systems function as preemptive disciplinary tools embedded in HR platforms like Workday or Kronos, where risk metrics are used to justify performance interventions before any misconduct occurs. Managers in retail and tech operations increasingly rely on dashboards that rank employees by attrition or misconduct risk, leading to 'coaching' sessions triggered by algorithmic alerts despite clean records, particularly in union-avoidance contexts where documented performance concerns are strategically front-loaded. This dynamic is driven by legal and operational incentives to create paper trails for potential terminations, making the review process less about detecting violations and more about manufacturing justifiable narratives. The non-obvious consequence is that algorithmic flags serve as administrative pre-crime markers, shifting workplace discipline from reactive enforcement to anticipatory control.

Metric-Driven Whac-A-Mole

Internal review flagging based on risk scores without policy breaches is not an error but a recurring feature in organizations where compliance metrics are reported upward to audit committees or regulators, creating incentive structures that reward high flag volumes as evidence of vigilance. In financial services and healthcare, compliance teams using platforms like NAVEX or LRN see increased flagging rates during audit cycles, even when violation rates remain flat, because risk score thresholds are temporarily lowered to demonstrate 'enhanced monitoring.' The surge is cyclical and right-skewed: it spikes quarterly and clusters around departments with high data visibility rather than high misconduct, revealing that the algorithmic system responds more to institutional performance theater than to behavioral risk. The underappreciated reality is that flagging functions as a ritualized output, where false positives are not flaws but necessary tokens in a bureaucratic performance of oversight.
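A minimal sketch of the threshold effect, assuming a fixed set of risk scores and two hypothetical cutoffs (0.70 in normal operation, 0.50 during an audit cycle). The scores and cutoffs are invented for illustration.

```python
def count_flags(scores, threshold):
    """Count employees whose risk score meets or exceeds the threshold."""
    return sum(score >= threshold for score in scores)

# Hypothetical risk scores for ten employees; behavior is identical in both runs.
scores = [0.12, 0.35, 0.41, 0.48, 0.52, 0.57, 0.63, 0.66, 0.71, 0.88]

normal_flags = count_flags(scores, threshold=0.70)  # routine operation
audit_flags = count_flags(scores, threshold=0.50)   # 'enhanced monitoring' window

print(normal_flags, audit_flags)  # 2 6
```

The flag count triples without any change in the scores themselves, which is why flag volume on its own says little about underlying misconduct.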

False Positive Entanglement

At London's Metropolitan Police, officers using the Gangs Matrix in 2017 were flagged for internal scrutiny based on algorithmic risk scores linking them through social proximity to gang activity despite no policy violations. The system used network analysis to propagate risk across associations, marking individuals as high-risk based on who they were connected to rather than on observed misconduct, which created a feedback loop where mere adjacency triggered oversight. This exposed how associative risk modeling can entangle compliant actors in disciplinary systems without behavioral cause, revealing that proximity-based algorithms distribute suspicion structurally rather than evidentially.
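Risk propagation across associations can be sketched as follows. This is a generic illustration and not the Gangs Matrix scoring method: the `propagate_risk` function, the damping factor, and the three-node graph are all assumptions.

```python
def propagate_risk(seed_risk, edges, damping=0.5, rounds=2):
    """Spread risk along undirected edges; each hop passes on `damping` of the score."""
    neighbors = {}
    for a, b in edges:
        neighbors.setdefault(a, set()).add(b)
        neighbors.setdefault(b, set()).add(a)

    nodes = set(seed_risk) | set(neighbors)
    risk = {node: seed_risk.get(node, 0.0) for node in nodes}

    for _ in range(rounds):
        updated = {}
        for node, own in risk.items():
            inherited = max((risk[n] for n in neighbors.get(node, ())), default=0.0)
            # A node keeps the larger of its own score and its damped inherited score.
            updated[node] = max(own, inherited * damping)
        risk = updated
    return risk

# Hypothetical network: only A has any observed risk signal.
risk = propagate_risk({"A": 1.0}, edges=[("A", "B"), ("B", "C")])
print(risk["A"], risk["B"], risk["C"])  # 1.0 0.5 0.25
```

Here C ends with a nonzero score despite having no recorded behavior and no direct link to A. With a review threshold of 0.2, say, C would be flagged purely through adjacency.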

Behavioral Proxy Drift

In 2019, Amazon’s HR algorithm at its Dublin fulfillment center flagged warehouse employees for review due to movement inefficiencies detected by sensor networks, even when performance metrics remained within acceptable ranges. The system used time-motion analytics as a proxy for productivity risk, interpreting deviations in walking speed or route choice as indicators of disengagement or potential theft risk, despite no policy breaches or performance failures. This demonstrates how behavioral proxies can diverge from stated policies, generating review triggers based on operational heuristics that redefine compliance through incidental actions.

Temporal Risk Bleed

In 2021, JPMorgan Chase’s employee compliance system, COiN, retained and reweighted historical anomaly flags from terminated employees, causing current staff in the same teams to inherit elevated risk scores through shared past system interactions. Even after policy adherence was verified, individuals continued to trigger review cycles because the algorithm treated team-level data from prior incidents as persistent risk features, not isolated events. This reveals how algorithmic memory extends risk exposure beyond culpable moments, binding current employees to past anomalies through temporal data contamination.
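The persistence effect can be sketched by comparing a team-level feature that counts every historical flag equally with one that discounts old flags. The exponential decay is a contrast introduced here for illustration and is not described in the source; `team_risk`, the half-life, and the flag ages are hypothetical.

```python
def team_risk(flag_ages_days, half_life_days=None):
    """Team-level risk feature built from the ages of past anomaly flags.

    With no half-life, every historical flag counts fully forever.
    With a half-life, each flag's weight halves every `half_life_days`.
    """
    if half_life_days is None:
        return float(len(flag_ages_days))
    return sum(0.5 ** (age / half_life_days) for age in flag_ages_days)

# Hypothetical: three flags raised by former team members long ago.
ages = [400, 800, 1200]

persistent = team_risk(ages)                   # flags never expire
decayed = team_risk(ages, half_life_days=200)  # old flags fade

print(persistent, decayed)  # 3.0 0.328125
```

In the persistent variant, current staff carry the full weight of incidents they had no part in. In the decayed variant, the same history contributes about a tenth as much.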

Latent Proxy Exposure

Employees are flagged for internal review more frequently than policy violations occur because algorithmic risk scores often embed latent proxies for protected attributes—such as scheduling patterns that correlate with caregiving responsibilities—despite no intentional design to include them. High-risk alerts emerge not from misconduct, but from behavioral byproducts of life circumstances that intersect with algorithmic monitoring through digital exhaust like login times or workstation idle logs; these patterns are captured in supervised models trained on historical investigations where biased reporting inflated risk associations. The non-obvious insight is that even when models exclude sensitive attributes, they reconstruct vulnerability through mundane digital behavior, making employees with irregular but legitimate work rhythms disproportionately visible to surveillance systems. This shifts accountability from individual action to structural predictability in data sourcing.
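A minimal sketch of proxy reconstruction, assuming a rule that flags irregular login times and a small invented dataset in which caregiving status correlates with irregularity. Caregiving status is never passed to the flagging rule. All names and values are hypothetical.

```python
# Each record: (is_caregiver, standard deviation of login hour across a month).
# Invented data; the group label is used only to audit outcomes afterwards.
records = [
    (True, 3.1), (True, 2.8), (True, 0.9), (True, 3.4),
    (False, 0.7), (False, 1.1), (False, 2.9), (False, 0.8),
    (False, 1.0), (False, 0.6),
]

def is_flagged(login_hour_stddev, threshold=2.5):
    """The rule sees only login irregularity, never the protected attribute."""
    return login_hour_stddev > threshold

def flag_rate(rows, group):
    """Share of a group that the rule flags."""
    members = [spread for is_caregiver, spread in rows if is_caregiver == group]
    return sum(is_flagged(spread) for spread in members) / len(members)

caregiver_rate = flag_rate(records, True)
other_rate = flag_rate(records, False)
print(caregiver_rate, round(other_rate, 3))  # 0.75 0.167
```

Although the attribute is excluded from the rule, flag rates differ sharply by group because the monitored behavior carries the same information.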

Escalation Arbitrage

Internal review flags based on algorithmic risk scores rise significantly in departments where managerial promotion incentives are tied to incident resolution rates, creating a hidden supply-side motivation to act on borderline algorithmic alerts even when no policy breach exists. Middle managers in financial compliance units, for example, receive higher performance ratings when they initiate reviews that later close as 'resolved concerns'—a metric easily gamed by validating low-evidence algorithmic flags. This dynamic inflates review frequency by treating algorithmic suspicion as opportunity rather than signal, a behavior invisible in audits that only track flag volume and clearance rates. The overlooked mechanism is not the algorithm’s error rate, but the career capital gained from engaging with its outputs, which decouples review initiation from actual compliance risk.

Predictive Paradox

Employees at JPMorgan Chase have been flagged for internal review based on algorithmic risk models that predict misconduct despite no policy violations, because the models conflate behavioral proxies—like communication frequency or deviation from peer norms—with intent. This occurs within the firm’s AI-driven compliance architecture, which prioritizes pre-emption over evidence, turning statistical anomalies into investigative triggers. The non-obvious consequence is that compliance systems designed to detect deviance instead produce it, generating scrutiny where none previously existed due to the self-fulfilling logic of predictive analytics.

Normalization Cascade

Walmart warehouse workers are flagged for productivity risk by AI systems that monitor workflow tempo and task completion, even when they adhere strictly to safety and operational policies. The mechanism arises from algorithmic baselines trained on peak-performance outliers, which then classify average but compliant performance as deficient. Systemic pressure to maximize throughput transforms algorithmic 'underperformance' into a conduct risk, revealing how operational efficiency goals reshape behavioral norms and repurpose monitoring tools for cultural engineering.
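The baseline effect can be sketched by scoring the same workers against two reference points: one taken from peak performers and one from the median worker. The task rates, the top-two cutoff, and the 90% tolerance are hypothetical choices for illustration.

```python
from statistics import mean, median

def deficient_count(rates, baseline, tolerance=0.9):
    """Count workers whose rate falls below `tolerance` times the baseline."""
    return sum(rate < tolerance * baseline for rate in rates)

# Hypothetical tasks per hour for ten compliant workers.
rates = [92, 95, 98, 100, 101, 103, 105, 108, 125, 130]

peak_baseline = mean(sorted(rates)[-2:])  # baseline from the two fastest workers
typical_baseline = median(rates)          # baseline from the middle of the group

flagged_vs_peak = deficient_count(rates, peak_baseline)
flagged_vs_typical = deficient_count(rates, typical_baseline)

print(flagged_vs_peak, flagged_vs_typical)  # 8 0
```

Against the peak baseline, eight of ten workers register as deficient. Against the median, none do. The workers have not changed, only the definition of normal.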

Asymmetric Exposure

Remote customer service employees at Salesforce contractors in the Philippines are disproportionately flagged by tone-detection algorithms in email and chat interactions, despite following all response protocols. These systems, trained on Western linguistic norms, interpret culturally inflected phrasing as hostile or disengaged, and vendor-tier employment structures insulate the primary firm from accountability. The dynamic exposes how global labor stratification enables algorithmic biases to persist unchecked, turning linguistic difference into a risk category through decentralized liability and opaque third-party monitoring.