Facial Recognition in Healthcare: Security vs. Personal Freedom?

Analysis reveals 8 key thematic connections.

Key Findings

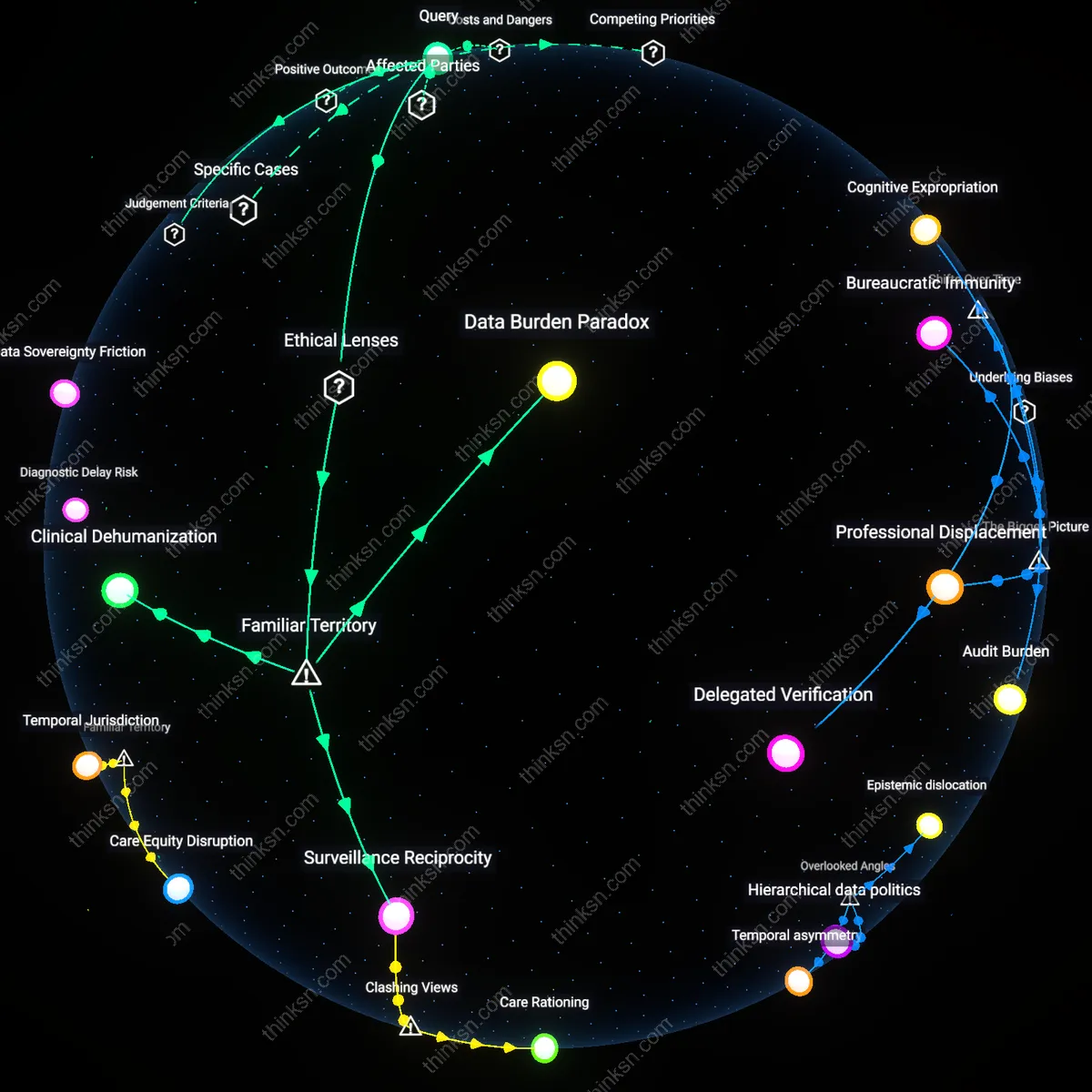

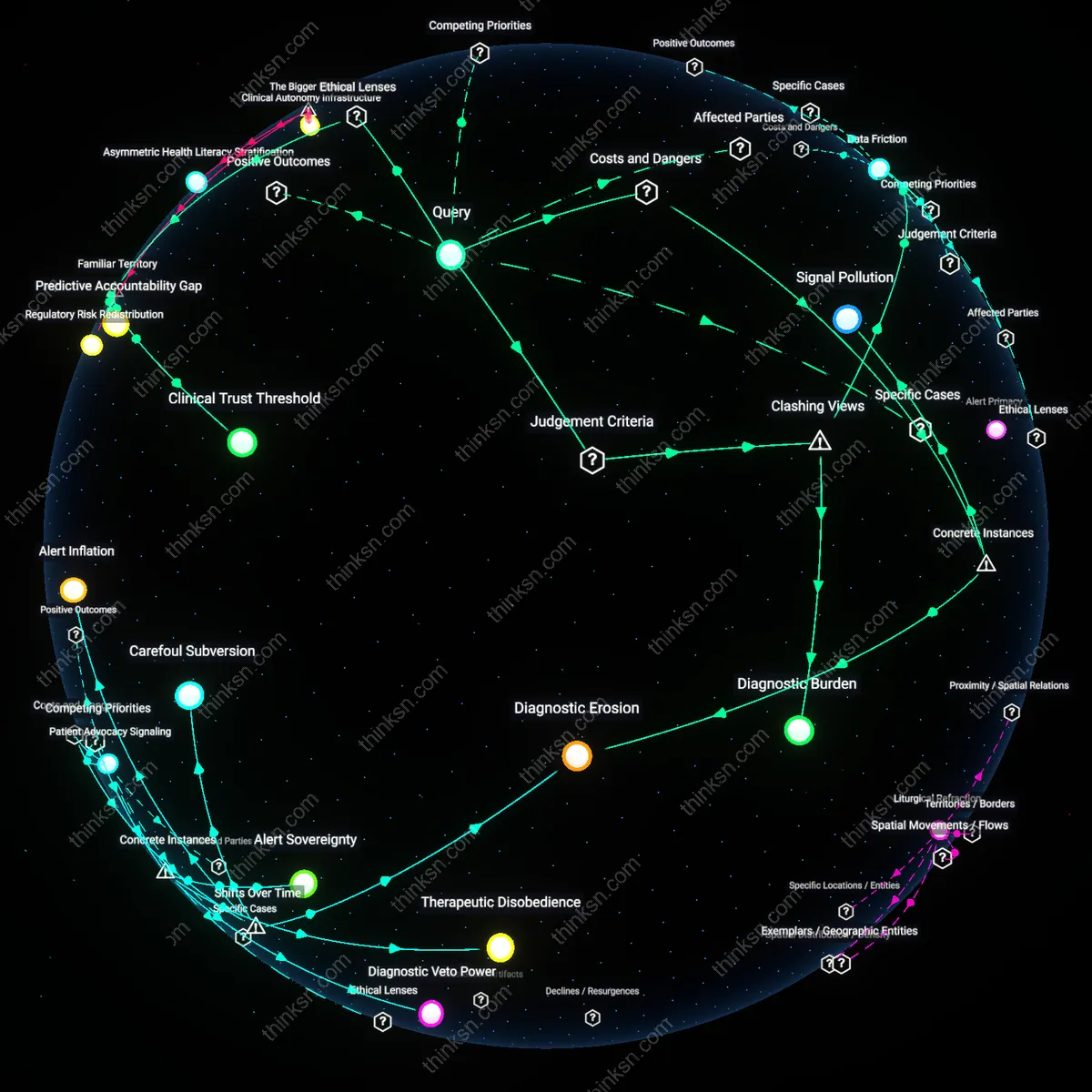

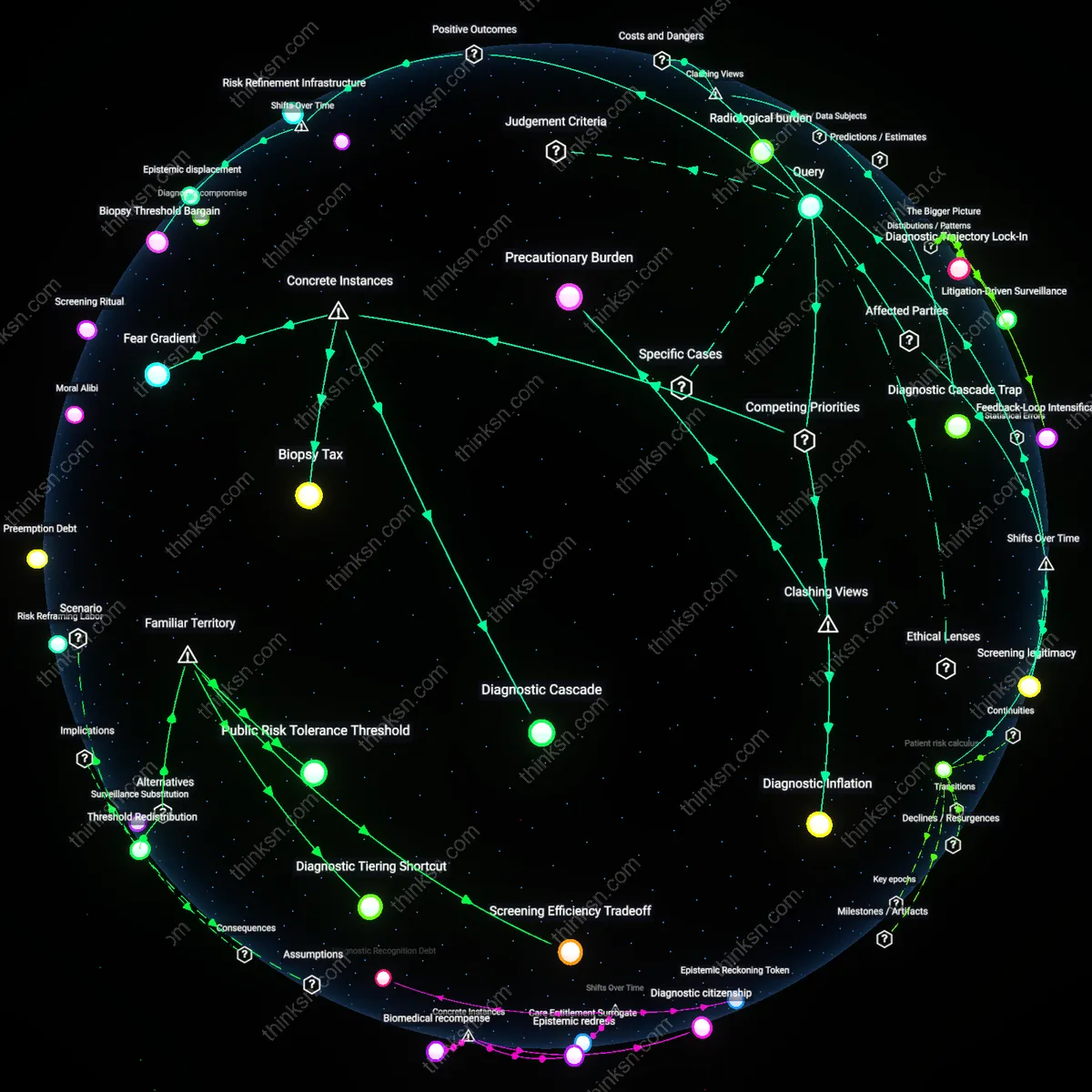

Therapeutic Surveillance

Facial recognition access to health records enhances clinical continuity by reducing authentication friction for patients with cognitive impairments, enabling uninterrupted care in geriatric and neurodegenerative contexts. Health systems such as NHS Trusts piloting biometric login in dementia wards show improved medication adherence and fewer administrative errors, because patients who cannot recall passwords or manage tokens can still securely access personalized treatment plans. This reframes surveillance as care infrastructure rather than autonomy erosion and challenges the assumption that biometric control inherently undermines agency: where decision-making capacity is compromised, automated recognition sustains it. The non-obvious insight is that autonomy can be preserved through reduced conscious consent when cognitive incapacity is systemic, not exceptional.

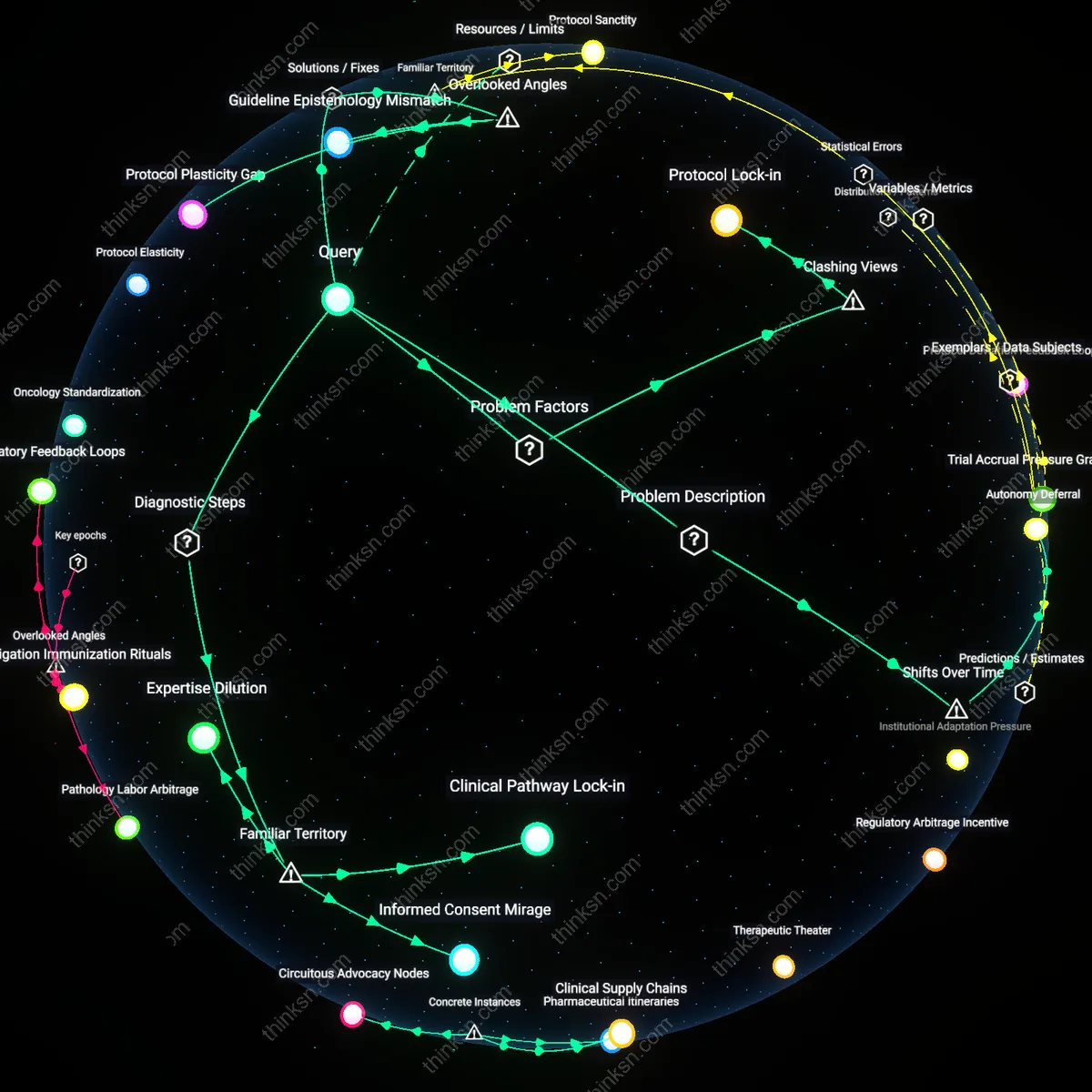

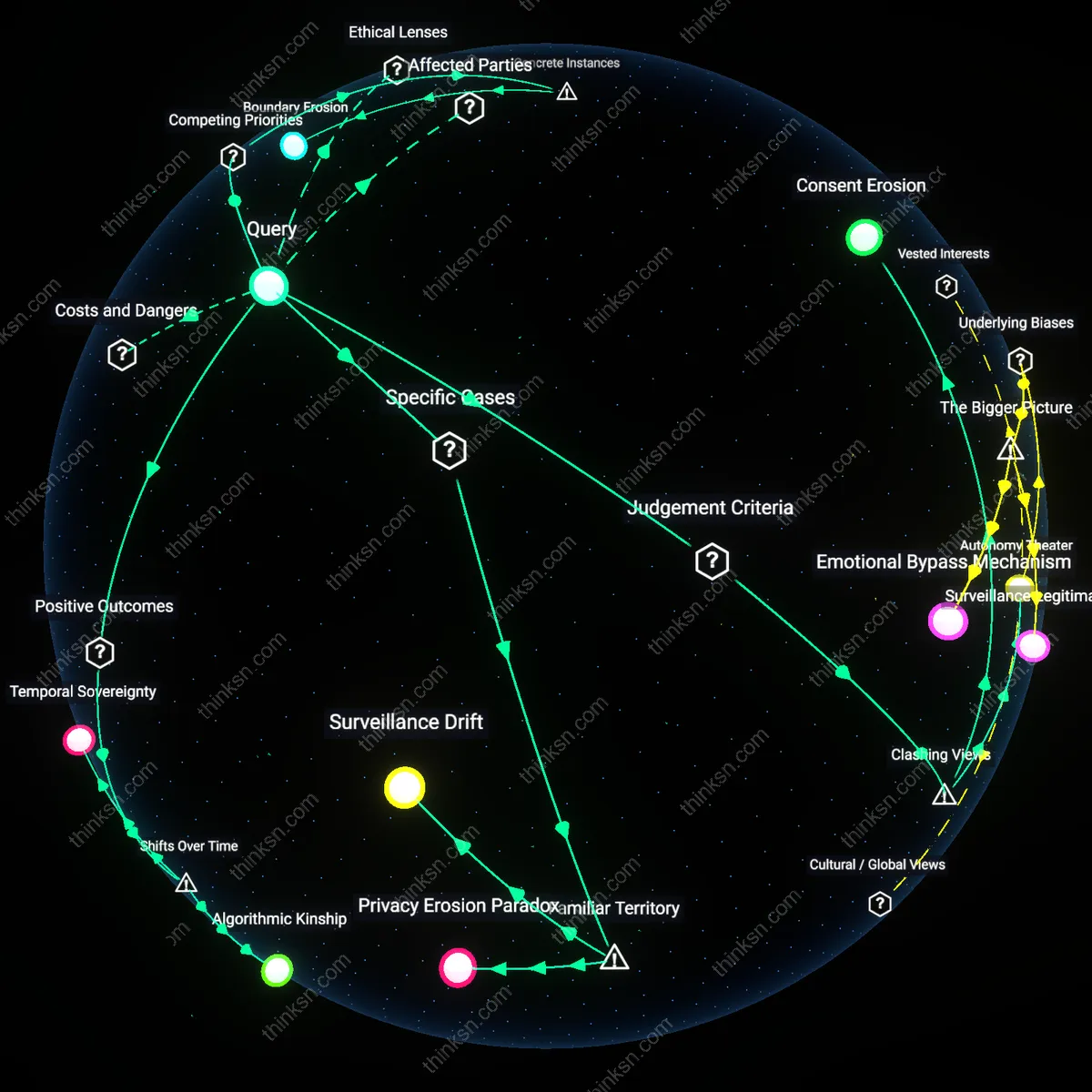

Asymmetric Trust Transfer

The trade-off becomes unacceptable not when surveillance expands, but when facial recognition systems delegate patient trust to third-party vendors such as Clearview AI or Amazon Rekognition, which become de facto custodians of medical integrity without clinical accountability. In U.S. hospitals using off-the-shelf facial recognition APIs, audit logs reveal that authentication decisions are increasingly made by opaque machine learning models trained on images scraped without consent, shifting liability from healthcare providers to unregulated algorithms. This inversion, in which personal autonomy is compromised by privatized verification logic rather than state overreach, exposes how security is redefined as corporate pattern-matching accuracy, not patient consent. It also undermines the public-private surveillance dichotomy: autonomy is lost through vendor dependency, not direct coercion.

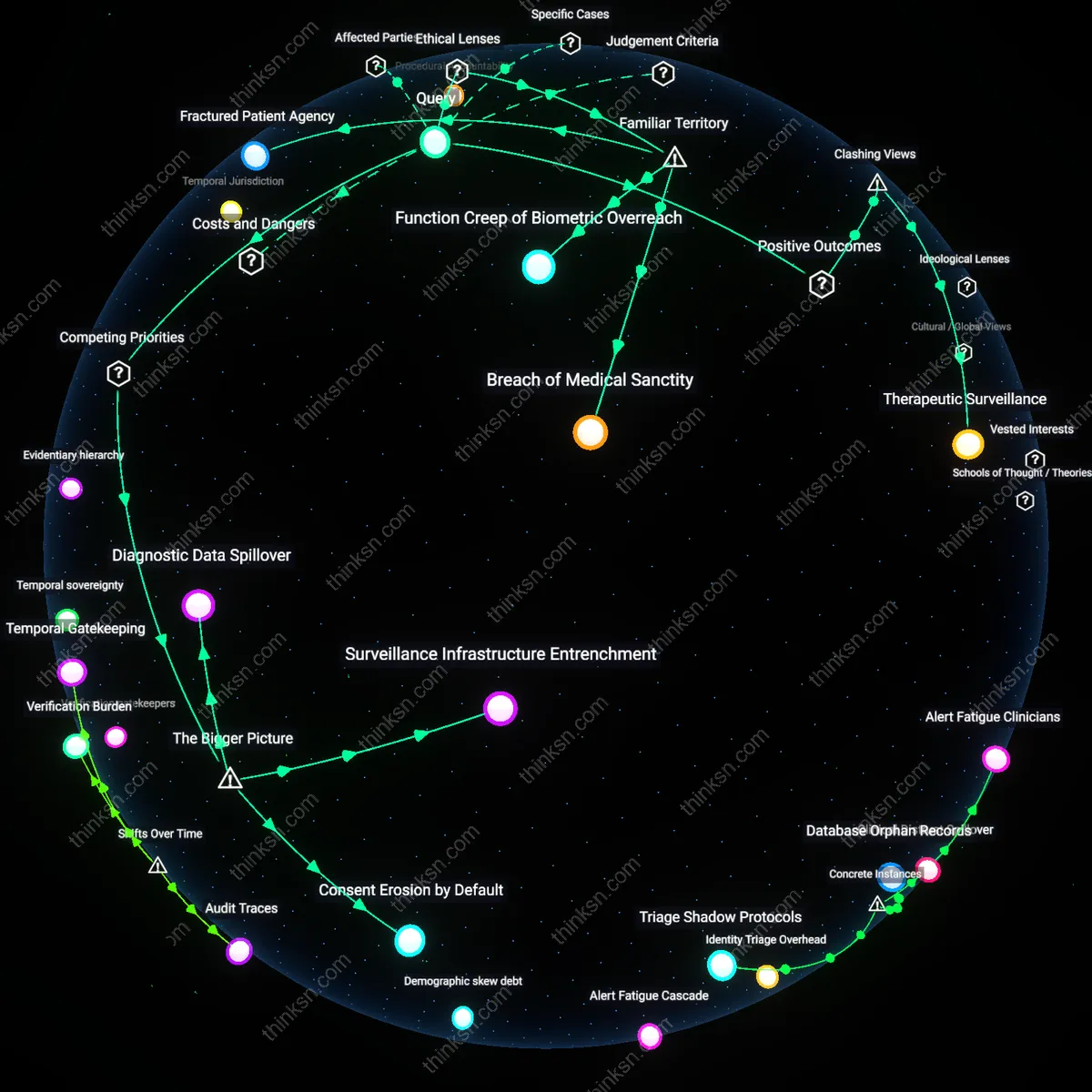

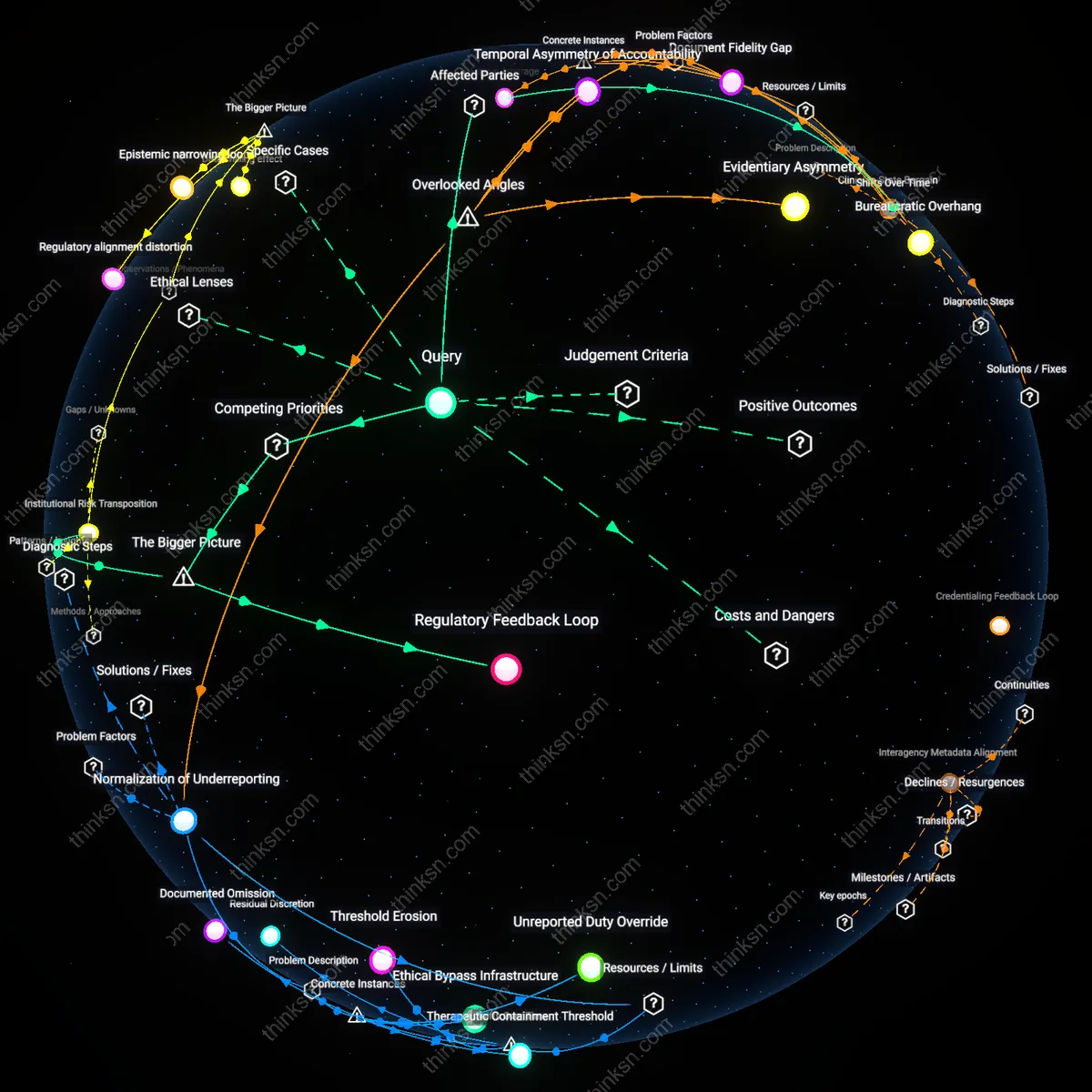

Surveillance Infrastructure Entrenchment

The trade-off becomes unacceptable when facial recognition systems for health access become integrated into urban surveillance infrastructure, enabling mission creep where data collected for healthcare is repurposed for policing and population monitoring. Municipal governments and private tech firms collaborate to deploy interoperable biometric platforms under the guise of public health efficiency, creating irreversible dependencies on centralized facial recognition databases. This integration embeds surveillance capabilities into everyday civic life, making opt-out practically impossible and shifting the baseline of normative state monitoring — a change that is rarely subjected to democratic ratification. The non-obvious consequence is that healthcare access becomes a vector for normalizing permanent biometric visibility, not merely a one-off privacy trade-off.

Consent Erosion by Default

The trade-off becomes unacceptable when facial recognition is implemented as the default authentication method for health records, rendering informed consent functionally meaningless due to the asymmetry of power between patients and institutional providers. In public health systems under pressure to reduce administrative overhead, policymakers adopt facial recognition as a ‘seamless’ solution, but this convenience comes at the cost of structurally undermining patient autonomy, as opting out requires disproportionate effort or alternative verification that is less accessible. This systemic design leverages bureaucratic friction to coerce compliance, masking coercion as choice — a dynamic rarely visible in policy evaluations focused on technological accuracy or user satisfaction. The deeper issue is that autonomy is eroded not through overt restriction but through the deliberate minimization of usable alternatives.

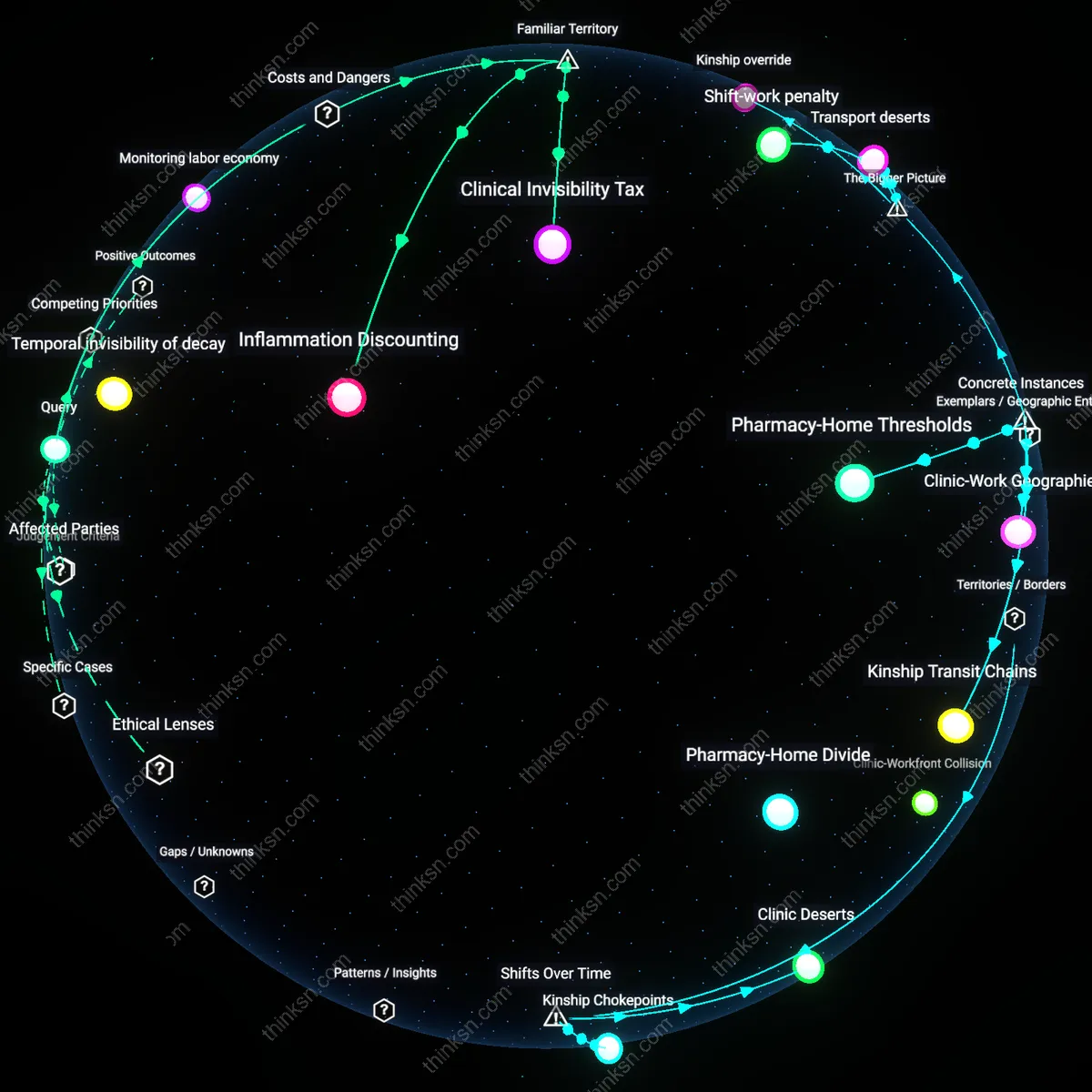

Diagnostic Data Spillover

The trade-off becomes unacceptable when facial recognition systems used for health record access begin to infer and log diagnostic indicators—such as facial asymmetry, pallor, or micro-expressions—thereby transforming authentication tools into passive diagnostic sensors without clinical validation or patient knowledge. Private healthtech companies, incentivized to monetize data exhaust, exploit the proximity of biometric authentication systems to collect phenotypic data at scale, which is then aggregated into risk profiles used by insurers or employers. This shifts the boundary of medical data collection from intentional clinical encounters to routine access events, creating unregulated pathways for health surveillance. The underappreciated dynamic is that the technology’s secondary data outputs — not its primary function — become the main source of autonomy violation.
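One way to make the spillover risk concrete is to look at what an authentication event is allowed to record. The following is a minimal sketch, not any vendor's actual interface: the field names (`facial_asymmetry_score`, `pallor_index`) and the allow-list are hypothetical, chosen only to illustrate how a system could discard secondary phenotypic outputs before anything is logged.

```python
# Hypothetical allow-list: the only fields needed to record an authentication outcome.
ALLOWED_FIELDS = {"patient_id", "timestamp", "matched"}


def minimize_auth_event(raw_event: dict) -> dict:
    """Keep only allow-listed fields and drop everything else the matcher returned."""
    return {key: value for key, value in raw_event.items() if key in ALLOWED_FIELDS}


# Example event; the two score fields are invented stand-ins for diagnostic inferences.
raw_event = {
    "patient_id": "p-001",
    "timestamp": "2024-01-01T09:00:00Z",
    "matched": True,
    "facial_asymmetry_score": 0.12,  # hypothetical secondary output
    "pallor_index": 0.40,            # hypothetical secondary output
}

logged_event = minimize_auth_event(raw_event)
print(logged_event)
```

An allow-list is used rather than a block-list so that any new inference a vendor starts emitting is dropped by default instead of being silently retained.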

Breach of Medical Sanctity

The trade-off becomes unacceptable when healthcare providers delegate access control to third-party facial recognition systems that lack HIPAA-grade accountability, because biometric authentication introduces external surveillance vectors into a domain traditionally insulated by doctor-patient confidentiality. This shift operates through the integration of commercial AI platforms, such as those operated by Clearview AI or Amazon Rekognition, into public health infrastructure, undermining the ethical principle of medical privacy expressed in the Hippocratic Oath and codified in the Health Insurance Portability and Accountability Act (HIPAA). What is underappreciated is that the public conflates technological convenience with systemic security, failing to recognize that facial recognition does not merely authenticate but continuously logs identity, recasting medical visits as data events rather than sacred consultations.

Function Creep of Biometric Overreach

The trade-off becomes unacceptable the first time a patient’s facial scan, originally collected for health record access, is repurposed by law enforcement or immigration authorities through data-sharing agreements with municipal health departments. Centralized biometric databases make this mission creep possible, and it proceeds under the cover of doctrines such as qualified immunity and national security exemptions. This dynamic is already visible in cities like Detroit and Baltimore, where facial recognition systems used in public services have been cross-referenced with police watchlists, transforming healthcare facilities into de facto surveillance nodes and contradicting the political ideal of bodily autonomy central to liberal democratic theory. The non-obvious insight is that the public associates facial recognition primarily with crime prevention, not with the incremental erosion of civil domains through administrative drift, where consent is assumed rather than granted.

Fractured Patient Agency

The trade-off becomes unacceptable when elderly or neurodivergent patients are denied care because facial recognition systems fail to authenticate them, whether due to atypical facial expressions or age-related morphological changes. In such cases, algorithmic gatekeeping replaces clinician discretion with rigid technical protocols rooted in utilitarian efficiency rather than the ethic of care. This occurs within Medicaid and VA hospitals increasingly adopting AI-driven access systems like NEC’s NeoFace, where error rates for non-neurotypical or aging populations exceed 15%, violating the principle of equitable access embedded in the Americans with Disabilities Act (ADA). What remains hidden is that people assume facial recognition is universally accessible, overlooking how its technical 'neutrality' masks the disenfranchisement of those whose bodies don’t conform to algorithmic norms, thus replacing medical judgment with computational exclusion.
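The alternative to algorithmic gatekeeping can be sketched in a few lines. This is an illustrative sketch under stated assumptions, not a description of NeoFace or any deployed system: the threshold value, the `decide_access` function, and the `AccessDecision` names are all hypothetical. The point it illustrates is that a failed or missing face match is routed to a human rather than treated as a denial.

```python
from enum import Enum
from typing import Optional


class AccessDecision(Enum):
    GRANTED = "granted"
    MANUAL_REVIEW = "manual_review"  # staff verify identity by other means


# Hypothetical threshold; a real deployment would have to set and validate its own.
MATCH_THRESHOLD = 0.90


def decide_access(match_score: Optional[float]) -> AccessDecision:
    """Grant access on a confident match; otherwise hand the case to staff.

    There is deliberately no automatic denial outcome: a low score, or no score
    at all (for example after a camera failure or an opt-out), defers to
    clinician or staff discretion.
    """
    if match_score is not None and match_score >= MATCH_THRESHOLD:
        return AccessDecision.GRANTED
    return AccessDecision.MANUAL_REVIEW


print(decide_access(0.95))  # confident match
print(decide_access(0.42))  # weak match goes to a person
print(decide_access(None))  # no scan available goes to a person
```

Leaving out a denial state keeps the recognition system in an assistive role: it can speed up access, but it cannot by itself refuse care.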