Whose Fault Is Algorithmic Misinformation on Search Engines?

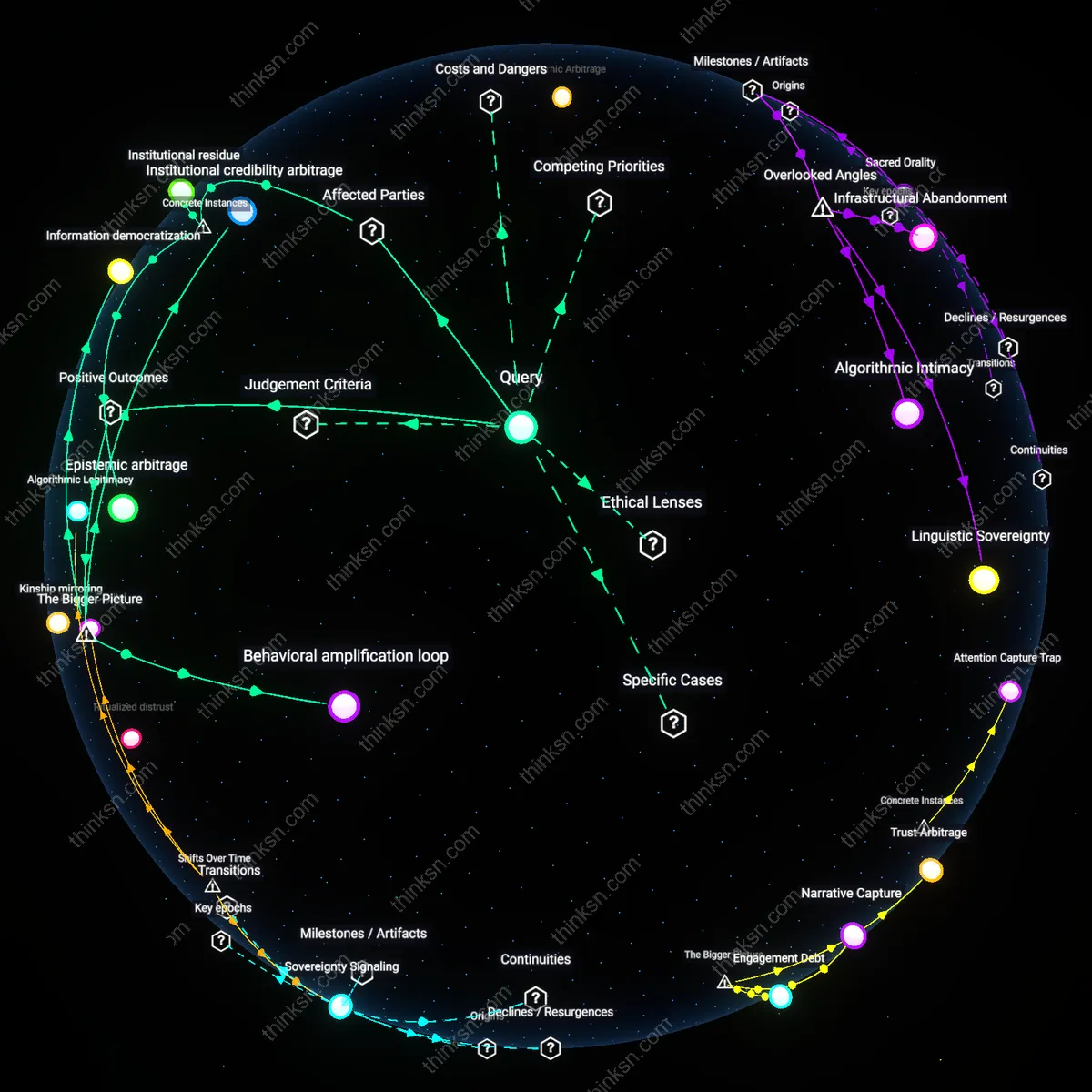

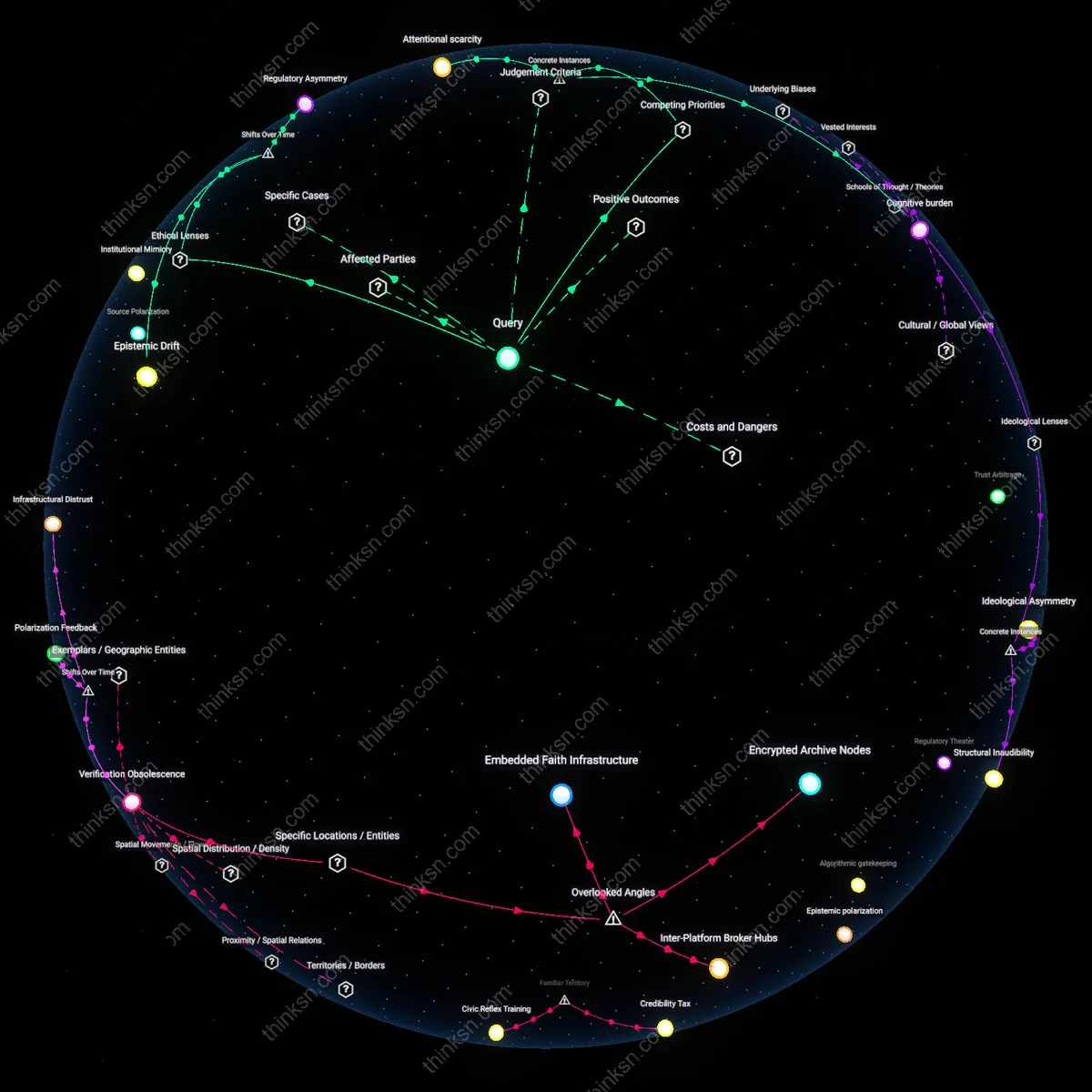

Analysis reveals 9 key thematic connections.

Key Findings

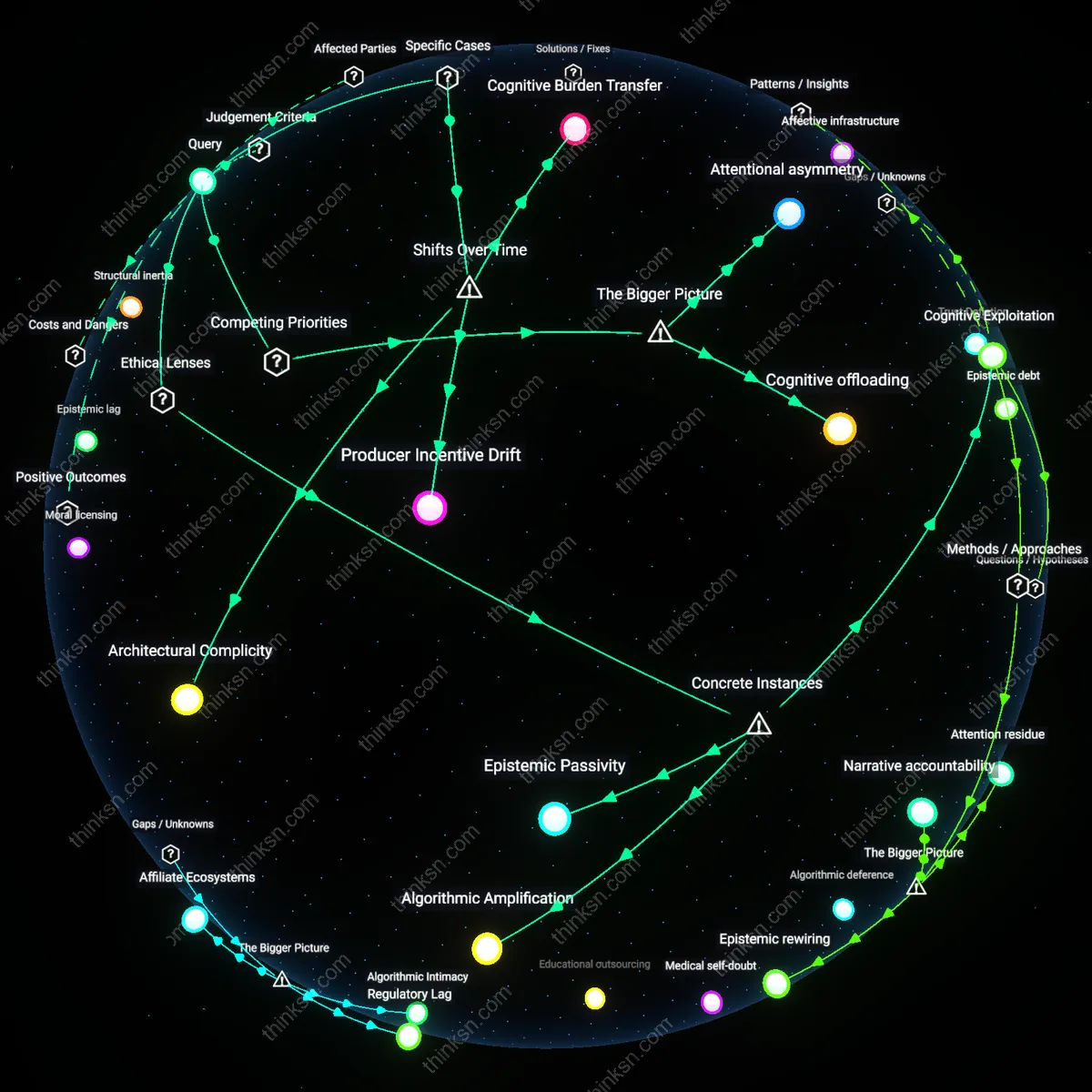

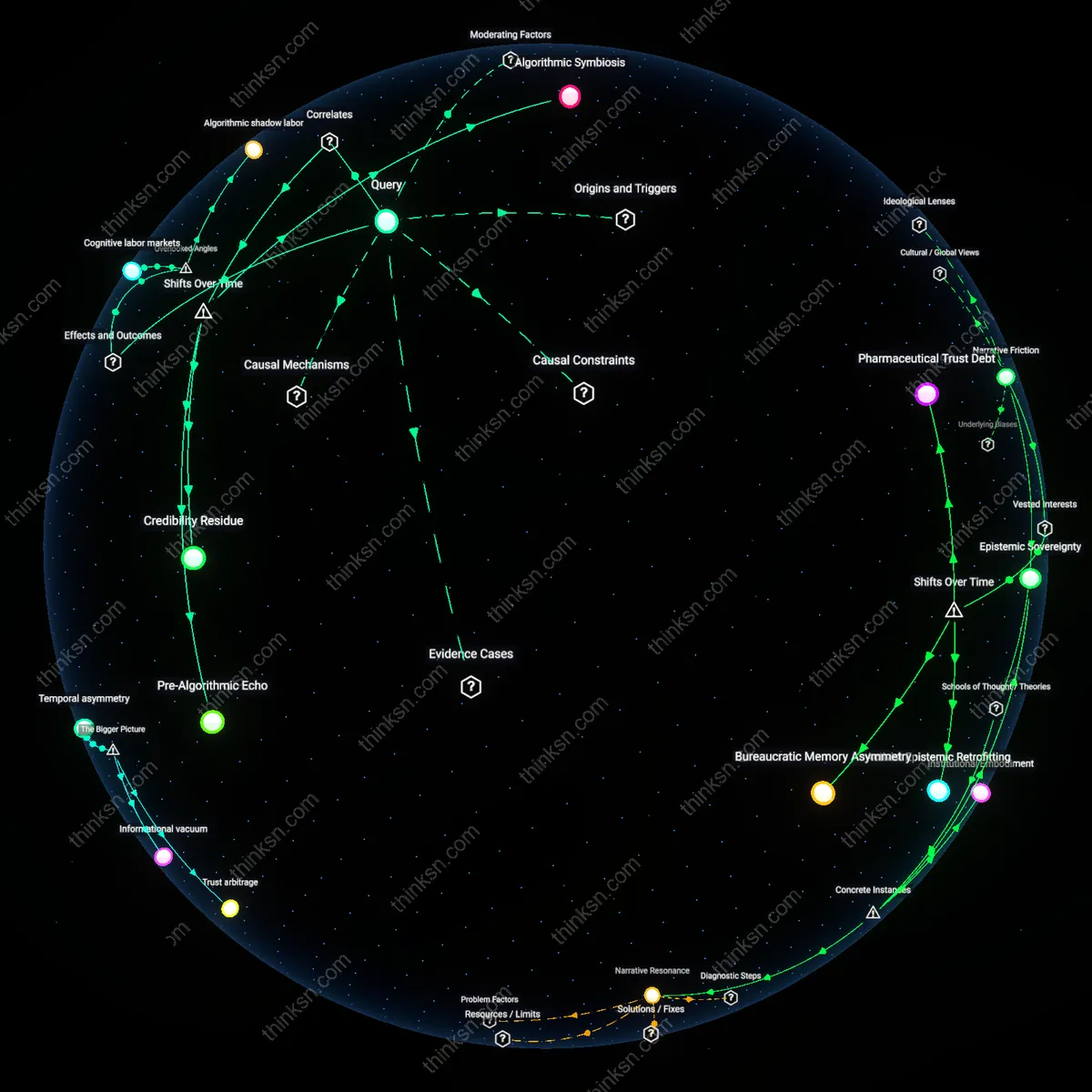

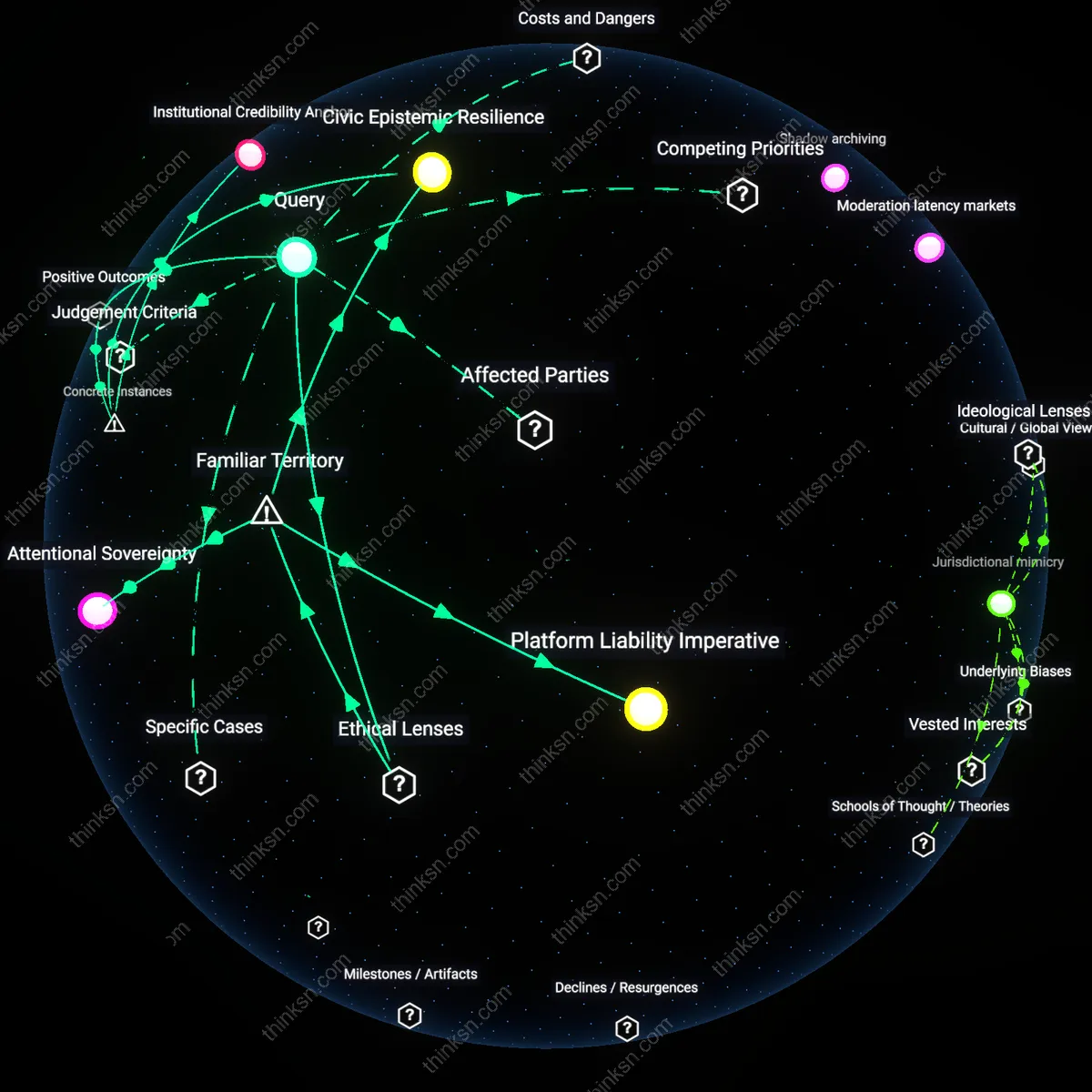

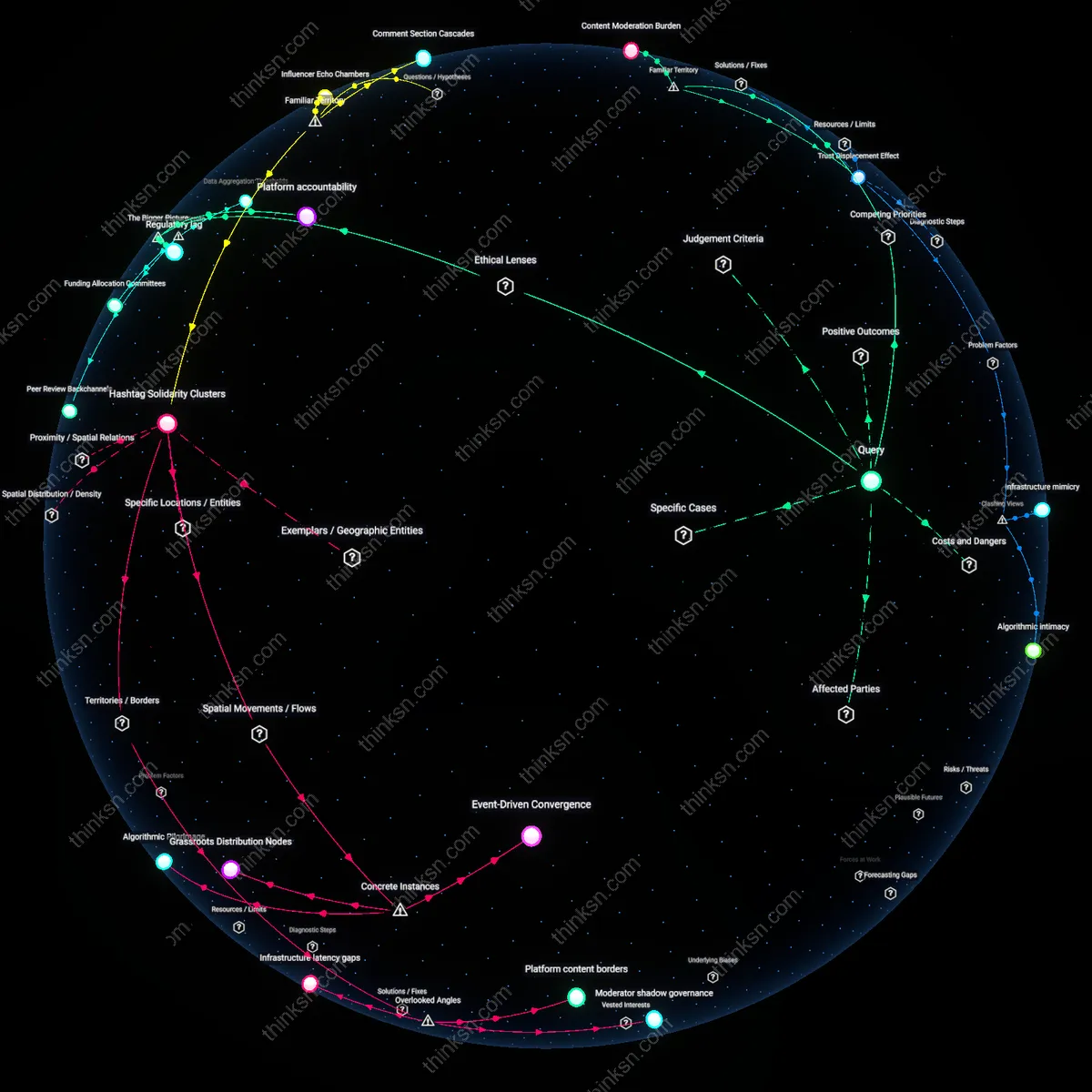

Attentional Asymmetry

The platform is primarily responsible for the spread of algorithmic misinformation because its ranking systems amplify content based on engagement rather than accuracy, creating a structural bias toward emotionally charged or sensational claims. This mechanism operates through machine learning models trained on user interaction data—clicks, dwell time, shares—which reward content that triggers impulsive attention, regardless of veracity. The non-obvious consequence is that even factually weak misinformation spreads faster not because users seek falsehoods, but because the platform’s design privileges psychological stickiness over epistemic reliability, locking in a feedback loop where credibility loses to arousal in the zero-sum allocation of visibility.
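The feedback loop described above can be sketched as a toy simulation. This is a minimal illustration under stated assumptions, not a model of any real ranking system: the `Item` class, the `accuracy` and `click_rate` values, and the proportional-exposure rule are all hypothetical. It allocates visibility purely by accumulated engagement and never consults accuracy.

```python
from dataclasses import dataclass


@dataclass
class Item:
    name: str
    accuracy: float          # hypothetical reliability score; the ranker never reads it
    click_rate: float        # hypothetical chance that an impression turns into engagement
    engagement: float = 1.0  # accumulated engagement signal, equal for all items at the start


def exposure_shares(items):
    """Split visibility in proportion to accumulated engagement."""
    total = sum(item.engagement for item in items)
    return {item.name: item.engagement / total for item in items}


def simulate(items, rounds, impressions=1000):
    """Run the loop: exposure produces engagement, which sets the next round's exposure."""
    for _ in range(rounds):
        shares = exposure_shares(items)
        for item in items:
            # Expected engagement this round; accuracy plays no part in the update.
            item.engagement += impressions * shares[item.name] * item.click_rate
    return exposure_shares(items)


if __name__ == "__main__":
    catalog = [
        Item("sober-report", accuracy=0.9, click_rate=0.05),
        Item("sensational-claim", accuracy=0.2, click_rate=0.15),
    ]
    for name, share in simulate(catalog, rounds=10).items():
        print(f"{name}: {share:.2f}")
```

Because the total share of visibility always sums to one, any gain for the stickier item is a loss for the more accurate one, which is the zero-sum dynamic the paragraph points to. In this sketch the gap widens every round even though both items start with identical engagement.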

Incentive Distension

Content creators bear foundational responsibility for algorithmic misinformation because the profitability of digital content has decoupled from informational integrity and attached to audience reach, enabling low-cost, high-reach fabrication. This shift is systemically sustained by platform ad-revenue models that reward volume and virality, incentivizing creators to optimize for algorithmic favor rather than truthfulness, especially in jurisdictions with weak media regulation. The underappreciated dynamic is that the economic viability of misinformation stems not from consumer demand, but from a structural glut in content supply where ethical production cannot compete with algorithm-tailored deception on cost, speed, or emotional resonance.

Cognitive Offloading

Users are complicit in the proliferation of algorithmic misinformation because their habitual reliance on search engines as neutral arbiters of truth displaces personal epistemic diligence, treating algorithmic results as de facto validation. This cognitive shortcut is systemically enabled by platforms that design interfaces to obscure editorial mechanisms, making algorithmic curation feel like objective discovery rather than a value-laden selection process. The overlooked consequence is that users, in outsourcing judgment to systems they do not understand, unwittingly reinforce the legitimacy of misinformation when they engage with or share top-ranked results—thus converting passive consumption into active behavioral signals that further entrench false content in search hierarchies.
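One way to picture how trust in top-ranked results entrenches them is a toy position-bias model. The sketch below assumes, purely for illustration, that the chance of a click depends only on a result's rank and not on its content. The function names, the `position_weights` values, and the `.example` result names are hypothetical and do not describe any search engine's actual behavior.

```python
def rerank(order, clicks):
    """Sort results by accumulated clicks, highest first (ties keep their current order)."""
    return sorted(order, key=lambda result: clicks[result], reverse=True)


def simulate_position_bias(results, position_weights, rounds, searches=1000):
    """Feed rank-driven clicks back into the ranking for a number of rounds."""
    clicks = {result: 0.0 for result in results}
    order = list(results)
    for _ in range(rounds):
        for rank, result in enumerate(order):
            # Expected clicks depend only on rank: users trust whatever is shown first.
            clicks[result] += searches * position_weights[rank]
        order = rerank(order, clicks)
    return order, clicks


if __name__ == "__main__":
    initial = ["debunked-claim.example", "fact-check.example", "reference.example"]
    final_order, totals = simulate_position_bias(initial, [0.30, 0.15, 0.05], rounds=5)
    print(final_order)
    print(totals)
```

Under these assumptions, whichever result happens to start on top collects the most clicks and therefore stays on top. Reversing the initial list reverses the final ranking, so in this sketch the hierarchy reflects prior placement rather than any property of the content.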

Algorithmic Amplification

Google bears responsibility for amplifying algorithmic misinformation through its ranking and recommendation mechanisms, as illustrated by the 2016 controversy in which the recommendation algorithm of YouTube, a Google subsidiary, systematically elevated conspiracy theory videos, such as those promoting the Pizzagate hoax, into prominent visibility. Because Google’s machine learning systems prioritize engagement metrics like watch time and click-through rates over factual accuracy or societal harm, the platform structurally favors emotionally charged, misleading content, effectively outsourcing editorial judgment to algorithms trained for profit, not truth. This reveals the non-obvious reality that platform-designed incentive architectures, not individual malice, can become engines of large-scale misinformation diffusion, even absent intentional deception by creators or users.

Cognitive Exploitation

Content creators are primarily responsible for the spread of algorithmic misinformation when they deliberately craft emotionally manipulative narratives that exploit cognitive biases to maximize reach, as seen in the case of Alex Jones and his website InfoWars, which repeatedly published fabricated stories—like the Sandy Hook massacre being staged—that gained significant traction on Google and Bing. These creators understand how algorithmic systems reward sensationalism and emotional arousal, and they engineer content accordingly, turning verifiable falsehoods into widely indexed search results. This demonstrates that malign actors who master the semiotics of outrage can game ostensibly neutral search infrastructures, revealing that algorithmic systems are not inherently deceptive but become weaponized through intentional, epistemic bad-faith production at scale.

Epistemic Passivity

Individual users bear responsibility for the propagation of algorithmic misinformation when their persistent engagement with false or dubious content trains search engines to treat those queries and sources as credible. Search behavior following the 2020 U.S. election exemplifies this: millions repeatedly queried variations of 'election fraud proof,' and the resulting aggregate user data reinforced the visibility of debunked claims. Search engines like Google rely on user click patterns and search volume as proxies for relevance and demand, so collectively irrational or ideologically motivated querying habits effectively signal importance to the algorithm. The underappreciated reality is that user behavior is not merely a passive response to content but an active computational input that shapes the epistemic landscape, making mass gullibility a formative force in algorithmic visibility.

Architectural Complicity

The platform is primarily responsible for the spread of algorithmic misinformation on search engines because the design of its recommendation algorithms after 2015 prioritized engagement metrics over information integrity, enabling low-credibility content to achieve high visibility. This shift—from indexing as a neutral retrieval system to curating content through behavioral feedback loops—transformed Google and Bing into de facto arbiters of epistemic authority, particularly evident in how YouTube’s recommendation engine amplified conspiracy theories during the 2016 U.S. election. The non-obvious reality is that platforms became structurally incentivized to promote controversy not because users demanded it per se, but because the algorithmic architecture evolved to reward attention persistence, making misinformation a systemic byproduct rather than an exception.

Producer Incentive Drift

Content creators bear primary responsibility for spreading algorithmic misinformation because platforms lowered their monetization thresholds after the mid-2010s, enabling financially driven actors, like the fake-news site operators in Veles, North Macedonia, to game search visibility through sensationalist and false narratives. As YouTube’s Partner Program and Google AdSense made micro-revenue streams accessible to entrants facing low barriers, a new class of entrepreneurial producers emerged whose content strategies were calibrated specifically to exploit algorithmic distribution patterns, particularly around health myths and political conspiracies during the 2020 pandemic. The critical shift was not merely in creator intent but in the economic realignment of content production, where credibility became an operational cost rather than a baseline, revealing a market logic that now fuels algorithmic virality.

Cognitive Burden Transfer

Users are ultimately responsible for the spread of algorithmic misinformation because platforms offloaded editorial discernment onto individuals during the 2010s, positioning search results as ‘neutral’ outputs while retaining design opacity. This normalized epistemic self-reliance in environments like Google Search during crises such as the 2013 Boston Marathon bombing, when false identifications trended. As the architecture of trust shifted from institutional gatekeeping to algorithmic output, users were silently cast as arbiters of truth without the tools or training to perform that role, creating a feedback loop where repeated exposure normalized fringe theories. The underappreciated consequence of this transition is that user ‘choice’ in information consumption now operates within a choreographed illusion of neutrality, masking the systemic delegation of accountability.