Is Securing Digital Identity Futile with Private Cloud Registries?

Analysis reveals 8 key thematic connections.

Key Findings

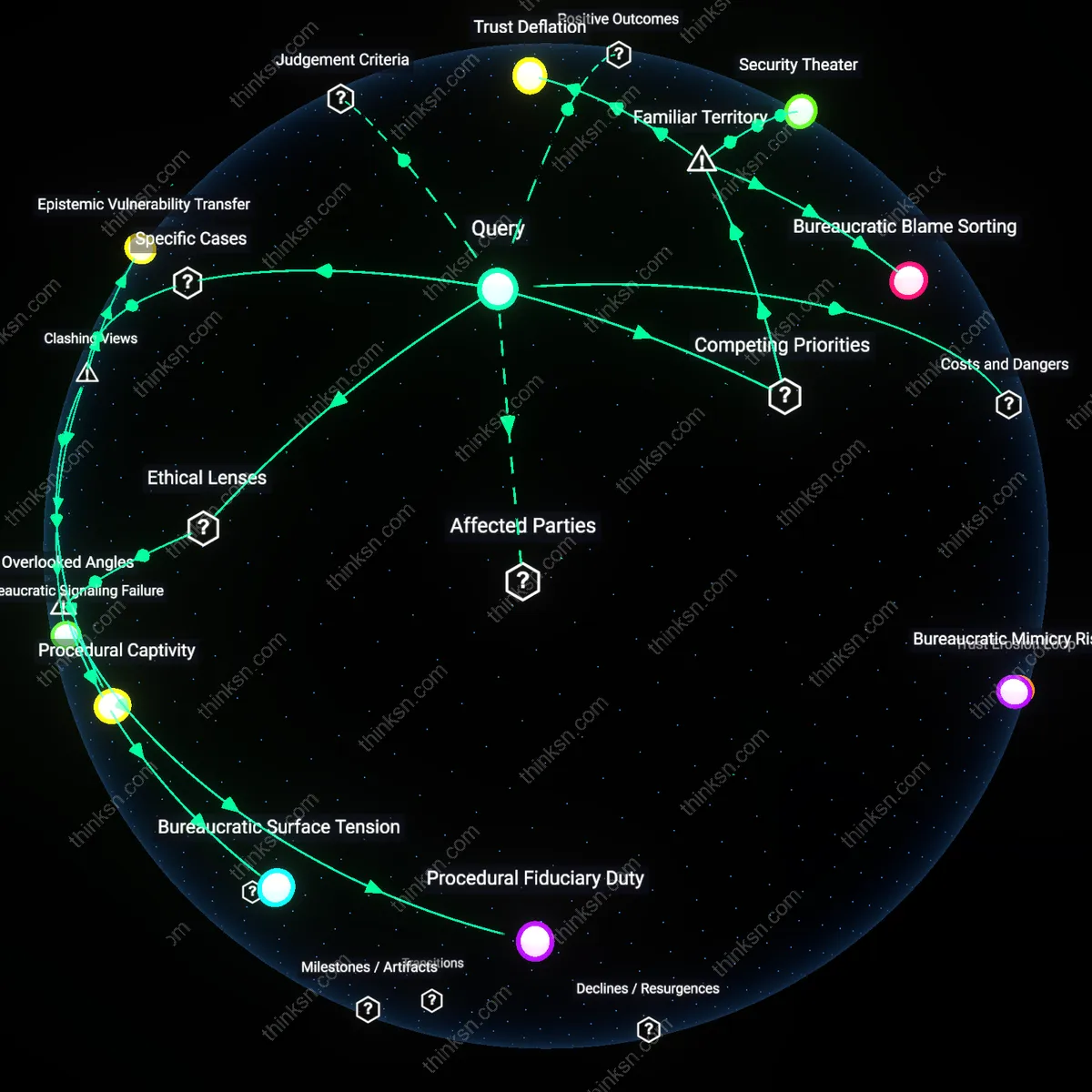

Institutional Betrayal

Yes, individuals can realistically be held responsible for securing their digital identity, but only in the sense that current systems are built to assign them that responsibility. National identity frameworks like India’s Aadhaar system outsource authentication infrastructure to private vendors such as Tata Consultancy Services, which places low-income rural users, who often lack digital literacy and stable connectivity, at systemic risk when access failures occur. This responsibility regime obscures how design mandates from state authorities combine with proprietary black-box systems to shift accountability onto citizens, who face real exclusion from healthcare and welfare if biometric authentication fails. The non-obvious point is that individual responsibility functions less as practical security guidance than as a discursive alibi for governments relying on opaque corporate systems: the harm stems not from user negligence but from deliberate structural delegation. What this exposes is not insecurity per se but the routinization of institutional betrayal, in which trust in governance is undermined by externally managed failure points.

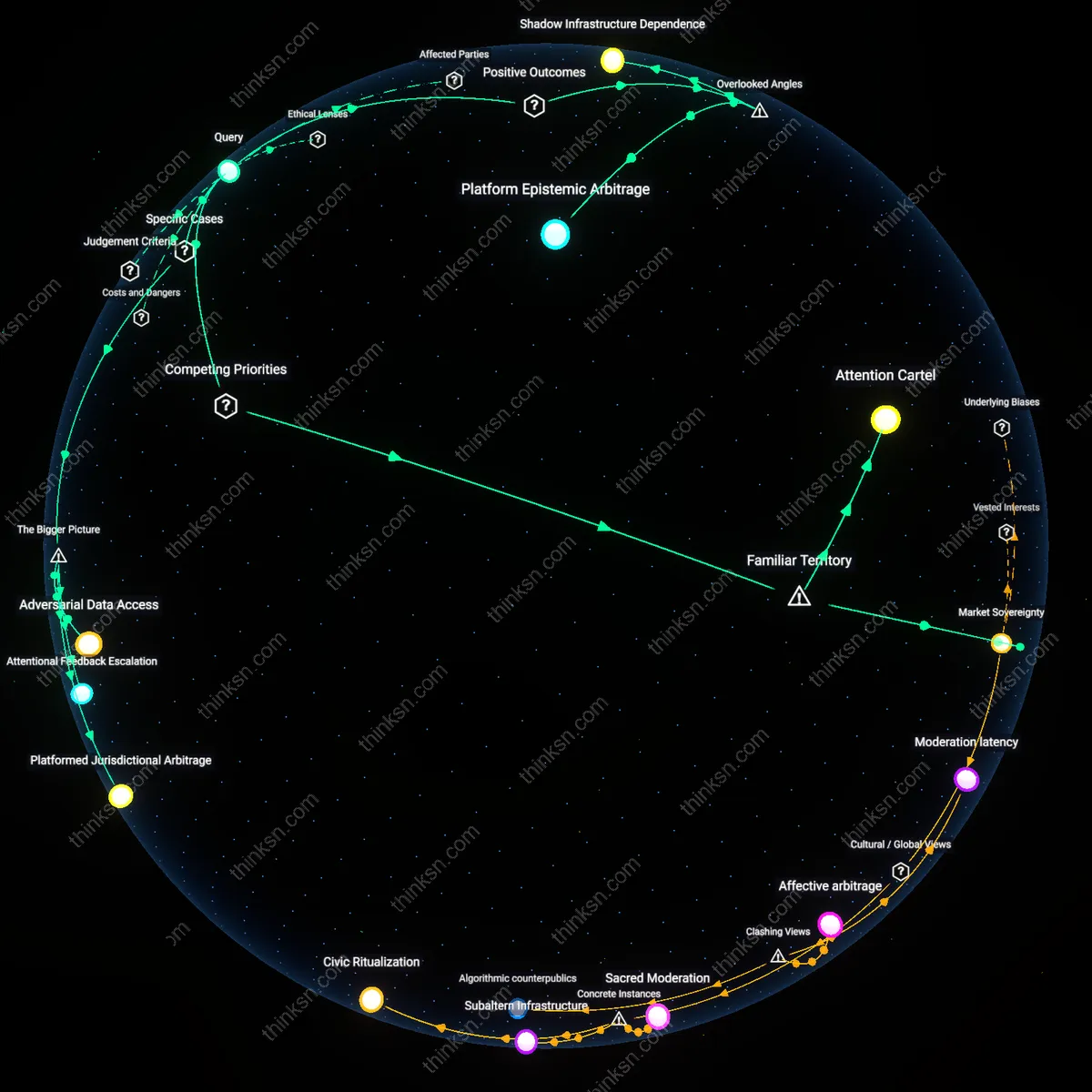

Security Theater

No, individuals cannot realistically be held responsible. Estonian e-Residency, often heralded as a model of digital governance, requires holders to manage cryptographic identity keys on government-issued tokens, while backend authentication depends on private cloud providers like Microsoft Azure, whose infrastructure changes and outages are beyond user control. Users therefore face penalties for token mismanagement yet are powerless against systemic disruptions originating in proprietary platforms. The dominant assumption that digital self-sovereignty empowers its users collapses when the foundational layer of trust operates outside both public scrutiny and end-user agency, which leaves only a performative security framework. This disconnect between symbolic responsibility and actual control produces the political fiction known as security theater, in which rituals of individual accountability mask centralized, unaccountable backend power.

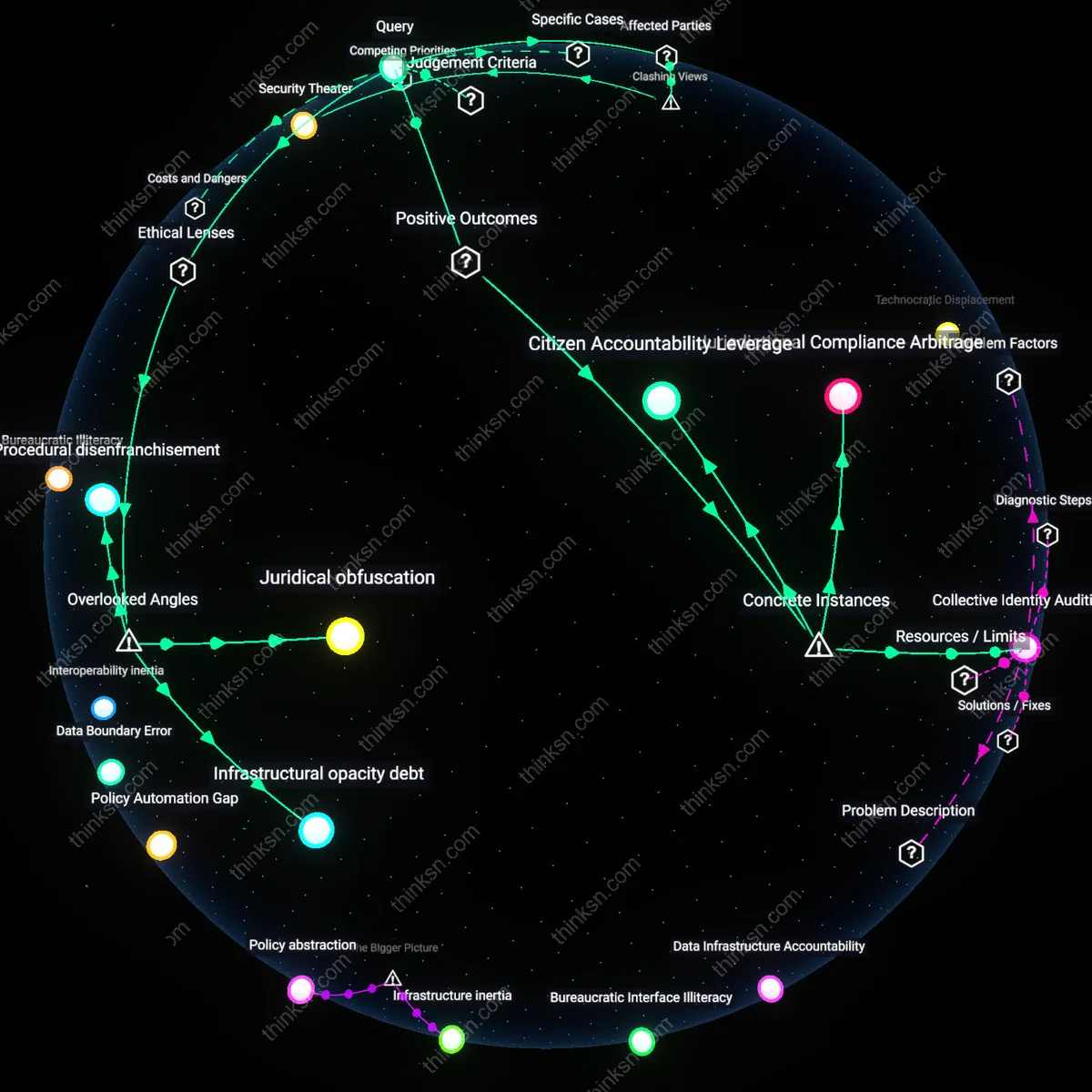

Citizen Accountability Leverage

The Indian Aadhaar system’s mandatory linkage to bank accounts and tax filings has compelled private cloud vendors like Tech Mahindra to implement audit-ready identity trails, enabling individuals to challenge erroneous denials of services through verifiable digital footprints. This created a feedback loop where citizens, despite relying on opaque infrastructure, could invoke procedural accountability by referencing timestamped authentication logs, thus transforming passive identity storage into an active instrument for administrative redress. The non-obvious insight is that individual responsibility becomes operational not through technical control but through legally enforceable access to system-generated records.
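To make the idea of a verifiable, timestamped authentication trail concrete, here is a minimal sketch of a hash-chained log. It is an illustration only: the source does not describe how Aadhaar vendors structure their logs, and hash chaining is a technique swapped in here to show one way a record can be made checkable by a third party. The names `LogEntry`, `append_entry`, `verify_log`, and the identifier `resident-001` are all hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, replace

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


@dataclass(frozen=True)
class LogEntry:
    timestamp: str   # ISO 8601 time of the authentication attempt
    subject: str     # hypothetical pseudonymous identifier
    outcome: str     # e.g. "success" or "biometric_mismatch"
    prev_hash: str   # hash of the preceding entry (or GENESIS)
    entry_hash: str  # SHA-256 over this entry's fields plus prev_hash


def _digest(timestamp, subject, outcome, prev_hash):
    # Serialize the fields deterministically before hashing.
    payload = json.dumps([timestamp, subject, outcome, prev_hash]).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(log, timestamp, subject, outcome):
    """Return a new log with one more entry chained to the previous one."""
    prev_hash = log[-1].entry_hash if log else GENESIS
    entry_hash = _digest(timestamp, subject, outcome, prev_hash)
    return log + [LogEntry(timestamp, subject, outcome, prev_hash, entry_hash)]


def verify_log(log):
    """Check that every entry links to its predecessor and is unmodified."""
    prev_hash = GENESIS
    for entry in log:
        if entry.prev_hash != prev_hash:
            return False
        expected = _digest(entry.timestamp, entry.subject, entry.outcome, entry.prev_hash)
        if entry.entry_hash != expected:
            return False
        prev_hash = entry.entry_hash
    return True


if __name__ == "__main__":
    log = append_entry([], "2024-01-05T09:30:00Z", "resident-001", "biometric_mismatch")
    log = append_entry(log, "2024-01-05T09:32:10Z", "resident-001", "success")
    print(verify_log(log))       # True

    # Rewriting a past outcome without recomputing the chain is detectable.
    tampered = [replace(log[0], outcome="success"), log[1]]
    print(verify_log(tampered))  # False
```

Under these assumptions, a citizen contesting a denial of service would not need control over the infrastructure, only access to the entries and the ability to have the chain checked independently, which is the sense in which responsibility becomes operational through records rather than technical control.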

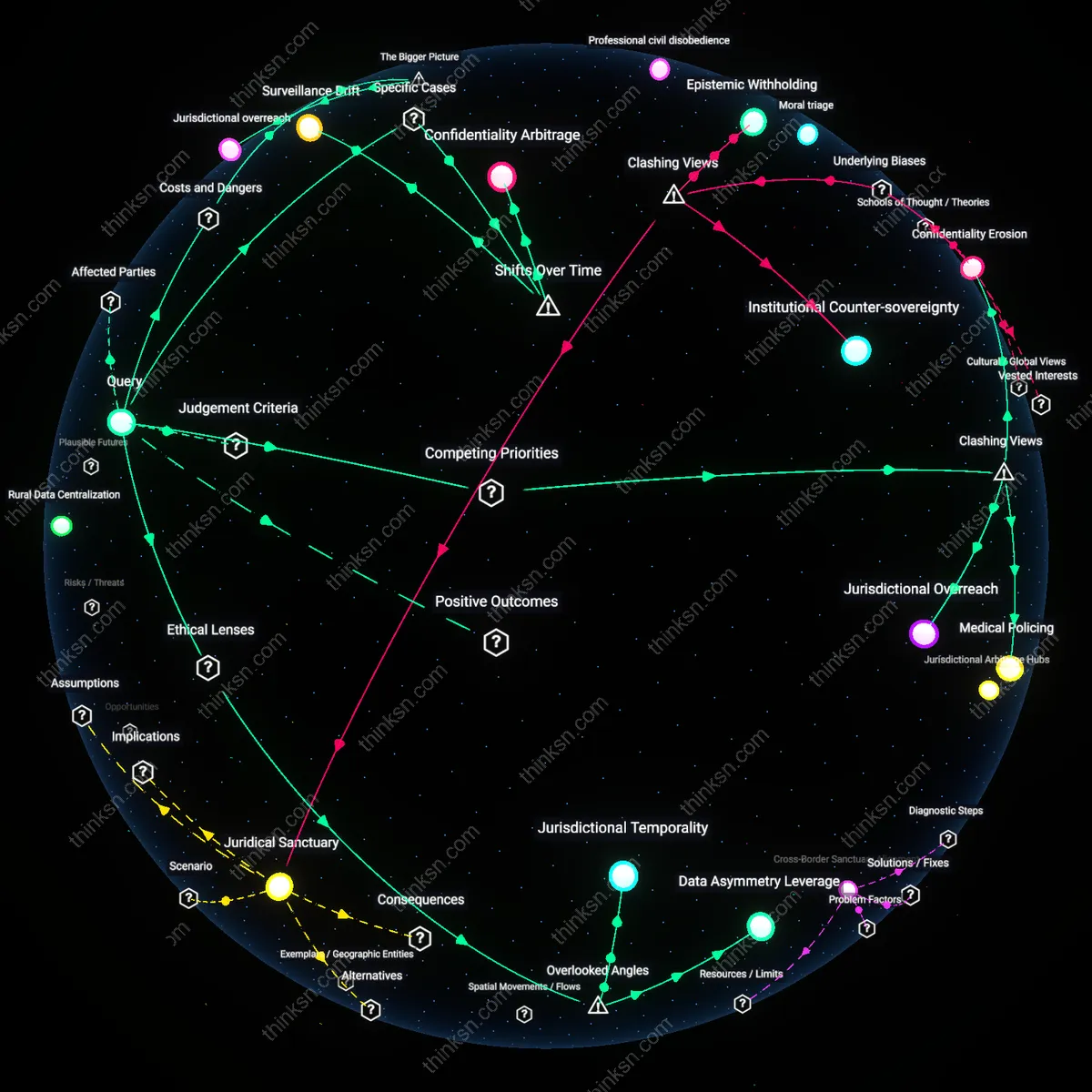

Jurisdictional Compliance Arbitrage

When Estonia introduced its e-Residency program backed by Microsoft Azure, it delegated the infrastructure security of national digital identities to a U.S.-based cloud provider, yet retained regulatory oversight through strict data protection mandates and real-time monitoring agreements. This arrangement allowed individuals to benefit from globally interoperable identity credentials while the state enforced transparent accountability standards, demonstrating that personal responsibility is amplified when users act as conduits for transnational regulatory pressure rather than sole custodians. The underappreciated dynamic is that individuals gain agency not by securing data directly but by leveraging geopolitical compliance tensions to ensure system-wide robustness.

Collective Identity Auditing

Following the 2020 U.S. Census’s migration to AWS-hosted data collection platforms, community advocacy groups in Detroit and Phoenix organized localized ‘identity verification drives’ to cross-check self-reported responses against federal identity databases, ensuring accurate representation in apportionment. This grassroots auditing—enabled by public API gateways mandated by the Census Bureau—transformed individual users into distributed validators of systemic integrity, where personal identity management served a civic function beyond personal security. The overlooked outcome is that individual responsibility scales into collective resilience when public processes institutionalize participatory validation points within opaque systems.

Juridical Obfuscation

Individuals cannot be ethically held responsible for securing digital identity because government delegation of critical identity functions to private cloud providers creates juridical obfuscation: a condition in which legal accountability is structurally dissolved across public-private boundaries. Estonia’s X-Road system illustrates this, since liability for data integrity defaults to undefined contractual terms between state agencies and AWS/Azure, shielding both from tort claims under the EU eIDAS Regulation. The arrangement masks the actual locus of control and erases individual recourse. It reframes shortfalls in digital self-sovereignty not as failures of user diligence but as the systemic design of unactionable responsibility. This dimension is overlooked because public discourse focuses on password hygiene while ignoring how sovereignty is contractually outsourced.

Infrastructural Opacity Debt

Individual responsibility for digital identity is untenable because of accumulated infrastructural opacity debt: unresolved dependencies on undocumented APIs, proprietary logging practices, and jurisdictionally fragmented data flows in systems like India’s Aadhaar-linked cloud architecture. This debt renders personal security decisions epistemically blind, since users cannot audit or anticipate failure points embedded in third-party infrastructure maintained by firms like Tata Consultancy Services under government mandate. The hidden technical debt breaks the feedback loop required for responsible agency. It is a critical blind spot because most ethical assessments assume that system behavior is transparent rather than deliberately concealed for 'national security' or 'commercial confidentiality'.

Procedural Disenfranchisement

People cannot meaningfully secure their digital identity under state-private cloud regimes because of procedural disenfranchisement: systematic exclusion from standard-setting forums like ISO/IEC JTC 1, where cloud identity protocols are codified by unelected industry representatives from Amazon, Microsoft, and national standards bodies. This exclusion prevents civic input into the rules that govern identity authenticity, as demonstrated by the lack of public participation in shaping the NIST SP 800-63-3 compliance frameworks that define 'reliable' identity in U.S. federal systems. It delegitimizes the notion of consent in identity management. The dimension is typically ignored because accountability debates assume procedural inclusivity rather than recognizing rule-making as a space captured by a technical elite.