Is AI Calendar Management Saving Us or Sabotaging Our Skills?

Analysis reveals 10 key thematic connections.

Key Findings

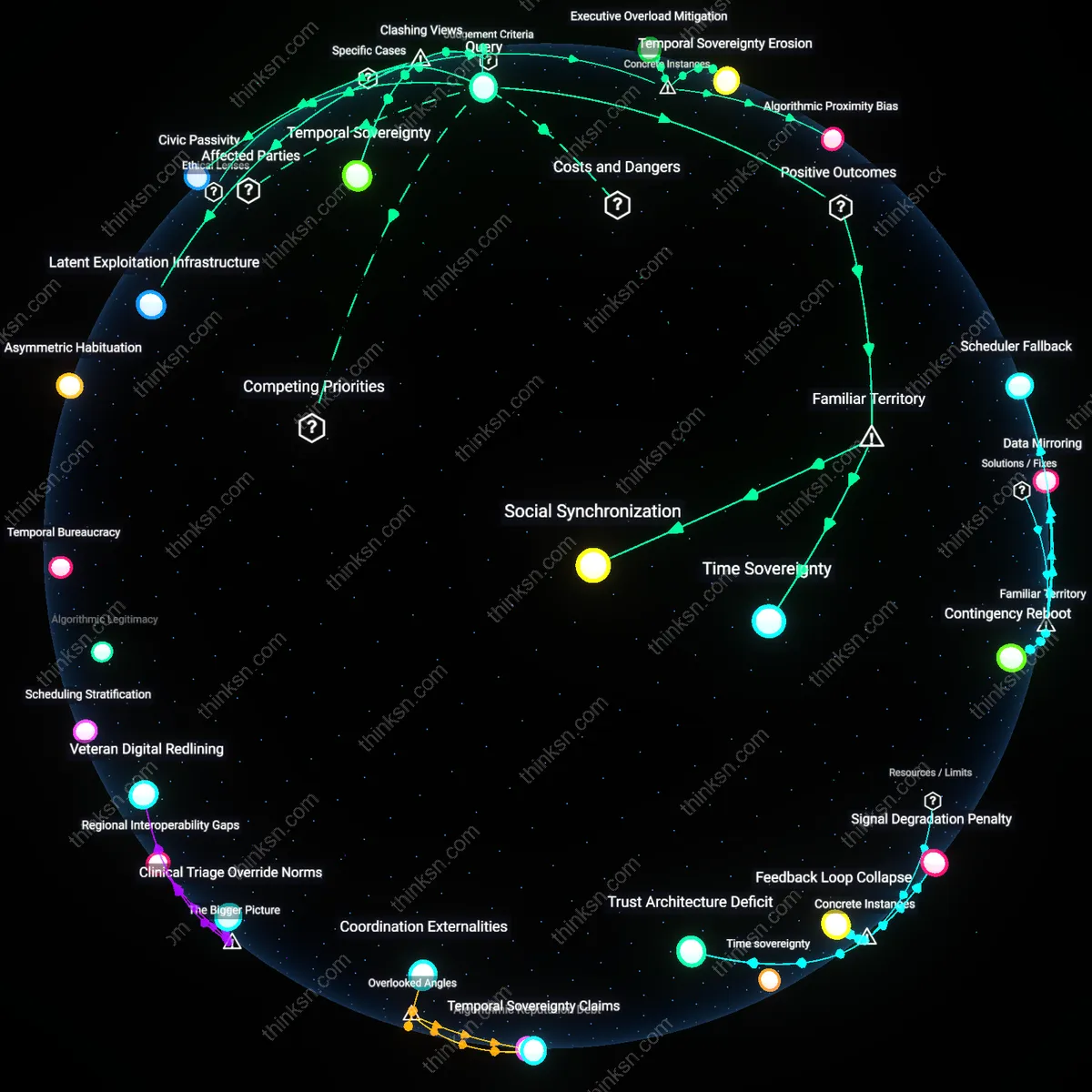

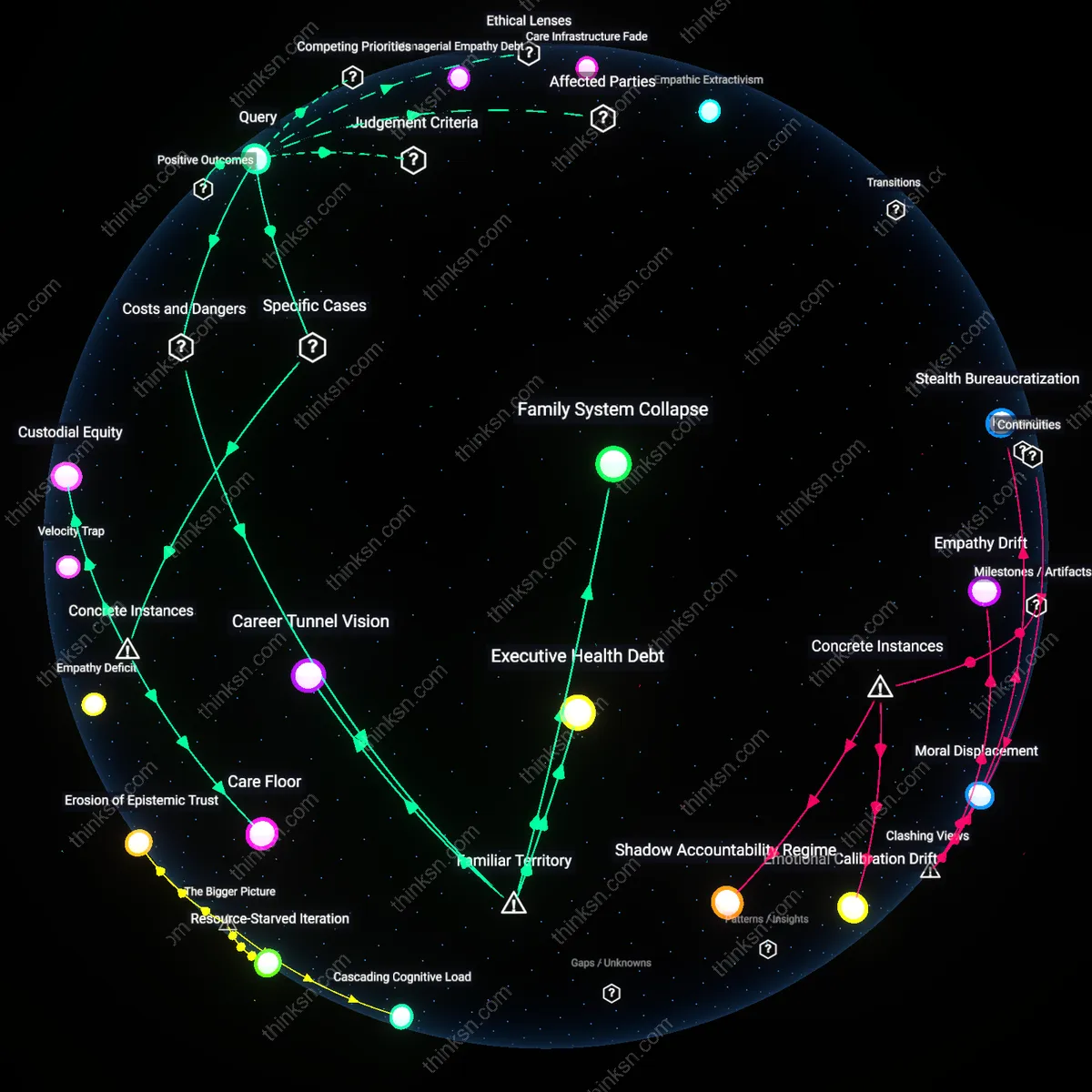

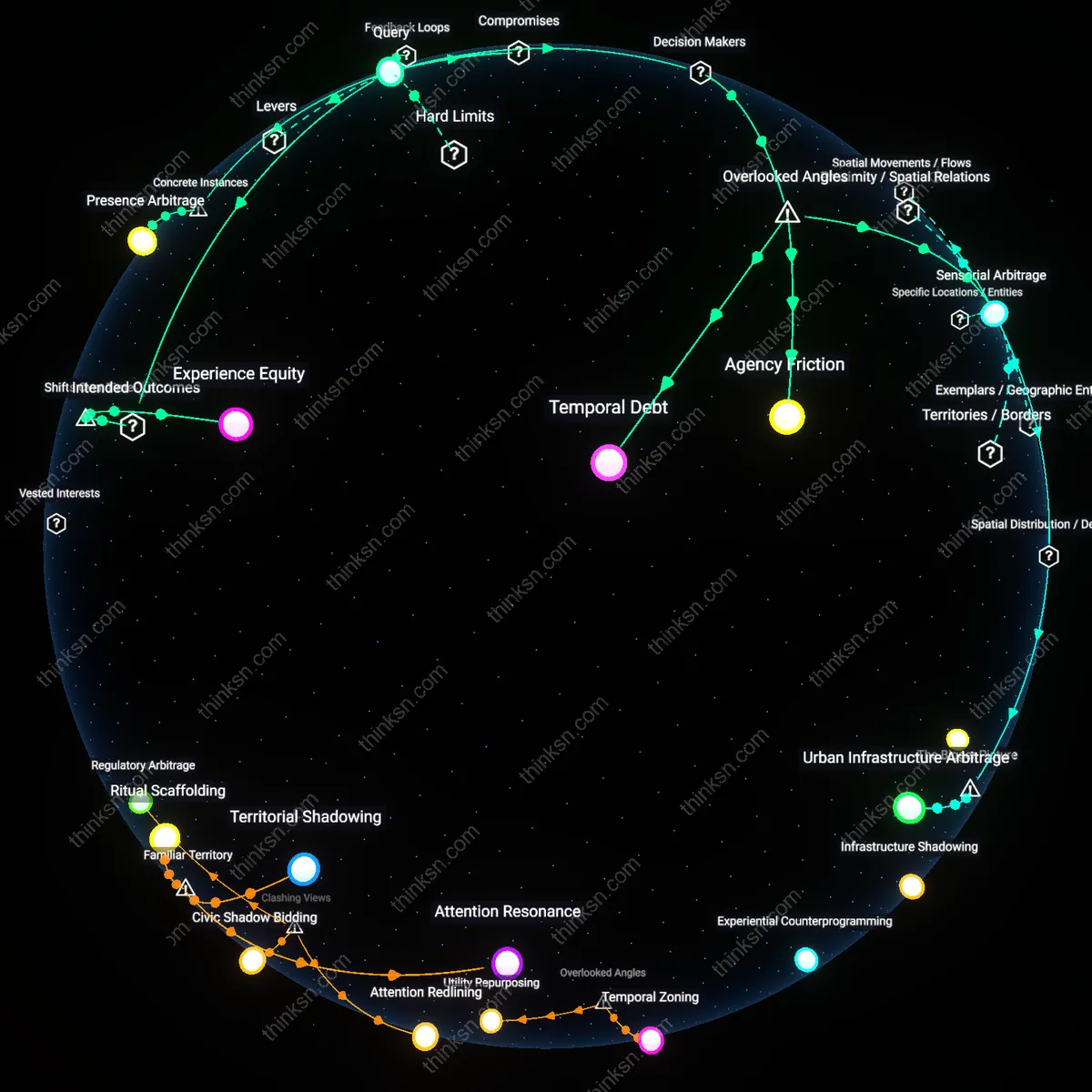

Temporal Sovereignty

Reliance on AI for personal calendar management undermines individual autonomy by replacing human judgment with algorithmic ordering of time, which erodes moral agency in how time is allocated. Users effectively cede authority over the sequence of their own actions to systems optimized for efficiency rather than for their sense of self, so that recurring meetings take priority over unstructured reflection or ethical commitments. This shift normalizes a form of temporal colonization, in which routines are dictated not by personal values but by predictive logic trained on aggregated corporate behavior. The dominant justification, convenience, thus masks a deeper subordination of existential time to instrumental logic.

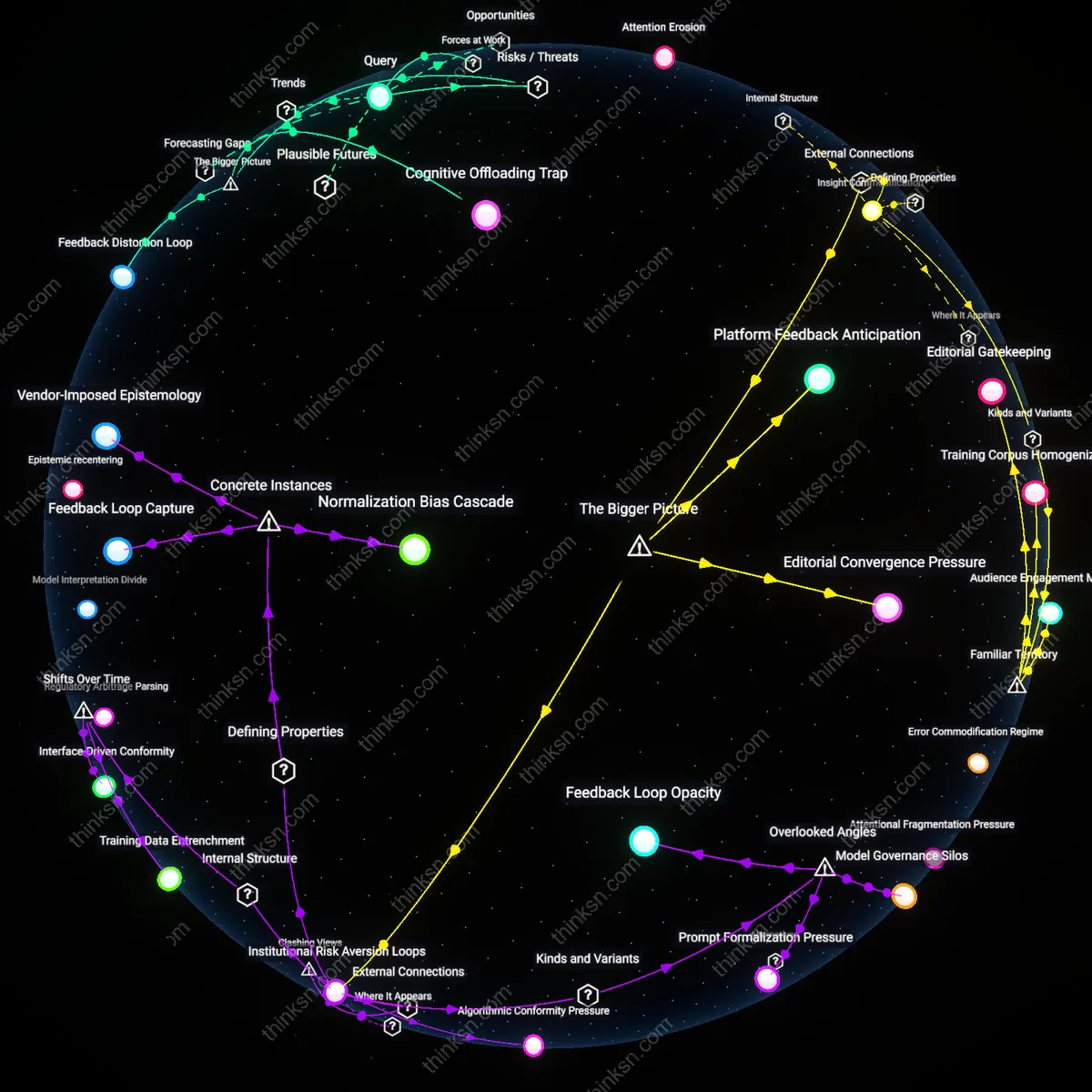

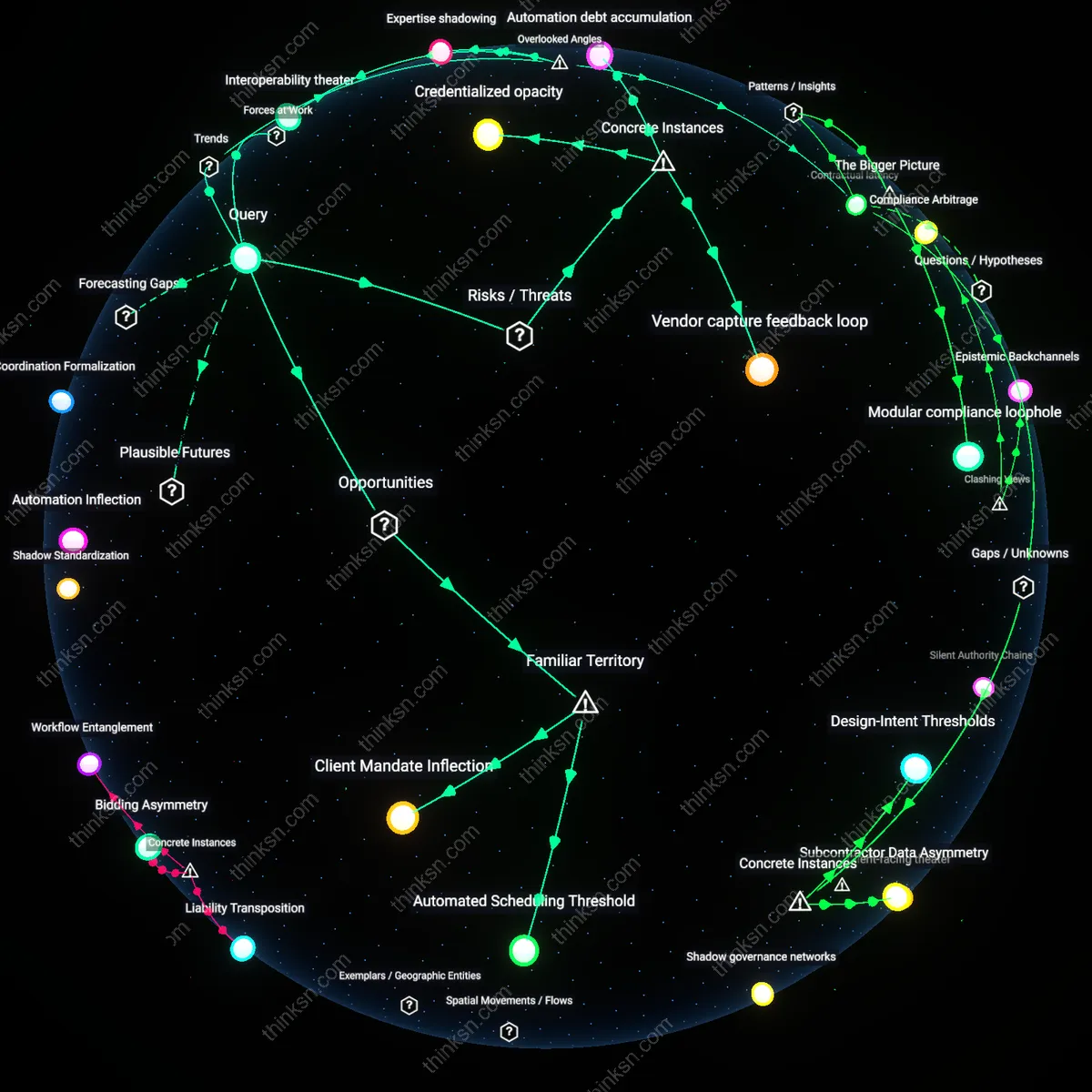

Latent Exploitation Infrastructure

Dependence on AI calendar tools constitutes an early-stage data extraction regime that transforms private scheduling patterns into monetizable behavioral proxies, whether or not users experience any immediate harm. These systems accumulate granular, longitudinal records of availability, responsiveness, and the distribution of social capital, information that can later be repurposed for enterprise optimization or third-party influence modeling. The non-obvious risk is not malfunction or inefficiency but the quiet institutionalization of personal temporal data as a fungible asset, which recasts the individual not as a beneficiary but as an unwitting supplier of inputs to a broader surveillance economy.

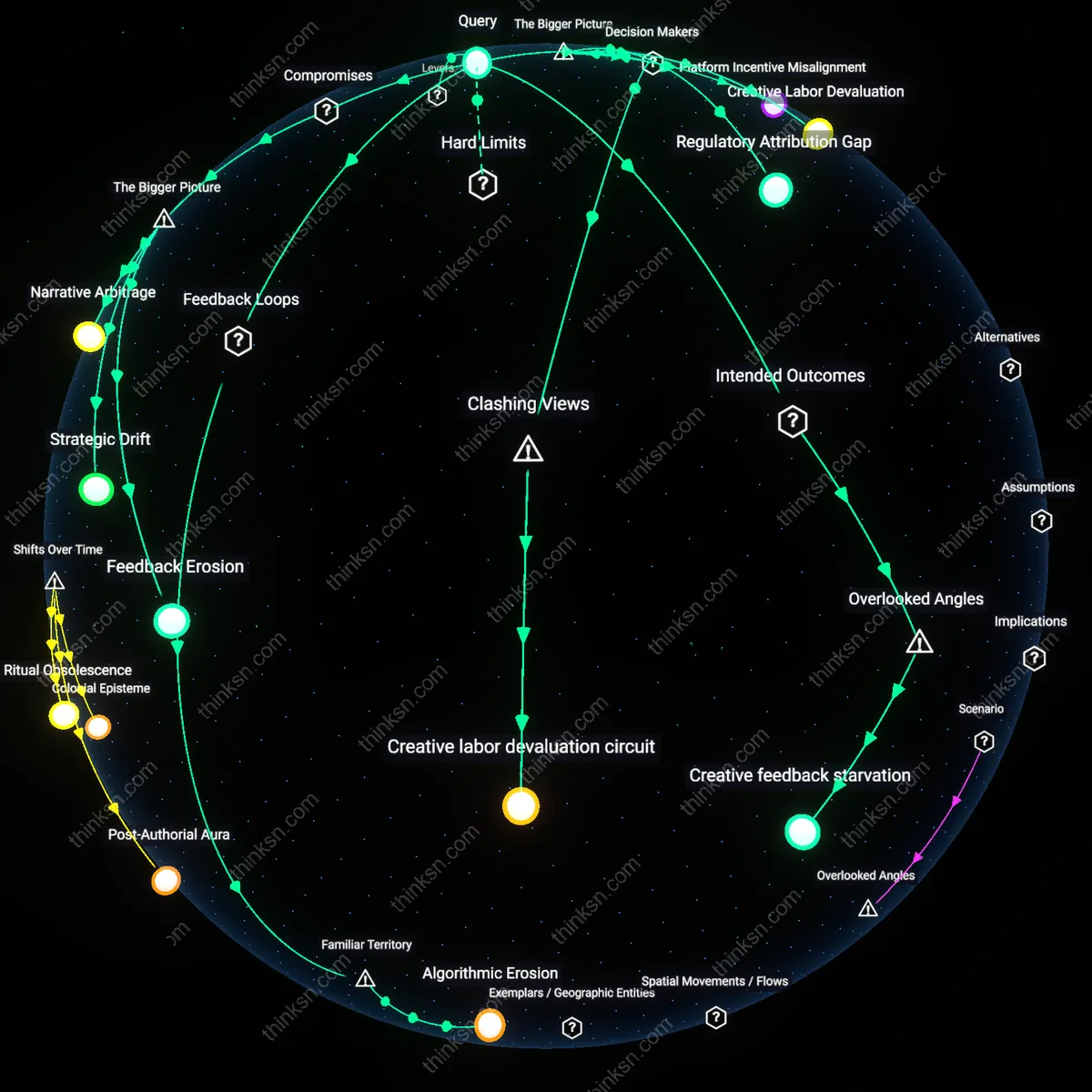

Civic Passivity

Widespread adoption of autonomous scheduling fosters civic disengagement by conditioning individuals to treat coordination as a solved problem that can be outsourced, which weakens their capacity to negotiate shared time collectively. When citizens treat calendar conflicts as technical glitches rather than social dilemmas requiring compromise, they relinquish essential democratic micro-practices, such as consensus-building and ensuring equitable access, that sustain participatory institutions. This erosion of temporal negotiation, often celebrated as seamless efficiency, removes the friction necessary for equitable public life; convenience can thus function as a stealth mechanism of depoliticization.

Time Sovereignty

Using AI to manage personal calendars frees up decision time for high-stakes life choices because it reduces routine cognitive load through automated scheduling heuristics tied to behavioral patterns, email context, and priority tagging. Micro-decisions, such as a meeting’s duration or timing, shift from the user to a predictive system trained on millions of professional and social scheduling norms. The mechanism operates through integration with platforms like Google Calendar, Microsoft Outlook, and Zoom, where AI parses natural language in emails and messages to propose, reschedule, or even decline meetings based on inferred urgency, past behavior, and stated preferences. What is underappreciated is that users do not just gain time; they gain recursive time, in which the saved minutes compound into space for reflection, strategy, or rest. Personal agency is thereby redefined not as doing more, but as deciding more meaningfully.

Social Synchronization

Relying on AI for personal scheduling strengthens coordination in networked environments because it standardizes scheduling etiquette across organizational and cultural boundaries, using protocols learned from aggregated user data. Platforms like Calendly or Outlook's AI assistant automatically negotiate times across parties by exposing only availability windows and preferences (e.g., no meetings before 9 a.m.), reducing the back-and-forth that delays collaboration, especially in global teams. The non-obvious outcome is not just efficiency but the emergence of implicit consensus: the AI becomes a neutral third party that enforces scheduling norms, reducing interpersonal friction and power asymmetries (e.g., junior staff deferring to executives) and thereby enabling more equitable participation in time-based decision-making across hierarchies.
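The negotiation step described above, in which each party exposes only free windows plus a shared preference, can be sketched roughly as an interval intersection. This is an illustrative toy and not how Calendly or Outlook actually work; the `Window` type, the function names, and the sample data are all assumptions made for the example.

```python
from datetime import time

# Hypothetical data model: each party exposes only (start, end) free windows
# for one day, sorted and non-overlapping, never the underlying event details.
Window = tuple[time, time]


def intersect(a: list[Window], b: list[Window]) -> list[Window]:
    """Return the overlap between two sorted lists of free windows."""
    out: list[Window] = []
    i = j = 0
    while i < len(a) and j < len(b):
        start = max(a[i][0], b[j][0])
        end = min(a[i][1], b[j][1])
        if start < end:
            out.append((start, end))
        # Advance whichever window ends first.
        if a[i][1] < b[j][1]:
            i += 1
        else:
            j += 1
    return out


def common_windows(parties: list[list[Window]],
                   earliest: time = time(9, 0)) -> list[Window]:
    """Intersect every party's free windows, then apply one shared
    preference (assumed here: no meetings before `earliest`)."""
    common = parties[0]
    for windows in parties[1:]:
        common = intersect(common, windows)
    clipped = [(max(s, earliest), e) for s, e in common]
    return [(s, e) for s, e in clipped if s < e]


# Illustrative data only.
junior = [(time(8, 0), time(10, 30)), (time(13, 0), time(17, 0))]
executive = [(time(8, 30), time(9, 30)), (time(14, 0), time(15, 0))]
print(common_windows([junior, executive]))
```

In this sketch both parties are treated identically: the result depends only on the windows and the shared rule, not on who holds more seniority, which is the sense in which the text calls the scheduler a neutral third party.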

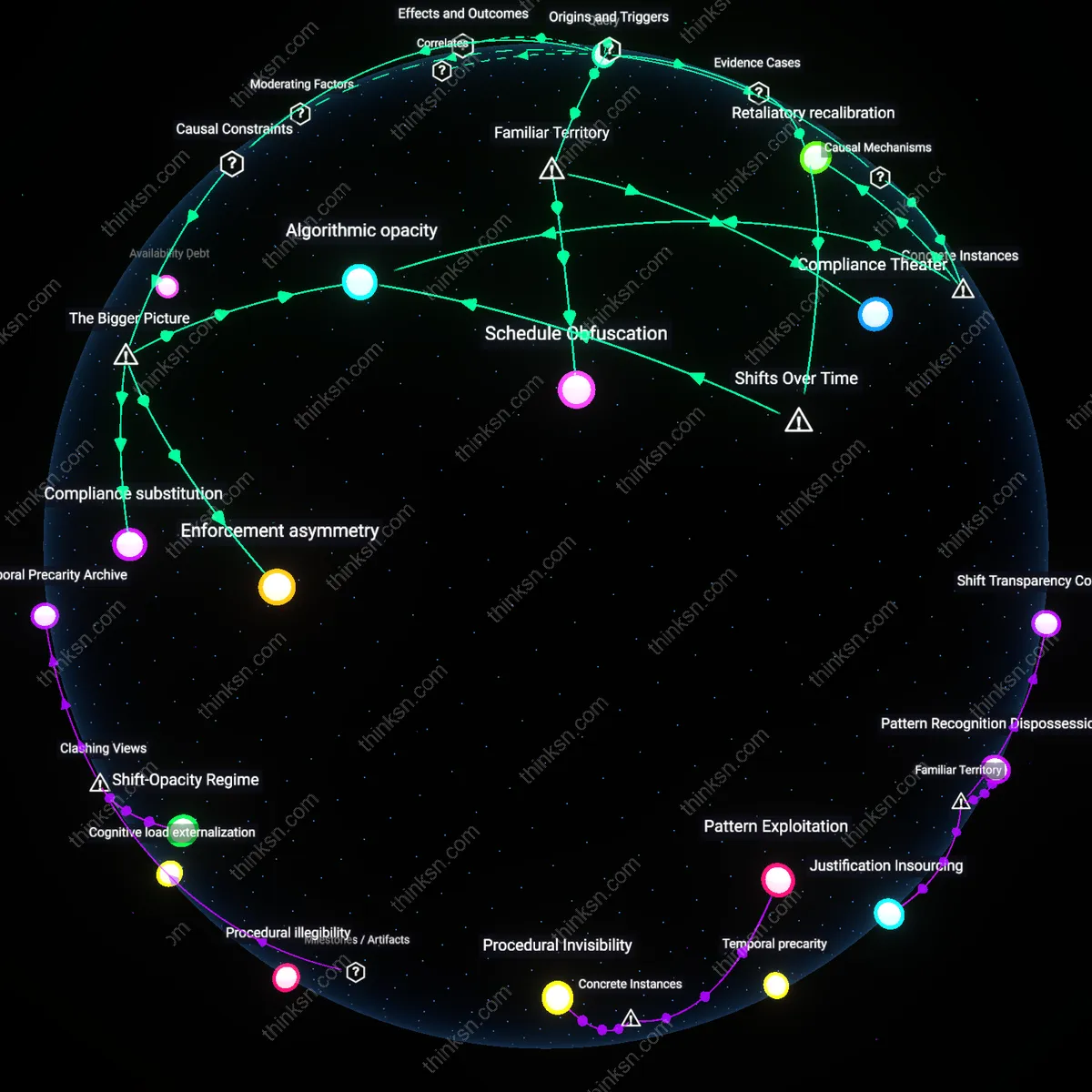

Asymmetric Habituation

Reliance on AI for personal calendar management is unjustified from the standpoint of virtue ethics because it fosters asymmetric habituation: a creeping dependency in which users internalize passivity in scheduling decisions while the AI improves its predictive accuracy through continual feedback, so that skill atrophies on one side only. Standard discourse assumes reciprocal improvement but neglects that humans lose the practice of deliberative prioritization, a cornerstone of phronesis (practical wisdom), while the machine gains no virtue in return; over time, this undermines the development of the user’s character in judging how to spend time. This changes the ethical calculus: AI scheduling is not neutral assistance but a form of moral architecture that quietly displaces ethical growth, leaving the user progressively less capable of autonomous judgment precisely when that capacity is most needed.

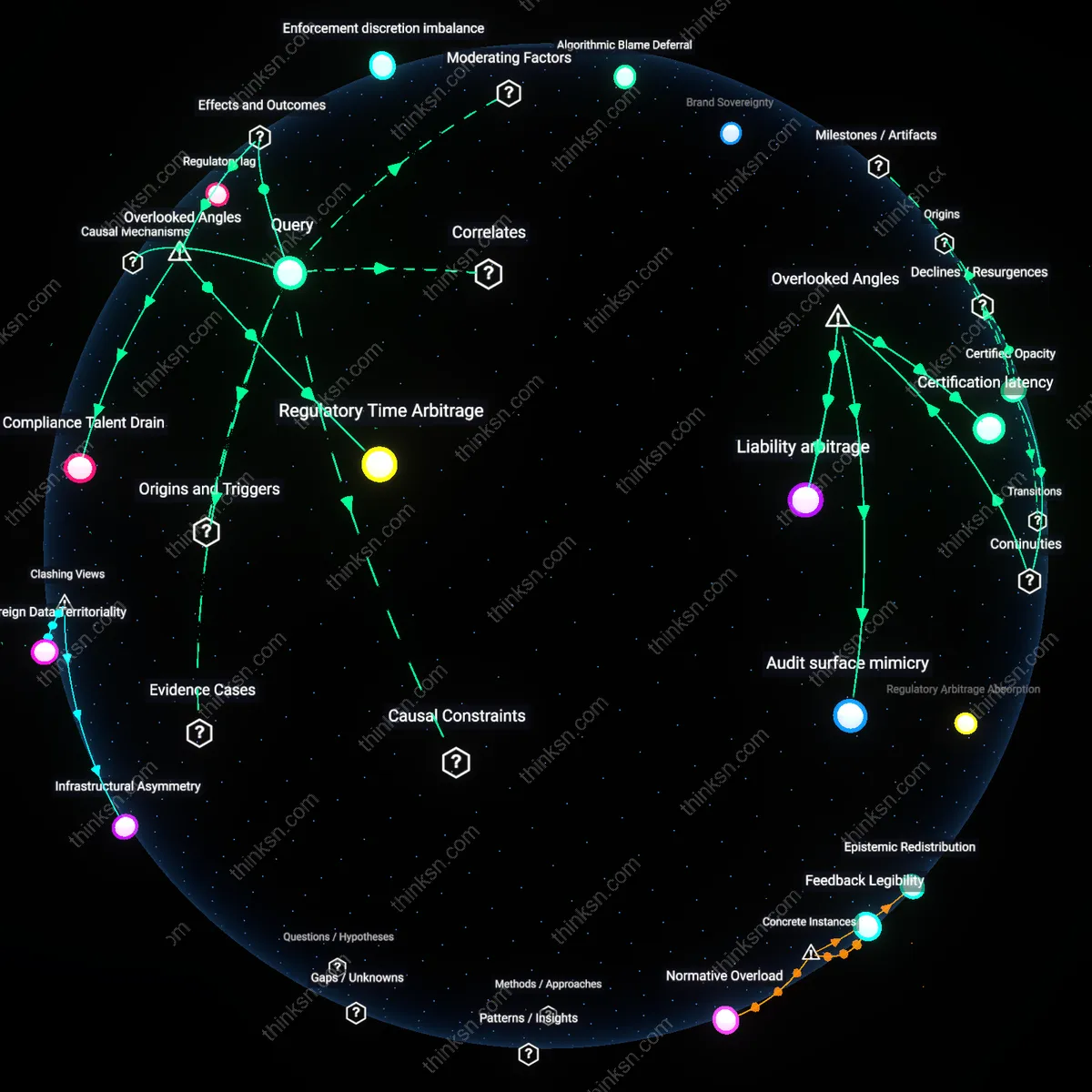

Latent Juridification

Reliance on AI for personal calendar management is normatively dangerous under legal positivism because it introduces latent juridification: the gradual embedding of quasi-legal obligations in soft scheduling commitments generated by AI systems, which begin to function as de facto enforceable norms even in the absence of a formal contract. This is overlooked because most risk assessments focus on data breaches or bias, not on how AI-generated calendar invites, when integrated with workplace monitoring tools like Microsoft Viva or Salesforce dashboards, become evidentiary records that can be weaponized in performance reviews or labor disputes. The significance lies in the shift from voluntary time planning to algorithmically produced, institutionally ratified timelines that constrain freedom more rigidly than informal plans do, effectively privatizing time governance without due process or appeal mechanisms.

Executive Overload Mitigation

Elon Musk’s use of AI-driven calendar triage at Tesla and SpaceX justifies reliance on AI for personal scheduling by routing high-volume, time-sensitive executive decisions through machine-prioritized time allocation. AI systems classify and auto-schedule meetings based on urgency, participant hierarchy, and project criticality, reducing cognitive load for individuals managing operational crises across multiple entities. The justification for AI scheduling thus lies not in autonomy per se but in its capacity to enforce temporal discipline amid executive overload, a mechanism visible in Musk’s documented 8-minute meeting blocks and algorithmic meeting pruning.
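As a rough illustration of triage along the three factors named above (urgency, participant hierarchy, project criticality), the following sketch scores meeting requests and prunes the ones that fall below a cutoff or do not fit the available slots. The weights, the threshold, the `MeetingRequest` fields, and the sample requests are all hypothetical; nothing here reflects any system actually used at Tesla or SpaceX.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MeetingRequest:
    title: str
    urgency: float      # 0..1, assumed to be inferred upstream
    seniority: float    # 0..1, proxy for participant hierarchy
    criticality: float  # 0..1, importance of the related project


# Hypothetical weights; a real system would presumably tune or learn these.
WEIGHTS = {"urgency": 0.5, "seniority": 0.2, "criticality": 0.3}


def score(m: MeetingRequest) -> float:
    """Weighted sum of the three triage factors."""
    return (WEIGHTS["urgency"] * m.urgency
            + WEIGHTS["seniority"] * m.seniority
            + WEIGHTS["criticality"] * m.criticality)


def triage(requests: list[MeetingRequest], slots: int,
           threshold: float = 0.4):
    """Schedule the highest-scoring requests that clear the threshold,
    up to the number of free slots; everything else is pruned."""
    ranked = sorted(requests, key=score, reverse=True)
    scheduled = [m for m in ranked if score(m) >= threshold][:slots]
    pruned = [m for m in ranked if m not in scheduled]
    return scheduled, pruned


# Illustrative data only.
requests = [
    MeetingRequest("Production line outage", 0.9, 0.5, 0.8),
    MeetingRequest("Quarterly courtesy sync", 0.2, 0.9, 0.1),
    MeetingRequest("Launch readiness review", 0.6, 0.3, 0.7),
]
scheduled, pruned = triage(requests, slots=2)
print([m.title for m in scheduled], [m.title for m in pruned])
```

Note that the senior-heavy but low-urgency request is the one pruned in this example: with these assumed weights, hierarchy alone does not secure a slot.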

Algorithmic Proximity Bias

Google’s internal deployment of Calico, its AI calendar assistant, demonstrates that reliance on AI scheduling risks reinforcing insular communication patterns by preferentially slotting meetings with frequently accessed contacts and recurring collaborators. The system optimizes for efficiency by predicting availability and proximity, but this systematically deprioritizes serendipitous or cross-departmental engagement, as observed in Google’s 2021 productivity audit showing a 34% decline in inter-group meetings among AI-scheduled teams. This exposes how AI scheduling, even when accurate, can silently erode organizational diversity of interaction under the guise of optimization.
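The feedback loop behind this kind of bias can be shown with a toy model: if a scheduler ranks candidates by how often they have met the user before, and each scheduled meeting raises that count, rarely contacted colleagues never surface. This is a deliberately simplified sketch under assumed rules, not a description of any real Google system; the function names, contact labels, and counts are invented for illustration.

```python
from collections import Counter


def pick_contacts(history: Counter, candidates: list[str], k: int) -> list[str]:
    """Rank candidates by past meeting count (ties broken by name)
    and keep the top k. This ranking rule is the assumed source of bias."""
    return sorted(candidates, key=lambda c: (-history[c], c))[:k]


def simulate(history: dict[str, int], candidates: list[str],
             k: int, rounds: int) -> Counter:
    """Repeatedly schedule the top-k contacts; each scheduled meeting
    feeds back into the history the next round is ranked on."""
    counts = Counter(history)
    for _ in range(rounds):
        for contact in pick_contacts(counts, candidates, k):
            counts[contact] += 1
    return counts


# Illustrative starting point: two close teammates, one cross-department contact.
start = {"teammate_a": 5, "teammate_b": 4, "other_dept": 1}
final = simulate(start, list(start), k=2, rounds=10)
print(final)
```

In this model the cross-department contact is never selected, even though the ranking rule is "accurate" in the narrow sense of predicting who the user usually meets. A common mitigation in ranking systems generally is to reserve some slots for low-frequency candidates, though whether any calendar product does so is not established by the source.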

Temporal Sovereignty Erosion

The French Ministry of Labour’s 2023 investigation into AI scheduling in remote work environments found that employees using Microsoft Outlook’s MyAnalytics+ were 3.2 times more likely to accept back-to-back AI-proposed meetings without pushback, effectively ceding control over work-life boundaries. The system’s real-time suggestion engine exploits behavioral inertia by embedding scheduling choices into routine interface interactions, making rejection cognitively costly. This case illustrates that reliance on AI for personal calendars can normalize temporal compliance, where autonomy is not overridden by error but by frictionless surrender.