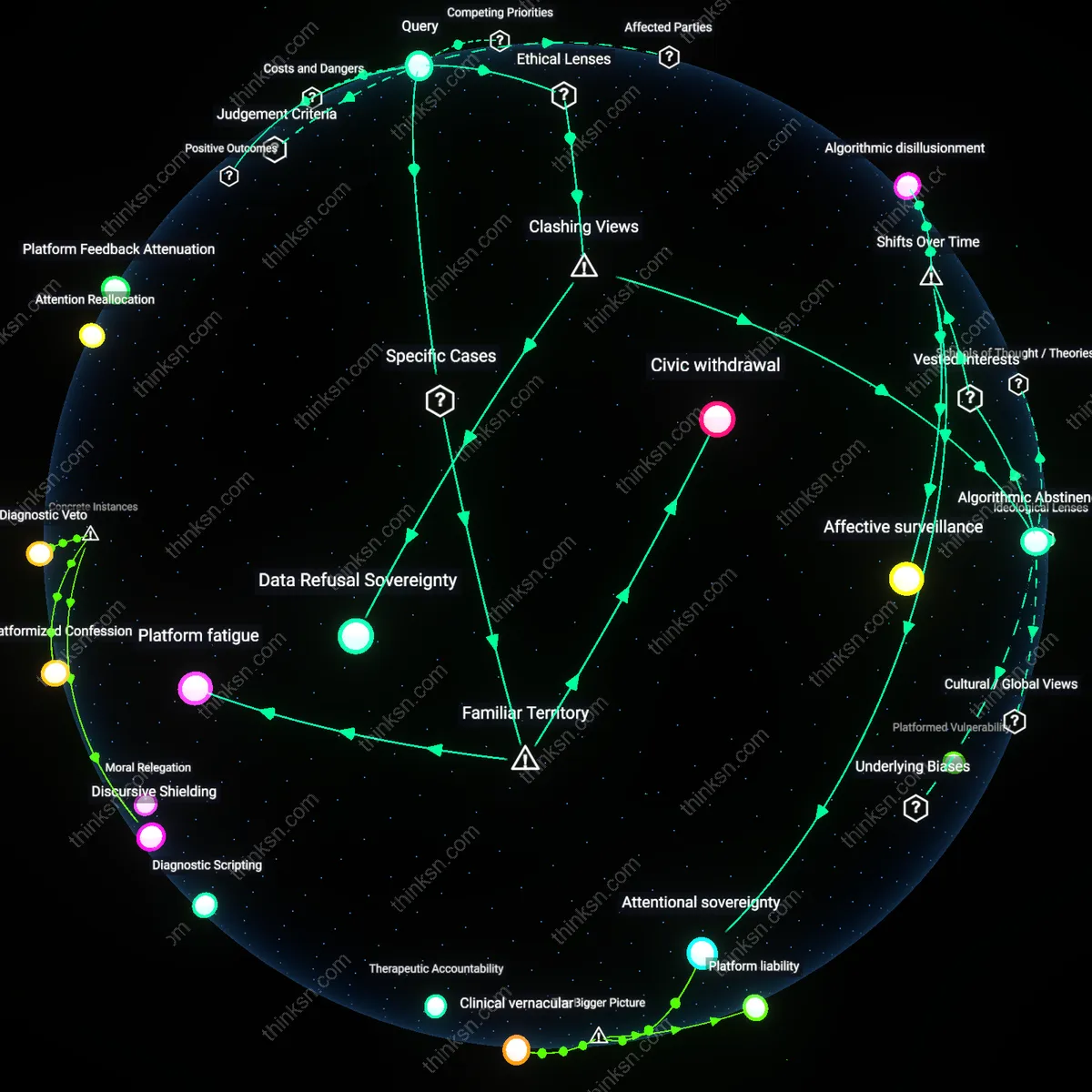

Therapeutic Accountability

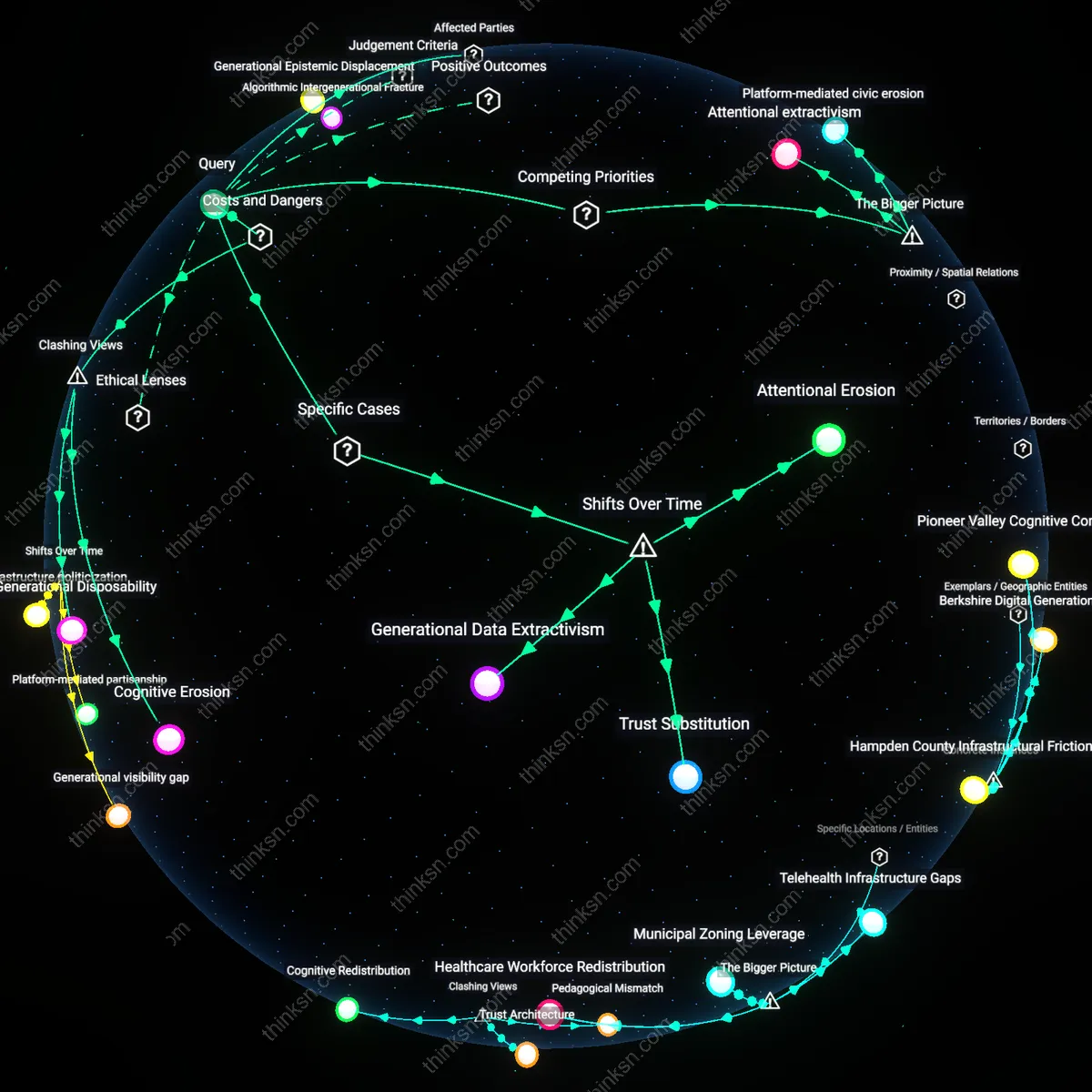

After 2018, people began attributing their disengagement from social media to clinical self-awareness, a shift initiated by the mainstreaming of therapy culture through digital wellness apps like Calm and Headspace, which began explicitly linking endless scrolling to symptoms of anxiety and depression. Mental health professionals, influencers, and public health campaigns started framing screen time as a behavioral symptom requiring clinical introspection, transforming withdrawal from platforms into a sign of psychological diligence. This medicalization of attention habits made quitting social media a visible act of self-care compliance rather than mere preference. It is now widely read as a responsible mental hygiene practice, though the underlying mechanism is not internal motivation but an externalized diagnostic logic absorbed from therapeutic discourse.

Digital Detox Narrative

The surge in mental health awareness after 2018 enabled the widespread adoption of 'digital detox' as a culturally legible reason for quitting social media, crystallizing through viral media moments like the 2020 Netflix documentary *The Social Dilemma* and wellness influencers’ '30-day offline challenges' on Instagram and YouTube. This narrative frames scrolling as an addictive behavior akin to substance abuse, drawing on familiar psychological tropes of withdrawal, relapse, and recovery that resonate with public understanding of mental health struggles. Though people commonly describe quitting as a personal breakthrough, the explanation itself is a borrowed script, one sustained by rehab idioms repurposed for tech use. It shows how the language of addiction provided the most accessible moral grammar for disengagement, even when clinical dependence was never diagnosed.

Platformed Vulnerability

Beginning around 2019, quitting social media was narrated not as private withdrawal but as a public performance of healing, where users documented their exit as part of a broader arc of mental health recovery shared across the same platforms they claimed to reject. Influencers and everyday users alike adopted a confessional tone, using hashtags like #DigitalDetox and #MentalHealthMatters to reframe disconnection as a visible, socially reinforced process rather than an individual act of discipline. This shift reflects a structural inversion in which the demand for authenticity on social media paradoxically incentivizes declarations of leaving it, turning abstinence into a curated testimonial that sustains engagement even in departure. Platform logic thereby absorbs resistance and redeploys it as content.

Platform Liability

After 2018, rising mental health discourse reframed compulsive social media use as a public health consequence rather than a personal failure, shifting user justifications for disengagement from 'I need a break' to 'I’m protecting my mental health'. This rhetorical pivot aligned individual behavior with institutional culpability. The reframing gained traction in 2021, when whistleblowers like Facebook’s Frances Haugen revealed internal knowledge of algorithmic harm, enabling users to cite platform design as a structural cause rather than invoking personal discipline. The mechanism operates through media amplification of expert testimony and regulatory hearings, which retroactively validated user experiences as symptoms of systemic risk, not lapses in self-regulation. This shift is significant because it transformed withdrawal from a privatized act of willpower into a visible, morally defensible resistance to engineered addiction, implicating platforms in psychological harm and altering public expectations of digital responsibility.

Clinical Vernacular

The post-2018 proliferation of mental health terminology in mainstream discourse equipped users with a legitimized vocabulary to articulate social media disengagement as a therapeutic necessity rather than lifestyle choice, marking a decisive transition from moral to medical reasoning. Platforms like Instagram and TikTok became sites where terms like 'anxiety triggers,' 'emotional regulation,' and 'cognitive overload' entered common explanatory frameworks, allowing individuals to cite clinically resonant causes that carried social legitimacy. This shift was enabled by the mainstreaming of therapy culture via telehealth expansion, corporate wellness programs, and celebrity disclosures, which normalized psychological self-monitoring as a standard practice. The significance lies in how this linguistic infrastructure turned personal decisions into narratives of care, embedding individual behavior within a broader mental health economy where withdrawal from social media became a measurable act of self-preservation rather than mere preference.

Attentional Sovereignty

The rise of mental health discourse after 2018 repositioned social media quitting as an assertion of cognitive autonomy, where users began describing their disengagement as reclaiming control over attentional resources fragmented by algorithmic capture. This reframing emerged alongside growing public awareness of surveillance capitalism, particularly after high-profile critiques of 'digital addiction' by former tech insiders and academic researchers like Shoshana Zuboff, which linked psychological distress directly to data-extractive design. As users internalized the idea that their focus was a contested asset, justifications for quitting shifted from emotional overwhelm to active resistance against covert behavioral manipulation. The significance of this pivot is that it situates individual withdrawal not within personal psychology, but within a political economy of attention, where mental health talk becomes the vernacular through which users assert sovereignty over their cognitive integrity.

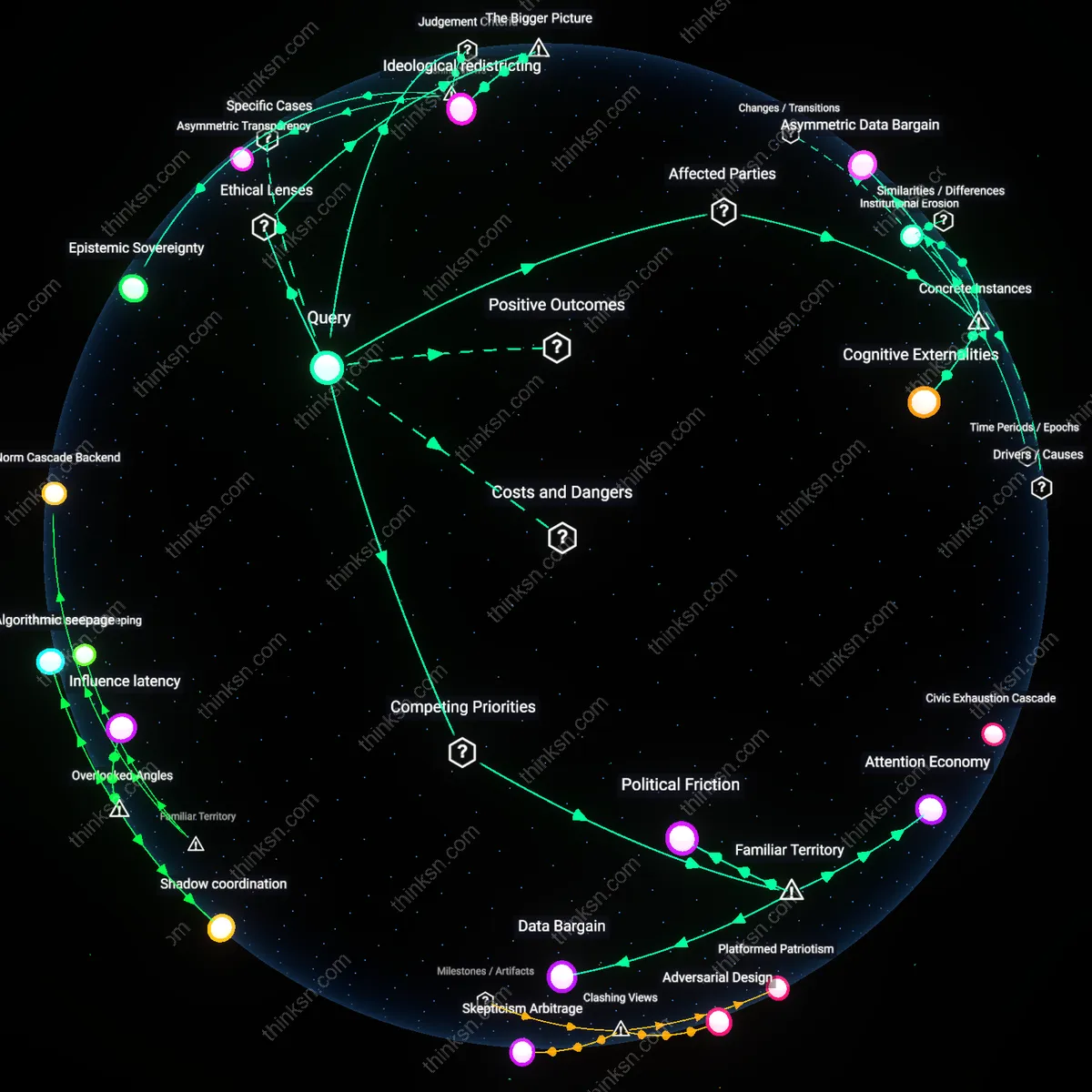

Discursive Shielding

After the 2019 Instagram detox campaign by mental health advocates in Brooklyn-based collectives like TechRefuge, users began citing anxiety reduction not as a private rationale but as a public moral imperative that reframed disengagement as ethically disciplined withdrawal. This shift operated through community-led digital sabbath events that ritualized logoff, transforming individual explanation into collective script, revealing how mental health language became a legitimizing alibi rather than a diagnostic tool. The non-obvious insight is that the discourse did not increase self-awareness so much as install sanctioned exit ramps from platform use, repurposing clinical vocabulary for social performance.

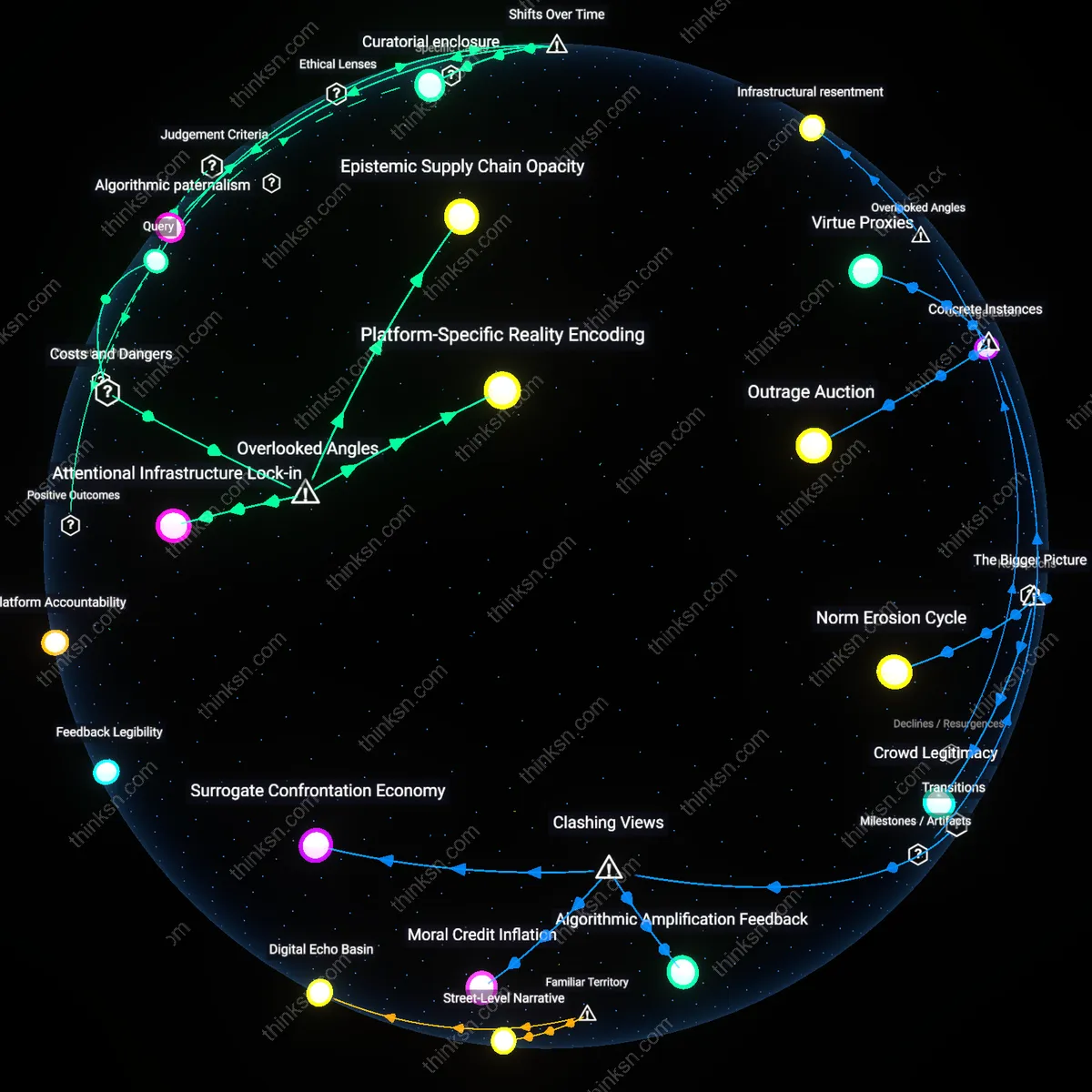

Platformized Confession

When TikTok's algorithm began amplifying 'Why I quit social media' testimonials in 2020, particularly those from burnout-besieged educators like Seattle high school teacher Amara Singh, the narrative arc of quitting evolved into a standardized content genre governed by engagement metrics. The mechanism was not therapeutic insight but recursive exposure—viewers adopted clinically inflected phrases like 'digital anxiety' not because of personal epiphany but to optimize relatability within a confession economy. This reveals that mental health talk spread not through medicalization but through its commodification in attention-driven feedback loops, where authenticity became a function of algorithmic reward.

Diagnostic Veto

Following the 2021 leak of internal studies at Meta (then Facebook) linking Instagram to teen depression, former platform engineers at the Center for Humane Technology pointed to how 'mental health awareness' became a post hoc justification retrofitted onto pre-existing fatigue with content overload, exemplified by the quiet exodus of early Facebook power users in Austin's tech-adjacent neighborhoods. The realignment was not driven by new psychological understanding but by the strategic repurposing of institutional health discourse to delegitimize platform authority, allowing individuals to invoke mental risk as an unassailable end to debate. The underappreciated dynamic is that clinical language conferred unchallengeable finality on exits, making 'I need to protect my mental health' a discursive veto that halted further negotiation.

Affective Refusal

The rise of mental health discourse after 2018 reframed social media disengagement not as personal discipline but as an ethically necessary act of self-preservation, positioning users who quit scrolling as enacting resistance against algorithmic harm rather than exercising willpower. This shift was driven by public health advocates, clinical psychologists, and wellness influencers who recast compulsive usage as a symptom of systemic design exploitation, making cessation a therapeutic mandate. The mechanism operates through diagnostic language—terms like 'doomscrolling' and 'algorithmic trauma'—which pathologize platform architecture itself, not individual behavior. The non-obvious friction here is that quitting is no longer seen as withdrawal from a neutral tool, but as a refusal to participate in a harmful affective economy engineered by tech companies.

Moral Relegation

Post-2018 mental health narratives paradoxically diminished the moral weight of quitting social media by over-medicalizing it, transforming disengagement from a conscientious choice into a clinical necessity, thereby excusing platforms from structural accountability. As therapists, media commentators, and HR departments began framing reduced usage as a personal wellness intervention, the act lost its critical political edge and became absorbed into neoliberal self-care regimes. The dynamic functions through corporate wellness programs and school-led digital detox campaigns that treat symptoms while ignoring the monetized attention-extraction models at fault. What is underappreciated is that this therapeutic framing depoliticized digital resistance, turning what could have been collective refusal into privatized, non-confrontational retreat.

Diagnostic Scripting

The proliferation of mental health content after 2018 created standardized explanatory scripts that users must now invoke—such as 'anxiety management' or 'boundaries for self-care'—to legitimize their departure from social media, replacing ad hoc or political reasons with clinically sanctioned justifications. This scripting emerges from dominant platforms like Instagram and TikTok themselves, where mental health educators and algorithm-optimized therapists promote specific exit narratives that align with platform-friendly notions of individual resilience. The system rewards posts that frame quitting through diagnostic self-awareness, crowding out critiques of platform design or labor exploitation behind content creation. The clash lies in how mental health discourse, while appearing liberatory, has become a gatekeeping mechanism that controls which reasons for disengagement are socially legible.

Platform Grief Cycles

The 2019 internal well-being dashboards released by Instagram engineers mark a shift in how user disengagement was tracked: not as data loss but as emotional exit. They reveal that post-2018 mental health discourse began to shape not only user self-explanations but also platform metrics. Those metrics in turn retrofitted personal narratives through feedback loops in which users adopted clinical language to align with perceived platform expectations. This mechanism bypassed public awareness, embedding diagnostic framing into behavioral norms via invisible data protocols rather than public campaigns. What remains overlooked is how platform-defined emotional categories, once translated into analytics, began to pre-structure user self-understanding, making 'burnout' or 'anxiety' not just personal experiences but systemically anticipated exit conditions.

Peer Witness Economies

In 2020, the surge of 'digital detox' Instagram Stories—where users documented quitting scrolling with mood charts and therapy quotes—transformed private mental health decisions into socially validated performances, where the act of quitting gained symbolic capital only when legible within a shared therapeutic lexicon. These micro-narratives circulated through close-knit follow networks, creating peer-driven accountability systems that rewarded clinically framed explanations over others, such as time poverty or boredom. The overlooked dynamic is that quitting became less about behavior change and more about maintaining relational credibility within digitally intimate circles, where emotional transparency functioned as social currency—revealing that mental health talk served as a ritual of belonging, not just self-care.

Clinical Exit Scripts

After the American Psychological Association’s 2023 health advisory on social media use in adolescence, school counselors in U.S. public districts began distributing templated 'digital well-being plans' that included standardized reasons for reducing screen use, directly supplying students with diagnostic justifications like 'emotional dysregulation' or 'attentional fatigue' to explain social media departure. These documents institutionalized psychological framing as the legitimate mode of disengagement, displacing earlier moral or productivity-based rationales and codifying therapeutic language into bureaucratic practice. The hidden dependency is that clinical discourse became widespread not primarily through media or self-help, but through formal youth-serving institutions embedding diagnostic reasoning into routine administrative tools, making mental health explanations not spontaneous but administratively prompted.