Regulating Moderation: Transparency vs. Malicious Use Risk?

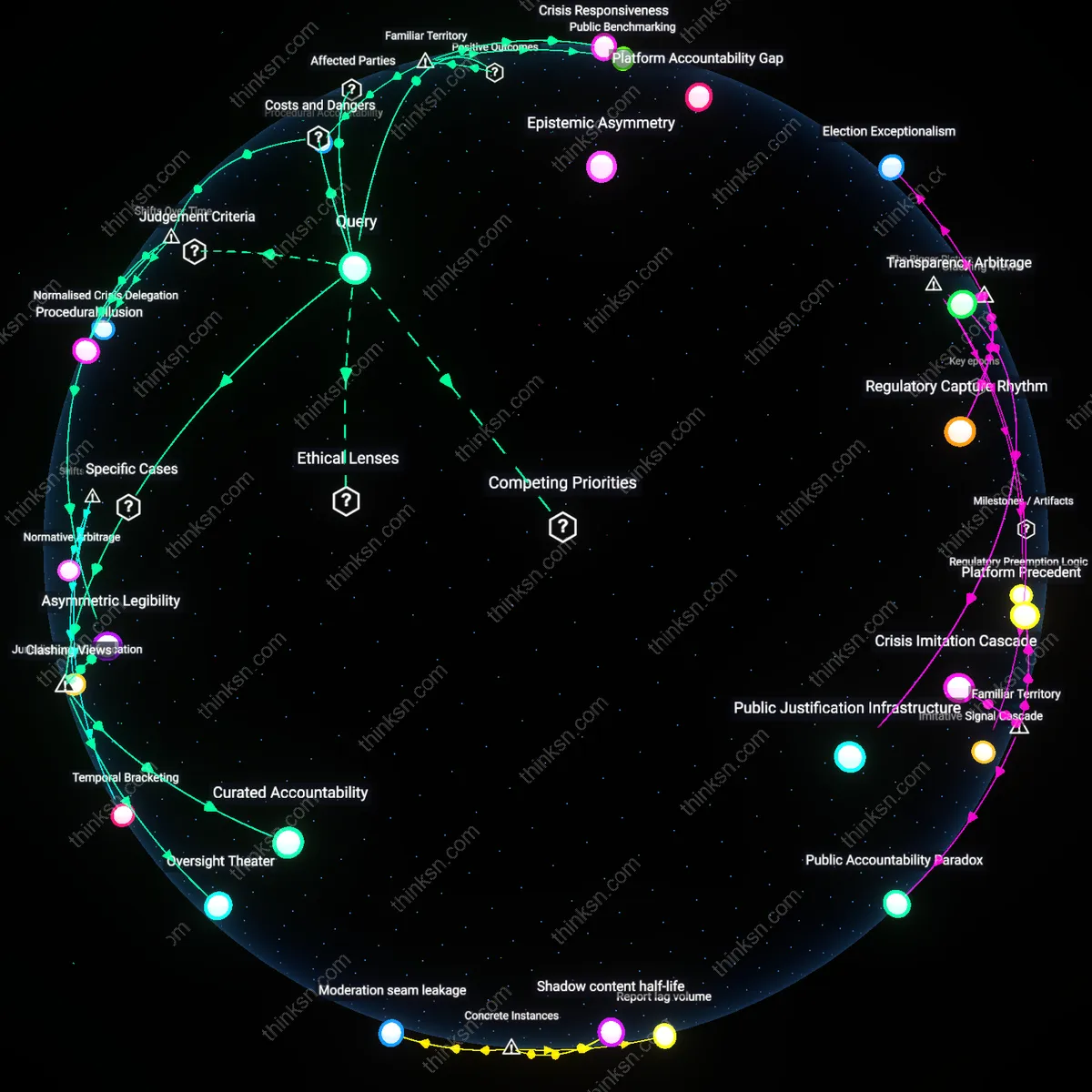

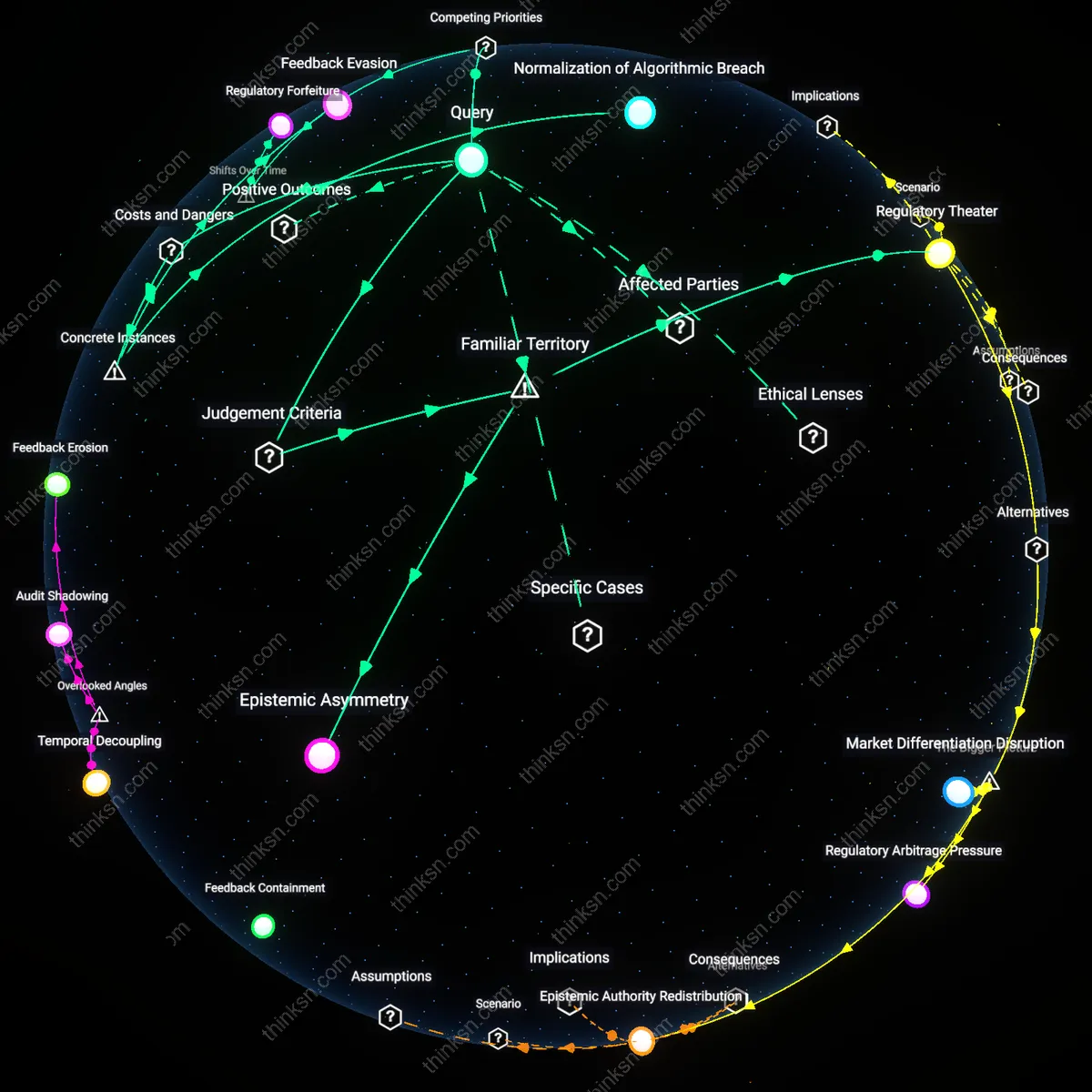

Analysis reveals 6 key thematic connections.

Key Findings

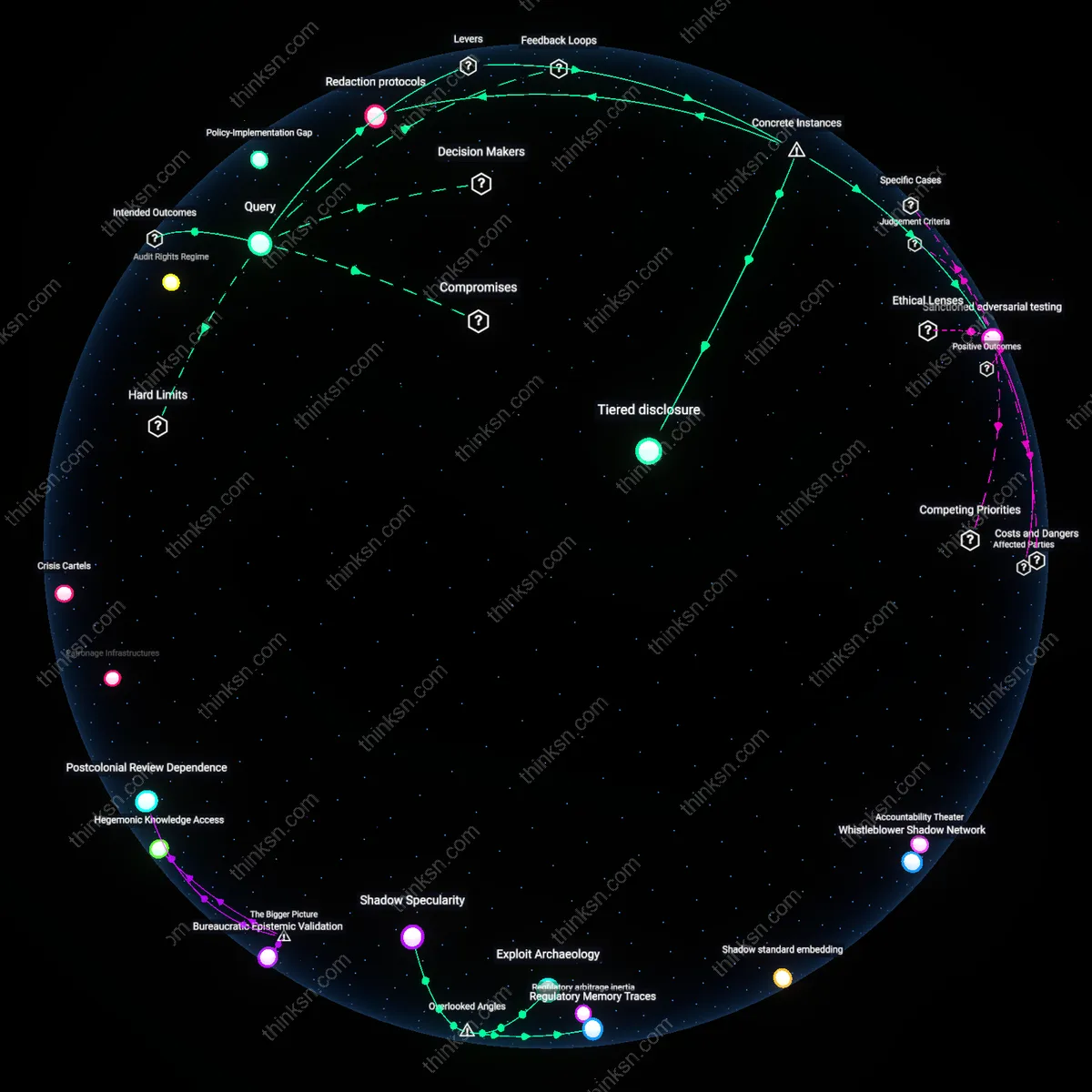

Tiered disclosure

Regulators can impose tiered access to algorithmic documentation: public summaries set out moderation principles, while technical specifics are restricted to vetted researchers. The EU’s Digital Services Act follows this pattern, requiring transparency reports from platforms such as Meta while providing for vetted researchers to access sensitive data under security and confidentiality conditions. The resulting gradient of visibility limits weaponization risk while still enabling external audit, and it shows how layered access can reconcile openness with security in practice.
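As a rough illustration of the access gradient described above, the sketch below filters a documentation record by requester tier. The tier names, the field names, and the rule that unknown fields default to the most restrictive tier are all assumptions made for the example; none of them come from the DSA or from any platform's actual practice.

```python
from enum import IntEnum


class Tier(IntEnum):
    """Hypothetical access tiers, ordered from least to most privileged."""
    PUBLIC = 0
    VETTED_RESEARCHER = 1
    REGULATOR = 2


# Assumed mapping: the minimum tier allowed to read each documentation field.
FIELD_MIN_TIER = {
    "policy_summary": Tier.PUBLIC,
    "enforcement_statistics": Tier.PUBLIC,
    "classifier_feature_list": Tier.VETTED_RESEARCHER,
    "decision_thresholds": Tier.REGULATOR,
}


def visible_fields(documentation: dict, requester_tier: Tier) -> dict:
    """Return only the fields the requester's tier is cleared to see."""
    return {
        name: value
        for name, value in documentation.items()
        # Fields missing from the mapping fall back to the strictest tier.
        if FIELD_MIN_TIER.get(name, Tier.REGULATOR) <= requester_tier
    }


documentation = {
    "policy_summary": "Hate speech is removed; borderline content is downranked.",
    "enforcement_statistics": {"removed": 1200, "appealed": 85},
    "classifier_feature_list": ["text_embedding", "account_age"],
    "decision_thresholds": {"remove": 0.92, "downrank": 0.70},
}

print(list(visible_fields(documentation, Tier.PUBLIC)))
# ['policy_summary', 'enforcement_statistics']
```

Defaulting unknown fields to the strictest tier means a newly added field stays hidden until someone classifies it, which matches the cautious posture the paragraph describes.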

Redaction protocols

Regulators can mandate standardized redaction of sensitive components within disclosed moderation systems. Germany’s NetzDG points in this direction: it requires large platforms to publish regular transparency reports on complaints and takedown decisions, and those reports can omit pattern-enabling metadata such as the threshold weights or signal combinations used in detection. This selective omission hinders adversarial learning while preserving accountability, showing that intentional information gaps can stabilize a transparency regime.
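A standardized redaction rule of this kind can be sketched as a function that masks a fixed set of keys wherever they appear in a decision record. The key names and the record layout below are hypothetical, chosen to mirror the "threshold weights" and "signal combinations" mentioned above; NetzDG itself does not define such a schema.

```python
# Assumed list of keys a regulator might designate as pattern-enabling.
SENSITIVE_KEYS = {"threshold_weights", "signal_combinations"}
REDACTED = "[REDACTED]"


def redact(record):
    """Return a copy of the record with sensitive keys masked at any depth."""
    if isinstance(record, dict):
        return {
            key: REDACTED if key in SENSITIVE_KEYS else redact(value)
            for key, value in record.items()
        }
    if isinstance(record, list):
        return [redact(item) for item in record]
    return record  # scalars pass through unchanged


# Hypothetical takedown decision as it might exist internally.
decision = {
    "content_id": "c-1001",
    "action": "removed",
    "policy": "incitement",
    "detection": {
        "automated": True,
        "threshold_weights": {"toxicity": 0.6, "virality": 0.4},
        "signal_combinations": ["toxicity>0.9", "reports>=3"],
    },
}

published = redact(decision)
print(published["detection"])
```

The published copy still shows that the decision was automated and which policy applied, so the outcome stays auditable while the parameters that would help an adversary tune content are withheld.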

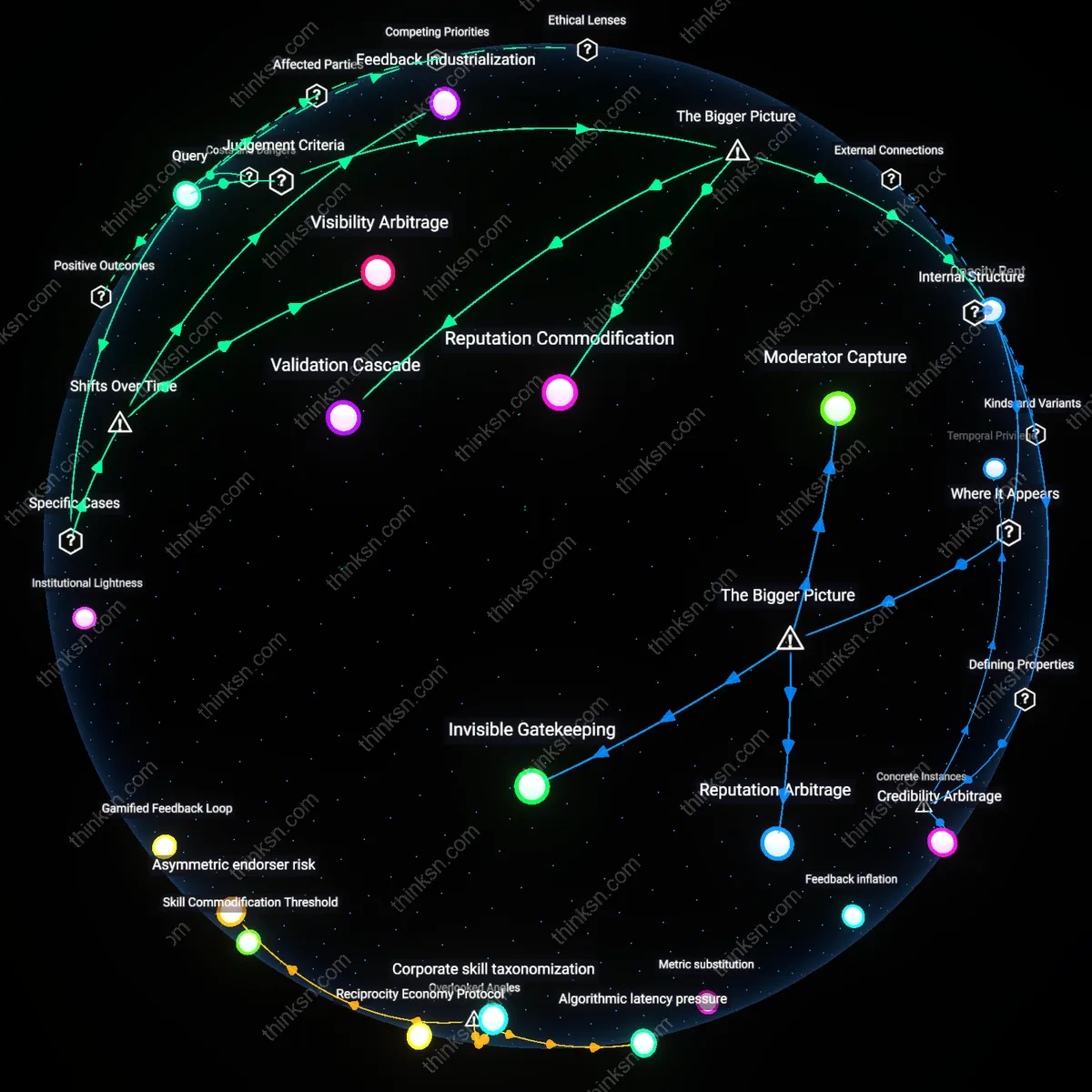

Sanctioned adversarial testing

Regulators can authorize official bug bounty programs or adversarial audits conducted by accredited third parties. One cited example is a 2023 Twitter initiative that reportedly allowed selected researchers to probe shadow-banning mechanisms under strict non-disclosure and ethical guidelines. This institutionalized form of controlled exploitation turns potential threats into systemic stress tests, demonstrating that regulated contestation can enhance robustness without uncontrolled leakage of operational logic.

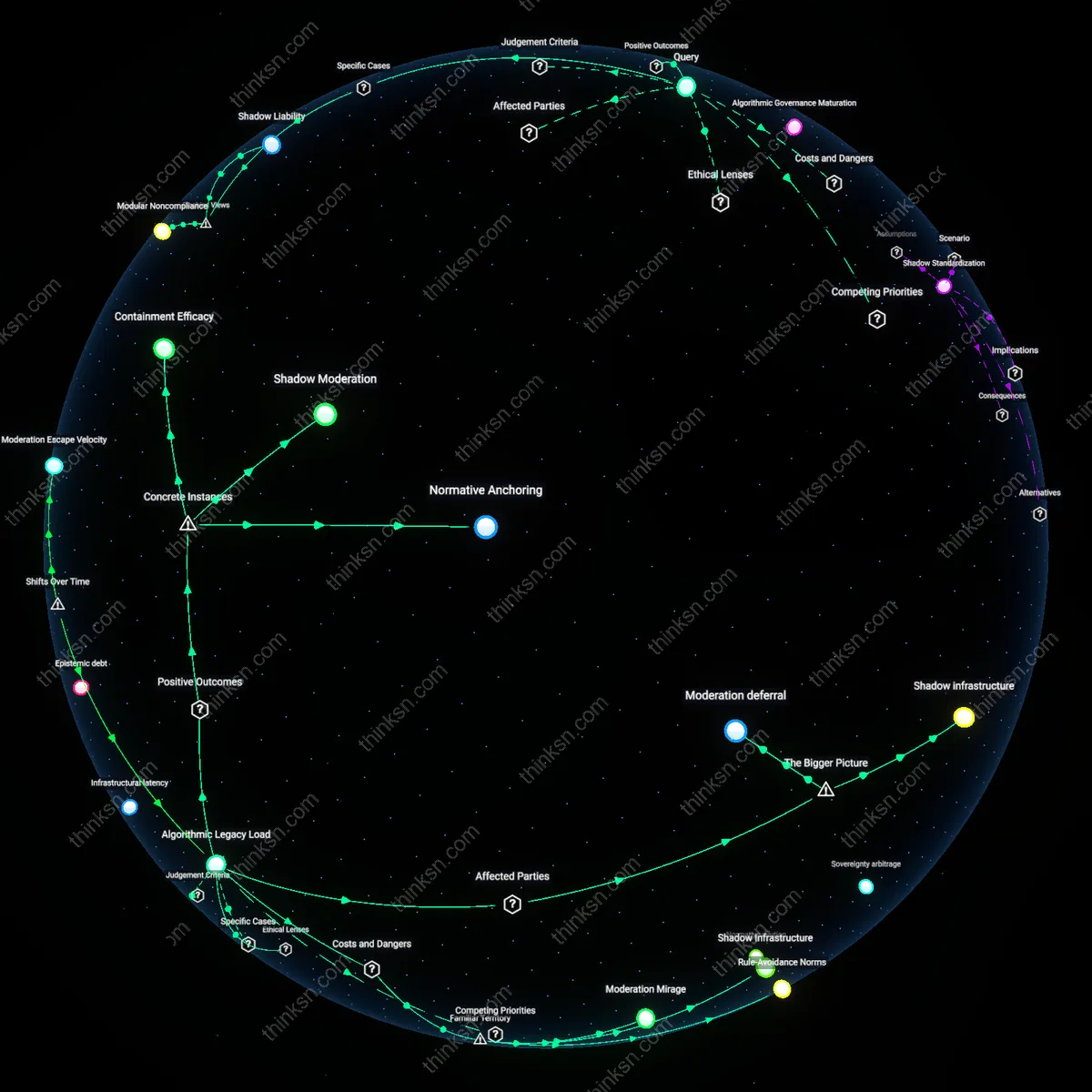

Audit Rights Regime

Regulators should mandate independent algorithmic audits by trusted third parties with access to platform code and moderation logs. This serves the public interest in transparency without opening proprietary systems to exploitation, because auditors can verify fairness and compliance while withholding sensitive implementation details from their published findings. Transparency is often assumed to mean public disclosure, but mature governance systems frequently rely on oversight intermediaries to reconcile accountability with security, much as financial regulators rely on certified auditors to inspect a company’s books rather than opening its internal ledgers to the public.

Exploitation Delay Window

Regulators should require delayed disclosure of moderation rules, releasing detailed algorithmic logic only after a fixed period such as six months. This preserves transparency over time while raising the cost of weaponizing the knowledge, since tactics built on the disclosed logic will be outdated by the time adversarial actors can use them. The familiar fear is that revealing how moderation works helps bad actors game the system. What is underappreciated is that time itself can act as a protective buffer, much as central banks publish the minutes of policy meetings only after a delay.
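The delay window reduces to a simple date check. The sketch below assumes the clock starts on the date a rule takes effect and approximates six months as 182 days; both choices, along with the rule names, are illustrative rather than drawn from any actual regulation.

```python
from datetime import date, timedelta

# Assumption: "six months" approximated as 182 days from a rule's effective date.
DISCLOSURE_DELAY = timedelta(days=182)


def publishable_rules(rules: list[dict], today: date) -> list[dict]:
    """Return the rules whose disclosure delay has fully elapsed."""
    return [
        rule for rule in rules
        if rule["effective_date"] + DISCLOSURE_DELAY <= today
    ]


# Hypothetical rule versions deployed by a platform.
rules = [
    {"name": "spam-filter-v3", "effective_date": date(2024, 1, 1)},
    {"name": "spam-filter-v4", "effective_date": date(2024, 6, 1)},
]

released = publishable_rules(rules, today=date(2024, 8, 1))
print([rule["name"] for rule in released])
# ['spam-filter-v3']
```

In this example only the older version is released, which is the intended effect: by the time its logic becomes public, a newer version is already in force.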

Policy-Implementation Gap

Regulators should enforce a strict separation between public-facing content policies and internal algorithmic execution, and require platforms to justify any discrepancies between the two before documented review boards. This creates transparency about intent without exposing operational mechanics, because public reporting focuses on decisions rather than code. Most public debate conflates understanding *what* is moderated with understanding *how*. Transparency about outcomes, meaning what content was removed and why, can satisfy democratic scrutiny without revealing exploit-prone technical pathways.
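Outcome-focused reporting can be pictured as an aggregation step that keeps the policy cited and the action taken while discarding everything about how the decision was reached. The log format and field names below are assumptions made for the sketch, not a real platform schema.

```python
from collections import Counter


def outcome_report(decision_log: list[dict]) -> list[dict]:
    """Summarize decisions by (policy, action), ignoring internal fields."""
    counts = Counter(
        (entry["policy"], entry["action"]) for entry in decision_log
    )
    return [
        {"policy": policy, "action": action, "count": count}
        for (policy, action), count in sorted(counts.items())
    ]


# Hypothetical internal log; "model_score" stands in for operational detail
# that never reaches the public report.
decision_log = [
    {"policy": "harassment", "action": "removed", "model_score": 0.97},
    {"policy": "harassment", "action": "removed", "model_score": 0.93},
    {"policy": "spam", "action": "downranked", "model_score": 0.71},
]

for row in outcome_report(decision_log):
    print(row)
```

Because the report is built only from the `policy` and `action` fields, internal signals such as scores cannot leak into it by accident.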