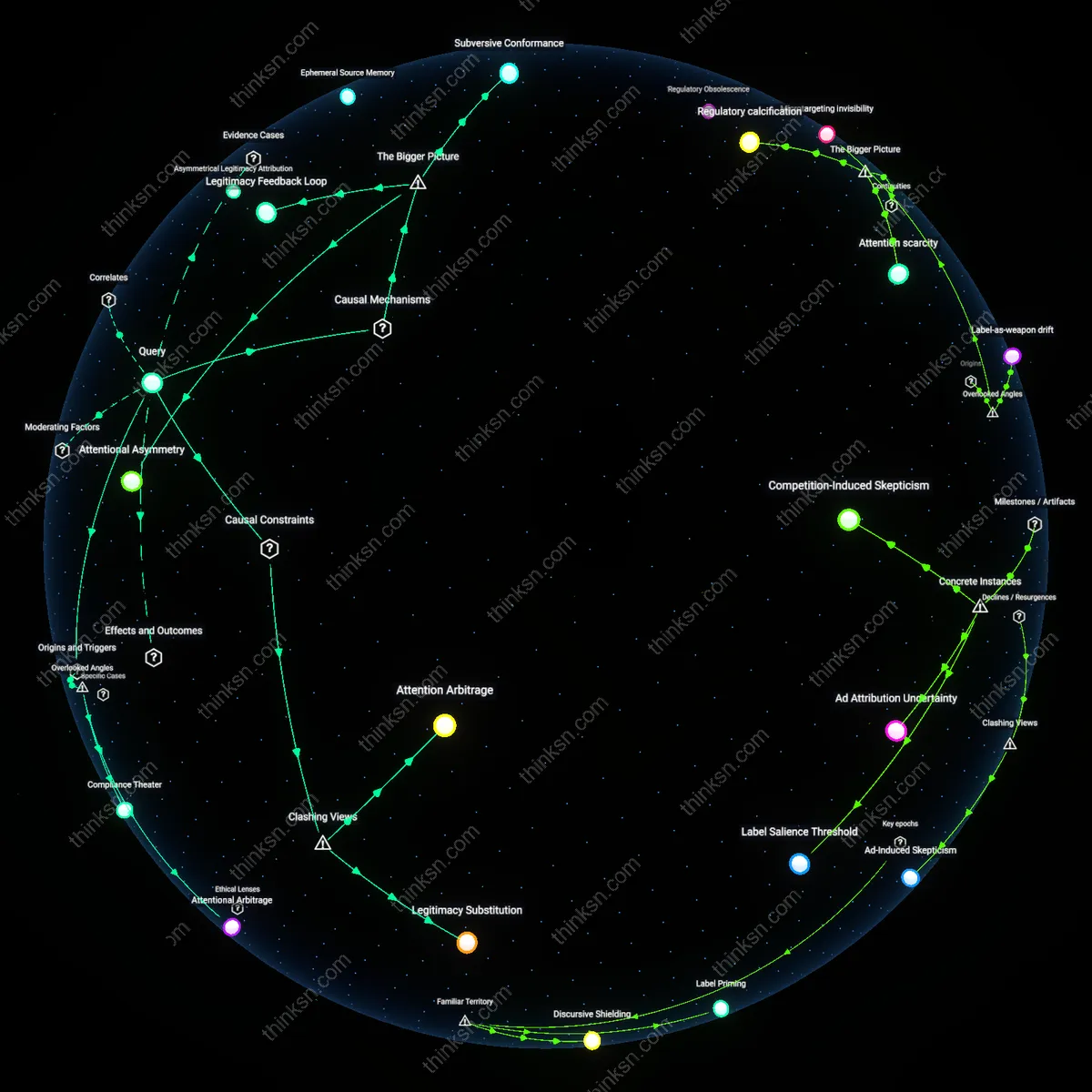

Do UK Deepfake Rules Favor Big Media by Relying on Self-Labeling?

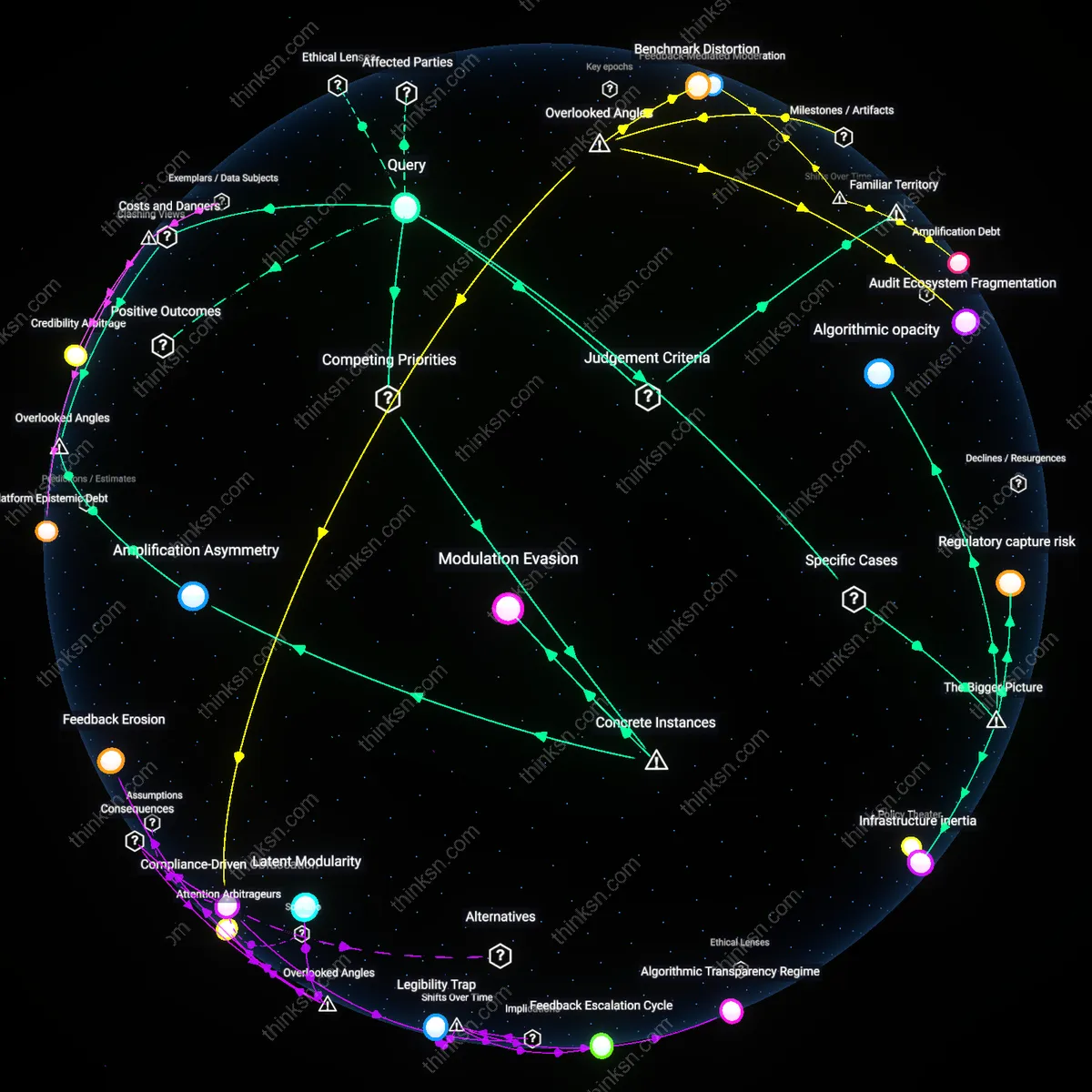

Analysis reveals 7 key thematic connections.

Key Findings

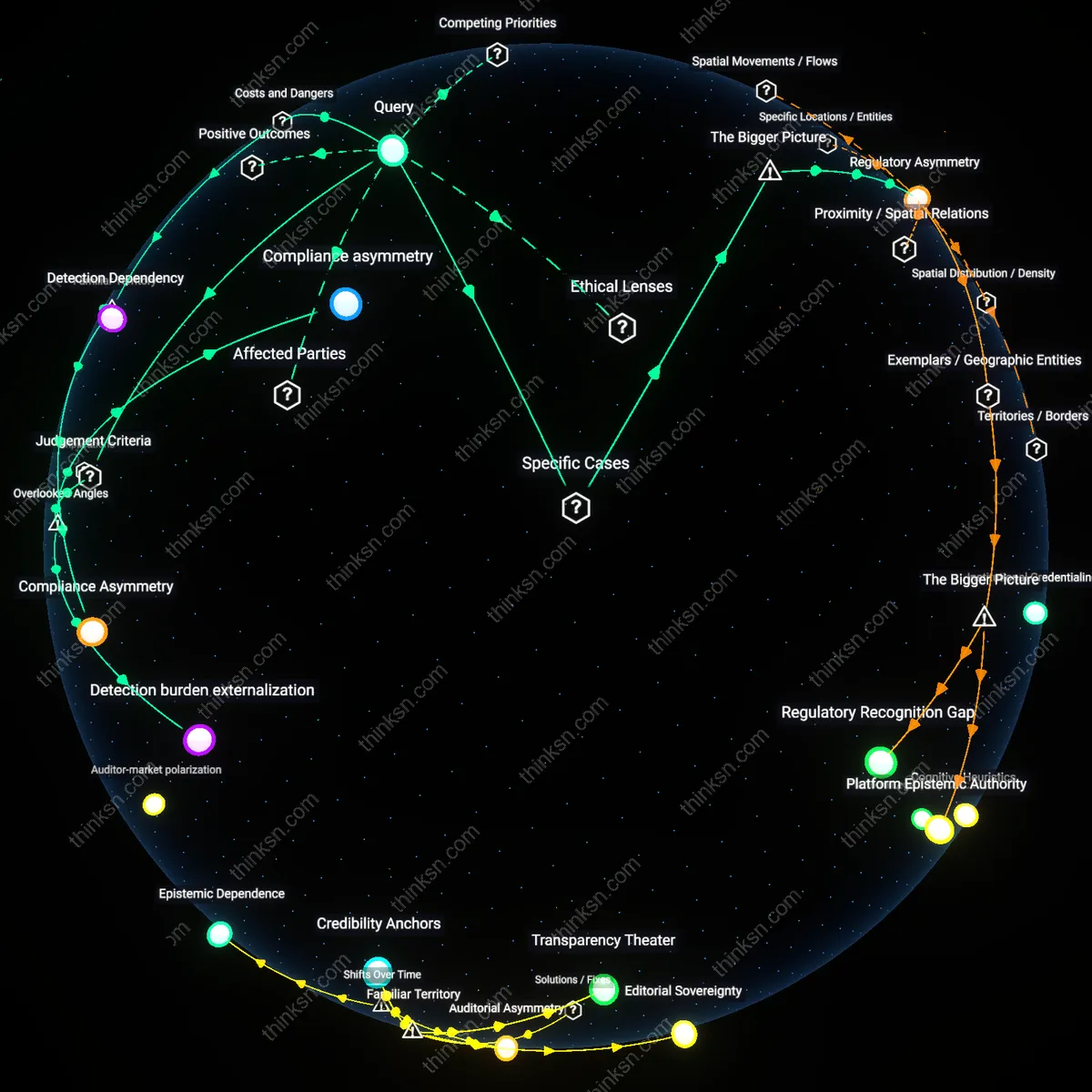

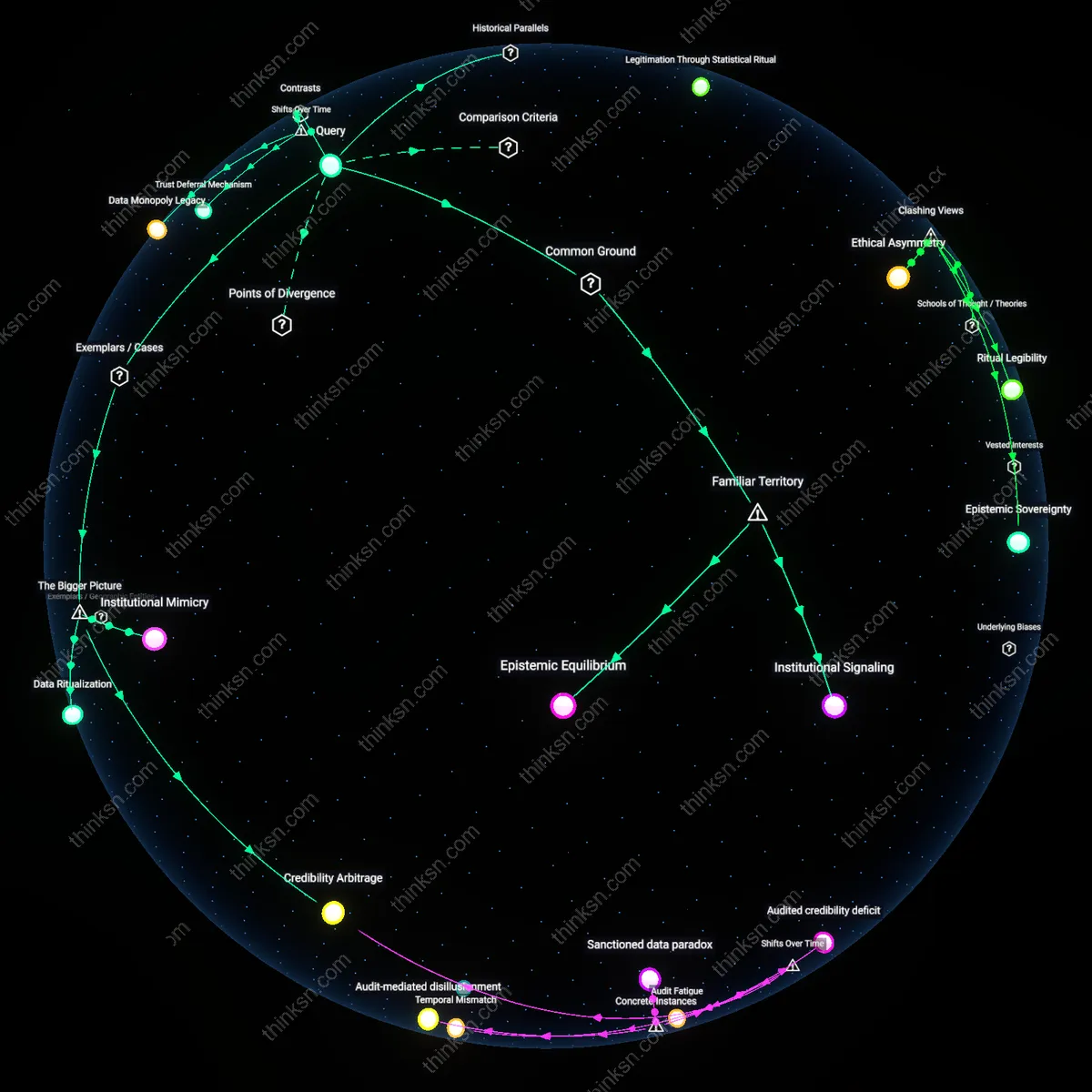

Compliance Asymmetry

The UK's self-labeling regime for AI-generated deepfakes disproportionately benefits large media companies: their existing legal and editorial infrastructure lets them absorb compliance costs with little added effort, whereas smaller creators face cascading liability risks under the same rules. The disparity arises not from intentional exclusion but from a mismatch between voluntary enforcement mechanisms and pre-existing institutional capacity. Large broadcasters already maintain teams for content provenance and regulatory reporting, which smaller actors cannot replicate. The non-obvious point is that rules that are neutral on paper can produce structural favoritism in practice: identical obligations yield divergent outcomes depending on organizational scale and legacy systems, which effectively outsources governance to those best equipped to self-police.

Detection Burden Externalization

By relying on self-labeling, the UK shifts the technical and financial burden of deepfake detection onto individual platforms and users. This advantages large media firms, which can build watermarking and metadata tools into their proprietary distribution pipelines. Smaller actors, lacking access to standardized verification architectures, become effectively invisible to enforcement ecosystems dominated by algorithmic monitoring systems tuned to recognize only compliant formats. What is overlooked is that compliance is not just about labeling; it is about being legible to automated enforcement. The regime therefore privileges entities already embedded in formalized data standards, reinforcing a layer of infrastructural gating that rarely features in debates about media equity.

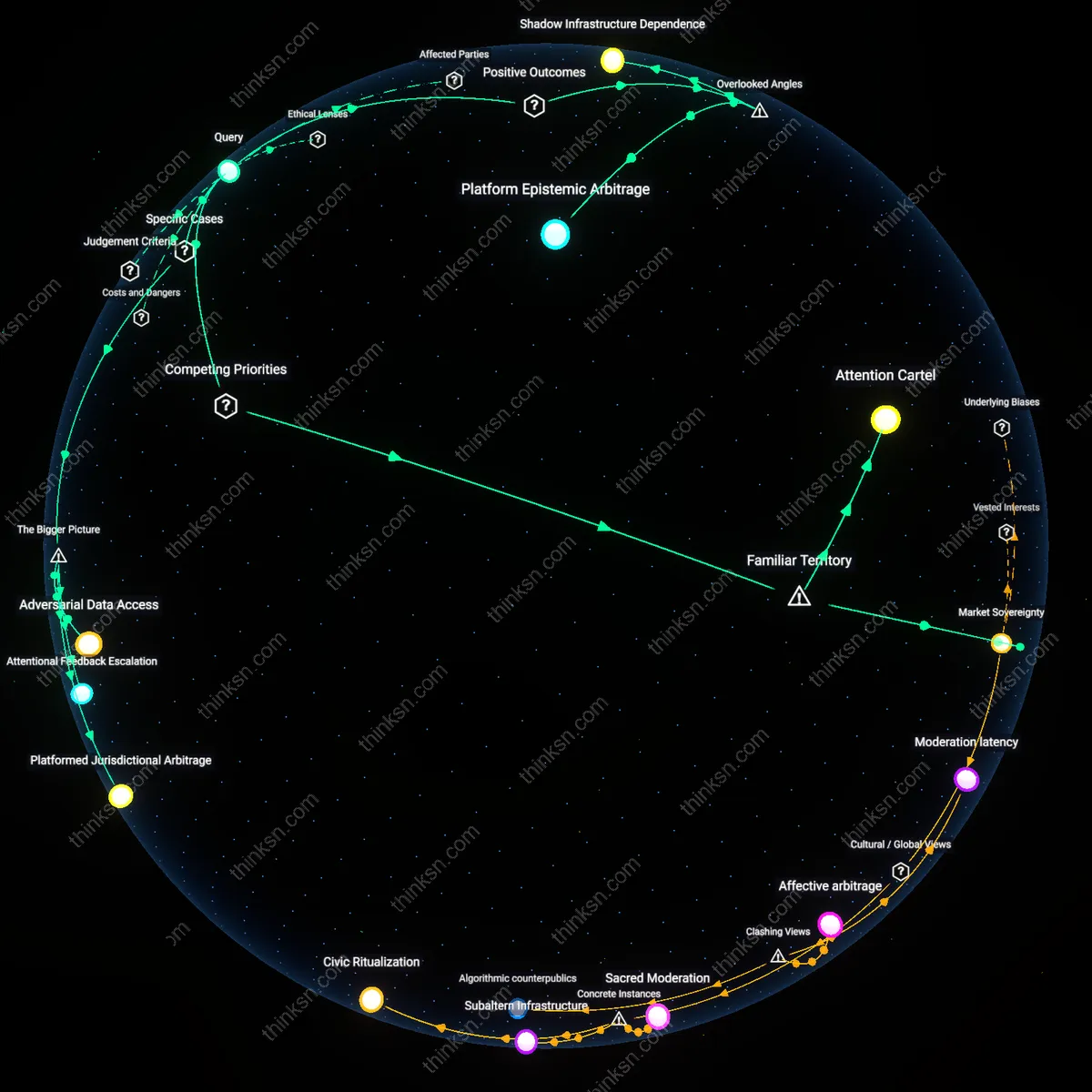

Reputational Collateral

Large media companies gain implicit regulatory leniency because their brand reputations function as proxies for compliance, letting goodwill substitute for strict technical adherence. Lesser-known or independent producers have no such option. When the origin of content is ambiguous, enforcement bodies and platforms are more likely to trust outputs bearing familiar institutional signatures, which creates a shadow system where trust, not transparency, becomes the operational currency of legitimacy. The overlooked dynamic is that self-labeling regimes do not operate uniformly: they amplify pre-existing reputational hierarchies and turn public trust into a form of regulatory capital that distorts fair competition.

Compliance Asymmetry

Large media firms can afford AI detection tools and legal teams to self-certify content, while independent creators and smaller outlets lack the resources to verify or challenge classifications. The result is a regulatory barrier that entrenches corporate control over legitimacy. The mechanism operates through the UK's voluntary Code of Practice, where monitoring and record-keeping become de facto requirements, not by mandate but through risk exposure, which privileges organizations with existing compliance infrastructure. The non-obvious danger is that self-labeling does not just favor big players; it redefines regulatory compliance as a function of organizational scale rather than accuracy or transparency.

Enforcement Illusion

Self-labeling regimes create the public impression of oversight without meaningful enforcement. Because verification is sporadic and penalties are weak, dominant media companies can label content selectively and face minimal consequences for omissions. The arrangement rests on public trust in labeling as a proxy for accountability, even when no independent audit exists, and it benefits established entities accustomed to managing reputational risk. The underappreciated risk is that the system satisfies regulatory optics while concentrating informational authority in those already positioned to define truth by default.

Detection Dependency

Reliance on self-labeling assumes that producers can accurately detect AI generation. In practice, it pushes smaller actors to depend on flawed or proprietary detection tools controlled by major tech and media firms, which set the standards for what counts as 'synthetic.' This dependency emerges through the integration of detection APIs and certification workflows that align with corporate interoperability standards, which makes a neutral standard hard to sustain. The familiar framing of 'labeling for transparency' masks how the technical means of identification are themselves a site of market capture and exclusion.

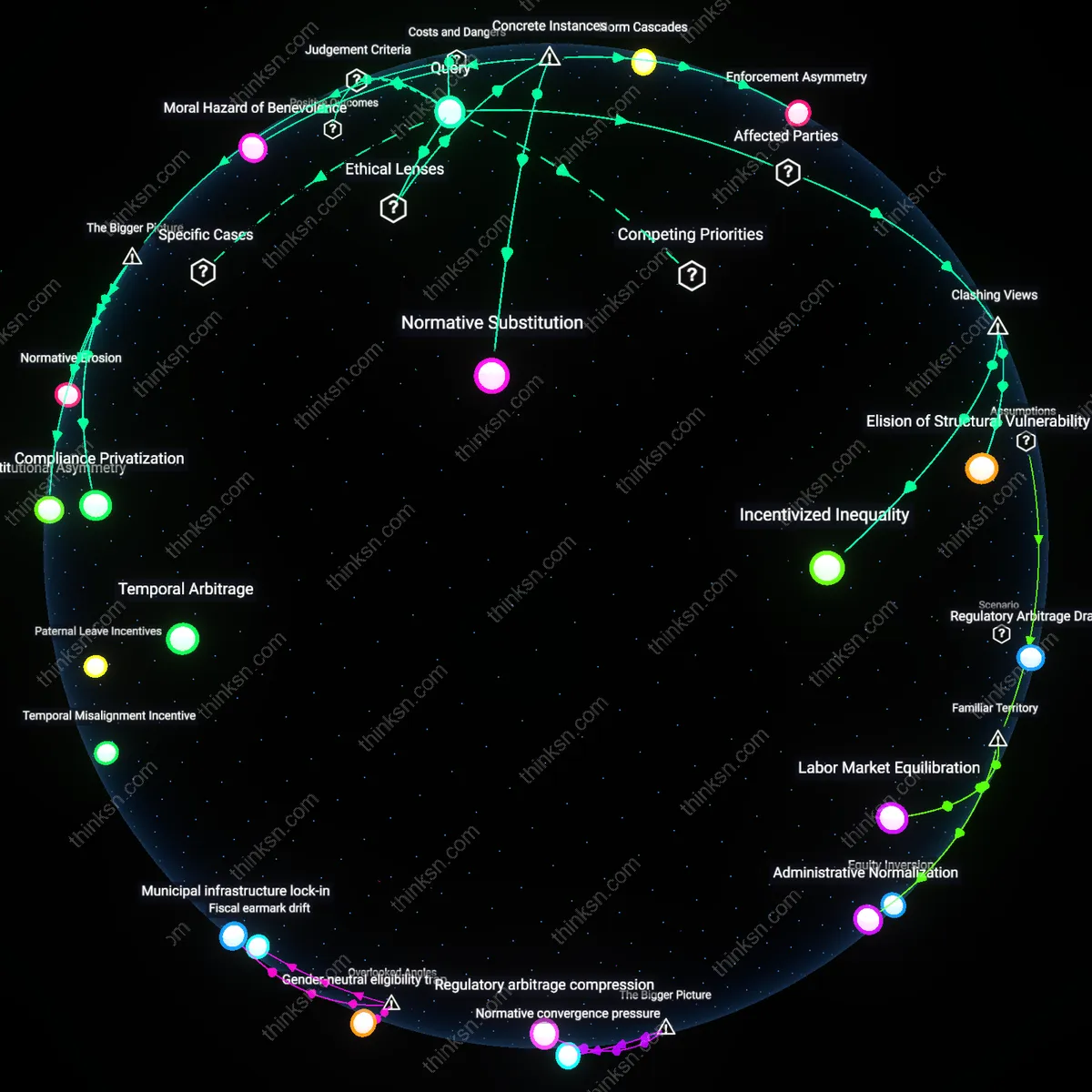

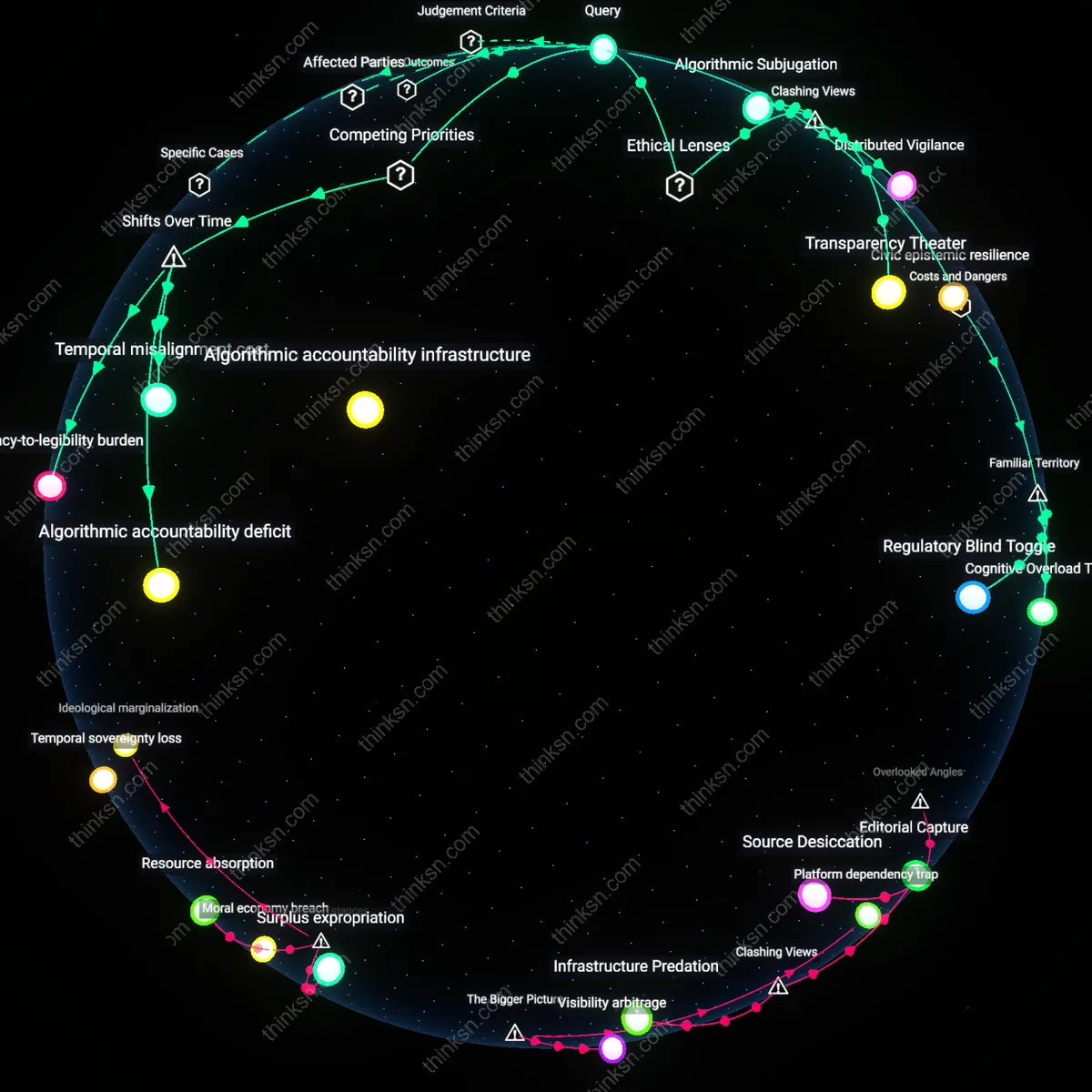

Regulatory Asymmetry

The UK's self-labeling regime for AI-generated deepfakes disproportionately benefits large media companies because they have internal compliance infrastructure capable of systematic content tagging, whereas smaller creators lack standardized tools or legal teams to verify and label content consistently. The BBC and Reuters, for instance, already operate under stringent editorial oversight and digital provenance protocols, such as the metadata standards promoted by the Content Authenticity Initiative, so they can fold deepfake disclosures into existing workflows. Independent journalists and citizen publishers, by contrast, face both technical and legal uncertainty when self-labeling. This creates a de facto barrier to credible participation in public discourse, where authenticity is increasingly tied to institutional capacity rather than factual accuracy. The non-obvious consequence is not merely unequal compliance but the gradual monopolization of 'trustworthy' media space by entities that can afford bureaucratic conformity.