Does Facebook Polarize or Inform? The Contradictory Study Results

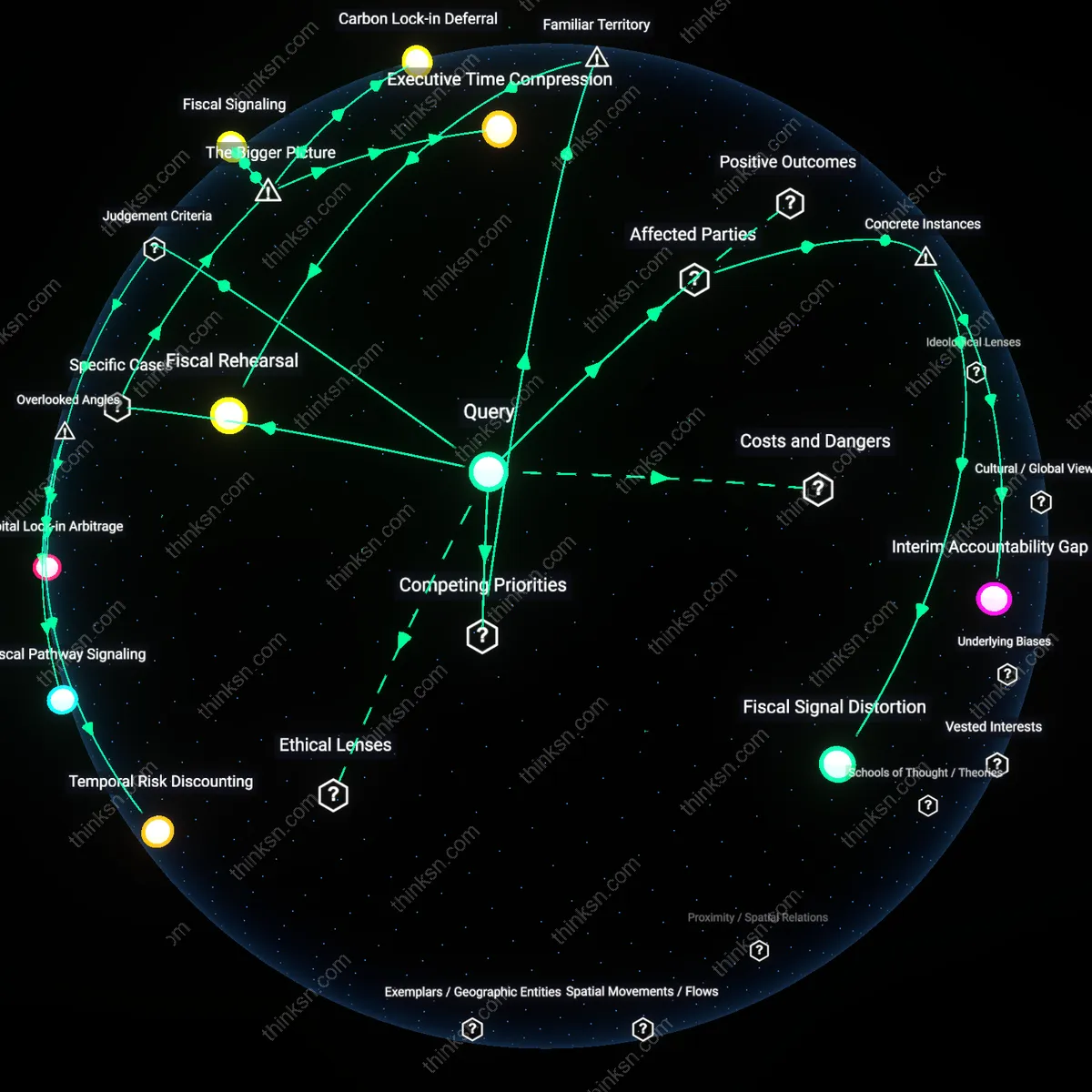

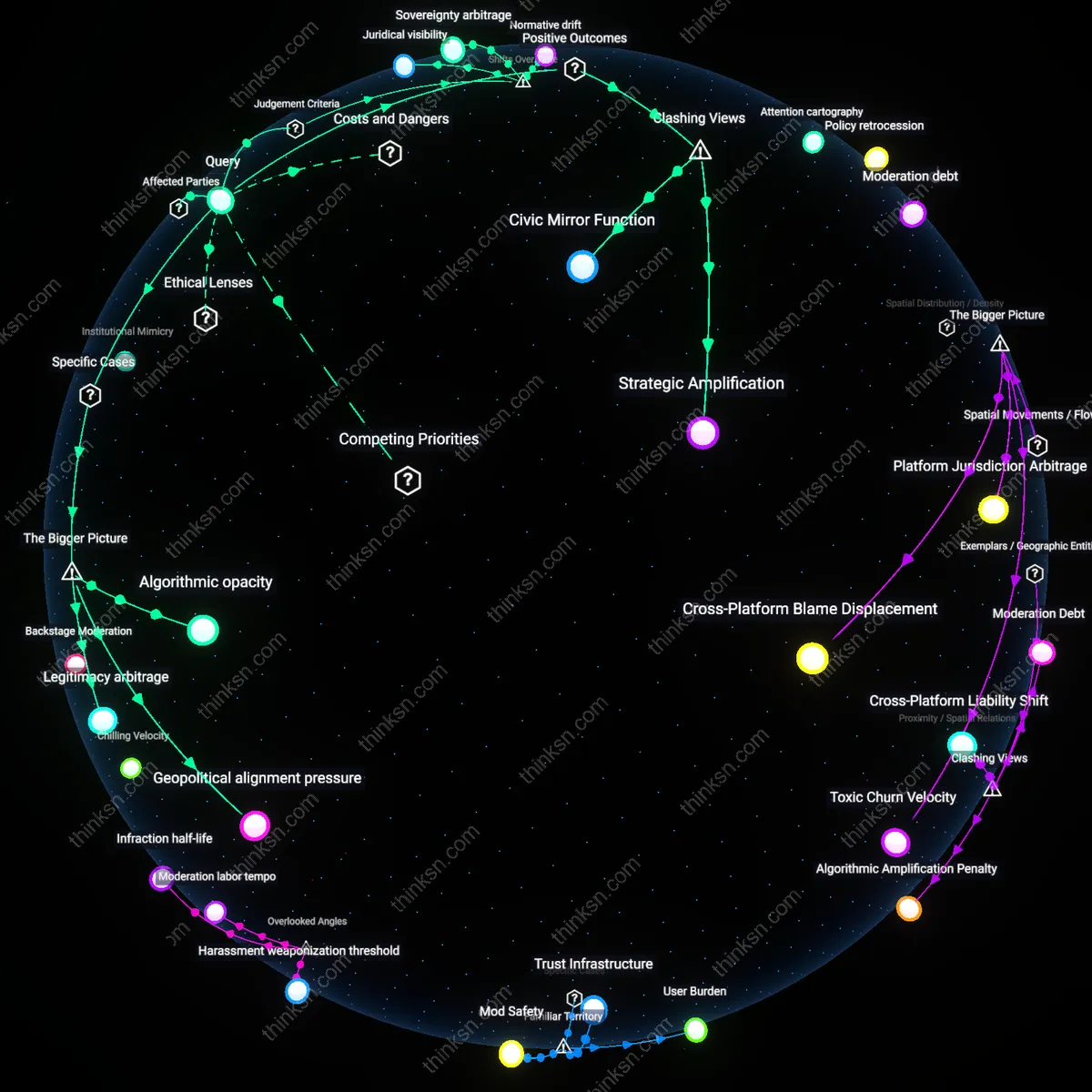

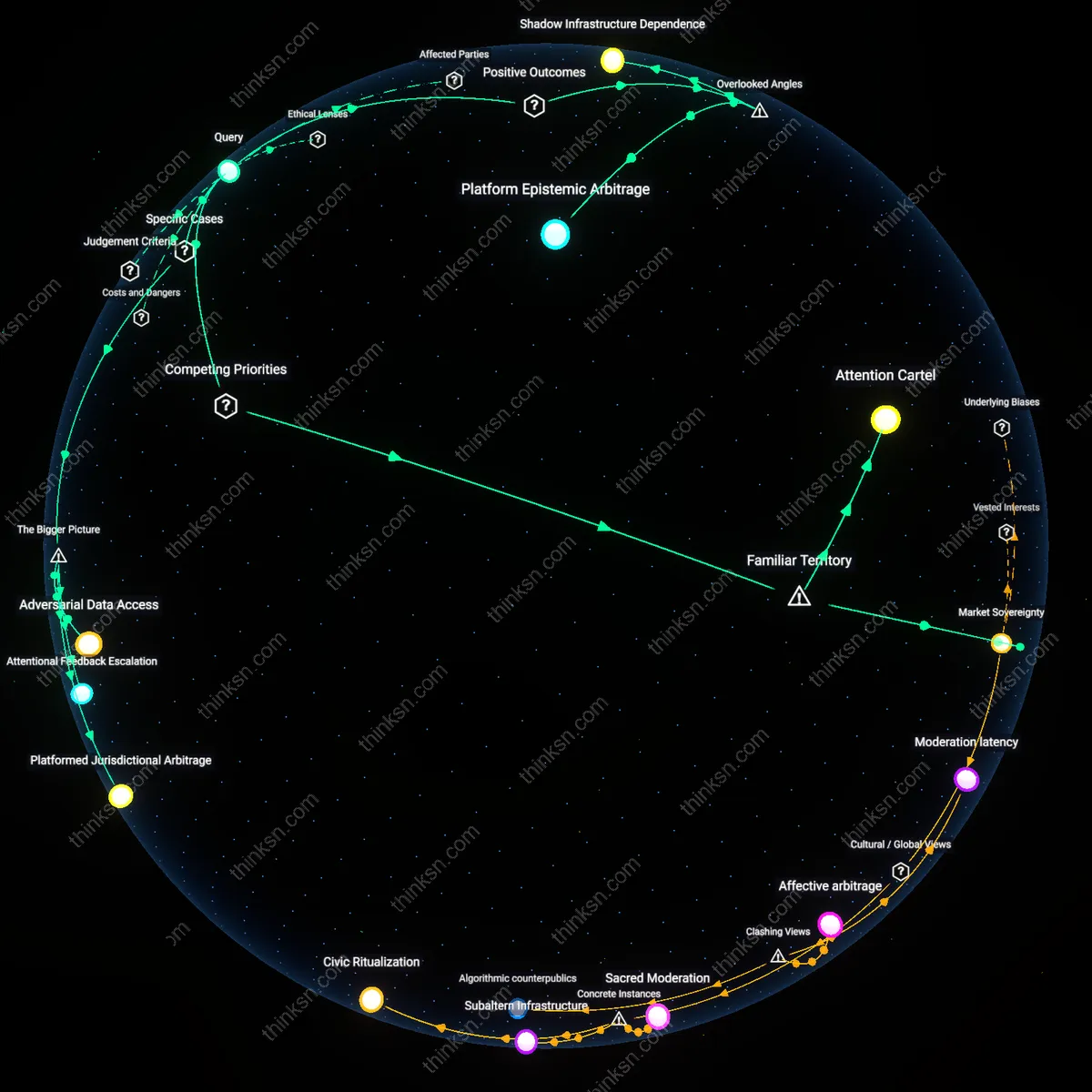

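This analysis identifies 12 thematic connections that help explain why longitudinal studies disagree about whether Facebook use increases political knowledge or polarization.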

Key Findings

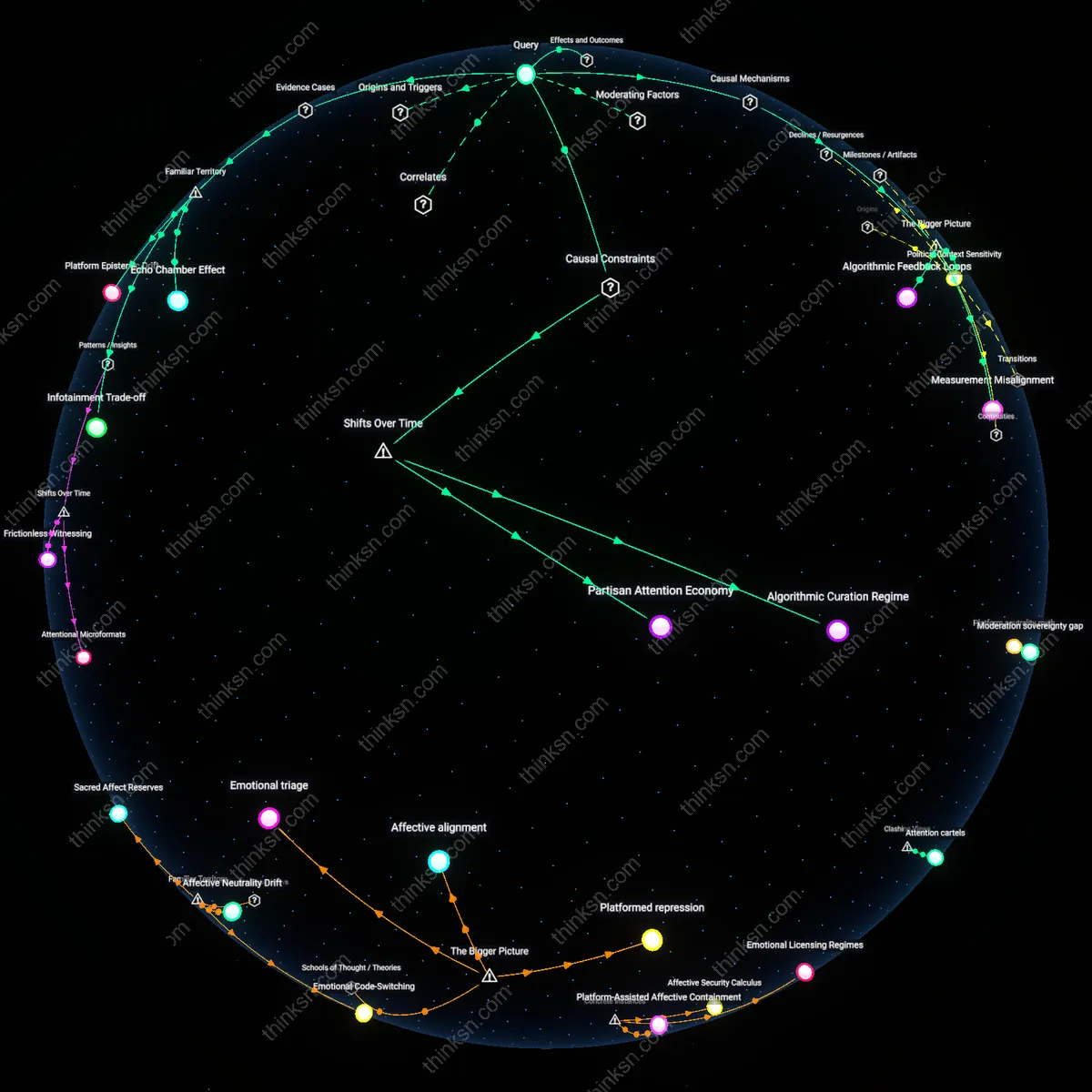

Algorithmic Feedback Loops

Divergent outcomes in longitudinal Facebook studies arise because personalized ranking algorithms amplify ideologically congruent information over time, reinforcing existing beliefs while suppressing cross-cutting exposure. As users engage with politically aligned content, Facebook’s machine learning systems prioritize similar material to maximize engagement, producing epistemic trajectories that either deepen knowledge or entrench polarization depending on the initial composition of a user's network. The mechanism is non-obvious because the same platform logic produces opposite effects depending on pre-existing community structure: knowledge accrual in ideologically diverse networks, polarization in homogeneous clusters. The system's scale and opacity hide how micro-level interactions accumulate into macro-level divergence, so studies that share methods should not be expected to produce uniform results.
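To make the feedback loop concrete, here is a minimal toy model in Python. Everything in it is an assumption for illustration, not Facebook's actual ranking system: the hypothetical functions `step` and `simulate`, the engagement rates (0.3 for congruent posts, 0.1 for cross-cutting ones), and the `network_weight` parameter, which stands for the share of the feed that still mirrors what a user's network supplies. The same update rule is applied to a diverse network (50% congruent) and a homogeneous one (90% congruent).

```python
# Toy model of an engagement-driven feedback loop.
# All names and parameter values are hypothetical; this is NOT Facebook's ranker.


def step(feed_share, network_share, engage_congruent=0.3,
         engage_cross=0.1, network_weight=0.3):
    """Return next round's share of ideologically congruent posts in the feed.

    The ranker reweights each content type by the engagement it earned in the
    current feed; a fixed fraction of the feed (network_weight) still reflects
    the raw mix the user's network supplies.
    """
    congruent = feed_share * engage_congruent
    cross = (1 - feed_share) * engage_cross
    reweighted = congruent / (congruent + cross)
    return network_weight * network_share + (1 - network_weight) * reweighted


def simulate(network_share, rounds=50):
    """Iterate the update rule, starting from a feed that mirrors the network."""
    share = network_share
    for _ in range(rounds):
        share = step(share, network_share)
    return share


for label, network_share in [("diverse", 0.5), ("homogeneous", 0.9)]:
    final = simulate(network_share)
    print(f"{label:12s} cross-cutting exposure: "
          f"{1 - network_share:.2f} -> {1 - final:.2f}")
```

Running it prints roughly `0.50 -> 0.21` for the diverse network and `0.10 -> 0.04` for the homogeneous one: the identical rule shrinks cross-cutting exposure in both, but leaves the diverse network with about five times as much. The floor set by `network_weight` is the design choice that makes starting composition matter; without it, this simple reweighting would drive both feeds toward entirely congruent content.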

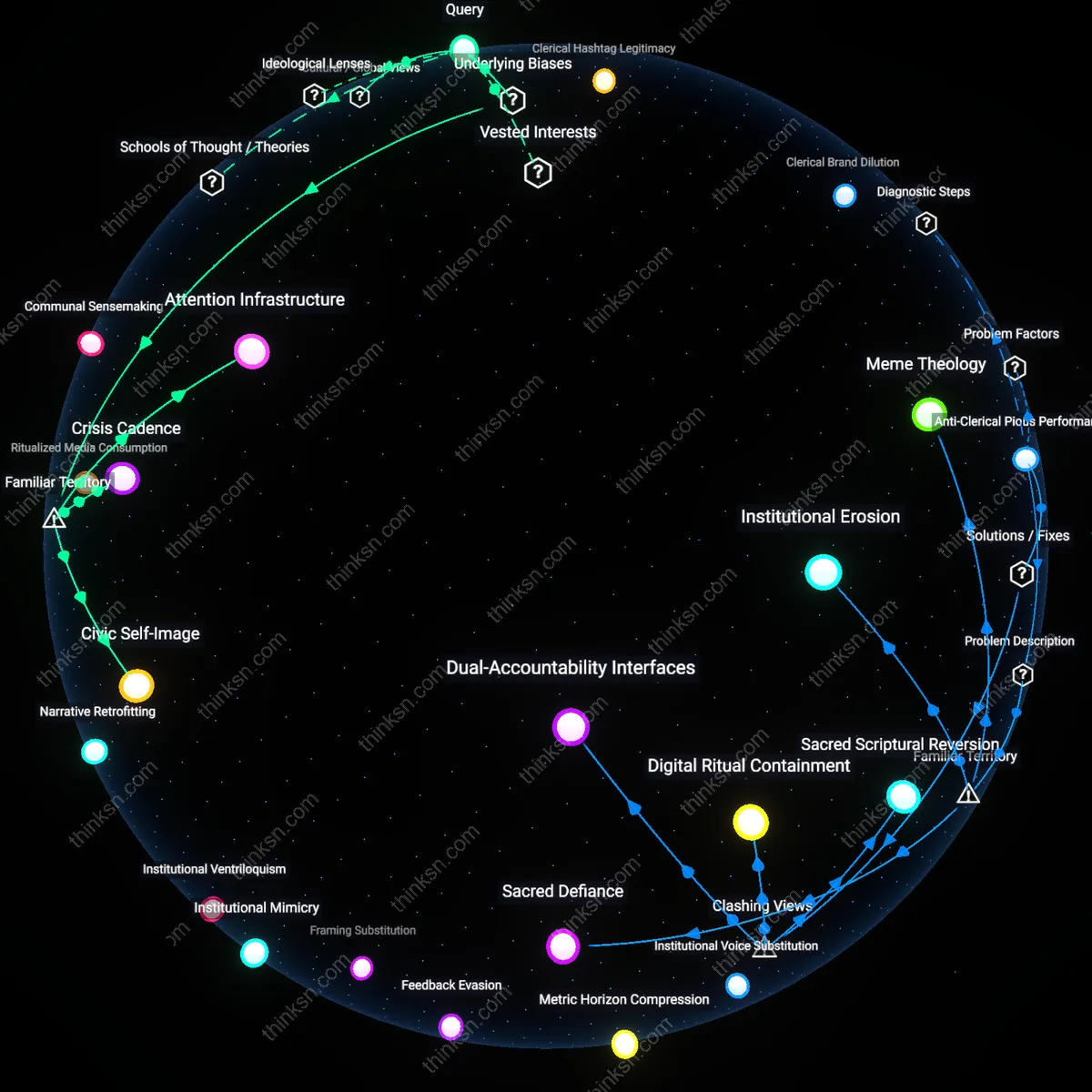

Political Context Sensitivity

Findings vary across Facebook studies because political systems shape how platform use translates into knowledge or polarization. What looks like polarization in stable democracies may reflect opinion clarification, whereas in fragile regimes it may signal mobilization. National regulatory environments, party-system competitiveness, and press freedom determine whether Facebook exposure introduces new information (enhancing knowledge) or amplifies conflict (driving polarization), so identical user behavior can yield different outcomes under different institutional pressures. This dependency is underappreciated because most studies treat platform effects as context-invariant, overlooking how domestic political incentives mediate digital engagement. As a result, the same longitudinal design can capture opposing trends simply because national institutions change how online activity is expressed and interpreted.

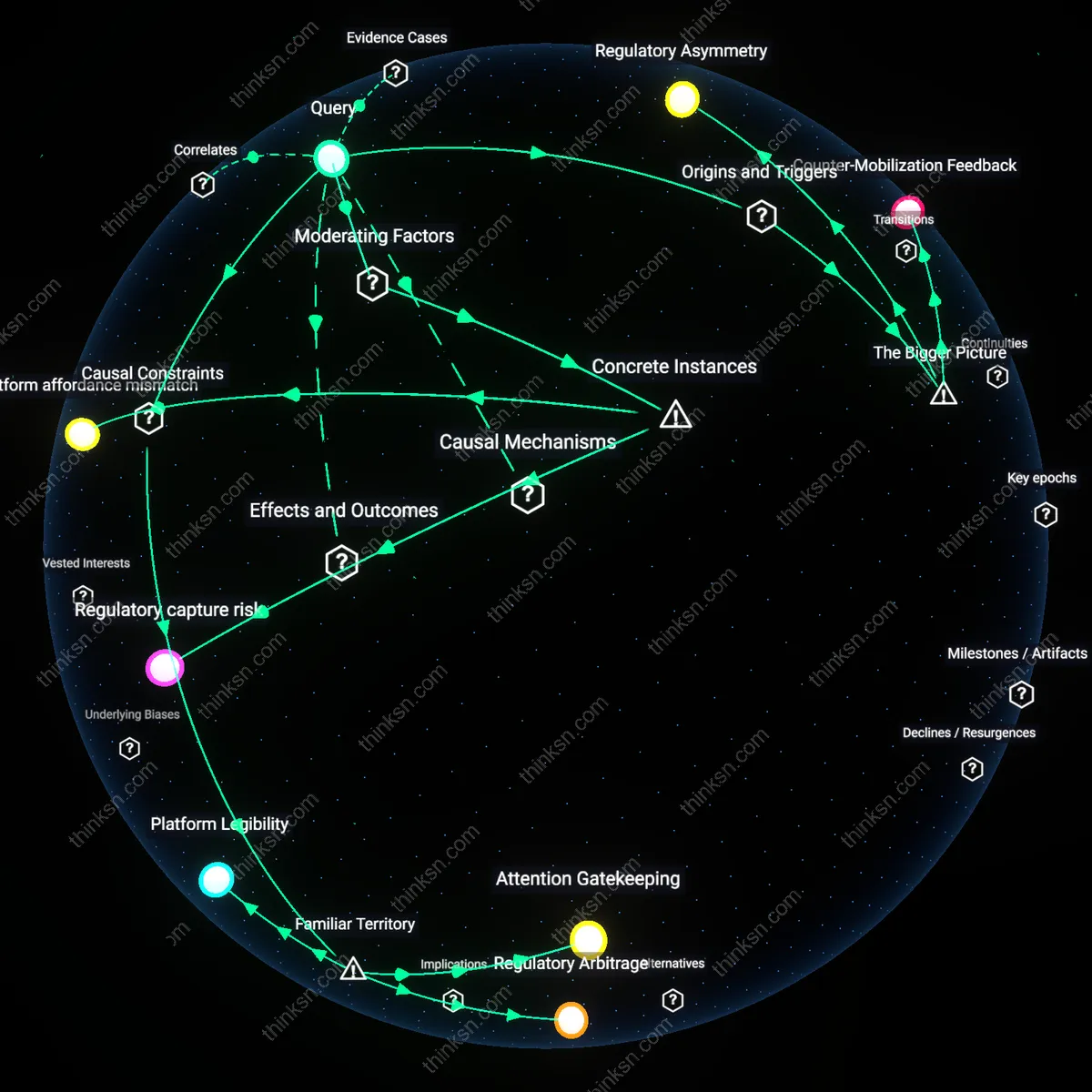

Measurement Misalignment

Inconsistent results in Facebook research stem from a mismatch between standardized, survey-based measures of political knowledge and the dynamic, network-driven way knowledge is actually acquired on the platform. Researchers often treat political awareness as fixed factual recall, while Facebook fosters situational, relational understanding through comment threads, memes, and shared narratives. These processes are invisible to traditional instruments yet influential in shaping affective polarization. The blind spot persists because academic evaluation rewards methodological comparability over ecological validity, so outdated instruments stay in use even as the platform evolves. Consequently, studies of identical behaviors reach conflicting conclusions simply because their tools capture different dimensions of the same phenomenon.

Attention Architecture

Variations in Facebook's algorithmic attention architecture, specifically feed-ranking rules that prioritize emotionally salient over cognitively demanding content, distort the measurement of political knowledge by privileging engagement signals that correlate with outrage rather than comprehension. This mechanism skews longitudinal datasets toward users whose 'knowledge' is embedded in reactive, affect-laden posts rather than substantive political discourse, and it makes cross-study comparisons unreliable when platform algorithms change without corresponding adjustments to survey timing or item design. The non-obvious implication is that what surveys record as political knowledge may be a byproduct of attention capture rather than of learning, which undermines the assumption that survey responses reflect stable cognitive gains.

Platform Ethnography

Longitudinal Facebook studies reach different results because researchers rarely account for the platform ethnography of different user cohorts: their tacit, learned practices of selective sharing, muted engagement, and identity curation, which determine what political content is expressed publicly versus internalized privately. Users in politically volatile settings, such as post-election Kenya or Brazil during its impeachment crises, develop defensive posting habits that suppress visible political expression even as private knowledge grows, creating measurement gaps in studies that rely on public activity. This overlooked dynamic recasts apparent polarization as, at least in part, a visibility artifact rather than an attitudinal shift, exposing how research methods conflate observable behavior with actual belief change.

Infrastructure Temporality

Discrepancies in Facebook study outcomes also stem from unrecorded infrastructural temporality: intermittent internet access, device limitations, and local power cycles in Global South settings such as the rural Philippines or Nigeria fragment user engagement into episodic bursts, breaking the continuity that longitudinal designs assume. These material constraints produce non-random patterns of missing data that can manufacture apparent polarization trends or distort measured knowledge change even when no real change is occurring, because standard models treat gaps in timing as random rather than structurally induced. The overlooked factor is that digital political behavior is shaped not only by ideology and platform design but also by the rhythm of connectivity itself, which systematically biases data toward users in areas with stable infrastructure.
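The missing-data argument can be illustrated with a small hypothetical panel in Python. This sketch is an illustration, not a reanalysis of any study: no one's polarization score ever changes. One hundred users in a stable-connectivity zone score 0.7 and one hundred in an intermittent zone score 0.3 (both values invented). The only thing that varies across waves is how many intermittent-zone users manage to respond; because connectivity is unrecorded, a complete-case analysis simply averages whoever shows up. The names `observed_means`, `STABLE_ZONE`, `INTERMITTENT_ZONE`, and `RESPONDENTS_PER_WAVE` are hypothetical.

```python
from statistics import mean

# Hypothetical panel: every user's polarization score is constant across waves.
STABLE_ZONE = [0.7] * 100        # users with reliable connectivity
INTERMITTENT_ZONE = [0.3] * 100  # users with episodic connectivity

# Assumed number of intermittent-zone users who complete each wave;
# stable-zone users are assumed to respond every time.
RESPONDENTS_PER_WAVE = [100, 60, 30, 10]


def observed_means(stable, intermittent, respondents_per_wave):
    """Complete-case mean score per wave, ignoring who dropped out and why."""
    means = []
    for n in respondents_per_wave:
        observed = stable + intermittent[:n]
        means.append(mean(observed))
    return means


for wave, m in enumerate(observed_means(STABLE_ZONE, INTERMITTENT_ZONE,
                                        RESPONDENTS_PER_WAVE)):
    print(f"wave {wave}: observed mean polarization = {m:.3f}")
```

The observed mean climbs from 0.500 to about 0.664 over four waves, a trend produced entirely by who drops out. Here dropout depends on a variable the analyst never recorded, so it cannot be adjusted for; if connectivity were measured, comparing means within each zone would show no change at all.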

Algorithmic Curation Regime

Facebook's progressive shift from chronological to algorithmically ranked feeds, which deepened through the mid-2010s, constrained political knowledge accumulation by filtering civic content through engagement metrics and disrupting earlier patterns of organic exposure. This change in feed logic entrenched selective visibility: emotionally charged or ideologically aligned content displaced context-rich political material, altering the conditions under which users encountered information. The non-obvious consequence was not increased polarization per se but the decoupling of exposure from civic relevance, a change that studies assuming a static platform design rarely isolate.
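The difference between the two feed logics can be sketched as two sort orders over the same posts. The `Post` fields, the posts themselves, and the `predicted_engagement` scores below are all invented for illustration; a single score is a drastic simplification of any real ranking system, and the hypothetical `context_rich` flag marks the kind of civic material the paragraph describes.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    minutes_ago: int             # recency, used by the chronological feed
    predicted_engagement: float  # hypothetical engagement score
    context_rich: bool           # civic, context-rich political material


# Invented example posts; scores are illustrative, not real data.
POSTS = [
    Post("Council budget hearing recap", 10, 0.05, True),
    Post("Explainer on a local ballot measure", 20, 0.08, True),
    Post("Friend's vacation photos", 30, 0.25, False),
    Post("Outrage clip about the rival party", 50, 0.40, False),
    Post("Meme mocking an opposing candidate", 90, 0.35, False),
]


def chronological(posts):
    """Newest first, regardless of predicted engagement."""
    return sorted(posts, key=lambda p: p.minutes_ago)


def engagement_ranked(posts):
    """Highest predicted engagement first, regardless of recency."""
    return sorted(posts, key=lambda p: p.predicted_engagement, reverse=True)


def context_rich_in_top(feed, k):
    """Count context-rich posts among the first k items a user sees."""
    return sum(p.context_rich for p in feed[:k])


for name, rank in [("chronological", chronological),
                   ("engagement-ranked", engagement_ranked)]:
    feed = rank(POSTS)
    print(f"{name}: {context_rich_in_top(feed, 3)} of top 3 are context-rich")
```

With these made-up numbers, the chronological feed shows two context-rich posts in its top three and the engagement-ranked feed shows none, although both draw on identical content. Exposure changes without any change in what users' networks post, which is the decoupling of exposure from civic relevance described above.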

Partisan Attention Economy

The rise of professionally managed political pages after 2016 constrained short-term polarization effects by turning user attention into a monetizable commodity, making the rate of political learning secondary to the speed of partisan engagement. As campaigns and influencers adopted microtargeted content strategies optimized for virality, political knowledge diffused in fragments across audience segments, suppressing measurable consensus without deepening ideological rigidity. The underappreciated shift was not in user psychology but in the timing and targeting infrastructure that now governs when and how political content surfaces.

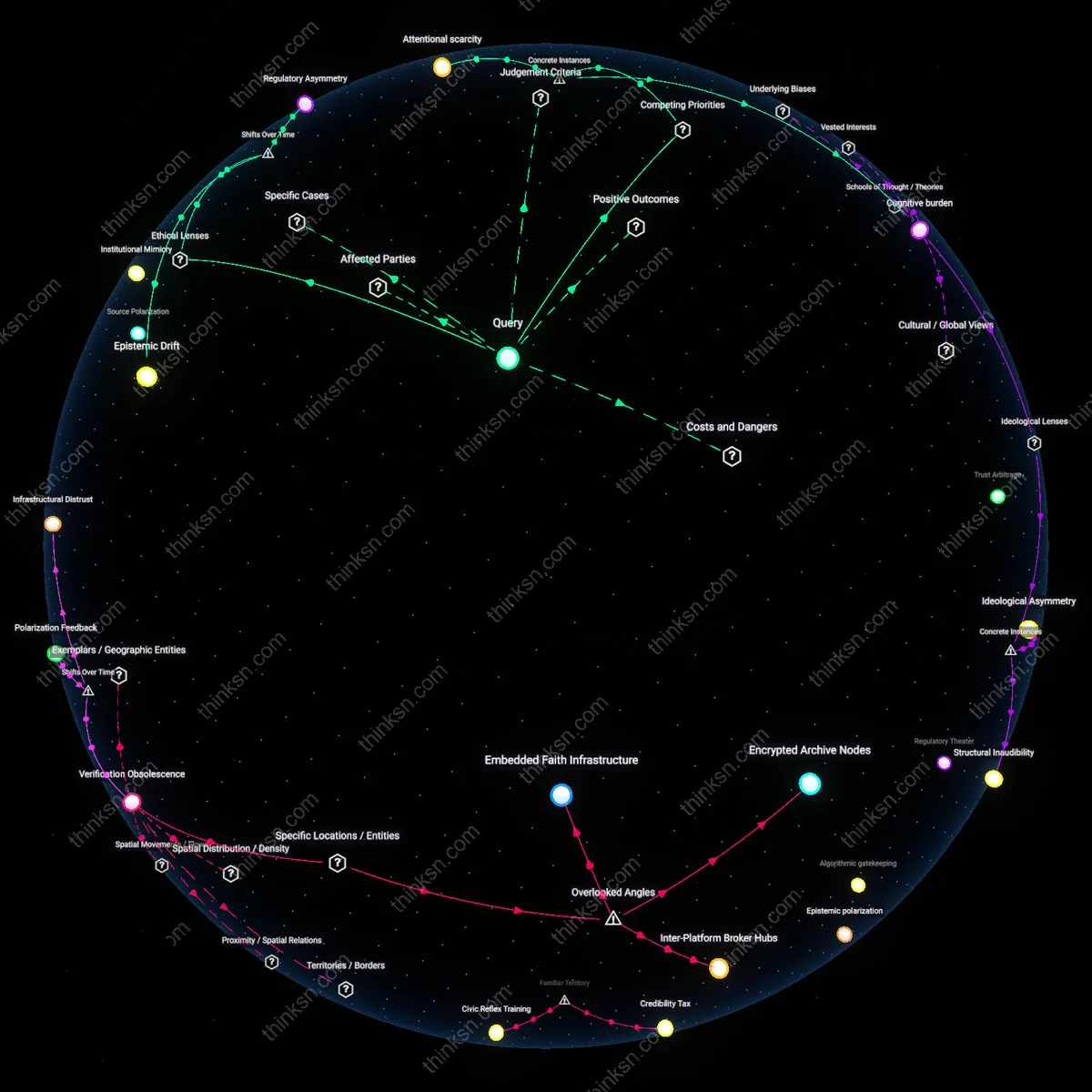

Platform-Dependent Literacy

The transition from desktop-dominated to mobile-first Facebook use between 2012 and 2015 constrained sustained political learning by compressing interaction into brief, habitual sessions poorly suited to complex information processing. As interface design prioritized scrolling speed over dwell time, knowledge accumulation weakened, not because content quality declined but because the mobile context offered fewer cognitive affordances for sustained attention. This trajectory suggests that polarization can spike briefly without corresponding gains in knowledge, a disparity that emerged once digital literacy became tethered to platform-specific interaction rhythms.

Echo Chamber Effect

Facebook's algorithmic feed amplifies ideologically congruent content, increasing exposure to one-sided political information. Users in politically homogeneous networks, such as conservative rural communities in the U.S. Midwest or progressive urban clusters in coastal cities, receive repeated affirmation of their beliefs, which strengthens conviction without improving factual understanding. This dynamic creates an illusion of knowledge growth while narrowing informational range, a pattern visible in longitudinal studies tracking users in places like Alabama and Oregon, where engagement correlates with polarization but not with quiz-based political knowledge. The non-obvious insight is that the very engagement Facebook optimizes for (likes, shares, comments) erodes epistemic diversity under the guise of political interest.

Infotainment Trade-off

News outlets and pages that prioritize shareable, emotionally charged political content, like those dominating Facebook in Brazil during Bolsonaro's presidency or in India under Modi, generate high engagement but low information density. These environments reward brevity, outrage, and moral clarity over complexity, so users absorb strong affective cues without gaining detailed policy knowledge. The mechanism operates through Facebook’s virality metrics, which elevate simple narratives over nuanced analysis and leave users feeling politically informed while mainly absorbing partisan framing. The overlooked reality is that users can mistake emotional arousal for learning, a pattern especially visible in Facebook-dominant information ecosystems where traditional news is sidelined.

Platform Epistemic Drift

In countries like the Philippines, where Facebook effectively is the internet for millions of users, political knowledge is shaped less by diverse sources than by trending topics and influencer narratives that shift rapidly with the platform's dynamics. Over time, this creates a knowledge baseline that responds strongly to algorithmic pulses, such as sudden surges of disinformation during elections, making political awareness volatile and context-dependent rather than cumulative. Longitudinal variability in polarization and knowledge measures therefore reflects the platform's shifting epistemic norms, driven by Meta's global infrastructure and local gaps in content moderation, rather than changes in users themselves. The underappreciated point is that Facebook does not just deliver information; it redefines what counts as knowledge in real time.