Do Personalized News Feeds Shape Political Beliefs on Free Search Engines?

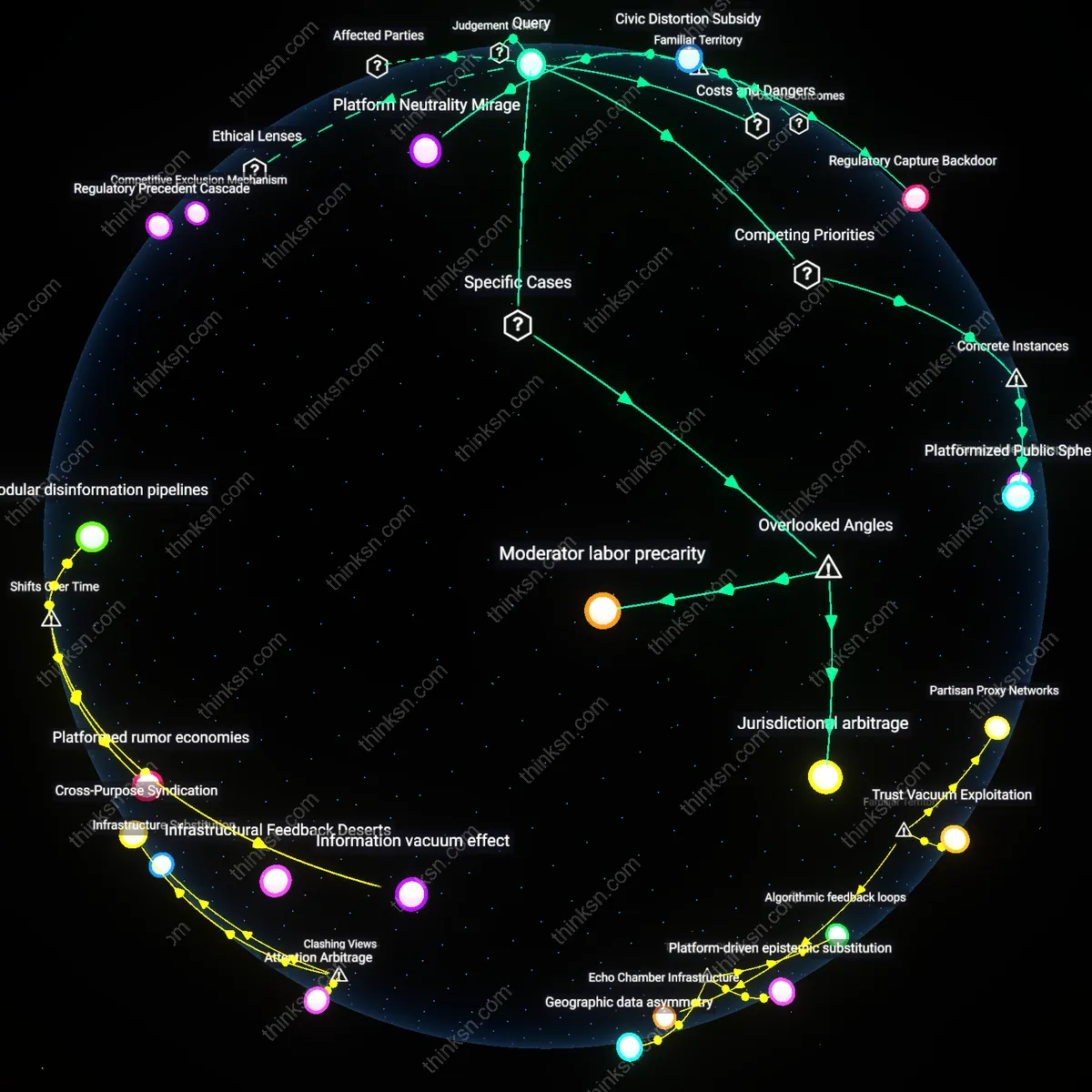

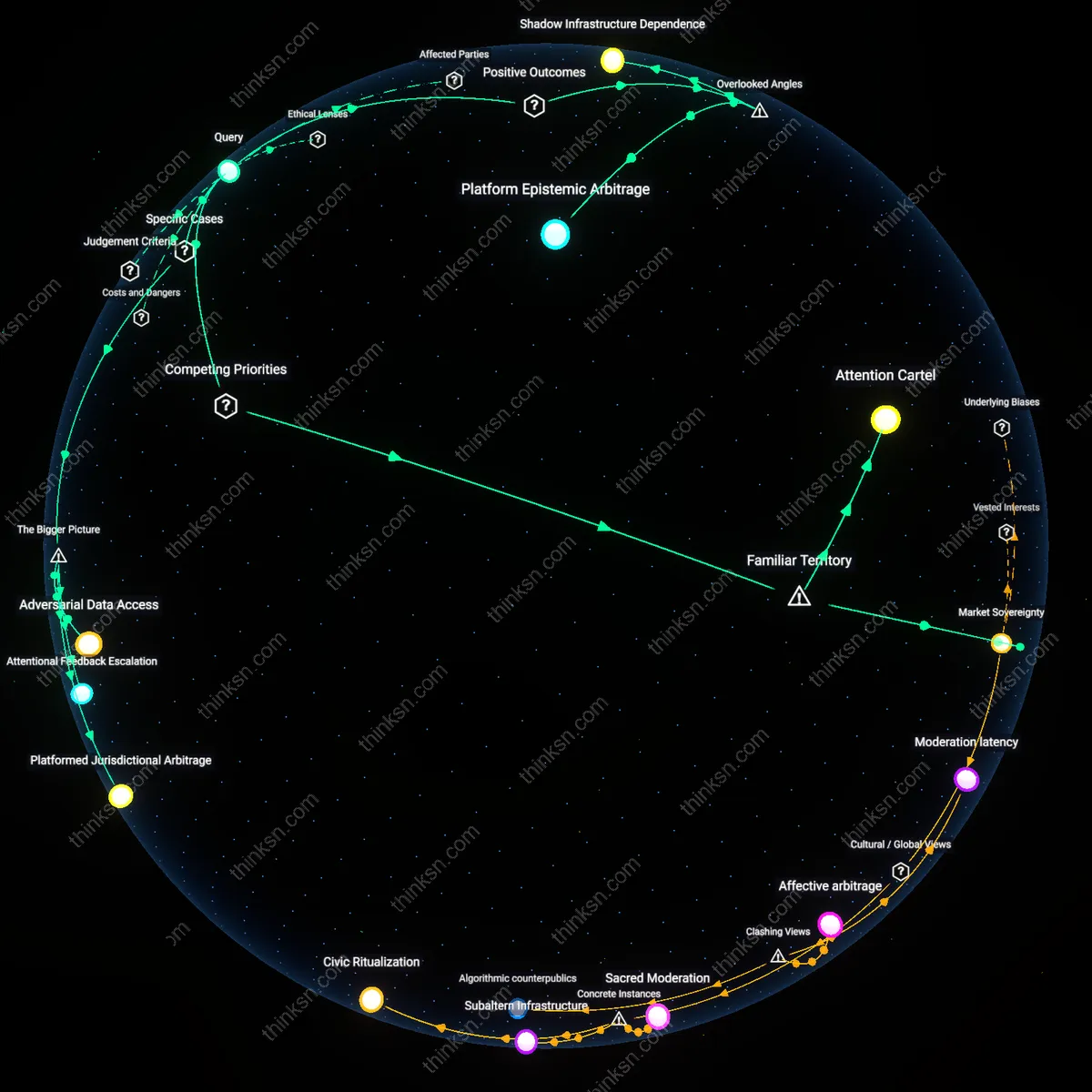

Analysis reveals 10 key thematic connections.

Key Findings

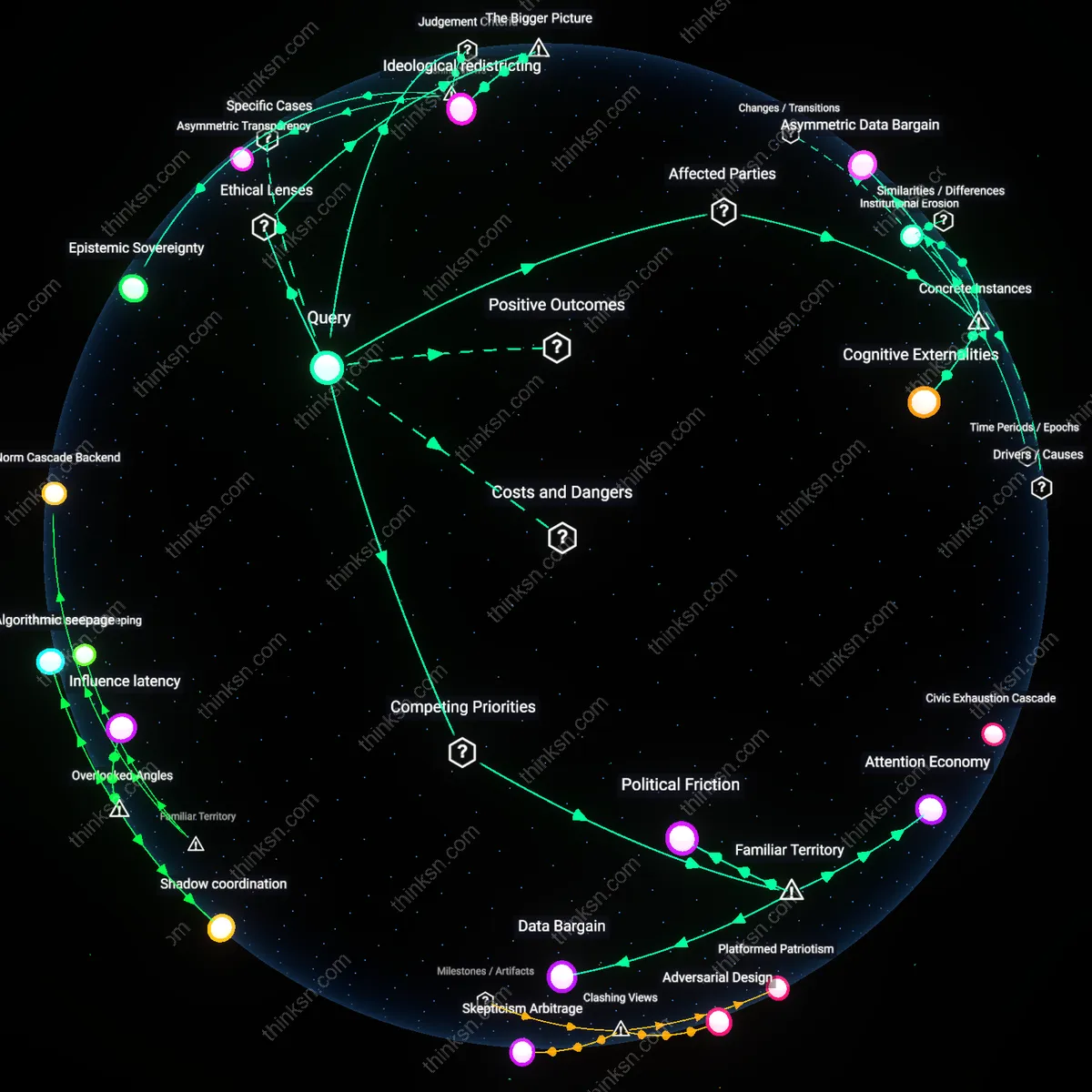

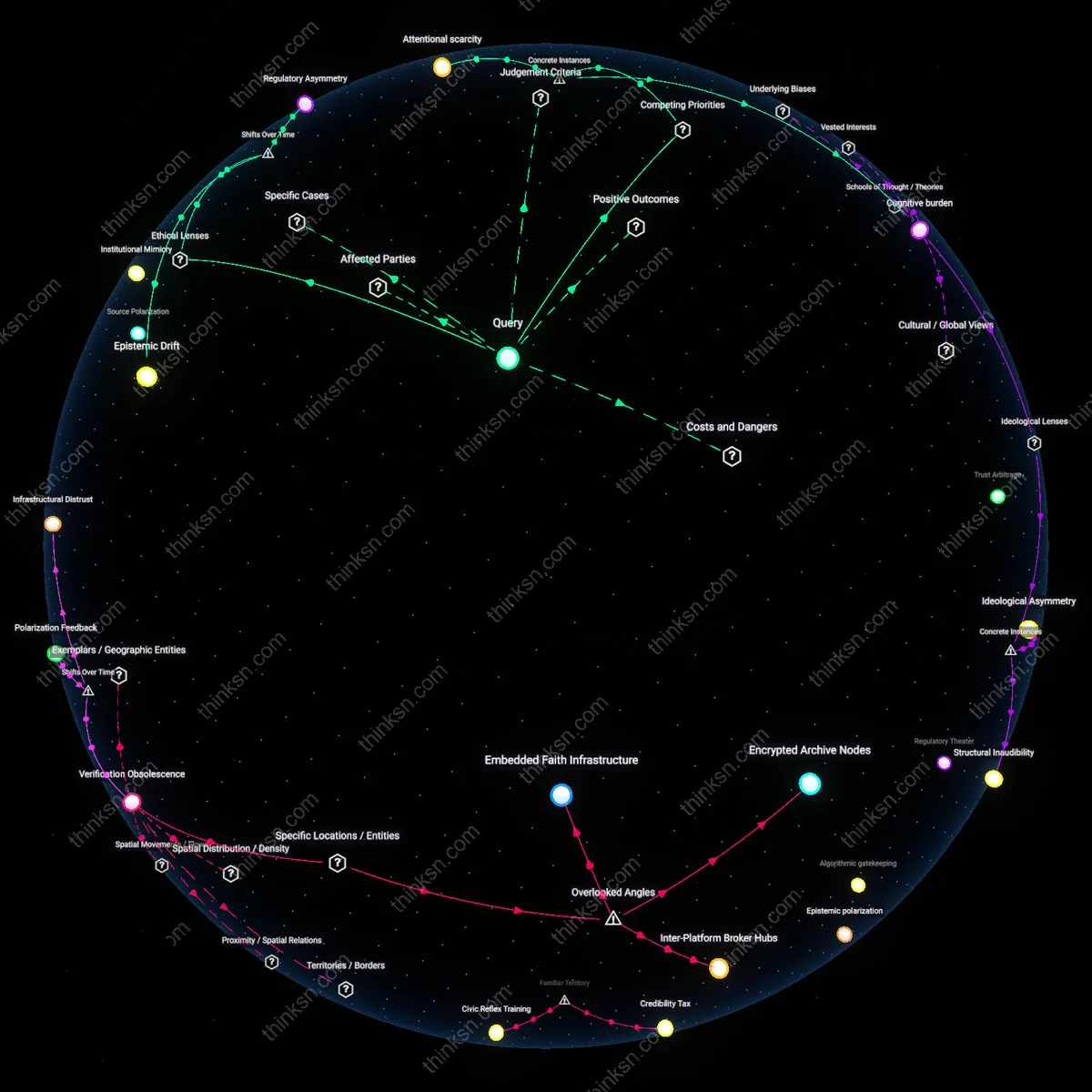

Cognitive Externalities

One should decline personalized news feeds when the profiling system amplifies societal polarization. In the 2016 U.S. presidential election, Facebook’s microtargeting algorithms enabled politically divisive content to spread disproportionately among ideologically isolated users. The mechanism, behavioral data repurposed into engagement-driven content filtering, shifted political discourse by reinforcing preexisting beliefs. This shows that user profiling generates cognitive externalities: it degrades public reasoning in ways that reach beyond any individual's choice.

Asymmetric Data Bargain

One should resist personalized news feeds when the data exchange benefits platform profitability far more than user autonomy. Google’s Ad Personalization system in India is an example: during the 2019 national election, rural users with low digital literacy unknowingly traded their browsing behavior for targeted political ads. Users receive free access while platforms extract high-value behavioral profiles, and this asymmetry demonstrates that the data bargain is structurally skewed against marginalized demographics who lack the tools to audit or withdraw consent.

Institutional Erosion

One should disable personalized feeds when concentrated algorithmic control undermines media pluralism. This occurred in Brazil during Jair Bolsonaro’s 2018 campaign, when WhatsApp, then owned by Facebook, became a primary vector for unverified, personalized political messaging that circumvented traditional journalistic oversight. The consolidation of influence within a private messaging infrastructure eroded the institutional gatekeeping functions of the press, showing how profiling at scale can displace civic information ecosystems.

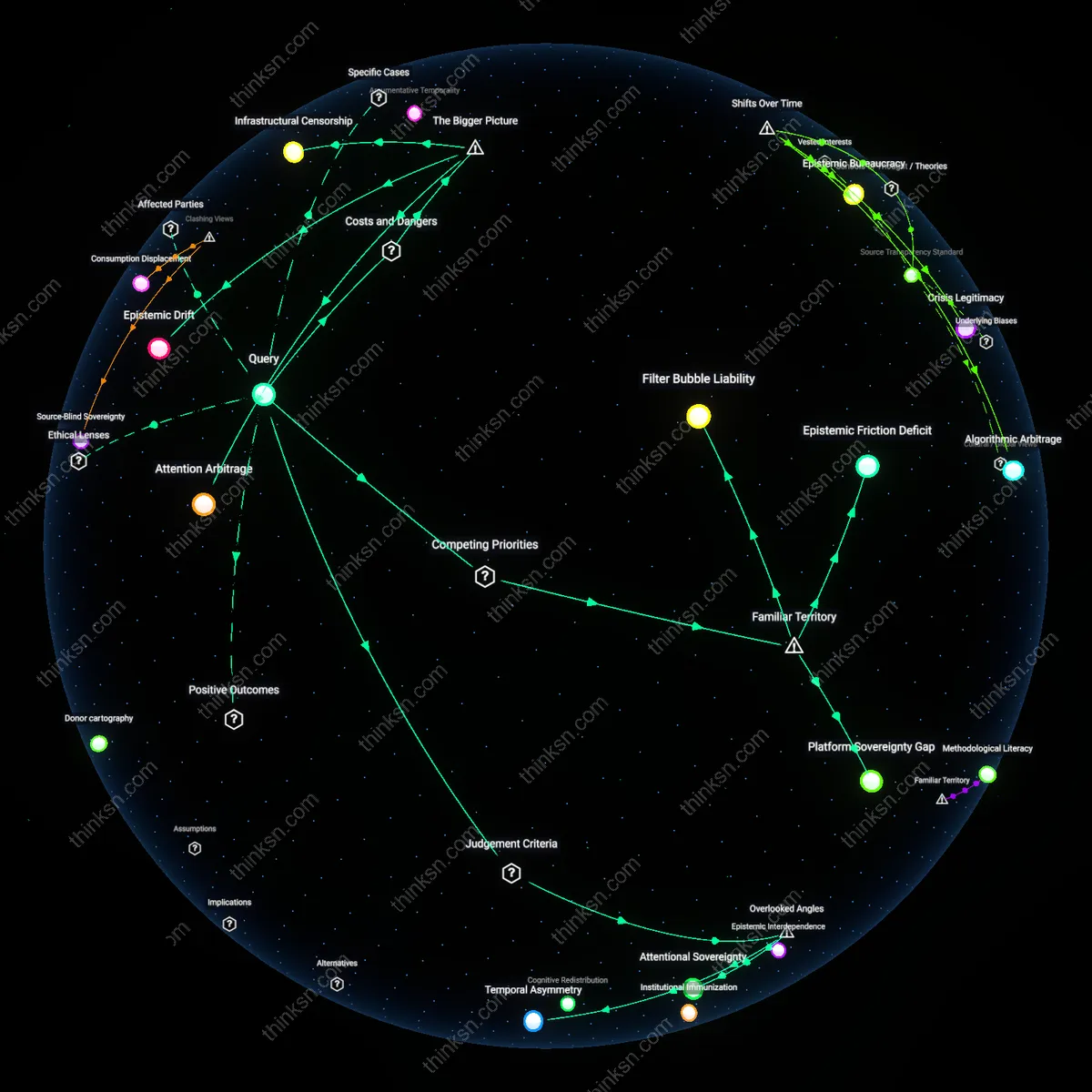

Epistemic Sovereignty

One should disable personalized news feeds to preserve epistemic sovereignty: self-determination in belief formation that resists algorithmic shaping by corporate architectures. Search engines like Google and Bing operationalize user profiling through behavioral tracking across platforms, constructing feedback loops that elevate emotionally reactive content over factually robust information. Political opinion thereby becomes a function of engagement optimization rather than public reasoning. This mechanism reframes the user not as a citizen but as a data point in a prediction market, where the non-obvious risk is not misinformation per se but the quiet substitution of democratic deliberation with infrastructural nudging masked as convenience.

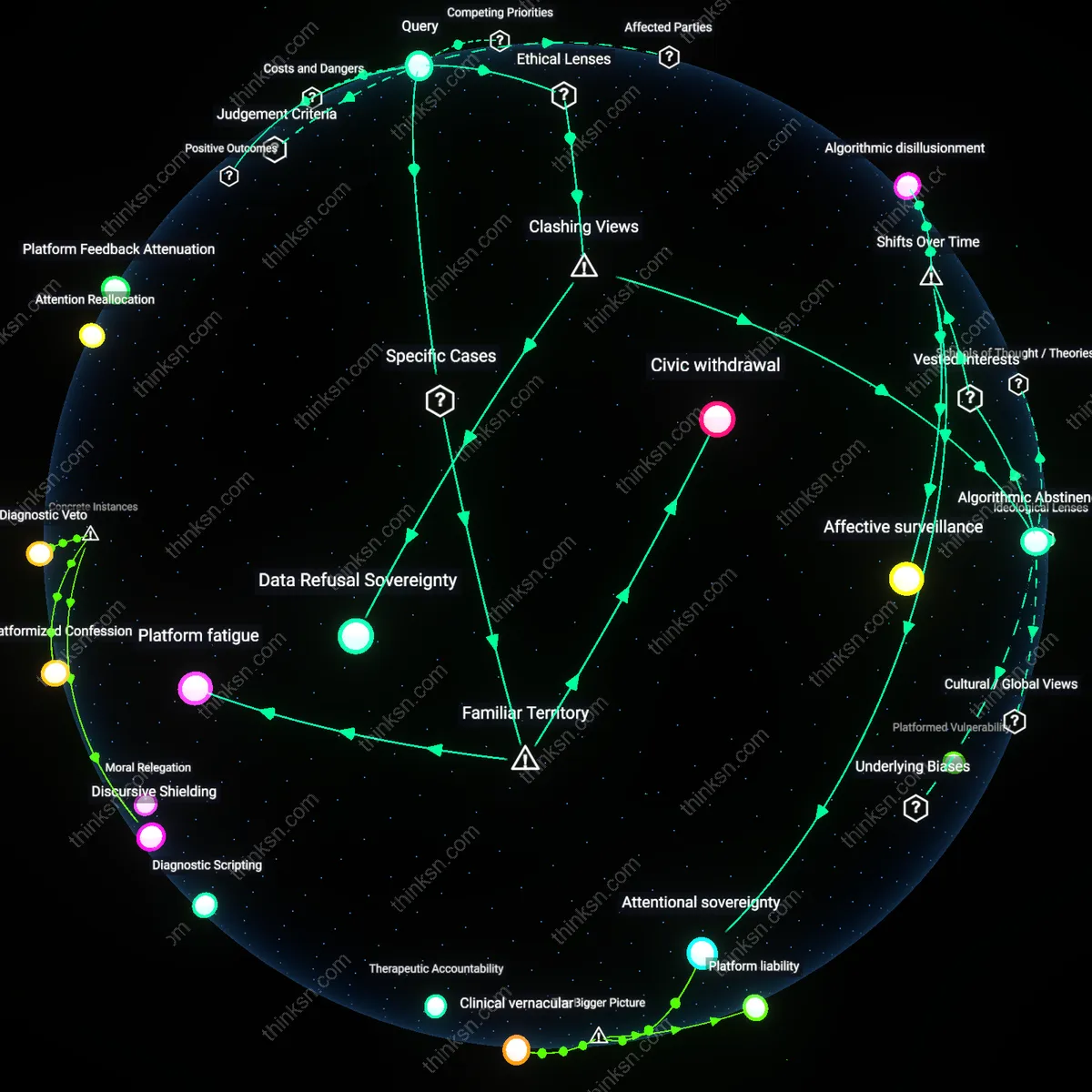

Structural Captivity

One should accept personalized feeds only when able to repurpose them as a means of exposing structural captivity in digital public spheres, where the illusion of choice masks systemic dependencies on proprietary algorithms. Evidence indicates that even users who actively seek diverse perspectives are funneled through unseen ranking hierarchies controlled by dominant search platforms, which prioritize brand safety and user retention over ideological diversity. The non-obvious truth this reveals is that refusal becomes politically inert without collective infrastructure—individual opt-outs reinforce the perception of personal responsibility while shielding corporations from accountability for shaping the contours of political thought at scale.

Asymmetric Transparency

One should enable personalized feeds only under regimes of asymmetric transparency, where users gain full access to the criteria shaping their content while corporations remain opaque to public oversight. In jurisdictions like the EU, the GDPR's right of access (Article 15) allows data access requests that expose fragments of profiling logic, turning personalization into a forensic tool rather than passive consumption. The dissonant insight is that compliance with surveillance capitalism can become subversive when individuals weaponize transparency rights to reverse-engineer ideological sorting mechanisms, transforming user consent from complicity into a form of institutional sabotage.

Attention Economy

One should disable personalized news feeds to resist the erosion of democratic discourse by commercial algorithms. Platforms like Google and Meta monetize user attention through behavioral microtargeting, which rewards outrage and confirmation bias to increase engagement, thereby reshaping political perception not through overt censorship but through invisible reward structures. Most people recognize 'filter bubbles' as a risk but fail to see that the bubble is not a side effect. It is the product design, sustained by real-time auction-based ad platforms that prioritize virality over accuracy. This reframes the user not as a citizen but as a node in a profit-driven distribution network where political influence is a byproduct of revenue optimization.

Data Bargain

One should accept personalization when one lacks viable alternatives for accessing information, because opting out of profiling often means opting out of functionality in systems like Google Search or Facebook News Feed. The 'free' service is not truly free but paid for through continual data extraction, and users in lower-income communities or with limited digital literacy disproportionately trade privacy for utility. While the trade-off between convenience and surveillance is widely acknowledged, what remains obscured is that the bargain is structurally coercive: there are no mass-market non-profiling substitutes, which makes refusal a privilege of time, knowledge, and access.

Political Friction

One should enable personalized news feeds when one seeks reinforcing views during periods of social instability, because algorithmic alignment increases the perceived legitimacy of information among partisan groups. In polarized environments like the U.S. after 2016 or Brazil during election cycles, users turn to familiar narratives as cognitive anchors, and platforms respond by deepening the ideological coherence of feeds to maintain trust in their service. While critics blame platforms for radicalization, what is overlooked is that personalization can function as a stabilizing force for individuals. It reduces cognitive dissonance at the cost of societal consensus, turning political disagreement into a managed user experience rather than a public conflict.

Ideological Redistricting

One should oppose personalized news feeds because they function as a form of ideological redistricting, in which user preferences are algorithmically gerrymandered to maximize platform profitability rather than democratic equity. The process is sustained by the regulatory vacuum that enforcement gaps in the European Union’s Digital Services Act leave around behavioral microtargeting. This redistricting fragments the public sphere into privately managed opinion clusters, enabling political actors to exploit psychographic segmentation developed by successor firms to Cambridge Analytica. The overlooked mechanism is that these systems don’t just reflect political differences. They actively redraw the boundaries of ideological possibility to align with commercial engagement models, transforming democratic contestation into a byproduct of ad optimization.