Is YouTube Spreading Health Lies During Pandemics?

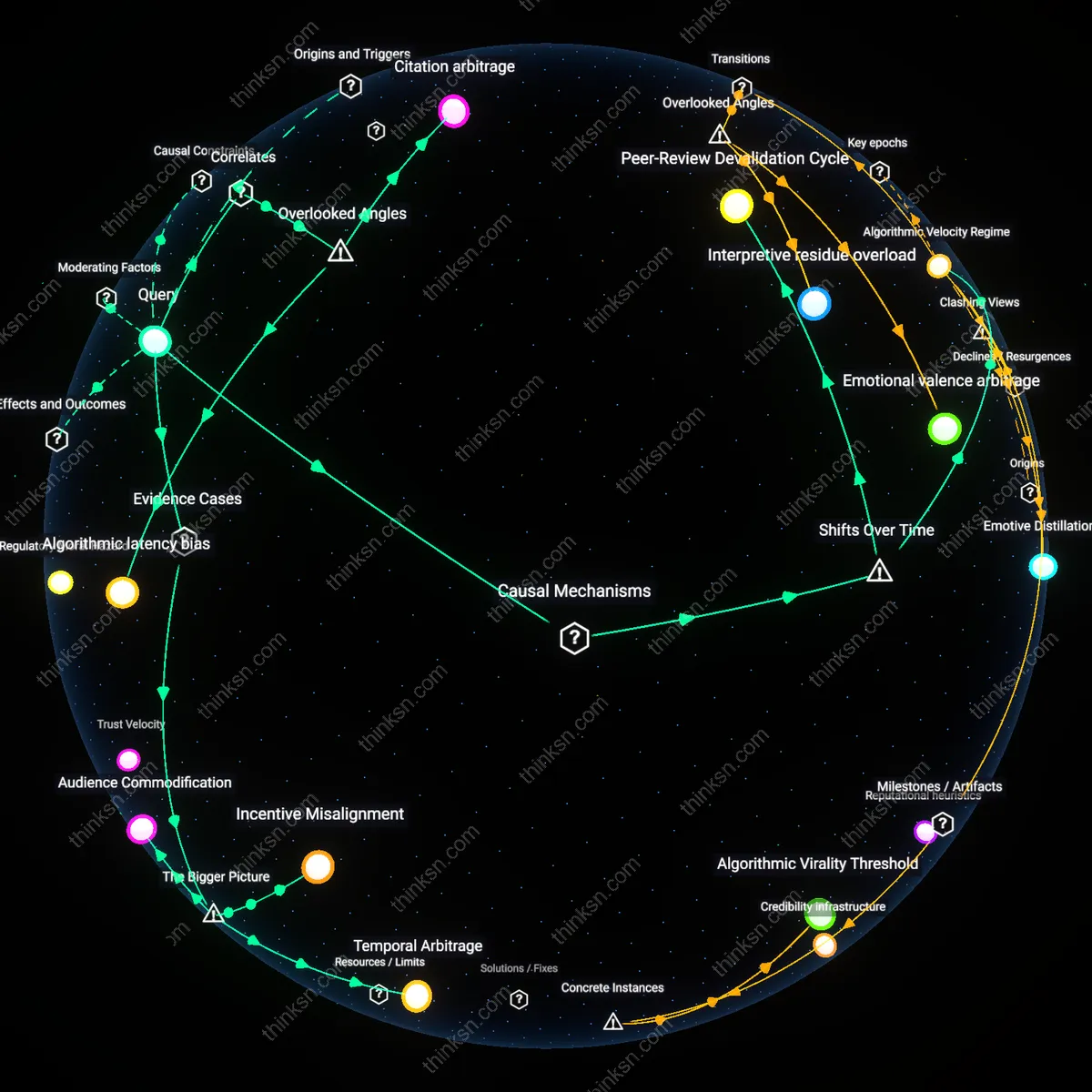

Analysis reveals 11 key thematic connections.

Key Findings

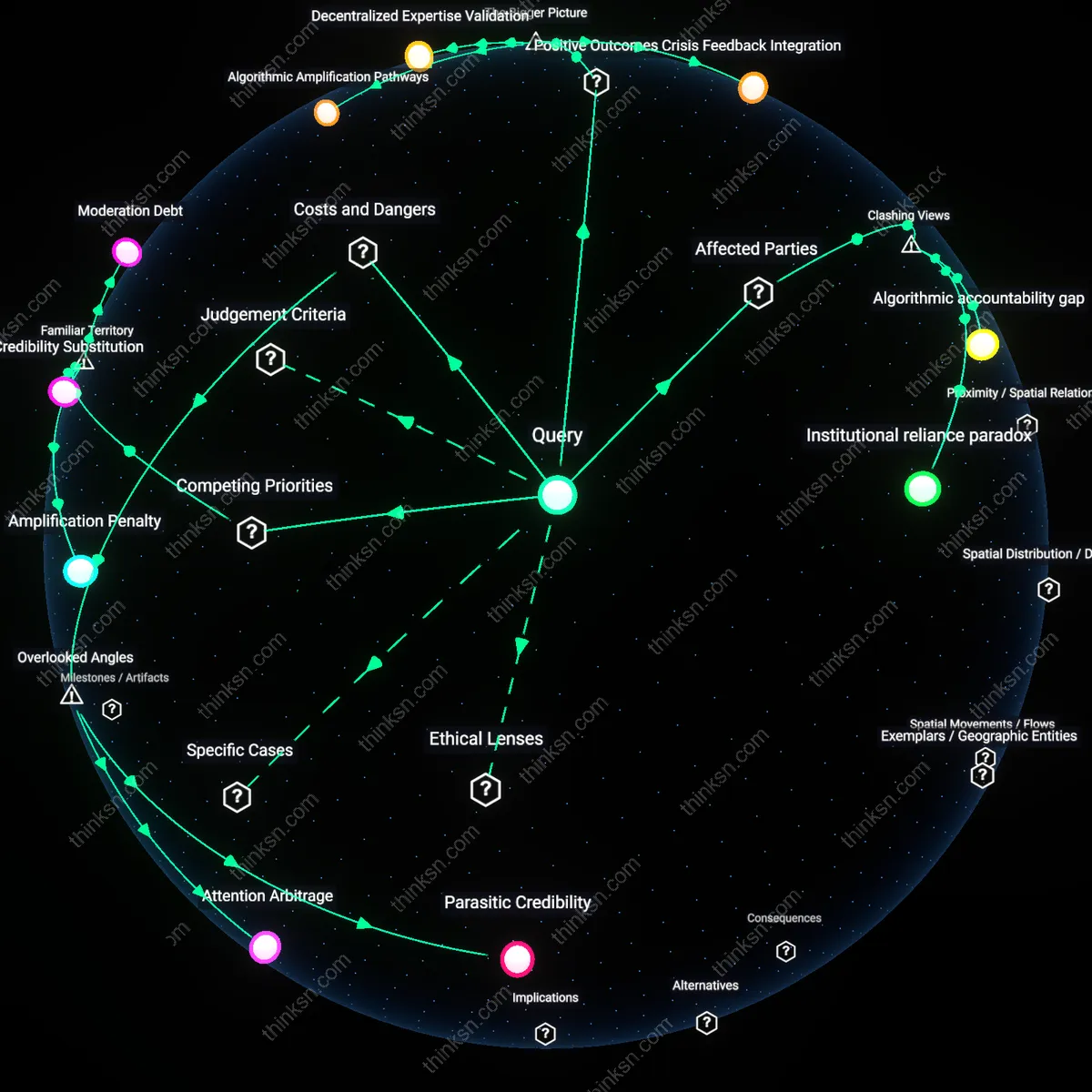

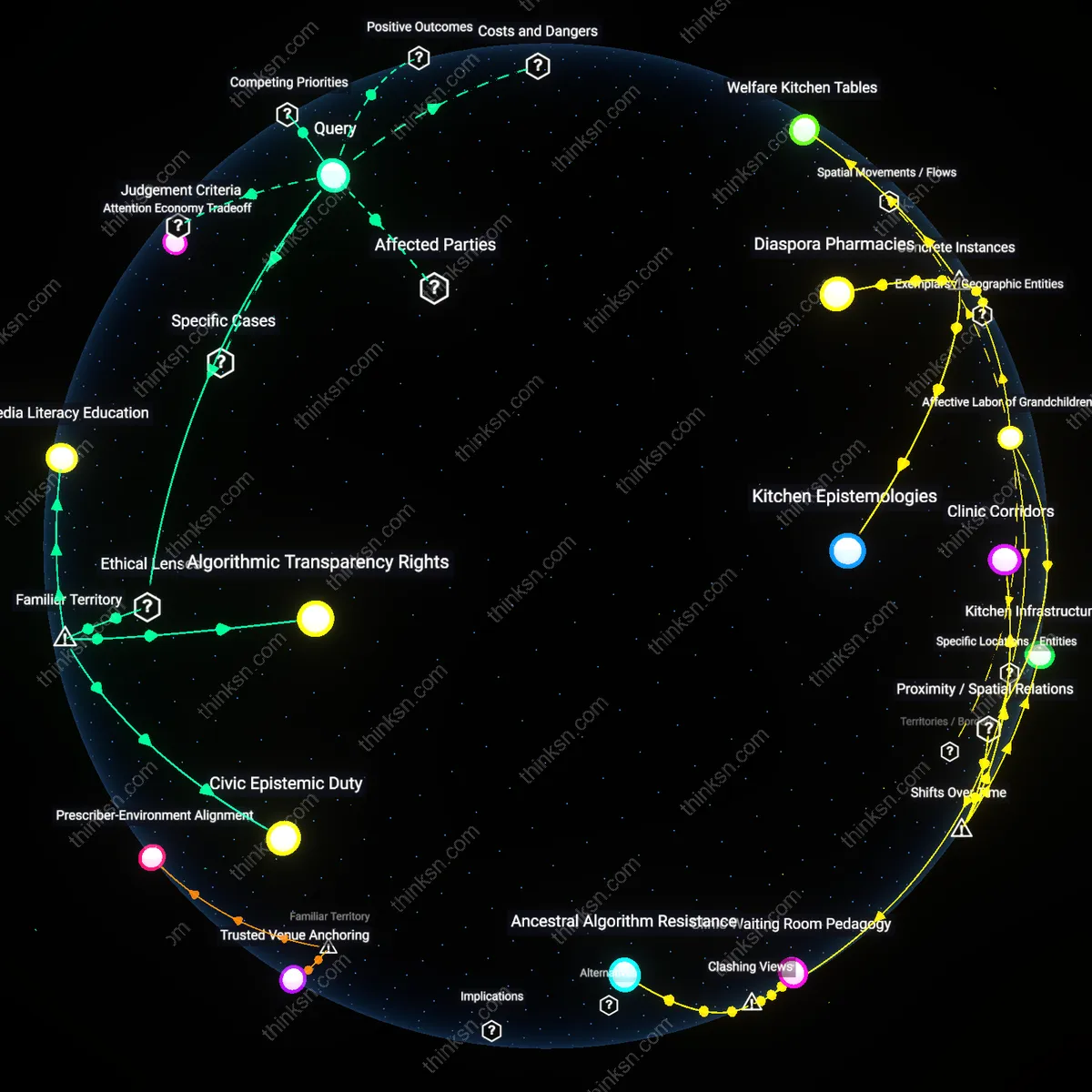

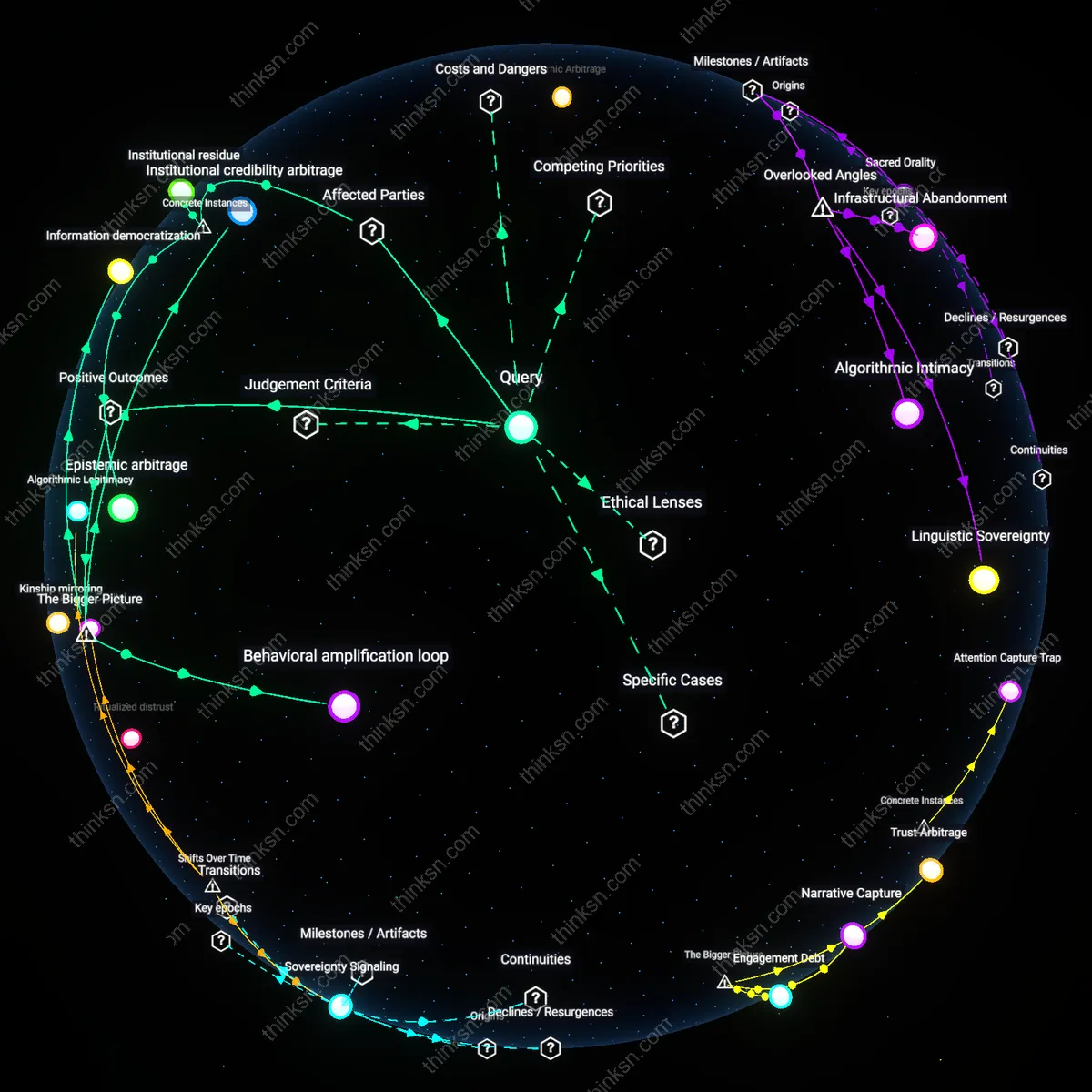

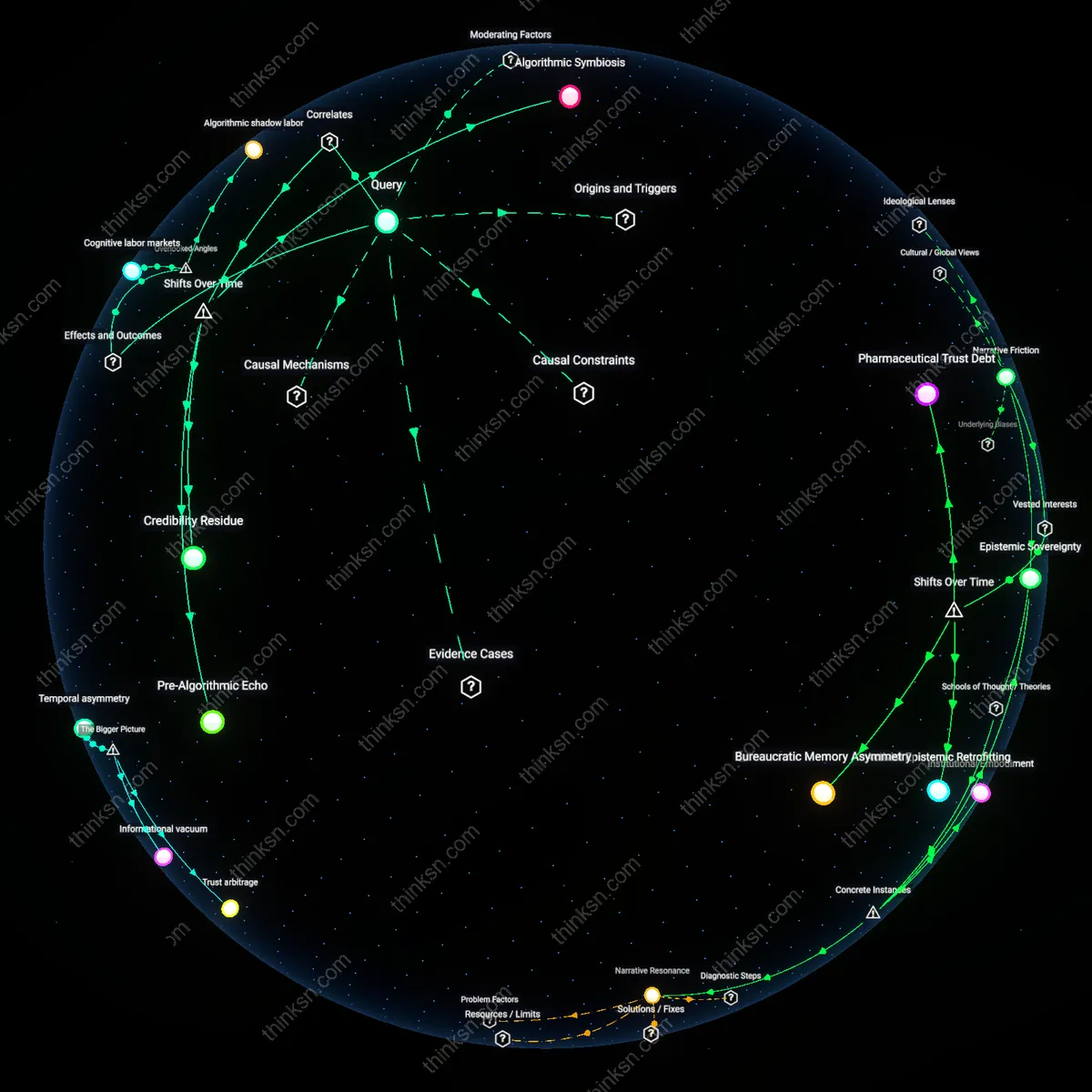

Algorithmic Accountability Gap

Early evidence suggests that YouTube’s recommendation algorithm disproportionately amplifies health misinformation during pandemics by prioritizing engagement over accuracy. The effect falls hardest on vulnerable populations, such as elderly viewers and non-English speakers who rely on visual content for medical guidance. The mechanism is automated content promotion in low-regulation environments: fringe health claims generate higher watch time and are therefore systematically boosted, which makes YouTube an active architectural participant in misinformation diffusion rather than a neutral platform. The non-obvious insight is that the platform’s infrastructure, not just its content, constitutes a public health risk, undermining the assumption that user choice alone drives exposure.

Institutional Reliance Paradox

Public health agencies like the CDC and WHO have increasingly uploaded official guidance to YouTube, inadvertently legitimizing it as a primary health communication channel despite its documented flaws. That makes these institutions both victims of misinformation and co-signers of a problematic delivery system. The result is a dependency loop: governments must use YouTube to reach mass audiences, and in doing so they reinforce its perceived authority even as it hosts contradictory, harmful content. The dissonance is that trustworthiness is being outsourced to a system whose incentives structurally oppose the stability and accuracy that effective public health messaging requires.

Epistemic Triage Burden

Users, particularly younger adults and caregivers in medically underserved regions, are de facto tasked with evaluating the credibility of vaccine or treatment claims on YouTube without access to consistent markers of expertise, peer review, or sourcing. Responsibility for verification thereby shifts from institutions to individuals. The burden arises in fragmented information ecologies where a single search returns equally polished videos from doctors, conspiracy theorists, and commercial sponsors, masking epistemic hierarchies beneath an appearance of algorithmic neutrality. The overlooked reality is that 'media literacy' is insufficient when design features obscure provenance, turning every viewer into an under-resourced fact-checker during a crisis.

Algorithmic Amplification Pathways

YouTube’s recommendation algorithm accelerates the visibility of medically debunked content during pandemics because it prioritizes engagement metrics over source credibility. Google’s automated systems reward watch time and user retention, and extremist and fear-based health narratives exploit those signals more effectively than clinical updates do. As a result, public health authorities lose their communicative lead during critical decision windows, which shows how platform architecture, rather than user choice, shapes information hierarchies in a crisis.
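The ordering effect described here can be illustrated with a toy ranker. This is a minimal sketch under stated assumptions, not a description of YouTube’s actual system: the `Video` fields, the `credibility` score, the blending formula, and every number below are hypothetical and chosen only to show how weighting engagement alone can push a low-credibility video to the top.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Video:
    title: str
    watch_minutes: float  # hypothetical average watch time per view (must be > 0)
    credibility: float    # hypothetical 0..1 source score; not a real YouTube field


def rank(videos, credibility_weight=0.0):
    """Sort videos by a blend of normalized watch time and credibility.

    credibility_weight=0.0 is pure engagement ranking; 1.0 is pure credibility.
    """
    top_watch = max(v.watch_minutes for v in videos)

    def score(v):
        engagement = v.watch_minutes / top_watch  # scale engagement to 0..1
        return (1.0 - credibility_weight) * engagement + credibility_weight * v.credibility

    return sorted(videos, key=score, reverse=True)


# Invented numbers, used only to illustrate the ordering effect.
videos = [
    Video("Clinical update on vaccine schedule", 3.0, 0.95),
    Video("The cure they are hiding", 9.0, 0.05),
    Video("Doctor explains side effects", 6.0, 0.85),
]

engagement_only = [v.title for v in rank(videos)]
blended = [v.title for v in rank(videos, credibility_weight=0.5)]
```

With these invented inputs, `engagement_only` places "The cure they are hiding" first, while `blended` moves it to last place behind the two credentialed sources. The point is only that the choice of ranking signal, not the viewer, decides the order.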

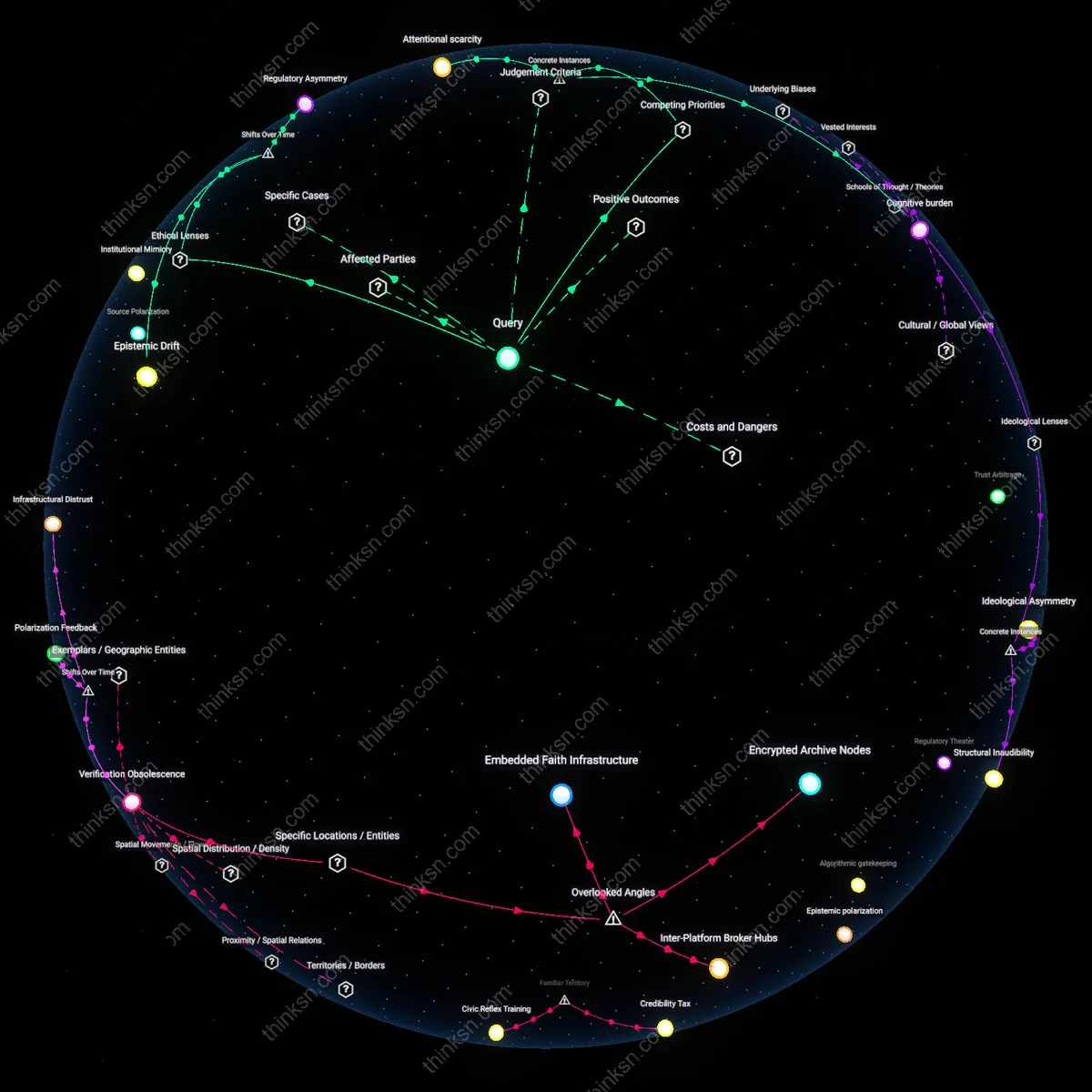

Decentralized Expertise Validation

Independent medical creators use YouTube to bypass traditional media gatekeepers and deliver real-time, science-aligned public health guidance during pandemics, particularly in underserved regions with weak institutional trust. This utility emerges from YouTube’s low-barrier publishing model, which enables credentialed professionals—like epidemiologists in Brazil or rural India—to build audiences directly, counteracting state-level misinformation through relatable, vernacular explanations. The platform’s value here lies not in moderation but in enabling parallel knowledge networks that function when official systems are slow or compromised.

Crisis Feedback Integration

YouTube-generated search and engagement data serve as early warning indicators for emerging public health concerns, allowing agencies like the WHO to detect and respond to misinformation trends faster than through clinical surveillance alone. By analyzing spike patterns in queries related to vaccine side effects or fake cures, institutions can identify geographic hotspots of confusion and tailor targeted campaigns. This transforms YouTube from a content distributor into an unintentional sensor network, where user behavior feeds back into health communication strategy at scale.
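One simple way to picture the spike analysis is a trailing-window z-score over daily query counts. The sketch below is illustrative only: the function name `find_spikes`, the 7-day window, the threshold of 3 standard deviations, and the sample counts are all assumptions, and nothing here reflects how the WHO or YouTube actually process such data.

```python
from statistics import mean, stdev


def find_spikes(daily_counts, window=7, threshold=3.0):
    """Return indices of days whose count exceeds the mean of the preceding
    `window` days by more than `threshold` sample standard deviations."""
    spikes = []
    for i in range(window, len(daily_counts)):
        baseline = daily_counts[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            # Perfectly flat baseline: treat any increase as a spike.
            if daily_counts[i] > mu:
                spikes.append(i)
        elif (daily_counts[i] - mu) / sigma > threshold:
            spikes.append(i)
    return spikes


# Hypothetical daily counts for one query in one region.
counts = [100, 104, 98, 101, 97, 103, 99, 102, 100, 340, 310, 105]
spike_days = find_spikes(counts)
```

For this sample, only day index 9 (the jump to 340) is flagged. The following day is not, because the spike itself inflates the trailing baseline; a production detector would likely need a more robust baseline, such as a median-based one, to track sustained surges.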

Parasitic Credibility

Early evidence suggests that YouTube enables fringe health claims to gain legitimacy by attaching themselves parasitically to trusted institutions through video metadata, thumbnails, and algorithmic adjacency rather than through explicit endorsement. Channels promoting misinformation frequently use titles, visuals, or keywords that mimic CDC reports, WHO briefings, or medical journal names (for example, 'NIH reveals hidden cure'). This leads YouTube’s search and recommendation systems to surface these videos alongside authentic sources, creating a false equivalence in user perception. Such semantic and visual mimicry bypasses content moderation that focuses on explicit falsehoods, allowing deceptive content to piggyback on the platform’s implicit trust in professional formats. The overlooked mechanism is not deception through content but deception through *epistemic mimicry*, in which the appearance of scientific authority becomes a manipulable signal that distorts users’ assessments of trustworthiness.

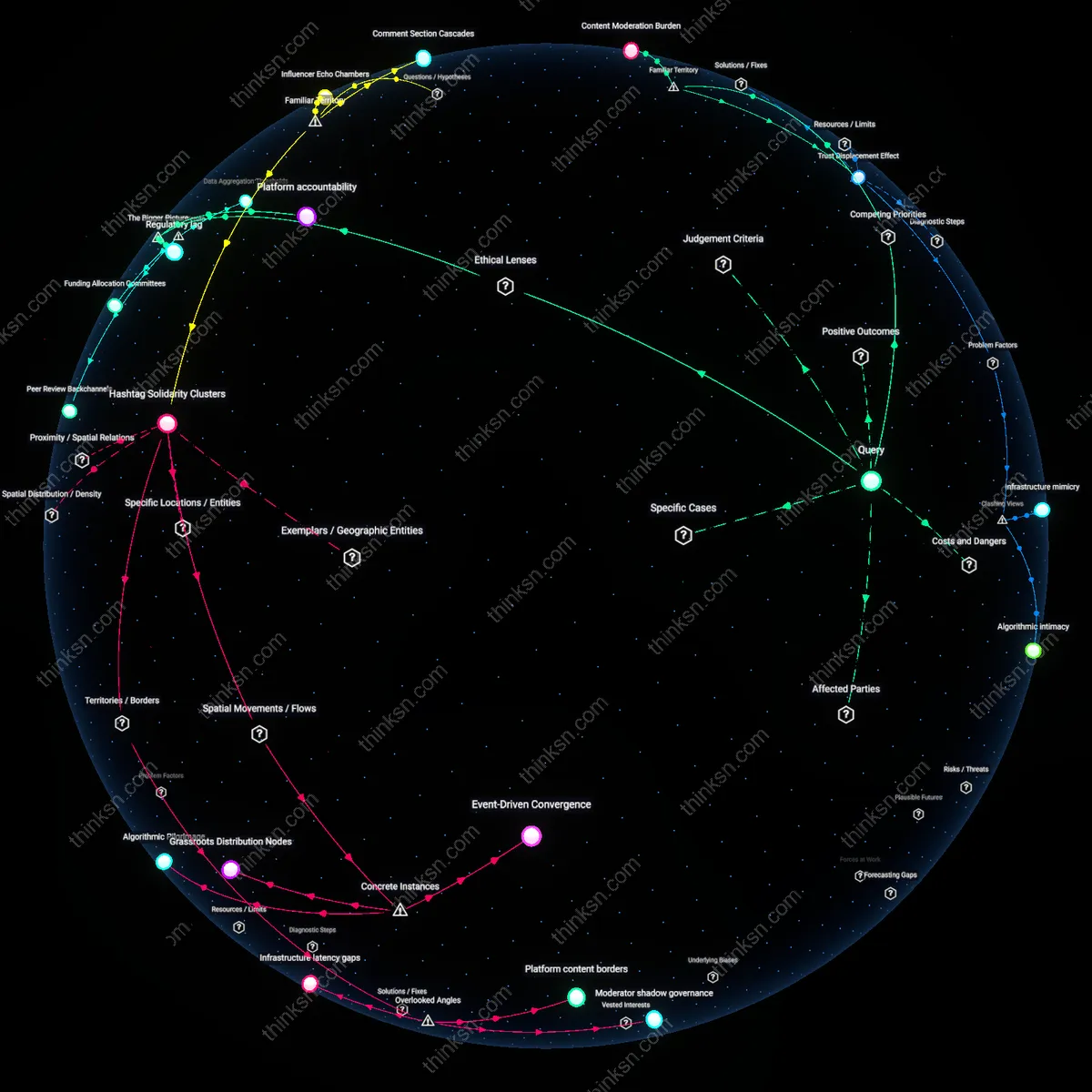

Attention Arbitrage

During pandemics, bad actors on YouTube exploit information scarcity and public anxiety to engage in attention arbitrage: they gain views by releasing low-effort, high-salience misinformation videos faster than credible producers can develop nuanced, evidence-based responses. Independent creators and foreign disinformation networks flood the platform with videos made using automated editing tools, recycled footage, and click-optimized scripts, outpacing public health agencies bound by review protocols, peer input, and regulatory caution. This asymmetry in production speed creates a first-mover advantage in which misleading narratives dominate search results and suggested feeds during peak search periods, shaping user understanding before trustworthy alternatives exist. The overlooked cost is the *temporal monopolization of attention*: speed, not truth, determines informational dominance in the short term, and the beliefs formed in that window can persist even after corrections emerge.

Amplification Penalty

YouTube’s recommendation algorithm prioritizes engagement over accuracy, thereby elevating health misinformation during pandemics even when official sources are available. This occurs because the platform’s monetized attention economy rewards watch time, causing borderline or sensational content—such as unverified cures or conspiracy theories about vaccines—to be systematically promoted over dry, factual public health messaging. The non-obvious reality is that users searching for authoritative guidance may still be funneled into misinformation via adjacent recommendations, not because the content is labeled trustworthy but because it retains attention—a built-in conflict between public safety and platform growth.

Credibility Substitution

Viewers routinely mistake production quality and channel size for medical authority, treating popular science-adjacent YouTubers as equivalent to epidemiologists during pandemic crises. This substitution persists because familiar cues like confident delivery, professional editing, and subscriber counts act as cognitive shortcuts in information overload, effectively blurring the line between expertise and entertainment. The underappreciated mechanism is that YouTube’s interface reinforces this bias by elevating channels with high engagement regardless of qualification, allowing charismatic non-experts to displace institutional voices in public perception without ever claiming formal credentials.

Moderation Debt

YouTube’s reliance on reactive content moderation creates a sustained lag in removing harmful health misinformation, enabling dangerous narratives to spread widely before takedowns occur. This delay is structurally inevitable because policy enforcement depends on user reports, third-party fact-checkers, and appeals processes—all slower than algorithmic dissemination—creating a window where false claims gain traction and embed in comment threads, playlists, and reuploads. The overlooked conflict is that even when YouTube ‘wins’ by eventually removing content, its trustworthiness erodes in real time because public memory retains the exposure, not the correction.