Who Gains as Platforms Disclose Removal Stats? Public Trust at Stake?

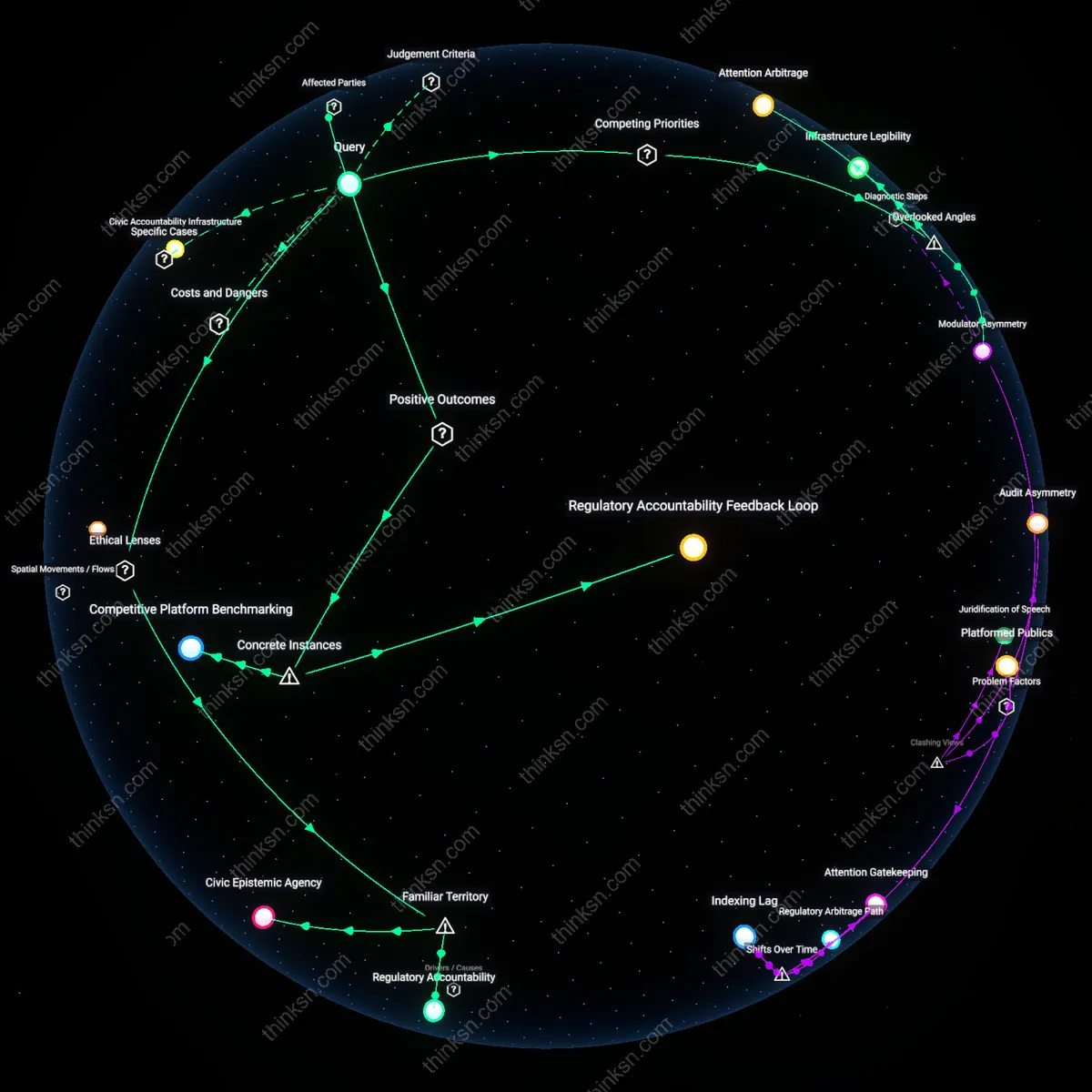

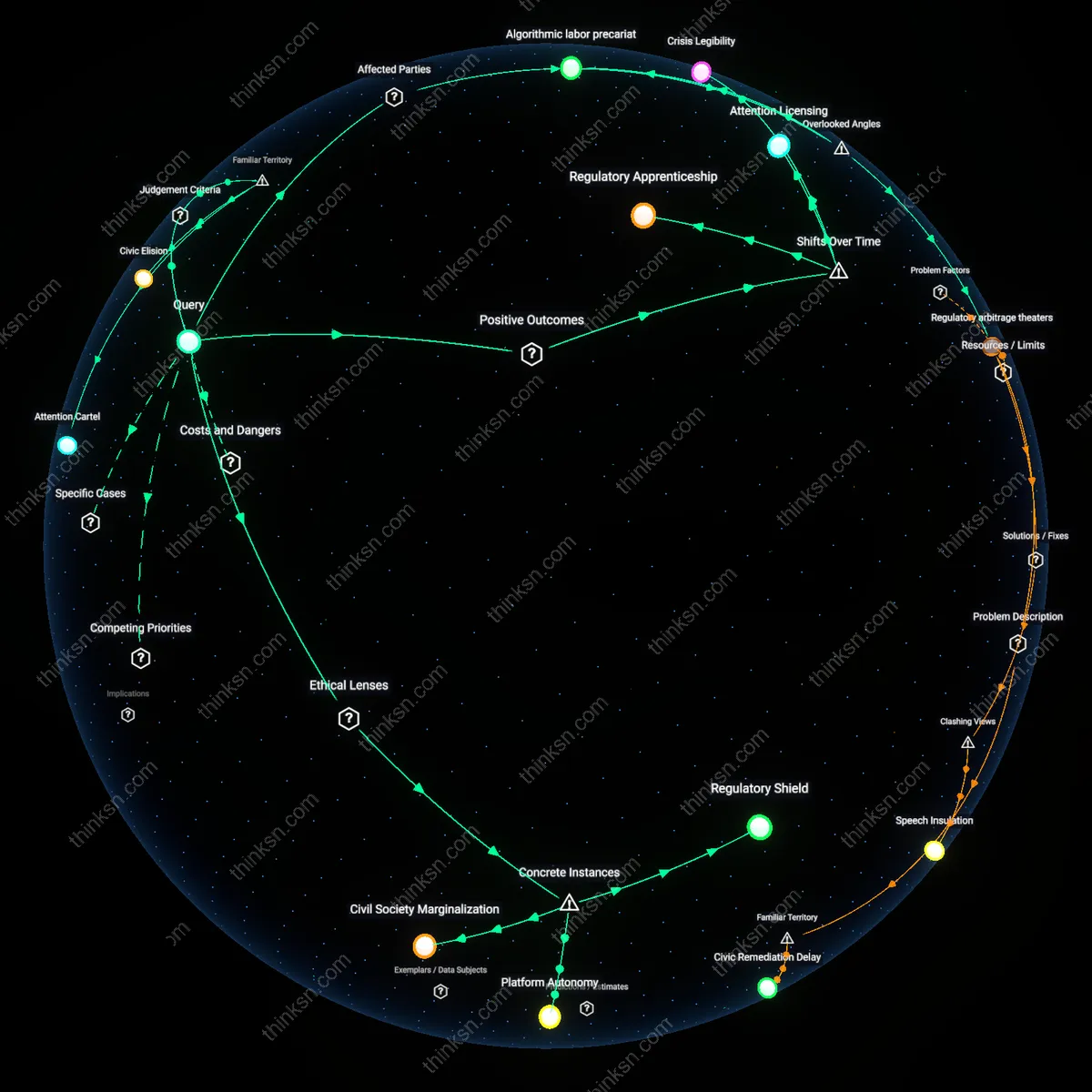

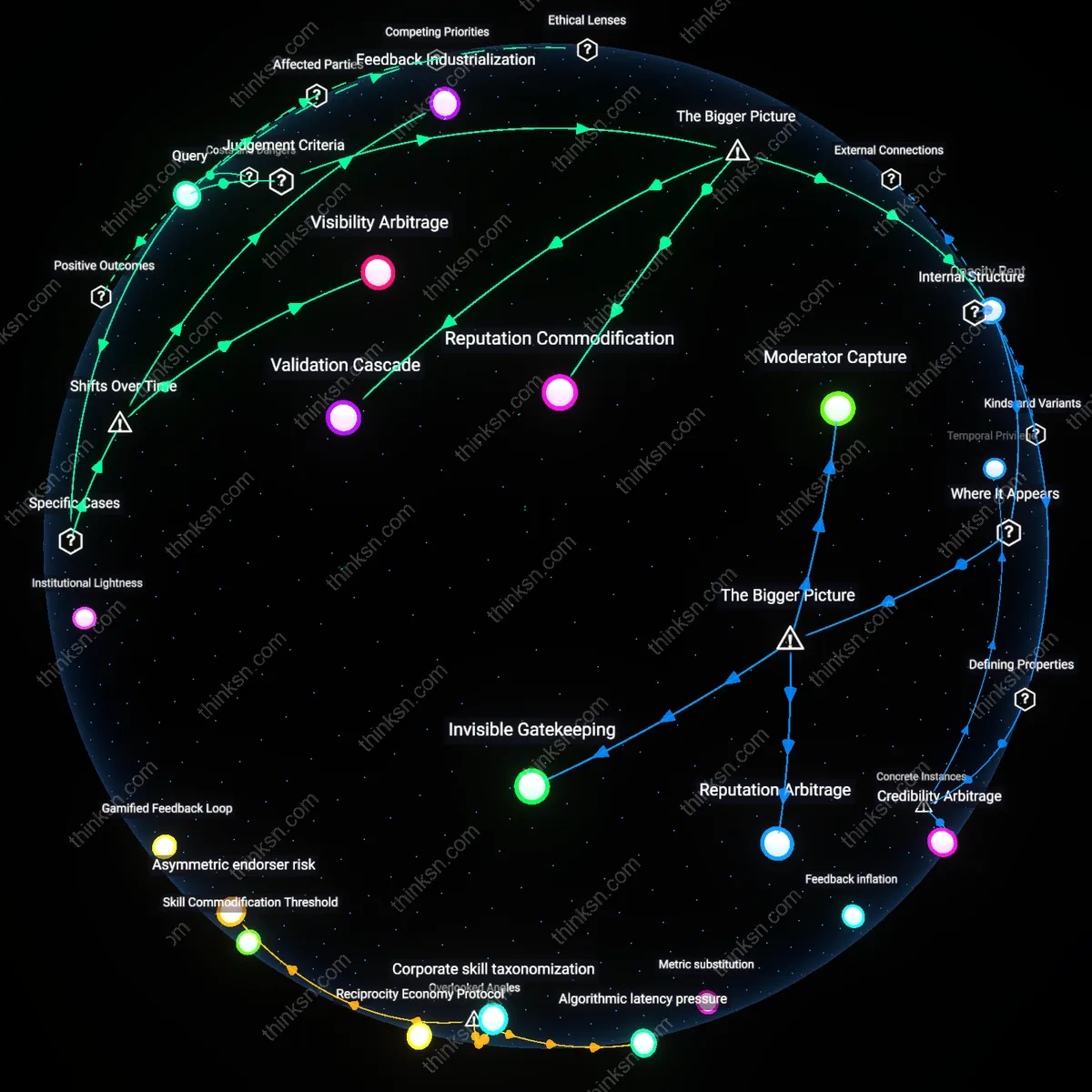

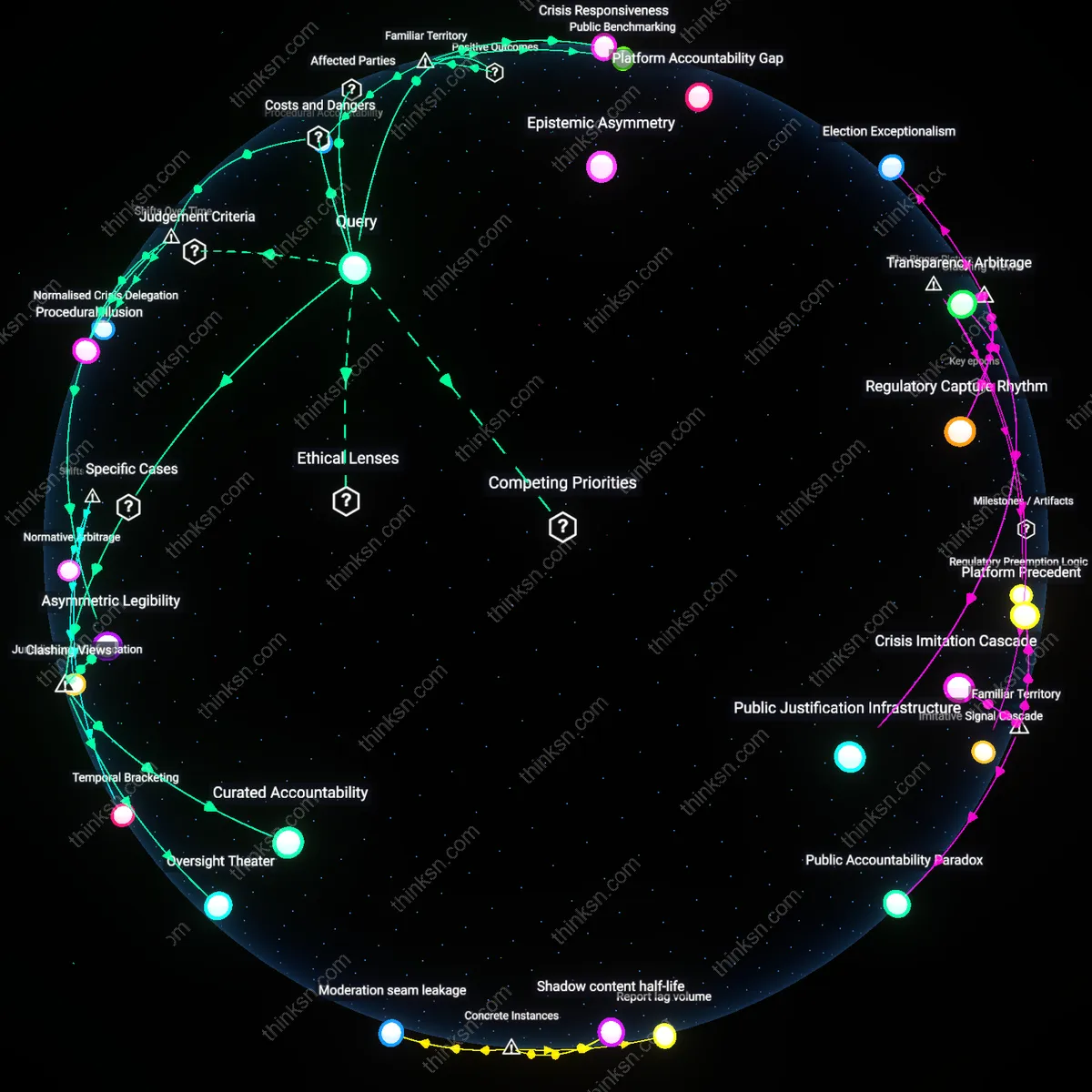

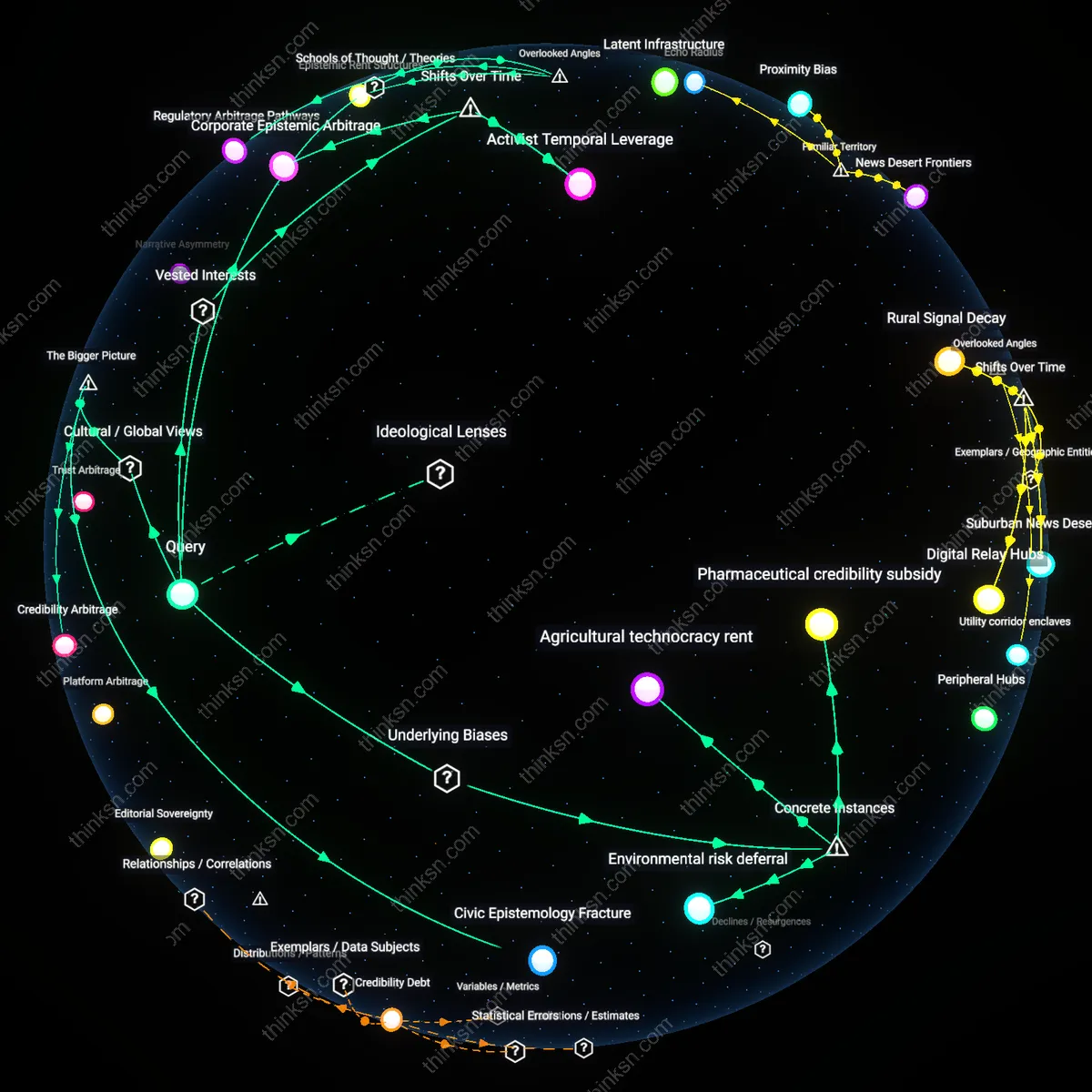

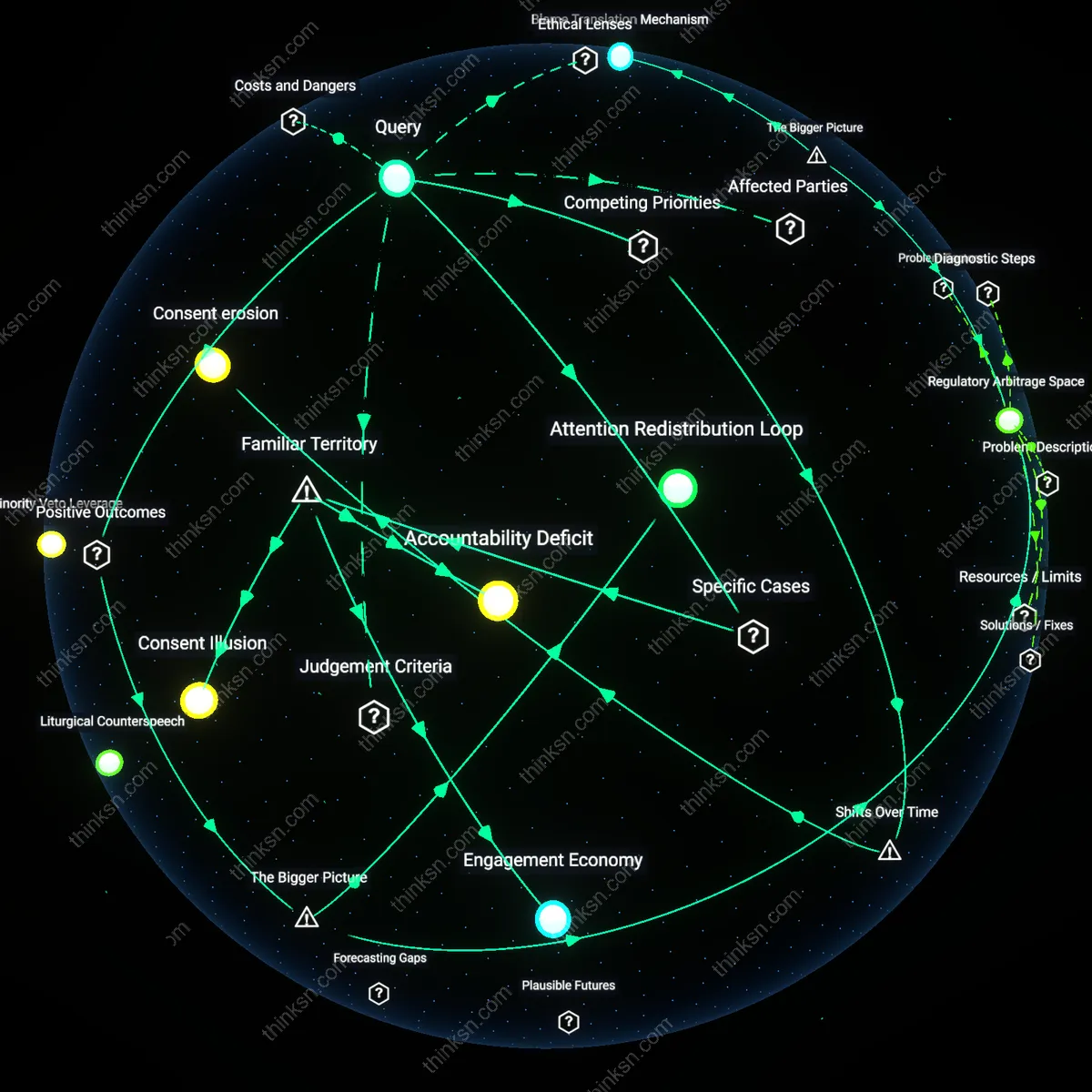

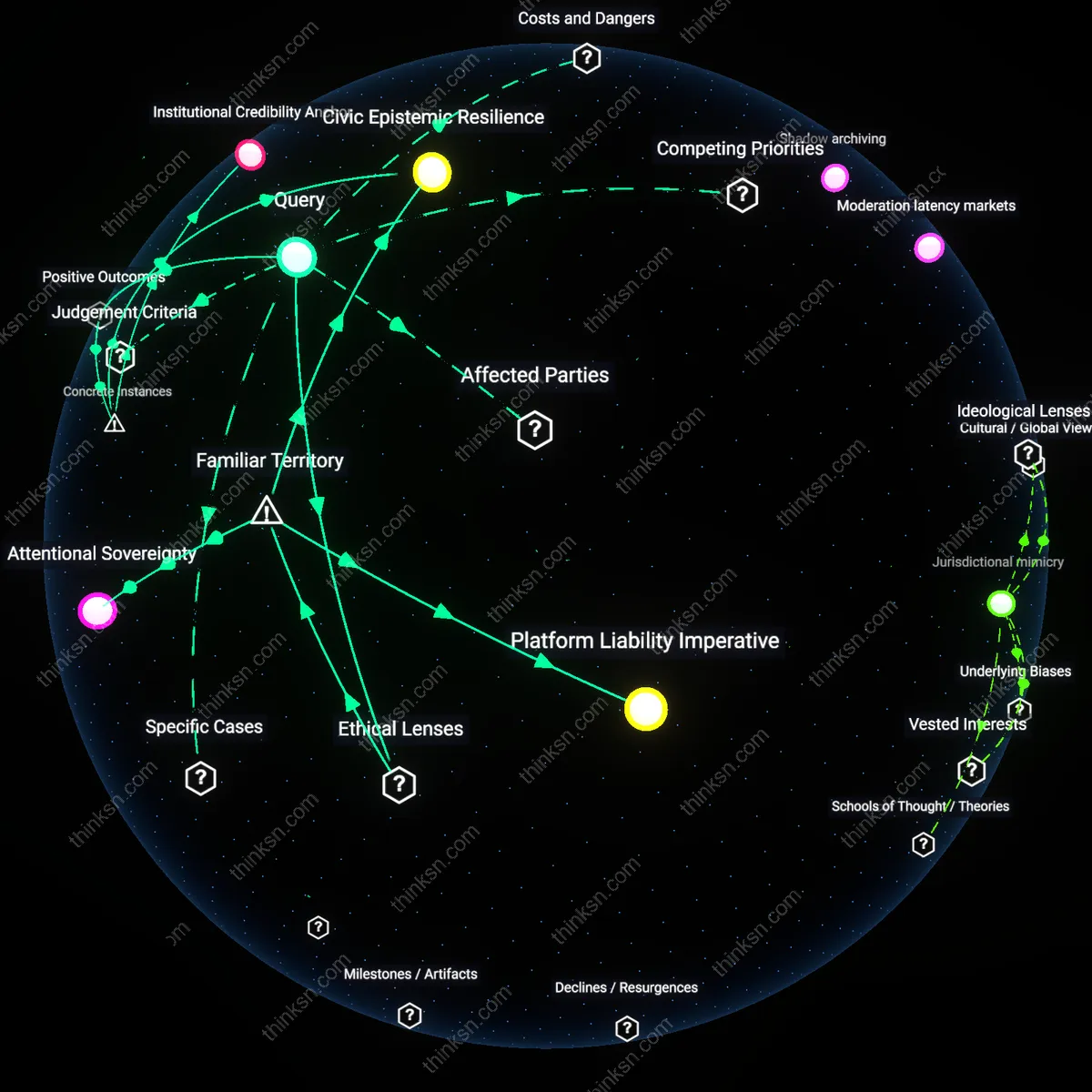

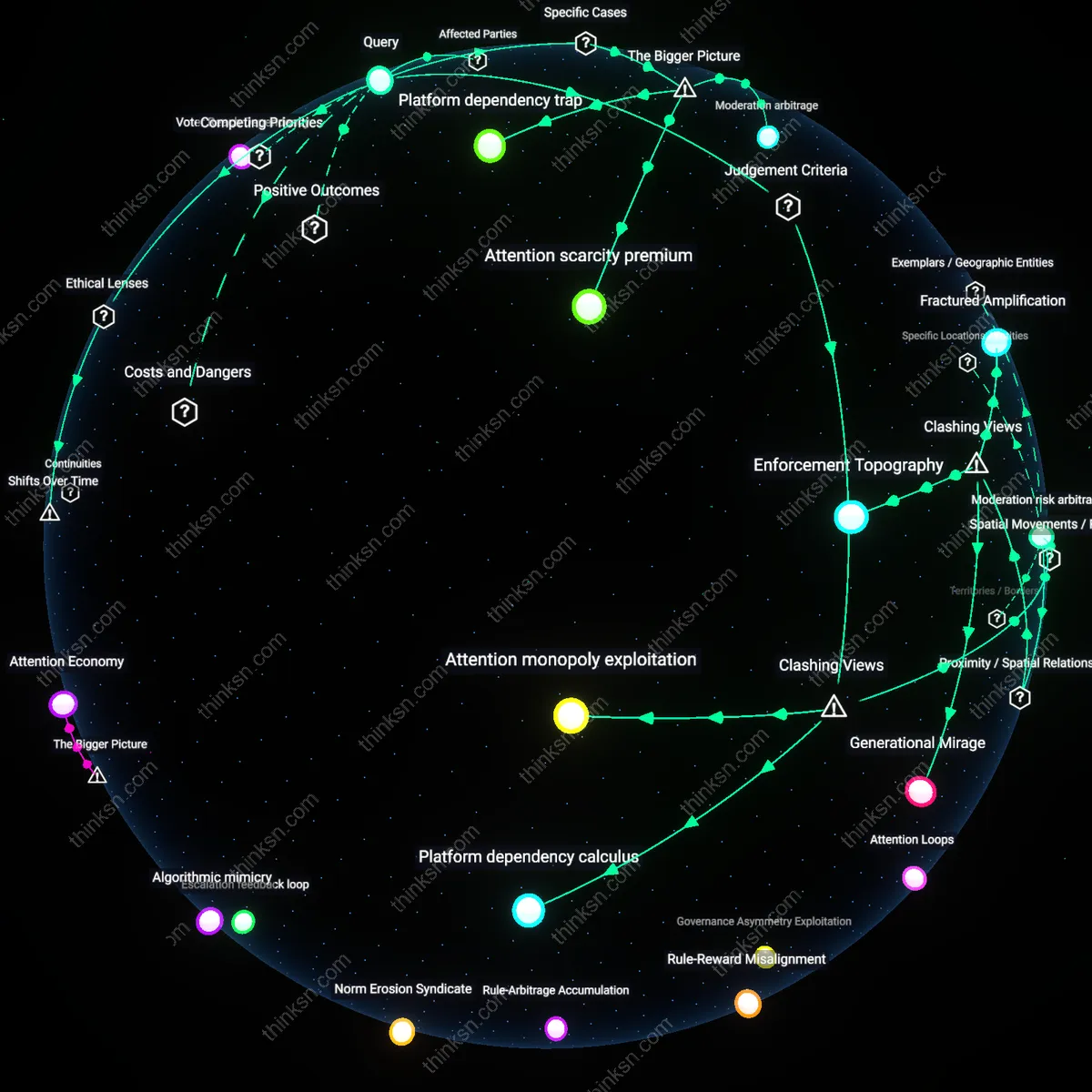

Analysis reveals 11 key thematic connections.

Key Findings

Regulatory Leverage

National governments gain influence over transnational platforms when mandated transparency reveals enforcement gaps, enabling targeted legislative pressure. By requiring reports on takedown volume, speed, and appeal outcomes, states such as Germany under NetzDG or India under the IT Rules can identify underperformance in addressing illegal content and use the disclosures as empirical justification for fines or for expanding jurisdictional reach. The significance is that transparency becomes a compliance escalator: data justifies deeper state intervention into private governance, turning corporate reporting into a mechanism of regulatory accumulation rather than public oversight.

Civic Accountability Infrastructure

Civil society coalitions, including digital rights NGOs like Access Now and journalist collectives, gain operational intelligence from content-moderation transparency reports, which they repurpose to map systemic bias and suppression risks. These groups treat disclosed data on removed post types, geographic targeting, and algorithmic amplification as forensic markers in authoritarian states or fragile democracies, such as when Venezuela’s government-aligned platforms disproportionately censor opposition hashtags. The non-obvious consequence is that transparency mandates function less as public goods than as raw inputs for adversarial accountability networks, turning disclosure into infrastructure that sustains counter-power operations in repressive information environments.

Legitimacy Arbitrage

Platform operators such as Meta or TikTok strategically use transparency reports to gain political cover and competitive distinction, reframing regulatory compliance as institutional integrity. By publishing granular data on government takedown requests or misinformation removal rates, companies position themselves as neutral stewards rather than partisan censors, particularly in contested markets like Brazil or Nigeria during elections. This matters because the disclosures are designed less to inform the public than to be consumed by regulators and investors, making transparency a reputational currency that platforms exchange across jurisdictions to maintain operational latitude. The underappreciated dynamic is that legibility to power, not public understanding, drives the form and timing of disclosures.

Regulatory Accountability Feedback Loop

The European Union’s enforcement of the Digital Services Act (DSA) in 2023 compelled Meta to publish detailed transparency reports on content moderation actions taken across Facebook and Instagram in EU member states. These reports enabled national regulators and civil society groups to identify systematic under-enforcement in Polish political disinformation cases, triggering formal compliance inquiries that recalibrated platform enforcement behavior. Mandated disclosures thus create a feedback loop in which regulatory actors use published data not just to audit but to iteratively correct platform governance. This function operates through structured data access and public scrutiny mechanisms, and it is often overlooked in debates framed solely around user rights.

Civic Monitoring Leverage

Following Twitter’s implementation of the transparency reporting requirements in India’s IT Rules 2021, the Internet Freedom Foundation used the platform’s monthly government request disclosures to document a 300% surge in takedown orders from Indian authorities during the 2021 farmers’ protests, which allowed the organization to challenge overbroad state censorship through targeted litigation. The case demonstrates that transparency mandates empower domestic civil society actors to convert platform disclosures into actionable evidence against state overreach. This dynamic hinges on the existence of technically capable NGOs able to parse and weaponize disclosed data, a role rarely acknowledged in top-down policy discussions.

Competitive Platform Benchmarking

After YouTube and TikTok were required to submit transparency disclosures under Brazil’s Marco Civil da Internet during the 2022 national elections, the Brazilian Institute of Consumer Defense compared the platforms’ removal volumes and appeal response times and published a public scorecard that influenced advertisers and app store placement decisions. The episode shows that mandated disclosures enable third parties to construct comparative performance metrics that introduce market discipline into content moderation. Transparency here shapes platform behavior indirectly, through reputational and economic competition rather than legal penalty, an outcome underappreciated in public discourse focused exclusively on compliance.

Modulator Asymmetry

Regulators gain covert influence over enforcement outcomes through uneven technical standards in transparency reporting, which allow them to shape content moderation without assuming liability for takedowns. Platform disclosure mandates often require granular reporting on removal volume, category, and origin, but the absence of uniform data schemas across firms lets regulators interpret compliance selectively, rewarding cooperative platforms with softer oversight while penalizing dissenters through audit scrutiny. This dynamic privileges state-aligned platforms that can absorb regulatory complexity and disadvantages smaller or adversarial platforms that lack bureaucratic capacity, a consequence rarely acknowledged in trust-building narratives. Most analyses assume transparency inherently constrains power, but the non-obvious reality is that asymmetrical modularity in reporting systems turns disclosure into a tool of regulatory capture.

Attention Arbitrage

Political activists with niche agendas gain disproportionate media amplification when platforms disclose content removals, because outlier takedown incidents can be exploited to manufacture crises that reshape public discourse on trust. When transparency reports reveal removal statistics, journalists and advocacy groups gravitate toward anomalous cases, such as the deletion of a single provocative post, while ignoring systemic patterns, which lets actors who can stage such incidents manipulate coverage. This distorts public perception of moderation fairness not through data but through strategic noise injected into transparent systems, a mechanism invisible to conventional trust metrics. The overlooked dynamic is that transparency does not distribute attention equally; it creates arbitrage opportunities for those who can game its representational gaps.

Infrastructure Legibility

Telecom providers in semi-authoritarian regimes gain leverage over domestic platform governance when global platforms adopt standardized transparency mandates, because the demand for localized compliance data increases dependence on state-controlled network infrastructure for content attribution. Platforms must geolocate and log removals by jurisdiction to meet transparency benchmarks, which requires deeper integration with national internet exchange points and surveillance-adjacent tools, systems often managed by incumbent telecoms with state ties. This inadvertently legitimizes and reinforces hybrid public-private surveillance architectures under the guise of accountability, a dependency rarely visible in trust discourses centered on user-platform relations. The non-obvious shift is that transparency requirements can elevate backend infrastructure providers into de facto content governance brokers.

Regulatory Accountability

Governments gain from platform content-removal transparency mandates because these disclosures institutionalize oversight mechanisms that align corporate behavior with state-defined legal standards, particularly under rule-of-law doctrines emphasizing procedural fairness. Such mandates embed platforms within administrative accountability frameworks, like those in the EU’s Digital Services Act, where public trust is sustained not through moral virtue but through verifiable compliance that makes enforcement visible and contestable. The non-obvious insight is that transparency here does not primarily inform citizens but calibrates state-market power relations, transforming opaque corporate moderation into auditable governance processes.

Civic Epistemic Agency

Civil society actors gain from content-removal transparency mandates because standardized disclosures enable watchdog groups, journalists, and academics to detect patterns of censorship that would otherwise remain buried in proprietary algorithms. Viewed through the ethical framework of discursive democracy, this access strengthens public reasoning by grounding debates over political speech in empirical data, as seen when Facebook’s Transparency Center reports are cited in human rights litigation. The underappreciated reality is that these disclosures do more than reveal content takedowns: they equip communities with the evidentiary means to challenge power, transforming transparency from passive visibility into active democratic intervention.