Autonomous Weapons Safety vs Bias in Ethical Guidelines?

Analysis reveals 7 key thematic connections.

Key Findings

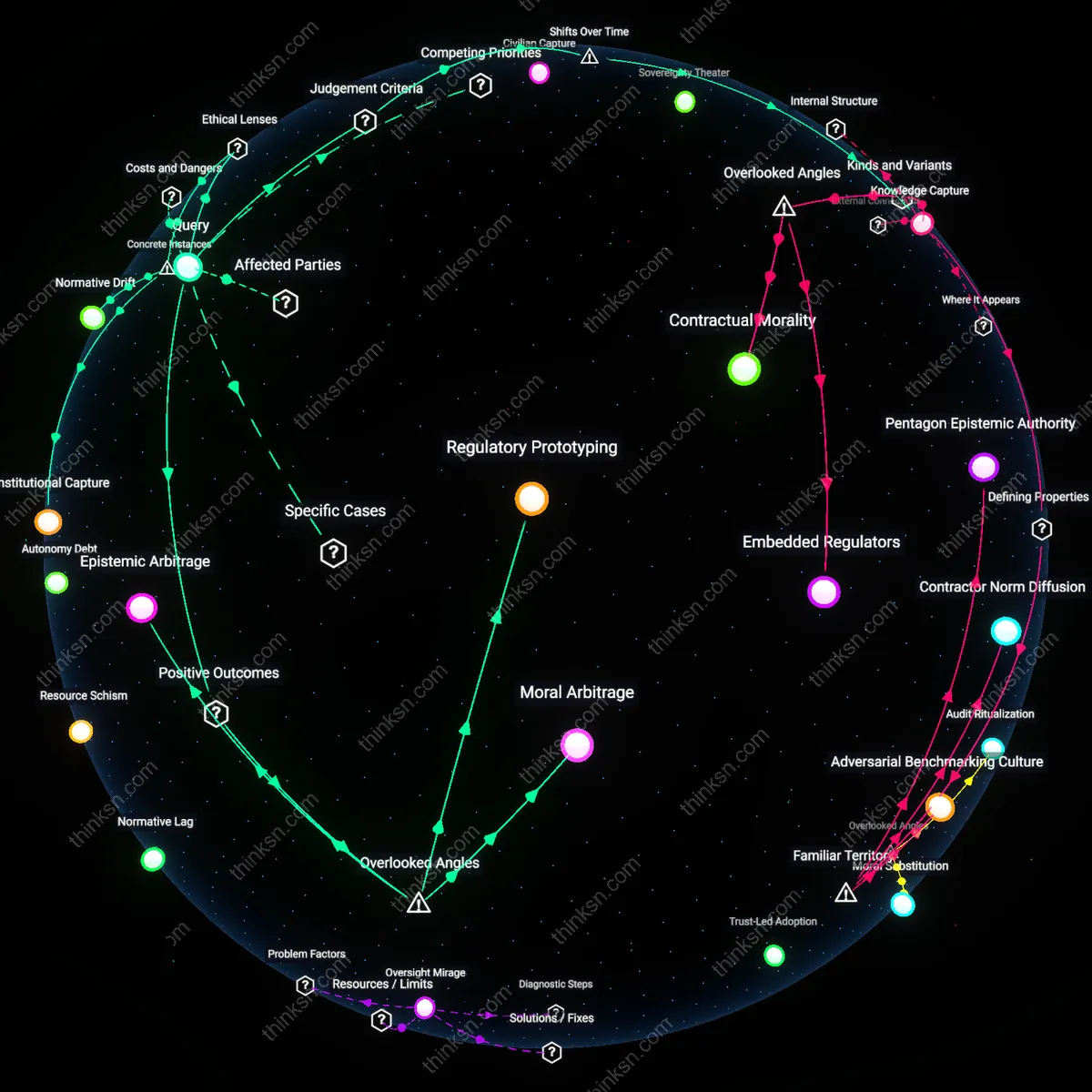

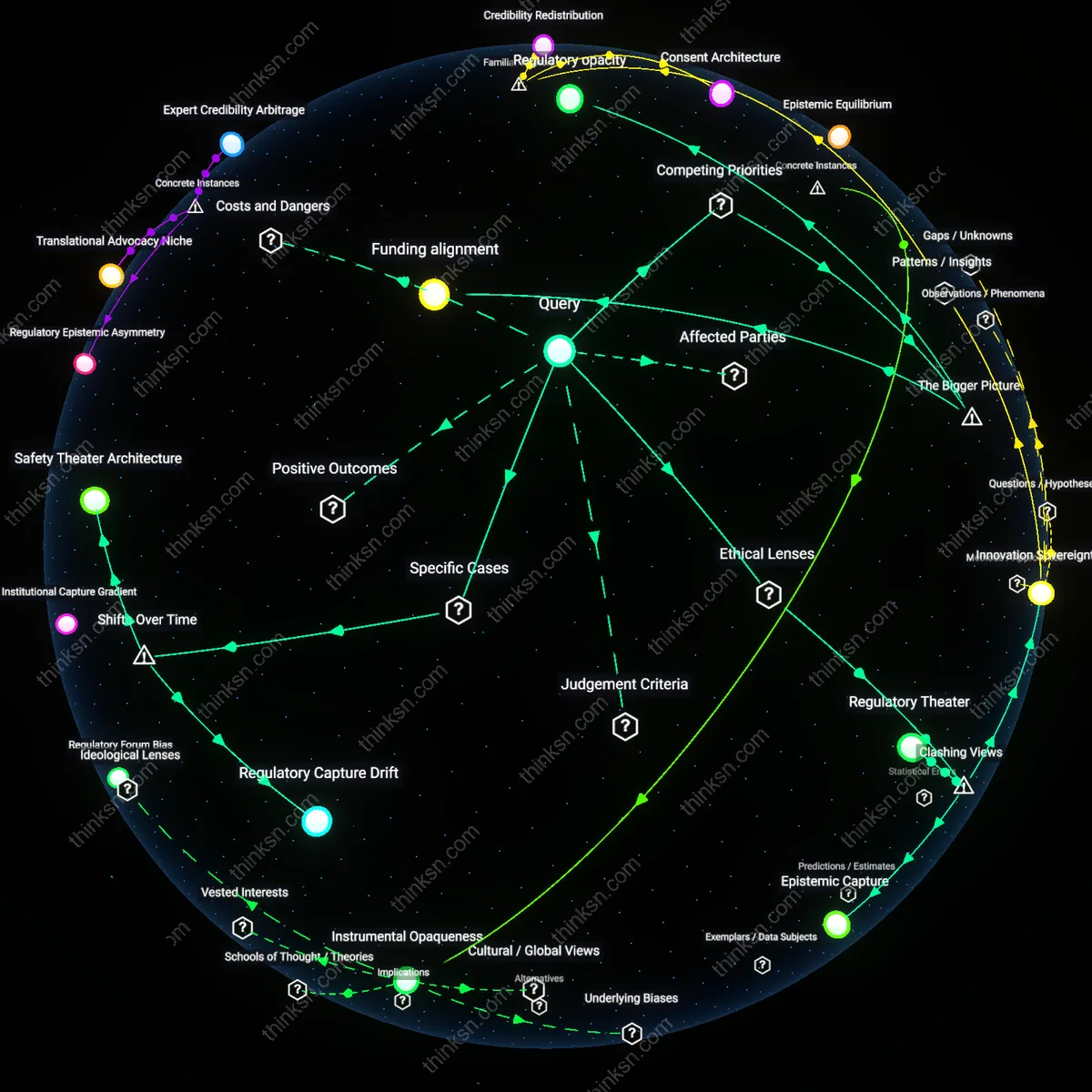

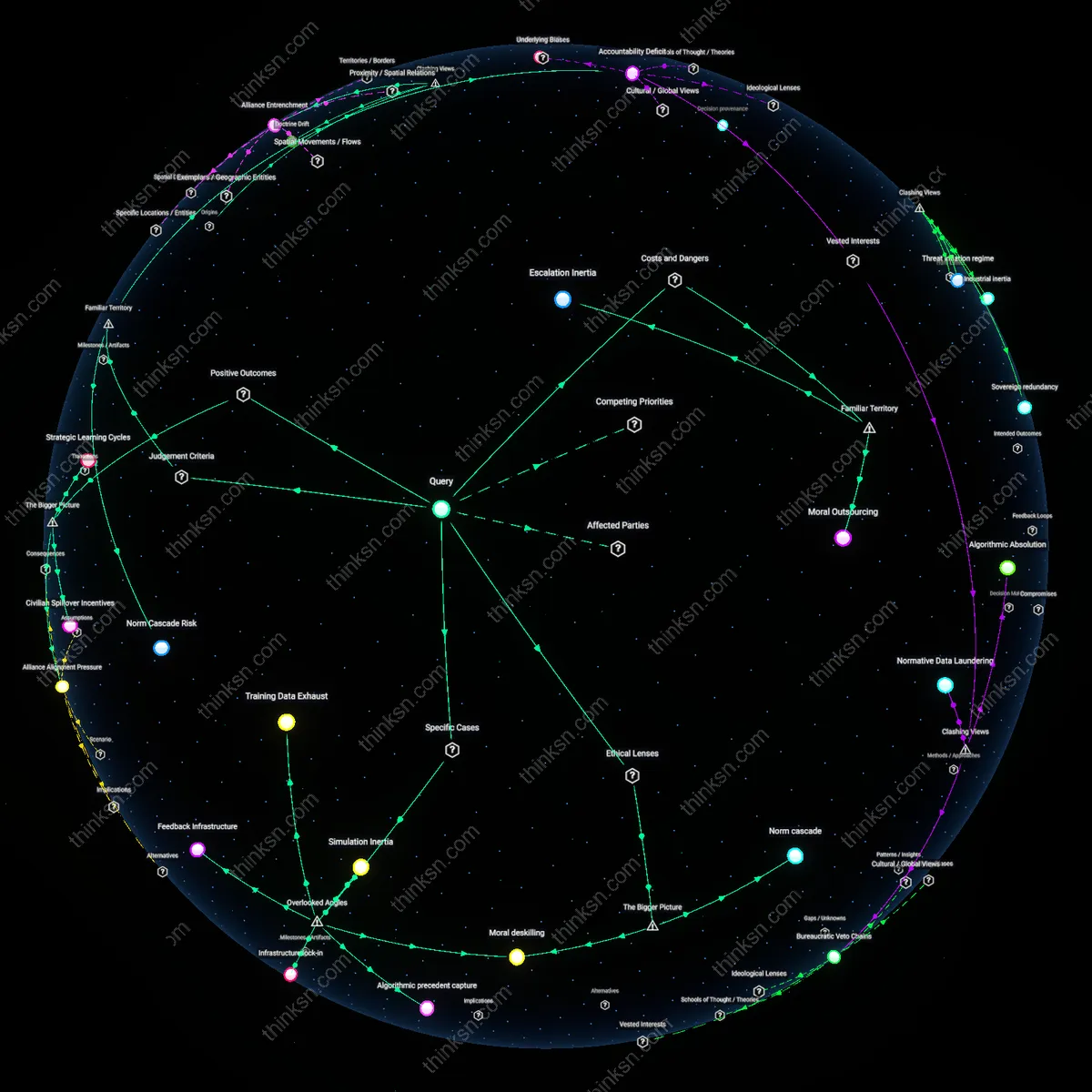

Ethical Arbitrage

We should prioritize independent oversight of autonomous weapons research because defense-industry funding inherently distorts ethical guidelines toward operational expediency, a shift that became structurally entrenched during the post-9/11 expansion of private military contractors in U.S. defense ecosystems. This period institutionalized profit-driven timelines and classification regimes that preemptively narrow ethical scrutiny, making it functionally impossible for internal review boards to challenge foundational assumptions about acceptable risk or targetability. When ethical review is embedded within procurement hierarchies, concerns like civilian harm or long-term strategic stability tend to be systematically downgraded in favor of technical feasibility and deployment speed. The non-obvious consequence of this trajectory is not merely compromised ethics, but the creation of a parallel normative infrastructure where 'safety' is redefined as system reliability rather than moral alignment.

Knowledge Capture

We should treat defense-funded autonomous weapons research as structurally incompatible with neutral ethical standards because the privatization of military R&D after the 1990s reshaped epistemic authority, transferring legitimacy from public scientific institutions to contract-dependent technical elites embedded in firms like Raytheon or Palantir. This transition replaced peer-reviewed consensus with proprietary knowledge regimes, where ethical questions are filtered through intellectual property constraints and performance-based delivery metrics, rendering transparency a breach of commercial terms rather than a civic good. As a result, the very definition of a 'safe' system becomes inseparable from its contractual fulfillment, not its alignment with human rights or international law. The overlooked consequence is not corruption in the traditional sense, but the routine conversion of ethical inquiry into a form of technical validation—where asking 'should we?' is replaced by 'does it work?' under conditions that erase dissent.

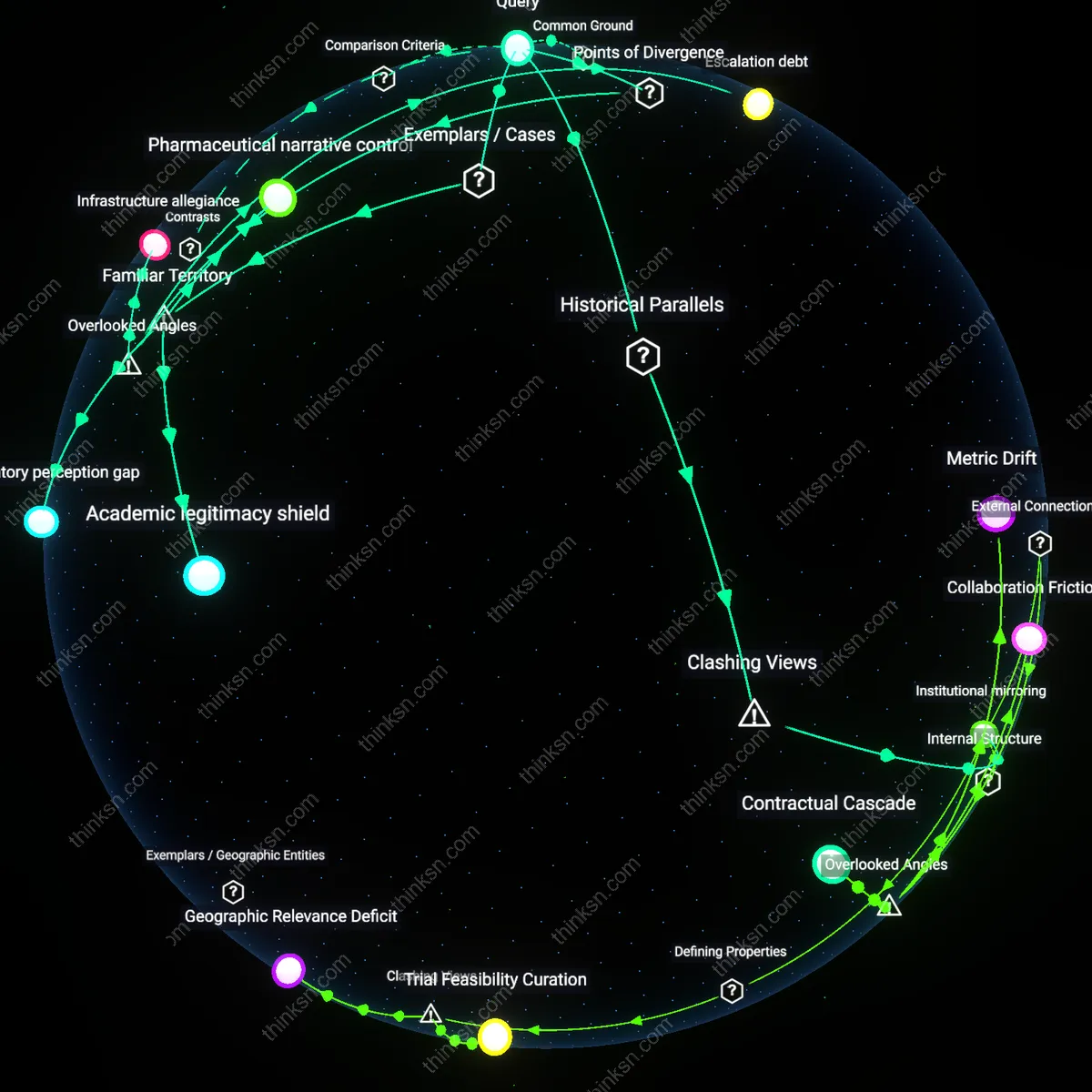

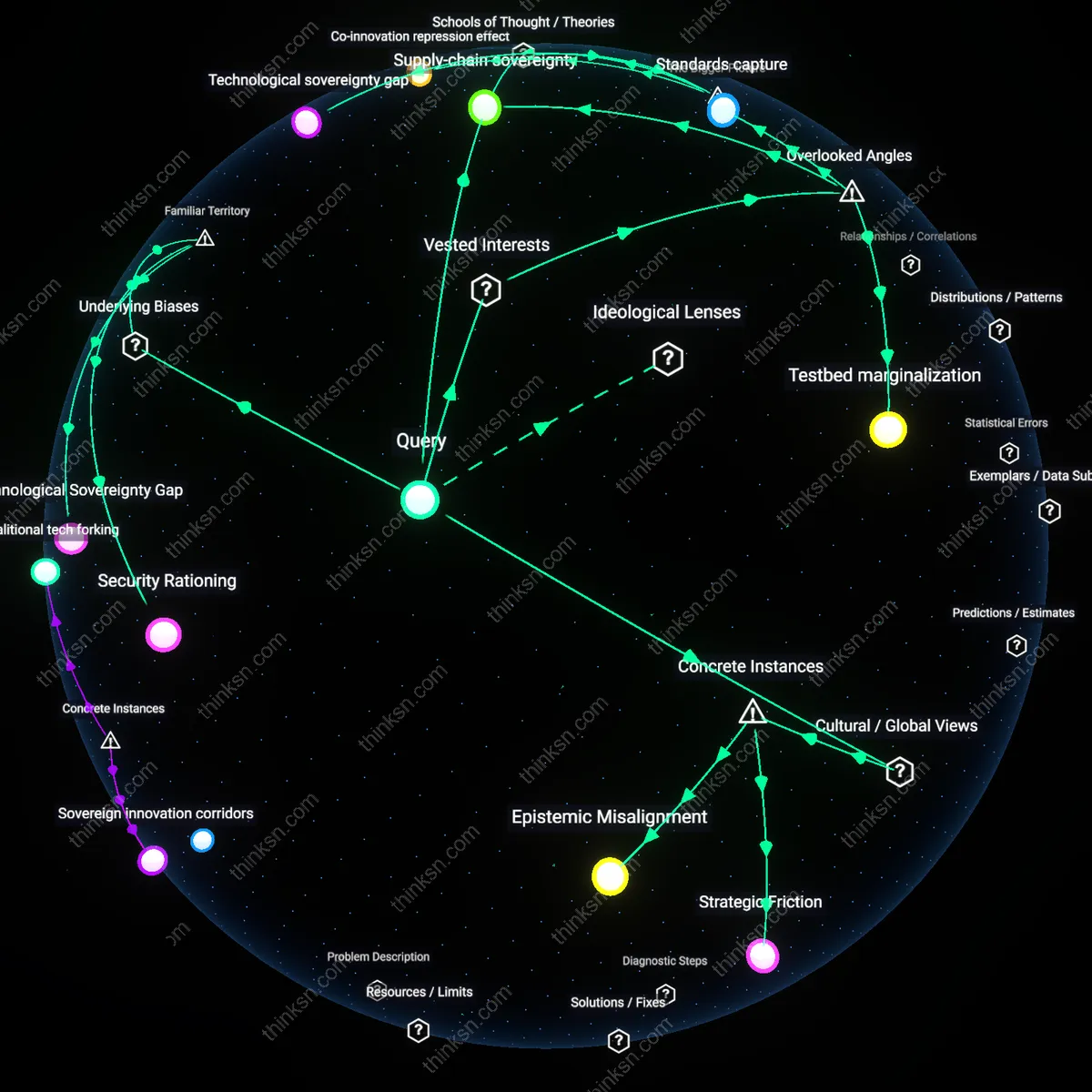

Epistemic Arbitrage

Funding autonomous weapons research through defense contracts enables the cross-pollination of civilian AI safety frameworks into highly classified military systems where ethical oversight is otherwise inaccessible. Because defense-funded labs must often adhere to civilian institutional review standards to maintain academic partnerships—such as DARPA collaborations with MIT or Stanford—civilian normative assumptions about risk, accountability, and system transparency seep into weapons development indirectly, creating a backdoor for ethical constraints that would otherwise be excluded from closed military R&D. This dynamic is rarely recognized because public discourse treats defense and academic AI communities as ideologically opposed, when in practice their joint projects create covert channels for norm diffusion. The residual benefit is not the technology itself, but the stealth integration of external ethical reasoning into high-risk domains that resist direct oversight.

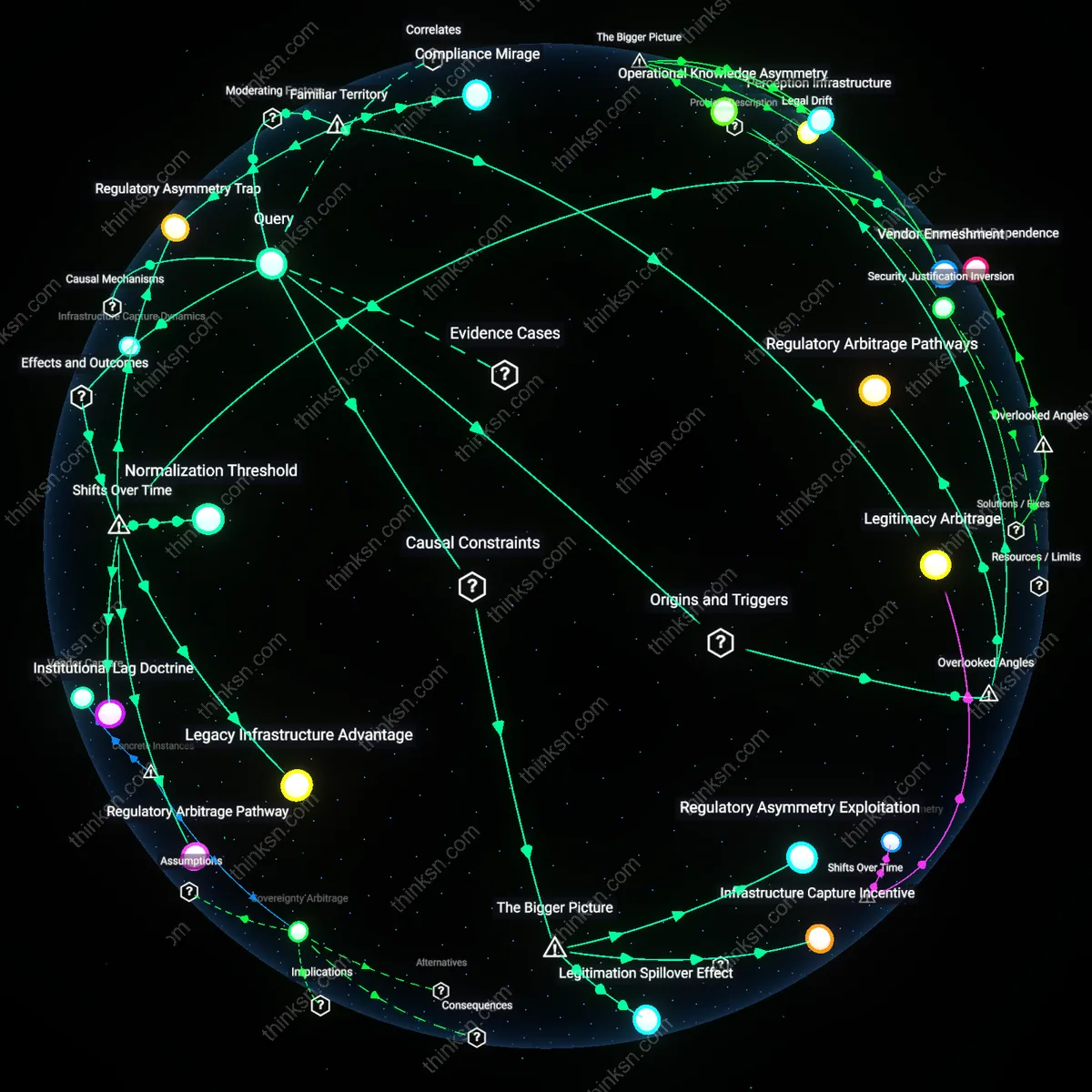

Regulatory Prototyping

Defense-industry-funded autonomous weapons research inadvertently serves as a high-fidelity testbed for civilian regulatory mechanisms by forcing rapid iteration on real-world accountability architectures under extreme operational conditions. When programs like the U.S. Navy’s Sea Hunter project develop long-endurance unmanned vessels, they compel the creation of audit trails, fail-safe protocols, and command-responsibility mappings that can later inform FAA or IMO rules for commercial drones and autonomous shipping, well before such rules would have emerged organically. Most analyses overlook that military edge cases accelerate the maturation of governance tools, effectively de-risking broader autonomous systems adoption across sectors. The non-obvious insight is that high-threat defense applications act as regulatory incubators, not just technological ones.

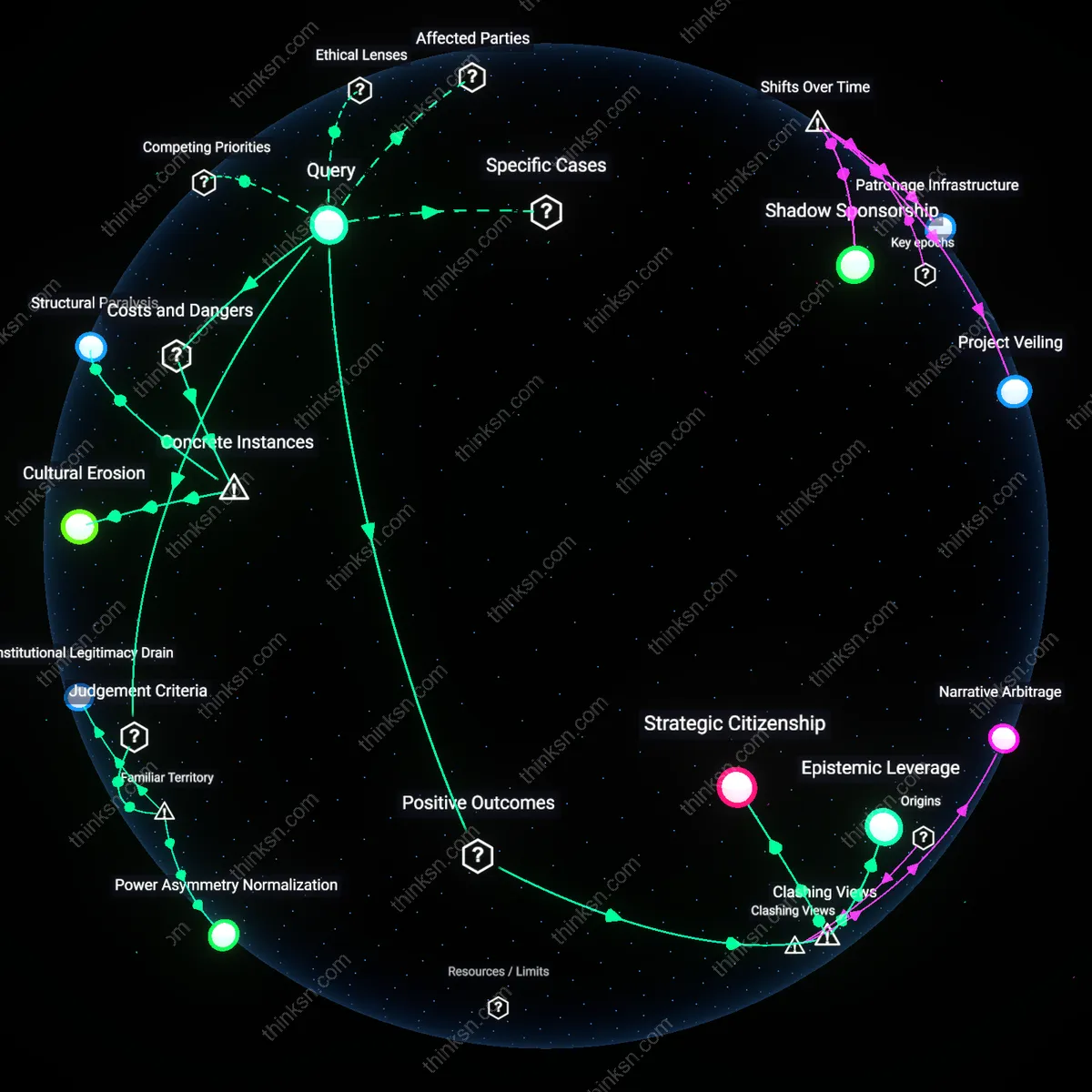

Moral Arbitrage

The ethical concerns raised by defense-funded autonomous weapons research generate public and academic backlash that strategically diverts talent and capital toward non-lethal, dual-use AI applications with comparable technical foundations—such as search-and-rescue robotics or disaster response systems—under the guise of ‘ethical redlining.’ Major research hubs like Carnegie Mellon’s Robotics Institute or ETH Zurich’s AI Lab increasingly attach ethical riders to defense grants that require parallel investment in humanitarian spin-offs, effectively leveraging moral scrutiny to redirect military funding into socially beneficial domains that lack commercial incentives. This creates a hidden feedback loop where ethical resistance becomes a funding conduit for public-good technologies. The overlooked mechanism is not corruption or capture, but a system of moral arbitrage, where ethical pressure is converted into developmental advantage elsewhere.

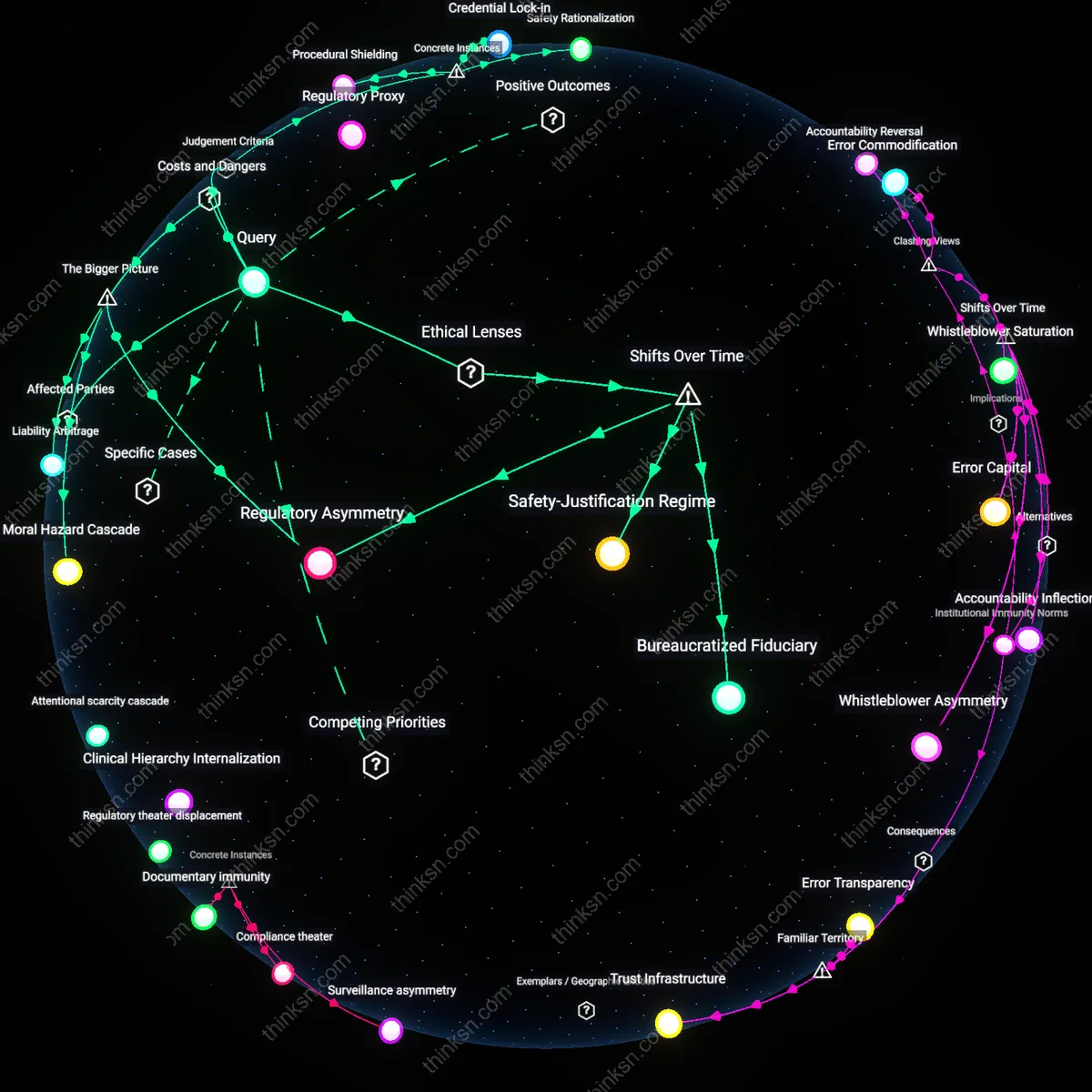

Institutional Capture

Project Maven, the U.S. Department of Defense program that contracted commercial technology firms to apply machine learning to drone imagery analysis, shows how defense funding can prioritize operational expediency over public accountability and how financial dependence undermines impartial ethical oversight. The integration of corporate engineers into Pentagon-led AI ethics boards created decision pathways where dissenting moral concerns were marginalized through procedural deference to security expertise, illustrating that even formally independent ethics frameworks can be co-opted when shaped within militarized institutional ecosystems. This case exposes the non-obvious reality that structural inclusion, rather than overt censorship, enables the neutralization of critical ethical challenges.

Normative Drift

Israel’s development and battlefield deployment of the Harpy drone, a fire-and-forget loitering munition that homes in on radar emitters, has incrementally redefined acceptable thresholds for autonomous lethality within NATO-aligned militaries, despite repeated concerns from international humanitarian law experts over compliance with distinction and proportionality principles. Because the system was introduced as a 'defensive' anti-radar weapon, initial ethical scrutiny was minimal, allowing operational normalization to precede formal regulation. The battlefield efficacy of such systems tends to generate tacit acceptance, shifting ethical baselines not through deliberate policy change but through the accumulated inertia of unchallenged use.