Are Pharma-Funded Studies as Reliable as Independent Research?

Analysis reveals 7 key thematic connections.

Key Findings

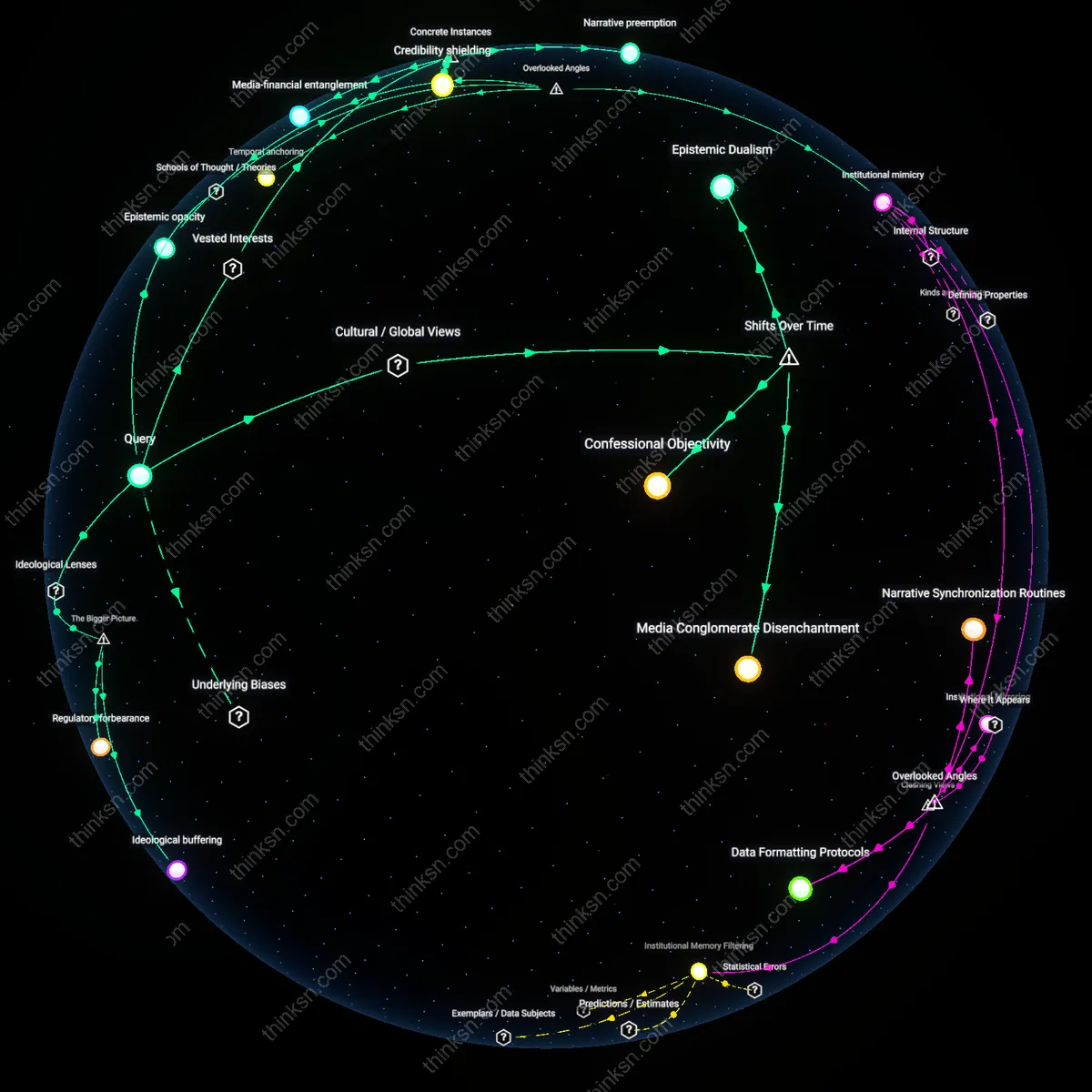

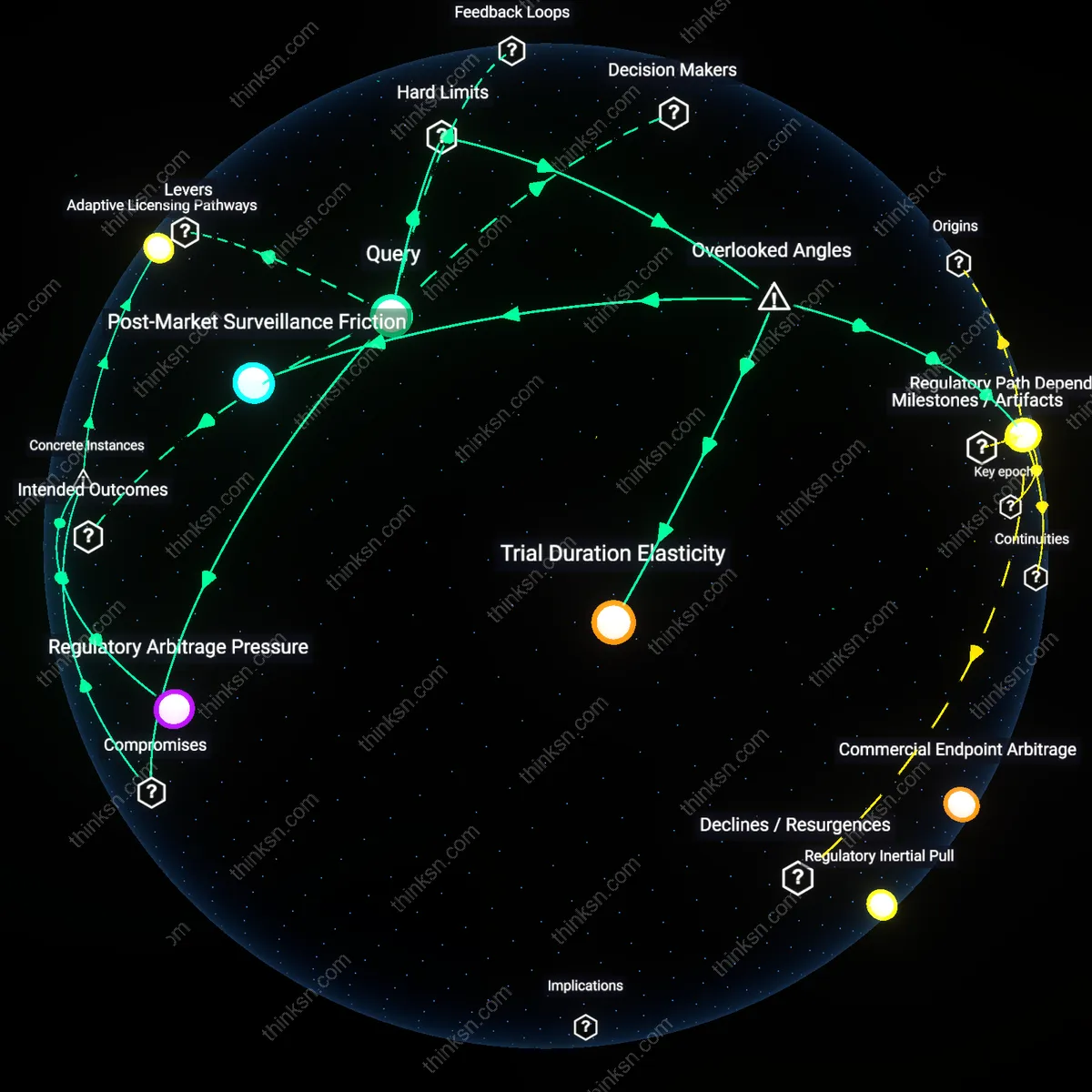

Institutional mirroring

Manufacturer-funded observational studies report favorable outcomes more consistently than independent academic studies because sponsors embed design norms in contract research organizations (CROs), which replicate regulatory trial logic in real-world settings. Pharmaceutical firms channel post-market surveillance through specialized CROs such as ICON or PRA Health Sciences, which apply good observational practice frameworks aligned with FDA guidance. The resulting procedural fidelity masks selection bias in site recruitment and endpoint definition. This standardized compliance, mistaken for methodological rigor, systematically suppresses the heterogeneity in patient responses that independent studies are more likely to capture, such as those coordinated through the European Network of Observatories in Cardiovascular Disease. Those independent studies are nonetheless rated as less 'reliable' under current evaluation templates. The non-obvious reality is that reliability here measures institutional alignment, not convergence on the truth.

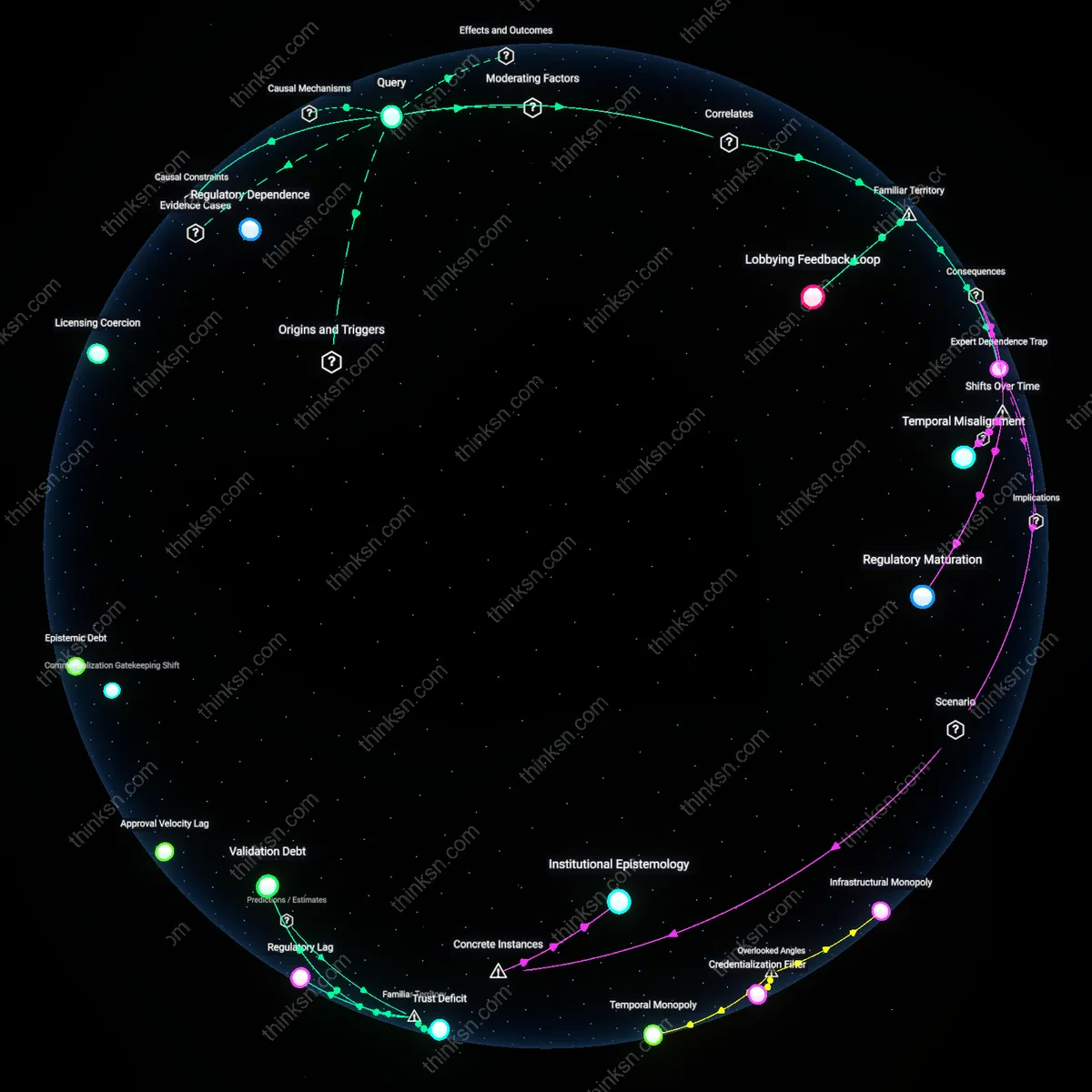

Temporal capture

Manufacturer-funded studies are less reliable because observation begins earlier, capturing transient clinical patterns that are not representative of long-term use.

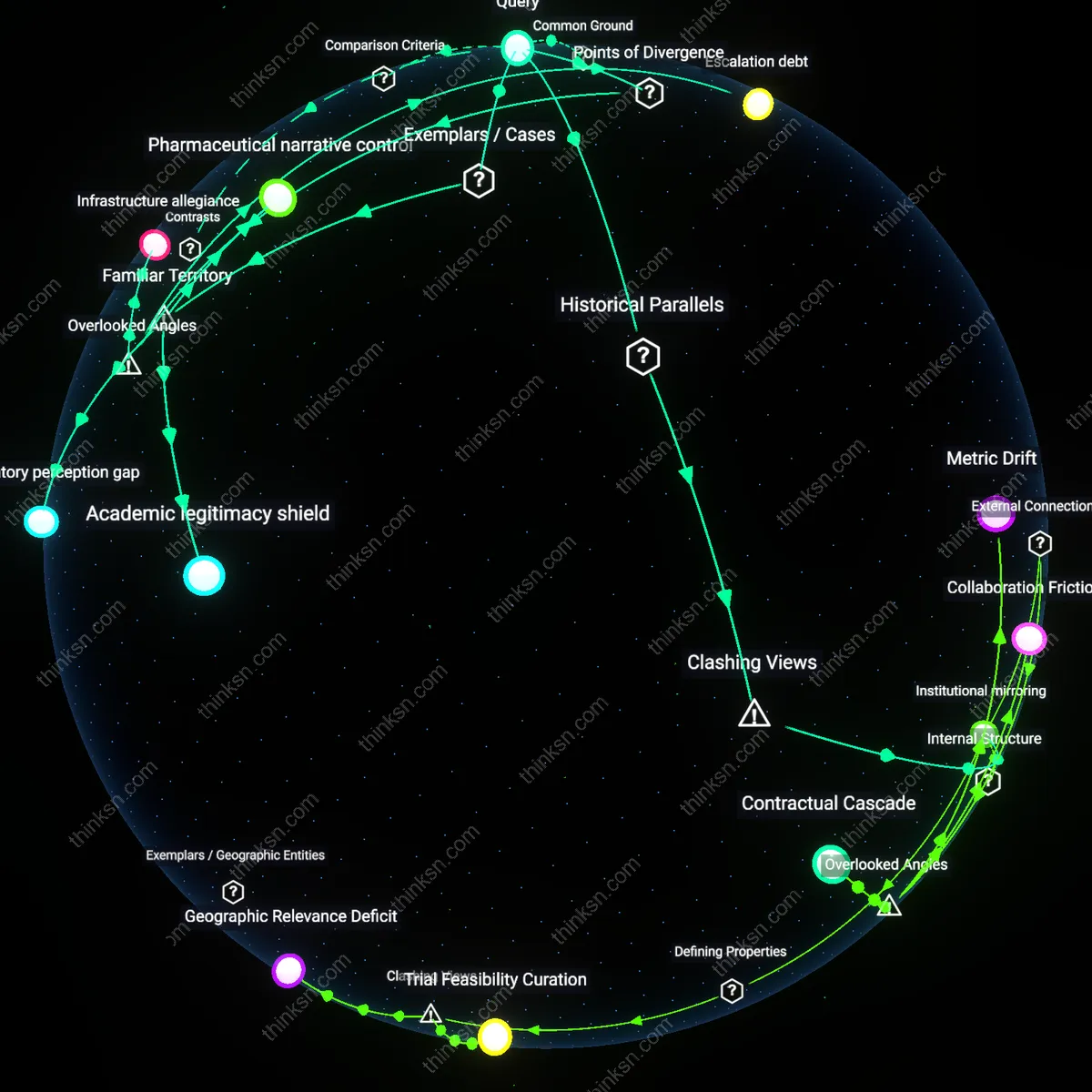

Infrastructure allegiance

Independent academic studies are less reliable because they inherit and reproduce manufacturer-shaped data classification systems embedded in shared observational infrastructure.

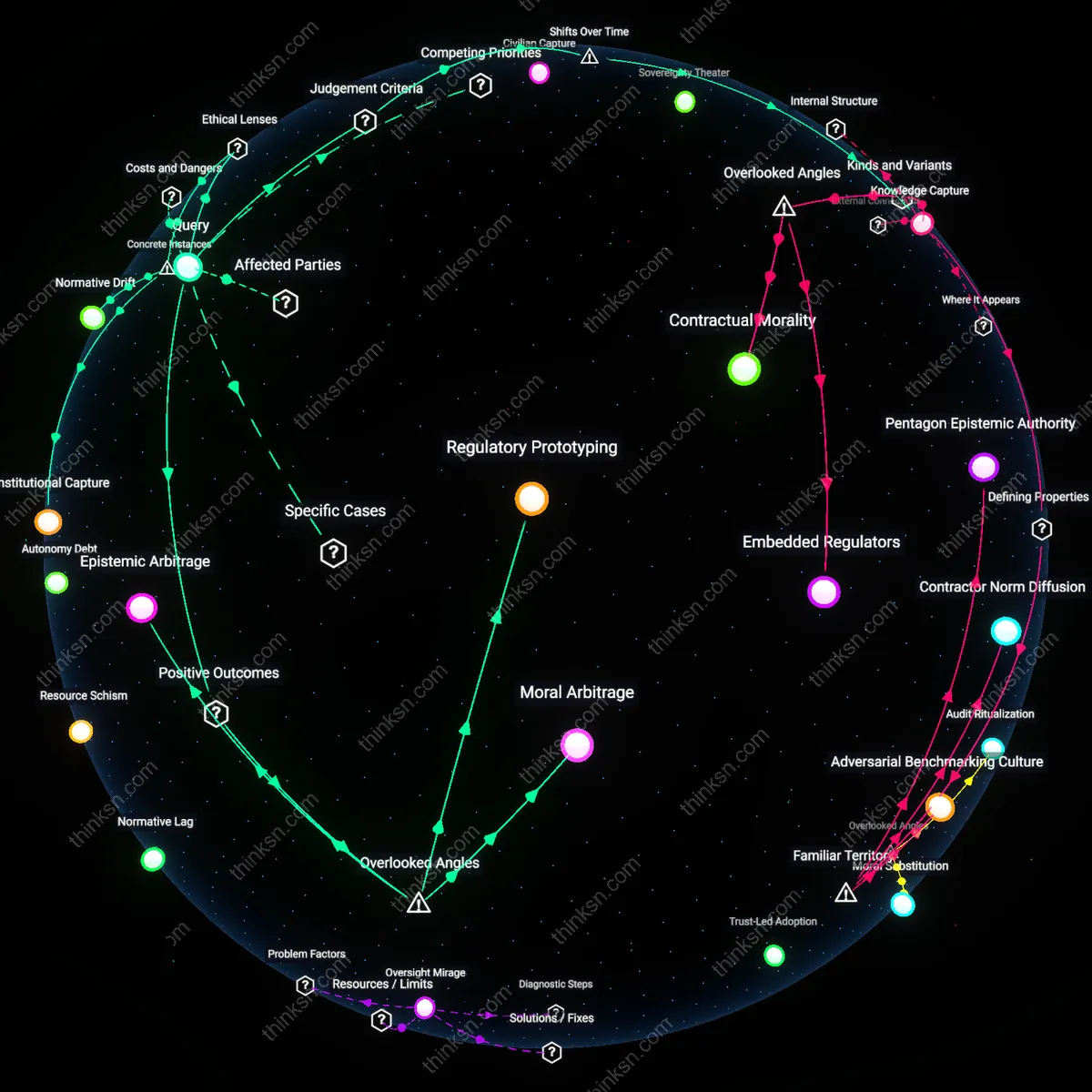

Escalation debt

Independent studies lose reliability through cumulative methodological entanglement with the manufacturer-funded research trajectories they aim to critique.

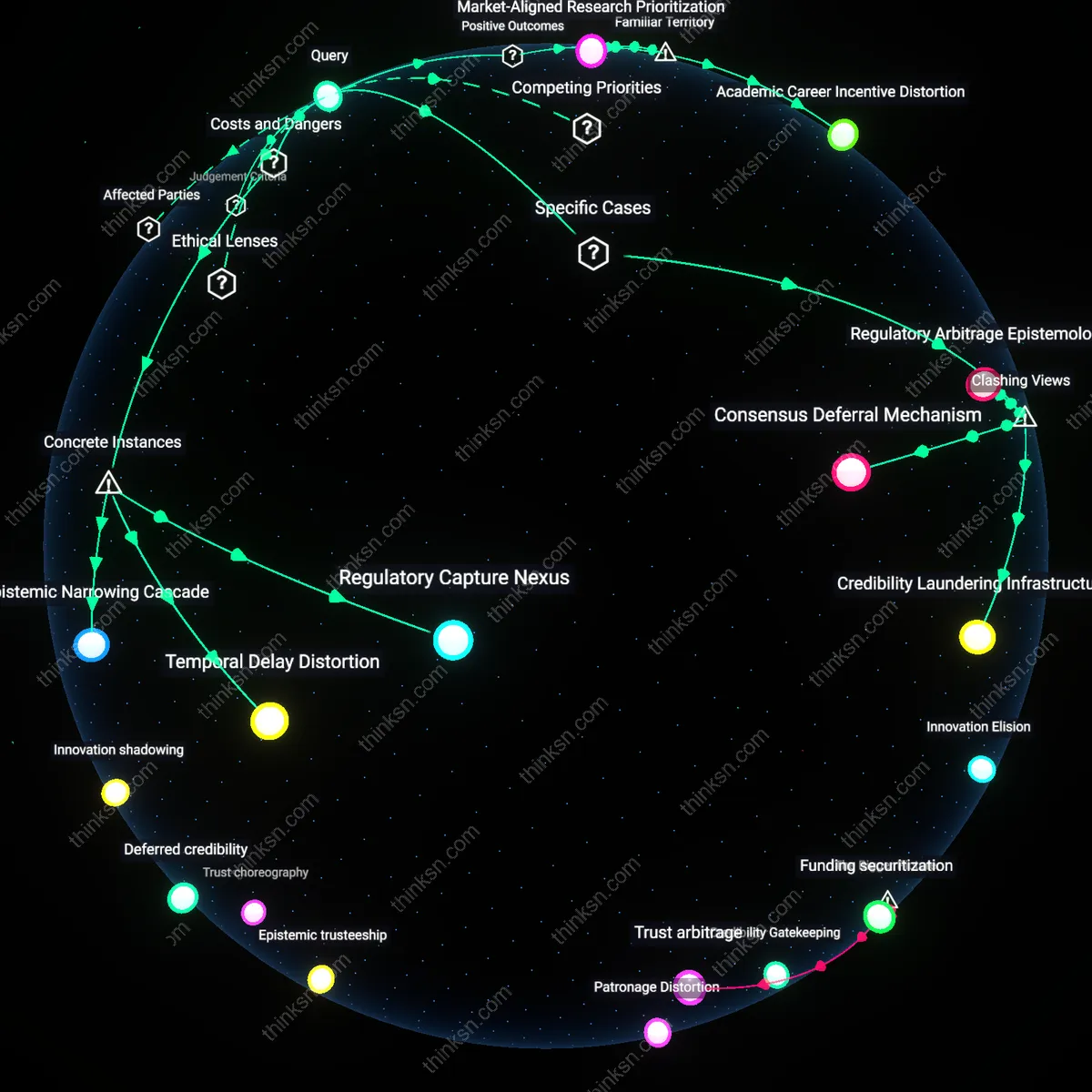

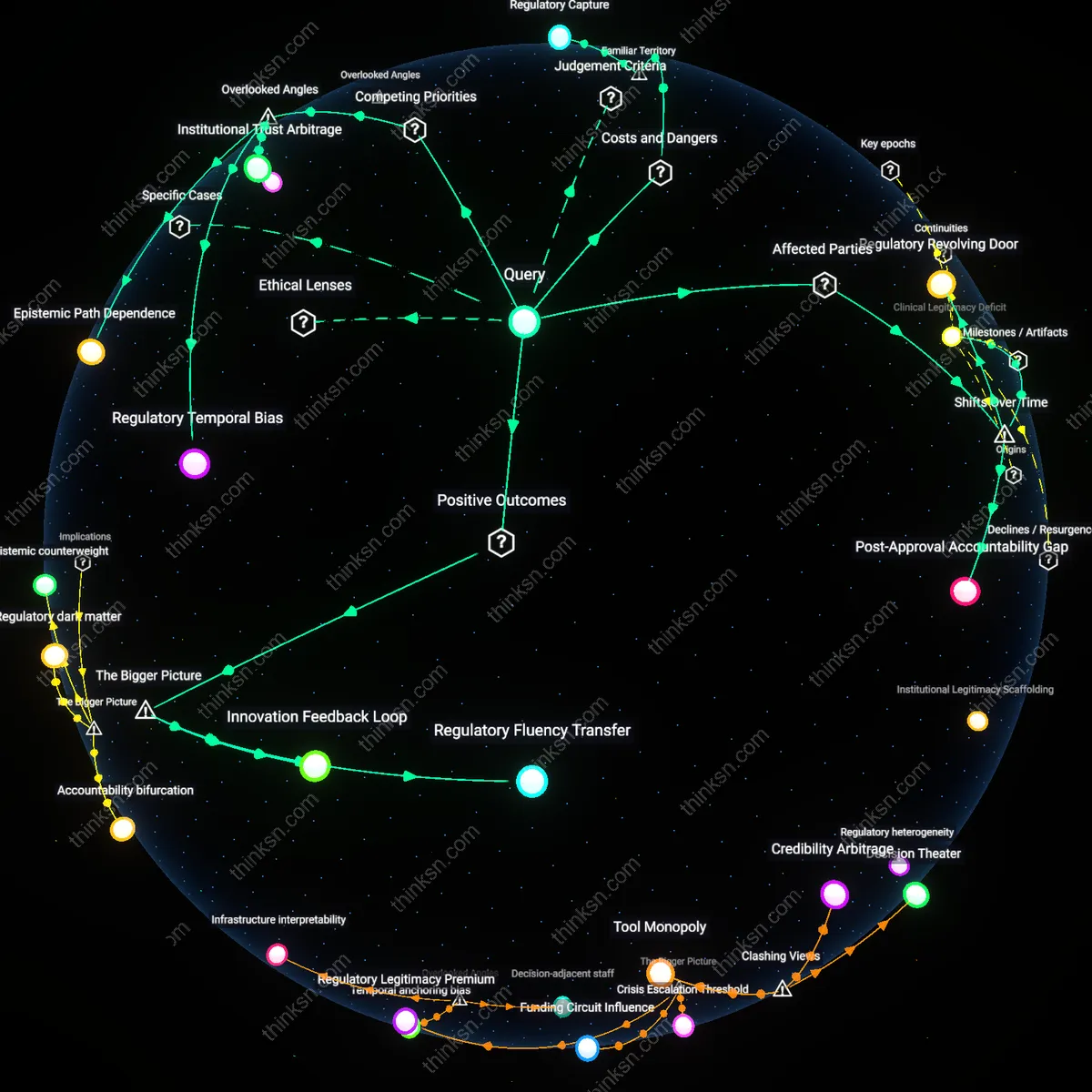

Pharmaceutical narrative control

Manufacturer-funded post-marketing studies are more likely to report favorable outcomes because drug companies directly manage study design and data interpretation, including at major academic medical centers such as those participating in the Pfizer-led RESPOND trial for antivirals. The sponsorship structure enables selective endpoint reporting and delays in public data release, which systematically shape the observed safety and efficacy profile, even though the results appear scientifically legitimate through peer-reviewed publication. What is underappreciated in public discourse is not just bias in results but how manufacturer control over timing, framing, and access turns observational research into an extension of marketing operations, even when it is conducted at prestigious institutions.

Academic legitimacy shield

Independent academic studies gain public trust because institutions such as Harvard Medical School or the Karolinska Institute lend authoritative credibility to post-marketing surveillance, such as the Observational Medical Outcomes Partnership (a public-private partnership managed by the Foundation for the National Institutes of Health) analyzing cardiovascular risks of diabetes drugs. The perceived neutrality of university affiliations and open-methods frameworks creates a psychological buffer against skepticism, even when sample selection is limited or confounding is present. The non-obvious reality is that this shield of academic legitimacy often obscures inconsistent methodological rigor: reliability is assumed rather than verified, and errors propagate because institutional prestige mutes critique.

Regulatory perception gap

Regulatory agencies treat manufacturer-funded studies as compliance instruments, as seen in post-approval requirements for Johnson & Johnson’s Xarelto trials monitored by the FDA, where data collection serves legal benchmarks rather than scientific discovery. This generates a false equivalence in policy settings between these mandatory industry submissions and independent academic efforts, even though the former are optimized for regulatory checkboxes, not robust causal inference. The underrecognized issue is that this equivalence shapes public health decisions not through evidence quality, but through procedural recognition—making reliability a function of bureaucratic acceptance rather than empirical validity.