Does EU Privacy Backfire for Weaker Member States?

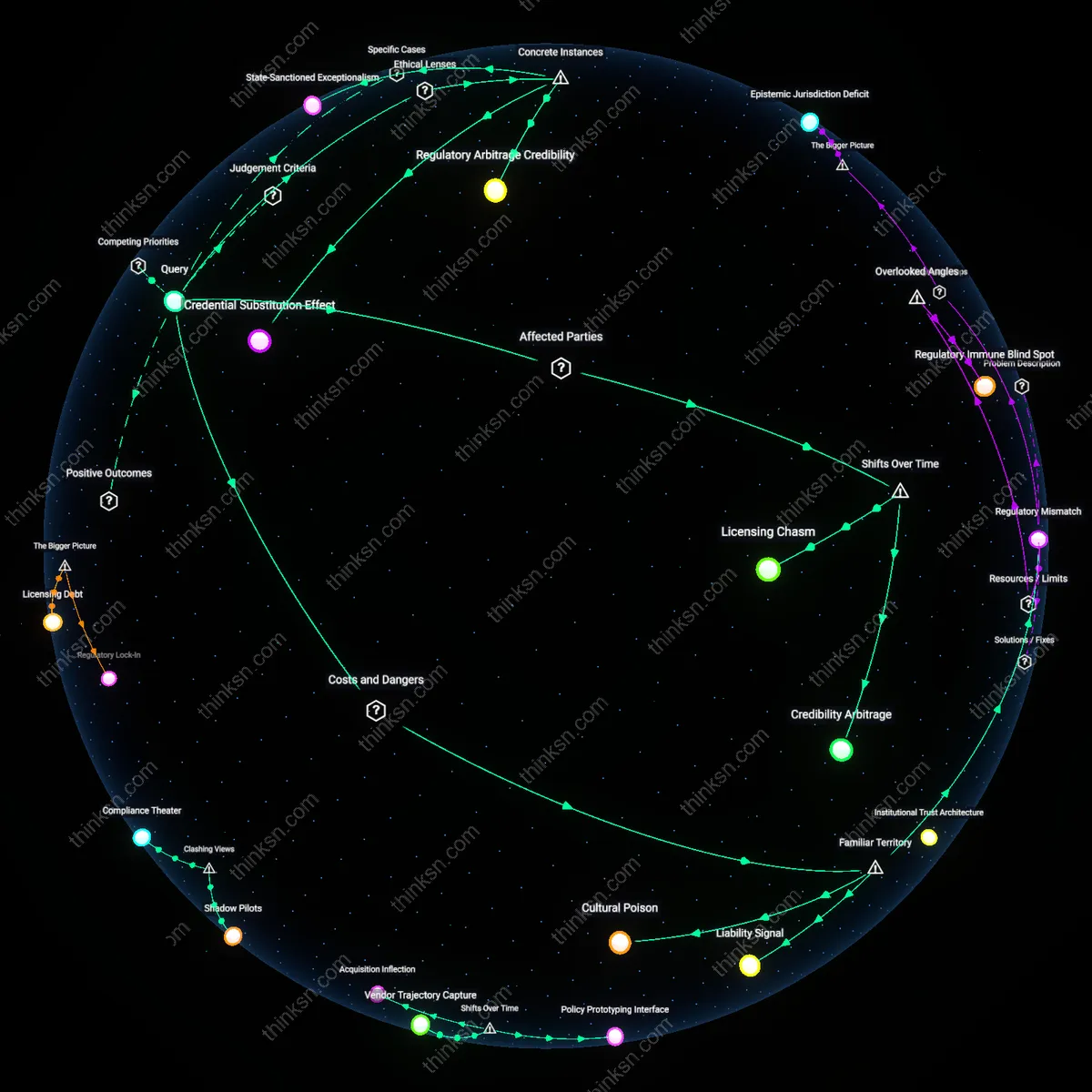

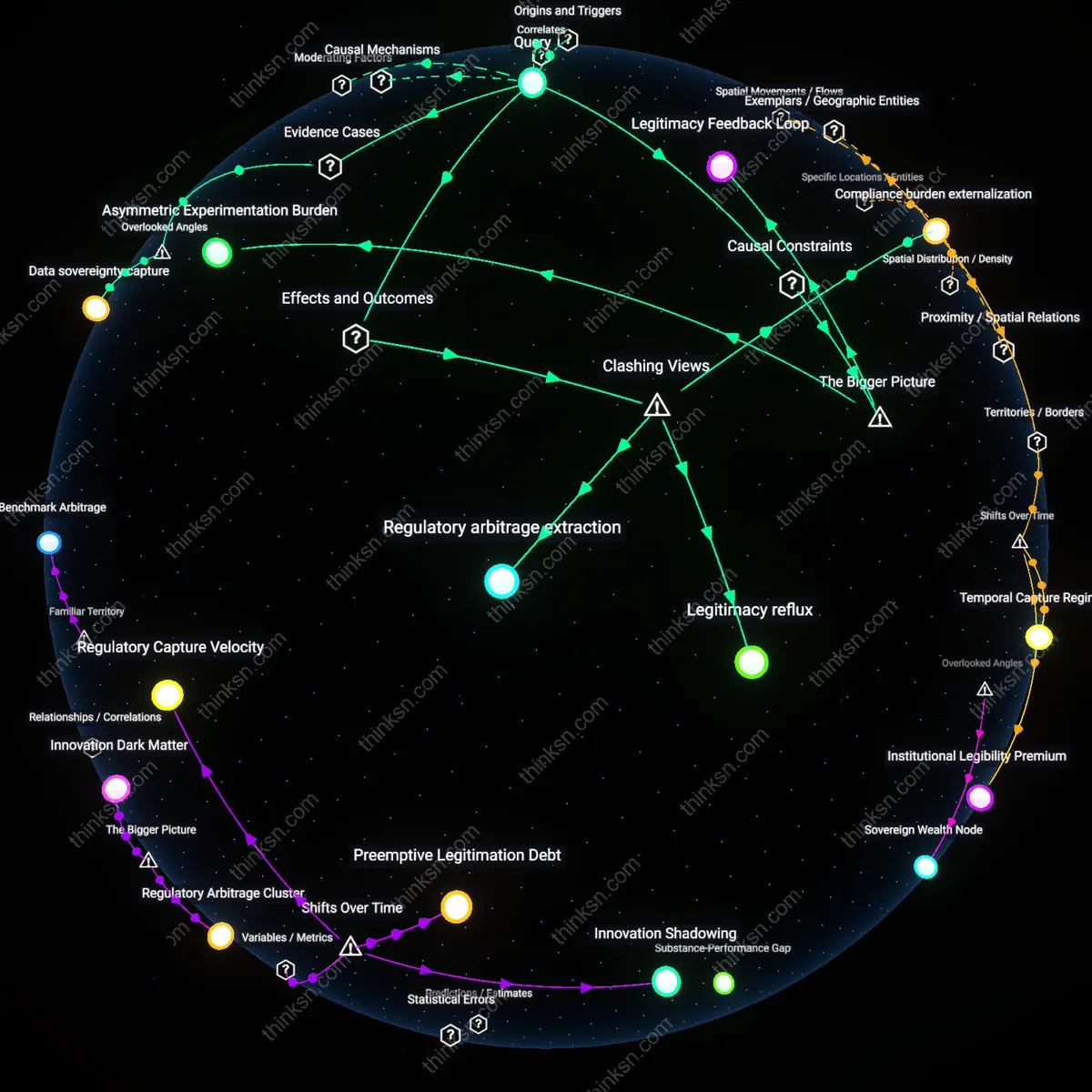

Analysis reveals 17 key thematic connections.

Key Findings

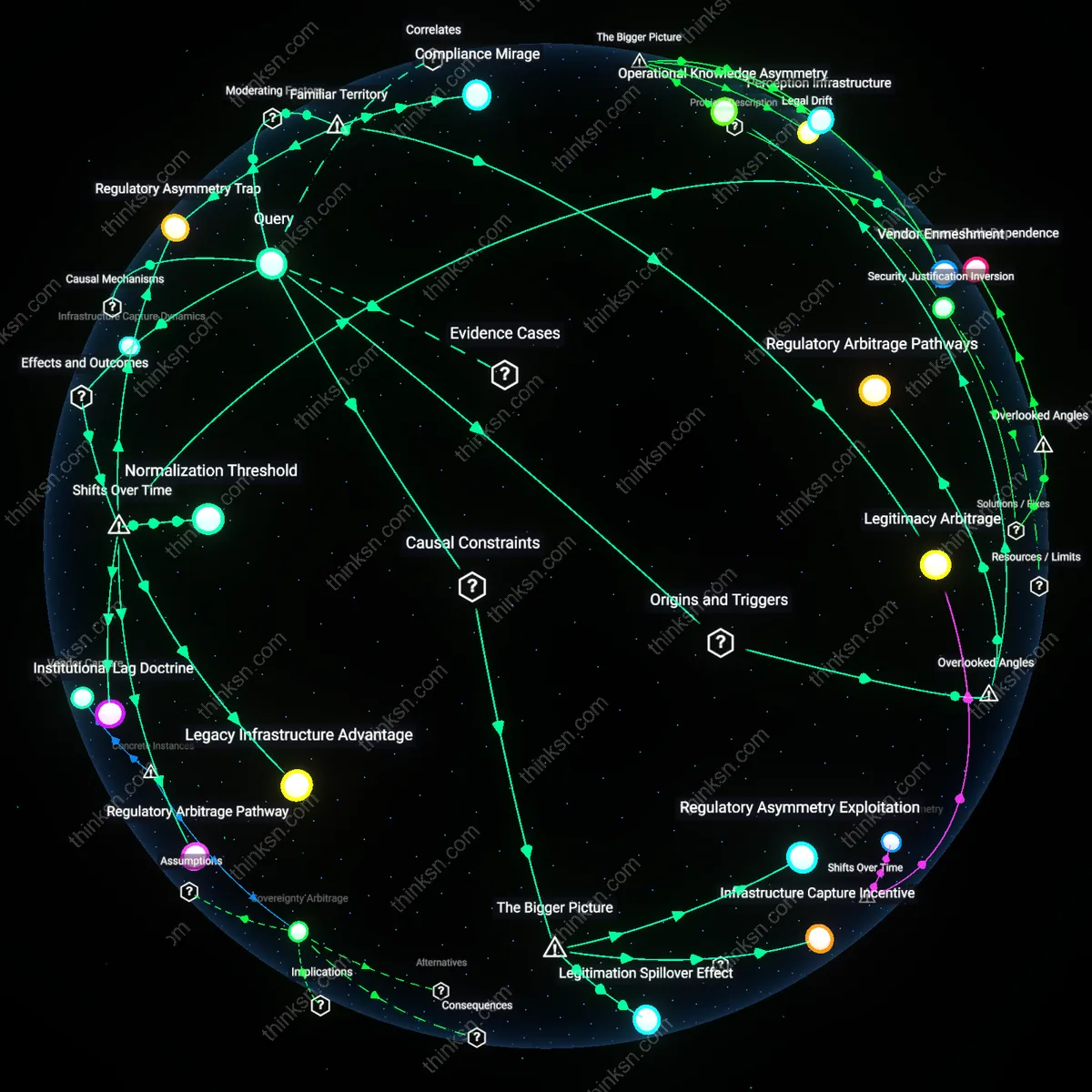

Regulatory Arbitrage Pathways

The EU's fragmented enforcement of facial-recognition regulations enables member states with weaker privacy traditions to host data-processing infrastructure that benefits from lax local oversight while still operating under the EU’s external legitimacy. National data protection authorities in countries like Poland or Hungary can selectively delay or dilute implementation of EU standards, allowing state-linked entities to develop facial-recognition capabilities using data routed through these jurisdictions—capabilities that would be legally constrained in stricter regimes like Germany or France. This creates a backdoor centralization of surveillance capacity in permissive states, an effect amplified by the EU’s reliance on mutual recognition of national regulatory compliance. The non-obvious insight is that EU harmonization efforts, intended to raise standards uniformly, inadvertently codify loopholes by treating procedural compliance as equivalent to substantive privacy protection, thus rewarding strategic non-alignment.

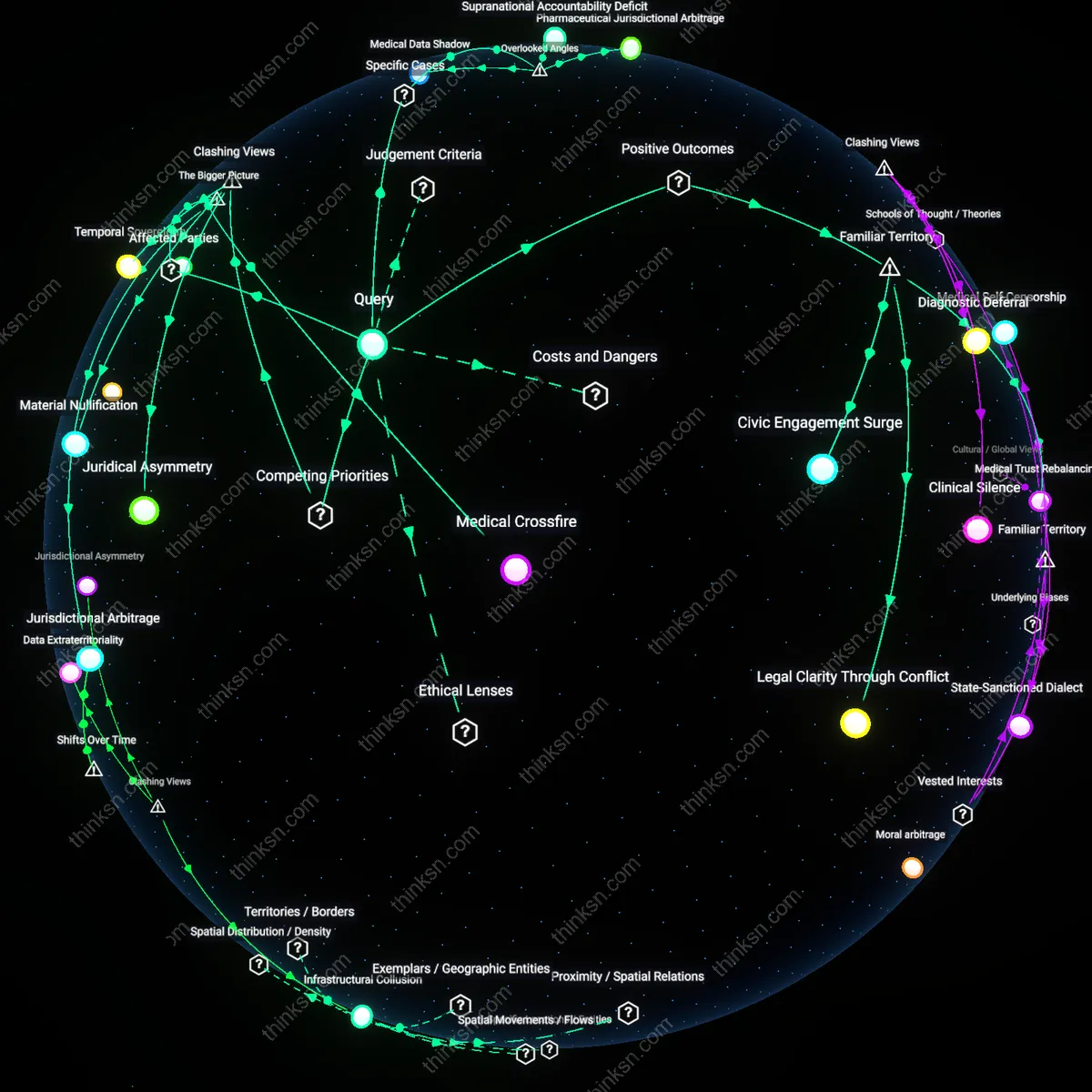

Legitimacy Laundering Mechanism

Member states with underdeveloped privacy safeguards can leverage EU-level approval of limited facial-recognition exceptions—such as those for border security under eu-LISA or migration monitoring—to justify expansive domestic surveillance programs that align nominally with EU policy but exceed its intent. For instance, Greece’s deployment of facial recognition in migrant processing centers gains implicit credibility through participation in the EU’s Entry/Exit System, enabling the normalization of biometric tracking in contexts far beyond the system’s original scope. This transnational endorsement functions as legitimacy laundering, where alignment with EU frameworks shields authoritarian-leaning practices from domestic scrutiny. The overlooked dynamic is that EU institutional partnerships do not just transfer technology or funding—they transfer normative cover, transforming politically fragile practices into presumed best practices by association.

Operational Knowledge Asymmetry

The concentration of EU-funded facial-recognition pilots in Eastern and Southern member states—such as Romania’s smart border projects or Bulgaria’s INTERPOL-linked databases—creates a reservoir of operational expertise within state security agencies that is not matched by equivalent oversight capacity. While Western EU states engage in protracted ethical debates, these frontline agencies accumulate technical proficiency, data access, and inter-agency coordination experience under the banner of EU external border management. This asymmetry entrenches a de facto hierarchy where peripheral states become instrumentation hubs for EU-wide surveillance, yet retain discretion in domestic reuse of acquired tools. The underappreciated consequence is that caution at the EU level does not prevent capability build-up—it redirects it geographically, allowing less scrutinized actors to become latent centers of surveillance gravity through experiential learning rather than formal mandate.

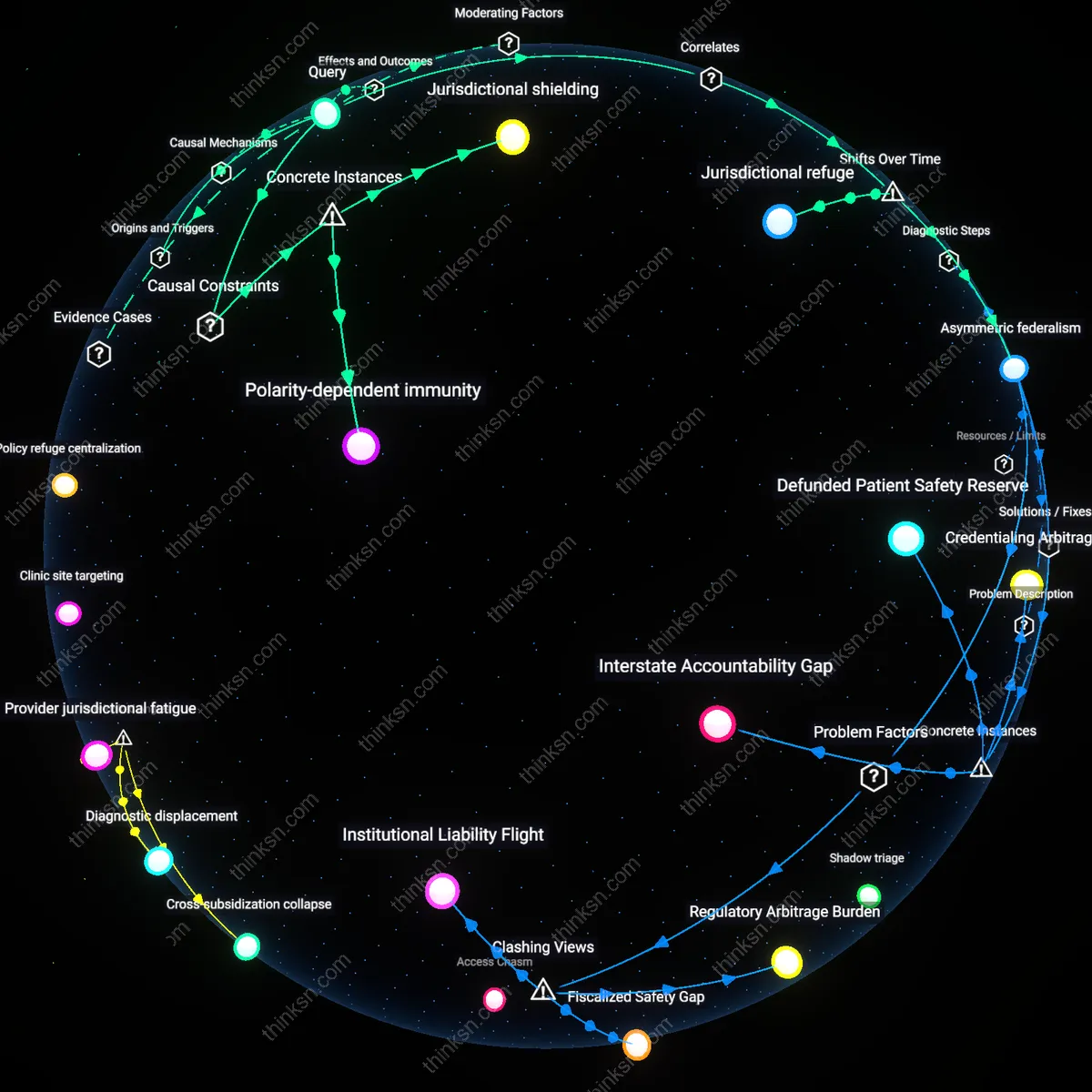

Regulatory Arbitrage Incentives

The EU's stringent and slow-moving regulations on facial-recognition technology create a de facto standard that only well-resourced state actors can legally challenge or delay, which inadvertently incentivizes member states with weaker privacy traditions to accelerate domestic adoption outside EU harmonization channels. National interior ministries in countries like Hungary or Poland can exploit the gap between EU-level deliberation and national implementation to justify unilateral pilot programs, using public security narratives to expand surveillance powers under the guise of filling regulatory vacuums. Because EU oversight relies on after-the-fact infringement procedures, these actors can embed systems before legal challenges mature, turning procedural caution into operational impunity. This dynamic suggests that centralized caution does not uniformly constrain power: it fragments enforcement and rewards preemptive action by less accountable states, a reality obscured by the EU’s normative emphasis on harmonized rights protection.

Infrastructure Capture Dynamics

By delaying unified EU-wide rules on facial recognition, the Commission leaves member states to develop their own technical infrastructures and data-sharing protocols, enabling governments with weaker judicial oversight to shape interoperable systems that later become too entrenched to dismantle. Countries such as Bulgaria or Romania that adopt EU-funded border surveillance tools early, such as those linked to eu-LISA, become default testing grounds and thereby gain outsized influence over backend architectures for facial recognition. Their lower thresholds for public consent allow rapid integration of biometric databases into cross-border law enforcement networks, effectively locking in permissive standards before EU-wide safeguards are codified. This contradicts the assumption that procedural delay preserves democratic control. Instead, delay cedes technical agency to those most willing to experiment, rendering future EU safeguards performative rather than transformative.

Legitimation by Contrast

The EU’s methodical, rights-based framing of facial recognition debates unintentionally legitimizes more aggressive national deployments by positioning them as pragmatic alternatives to bureaucratic paralysis, particularly in member states where rule-of-law institutions are politicized. When EU institutions emphasize risk assessment and fundamental rights impact studies, leaders in countries like Slovakia or Latvia can portray rapid deployment as necessary for national efficiency, framing caution as elitist indecision that endangers public safety. This rhetorical inversion allows state actors to reposition surveillance expansion not as a rights violation but as corrective action against supranational inertia, thereby using EU deliberation itself as justification for bypassing constraints. The resulting legitimation effect reveals how normative caution can be weaponized to validate repression when governance is asymmetrically distributed across member states.

Regulatory Arbitrage Pathway

The EU’s delayed binding regulation on facial-recognition technology has created a window in which member states with weaker privacy traditions, such as Hungary and Poland, host early deployment pilots funded by national budgets or opaque public-private partnerships, exploiting the absence of EU-wide prohibitions before 2023. This mechanism channels surveillance capacity development into jurisdictions with compliant judiciaries and low media scrutiny, transforming what was previously a regulatory gap into an institutionalized backdoor for durable monitoring infrastructures. The non-obvious consequence is that caution, intended to allow deliberation, has instead enabled path dependency on illiberal testing grounds, showing how temporary delays in supranational oversight can crystallize into hardened territorial asymmetries in state surveillance capacity.

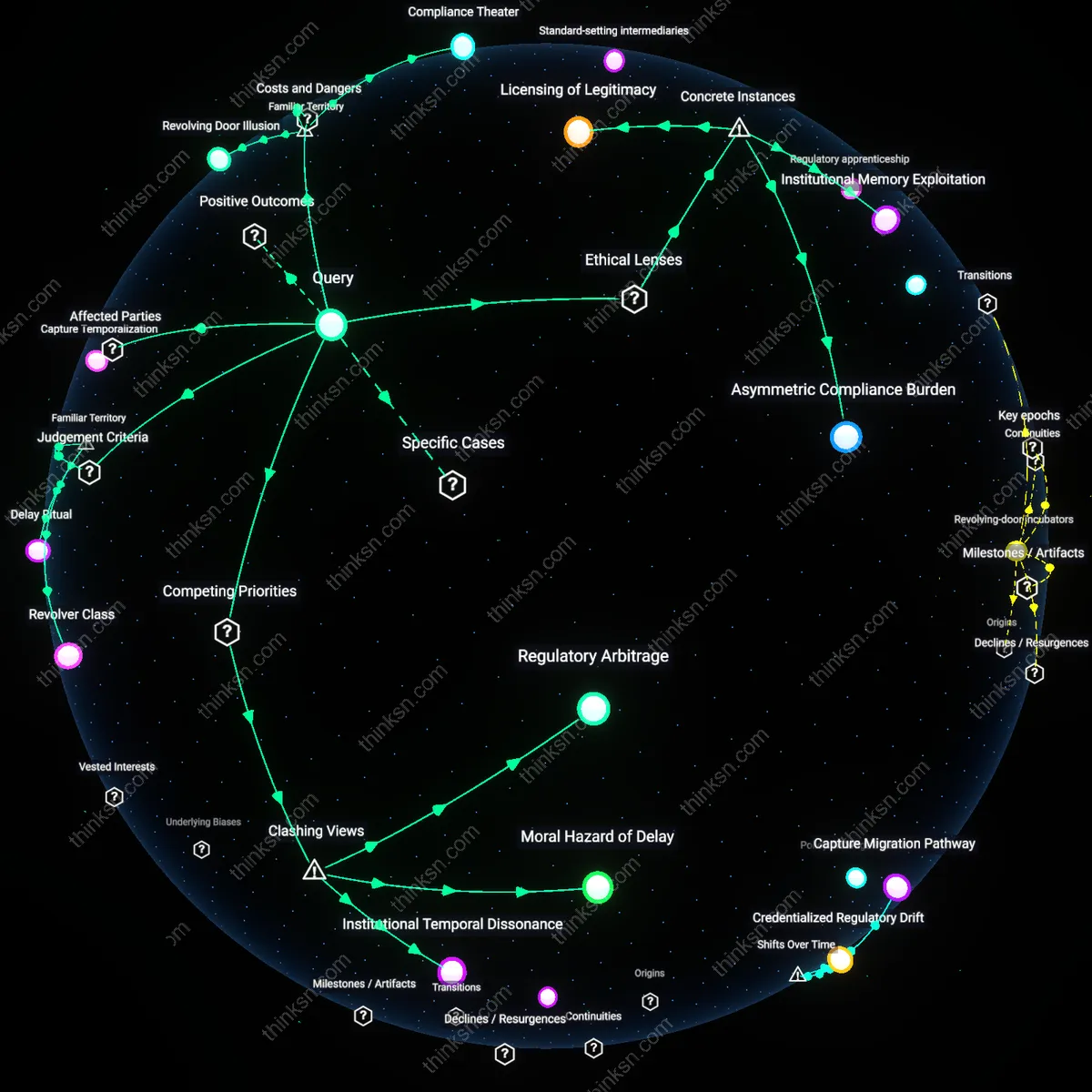

Institutional Lag Doctrine

As the EU shifted from reactive data protection policies before 2016 (while the GDPR was still being developed) to anticipatory governance in the AI Act debates after 2020, its methodical pace unintentionally validated national-level justifications for ‘experimental’ facial-recognition programs in states like Greece and Bulgaria during border enforcement modernization. Because EU institutions emphasized procedural caution over preemptive interdiction, local authorities framed adoption as compliance with broader security mandates, embedding surveillance tools into asylum processing systems during a period of escalating migration crisis. The underappreciated outcome is that the shift toward deliberative temporality in EU governance was reinterpreted locally as permissive inertia, allowing weaker privacy regimes to expand prosecutorial discretion under the guise of procedural experimentation.

Security Justification Inversion

After the 2015–2017 wave of terrorist attacks in Western Europe, national interior ministries in countries including France and Italy treated the EU’s hesitation to regulate real-time biometric surveillance as evidence that such tools remained legally ambiguous, and on that basis constructed ad hoc legal frameworks authorizing police use during transitional emergency periods that outlasted their mandates. By anchoring deployment to crisis temporality while EU deliberations dragged into the 2020s, these states established operational permanence through repeated 'temporary' use, turning what was originally a provisional exception into normalized doctrine. The overlooked effect is that EU caution was not merely delayed action but became a retroactive alibi for mission creep, revealing how pauses in supranational regulation can be instrumentalized to produce irreversible domestic securitization.

Regulatory Asymmetry Trap

The EU’s harmonized restrictions on facial recognition have paradoxically empowered member states with pre-existing surveillance infrastructures by shifting enforcement burdens onto weaker institutions, enabling selective compliance. As centralized EU oversight stalled after the GDPR took effect in 2018, countries like Hungary and Poland institutionalized opaque facial recognition deployments under public safety pretexts, exploiting transitional enforcement gaps between 2020 and 2023. This mechanism, a divergence between formal regulation and local implementation capacity, reveals how delays in EU-level standard setting inadvertently legitimize entrenched surveillance practices in states where judicial independence has eroded, making regulatory compliance a function of political will rather than technical adherence.

Legacy Infrastructure Advantage

States that expanded facial recognition during the 2010–2016 period under minimal oversight now circumvent EU caution by reclassifying systems as 'security modernization,' preserving operational continuity despite post-2020 regulatory shifts. In France and Italy, legacy investments in urban surveillance networks allowed law enforcement agencies to incrementally upgrade capabilities without triggering new regulatory scrutiny, framing enhancements as maintenance rather than expansion. This trajectory, rooted in pre-GDPR procurement decisions, demonstrates how prior technological entrenchment creates path dependencies that neutralize supranational constraints, turning past infrastructural choices into present-day governance loopholes.

Normalization Threshold

By deferring binding facial recognition legislation until after 2025, the EU has allowed repeated pilot deployments in border regions to establish de facto surveillance norms, particularly in Greece and Bulgaria, where Frontex collaborations normalized real-time biometric monitoring between 2019 and 2023. The transitional phase of 'experimental' use created a permissive environment where continuous operation substituted for formal authorization, effectively resetting expectations of privacy in Southeast Europe. This shift—from temporary exception to routine practice—reveals how prolonged regulatory hesitation enables localized normalization to become irreversible, transforming interim measures into permanent operational baselines.

Legitimacy Arbitrage

Governments in member states with lower judicial independence use the EU’s deliberative caution as proof that biometric surveillance is inherently contentious, thereby positioning their own rapid adoption as a sovereign necessity. By framing EU hesitation as indecision, actors such as national police forces in Greece or Romania justify domestic rollouts as filling a security vacuum, wrapping expansion in the language of stability. This dynamic allows states to align rhetorically with EU norms while materially diverging. The underappreciated risk is that procedural caution can be weaponized to legitimize unilateralism.

Compliance Mirage

The presence of strong EU-level guidelines creates a false impression of uniform control, enabling member states with politicized data protection authorities to meet minimal procedural standards while pursuing aggressive facial recognition programs. For instance, Bulgaria or Latvia may satisfy reporting requirements but deploy systems through opaque municipal contracts that evade public scrutiny. The façade of compliance reduces external scrutiny from EU institutions and civil society, making overreach harder to detect. The key surprise is that visible regulation can mask divergence when audits and transparency mechanisms are unevenly applied.

Regulatory Asymmetry Exploitation

The EU’s uniform restrictions on facial-recognition technology create a de facto advantage for member states with weaker privacy enforcement capacities, enabling them to act as hubs for surveillance experimentation. National agencies in such states leverage gaps between EU-level prohibitions and inconsistent domestic implementation to host infrastructure—such as local biometric databases or AI pilot programs—that would be blocked in more stringent jurisdictions. This occurs through fragmented oversight mechanisms where supranational rules lack real-time enforcement, allowing discretion in how directives are transposed. The significance lies in how central constraints can decentralize power to those least equipped to uphold them, turning compliance weakness into strategic autonomy.

Infrastructure Capture Incentive

Strict EU-wide limits on facial recognition push development underground or toward state-controlled entities in member states where oversight is lax, effectively incentivizing national governments to capture emerging surveillance capabilities. In countries like Hungary or Poland, where judicial independence is compromised, public tenders for AI-powered systems are awarded to politically aligned firms that embed backdoor access or opaque algorithms into municipal security networks. These systems are justified as compliance with law enforcement needs under EU exceptions for public safety, yet operate without audit or accountability. The overlooked dynamic is that top-down bans, lacking parallel investment in transparent alternatives, create a vacuum filled by clientelistic techno-authoritarian upgrading.

Legitimation Spillover Effect

EU hesitation to fully ban facial recognition, framed as precautionary, inadvertently legitimizes its restricted use, which member states with weak privacy norms interpret as tacit endorsement for expansive domestic deployment. When the European Commission allows narrow exceptions—for border control or counterterrorism—these categories are rhetorically stretched by states like Greece or Bulgaria to justify mass scanning in public housing or migration camps. The enabling condition is the absence of a clear, positive standard for what constitutes 'proportionate' use, allowing local authorities to align with EU language while violating its spirit. The underappreciated consequence is that ambivalence at the center becomes a rhetorical resource for peripheral actors seeking normative cover.