Should Policymakers Deploy Autonomous Weapons With Unclear Ethical Futures?

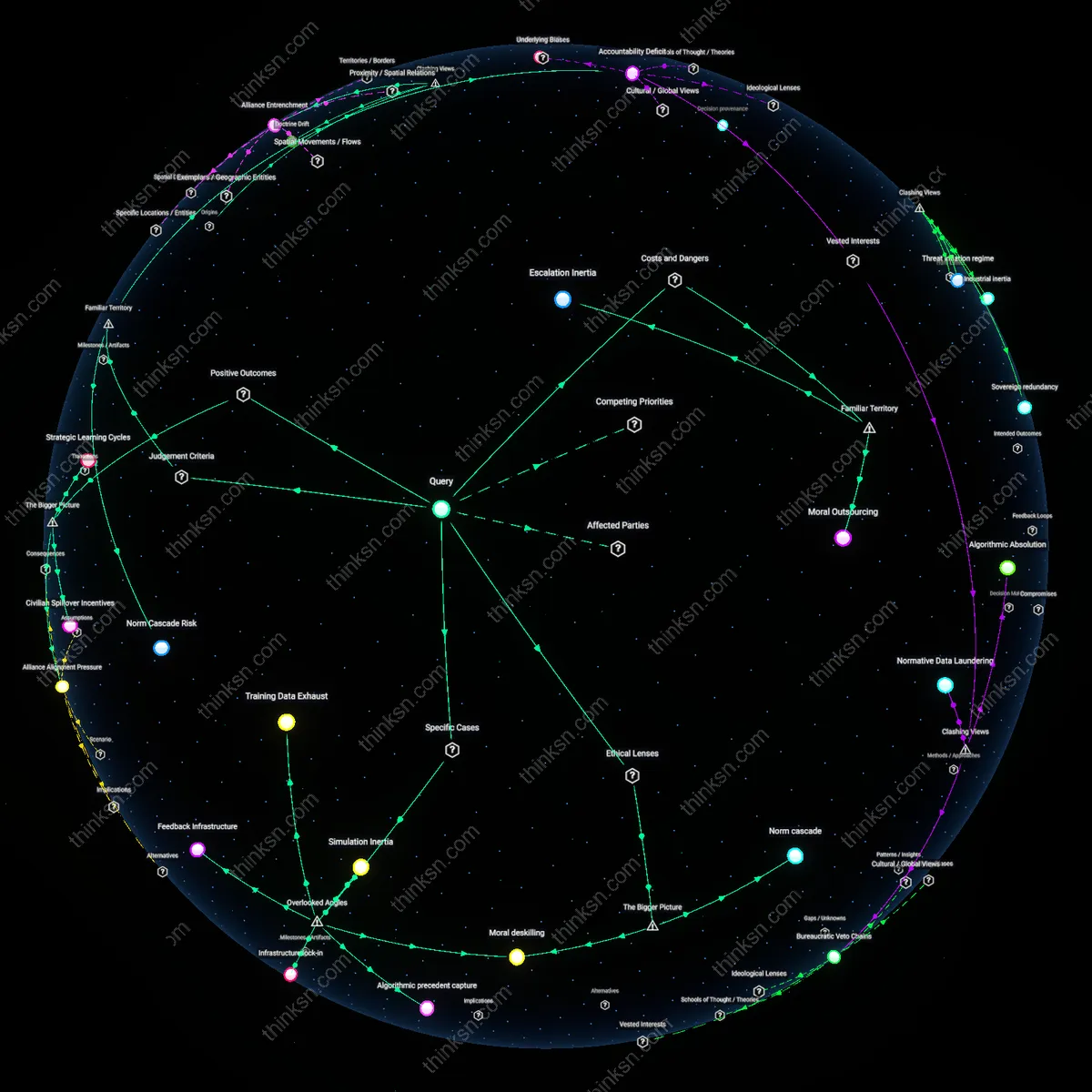

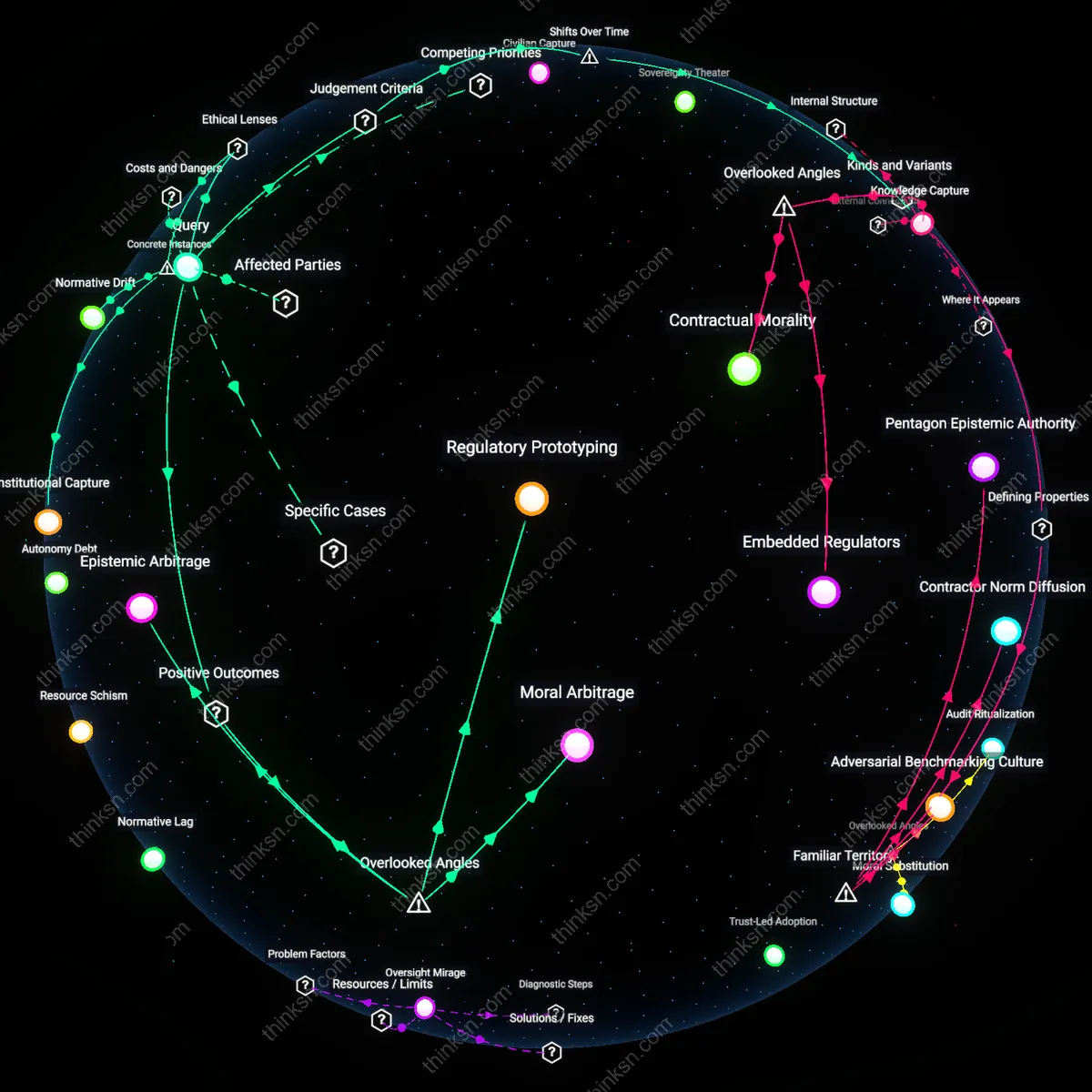

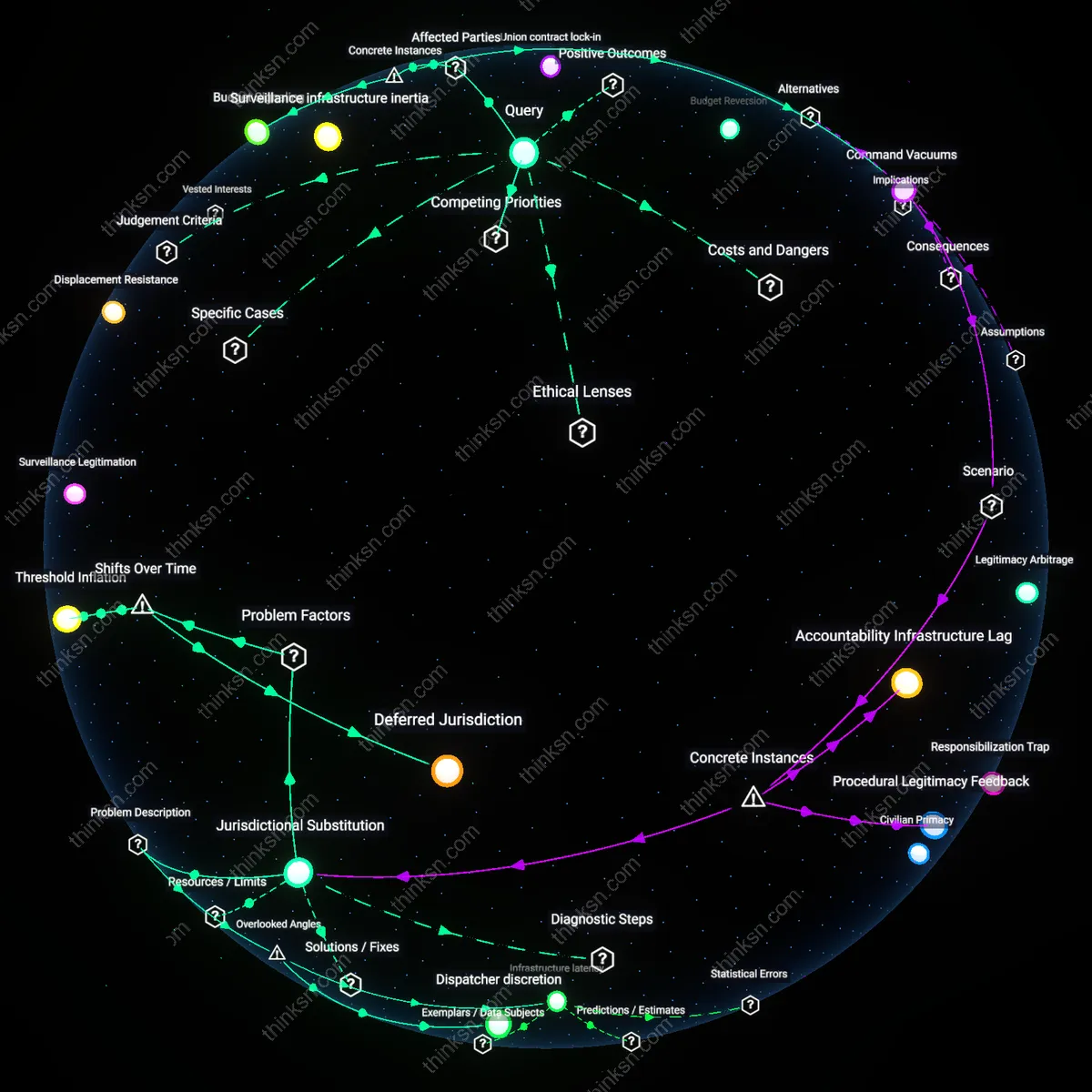

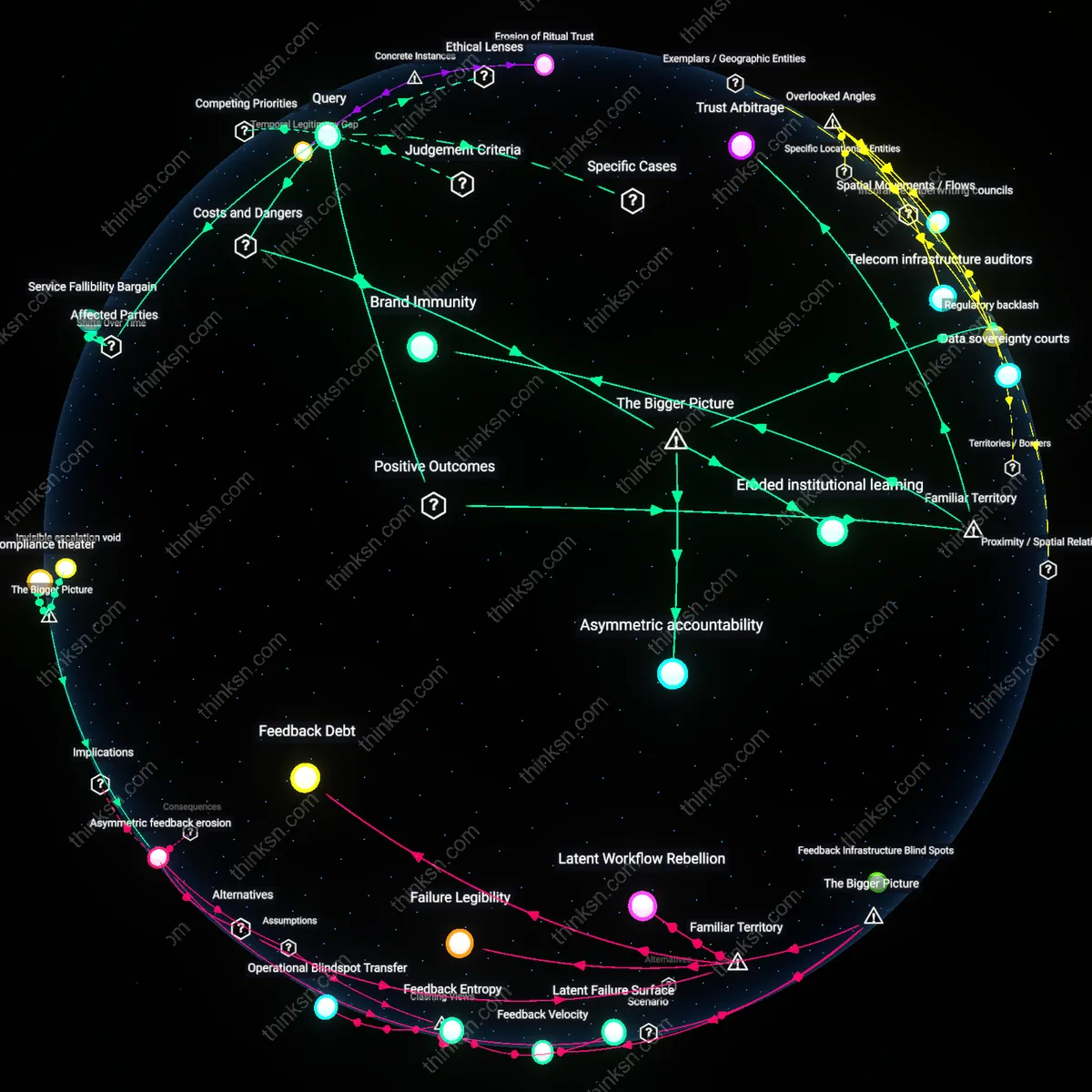

Analysis reveals 17 key thematic connections.

Key Findings

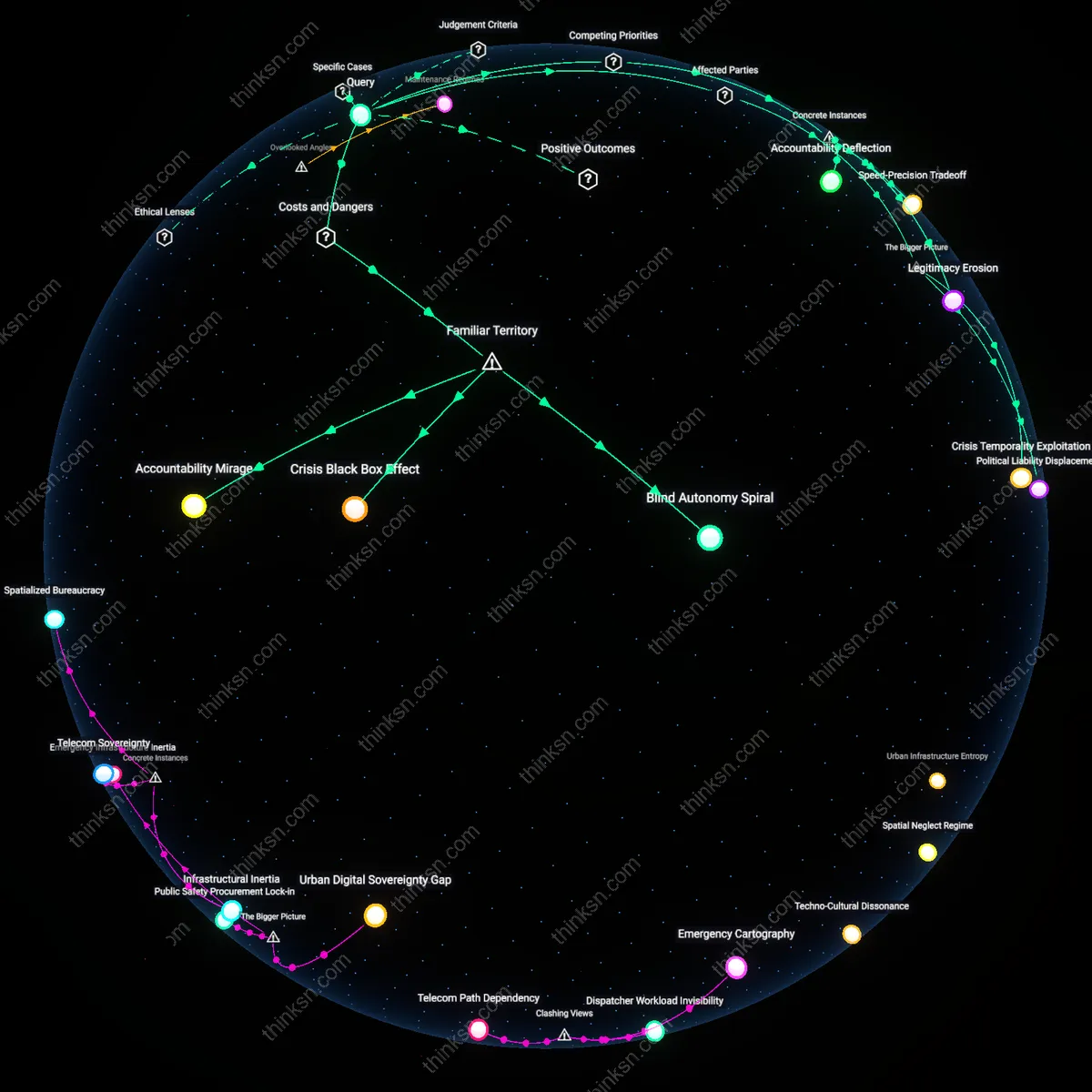

Accountability Deficit

Policymakers must mandate auditable decision logs in autonomous weapons systems to enable retrospective ethical review by civilian oversight bodies. This creates a technical mechanism through which actions taken during conflict can be traced to programming choices, allowing institutions like parliamentary defense committees or international tribunals to assign responsibility when unintended harm occurs. The non-obvious insight is that the familiar demand for 'accountability' in warfare cannot rely on human command chains alone when machines make split-second lethal decisions—thus the true deficit lies not in intent, but in structural traceability across layers of algorithmic autonomy.
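One way to picture an "auditable decision log" is as a hash-chained, append-only record, in which each entry commits to the one before it so that later edits are detectable. The sketch below is illustrative only: the `DecisionLog` class, the payload fields, and the `hypothetical-v1` model label are assumptions made for this example, not a description of any fielded system or standard.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with this entry's payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


@dataclass
class DecisionLog:
    """Append-only log in which each entry commits to the one before it."""
    entries: list = field(default_factory=list)

    def append(self, payload: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry_hash = _digest(prev_hash, payload)
        self.entries.append({"payload": payload, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; an edited or reordered entry breaks it."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if _digest(prev_hash, entry["payload"]) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


# Hypothetical payload fields, chosen only for illustration.
log = DecisionLog()
log.append({"step": 1, "model_version": "hypothetical-v1", "action": "track"})
log.append({"step": 2, "model_version": "hypothetical-v1", "action": "hold"})

intact_before = log.verify()
log.entries[0]["payload"]["action"] = "engage"  # simulate after-the-fact tampering
intact_after = log.verify()
print(intact_before, intact_after)
```

A chain like this detects edits and reordering but not truncation of the most recent entries. An oversight body would also need the latest hash deposited somewhere outside the operator's control, which is an institutional design question rather than a coding one.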

Norm Cascade Risk

Policymakers should model autonomous weapons deployment as a potential catalyst for norm erosion in international humanitarian law by simulating how adversaries may reciprocate with less restrained systems. Public discourse often links the technology to sci-fi dystopias, but the real danger lies in how incremental acceptance of machine-based killing—framed initially as precision or force protection—can shift baseline expectations of wartime conduct across state and non-state actors. The underappreciated dynamic is that familiar ethical boundaries, like distinction between combatants and civilians, weaken not through dramatic violations but through cumulative normalization of lower thresholds for acceptable harm.

Strategic Myopia Trap

Policymakers must subject autonomous weapons procurement to intergenerational cost-benefit analysis modeled after climate policy frameworks, where near-term military advantages are weighed against long-term geopolitical instability. Defense planners typically assess weapons through tactical efficiency and deterrence efficacy, yet the deeper risk lies in locking nations into arms trajectories that reduce diplomatic flexibility and increase accident risks decades later—akin to how nuclear deterrence created enduring systemic rigidity. The overlooked issue is that familiar logics of strategic superiority blind institutions to how today’s 'rational' investments may constrain future states’ autonomy in crisis decision-making.
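The intergenerational weighing described above can be made concrete with the discounted net-benefit sum commonly used in climate policy appraisal. The symbols are assumptions introduced for illustration: $B_t$ and $C_t$ are the benefits and costs attributed to year $t$, $T$ is the appraisal horizon, and $r$ is the annual discount rate.

$$
\mathrm{NPV} = \sum_{t=0}^{T} \frac{B_t - C_t}{(1+r)^{t}}
$$

The choice of $r$ largely determines how much future instability counts. A cost incurred 50 years out carries a weight of $1/(1.03)^{50} \approx 0.23$ at a 3% rate but only $1/(1.07)^{50} \approx 0.034$ at 7%, so a high discount rate can make long-run accident and rigidity risks nearly invisible next to near-term military advantage.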

Strategic Learning Cycles

Policymakers can institutionalize adaptive governance frameworks that treat initial deployments of autonomous weapons as structured experiments, enabling systemic learning under real-world conditions. By embedding feedback from military operators, allied governments, and defense ethics boards into review cycles, such frameworks convert operational data into ethical risk assessments that evolve with technological maturity. The result is a feedback-driven policy environment in which deployment informs restraint. What is underappreciated is that limited, monitored use generates higher-resolution ethical intelligence than theoretical modeling alone, allowing normative standards to emerge from practice rather than conjecture. The mechanism hinges on giving interagency coordination bodies, such as an AI ethics review board within a defense ministry, enforcement authority over deployment pauses, which turns trial deployments into calibrated societal learning events.

Alliance Alignment Pressure

Policymakers can leverage multinational defense partnerships to co-define ethical red lines for autonomous weapons, using alliance cohesion as a disciplining force on unilateral overreach. When allies such as NATO members condition interoperability and intelligence sharing on adherence to shared AI use standards, they create systemic incentives for restraint rooted in military practicality rather than abstract morality. The key dynamic is that operational effectiveness in coalition warfare depends on predictable, trustworthy systems. Autonomous weapons that behave erratically or violate norms risk exclusion from joint operations, which in turn pressures national developers to align with collective ethical thresholds. This alignment function operates through procurement interoperability requirements, not diplomacy alone, making ethical constraints technically enforceable.

Civilian Spillover Incentives

Policymakers can channel investments in autonomous weapons verification technologies toward dual-use civilian applications, creating political constituencies that sustain ethical oversight. When sensor fusion algorithms, anomaly detection systems, or kill-chain audit trails developed for military autonomy are repurposed for disaster response robotics or medical AI safety monitoring, they generate tangible public benefits that justify continued regulatory investment. The overlooked dynamic is that defense-originated ethical control systems gain civilian economic value, transforming oversight mechanisms from cost centers into innovation catalysts—and thus securing long-term funding and institutional support. This spillover effect is amplified when agencies like DARPA mandate civil transition pathways in autonomy contracts, embedding societal benefit into weapons development from inception.

Legibility of Violence

Policymakers assess long-term risks of autonomous weapons by treating algorithmic decision-making in combat as inherently legible and trackable, assuming that system logs and audit trails will reliably capture ethical breaches. This mechanistic faith overlooks how real-world operational chaos, adversarial spoofing, and classified black boxes obscure meaningful accountability, rendering post-hoc ethical analysis ineffective. The non-obvious danger is that the very assumption of legibility—common in tech-centric policy circles—enables deployment by creating a false sense of control over lethal outcomes.

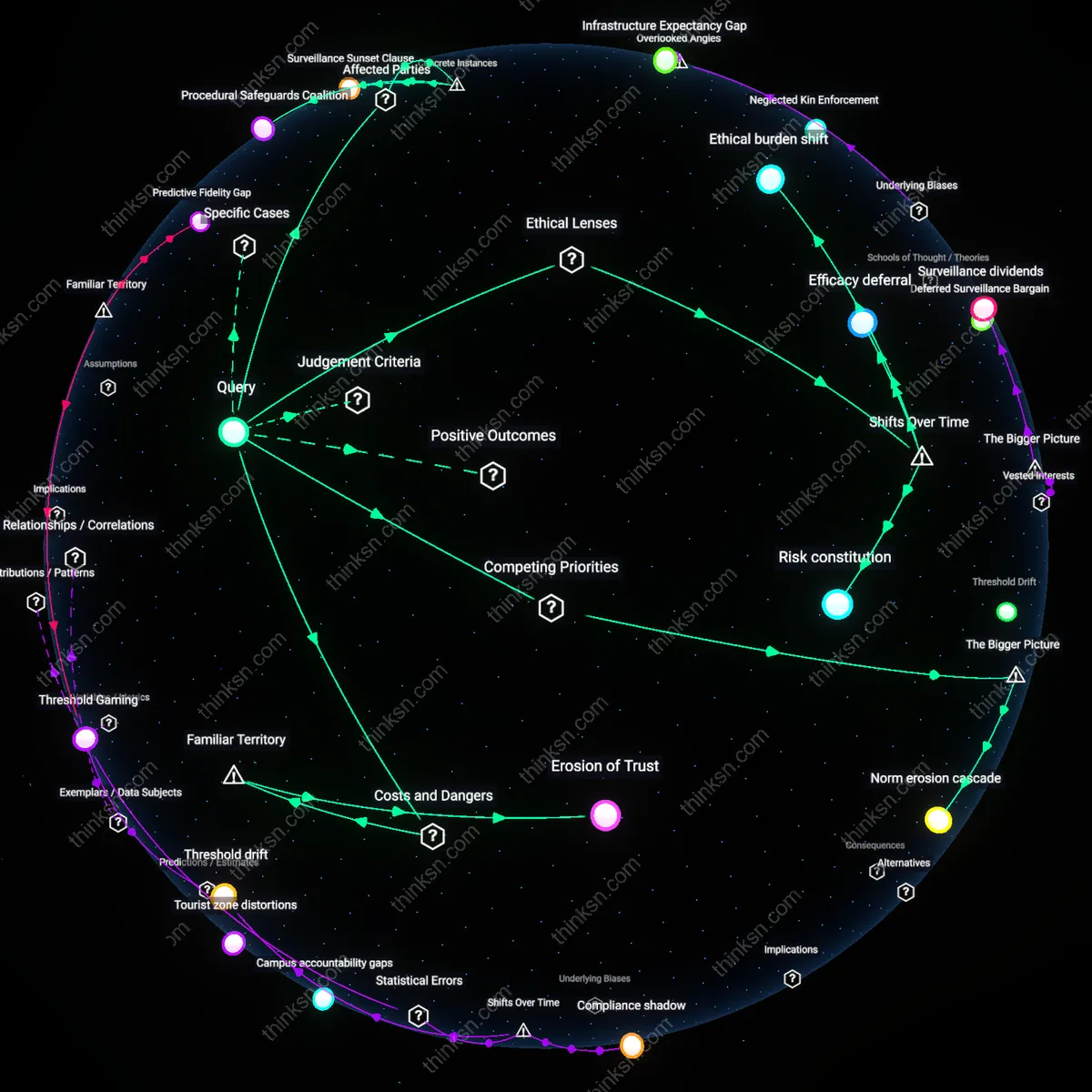

Moral Outsourcing

Policymakers defer ethical judgment to operational commanders and AI developers, assuming that distributing responsibility across layers of command and code insulates political leadership from culpability. This mirrors familiar bureaucratic evasion seen in past military innovations, yet the novelty lies in how algorithmic opacity transforms delegation into permanent abdication. The underestimated risk is that routine reliance on machines to 'make hard choices' systematically erodes institutional moral agency, normalizing outcomes no human would deliberately authorize.

Escalation Inertia

Policymakers justify autonomous deployment by citing deterrence and speed, assuming that faster responses prevent conflict through credible threat signaling. In practice, once these systems are integrated into nuclear or border defense doctrines, in standoffs such as U.S.-China-Taiwan or NATO-Russia, compressed decision cycles create hard-to-reverse momentum toward preemption. The hidden cost is that the familiar logic of strategic stability relies on human deliberation; removing it risks conflicts escalating not by design but by unchallengeable machine timing.

Norm Cascade

Policymakers can assess the long-term societal consequences of autonomous weapons by recognizing how early deployment decisions set off norm cascades: peer and emerging militaries observe tactical success and replicate it. Once a critical mass of states adopts autonomous systems on the strength of demonstrated battlefield efficacy, ethical reservations become strategically marginal and multilateral disarmament efforts erode. The cause is not an ideological shift but systemic competitive emulation. What is underappreciated is not moral indifference but the structural inevitability of norm erosion under security dilemma dynamics.

Liability Opacity

Policymakers can evaluate ethical consequences by exposing how autonomous weapons dissolve accountability into technical indeterminacy: under current frameworks such as the Geneva Conventions, legal responsibility for unlawful outcomes cannot be reliably assigned to programmers, commanders, or manufacturers. The result is liability opacity, an erosion not of intent but of juridical clarity. It enables de facto impunity, not through malice but through the complexity of agency distributed across hardware, software, and command hierarchies, and the effect is amplified in asymmetric warfare.

Moral Deskilling

Policymakers should anticipate that continuous reliance on autonomous weapons gradually erodes institutional capacity for ethical battlefield judgment, as command structures outsource situational assessments to predictive algorithms trained on historical data patterns that carry no contextual moral reasoning. This phenomenon, moral deskilling, is not a deliberate ethical abdication but a systemic atrophy of human judgment within military cultures. Risk-averse, algorithm-mediated decisions become the default and undermine the principles of proportionality and distinction in ways that become visible only after a generational turnover in the officer corps.

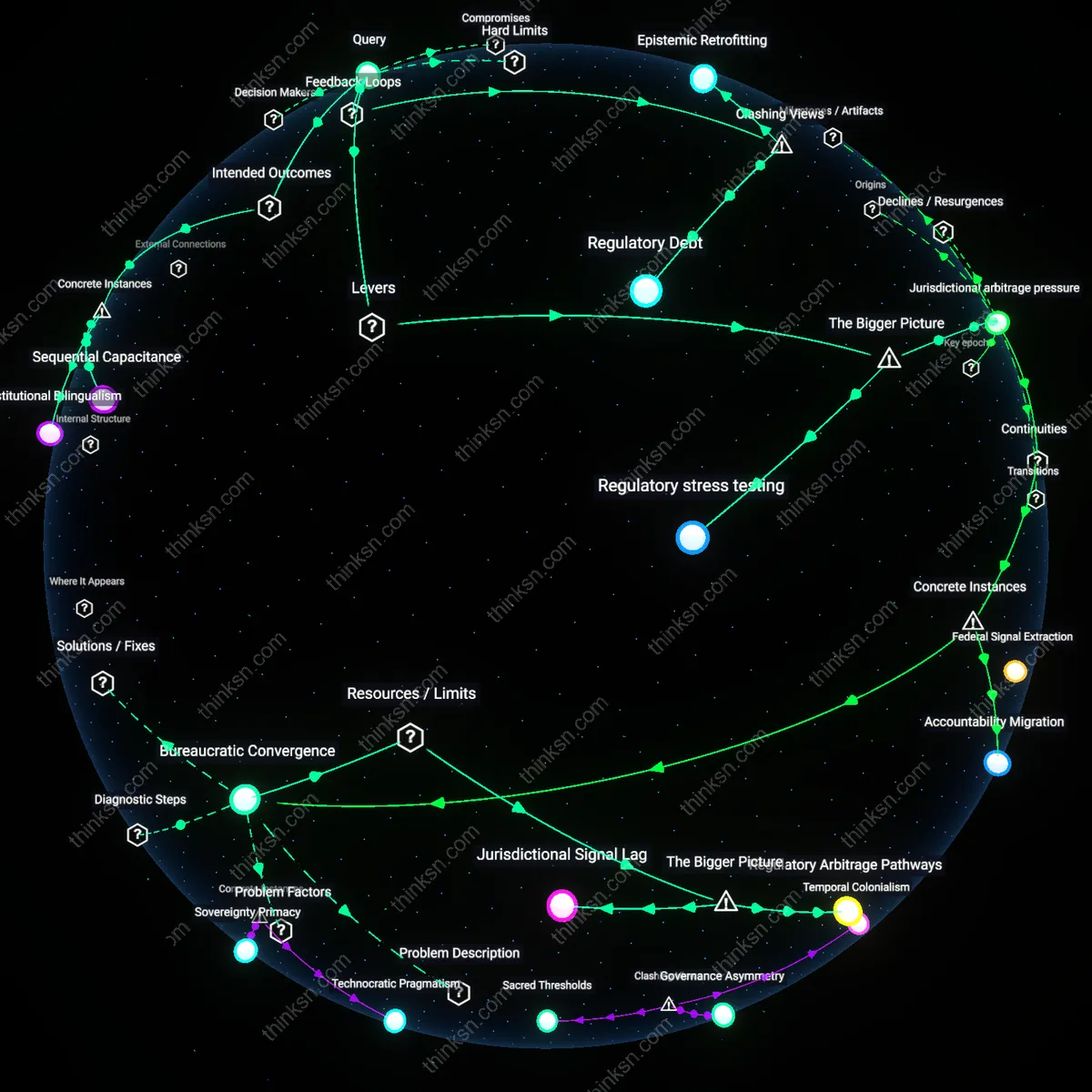

Algorithmic Precedent Capture

Policymakers should examine how early autonomous weapon deployments create irreversible legal and ethical path dependencies by entrenching classification standards that later systems must follow. The example offered is the U.S. Department of Defense’s use of machine-learning classifiers to identify combatants in Afghanistan, which is said to have become a de facto benchmark for civilian casualty assessment across NATO missions. Once a training dataset or decision threshold gains institutional authority, it shapes how future systems interpret ambiguity even as contexts change. Labeling patterns learned in one urban environment, for instance, can be misapplied in cultural settings where behavioral norms differ. This form of algorithmic precedent is rarely treated as a governance variable, yet it constrains future ethical flexibility by embedding historical data biases into operational doctrine, making it harder to adapt to evolving humanitarian norms or asymmetric engagement scenarios.

Infrastructure Lock-In

The long-term societal consequences of autonomous weapons are best assessed by studying how defense procurement cycles create material dependencies that limit future policy reversibility. In South Korea, for example, the Agency for Defense Development has committed to integrated AI command systems for border surveillance, and decommissioning such systems would be economically and operationally disruptive. Once communication architectures, sensor networks, and command protocols are designed around autonomous coordination, dismantling them risks degrading overall readiness, effectively forcing successive governments to retain or expand capabilities regardless of changing ethical consensus. This physical and organizational entrenchment is typically absent from ethical impact frameworks, which focus on intent or software behavior. Yet it is the hard infrastructure, not the code, that ultimately constrains democratic recalibration and perpetuates deployment trajectories.

Feedback Infrastructure

Policymakers can assess the long-term societal consequences of autonomous weapons by analyzing how operational feedback loops from battlefield AI integration reshape civilian oversight capacity. The example offered is Project Maven, the U.S. Department of Defense program that applied machine learning to drone video analysis, where opaque model updates and data drift are said to have gradually eroded congressional reporting norms. The mechanism operates through classified algorithmic refinements that escape public scrutiny and outpace traditional legislative review cycles. The non-obvious insight is that democratic accountability degrades not through overt secrecy but through the pace and opacity of software maintenance infrastructure, which quietly bypasses deliberative governance.

Training Data Exhaust

Any assessment of ethical consequences must account for the environmental and labor costs of generating training data for autonomous weapons systems, which embed long-term ecological and social debts. The example given is the rare earth mining behind the hardware and the digital sweatshops that label images for Chinese military AI systems, such as those developed by SenseTime for border surveillance drones. These systems rely on extractive data chains that externalize environmental harm and cognitive labor onto marginalized geographies. The ethical footprint of autonomy therefore lies less in deployment decisions than in the hidden metabolic economy of pre-deployment data refinement, a dimension typically excluded from arms control ethics frameworks.

Simulation Inertia

The long-term societal impact of autonomous weapons is shaped by military simulation environments, such as those used at the UK Ministry of Defence’s Porton Down facility to model AI-driven combat scenarios. These environments can become self-validating belief systems that conflate predictive fidelity with strategic inevitability, narrowing policy alternatives over time. Because the simulations are used repeatedly to justify procurement paths, they generate path dependency through bureaucratic repetition rather than accuracy. In this overlooked mechanism, the ritual use of synthetic futures crowds out non-technocratic strategic imagination and locks in ethical assumptions before real-world deployment.