Personalized AI Learning: Equity Risk vs. Benefit?

Analysis reveals 8 key thematic connections.

Key Findings

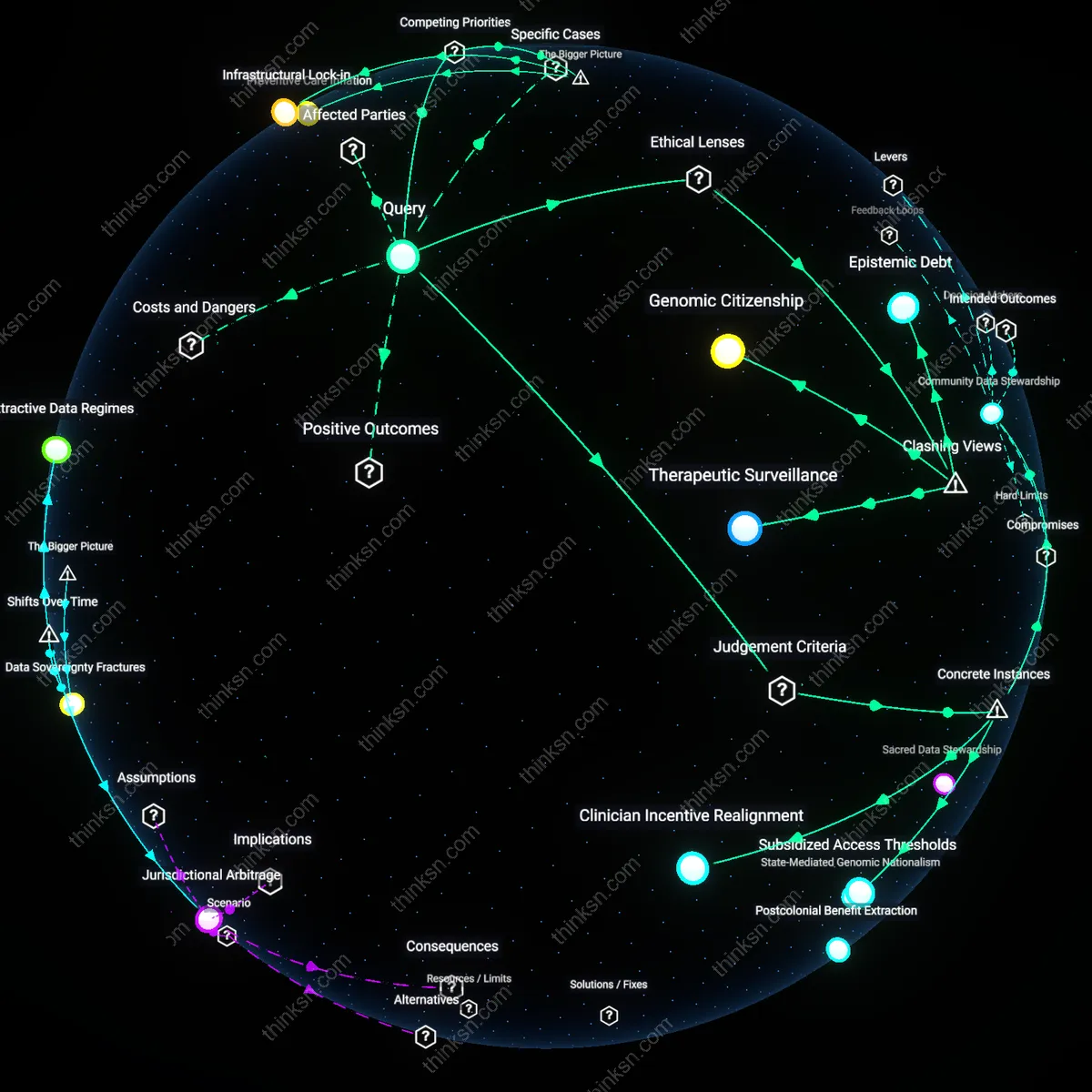

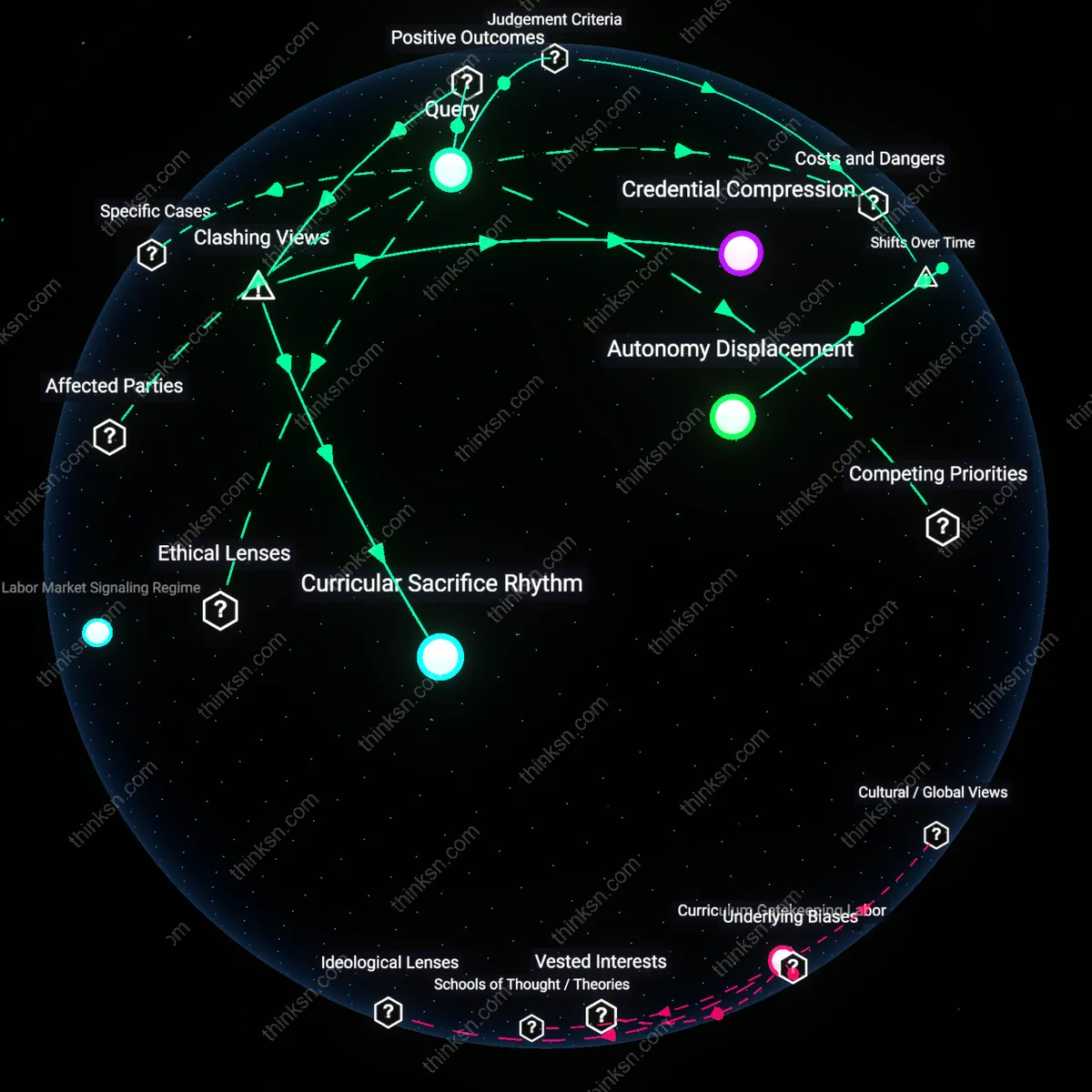

Teacher Agency Erosion

We can assess the balance by measuring how far AI-driven personalization displaces teacher judgment in low-resource schools, where scripted digital curricula replace adaptive professional decision-making. Public school systems under pressure to raise test scores are quick to adopt AI platforms that standardize instruction, particularly in urban districts with high teacher turnover, and this reduces pedagogical flexibility for the very educators serving marginalized students. The shift is significant because it reframes the equity risk not as a mere access gap but as a quiet transfer of instructional authority from trained professionals to proprietary algorithms. What is underappreciated is that customization, the benefit personalized learning offers privileged students, comes at the cost of professional discretion for teachers in underfunded contexts, which intensifies existing power asymmetries rather than flattening them.

Parental Algorithmic Advocacy

We can assess the balance by observing how middle- and upper-class parents leverage AI learning dashboards to negotiate for their children in school meetings, turning personalized data into new forms of academic entitlement. These parents, already skilled in navigating educational bureaucracy, use granular performance analytics from apps like DreamBox or IXL to demand differentiated assignments or teacher time, while working-class parents with less digital literacy or less flexible schedules cannot make use of this feedback channel. This dynamic is critical because it transforms personalization from a pedagogical tool into an engine of symbolic capital, where data fluency becomes a new front in unequal advocacy. The subtlety missed in most discourse is that the same transparency that promises equity also enables intensified micro-advocacy by those already advantaged, deepening influence gaps behind the scenes.

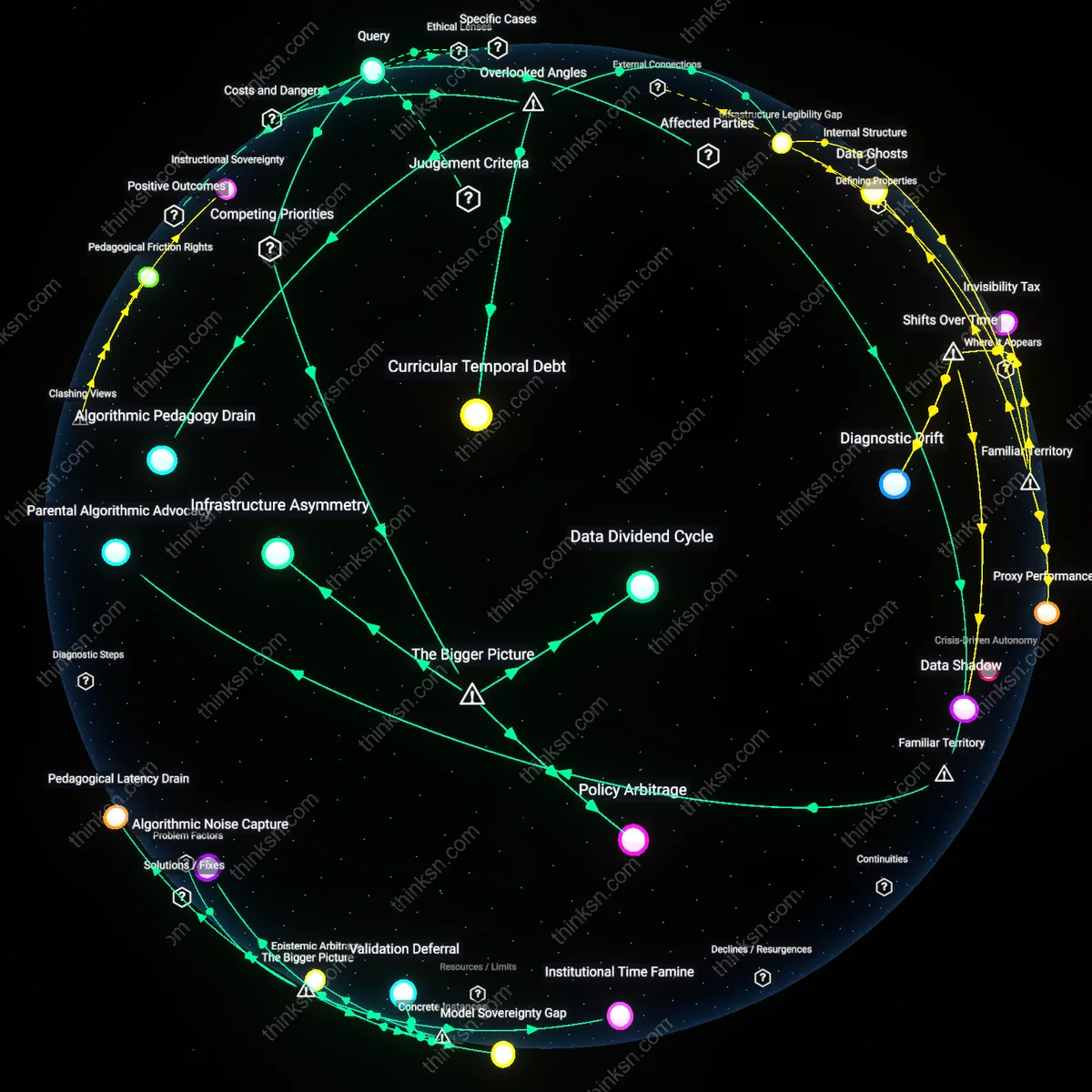

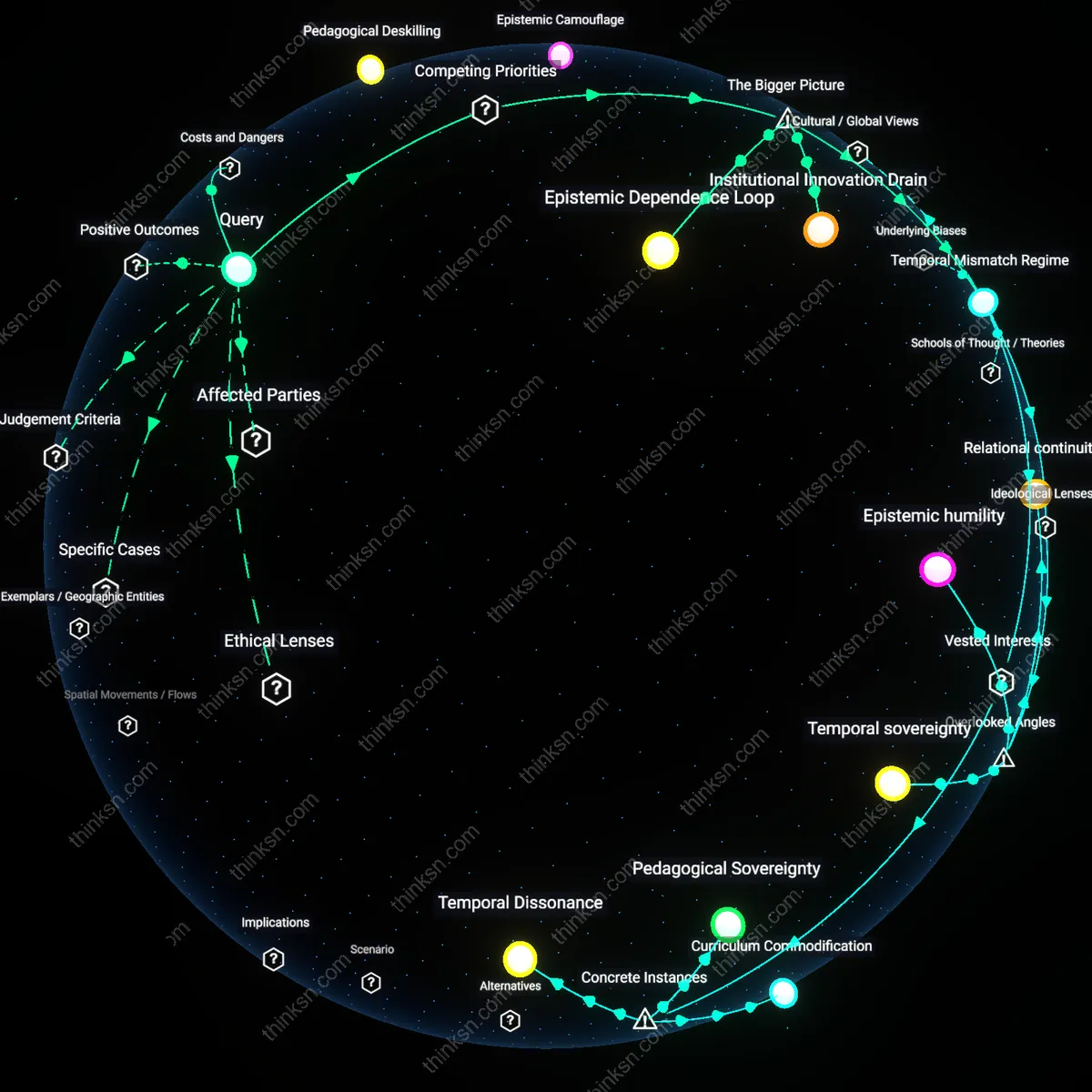

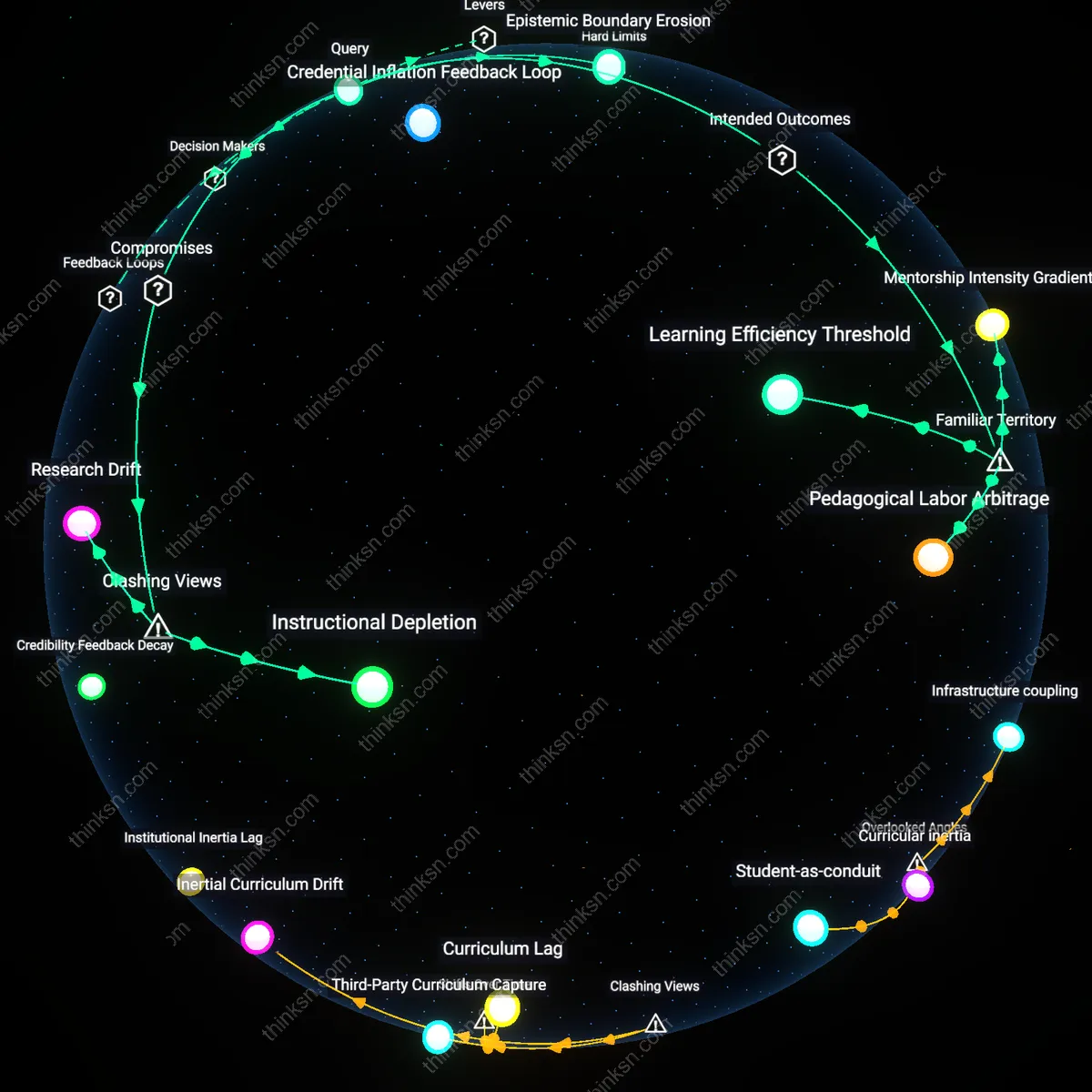

Algorithmic Pedagogy Drain

AI-driven personalized learning diverts instructional design expertise from curricular innovation to algorithm maintenance in under-resourced schools. As districts with constrained professional development budgets allocate teacher time to troubleshooting adaptive platforms rather than collaborative lesson planning, the pedagogical capacity of experienced educators erodes, reinforcing a hidden feedback loop where AI dependence weakens the very teaching quality it purports to enhance. This shift matters because it transforms teachers from knowledge mediators into technical operators, a dynamic rarely measured in equity research that focuses on access or outcomes but not professional capital erosion.

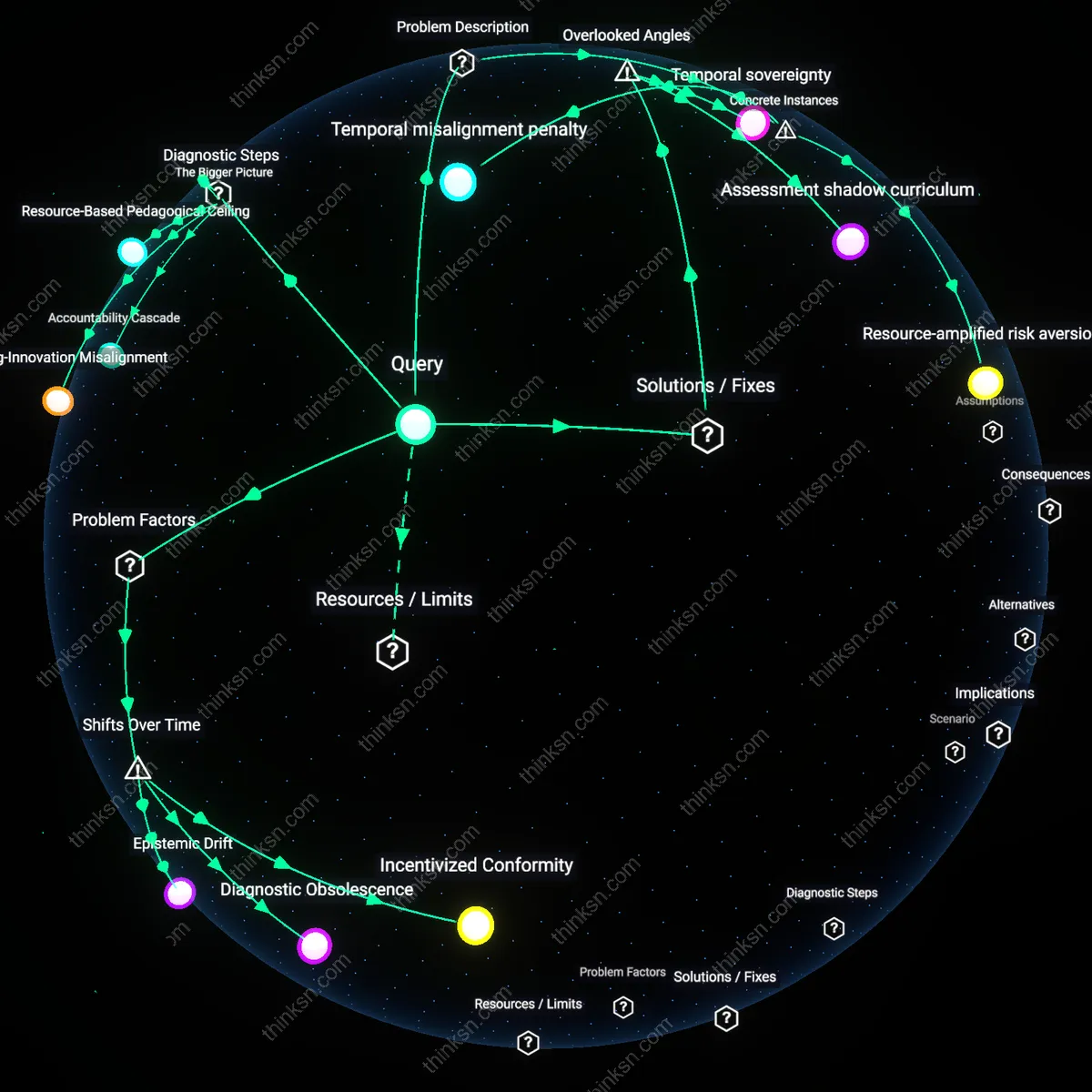

Infrastructure Legibility Gap

Schools in rural or low-income districts are disproportionately penalized when AI systems assume high-speed connectivity and standardized devices: the personalized platforms work only intermittently, so the data they collect is an unreliable record of what students actually did. Because AI learning models interpret gaps in usage as student disengagement rather than infrastructure failure, they generate misleading analytics that lead to misaligned interventions, such as reduced content difficulty, for students who are in fact academically capable. This matters because most equity assessments treat connectivity as a binary access issue, overlooking how patchy data streams actively distort educational judgment and reproduce bias through technical misinterpretation.
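The misreading described above can be illustrated with a minimal sketch. It assumes a hypothetical session log in which the client records how many minutes it was offline; the `SessionLog` fields and both scoring functions are illustrative names, not the API of any real platform. The naive score divides activity by scheduled time, while the adjusted score divides by the time the platform was actually reachable.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SessionLog:
    # All fields are hypothetical; real platforms may log none of these.
    scheduled_minutes: float  # time the student was expected to use the platform (> 0)
    active_minutes: float     # time the platform recorded student activity
    offline_minutes: float    # time the client reported no connectivity


def naive_engagement(log: SessionLog) -> float:
    """Treats every inactive minute as disengagement."""
    return log.active_minutes / log.scheduled_minutes


def adjusted_engagement(log: SessionLog) -> Optional[float]:
    """Counts only minutes when the platform was reachable."""
    reachable = log.scheduled_minutes - log.offline_minutes
    if reachable <= 0:
        return None  # no usable evidence about engagement in this session
    return min(1.0, log.active_minutes / reachable)


# A 40-minute session in which the connection was down for 20 minutes.
log = SessionLog(scheduled_minutes=40, active_minutes=18, offline_minutes=20)
naive = naive_engagement(log)        # 18 / 40 = 0.45
adjusted = adjusted_engagement(log)  # 18 / 20 = 0.90
```

Under these assumed numbers, the same student looks disengaged on the naive score and highly engaged on the adjusted one. Returning `None` for a fully offline session is a deliberate choice in this sketch: it marks the session as missing evidence rather than as a score of zero.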

Curricular Temporal Debt

AI-driven curricula in underfunded schools often default to skill-gap remediation rather than exploratory learning, compressing instructional time into narrow, algorithmically prioritized sequences that defer enrichment activities indefinitely. This creates a temporal debt: students in equity-targeted programs accumulate fewer hours of creative or interdisciplinary engagement, which alters long-term academic trajectories even when short-term test scores rise. This dimension is overlooked because impact studies rarely track the opportunity cost of time allocation, yet it fundamentally reshapes what 'personalization' means for future educational mobility.

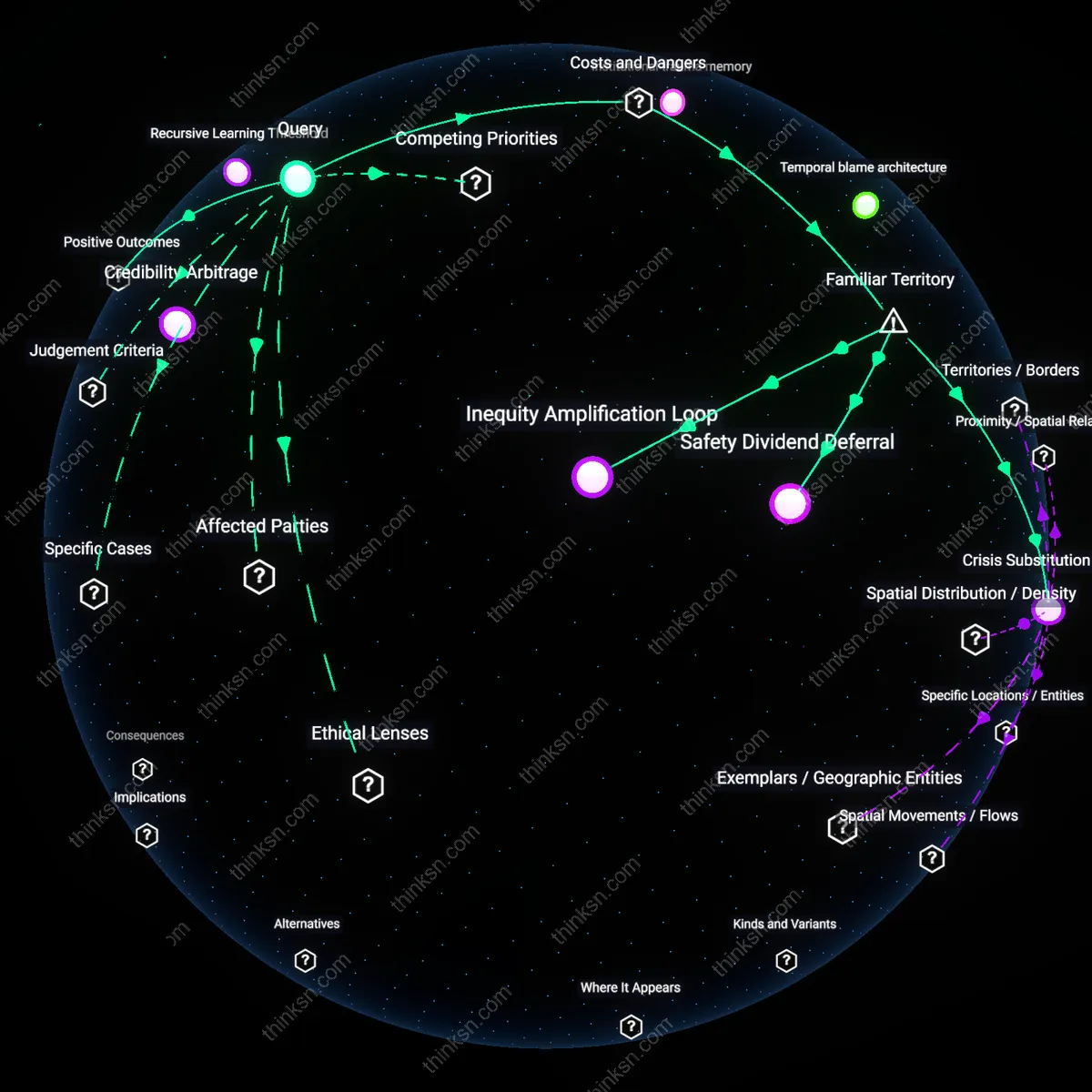

Infrastructure Asymmetry

The balance tilts against equity when AI-driven personalized learning depends on high-bandwidth connectivity and device ownership. Public schools in low-income U.S. districts, particularly in rural Mississippi and urban Detroit, routinely lack consistent access to updated hardware and broadband, and state funding formulas fail to close the capital expenditure gap. This material disparity means the technology meant to enhance learning instead entrenches a two-tier system in which personalization becomes a privilege. The scalability of AI education tools is thus constrained not by algorithmic sophistication but by uneven physical and fiscal infrastructure.

Data Dividend Cycle

Personalized learning systems generate the most accurate adaptations for students in well-resourced schools, because those environments produce the dense, longitudinal educational data that AI models require for refinement. Underfunded schools, whose data ecosystems are fragmented by legacy systems and high student transience, yield sparser inputs, which weakens model efficacy. The result is a recursive advantage: AI improves most where it is least needed, and the feedback loop between data volume and algorithmic performance becomes a covert mechanism of advantage accumulation disguised as technical neutrality.
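One simplified way to see why sparser data weakens adaptation is the standard error of a proportion. The sketch below assumes, purely for illustration, that a system estimates a student's mastery as the fraction of correct responses; real adaptive models are more complex, and the response counts used here are hypothetical. The function name `mastery_standard_error` is likewise an illustrative choice.

```python
import math


def mastery_standard_error(p_correct: float, n_responses: int) -> float:
    """Standard error of a proportion estimated from n independent responses."""
    if n_responses <= 0:
        raise ValueError("n_responses must be positive")
    return math.sqrt(p_correct * (1 - p_correct) / n_responses)


# Hypothetical counts: a student with a continuous record versus one whose
# record is fragmented by school moves or system changes.
dense = mastery_standard_error(0.5, 200)
sparse = mastery_standard_error(0.5, 20)

# Ten times less data gives an estimate about sqrt(10) times noisier.
ratio = sparse / dense
```

Because the error shrinks only with the square root of the number of responses, a student with a tenth of the data gets an estimate roughly three times less precise. In this toy model, that is the mechanism by which data volume turns into adaptation quality.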

Policy Arbitrage

EdTech companies prioritize integration with school districts that have innovation grants or charter flexibility, such as those in California’s Bay Area or Massachusetts’ innovation zones, because these sites offer the legal leeway and technical support needed to deploy complex AI systems. As a result, personalized learning tools are developed around the regulatory and pedagogical norms of elite pilot sites, which makes the final products less transferable to high-need public systems bound by federal compliance and staffing shortages. Market incentives thus align AI evolution with institutional privilege rather than equity mandates.