Is Relying on AI Feedback Sabotaging Student Writers?

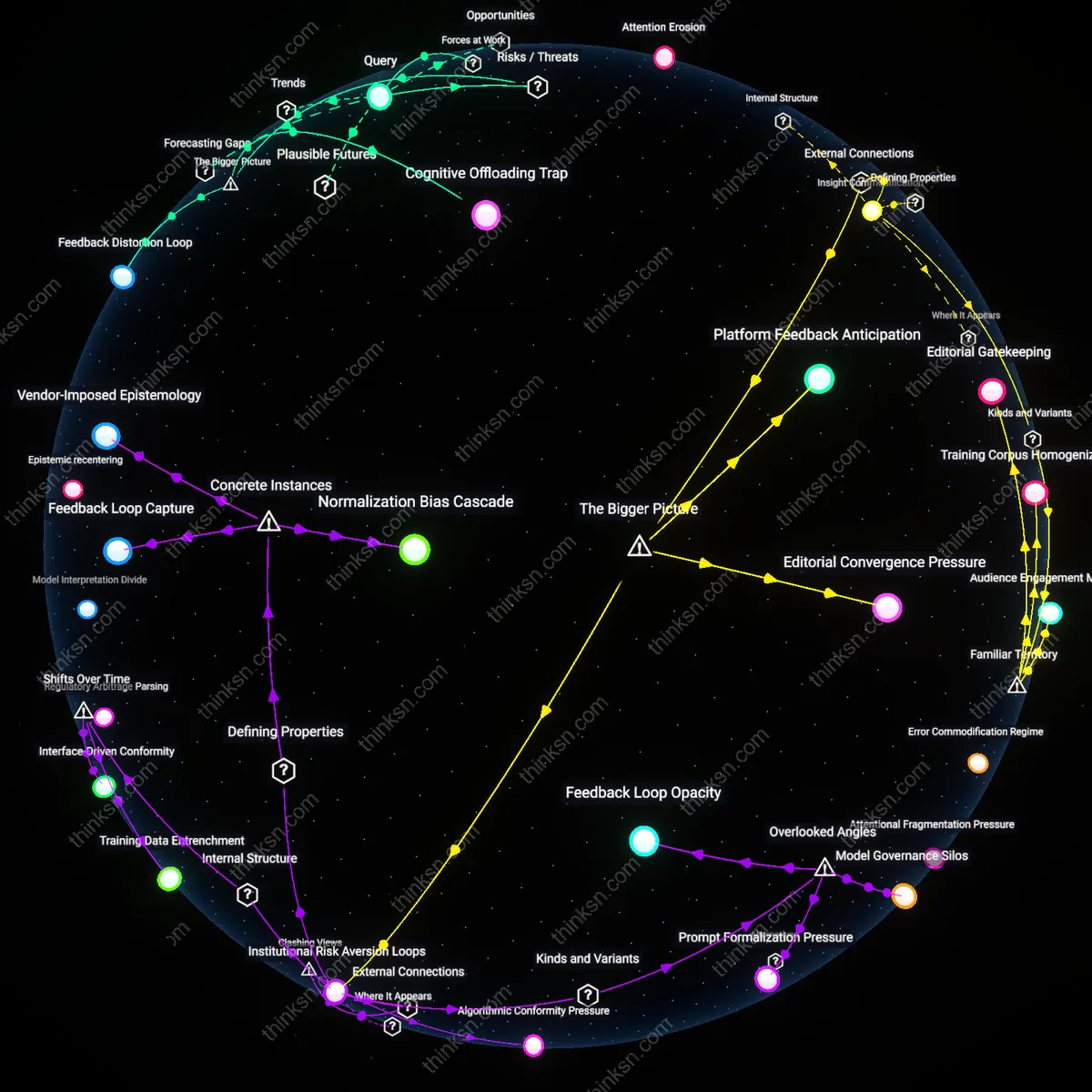

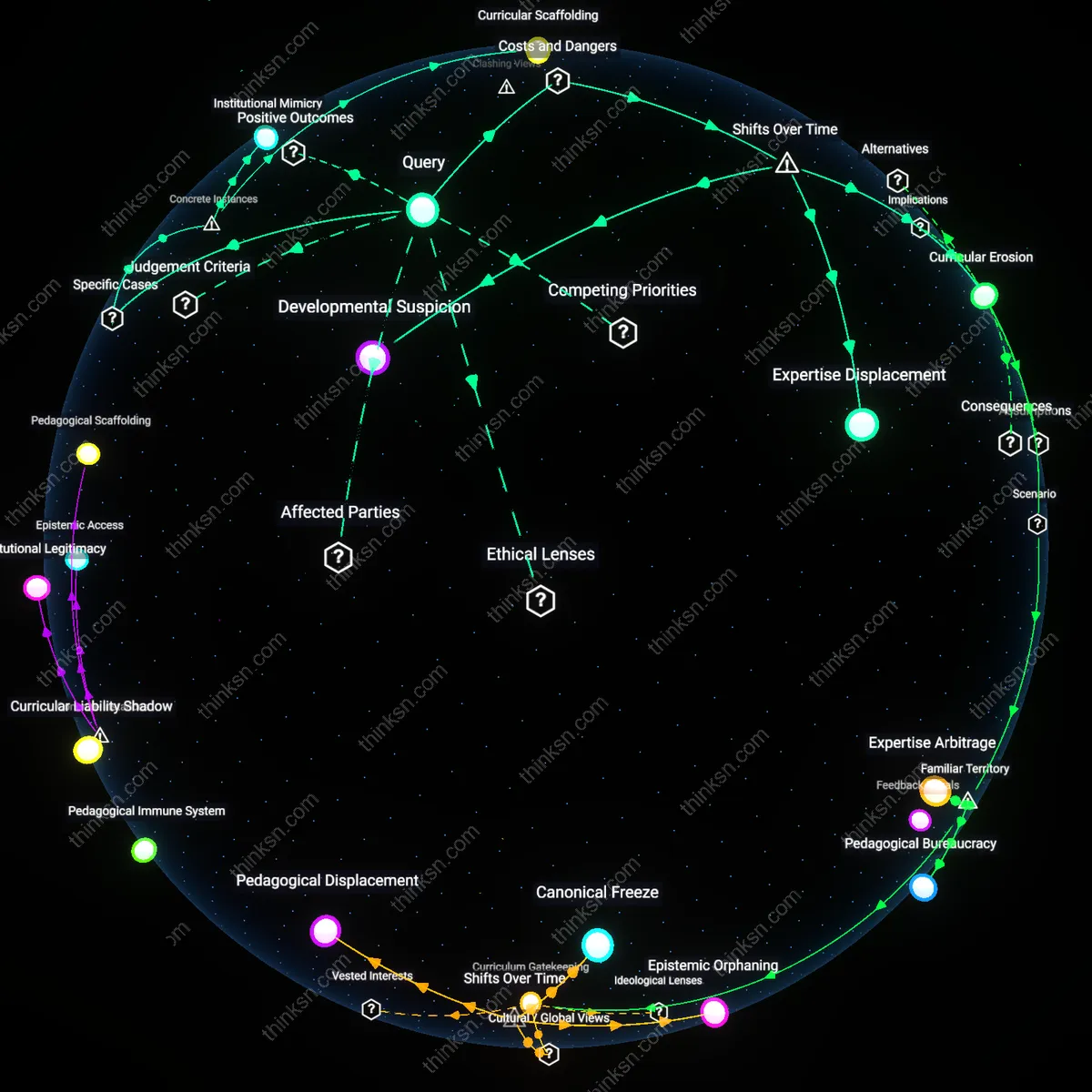

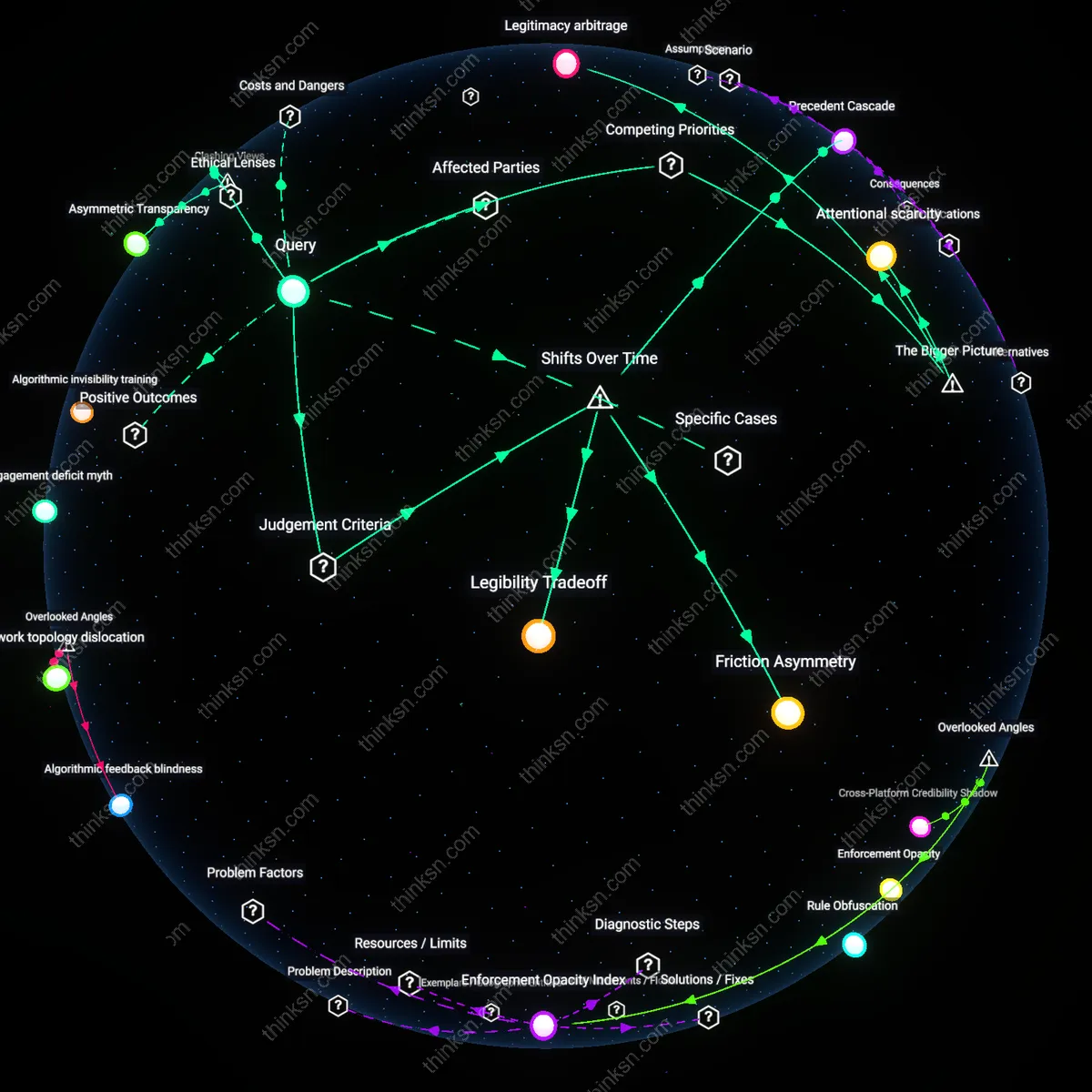

Analysis reveals 11 key thematic connections.

Key Findings

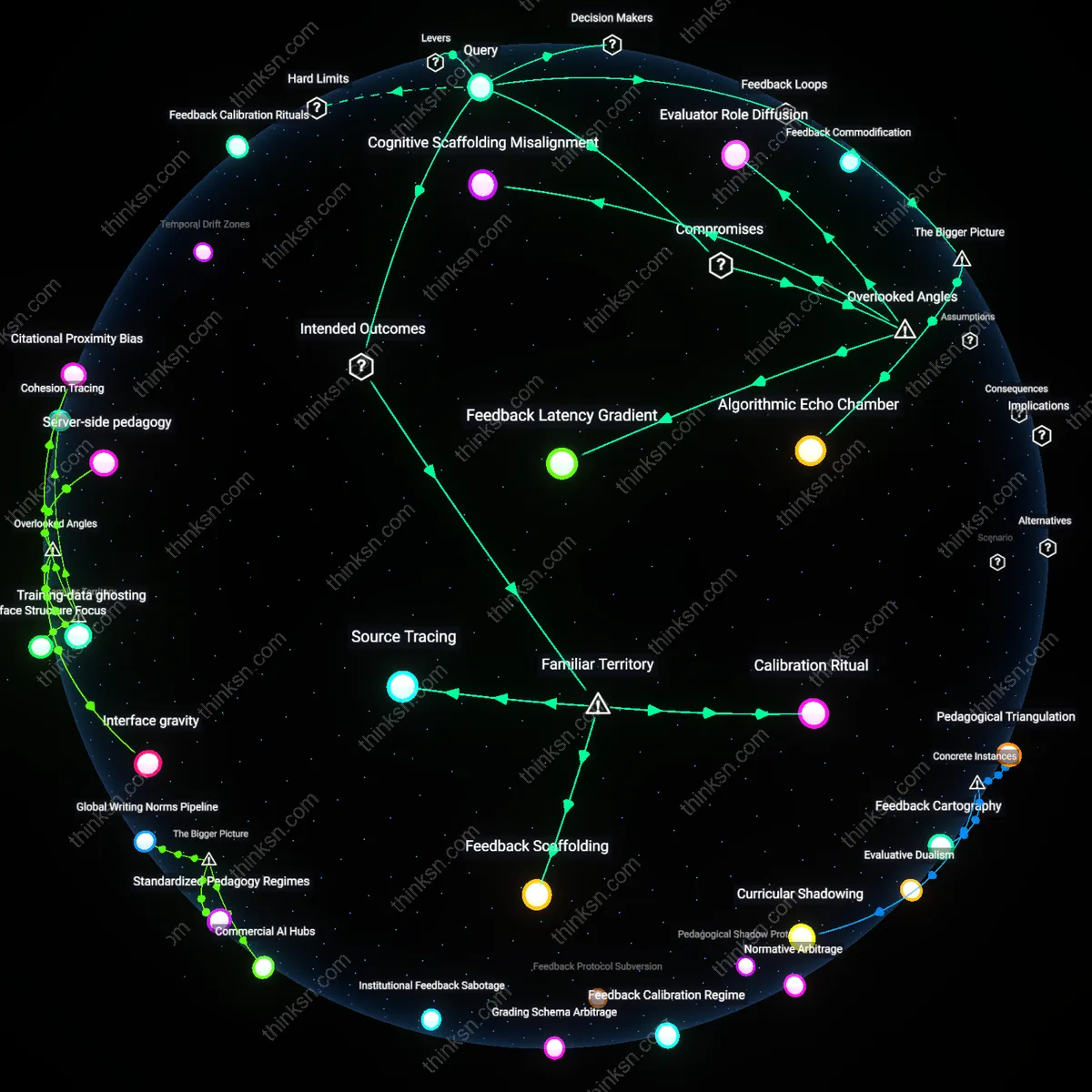

Feedback Calibration Rituals

Students should compare AI feedback against teacher-marked drafts from past assignments to identify patterns of agreement or divergence in commentary. This leverages the familiar institution of the graded paper as a benchmark, using teacher feedback—something students already trust and expect—as a calibration device for AI-generated critique. The mechanism operates through direct side-by-side comparison in routine writing revision cycles, most effectively when teachers release annotated drafts alongside AI tools during writing workshops. What’s underappreciated is that students can use this not just to verify AI accuracy, but to build a personal rubric of trusted feedback signals, turning repeated exposure into a structured diagnostic habit rather than passive reliance.

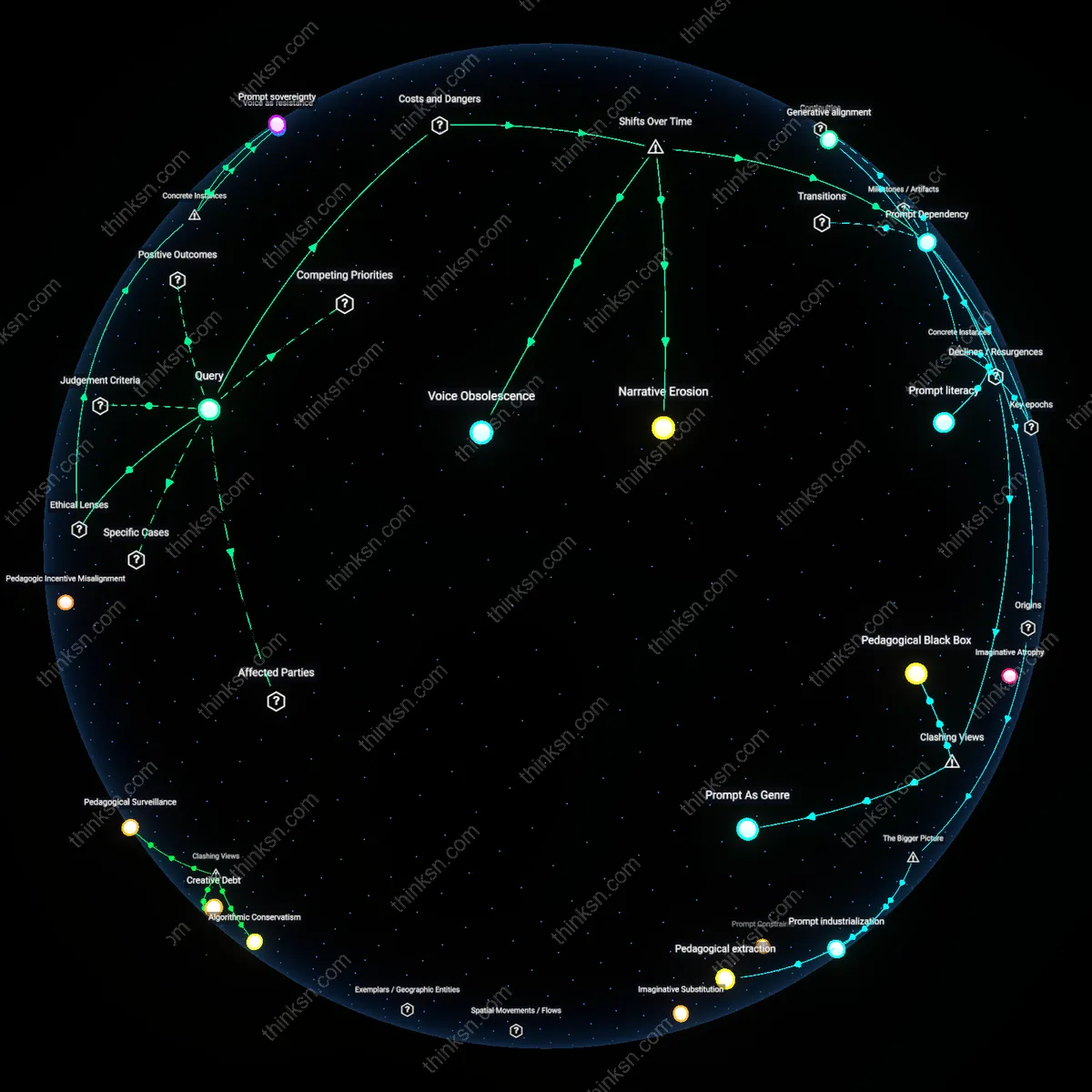

Algorithmic Citation Standards

Require AI tools to cite specific sentence-level justifications from the student’s own essay when making evaluative claims, using highlighting or direct quotation as proof of grounding. This builds on the familiar expectation of textual evidence from teachers, translating the academic norm of ‘show your work’ into an interface demand for AI explainability. The intervention works through increasing transparency in AI logic, forcing systems to justify suggestions with anchor points in the original text, which students can then assess for relevance and accuracy. The non-obvious insight is that citation isn’t just for student writing—it can be a compliance tool that makes AI feedback legible and contestable, transforming opaque suggestions into accountable statements.

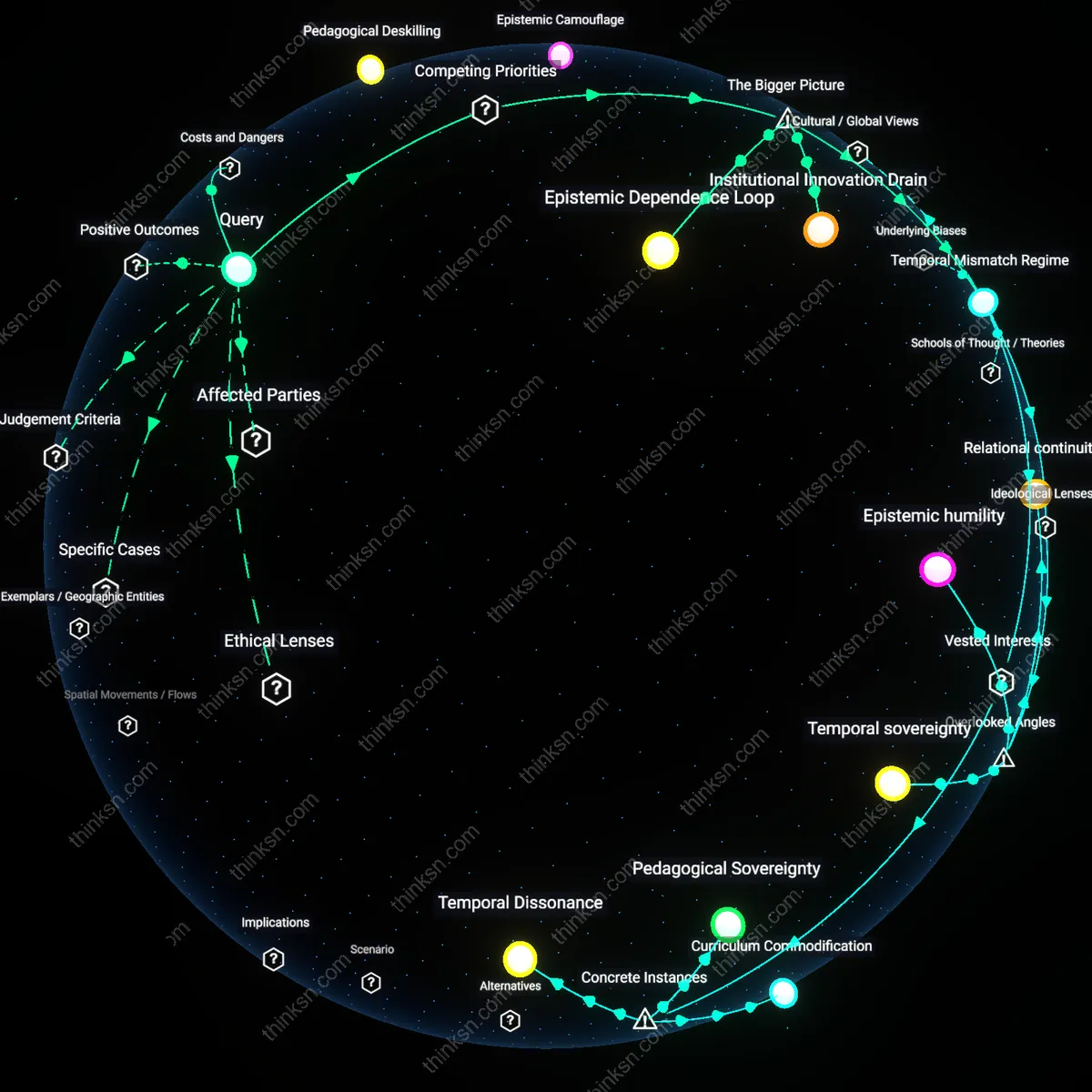

Pedagogical Scaffold Lock-in

High-school students can evaluate AI feedback reliability by using teacher-validated checkpoints to test AI suggestions against curriculum-aligned writing criteria, thereby activating a balancing loop that stabilizes skill development. When students compare AI-generated edits with teacher feedback on shared rubrics—such as argument cohesion or evidence integration—they expose discrepancies in AI outputs, creating corrective signals that prevent overreliance. This mechanism operates through the classroom’s established feedback ecosystem, where teachers serve as epistemic authorities who calibrate AI input against developmental learning progressions. What is underappreciated is that without these institutional anchors, AI feedback can trigger a reinforcing loop of technical compliance over critical thinking—students optimize for AI approval rather than intellectual authenticity, eroding independent judgment.

Algorithmic Echo Chamber

Students improve evaluation of AI feedback by documenting and reflecting on repeated AI recommendations across multiple drafts, which reveals patterned biases that stem from the model’s training data. By keeping a revision log that tracks when AI consistently suppresses stylistic risk-taking or favors formulaic structures—such as always suggesting passive voice reduction or three-point thesis templates—students detect systemic skews embedded in the tool’s design. This process functions through a feedback loop mediated by student metacognition, where iterative comparison across writing tasks surfaces latent homogenizing pressures in commercially deployed models. The broader system at play involves ed-tech platforms optimizing for engagement and perceived utility, which rewards AI that produces confident, prescriptive feedback—even when inaccurate—thus amplifying conformity over voice development.
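The revision log described above can be kept in a very simple form. The following is a minimal sketch, not a prescribed tool: the log structure, the suggestion labels, the function name `recurring_suggestions`, and the 60% threshold are all illustrative assumptions, and the student would assign the labels by hand.

```python
from collections import Counter

# Hypothetical revision log: one entry per draft, listing the kinds of
# suggestion the AI made. The labels are the student's own shorthand.
revision_log = [
    {"draft": 1, "suggestions": ["reduce passive voice", "three-point thesis", "add transition"]},
    {"draft": 2, "suggestions": ["reduce passive voice", "three-point thesis"]},
    {"draft": 3, "suggestions": ["reduce passive voice", "shorten sentences"]},
]

def recurring_suggestions(log, min_share=0.6):
    """Return suggestions that appear in at least `min_share` of drafts."""
    counts = Counter()
    for entry in log:
        # Count each suggestion once per draft, even if the AI repeated it.
        counts.update(set(entry["suggestions"]))
    total = len(log)
    return {s: n / total for s, n in counts.items() if n / total >= min_share}

recurring = recurring_suggestions(revision_log)
for suggestion, share in sorted(recurring.items()):
    print(f"{suggestion}: appears in {share:.0%} of drafts")
```

A suggestion that recurs regardless of what the draft says is a candidate for a patterned bias rather than a response to the writing, which is the signal the log is meant to surface.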

Rubric Standardization Regime

School districts must mandate standardized AI feedback rubrics aligned with state writing assessments to create consistent benchmarks. Centralized curriculum boards—like those in Florida or California—now dictate not only scoring criteria but also which AI tools are permitted in classrooms, shifting authority from teachers’ subjective judgment to algorithmic systems trained on decades of past essays. This formal integration marks a departure from the early 2010s, when automated feedback was experimental and locally deployed, revealing how policy-driven scalability has prioritized grading efficiency over pedagogical variation, thereby institutionalizing a hidden normativity in what counts as 'good writing.'

Feedback Latency Gradient

A high-school student can evaluate AI feedback reliability by measuring how feedback specificity changes when they resubmit incrementally revised drafts to the same AI system over time. AI models often generate more granular suggestions as syntactic and surface errors are removed, revealing a hidden gradient in feedback depth. This mechanism operates through the model’s prioritization of high-impact errors (e.g., missing thesis statements) over nuanced ones (e.g., rhetorical cohesion), so the nuanced feedback surfaces only after coarse issues are resolved, a dynamic invisible in one-off interactions. Most students and educators overlook this latency-dependent refinement and assume AI feedback is static per query, but the evolving nature of AI critique actually mirrors human pedagogical sequencing, exposing a non-obvious developmental pathway embedded in repeated use.
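One way to make this measurement concrete is to label each AI comment on each draft as either surface-level or argument-level and watch how the mix changes. The sketch below assumes the student does the labelling by hand; the two labels, the sample data, and the names `depth_share` and `gradient_present` are hypothetical.

```python
def depth_share(comments):
    """Fraction of a draft's comments the student labelled as argument-level."""
    if not comments:
        return 0.0
    return sum(1 for c in comments if c == "argument") / len(comments)

# Hypothetical labels for the AI's comments on three successive drafts.
drafts = [
    ["surface", "surface", "surface", "argument"],
    ["surface", "argument", "argument", "surface"],
    ["argument", "argument", "surface", "argument"],
]

shares = [depth_share(d) for d in drafts]

# The gradient is present if the argument-level share rises with every draft.
gradient_present = all(a < b for a, b in zip(shares, shares[1:]))
print(shares, gradient_present)
```

If the share stays flat across revisions, the feedback is probably not deepening as surface errors disappear, which is itself useful information about the tool.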

Cognitive Scaffolding Misalignment

Students should assess AI feedback by comparing the cognitive level at which suggestions are made (e.g., sentence-level corrections vs. argument architecture) against their own current writing stage—because AI systems frequently scaffold at a fixed cognitive tier, typically favoring structural compliance over conceptual risk-taking, which risks reinforcing algorithmic safety over intellectual originality. This misalignment manifests when strong student ideas are ‘corrected’ into conventional frameworks not because they are wrong, but because they deviate from training data patterns, a dynamic obscured by the AI’s authoritative tone. The overlooked reality is that AI feedback often optimizes for gradable conformity, subtly compromising the development of voice and independent reasoning even when factual accuracy is high.

Evaluator Role Diffusion

Students can detect unreliable AI feedback by tracking how often the AI shifts between roles (for example, peer reader, grammar checker, or academic gatekeeper) within a single response. These undifferentiated stances produce contradictory advice, such as encouraging creativity while penalizing non-standard syntax, that mimics human grading inconsistency but lacks accountability. This role diffusion arises from the AI’s training on heterogeneous source data where feedback styles are conflated without meta-awareness, creating a hidden source of confusion that students typically interpret as personal failure rather than systemic ambiguity. The overlooked consequence is that AI does not just assess writing: it implicitly teaches students to accommodate unpredictable evaluative norms, distorting their self-evaluation habits in ways human teachers usually mitigate through role clarity.

Calibration Ritual

Compare AI feedback against teacher-marked essays on the same drafts to anchor reliability judgments in institutional grading norms. The student systematically collects past or parallel assignments that received both AI and instructor feedback, then identifies patterns where the AI aligns with or diverges from human grading criteria, especially in areas like thesis clarity, evidence integration, and rubric adherence. The mechanism operates through empirical benchmarking against a known authority (teachers within the school’s curriculum system), which matters because it transforms vague trust in AI into a testable, localized standard. The non-obvious insight is that most students treat AI as either fully independent or wholly untrustworthy, missing that its reliability emerges not abstractly but relationally, through calibration to familiar evaluative institutions.
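The benchmarking step can be reduced to a per-criterion agreement rate. This is a rough sketch under stated assumptions: each record notes whether the teacher and the AI flagged a problem on a given criterion for a given draft, and the record layout, sample values, and the function name `agreement_by_criterion` are invented for illustration.

```python
from collections import defaultdict

# Hypothetical records: (draft, criterion, teacher flagged?, AI flagged?).
records = [
    ("essay1", "thesis clarity", True, True),
    ("essay1", "evidence integration", True, False),
    ("essay2", "thesis clarity", False, False),
    ("essay2", "evidence integration", True, False),
    ("essay2", "rubric adherence", False, True),
]

def agreement_by_criterion(records):
    """Share of drafts on which AI and teacher agreed, per rubric criterion."""
    tally = defaultdict(lambda: [0, 0])  # criterion -> [agreements, total]
    for _draft, criterion, teacher_flag, ai_flag in records:
        tally[criterion][1] += 1
        if teacher_flag == ai_flag:
            tally[criterion][0] += 1
    return {c: agree / total for c, (agree, total) in tally.items()}

agreement = agreement_by_criterion(records)
for criterion, rate in agreement.items():
    print(f"{criterion}: {rate:.0%} agreement with teacher")
```

With only a handful of drafts the rates are coarse, so they are better read as prompts for closer reading than as a score for the tool.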

Feedback Scaffolding

Use AI feedback only after drafting by hand or through unassisted free writing to preserve the integrity of independent idea generation. The student begins without digital tools, writing initial drafts on paper or in isolated environments, then applies AI critique only in revision stages, so that the origin of ideas remains internally governed. This works through a temporal partitioning of creation and analysis, mediated by classroom writing process models such as prewriting-drafting-revising cycles. What’s underappreciated is that AI’s threat to autonomy lies not in its commentary but in its premature presence: intervening too early collapses the cognitive space where students encounter their own thinking, turning feedback into a design constraint rather than a reflective resource.

Source Tracing

Interrogate AI feedback by tracing its advice back to visible features in the student’s own text, demanding that each AI suggestion map to a specific sentence, paragraph, or structural decision. The student treats every AI comment as a hypothesis (for example, 'your thesis lacks focus') and verifies whether the critique corresponds to an actual section of their essay, using this traceability to filter out generic or hallucinated feedback. This operates through a verification protocol rooted in textual accountability, similar to how peer reviewers justify edits with line references. The overlooked reality is that people assume AI feedback is analytical when much of it is patterned rephrasing, and that reliability is not a property of the AI itself but of the student’s ability to ground its output in observable evidence from their own work.
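The tracing protocol can be sketched as a check that every comment points at a passage that actually exists in the essay. The example below is illustrative only: it assumes each AI comment has been paired (by the tool or by the student) with the phrase it claims to be about, and the comment format, the sample essay, and the helper names `split_sentences` and `trace_comments` are hypothetical. The sentence splitter is deliberately naive.

```python
import re

def split_sentences(text):
    """Naive splitter on ., ! or ? followed by whitespace; fine for a sketch."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s.strip()]

def trace_comments(essay, comments):
    """Map each comment to the 1-based sentence numbers containing its quote.

    An empty list means the comment could not be traced to the text.
    """
    sentences = split_sentences(essay)
    traced = {}
    for comment in comments:
        anchor = comment.get("quote", "").lower()
        traced[comment["text"]] = [
            i for i, s in enumerate(sentences, start=1)
            if anchor and anchor in s.lower()
        ]
    return traced

essay = (
    "School uniforms limit expression. Some studies report fewer conflicts. "
    "I think the policy should be optional."
)
comments = [
    {"text": "Your thesis lacks focus", "quote": "the policy should be optional"},
    {"text": "Strengthen your evidence", "quote": "some studies report"},
    {"text": "Improve the flow", "quote": ""},
]

traced = trace_comments(essay, comments)
untraceable = [text for text, hits in traced.items() if not hits]
print(traced)
print("Could not be traced:", untraceable)
```

A comment that lands in the untraceable list is not necessarily wrong, but it has not yet earned the student's trust and is worth challenging before acting on it.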