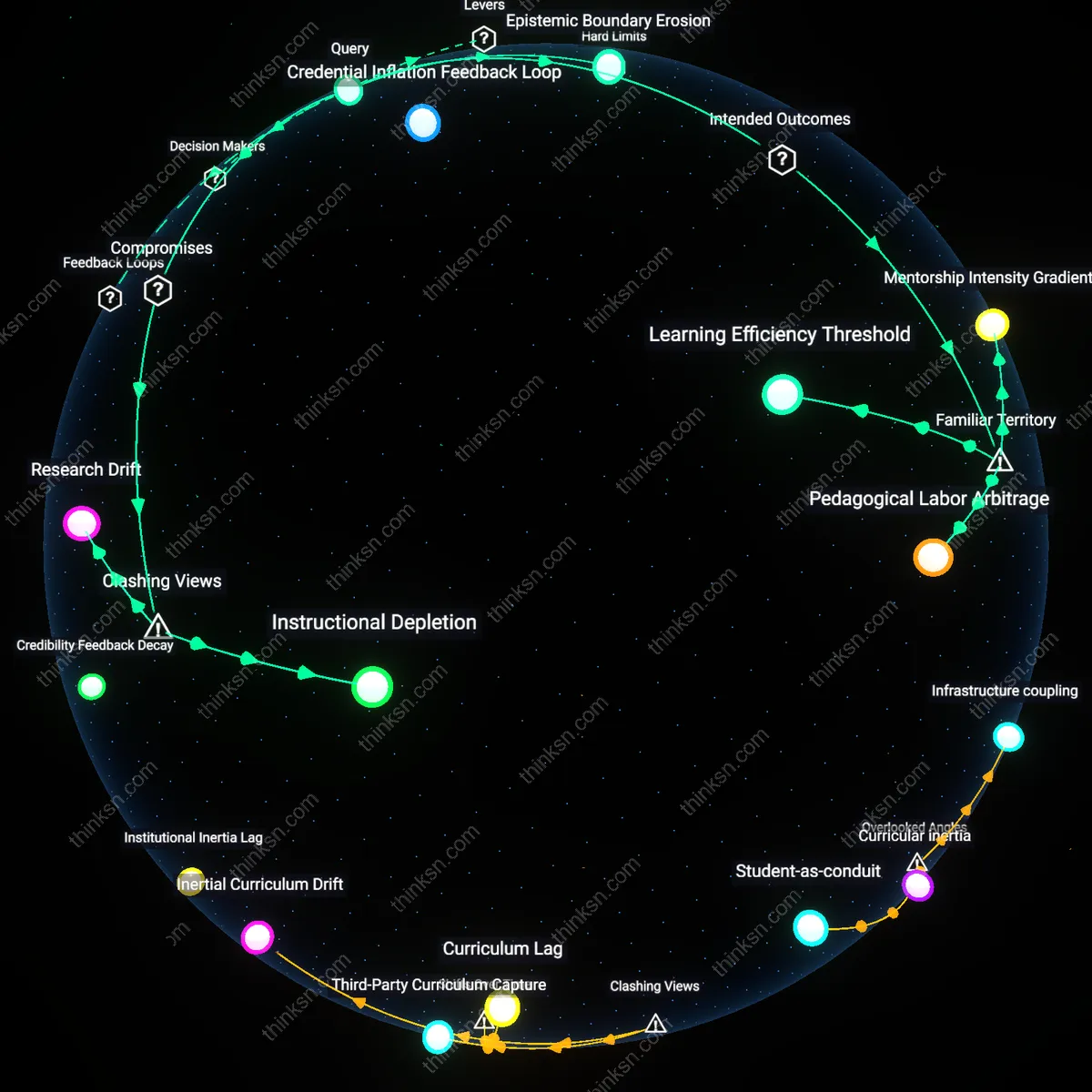

Pedagogical Orphanhood

The displacement of community educators by AI tutors in rural areas creates a new condition of sustained instructional absence, in which students are neither guided by familiar cultural interlocutors nor reliably served by distant algorithmic systems. It marks a rupture from mid-20th-century rural education models that relied on locally recruited, socially integrated teachers. This shift, crystallized during the remote learning mandates of the 2020s, replaced community-housed mentors with data-dependent interfaces, severing the informal accountability and motivational scaffolding those relationships provided. The system operates through policy-driven automation that treats teacher presence as interchangeable with algorithmic availability, ignoring how motivation and persistence in low-resource settings depend on trust-based continuity. The non-obvious consequence is that spotty internet doesn't just limit access; it intensifies abandonment, because no human fallback remains to compensate for technological failure.

Infrastructure Ghosting

Replacing rural educators with AI tutors before the prerequisite digital infrastructure exists produces a form of institutional withdrawal disguised as innovation. It traces back to the 2010s shift in global education aid from building public teaching capacity to funding proprietary EdTech pilots. In this transition, international development actors and national ministries began measuring 'access to education' by digital device distribution rather than learning outcomes, enabling the dismantling of rural teaching posts under the premise that AI could 'scale' instruction. The mechanism operates through public-private partnerships that deploy AI platforms in areas specifically selected for low regulatory resistance, leaving students stranded between defunded schools and nonfunctional technology. The underappreciated reality is that the technological gap is not a temporary flaw but an accepted, even deliberate, outcome of this model: what remains is a hollowed-out educational landscape where responsibility is dispersed and untraceable.

Infrastructural betrayal

Students in rural areas are harmed not because AI tutors fail but because their rollout presumes infrastructural parity, exposing how educational technology policies treat spotty internet as a technical glitch rather than a political artifact. This assumption enables governments and ed-tech firms to offload responsibility for educational continuity onto digital solutions while disinvesting from physical schools and local educators, particularly in Indigenous or marginalized regions like the Navajo Nation or rural Appalachia. The non-obvious truth is that unreliable connectivity isn’t a barrier to innovation—it’s evidence of deliberate underdevelopment, making the failure of AI tutors a symptom of a deeper abandonment masked as progress.

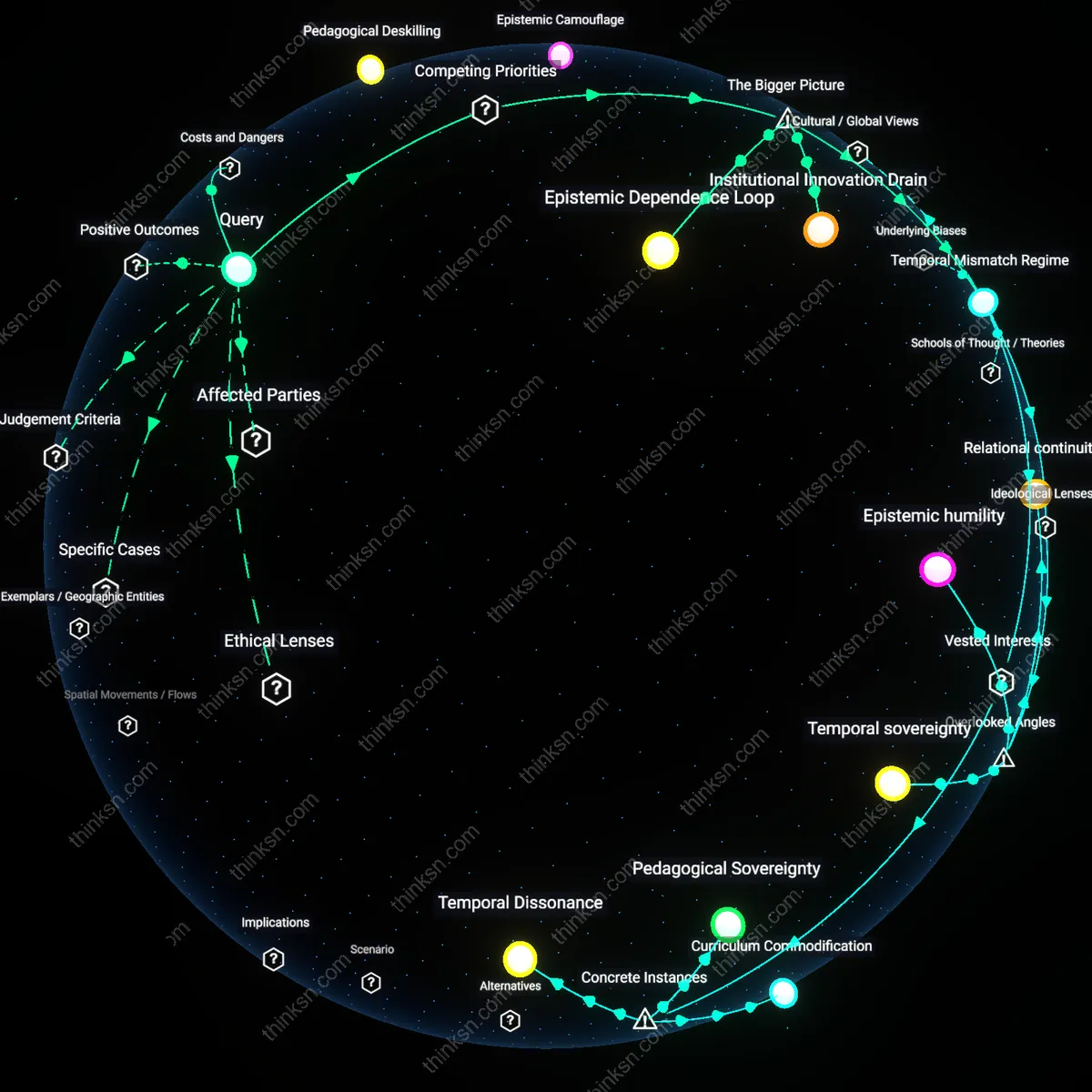

Linguistic colonization

When AI tutors operate in standardized national languages like English or Mandarin, they actively erode the cognitive and pedagogical value of local languages such as Quechua in the Peruvian highlands or Gullah in coastal South Carolina, reframing language loss as a computational limitation rather than an act of epistemic violence. Developers label these languages ‘low-resource’ for NLP training, but this obscures how AI design conscripts education into a homogenizing global data regime that devalues oral traditions and context-rich community teaching. The dissonance lies in recognizing that the ‘language gap’ isn’t a flaw in AI; it’s the system working as intended, replacing plural epistemologies with a single extractive linguistic modality disguised as neutrality.

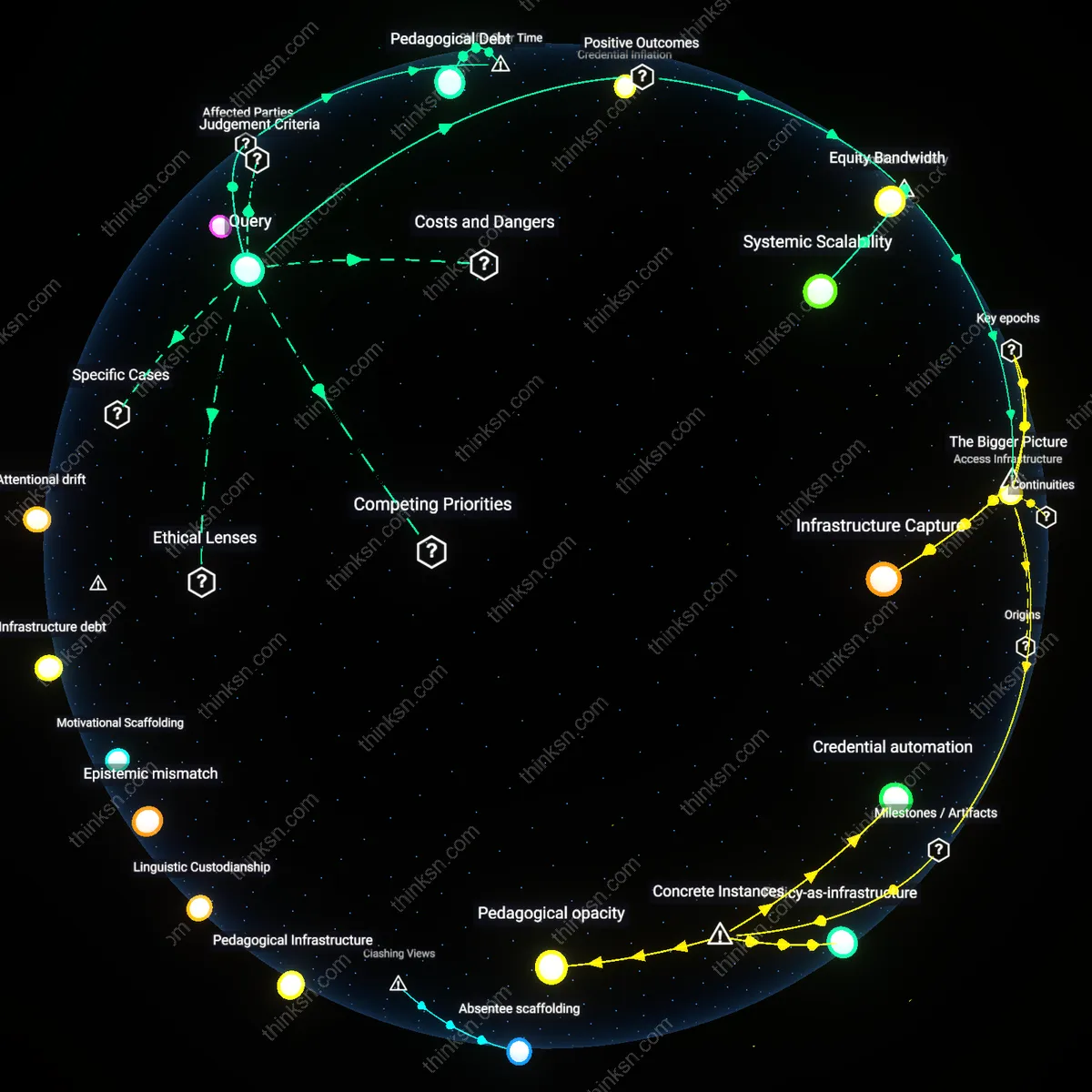

Absentee scaffolding

The collapse of student persistence isn't caused by missing internet or language errors but by the removal of embodied guidance, such as a teacher noticing a student’s fatigue or a village mentor checking in after harvest season, which cannot be replicated by algorithmic nudges programmed in Silicon Valley labs. Platforms like Khan Academy or Socratic by Google assume motivation is intrinsic and measurable through engagement metrics, ignoring how socioemotional traction in rural education is woven through face-to-face accountability and intergenerational obligation. The overlooked reality is that ‘staying on track’ is not a cognitive task to be optimized but a socially maintained practice, revealing AI's fundamental mismatch with communal learning ecologies.

Linguistic imperialism

AI tutors reinforce educational marginalization when deployed in monolingual or dominant-language formats that disregard local vernaculars, because the datasets and training models are drawn overwhelmingly from urban, high-resource language corpora. For instance, a Quechua-speaking student in rural Peru accesses an AI tutor trained on Spanish from Lima or Madrid, which lacks contextual, cultural, and phonemic alignment. The mechanism is algorithmic hegemony: global AI systems encode the epistemic norms of data-dominant regions, rendering peripheral languages as noise rather than valid cognitive pathways. The systemic significance lies in how this reproduces colonial patterns of knowledge control, where language becomes a gatekeeper not by accident but by design in AI training pipelines.

Pedagogical Infrastructure

Deploy local AI tutors on offline mesh networks managed by regional education cooperatives to maintain instructional continuity without relying on centralized internet. This system uses compressed, multilingual models preloaded onto low-cost devices that sync through peer-to-peer radio protocols when connectivity is available, preserving data privacy and reducing latency. What’s overlooked is that AI dependency isn’t just about bandwidth but about the absence of a decentralized pedagogical infrastructure. Schools in rural Malawi and Ladakh have sustained learning during outages using radio-synchronized local servers, which suggests that the real bottleneck is not technology access but the lack of locally governed, education-specific network topologies that can operate independently of commercial internet providers.
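The store-locally, sync-when-possible behavior described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `LearningEvent`, `OfflineOutbox`, and the `send` callback are hypothetical names, not part of any named platform or radio protocol, and the actual transport is left abstract.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class LearningEvent:
    """One unit of student work recorded on the device (hypothetical schema)."""
    student_id: str
    lesson_id: str
    score: float


@dataclass
class OfflineOutbox:
    """Holds learning events on the device until a peer link is available."""
    pending: List[LearningEvent] = field(default_factory=list)

    def record(self, event: LearningEvent) -> None:
        # Always write locally first, so a dropped link never loses work.
        self.pending.append(event)

    def sync(self, link_up: bool, send: Callable[[LearningEvent], bool]) -> int:
        """Try to deliver queued events in order; return how many were delivered."""
        if not link_up:
            return 0
        delivered = 0
        remaining: List[LearningEvent] = []
        for i, event in enumerate(self.pending):
            if send(event):
                delivered += 1
            else:
                # Keep the failed event and everything after it for the next window.
                remaining = self.pending[i:]
                break
        self.pending = remaining
        return delivered


if __name__ == "__main__":
    outbox = OfflineOutbox()
    outbox.record(LearningEvent("s1", "fractions-01", 0.8))
    outbox.record(LearningEvent("s1", "fractions-02", 0.6))
    received: List[LearningEvent] = []
    # `send` here just appends to a list; a real deployment would hand off to the radio link.
    print(outbox.sync(link_up=False, send=lambda e: received.append(e) or True))
    print(outbox.sync(link_up=True, send=lambda e: received.append(e) or True))
```

The design choice worth noting is that the tutor never blocks on the network: lessons are recorded whether or not a link exists, and delivery is retried in the next connectivity window.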

Linguistic Custodianship

Train local teaching assistants as linguistic custodians who co-adapt AI tutor outputs to regional dialects, cultural idioms, and contextually relevant examples, using lightweight fine-tuning toolkits operated on edge devices. Unlike centralized NLP pipelines that flatten linguistic diversity, this process embeds community knowledge into AI interactions, for example by adapting math word problems to reflect subsistence farming practices in rural Guatemala. It highlights the overlooked reality that language accuracy in AI education fails not from a lack of translation but from the absence of living linguistic stewardship, where meaning is continuously negotiated by those who speak the language natively in everyday practice.

Motivational Scaffolding

Integrate AI tutors with community-based motivational scaffolding systems, where weekly in-person mentor check-ins, conducted by trained youth leaders or retired educators, anchor digital progress with human accountability and emotional reinforcement. These meetings review AI-generated learning logs and set social commitments, transforming isolated digital labor into a collectively witnessed journey. The overlooked insight is that attrition in rural AI-assisted learning stems less from technical gaps than from eroded social momentum, and that motivation functions as a shared, ritualized resource rather than an individual trait, as observed in participatory education models in post-conflict northern Uganda.
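As a rough illustration of how AI-generated learning logs might be condensed for a weekly check-in, the Python sketch below summarizes one week of activity per student. The `LogEntry` fields, the `weekly_checkin_notes` function, and the three-active-days threshold are all assumptions made for this example; the text does not specify a log format, and the summary is meant to prompt a mentor's conversation, not to replace it.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class LogEntry:
    """One day of tutor activity for one student (hypothetical log format)."""
    student_id: str
    day: int          # day index within the week, 0-6
    minutes: int      # time spent with the tutor that day
    completed: bool   # whether the assigned lesson was finished


def weekly_checkin_notes(logs: List[LogEntry], min_active_days: int = 3) -> Dict[str, dict]:
    """Summarize one week of logs per student for a mentor to review in person."""
    notes: Dict[str, dict] = {}
    for entry in logs:
        summary = notes.setdefault(
            entry.student_id,
            {"active_days": set(), "minutes": 0, "completed": 0},
        )
        if entry.minutes > 0:
            summary["active_days"].add(entry.day)
        summary["minutes"] += entry.minutes
        summary["completed"] += int(entry.completed)
    for summary in notes.values():
        # Replace the set of days with a simple count for readability.
        summary["active_days"] = len(summary["active_days"])
        # Assumed threshold: fewer active days than this prompts a conversation.
        summary["needs_conversation"] = summary["active_days"] < min_active_days
    return notes


if __name__ == "__main__":
    week = [
        LogEntry("ana", 0, 30, True),
        LogEntry("ana", 1, 20, False),
        LogEntry("ana", 3, 25, True),
        LogEntry("beto", 2, 10, False),
    ]
    for student, summary in weekly_checkin_notes(week).items():
        print(student, summary)
```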

Infrastructure debt

In rural Oaxaca, Mexico, AI-driven education platforms failed to sustain student engagement because intermittent electricity prevented consistent device charging, leaving tablets unusable even when satellite internet briefly worked. This material constraint reveals how technological interventions assume a functioning utility grid. In regions where power arrives only six hours daily, the cost of maintaining digital tools outweighs their benefits: learning continuity depends on hardware being available at all times, yet schools lack backup systems. The non-obvious insight is that digital infrastructure cannot compensate for absent analog foundations; when power, connectivity, and devices are not available at the same time, even well-designed AI tutors sit inert.

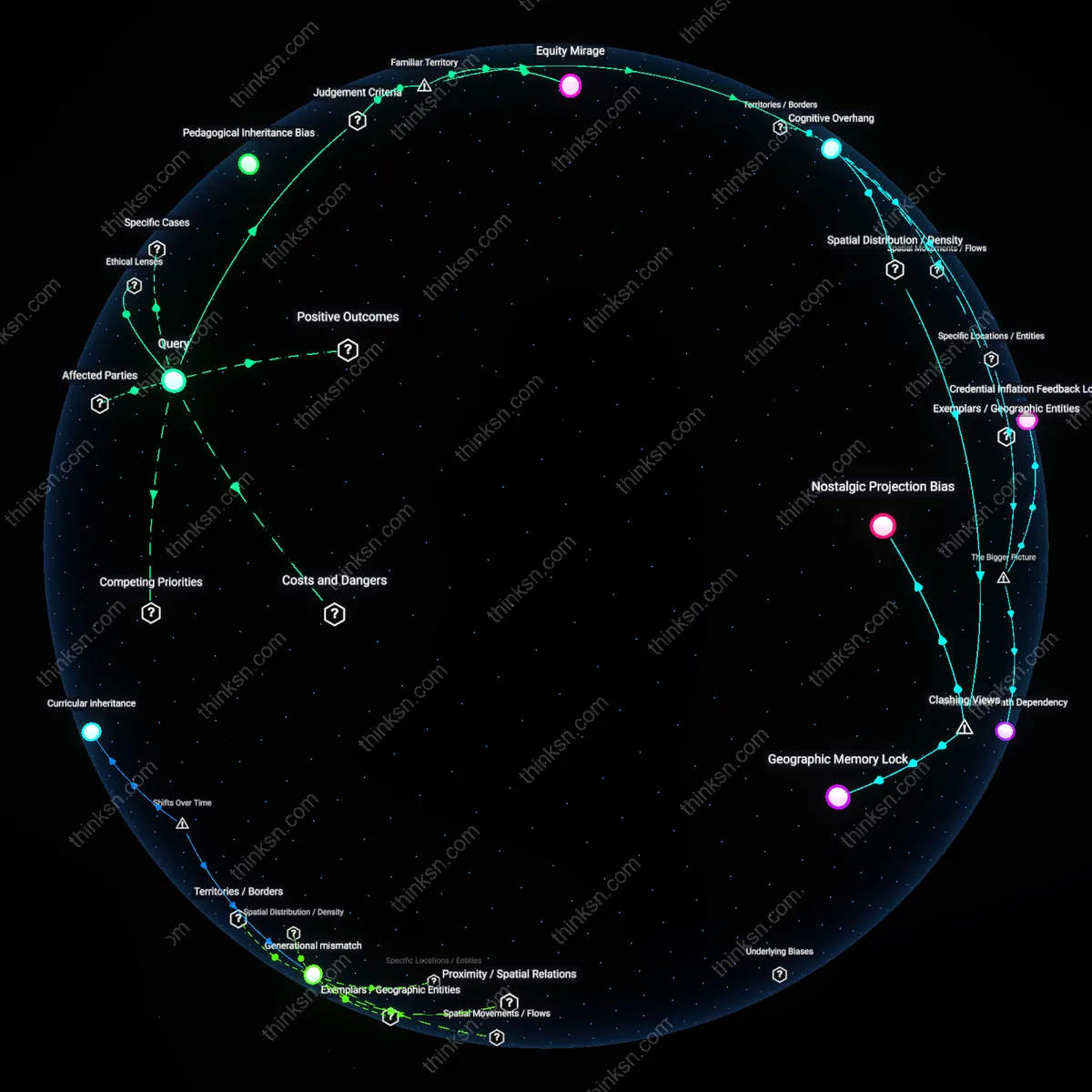

Epistemic mismatch

In marginalized schools across Papua New Guinea, where more than 800 languages are spoken but AI tutoring systems deliver content in standardized English, students disengage within weeks of deployment, as demonstrated by the 2021 UNESCO Port Moresby pilot. This failure arises not from lack of motivation but because the AI cannot parse locally meaningful examples or worldviews, turning math problems into cultural riddles, such as referencing 'apples' in regions where pineapples are the dominant fruit. The underappreciated reality is that language discordance creates cognitive estrangement, where content becomes unrelatable, not just untranslated, disrupting the pedagogical bond between knowledge and lived context.

Attentional drift

When schools in the Arizona portion of the Navajo Nation trialed AI-based homework assistants in 2020, absenteeism rose despite initial enthusiasm. Without in-person mentors to redirect students when the internet dropped mid-session, learners defaulted to passive scrolling or switched tasks entirely, a pattern observed in real time via school usage logs at Tuba City High. The issue stems from the absence of human cues that regulate focus, such as a teacher’s glance or a peer’s question, which digital systems cannot replicate when connectivity fails unpredictably. The overlooked mechanism is that sustained learning requires social scaffolding, not just content delivery, and without it, attention dissipates faster than tutors can recover it.