Mental Health Values vs TikTok Risks for Youth

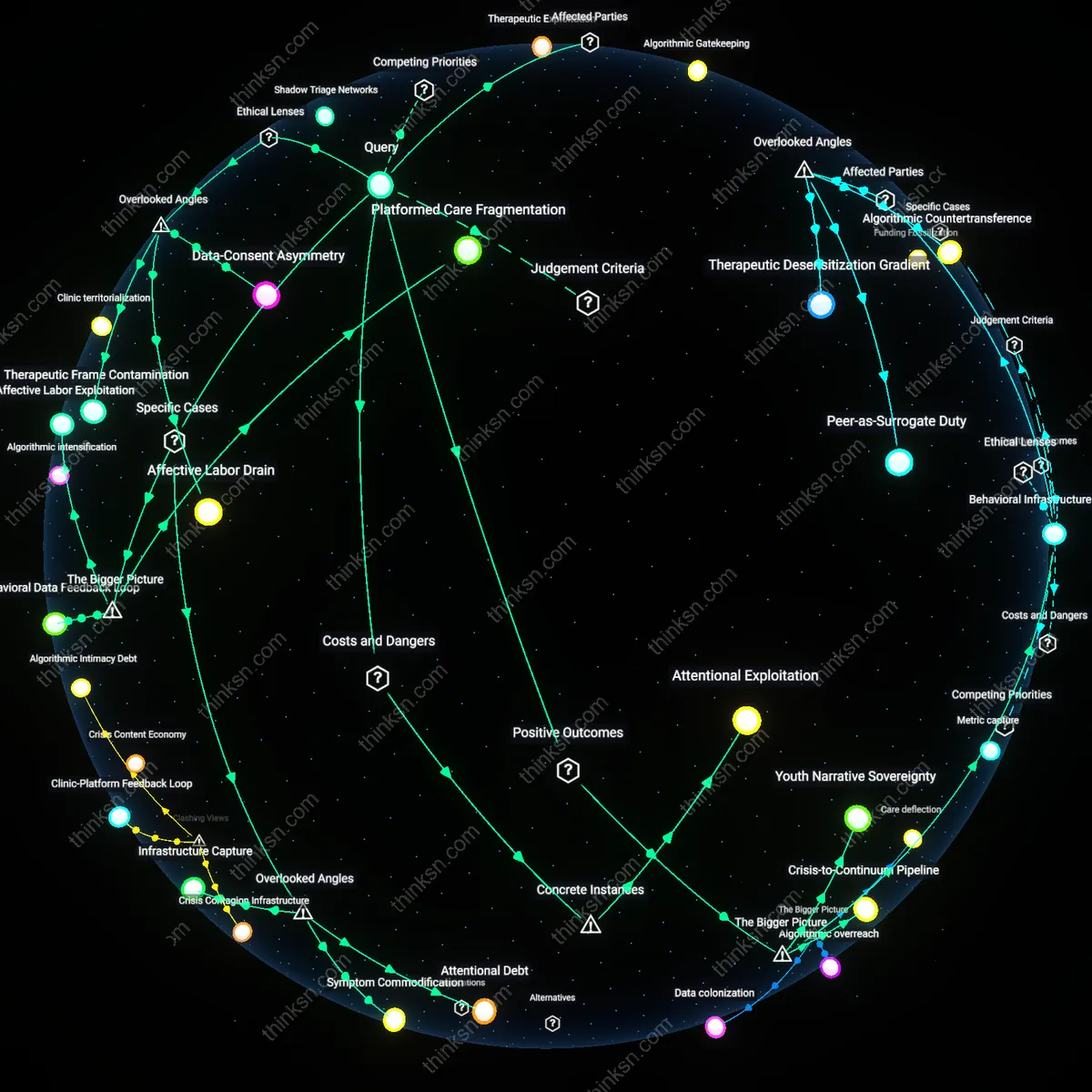

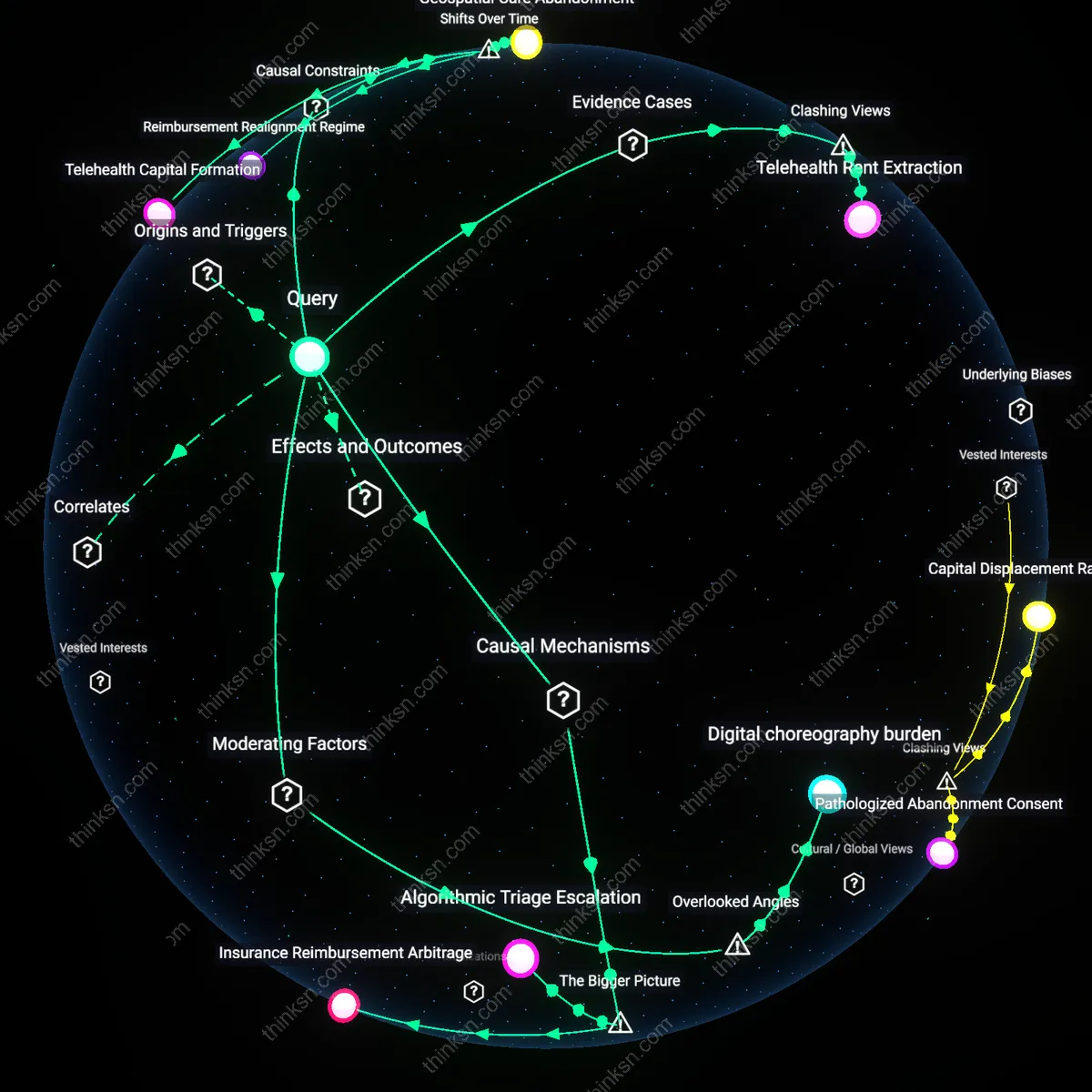

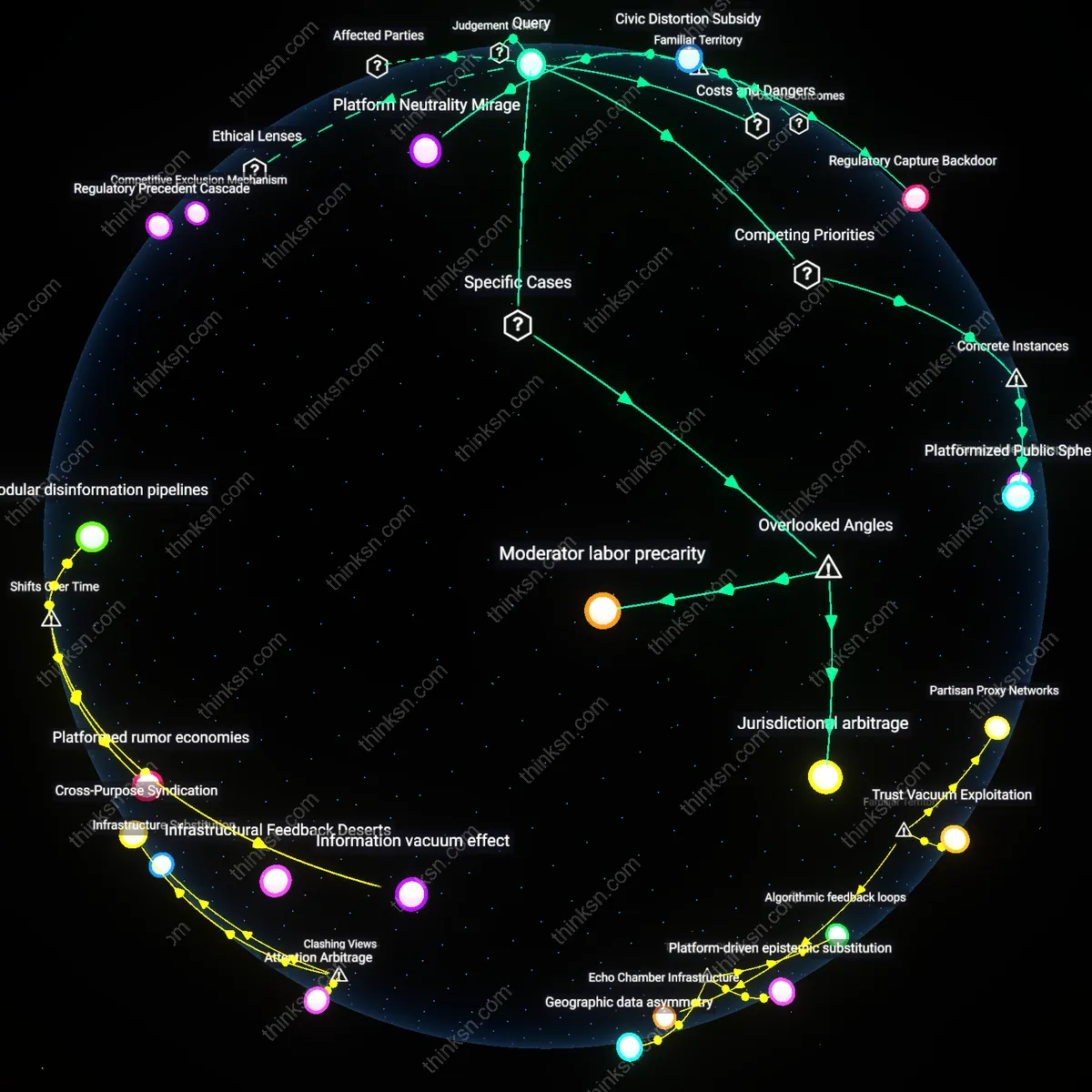

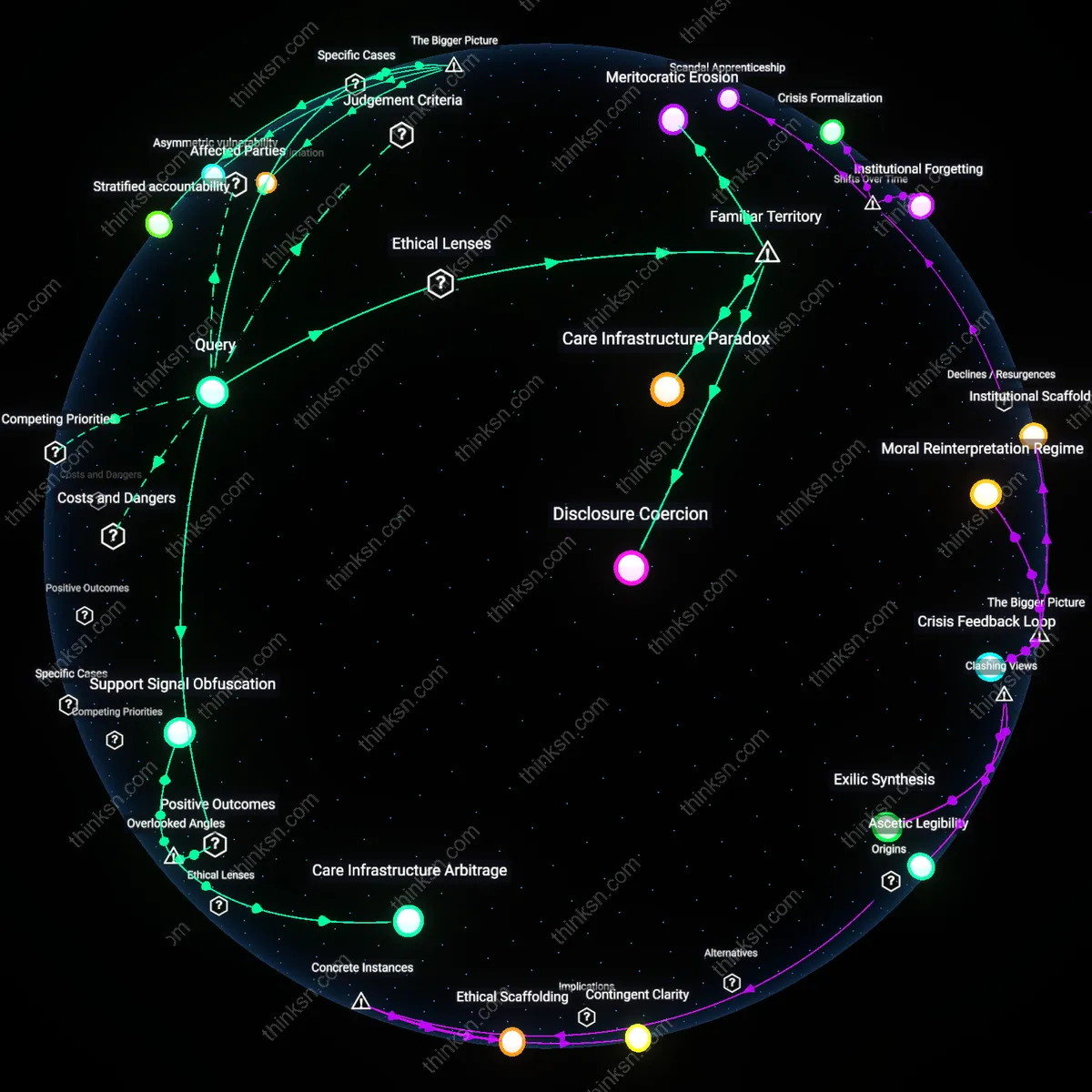

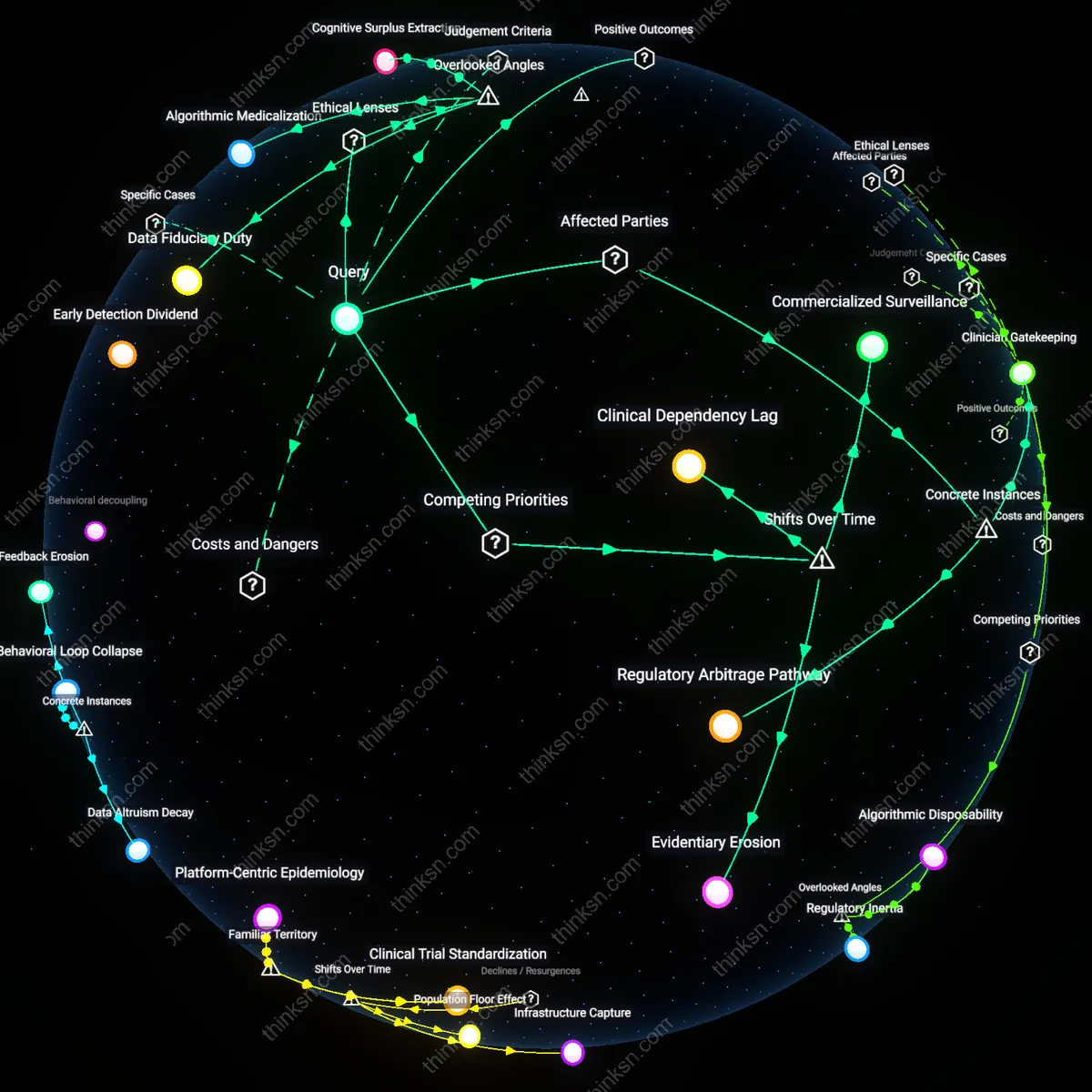

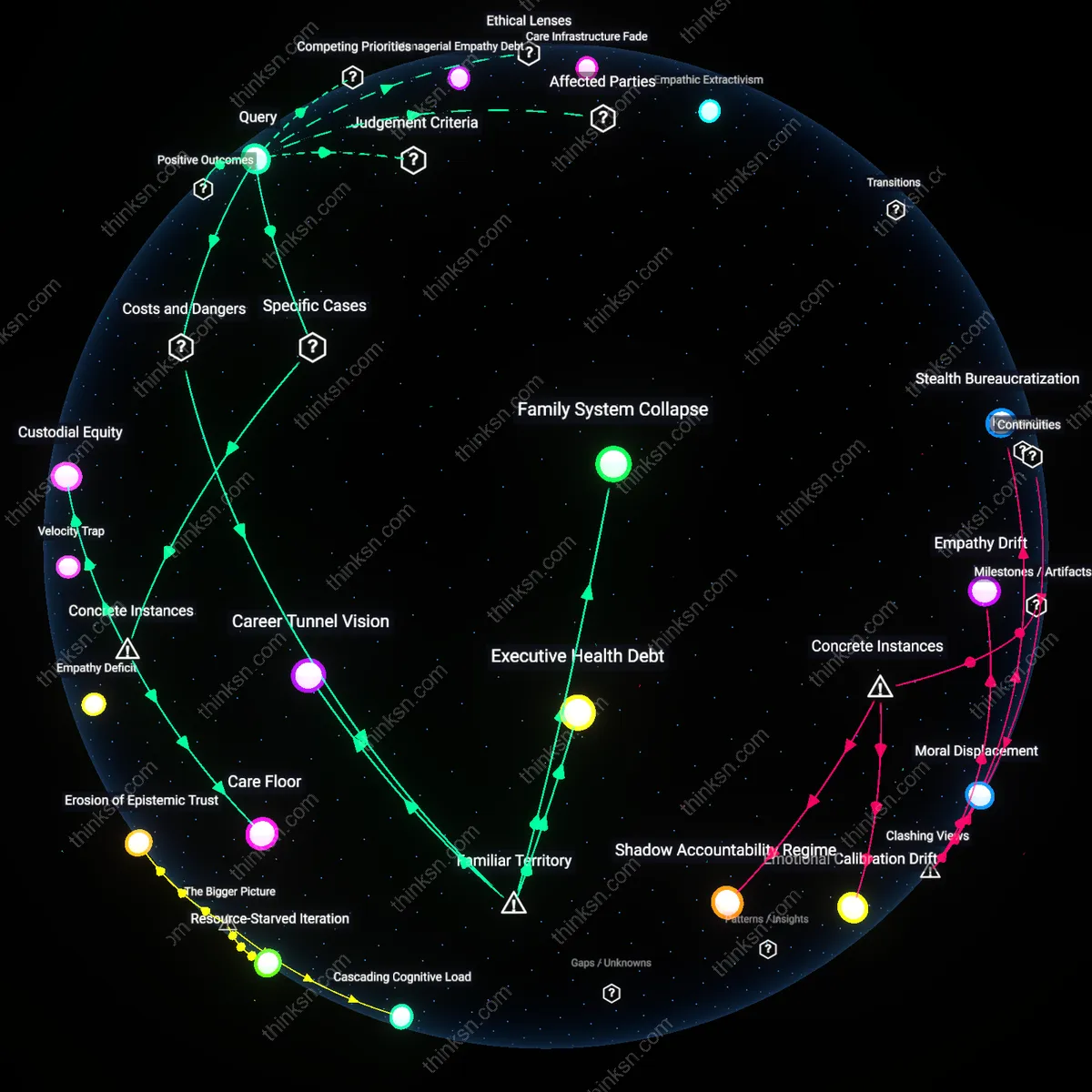

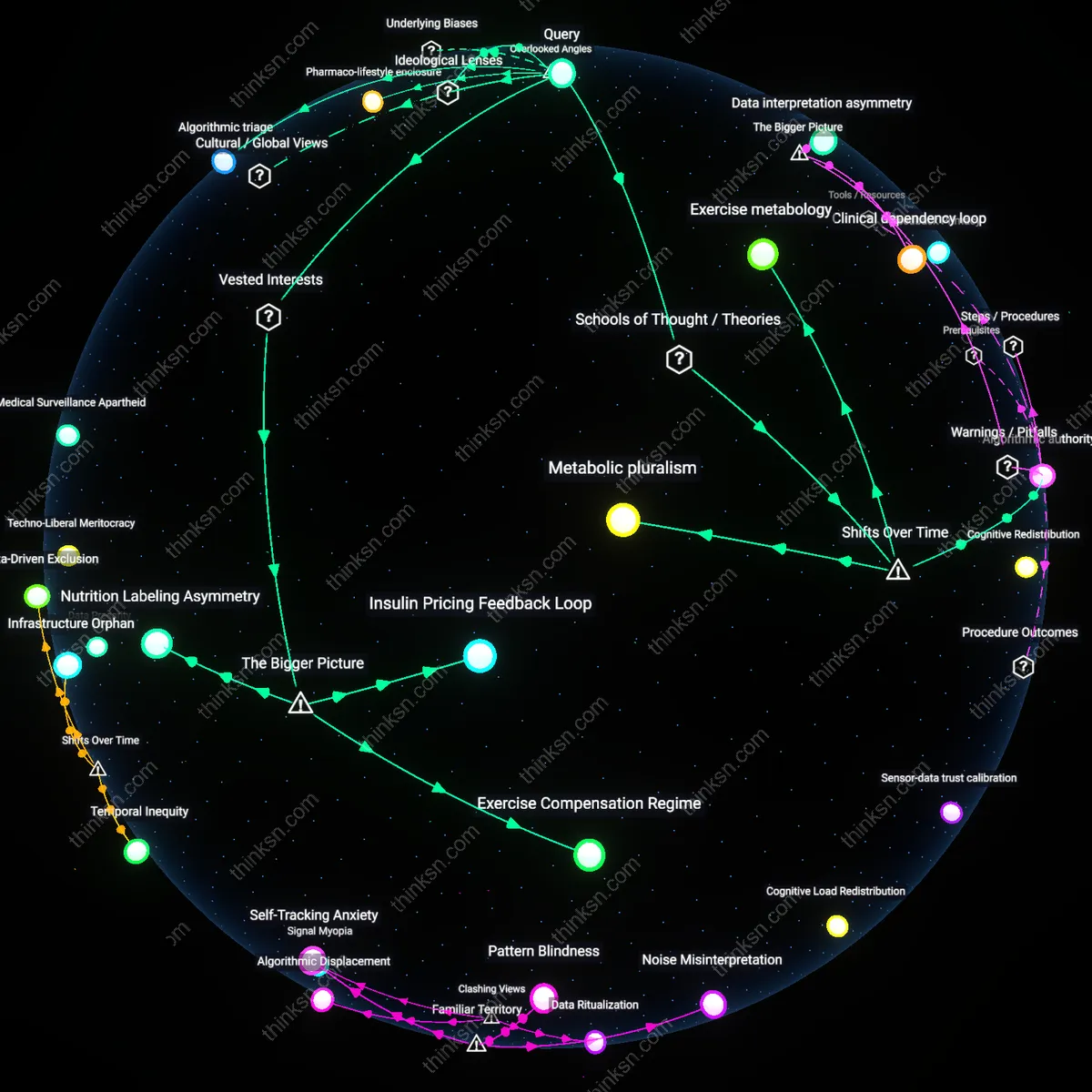

Analysis reveals 16 key thematic connections.

Key Findings

Therapeutic Exploitation

Mental-health organizations that partner with TikTok inadvertently legitimize a platform whose algorithmic design deepens the very distress they seek to treat. By operating within TikTok’s engagement-driven architecture—where compulsive scrolling and emotionally charged content amplify anxiety and dysphoria—these organizations become co-participants in behavioral manipulation, privileging reach over recovery. This undermines their therapeutic integrity not through malice but through structural alignment with a system that profits from psychological instability, revealing that clinical outreach on addictive platforms can become a form of iatrogenic harm—treatment that reproduces the pathology it aims to resolve.

Algorithmic Gatekeeping

Youth facing mental-health crises are not freely choosing TikTok as their support portal but are instead being herded toward it by an algorithmic infrastructure that intercepts emotional vulnerability as a signal for content amplification. When mental-health organizations shift services to TikTok, they cede triage decisions to an unregulated, profit-driven logic that prioritizes virality over clinical appropriateness, meaning the most distressed users may receive attention not because they are best served but because their expressions maximize retention metrics. This redirects care from professional judgment to algorithmic exposure, transforming public health outreach into a performance governed by recommendation engines rather than medical need.

Civic Abandonment

The state’s retreat from funding accessible youth mental-health services creates a vacuum that organizations fill by migrating to TikTok, effectively outsourcing public welfare to a corporation incentivized to maximize engagement over well-being. In this dynamic, governments become passive beneficiaries of privately delivered distress intervention, accepting the facade of care while avoiding accountability, and TikTok gains social legitimacy without altering its harmful design. This transfer recasts platform addiction as a public-sector escape hatch rather than a platform failure, normalizing the surrender of developmental safeguarding to extractive digital regimes.

Behavioral Infrastructure Capture

Mental-health organizations leveraging TikTok gain access to a behavioral infrastructure fine-tuned to youth attention patterns, thereby increasing the reach and resonance of therapeutic content. TikTok’s algorithmic architecture, originally optimized for commercial engagement, becomes a vector for public health messaging when repurposed by clinicians and nonprofits to deliver micro-interventions like emotion-regulation techniques or crisis resources. This inversion of intent—where a design meant to extract user time is redirected to foster psychological resilience—reveals how platform-mediated attention economies can be co-opted by welfare-oriented actors when institutional expertise aligns with native content formats. The non-obvious insight is that addictive design, rather than being solely exploitative, creates a shared infrastructure that public-interest actors can exploit asymmetrically if they master platform grammar.

Youth Narrative Sovereignty

When mental-health organizations use TikTok to engage youth, they cede narrative authority to a decentralized, user-driven ecosystem where young people shape, remix, and validate mental health discourse in real time, amplifying peer-led recovery narratives over top-down clinical scripts. This shift enables authentic, culturally embedded expressions of distress and resilience—such as through hashtag challenges or duet responses—that resonate more deeply than traditional psychoeducation because they emerge from lived experience rather than expert authority. The systemic consequence is a flattening of epistemic hierarchy, where TikTok’s participatory architecture pressures mental health institutions to adapt to youth-defined paradigms rather than impose diagnostic conformity. The underappreciated dynamic is that platform-driven narrative democratization forces institutional actors to negotiate legitimacy with distributed user communities rather than assume it by credential.

Crisis-to-Continuum Pipeline

TikTok’s capacity to convert fleeting emotional spikes into sustained engagement with mental-health resources generates a novel pathway from crisis exposure to therapeutic onboarding, transforming momentary vulnerability into entry points for care. Youth encountering distress-related content—whether through self-search or algorithmic suggestion—are increasingly met with embedded crisis lines, CBT-based captions, or therapist-led explainers, which function as low-threshold interventions that bridge immediate affective states with structured support systems. This pipeline effect is sustained by TikTok’s real-time feedback loops, where comment sections and stitch features evolve into informal peer support networks that persist beyond initial contact. The overlooked systemic mechanism is that platform-induced emotional arousal, typically weaponized for engagement, can be clinically repurposed to initiate longitudinal care trajectories when coupled with timely, context-aware mental health outreach.

Attentional Exploitation

The American Psychological Association’s 2023 advisory on social media use in adolescents identifies TikTok’s infinite scroll and micro-reward feedback loops as mechanisms that compromise sustained, self-directed attention in therapy-seeking youth. When mental-health organizations like Active Minds partner with TikTok to distribute coping strategies, they inadvertently align clinical messaging with platform architectures designed to maximize engagement through variable reinforcement schedules, similar to those of slot machines. This integration normalizes attention fragmentation at the very moment youth are taught to cultivate self-awareness, undermining therapeutic fidelity because the pace of the feed is at odds with the pace of reflection. The non-obvious risk is not misinformation but the erosion of the sustained cognitive presence necessary for mental health recovery.

Data-Consent Asymmetry

Using TikTok for mental-health outreach entangles clinical ethics with surveillance capitalism because informed consent in therapy assumes deliberate, reflective disclosure, whereas TikTok’s design thrives on continuous, ambient data extraction that undermines users’ capacity to make meaningful privacy choices. Mental health organizations relying on TikTok inherit a system where the platform’s algorithmic infrastructure captures behavioral biometrics—such as scroll speed, pause duration, and rewatch frequency—that reveal psychological states more accurately than self-reports, yet these data are governed by TikTok’s terms of service, not HIPAA or clinical confidentiality standards. This creates a hidden transfer of epistemic authority from clinician to algorithm, a dynamic rarely acknowledged in digital engagement ethics, where the focus remains on content rather than the silent extraction of psycho-physiological signals. The non-obvious shift is that therapeutic trust is no longer just strained by platform addiction but actively reconfigured by a data-consent regime that is structurally incompatible with clinical fiduciary duties.

Affective Labor Drain

When mental-health organizations deploy clinicians to produce TikTok content, they inadvertently shift professional labor from therapeutic intervention to emotionally optimized performance. Clinical staff become subject to the platform’s demand for virality through affective exaggeration (rapid emotional pivots, heightened vulnerability, or curated distress), which can degrade professional identity and emotional sustainability over time. This represents an unrecognized exploitation of clinical care work through the gig-economy logic of TikTok, where emotional authenticity is not just shared but weaponized for engagement metrics, and clinicians become de facto content creators accountable to algorithms rather than therapeutic goals. Most ethical analyses focus on user risk, but this angle reveals how the platform reshapes provider ethics by embedding care work within an attention economy that rewards burnout through visibility. The overlooked consequence is institutional erosion of therapeutic presence, as clinicians internalize platform rhythms that conflict with paced, boundary-respecting clinical practice.

Therapeutic Frame Contamination

Engaging youth on TikTok risks contaminating the therapeutic frame by situating mental-health guidance within a context where content is interspersed with commercial influencers, viral challenges, and algorithmically promoted self-harm material, thereby normalizing psychological support as one affective stimulus among many rather than as a bounded, intentional process. Unlike clinical settings where context stabilizes meaning, TikTok’s feed operates through associative randomness, teaching users to process mental-health advice with the same cognitive passivity as dance trends or product placements, which undermines the development of reflective self-engagement central to psychological growth. This erosion of epistemic boundaries is rarely addressed in platform ethics, which tend to focus on misinformation rather than the destabilization of judgment architecture itself. The hidden cost is not just distraction but the long-term attenuation of youth capacity to distinguish therapeutic insight from ambient entertainment.

Attentional Debt

A mental-health organization that runs targeted TikTok campaigns for crisis intervention inadvertently trains adolescents to associate emotional distress with algorithmic reward cycles, as seen in the case of the UK’s Samaritans partnering with TikTok during Mental Health Awareness Month 2023. This occurs because the platform’s interface conditions users to seek relief through engagement (likes, comments, shares) rather than internal regulation or clinical support, embedding a dependency in which coping becomes entangled with digital validation. The overlooked mechanism is not just screen time or content quality but the temporal misalignment between clinical recovery timelines and TikTok’s instant feedback loops. That misalignment generates a deferred psychological cost, attentional debt: youth must later disentangle self-worth from algorithmic responsiveness, a cost never accounted for in public health efficacy metrics.

Symptom Commodification

When the National Alliance on Mental Illness (NAMI) launched a TikTok series featuring youth sharing lived experiences of anxiety and depression using trending audio and hashtags, it amplified authentic narratives but simultaneously enabled the platform’s advertising data pipelines to classify mental distress as behavioral metadata for content optimization, as evidenced by subsequent targeted ads for self-care products and teletherapy apps appearing in users’ For You Pages. The underappreciated dynamic is that clinical legitimacy granted by trusted organizations increases the commercial salience of symptom expression, transforming subjective suffering into a signal for monetization. This shifts the incentive structure so that the visibility of mental illness becomes more valuable than its resolution, a transformation rarely acknowledged in digital outreach ethics frameworks.

Infrastructure Capture

The Australian government-funded headspace initiative’s reliance on TikTok for youth engagement has resulted in the gradual offloading of psychoeducational content curation to TikTok’s recommendation algorithm, which prioritizes emotionally charged, short-form expressions over clinical accuracy or developmental appropriateness. This is seen in the disproportionate amplification of videos describing extreme coping mechanisms over preventative strategies. The overlooked factor is not platform design alone but the institutional dependency that forms when public health entities surrender content distribution control to private infrastructure. The result is a feedback loop in which mental-health messaging must conform to algorithmic logic to remain visible, so that therapeutic communication is redefined by the hidden grammar of engagement engineering, a dependency rarely acknowledged in public-private digital health partnerships.

Behavioral Data Feedback Loop

Mental health organizations that use TikTok to reach youth inadvertently intensify platform engagement by contributing to the normalization of frequent app use, which reinforces TikTok’s algorithmic capture of attention through emotionally resonant content. When clinically oriented organizations post therapeutic messaging in short-form video format, they feed behavioral data—such as watch time, likes, and shares—into TikTok’s machine learning systems, which then optimize for content that maximizes emotional arousal, not psychological well-being. This creates a feedback loop wherein mental health outreach becomes a fuel source for the very addictive mechanisms it seeks to mitigate. The non-obvious systemic consequence is that well-intentioned public health interventions can become data inputs in behavioral engineering systems they do not control.

Affective Labor Exploitation

When mental health nonprofits like Active Minds or The Trevor Project collaborate with TikTok creators to deliver coping strategies in emotionally authentic performances, they outsource therapeutic affect to unpaid or underpaid peer advocates who must repeatedly simulate vulnerability to sustain audience connection. These creators, often youth with lived mental health challenges, perform intimate disclosures within a platform architecture designed to extract engagement through emotional contagion, thereby commodifying their affective labor while the platform and organizations benefit reputationally and metrically. The systemic enabler is TikTok’s reliance on user-generated authenticity to maintain retention, which intersects with the nonprofit sector’s shift toward participatory, peer-led programming to increase relevance. The underappreciated dynamic is that scalability in youth outreach is achieved through the invisible burden placed on marginalized users to reenact their trauma within attention economies.

Platformed Care Fragmentation

School-based mental health programs, such as those in Seattle Public Schools, that integrate TikTok videos into wellness curricula risk displacing structured therapeutic support with episodic, algorithmically mediated exposure to psychological content, weakening continuity of care. By adopting TikTok as an engagement tool, these programs align with a logic of immediacy and virality rather than clinical progression, where success is measured in views rather than outcomes, thus fragmenting mental health care into isolated moments of awareness unlinked to treatment pathways. This shift is enabled by municipal funding constraints that favor low-cost digital outreach over hiring counselors, making schools dependent on platform affordances they cannot govern. The critical but overlooked consequence is that public health infrastructure becomes dependent on privately controlled attention architectures, eroding institutional capacity to deliver sustained care.