Does Facebook Polarize Elderly Users While Offering Community?

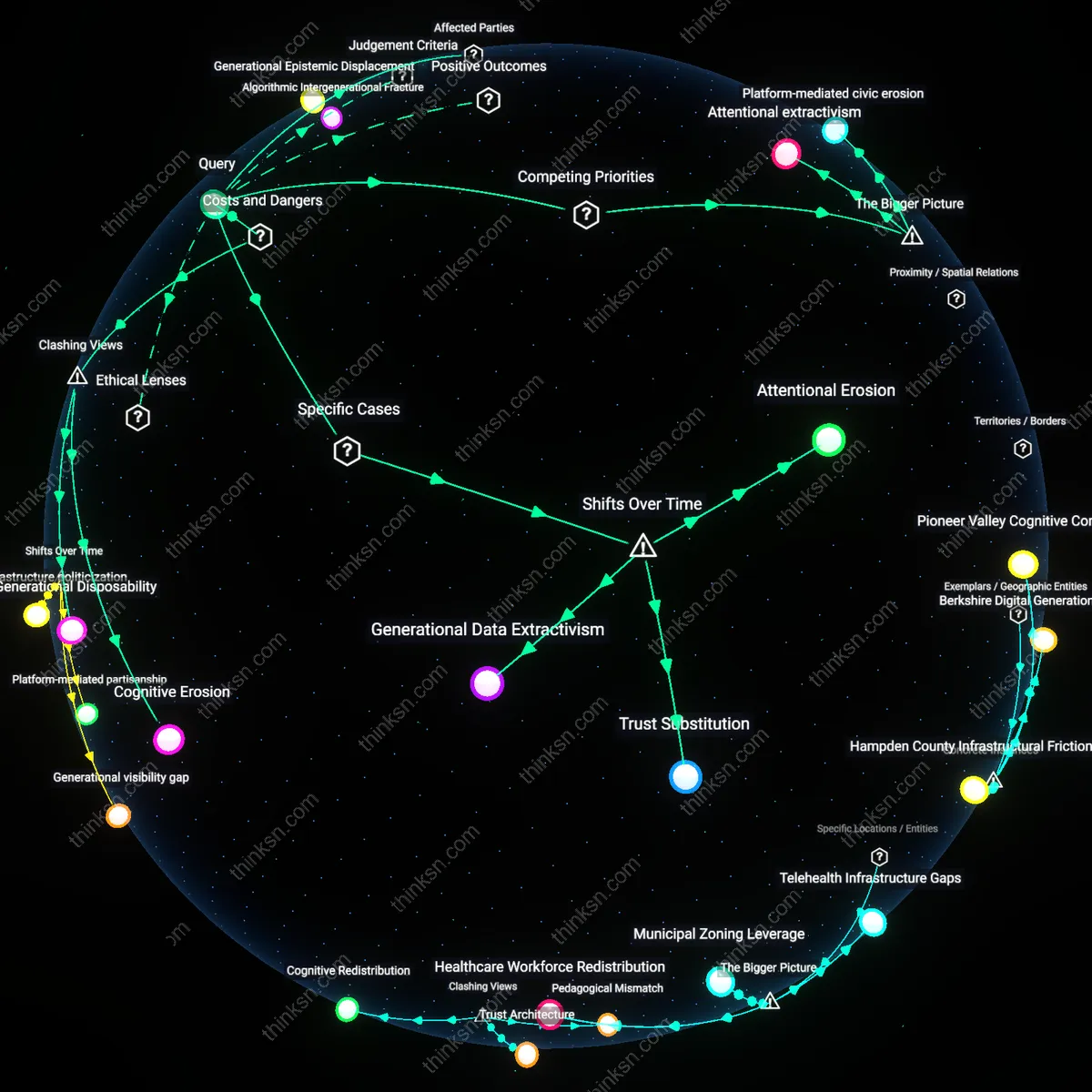

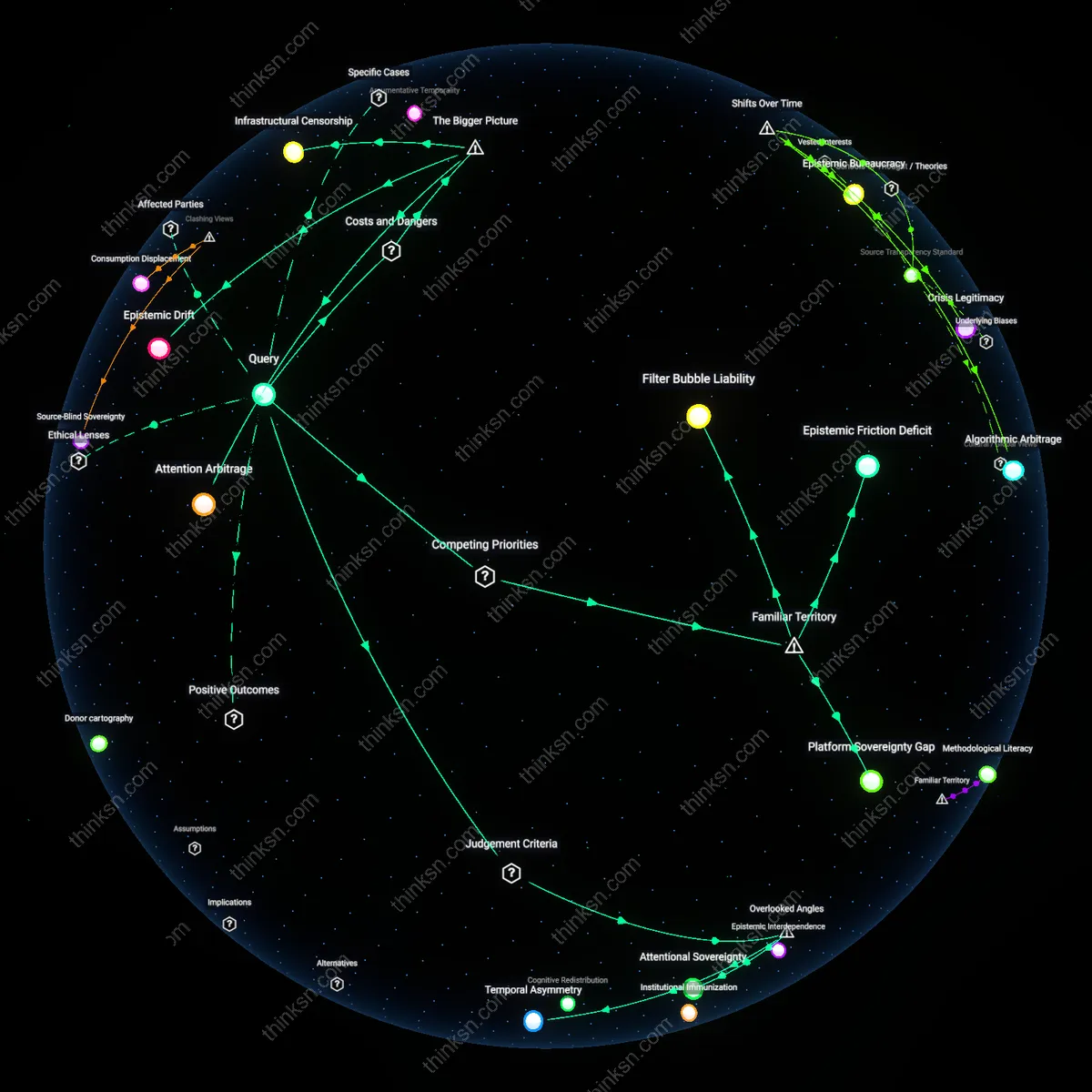

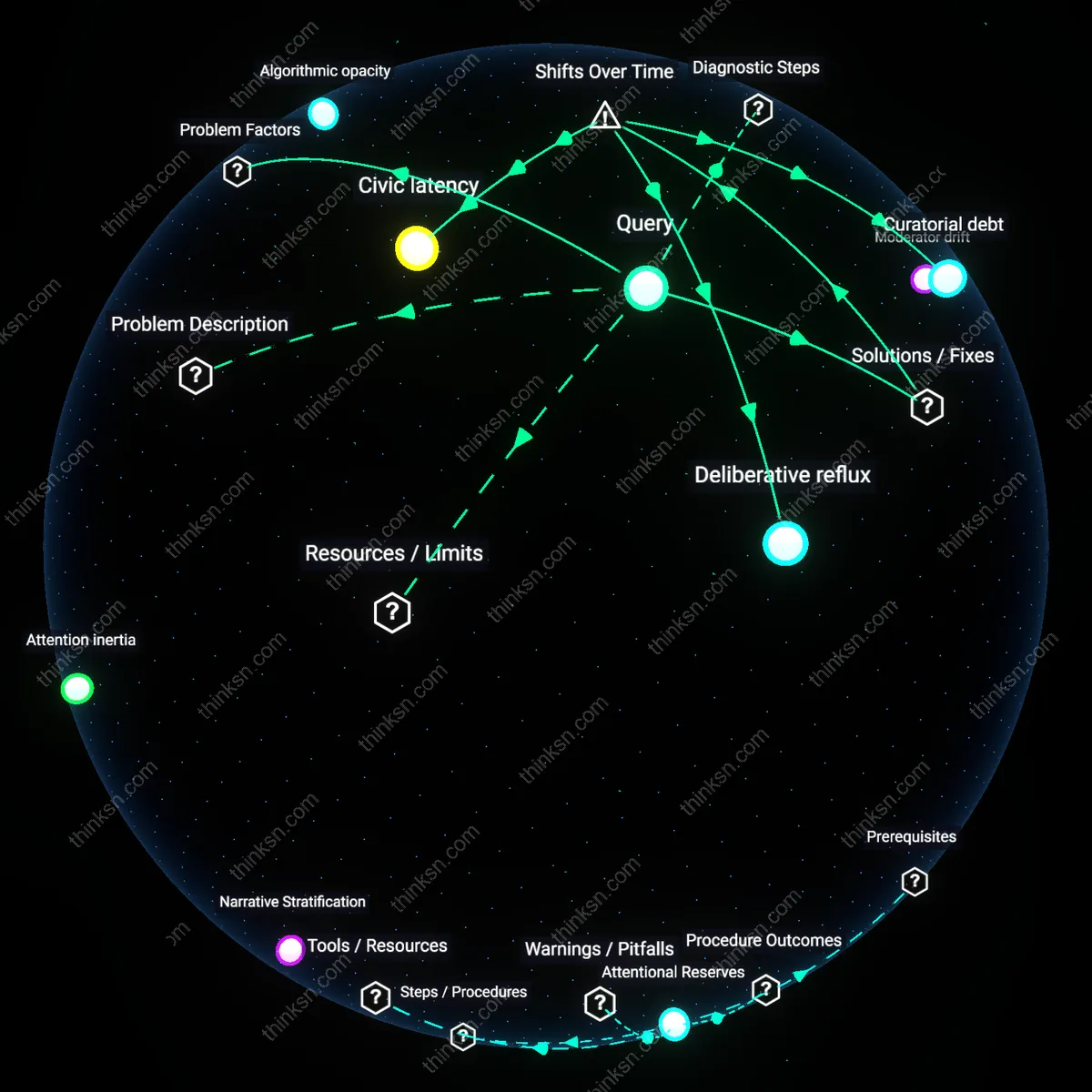

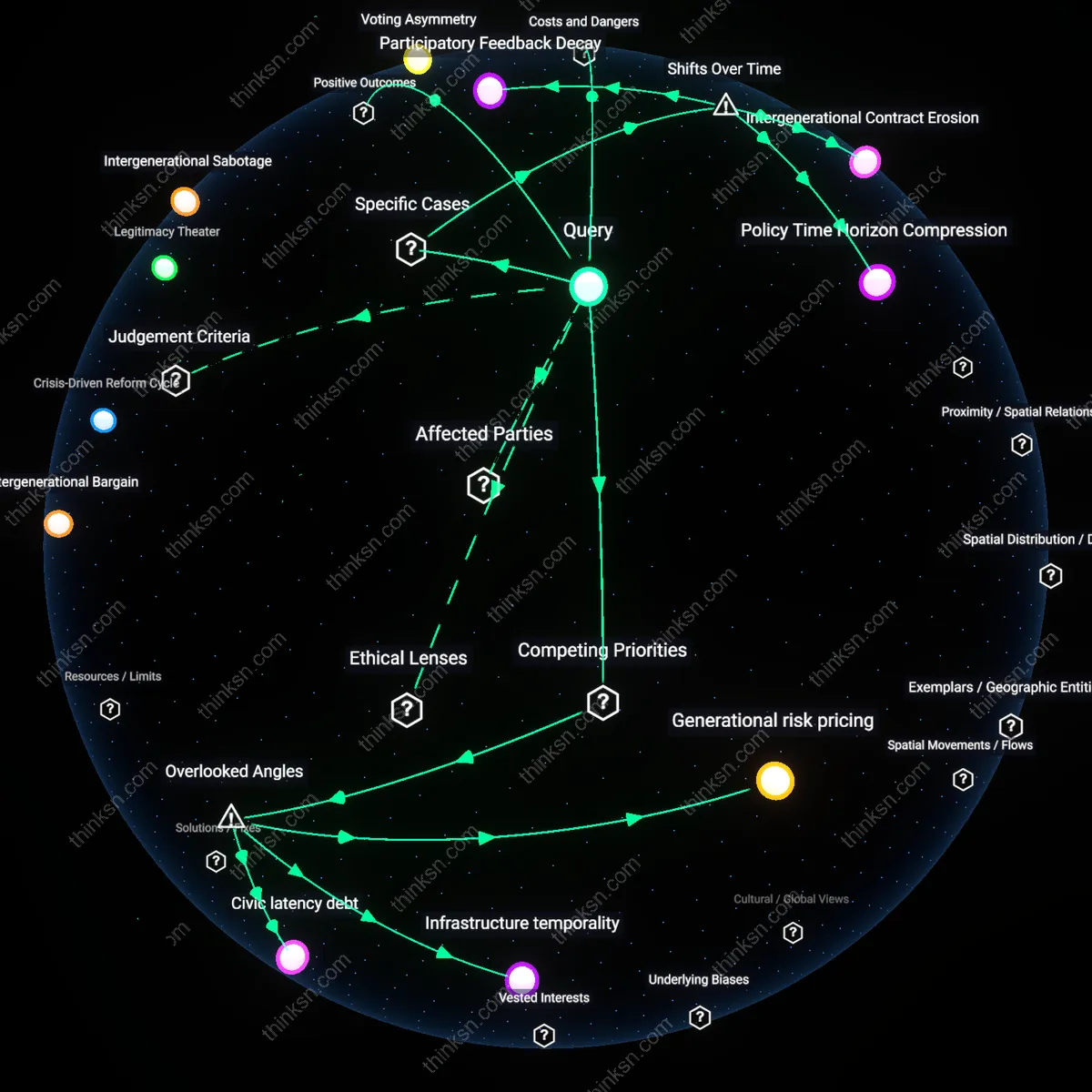

Analysis reveals 10 key thematic connections.

Key Findings

Generational Epistemic Displacement

During the 2016 U.S. presidential election, elderly Facebook users absorbed politically skewed content through algorithmic filtering and disproportionately shared misinformation from hyperpartisan sources such as 'Denver Guardian'-style fake news sites, because Facebook’s engagement-driven ranking system amplified emotionally charged, ideologically reinforcing material. This dynamic privileged algorithmic logic over intergenerational knowledge transmission, displacing elders’ historical roles as community sense-makers with curated digital noise. It shows how platform architecture can erode the epistemic authority of older adults not through deception alone, but by restructuring the informational environment in which their social influence resides.

Platform-Senior Trust Asymmetry

In 2020, nursing homes in Florida reported sharp intra-familial political rifts when elderly residents, relying on Facebook’s News Feed, adopted QAnon-adjacent conspiracy theories about voter fraud. The phenomenon was exacerbated by Facebook’s failure to prioritize source credibility for users over 65 despite internal research identifying them as high-risk. Institutions like AARP warned that trust in Facebook as an information medium outpaced users’ ability to detect manipulation, creating a dependency without accountability. This points to a structural imbalance in which senior vulnerability is mitigated not by platform safeguards but by third-party interventions, exposing a trust asymmetry institutionalized by design indifference.

Algorithmic Intergenerational Fracture

During the 2022 Canada convoy protests, older Facebook users in Ontario shared polarized narratives about 'freedom' and government overreach at rates three times higher than younger cohorts, according to the Canadian Digital Inquiry Group. Family Discord and WhatsApp groups became arenas of intergenerational conflict as adult children attempted fact-checking against algorithmically entrenched beliefs, and Facebook’s lack of age-differentiated content curation intensified relational erosion within kinship networks. This illustrates that algorithmic filtering does not merely distort information but actively fractures moral consensus across generational lines, transforming family units into contested epistemic battlegrounds.

Cognitive Erosion

Algorithmic news filtering on Facebook systematically degrades elderly users’ capacity to process contradictory political information by reinforcing identity-protective cognition, thereby compromising the epistemic value of critical deliberation. This occurs not through overt deception but through the gradual atrophy of cognitive flexibility, sustained by engagement-driven reinforcement loops in the platform’s recommendation architecture. The effect is more damaging precisely because it feels benign, eroding mental resilience under the guise of relevance. The non-obvious consequence is that polarization here stems not from exposure to extremism but from the slow, unchallenged entrenchment of existing beliefs, which the platform rewards simply by feeding familiarity.

Generational Disposability

Political polarization in Facebook’s algorithmic filtering compromises intergenerational equity by repurposing elderly users as low-cost engagement vectors in large-scale behavior modification systems originally optimized for younger demographics. Because older users exhibit higher dwell time and lower digital literacy, their cognitive habits become targets for exploitation: misinformation and divisive content are tested on them before broader rollouts, effectively turning retirement communities into invisible behavioral laboratories. This reframes polarization not as ideological conflict but as collateral damage of a systemic design decision that treats aging populations as frictionless inputs in a machine learning economy, one that pays its political costs in human stability without accountability.

Attentional Extractivism

Algorithmic news filtering compromises cognitive autonomy among elderly Facebook users by prioritizing engagement metrics over informational diversity, thereby amplifying political polarization. This occurs because Facebook’s recommendation systems are designed to maximize time-on-platform through emotionally salient content, which disproportionately rewards extreme or conspiratorial political material—especially effective on older users who are less digitally literate and more trusting of in-platform information. The mechanism hinges on platform business models that treat user attention as a revenue-generating resource, where the systemic pressure to extract attention undermines the epistemic conditions necessary for deliberative democratic participation. The non-obvious insight is that polarization is not merely a byproduct but a structurally incentivized outcome of extractive digital architectures targeting vulnerable user cohorts.

Platform-Mediated Civic Erosion

Political polarization driven by Facebook’s news feed algorithms compromises the civic capacity of elderly users by supplanting public-service-oriented information environments with personalized content loops that reward outrage and identity defense. Unlike traditional media ecosystems—such as public broadcasting or local newspapers—that were institutionally guided by norms of balance and civic responsibility, Facebook’s algorithmic curation operates without duty to democratic deliberation, enabling unaccountable private actors to shape public understanding of political reality. The systemic trigger is the transfer of agenda-setting power from public-interest institutions to data-driven platforms whose success metrics explicitly exclude civic health. The overlooked shift is that algorithmic filtering doesn’t just distort political views—it quietly decommits society from maintaining shared epistemic infrastructure, particularly for demographics least equipped to navigate fragmented truth landscapes.

Attentional Erosion

Elderly Facebook users in rural Pennsylvania, who before 2014 received political information primarily through local newspapers and church networks, now encounter fragmented and emotionally charged political content curated by engagement-based algorithms. These algorithms replaced older, geographically anchored information ecosystems with ones optimized for reaction rather than civic coherence. The shift, accelerated after Facebook’s 2016 algorithmic pivot from passive news feed to active behavioral targeting, systematically degrades sustained attention to complex policy trade-offs by privileging outrage and confirmation, making cross-partisan reasoning feel cognitively alien: a loss not of truth, but of deliberative stamina. The underappreciated consequence is not misinformation but the quiet dismantling of the cognitive conditions for empathy in public discourse.

Trust Substitution

Retired educators in Florida’s retirement communities were once embedded in school boards and civic associations that modeled institutional accountability. Since 2018, when Facebook’s shift from organic community pages to paid group promotions hollowed out local epistemic authorities, they have relied on Facebook’s algorithmically filtered political content. This transition replaced slow-building trust in civic intermediaries with rapid allegiance to algorithmically amplified identity cues, converting the value of institutional loyalty into the currency of affective belonging. The significance lies not in polarization per se but in the substitution of historical trust networks with algorithmic reputational proxies: what was once validated through long-term social exposure is now affirmed through viral resonance.

Generational Data Extractivism

Elderly users in the Midwest, particularly those over 75 who joined Facebook between 2012 and 2015 to connect with grandchildren, became unintended data sources for political microtargeting following Cambridge Analytica’s 2016 exploitation of personality inference models. This marked a shift from social connection as purpose to behavioral surplus as product: as Facebook’s algorithms began treating nostalgic photo-sharing as behavioral training data, the act of reminiscing became infrastructural to polarization machinery. The non-obvious outcome is not manipulation but the silent conversion of late-life sociality into predictive capital, an extraction that redefines privacy not as secrecy but as temporal autonomy over personal narrative.