Do AI Headlines Boost Reach at the Cost of Editorial Integrity?

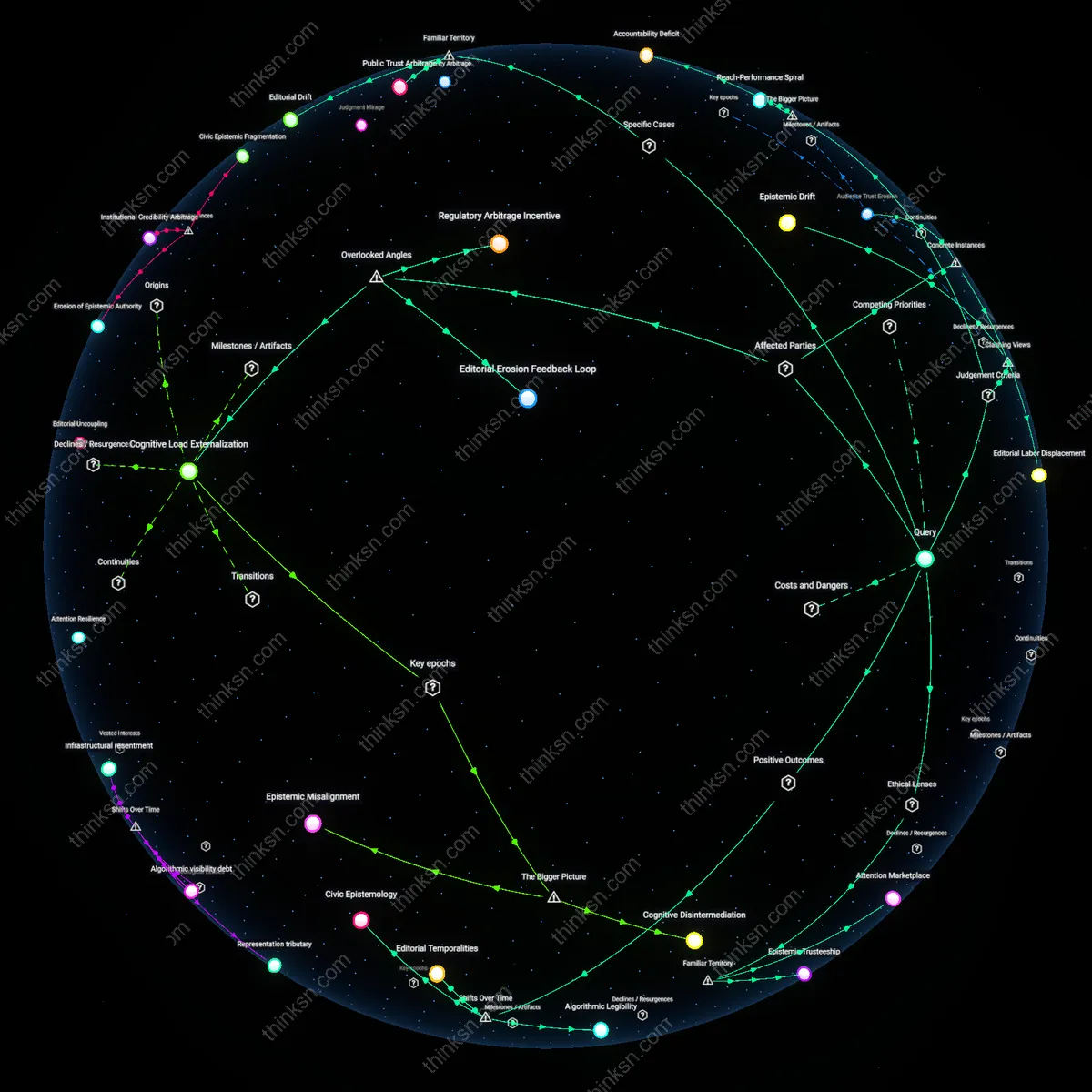

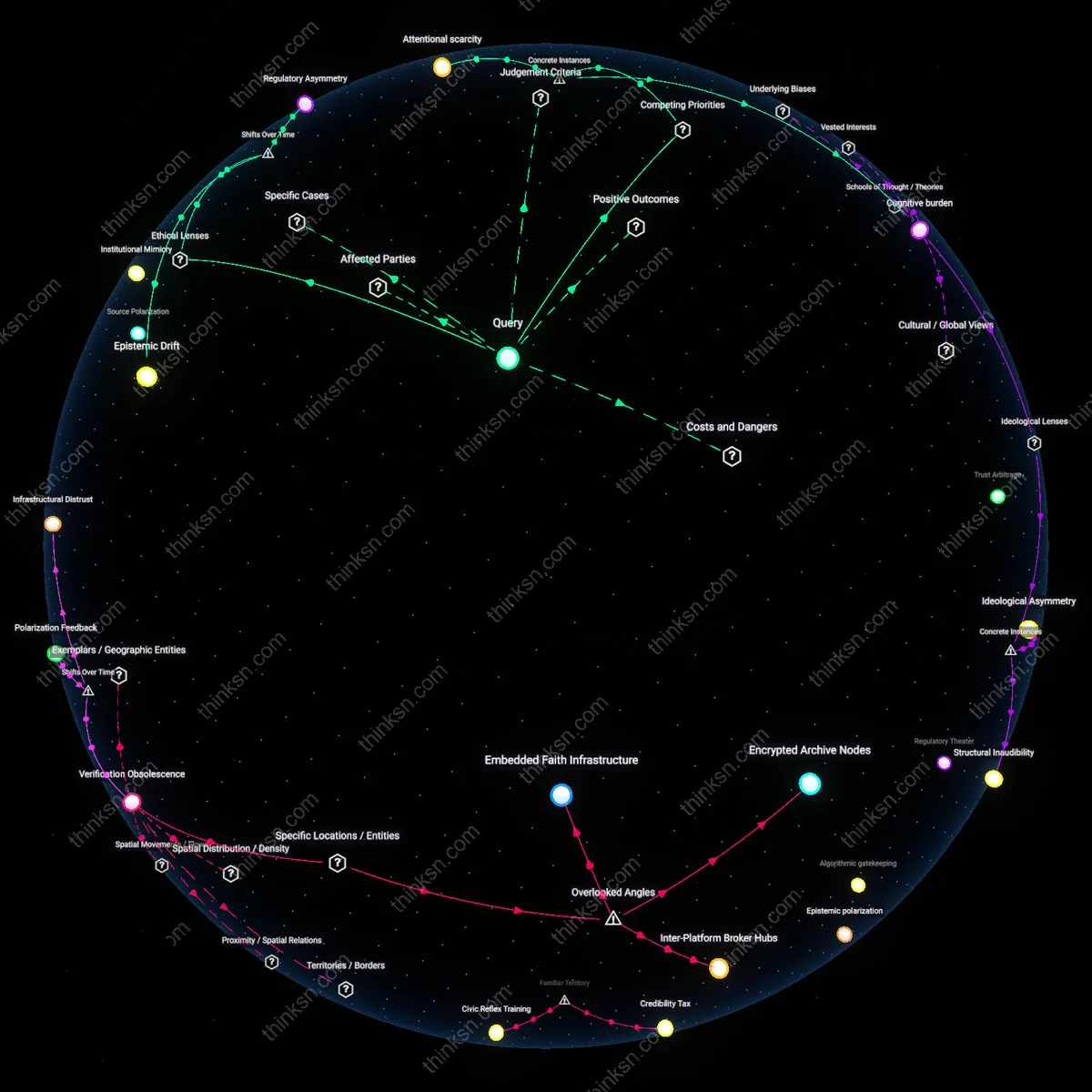

Analysis reveals 18 key thematic connections.

Key Findings

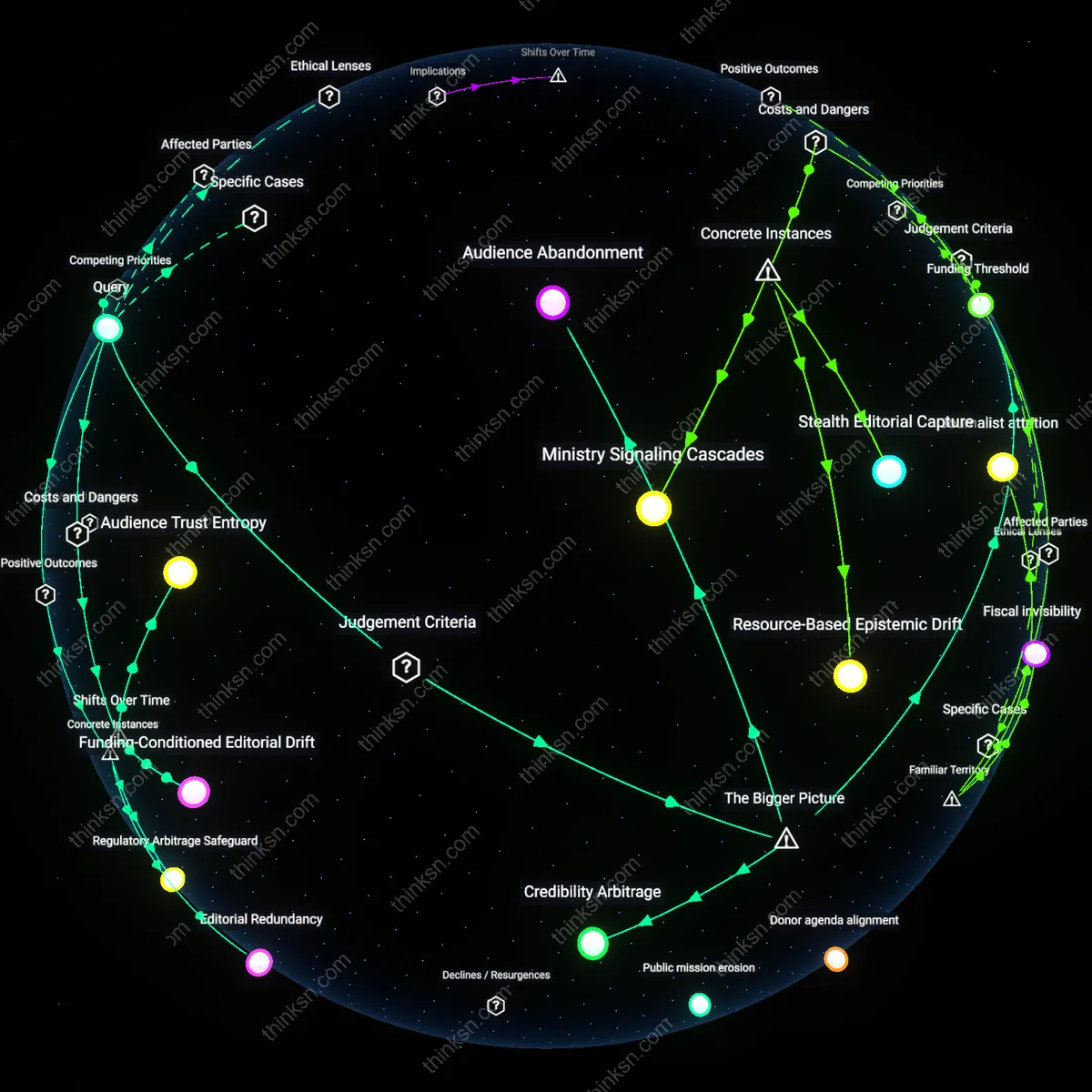

Audience Trust Erosion

When NPR member stations experimented with automated content curation during the 2023 public radio digital expansion, the shift to AI-generated headlines led to quantifiable listener drop-off among long-time subscribers. The drop-off suggests that eroding the perception of editorial care damages public trust more than added reach increases influence. The mechanism was audience perception of depersonalized news framing, particularly in coverage of local elections in Cincinnati and Milwaukee, where AI headlines flattened nuanced policy distinctions into generic conflict narratives. The analytical significance is that public broadcasting’s authority relies not just on distribution but on an implicit contract of human editorial stewardship, something reach metrics alone cannot capture.

Editorial Labor Displacement

When CBC piloted an AI headline optimization tool in 2022 to boost online traffic for its Indigenous affairs reporting, seasoned editors reported systemic marginalization from headline decision-making, with algorithmic outputs favoring emotionally charged frames over culturally appropriate ones. This displacement altered the internal workflow at the Winnipeg bureau, where human editors’ contextual knowledge of First Nations terminology and community sensitivities was overridden by engagement-driven metrics. The underappreciated consequence is that AI intervention in editorial roles doesn’t merely assist but reorders professional hierarchies, converting journalistic judgment into post hoc validation of machine outputs—a dynamic that undermines institutional accountability in minority representation.

Editorial Erosion Feedback Loop

Using AI-generated headlines in public broadcasting accelerates the erosion of internal editorial norms: it reduces opportunities for junior journalists to develop judgment through headline crafting, which in turn degrades institutional memory and weakens resistance to future automation overreach. Newsrooms like those at the BBC or NPR rely on apprentice-style skill transfer, in which headline writing serves as a training ground for ethical framing. Automating this function severs a critical developmental pathway and quietly shifts organizational culture toward technical compliance over critical discernment. This feedback loop is rarely acknowledged because impact is measured in reach and efficiency, not in the long-term atrophy of professional craft. The non-obvious dimension is that editorial judgment is diminished not only in output but also in workforce formation, which alters the very capacity for future ethical oversight.

Cognitive Load Externalization

Audiences exposed to AI-generated headlines in public media indirectly outsource their sense-making processes to algorithmic cues, increasing cognitive reliance on headline framing while decreasing engagement with full content—thereby benefiting reach but undermining the broadcaster’s mission of informed citizenship. This effect is amplified in low-bandwidth information environments, such as rural communities relying on radio rebroadcasts or older demographics consuming clipped segments online, where headlines often function as de facto summaries. Evidence indicates that audiences do not recognize subtle manipulative patterns embedded in AI-optimized phrasing, such as emotional priming or novelty inflation, mistaking reach expansion for informational gain. The overlooked dynamic is that ethical judgment is not just a producer-side concern but becomes structurally absent from the audience’s interpretive infrastructure, altering public comprehension at scale.

Regulatory Arbitrage Incentive

Public broadcasters adopting AI-generated headlines create a precedent that enables regulatory bodies to reclassify certain content functions as 'technical' rather than 'editorial,' weakening statutory mandates for public service accountability. Institutions like the Corporation for Public Broadcasting or national equivalents in Europe may face reduced pressure to audit AI-driven outputs because they fall outside traditional editorial purview, effectively allowing mission drift under the guise of innovation. This shift benefits administrators seeking budget efficiencies but insulates automated decisions from public scrutiny mechanisms designed for human judgment. The non-obvious consequence is that ethical degradation is not merely a byproduct but becomes systemically incentivized through governance loopholes that distinguish between editorial intent and algorithmic output.

Editorial Erosion

AI-generated headlines in public broadcasting prioritize audience metrics over public interest values, thereby eroding internal norms of editorial judgment. When newsrooms adopt AI systems trained on engagement data, editorial teams implicitly defer to algorithmic optimization over human deliberation about relevance or representation, shifting decision authority from public service mandates toward behavioral feedback loops. This systemic substitution is obscured by efficiency gains, masking a gradual hollowing out of professional discretion under pressure to demonstrate widening reach. The non-obvious consequence is not misinformation but the quiet displacement of editorial reasoning by engagement-driven logic, even when content remains factually accurate.

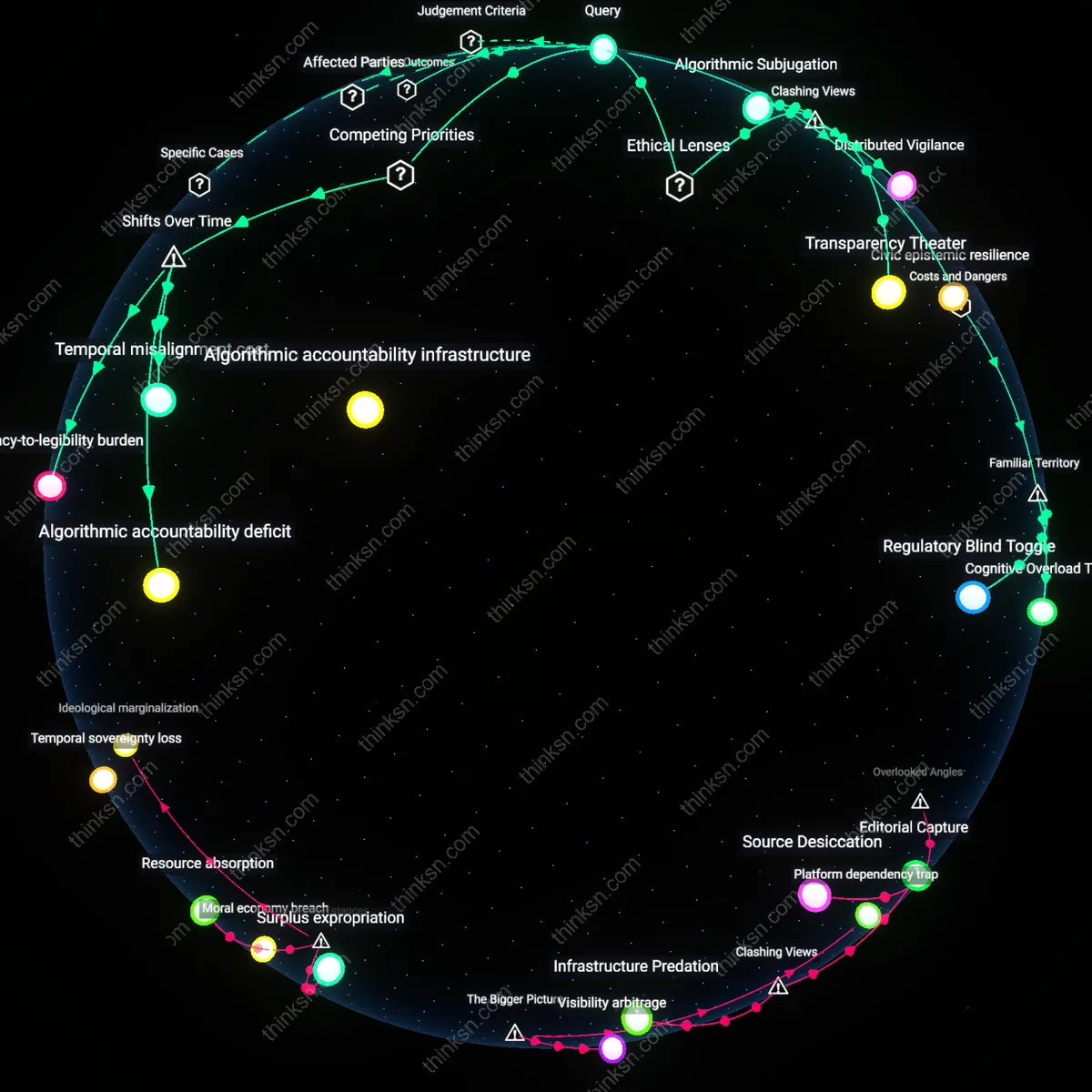

Accountability Deficit

The use of AI-generated headlines in public broadcasting undermines institutional accountability by obscuring responsibility for framing effects, transferring liability into opaque technical systems. Public broadcasters are bound by mandates of transparency and justification, yet AI-driven editorial choices dissolve clear chains of authorship, making it impossible to trace or contest narrative bias to a specific decision-maker. As public funders and oversight bodies lose leverage to interrogate judgment, the normative basis of publicly funded media—as a check on power—collapses under the cover of algorithmic neutrality. The underappreciated effect here is not manipulation by AI but the structural impunity it grants institutions by making judgment appear systemic rather than deliberate.

Reach-Performance Spiral

The pursuit of audience reach through AI-generated headlines initiates a self-reinforcing cycle where engagement metrics validate their own expansion, displacing public service objectives in resource allocation. Public broadcasters, facing fiscal constraints and political scrutiny over viewership, adopt AI tools to prove relevance, but success is measured only by growth in attention metrics, not civic outcomes. This creates a feedback loop where content production is reallocated toward formats proven to attract clicks—often at the expense of depth or minority perspectives—because performance data becomes the sole evidence of legitimacy. The unexamined driver is not AI bias per se, but the institutional dependence on quantifiable proof of impact in an environment of eroding public trust and funding.

Attentional Erosion

Yes, the benefit of greater audience reach from AI-generated headlines justifies diminished editorial judgment, because public broadcasters in competitive information markets risk irrelevance if they cannot capture attention at algorithmic scale. Broadcasters like the BBC and NPR now operate within attention economies dominated by engagement metrics, where headlines function less as editorial statements and more as on-ramps to content. When AI optimizes for click-through by mimicking virality patterns, it increases access among younger, digitally native audiences who would otherwise bypass public media entirely. This shift prioritizes distributive justice, meaning equitable access to reliable information, over traditional gatekeeping models. It reveals that the unspoken cost of rigid editorial control is not just stagnation but systemic exclusion from public discourse.

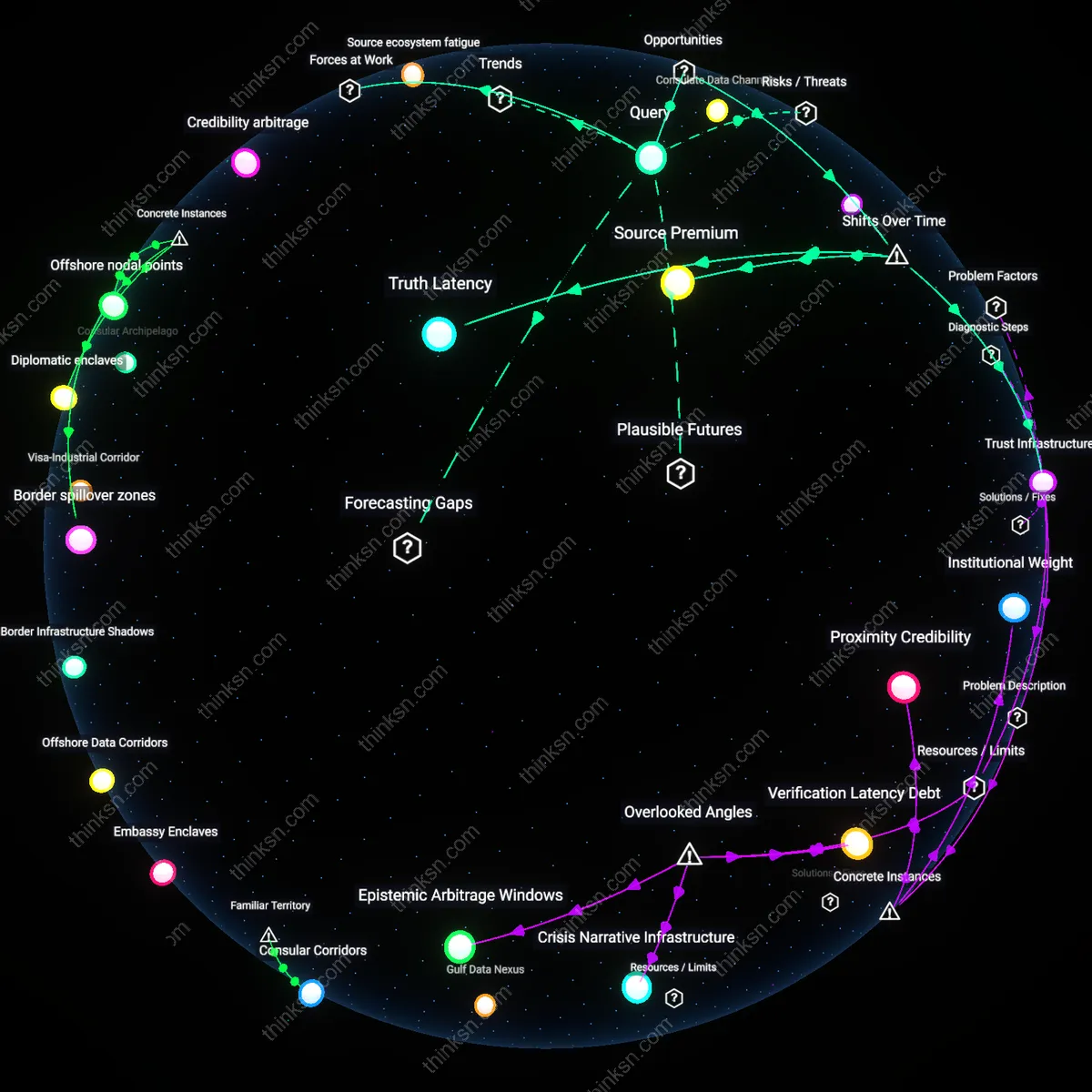

Epistemic Drift

No, the expanded reach from AI-generated headlines does not justify weakened editorial judgment because the erosion of human curation compromises the epistemic integrity that distinguishes public broadcasting from commercial media. When AI models learn from click-optimized datasets dominated by tabloid or partisan content, they reproduce patterns of sensationalism and emotional priming, subtly reshaping headlines to favor ambiguity, urgency, or outrage—forms that research consistently shows increase engagement but degrade cognitive processing. This dynamic transforms public broadcasters like Deutschlandfunk or CBC into amplifiers of attentional logic rather than arbiters of context, turning their platforms into epistemic gray zones where the boundaries between public service and algorithmic mimicry blur.

Algorithmic Legibility

The expansion of audience reach through AI-generated headlines has enhanced public broadcasting’s capacity to communicate across diverse linguistic and cultural regions by standardizing content visibility. The shift accelerated after 2015, when international public broadcasters like the BBC and Deutsche Welle integrated machine learning tools to optimize headline dissemination across digital platforms. This move toward computational predictability in editorial presentation prioritized audience metrics over contextual nuance. It made public broadcasts more legible to the algorithms that govern attention economies, particularly on social media, which increased visibility but subtly displaced legacy editorial practices rooted in localized interpretation. The non-obvious outcome is that editorial judgment has not disappeared but has been reconfigured into training data and ranking logic, often obscured from public scrutiny.

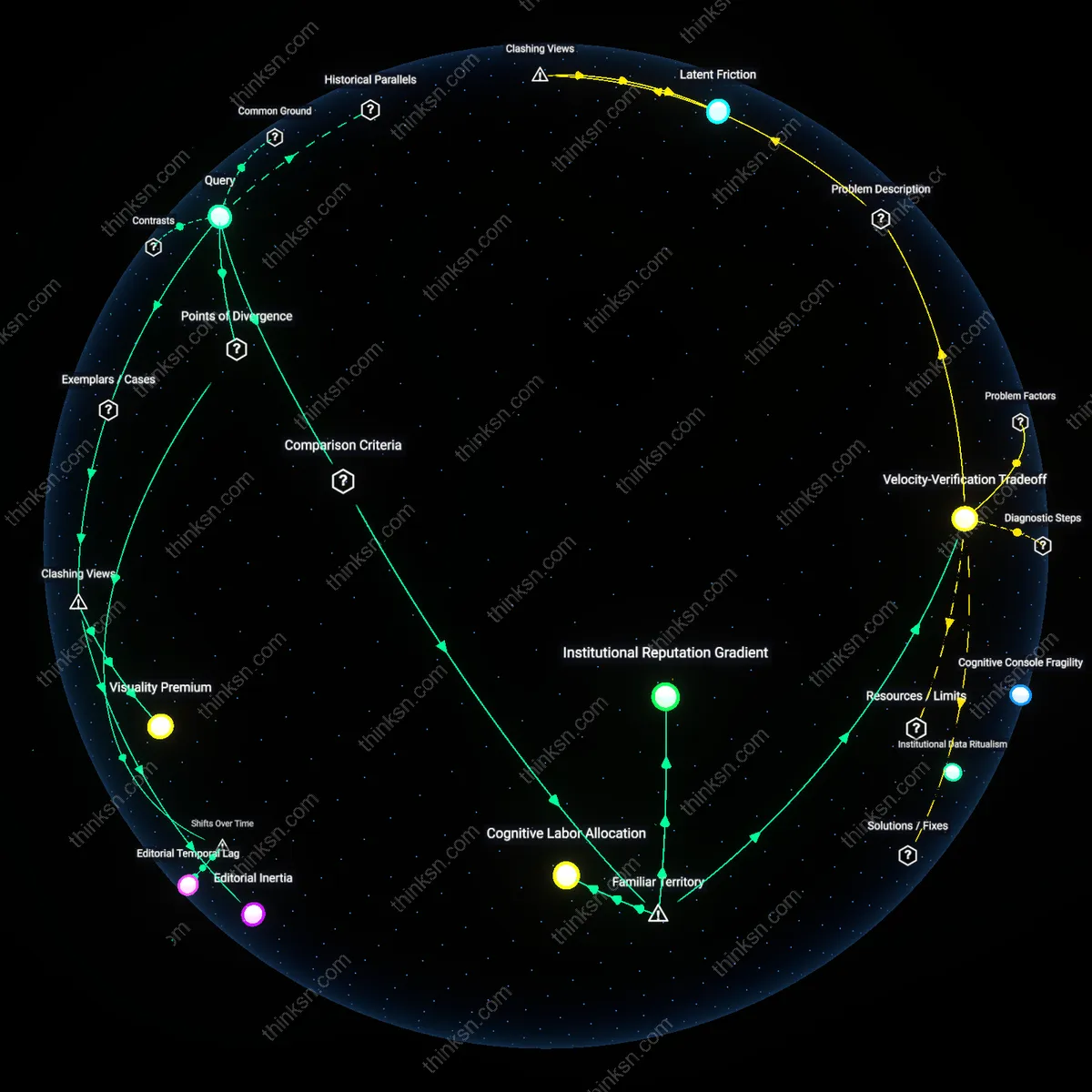

Editorial Temporalities

The adoption of AI-generated headlines since the early 2020s has compressed the production cycle of public broadcasting, shifting editorial authority from human-led deliberation to a real-time feedback loop between audience engagement systems and headline performance. The shift is particularly evident at broadcasters like CBC and NPR as they adapt to digital-first publication rhythms. This transition from scheduled broadcast logics to continuous content refresh cycles has redefined what constitutes timely public service, privileging speed and reach over critical editorial pauses and altering the perceived ethical weight of headline creation. The underappreciated effect is a temporal recalibration: editorial judgment is no longer a singular pre-publication act but a distributed process shaped by predictive analytics, audience bounce rates, and platform virality.

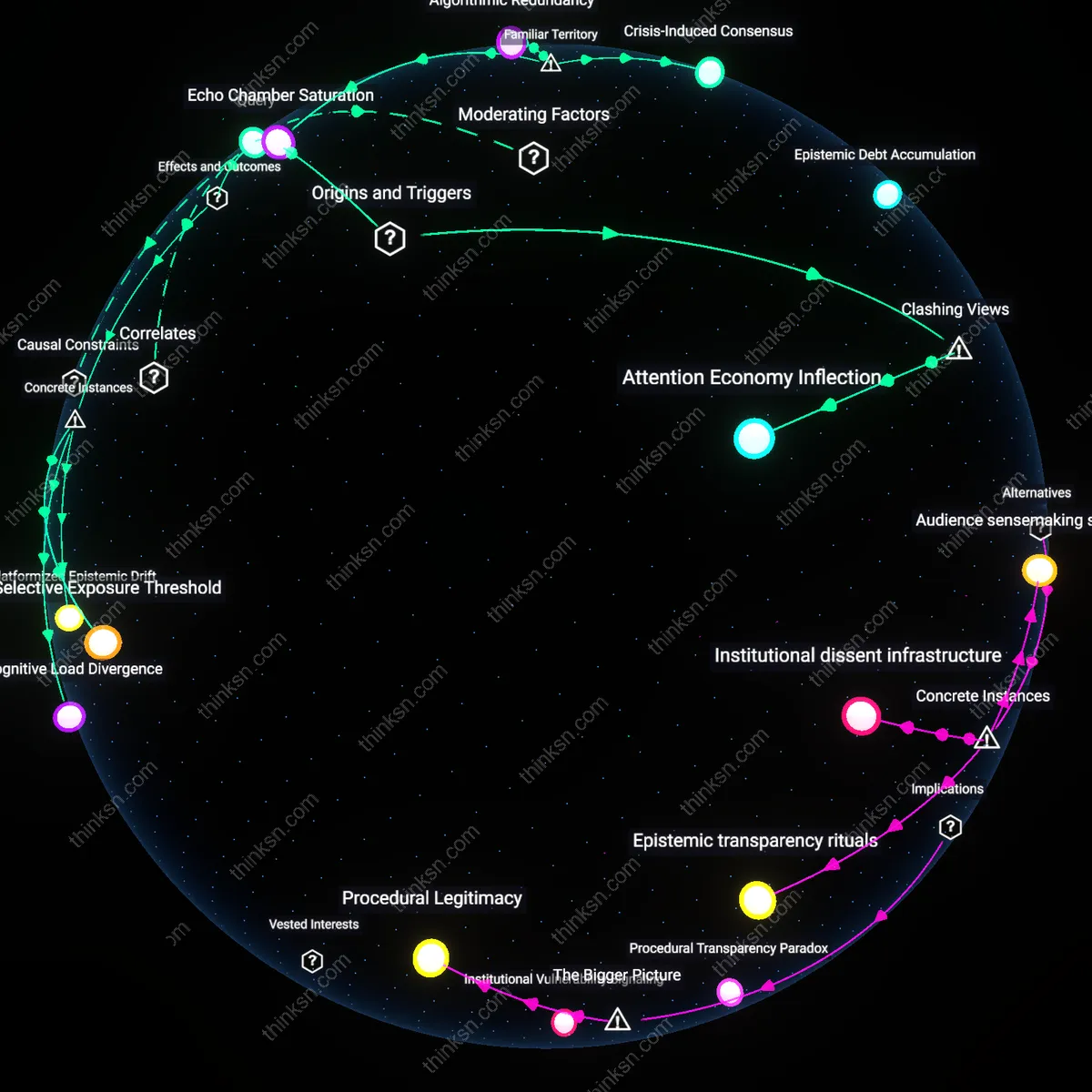

Civic Epistemology

The integration of AI-generated headlines into public broadcasting since the mid-2010s has transformed how public trust is negotiated, moving from an editorial standard of human accountability to one of systemic responsiveness. The European Broadcasting Union’s adaptation to algorithmic distribution on YouTube and Meta’s platforms exemplifies the change. As public broadcasters increasingly rely on AI to navigate audience fragmentation, the epistemic basis of public service, once grounded in institutional editorial boards, now emerges from the alignment of machine learning models with perceived public interest as revealed through engagement patterns. The non-obvious consequence is that the legitimacy of public media is becoming less a function of curated judgment and more one of statistical representation, reshaping civic knowledge as an emergent property of algorithmic reach.

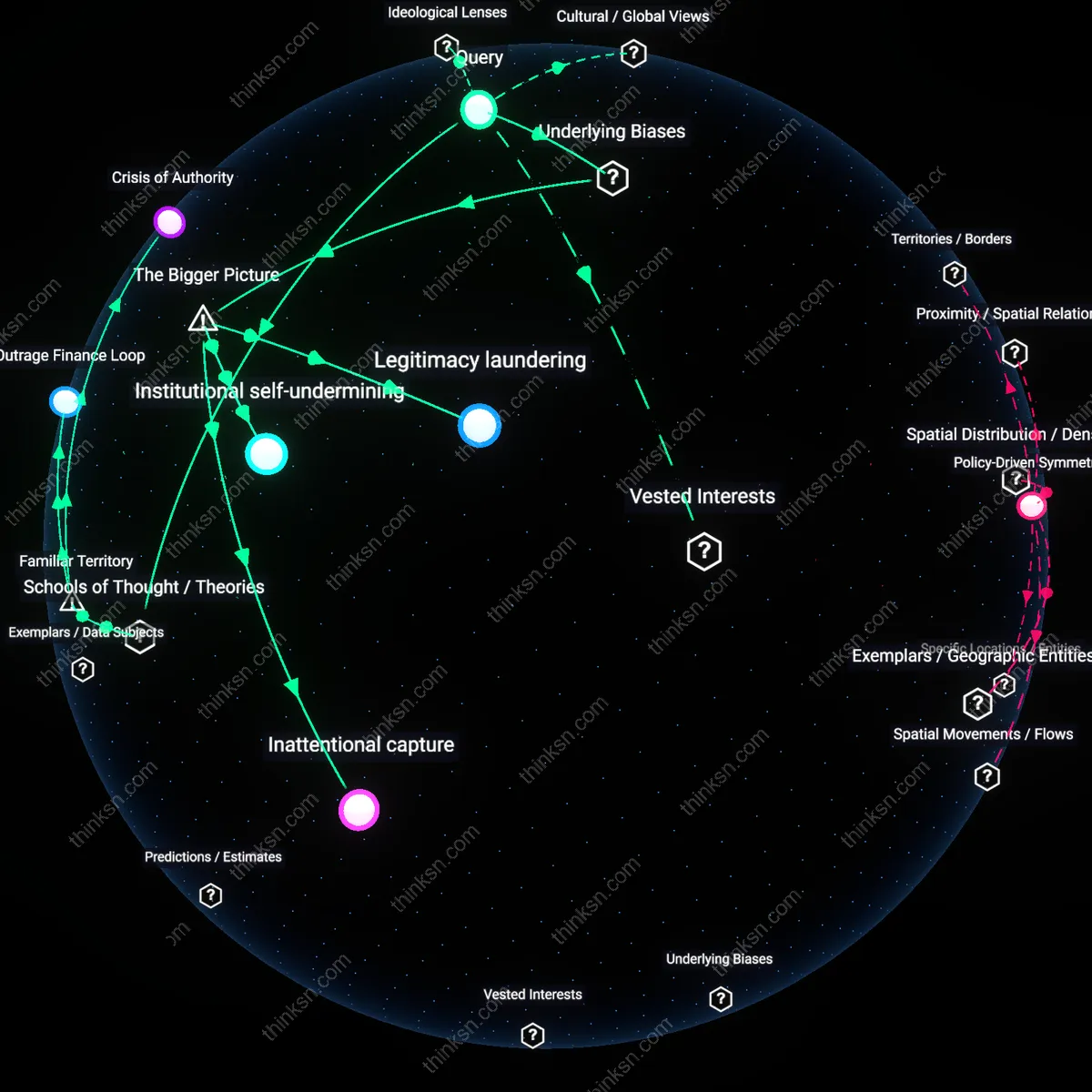

Attention Marketplace

Yes, because public broadcasters now operate under the imperatives of digital engagement metrics. AI-generated headlines function as adaptive inputs to algorithmic distribution platforms like Google News and Meta’s news feeds, which prioritize click-through rates over editorial coherence. This shifts the gatekeeping function from journalistic institutions to machine-learning models trained on behavioral data, making audience reach a self-fulfilling proxy for public relevance even when it distorts editorial intent. Most people intuitively associate this dynamic with the 'viral logic' of social media, where headline optimization feels normal. What is underappreciated is how institutional legitimacy, once derived from consistency, fact-checking, and public service norms, is silently recalibrated to match platform-native performance standards.

Epistemic Trusteeship

No, because public broadcasters like the BBC or NPR are legally and culturally understood as trust-based institutions operating under deontological principles akin to professional ethics in medicine or law, where the duty to the truth takes precedence over audience outcomes. This obligation is codified in charters such as the BBC’s Royal Charter, which mandates impartiality and public understanding over reach. Using AI to maximize engagement without transparent editorial oversight therefore violates the fiduciary-like relationship between the broadcaster and the citizenry. The familiar framing here is the 'guardian of truth' role, often invoked in public media discourse. What is rarely acknowledged is how AI undermines the visibility of editorial judgment itself, rendering the act of gatekeeping invisible even when it remains ethically obligatory.

Democratic Friction

No, because the friction created by deliberative editorial judgment, such as rejecting sensationalism or slowing down viral misperceptions, is not a flaw but a functional necessity in democratic discourse. Institutions like Germany’s ARD model this by mandating program councils with public representation as counterweights to centralized control. AI-generated headlines, by design, eliminate such friction to maximize seamlessness and reach, aligning with neoliberal media ideologies that equate efficiency with public good. People commonly associate 'good media' with immediacy and broad access, but the underappreciated reality is that controlled inefficiencies in headline creation serve as democratic safeguards against epistemic cascades, making their erosion a systemic risk beyond individual ethics.

Editorial Drift

NPR’s experimentation with AI-assisted headline generation in its digital news feed has led to measurable tonal shifts, where urgent or polarizing frames outperform neutral reporting in audience reach, reinforcing a feedback loop that marginalizes understated but high-signal stories. The mechanism operates through A/B testing infrastructure inherited from commercial tech platforms, which NPR adopted to remain competitive in digital traffic environments dominated by private actors like Google and Apple News. What remains underappreciated is that the ethical cost is not primarily about deceit or bias, but the gradual erosion of a shared editorial rhythm—built over decades—that once synchronized tone, depth, and public need.
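The selection step behind such a feedback loop can be illustrated with a minimal sketch. It assumes a simple A/B test in which the variant with the higher click-through rate wins; the `Variant` class, the `frame` labels, the headlines, and all numbers are hypothetical and do not describe any broadcaster's actual tooling or data.

```python
from dataclasses import dataclass


@dataclass
class Variant:
    """One headline variant in a hypothetical A/B test."""
    headline: str
    frame: str        # illustrative editorial label: "neutral" or "urgent"
    impressions: int
    clicks: int

    @property
    def ctr(self) -> float:
        # Click-through rate; 0.0 when the variant was never shown.
        return self.clicks / self.impressions if self.impressions else 0.0


def pick_winner(variants):
    """Return the variant with the highest CTR, ignoring tone entirely."""
    return max(variants, key=lambda v: v.ctr)


def frame_share(winners):
    """Fraction of winning headlines per frame label."""
    counts = {}
    for w in winners:
        counts[w.frame] = counts.get(w.frame, 0) + 1
    return {frame: n / len(winners) for frame, n in counts.items()}


# Made-up test results for three stories.
tests = [
    [Variant("Council approves budget", "neutral", 1000, 30),
     Variant("Budget fight erupts at council", "urgent", 1000, 45)],
    [Variant("School board sets calendar", "neutral", 1000, 50),
     Variant("Parents blindsided by calendar", "urgent", 1000, 40)],
    [Variant("Transit plan advances", "neutral", 800, 16),
     Variant("Transit plan sparks backlash", "urgent", 800, 40)],
]

winners = [pick_winner(t) for t in tests]
share = frame_share(winners)
print(share)
```

Because `pick_winner` looks only at CTR, the `frame` label never enters the decision. Any tonal drift shows up only afterwards, in an aggregate such as `frame_share`, which is one way to read the point that the cost is gradual rather than a single deceptive act.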

Public Trust Arbitrage

Germany’s ARD public broadcasting network faces internal dissent after integrating AI tools from analytics platforms derived from Axel Springer SE to refine headlines for cross-platform virality, a move that leverages the network’s publicly funded credibility to amplify algorithmically tested emotional hooks. This fusion exploits public trust, earned through regulatory independence and public funding, as a substrate for the kind of audience growth typically pursued by commercial media, effectively arbitraging institutional legitimacy for digital scale. The overlooked dynamic is that the ethical concerns are not just about diminished judgment but about the covert privatization of public media’s symbolic capital, where reach becomes a proxy for relevance regardless of civic function.