Is the AI Ethics Board Push About Standards or Politics?

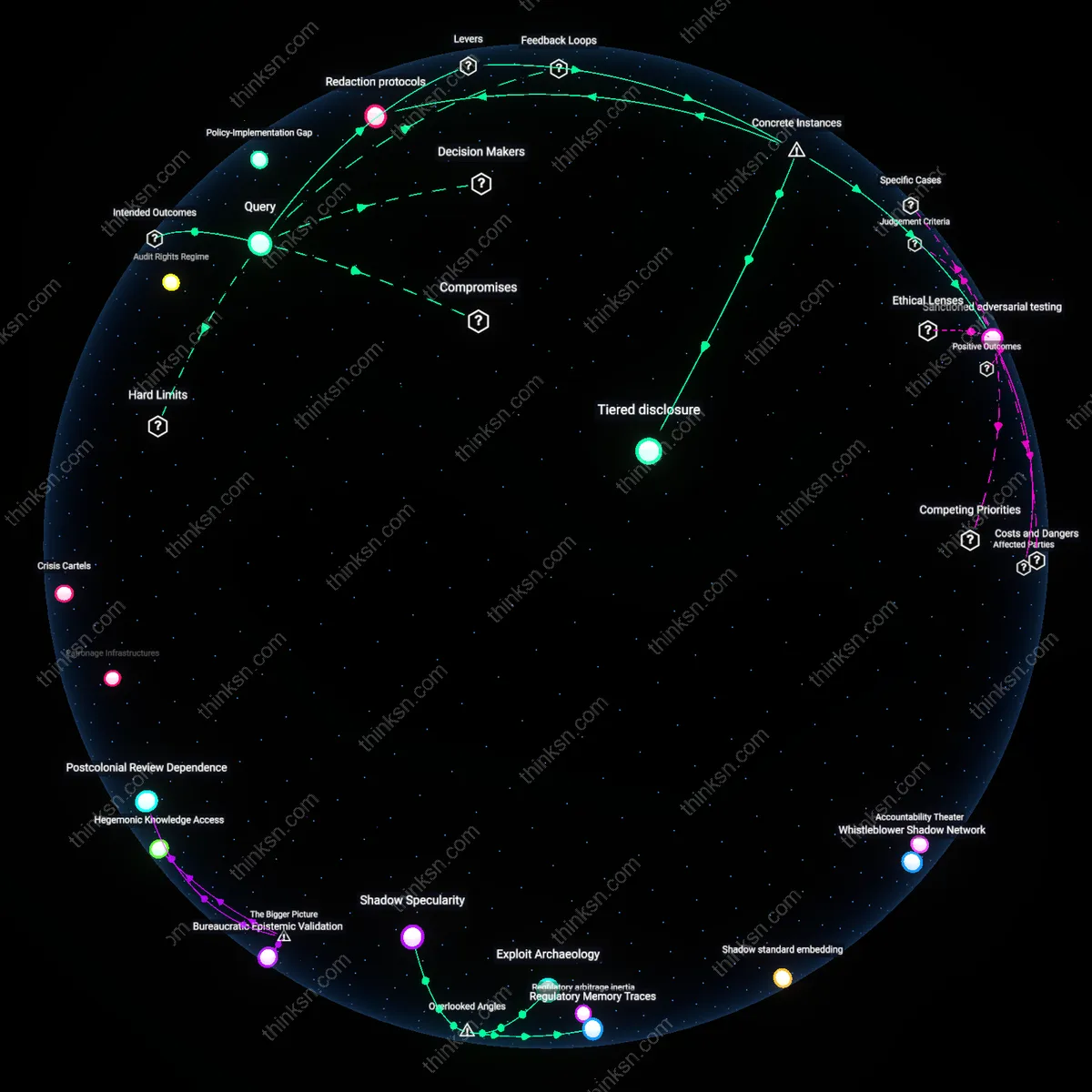

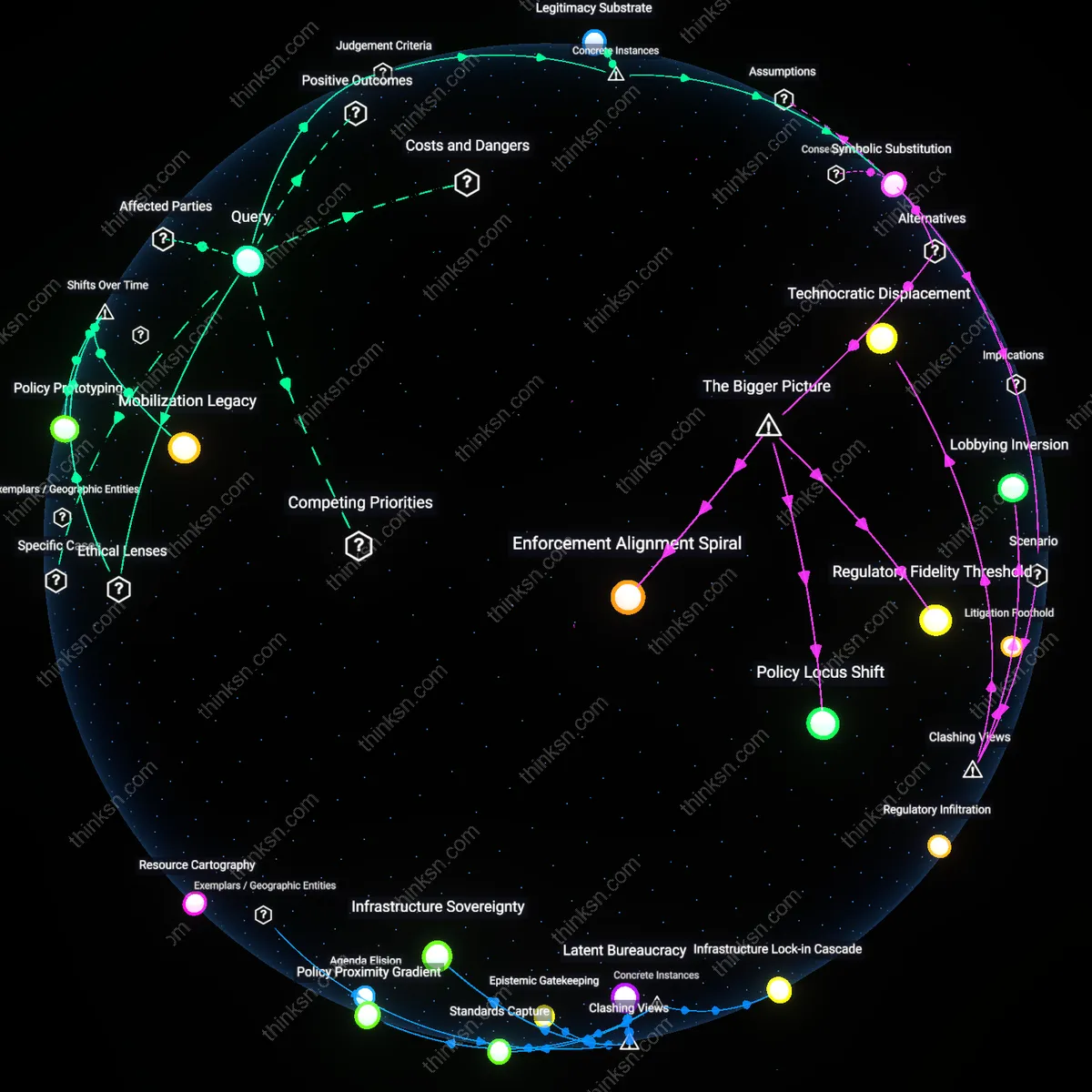

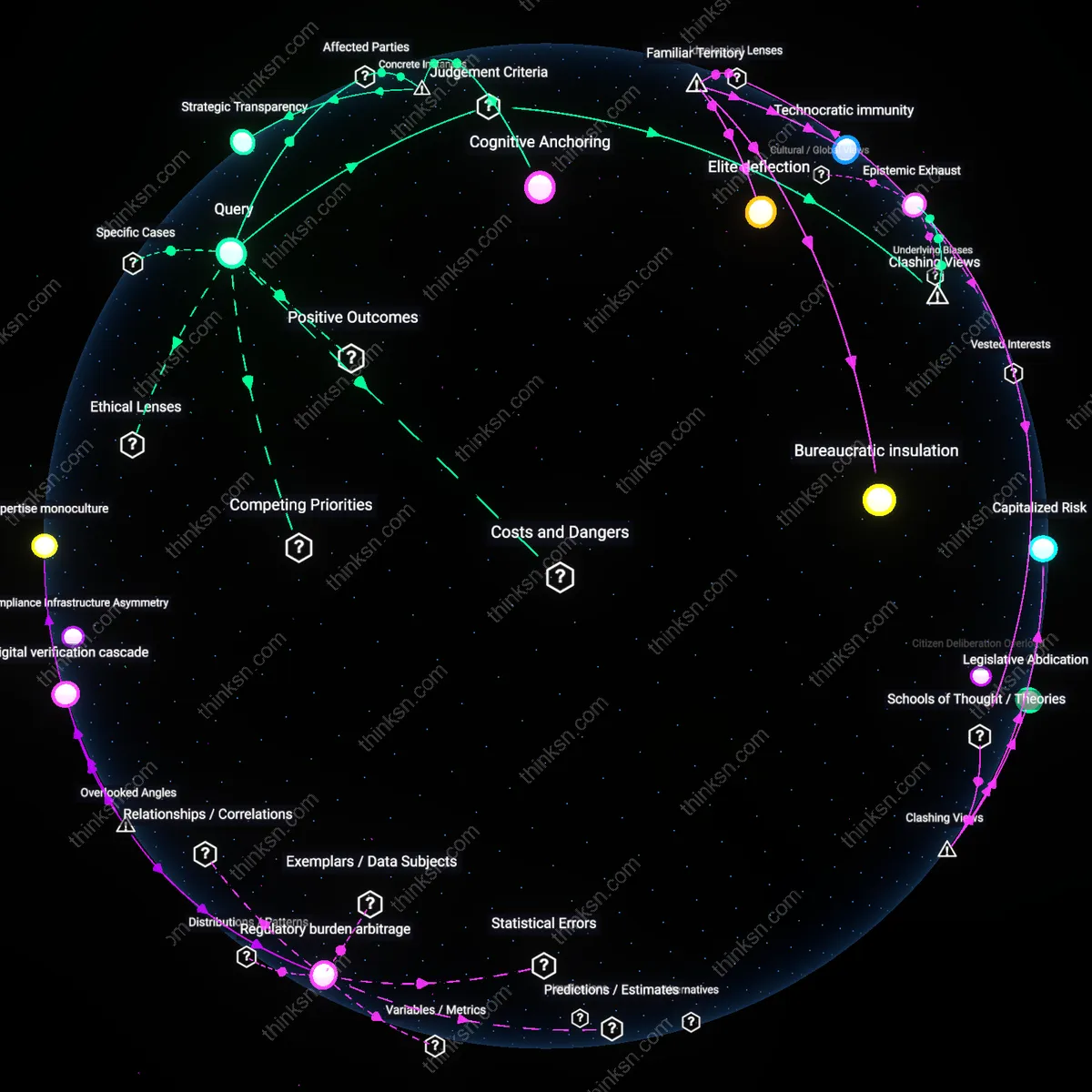

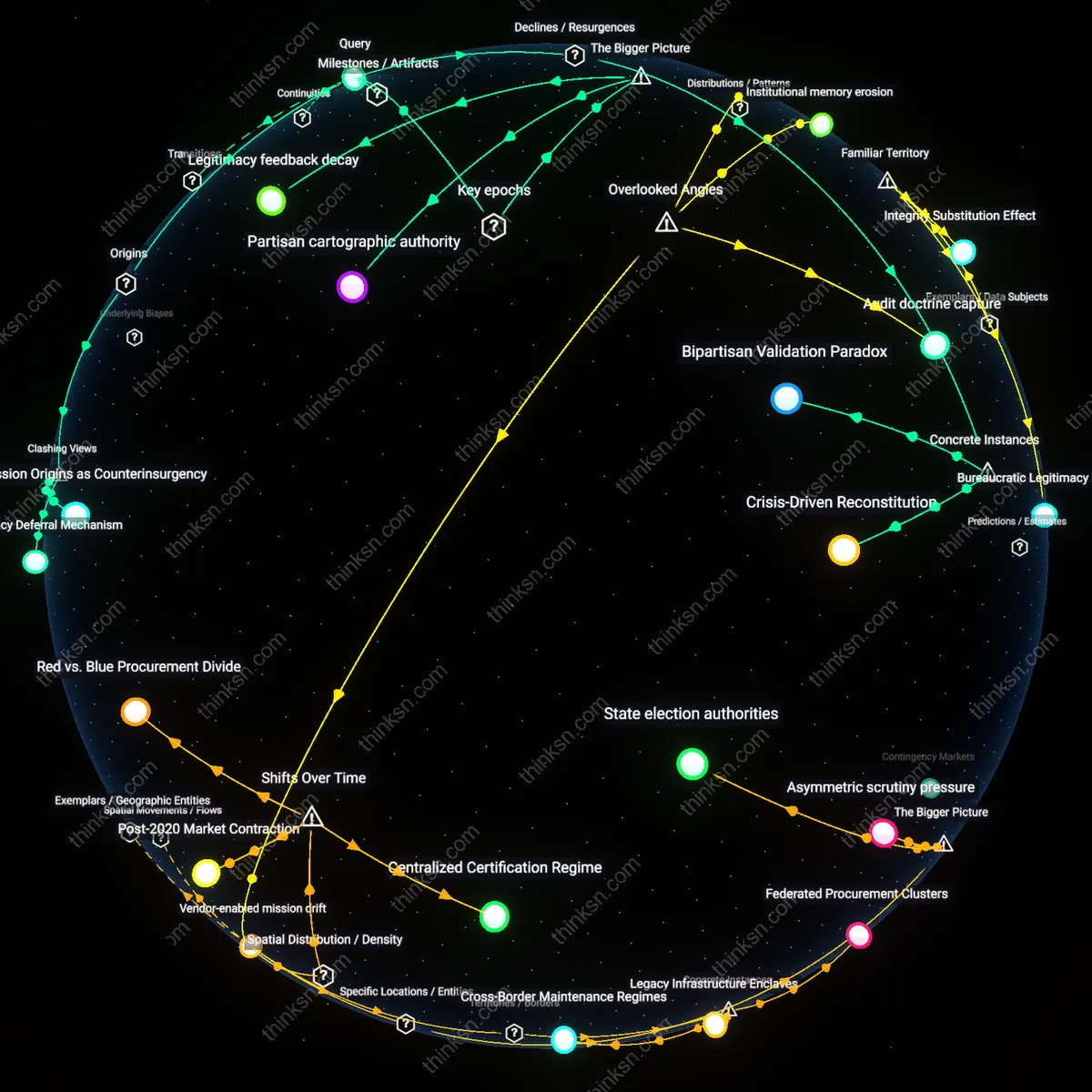

Analysis reveals 6 key thematic connections.

Key Findings

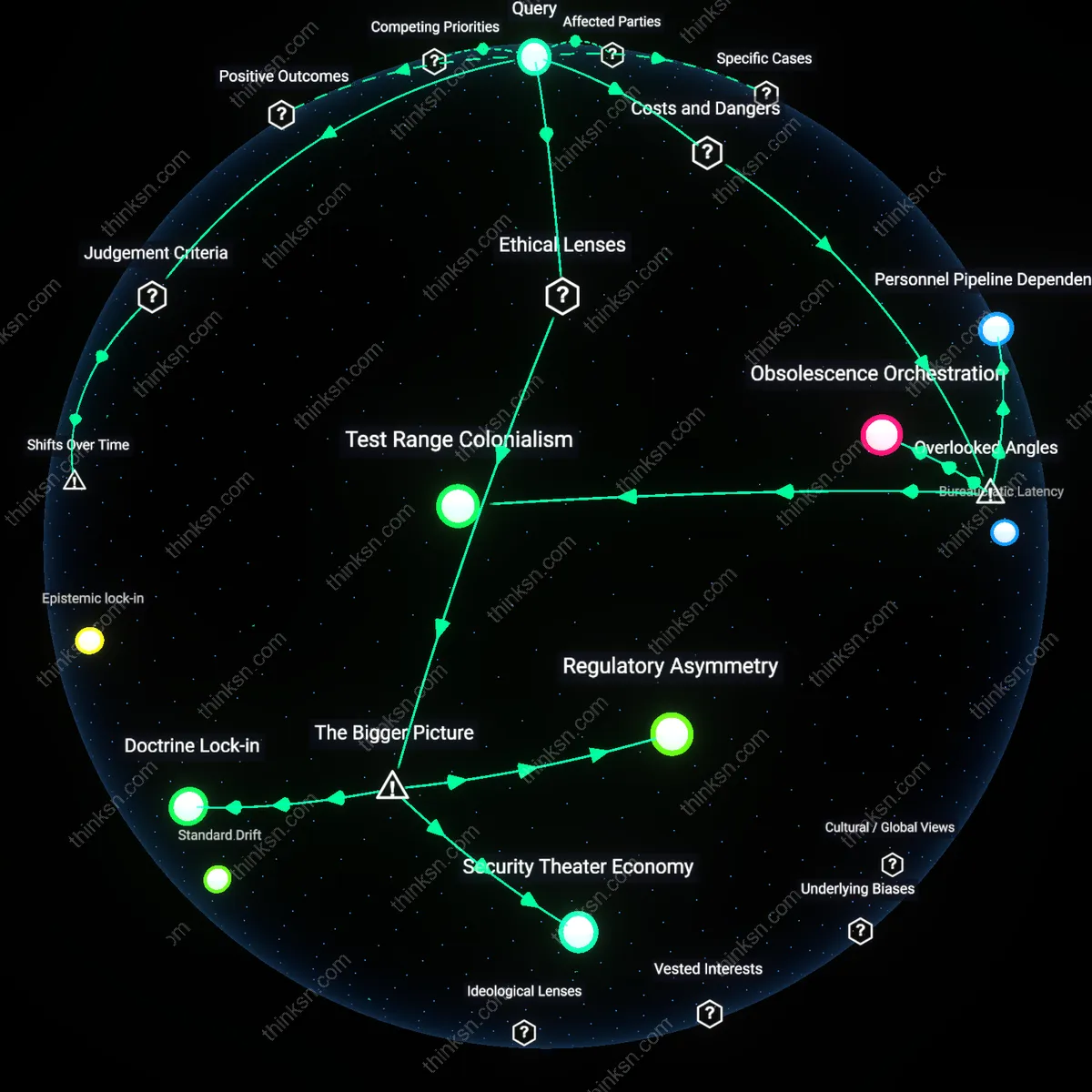

Regulatory Legitimacy

The primary motivation behind the push for a U.S. federal AI ethics board is to establish coordinated standards: regulatory agencies and industry stakeholders want uniform guidelines to manage liability, interoperability, and public trust. The effort operates through federal interagency coordination (for example, among the OSTP, NIST, and the FCC), where standardized frameworks reduce fragmentation in state-level policymaking and private-sector compliance. What is often overlooked is that, despite the partisan noise, the core demand comes from tech firms themselves, which prefer a predictable federal baseline to a patchwork of conflicting state laws. That makes the push a play for governance stability rather than for ideological control.

Narrative Sovereignty

The primary motivation is to give political actors influence over the narrative on emerging technologies: elected officials and party-aligned think tanks use the ethics board proposal to frame AI development in terms of morality and national competitiveness. This works through media visibility and congressional spectacle, such as high-profile hearings that position lawmakers as guardians of public values against corporate technocracy. The underappreciated reality is that the board's symbolic function, projecting oversight even without enforcement power, matters more than its technical output, because it lets political actors claim ownership of AI's societal meaning amid election cycles and geopolitical rivalry.

Institutional Consensus

The push reflects an ideological compromise among technocratic liberals who believe expert-driven, neutral oversight can balance innovation and civil rights, contrasting with both conservative skepticism of federal overreach and Marxist critiques of capital-state collusion. This is institutionalized through advisory bodies like the National AI Advisory Committee, where academic researchers and corporate ethicists converge on procedural norms that avoid structural challenges to ownership or labor displacement. The unstated truth is that this consensus deliberately excludes grassroots AI justice movements, framing ethics as technical calibration rather than power redistribution.

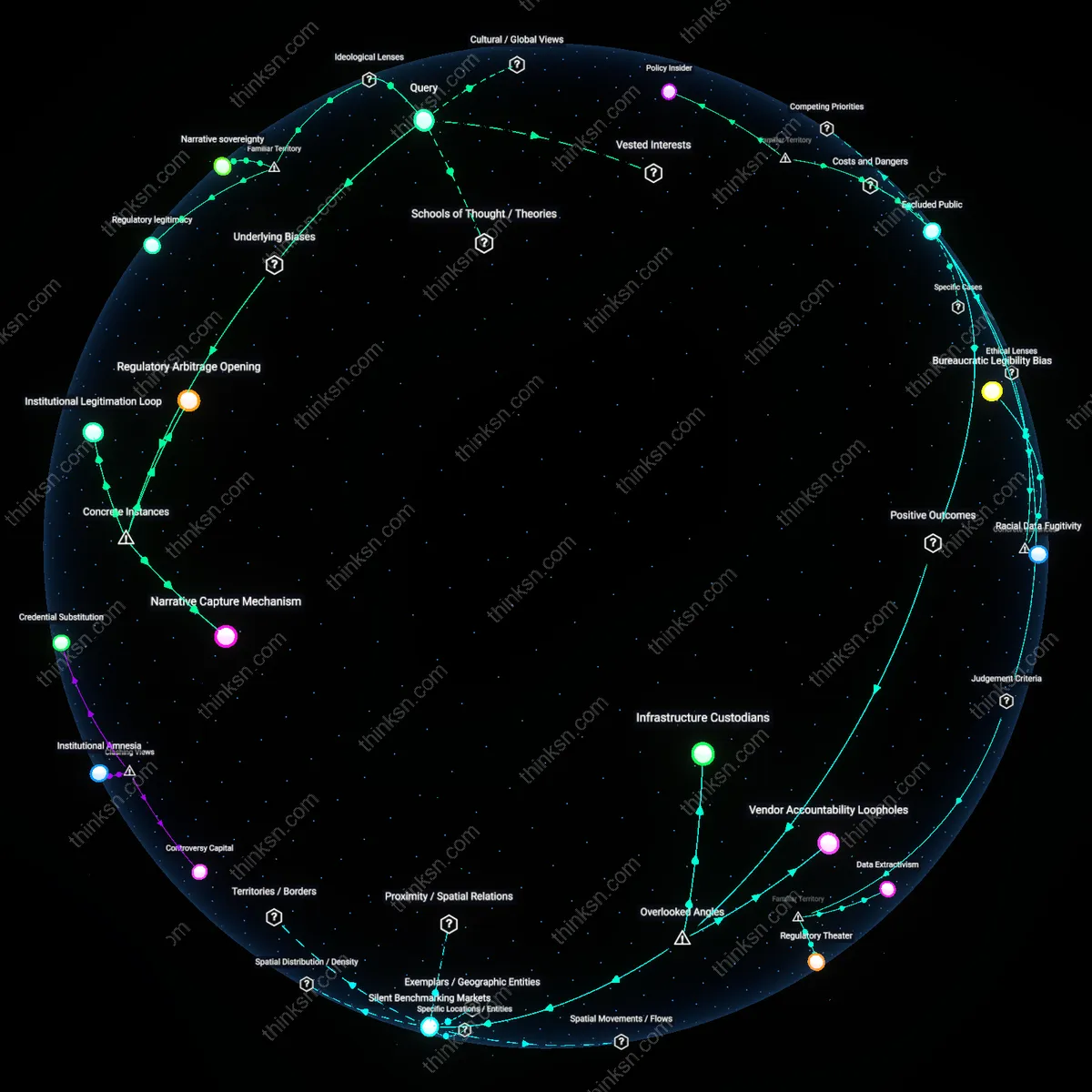

Regulatory Arbitrage Opening

The primary motivation behind the push for a U.S. federal AI ethics board is to preempt state-level regulatory fragmentation by creating a centralized, industry-friendly standard that shields dominant tech firms from stricter local oversight, much as Meta (then Facebook) and Google lobbied for federal privacy legislation after California enacted the CCPA in 2018. Under this mechanism, national standards function as a ceiling rather than a floor: federal preemption invalidates more stringent state rules, which systematically benefits Silicon Valley corporations by reducing compliance variability. The non-obvious insight is that standardization is not neutral. It institutionalizes corporate preferences under the guise of coordination, enabling firms to shape ethics as operational constraints rather than transformative accountability.

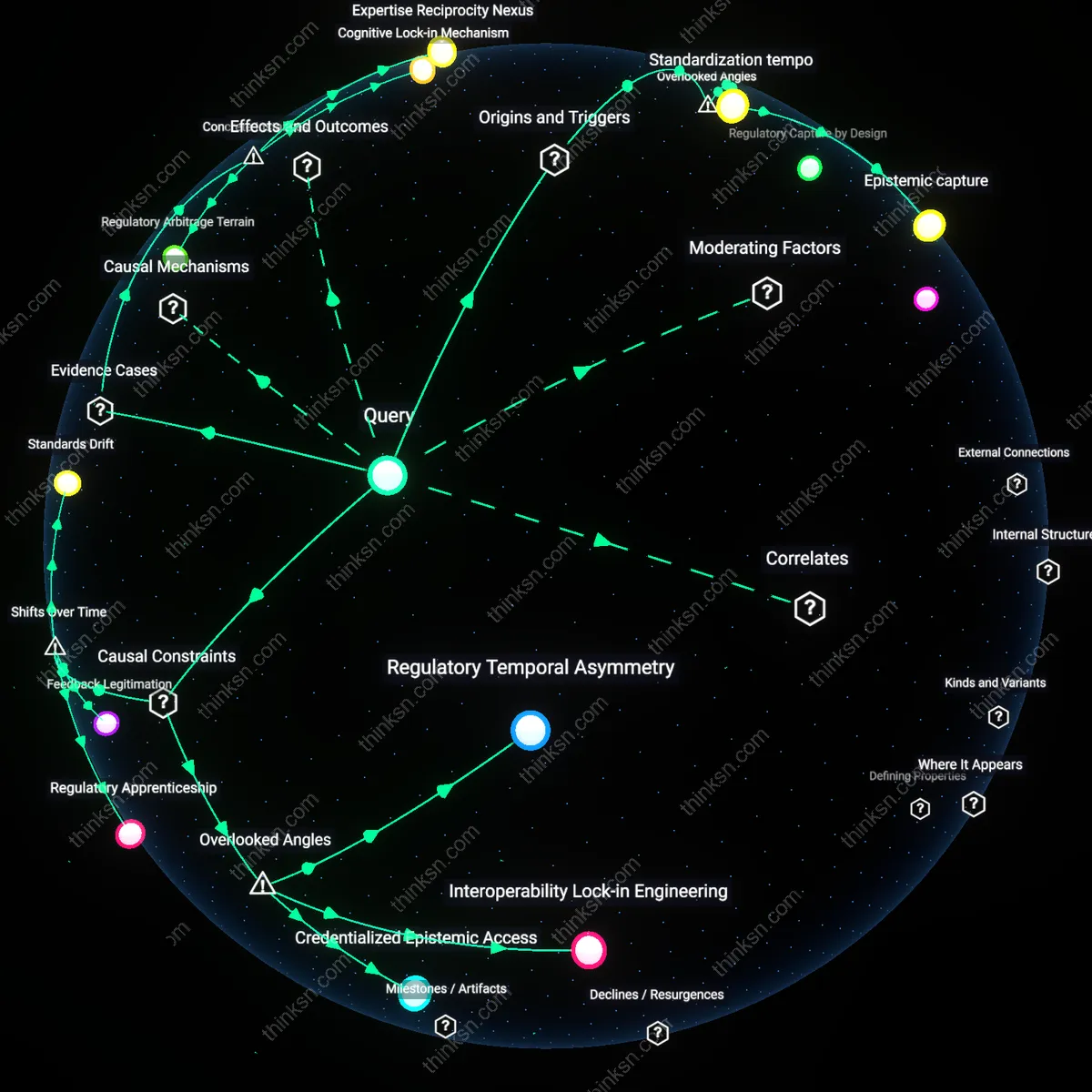

Narrative Capture Mechanism

The primary motivation is to position federal institutions as authoritative arbiters of AI legitimacy, exemplified by the White House's October 2022 release of the Blueprint for an AI Bill of Rights, which elevated administration-aligned technocrats and sidelined civil society experts in defining ethical boundaries. This effort operationalized ethics discourse through executive branding rather than legislative process, embedding political narratives about innovation and safety that prioritize continuity of technological investment over democratic scrutiny. The underappreciated dynamic is that ethics boards function less as oversight bodies than as signaling platforms, where control over language and classification systems enables political actors to manage public perception and marginalize dissenting analyses.

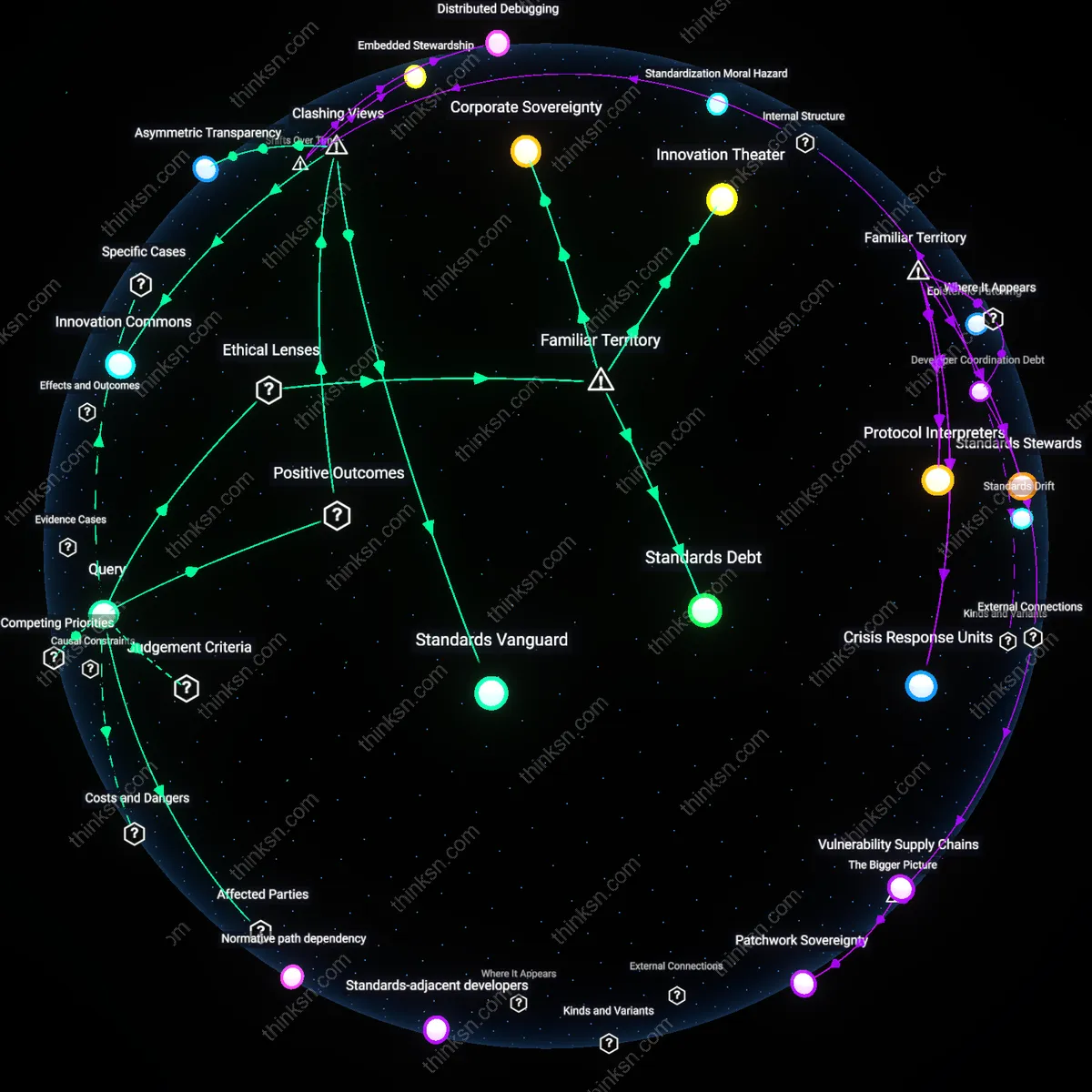

Institutional Legitimation Loop

The push originates from federal research agencies seeking to maintain funding dominance by aligning AI development with state-defined ethical frameworks, illustrated by the role of the National Institute of Standards and Technology (NIST) as developer and lead coordinator of the AI Risk Management Framework, released as version 1.0 in January 2023. NIST's neutral posture enabled it to absorb competing stakeholder inputs while ultimately privileging defense and corporate partners through closed-door workshops and proprietary testing benchmarks, reinforcing its role as gatekeeper of technical legitimacy. The overlooked consequence is that ethics standardization becomes a self-referential process in which the authority to define risk consolidates within existing bureaucratic hierarchies, turning ethics into a credentialing mechanism rather than a constraint on power.