Is Screen Time Educational or Just Commercial Pressure?

Analysis reveals 12 key thematic connections.

Key Findings

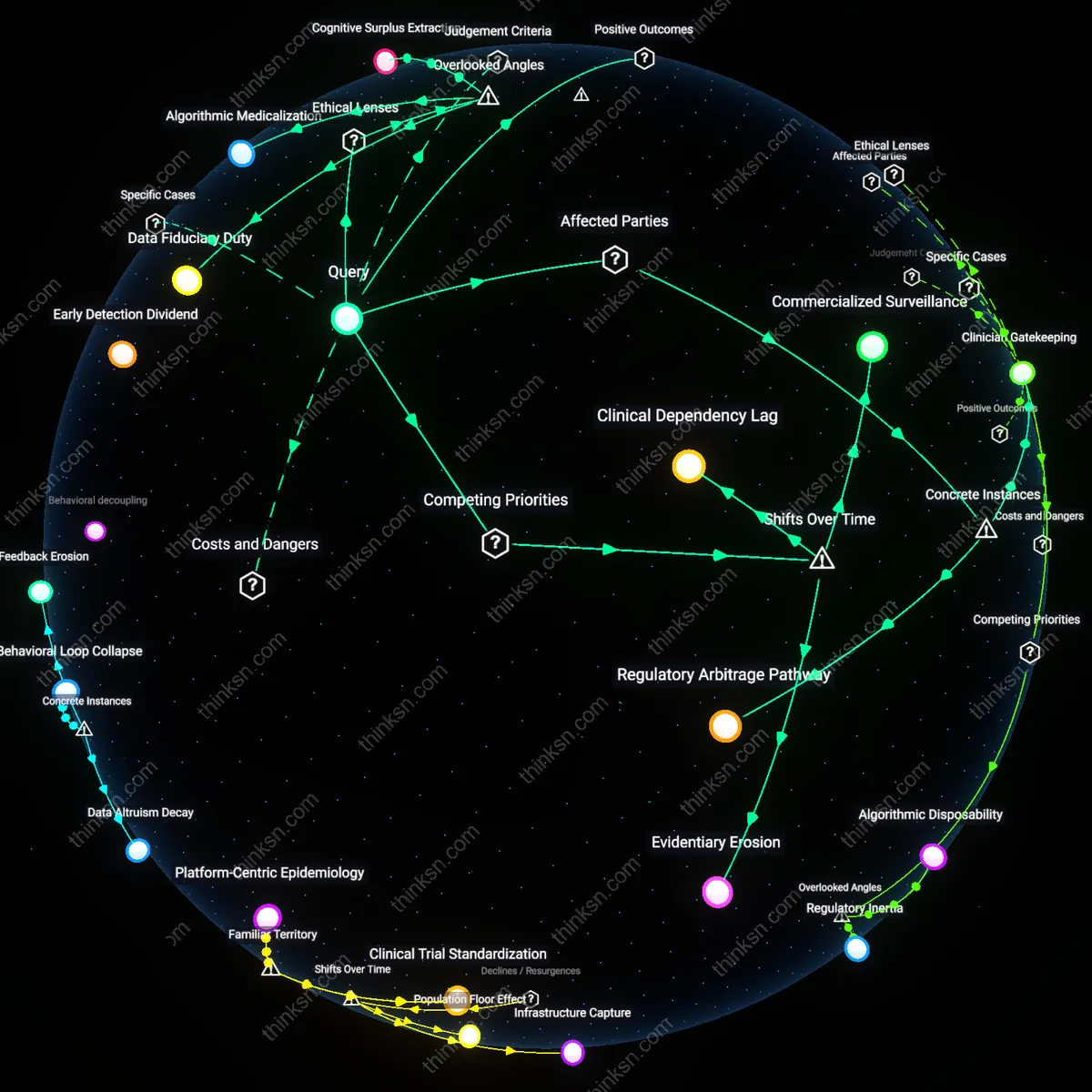

Methodological Capture

Commercial pressure from app developers distorts screen time research by funding only studies with built-in design biases that favor positive outcomes. Developers channel resources into academic partnerships that prioritize engagement metrics over learning efficacy, run trials too short to detect cognitive trade-offs, and omit control groups that use non-digital interventions. The resulting evidence appears rigorous but systematically leaves out comparative harm. This undermines the epistemic foundation of educational technology research not through data falsification but through the strategic colonization of inquiry frameworks, a non-obvious form of influence that evades traditional conflict-of-interest scrutiny.

Pedagogical Greenwashing

App developers reshape the meaning of 'educational benefit' itself by redefining cognitive outcomes to match what their products can measure, such as task completion speed or level progression, rather than externally validated skills like critical thinking or knowledge retention. This reframing lets commercially driven actors claim efficacy on their own terms and shifts the epistemology of learning from depth to trackability, which in turn distorts policy and parental perception. The distortion lies not in suppressing data but in altering the criteria of value: a hidden institutional substitution that appears neutral but advances market logic into pedagogical judgment.

Evidence Asymmetry Regime

Negative findings on screen-based learning are structurally disincentivized because independent researchers lack access to user data held by private platforms, while developers publish only the favorable results from those same datasets, creating a skewed public record. This asymmetry is institutionalized through proprietary algorithms that prevent replication studies and through terms of service that block third-party monitoring of cognitive impact, effectively making disconfirming evidence unobservable. The resulting evidentiary distortion is due not to biased interpretation but to a data monopoly that makes falsification impossible. It is a challenge to the scientific norm of open verification, and one that remains invisible in meta-analyses.

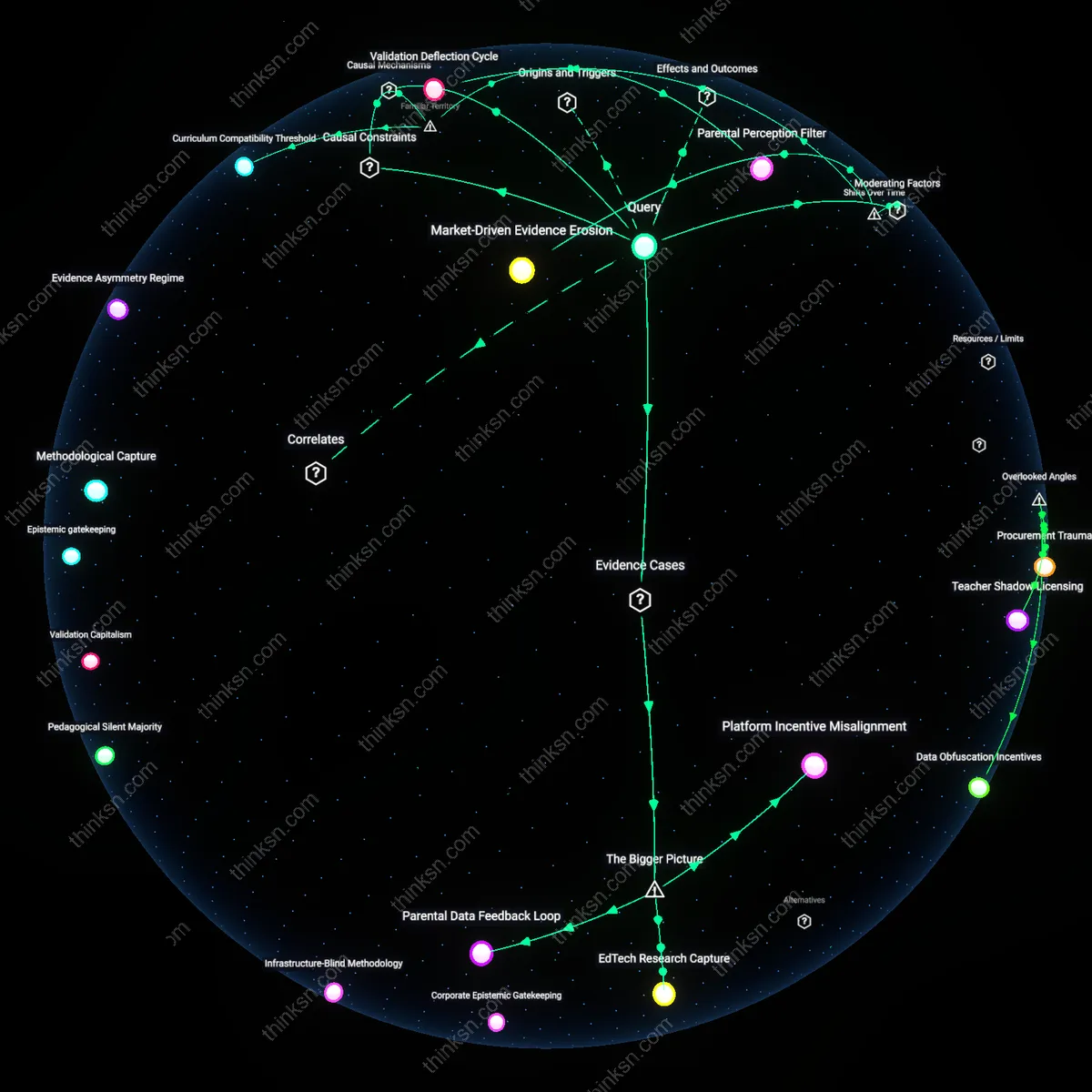

Market-Driven Evidence Erosion

Commercial pressure from app developers has increasingly redefined what counts as 'evidence' of educational benefit by privileging scalable engagement metrics over longitudinal learning outcomes, particularly after 2012, when venture capital flooded into edtech startups targeting K–12 schools. This shift replaced curriculum-aligned efficacy studies with A/B-tested user retention data and repositioned screen time as inherently beneficial whenever it sustains attention, effectively altering the standard of proof. The non-obvious consequence is not just biased research but the institutional displacement of developmental psychology by product analytics as the normative evaluator of educational value.

Validation Deflection Cycle

As school districts began adopting one-to-one device programs between 2015 and 2019, app developers circumvented traditional pedagogical validation by aligning their products with state digital infrastructure rollouts, making screen time a logistical inevitability before educational claims could be assessed. By embedding apps into district-wide learning management systems, developers shifted the burden of proof from 'Does this improve learning?' to 'Can schools function without it?' This reversal, in which operational dependence precedes evidentiary review, reveals how infrastructural integration during a phase of rapid digitization neutralized critical evaluation cycles.

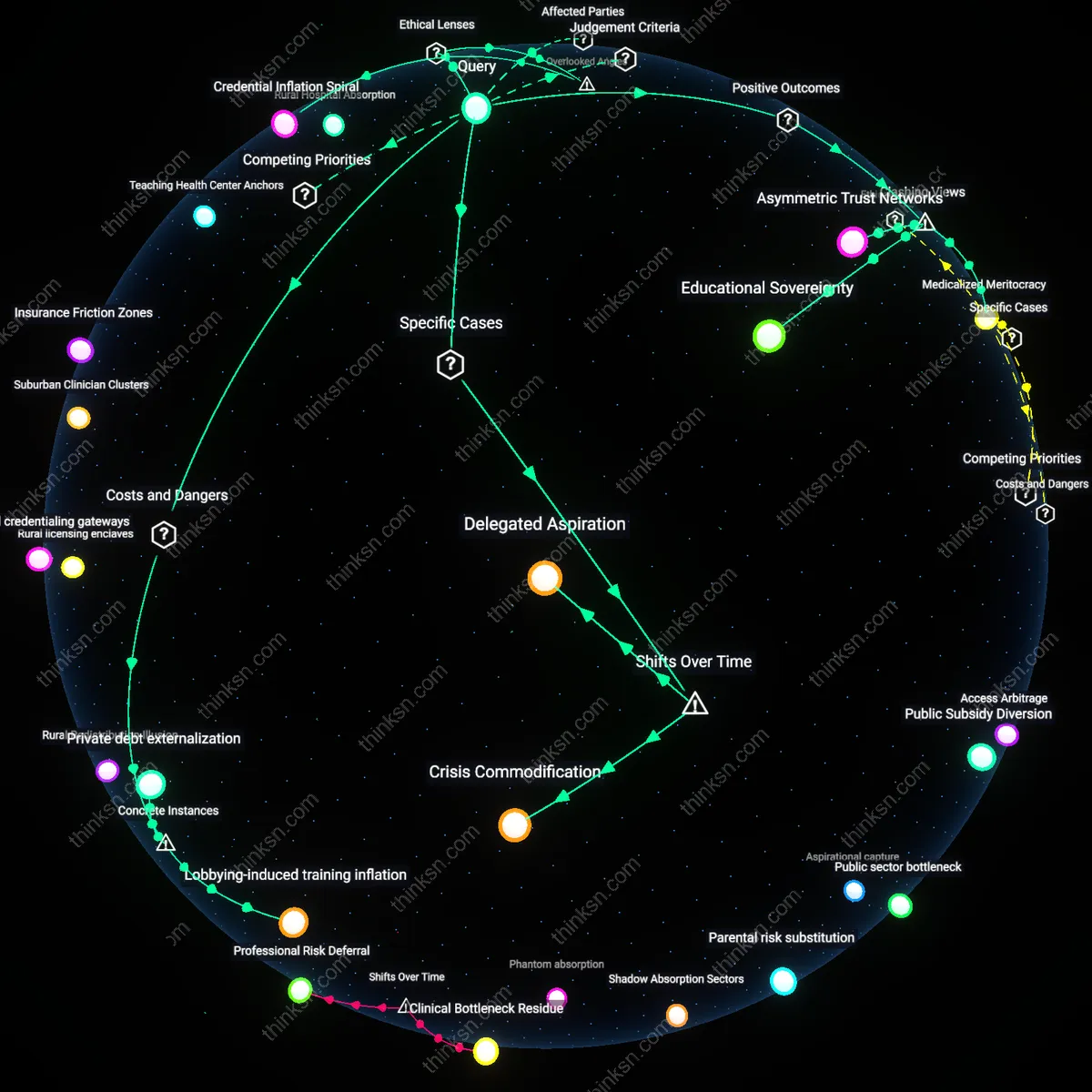

Credibility Arbitrage

Following the surge in remote learning during 2020–2021, app developers exploited the collapse of controlled classroom environments by rebranding recreational screen-based activities as 'emergency education solutions', leveraging crisis temporality to bypass peer-reviewed validation altogether. Platforms originally designed for gamified entertainment retrofitted claims of academic support, capitalizing on policymakers’ urgent need for deployable tools; this pivot transformed commercial viability into de facto evidence of utility. The underappreciated shift here is not mere exaggeration, but the deliberate use of temporal disarray to offload evidentiary responsibility onto overwhelmed school administrators acting in real-time triage.

Market-Driven Research Bias

Commercial pressure distorts evidence because app developers fund studies that prioritize engagement metrics over longitudinal learning outcomes. Tech companies sponsor research through university partnerships, where the design of screen time efficacy trials often incorporates proprietary app usage data, creating embedded conflicts of interest. The non-obvious twist is that these studies appear independent but are methodologically constrained by access to data controlled by the developers themselves, which systematically excludes comparisons with non-digital interventions.

Curriculum Compatibility Threshold

Commercial apps shape evidence by aligning only with curriculum standards that are easily digitized, such as multiple-choice math drills, while ignoring complex literacies like critical thinking. Developers produce evidence based on performance gains in narrow, testable domains where screen-based instruction can demonstrate quick wins, thus defining 'educational benefit' in ways that match their product scope. The underappreciated reality is that this creates a false ceiling on what counts as valid learning, marginalizing slower, qualitative development that resists app-based measurement.

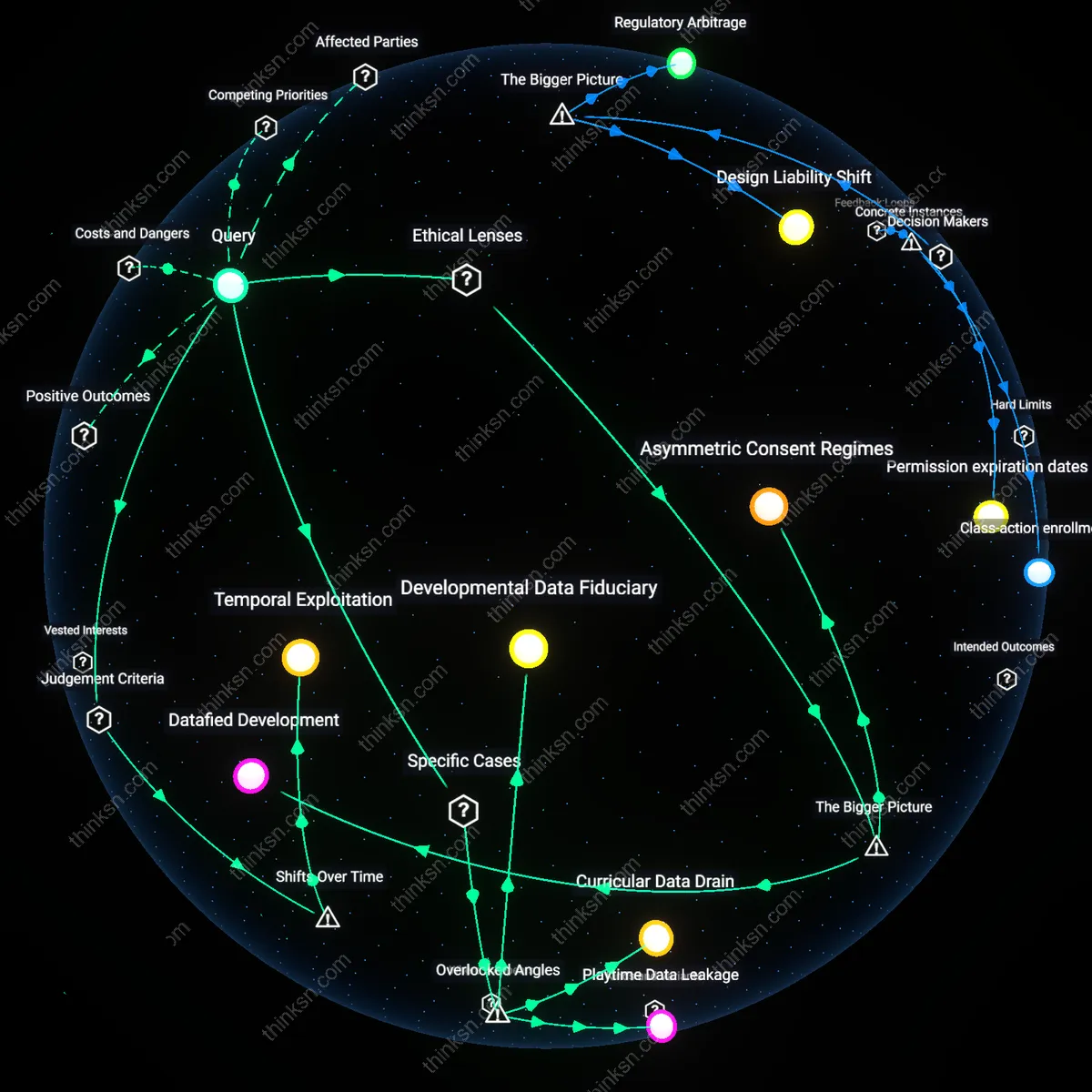

Parental Perception Filter

App developers influence evidence by shaping what parents recognize as 'learning' through real-time progress dashboards and gamified rewards visible in home environments. These interfaces generate anecdotal validation that circulates in social networks and PTA discussions, retroactively pressuring educators to accept screen time as beneficial even when independent studies contradict it. The overlooked mechanism is that commercial UI design choices, such as animated achievement badges, function as perceptual evidence, short-circuiting scientific deliberation with emotionally salient, immediately visible 'proof'.

Platform Incentive Misalignment

Commercial pressure from app developers distorts educational screen time evidence by rewarding engagement-oriented platform design over pedagogical validity. Companies like TikTok and YouTube optimize for engagement metrics, not learning outcomes, steering research agendas and public perception toward usage patterns that sustain attention rather than cognitive development. This creates a structural bias in which studies highlighting benefits are disproportionately funded or amplified when they align with business models reliant on frequent, prolonged interaction. The non-obvious consequence is not mere bias in data but the active reshaping of what counts as 'evidence' within policy and academic discourse, driven by the dominance of platform-generated usage analytics.

EdTech Research Capture

App developers distort screen time research by directing academic collaboration toward proprietary tools embedded in school curricula, as seen with companies like DreamBox Learning and Khan Academy integrating analytics into district-wide deployments. These partnerships enable developers to co-define metrics of success, such as 'time-on-task' or 'completion rates', which substitute measurable engagement for validated learning gains. The systemic dynamic lies in the quiet transfer of evaluative authority from independent researchers to product teams who control access to user data and frame its interpretation. What remains underappreciated is how institutional dependence on such platforms compromises ex-post evaluation, making it nearly impossible to disentangle educational benefit from commercial interest.

Parental Data Feedback Loop

The commercialization of family-facing apps like ABCmouse or Sago Mini skews screen time evidence by generating personalized efficacy narratives from behavioral data, which are then marketed back to parents as proof of learning impact. Through A/B testing and machine learning, these platforms construct convincing but ungeneralizable 'success stories' based on engagement trends rather than standardized assessment outcomes. This creates a feedback loop where perceived educational value is validated privately (in homes) but never subjected to public scrutiny, allowing commercial actors to bypass traditional scientific gatekeeping. The overlooked mechanism is the shift from peer-reviewed evidence to experiential affirmation, eroding collective standards for what constitutes valid educational benefit.