Is Sharing Health Data for Education Worth the Privacy Risk?

Analysis reveals 8 key thematic connections.

Key Findings

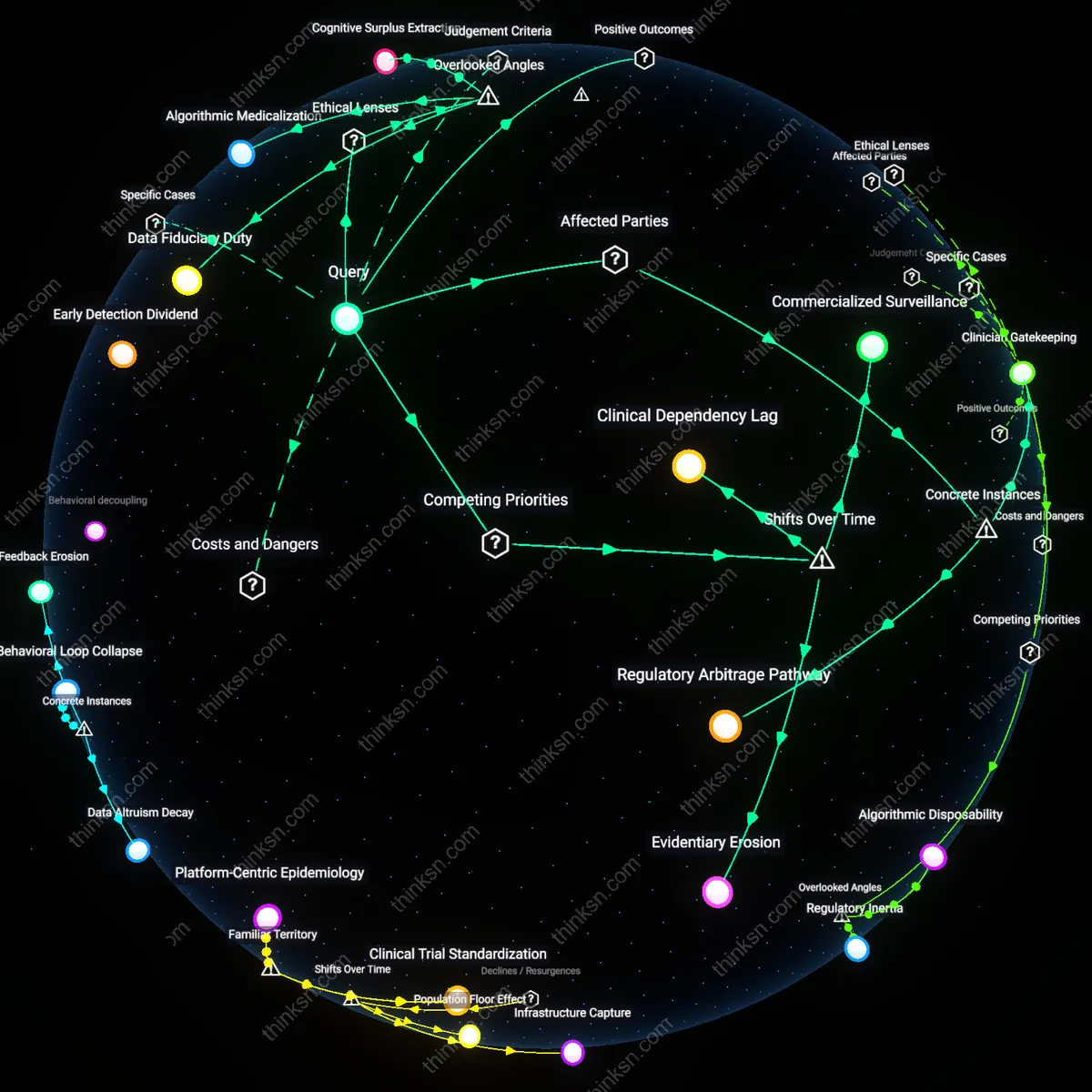

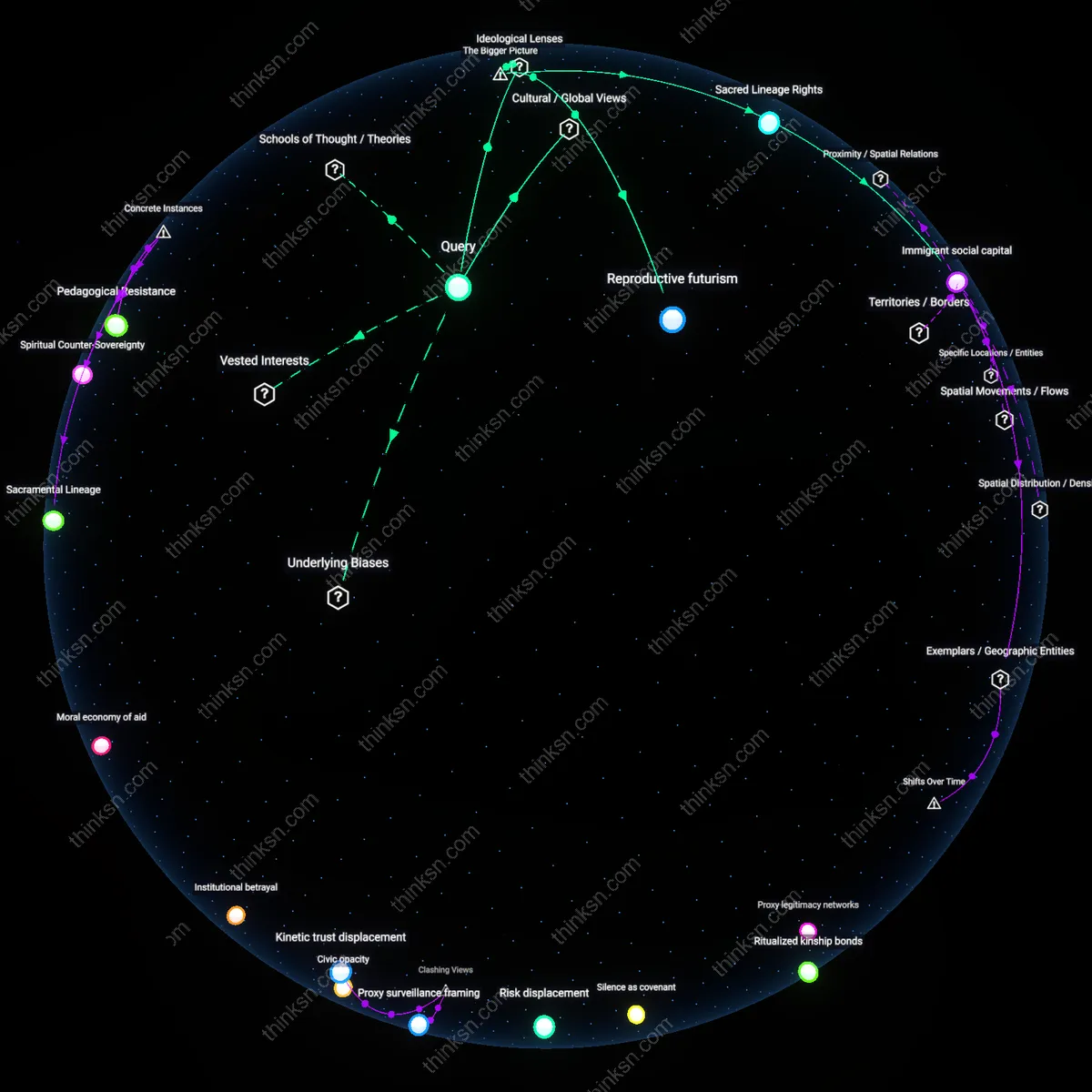

Consent Drift

Parents should pay close attention to how meaningful consent mechanisms erode over time. Since the consolidation of digital advertising in the 2010s, child data practices have shifted from passive collection in isolated apps to continuous, cross-platform surveillance ecosystems. This transformation renders static privacy policies ineffective: adaptive learning algorithms reclassify health indicators as behavioral commodities, embedding data extraction into the developmental arc of childhood itself. The non-obvious outcome is that parental consent becomes performative, masking a systemic shift in which data is repurposed beyond educational contexts despite nominal compliance with regulations like COPPA.

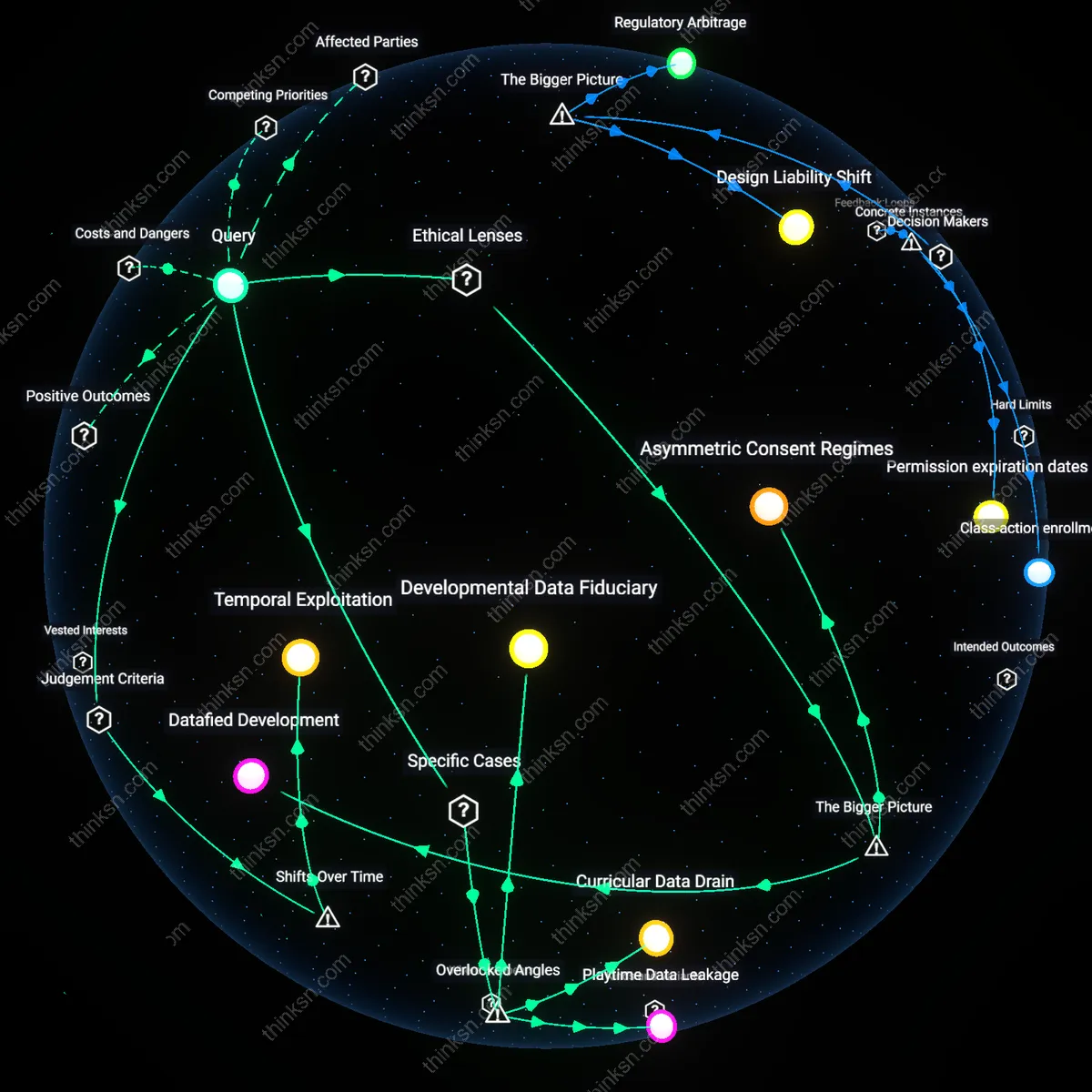

Temporal Exploitation

Parents ought to consider how health data collected during critical developmental windows, such as early literacy or emotional regulation phases, can be exploited years later because of weakened data retention limits. This risk has grown since the mid-2010s, when the commodification of cloud storage enabled indefinite archiving. Longitudinal behavioral profiles built from pediatric inputs become high-value assets for predictive modeling in insurance, hiring, or behavioral advertising, creating lifetime vulnerabilities from childhood decisions made under information asymmetry. The underappreciated consequence is that temporary educational tools generate permanent digital phenotypes shaped by developmental timing, not just content.

Datafied Development

A parent should refuse health data collection because children’s developmental vulnerability is systematically exploited by edtech firms operating under weak regulatory oversight, enabling the normalization of surveillance during formative life stages. Commercial apps leverage ambiguously governed spaces in child privacy law—such as the gaps in COPPA enforcement for indirect data use—to embed data extraction into educational routines, effectively turning learning behaviors into biometric training sets. This mechanism is powered by the convergence of venture-backed platform expansion and under-resourced public education systems, where schools outsource digital pedagogy to third parties lacking fiduciary duties to students. The non-obvious consequence is not mere privacy loss but the institutional inscription of developmental processes into predictive algorithms that classify and channel children’s futures before autonomy is fully formed.

Asymmetric Consent Regimes

A parent should treat consent to health data collection as a structurally coerced decision, not a voluntary choice, because the burden of data protection is displaced onto individual families within a market where opting out often means forfeiting educational access. Edtech platforms are embedded in school-issued devices and curricula, creating de facto mandatory use while offering opaque, take-it-or-leave-it terms that simulate consent without enabling informed refusal—a condition sustained by the privatization of public education infrastructure and the lack of enforceable data rights. This imbalance reflects a broader neoliberal shift where citizenship protections are replaced by consumer contracts, rendering parents’ decisions less ethical choices than responses to systemic duress. The overlooked effect is that parental consent becomes a legal fig leaf that legitimizes data extraction while absolving governments and corporations of accountability.

Predictive Equity Gaps

A parent should withhold consent because health data collected through educational apps can be repurposed to train algorithmic models that reproduce social inequities under the guise of personalization, linking pediatric biometrics to risk-profiling systems in education and health. Even if data is anonymized, aggregation enables third-party actors—such as insurers, advertisers, or school districts using AI-driven interventions—to infer developmental delays or behavioral conditions, triggering resource allocation or disciplinary pathways that disproportionately affect marginalized communities. This occurs through feedback loops between machine learning systems and institutional decision-making, where early data shadows shape access to support services or advanced tracks. The hidden impact is that seemingly benign apps become upstream vectors for structural discrimination, operationalizing bias before a child ever enters formal assessment systems.

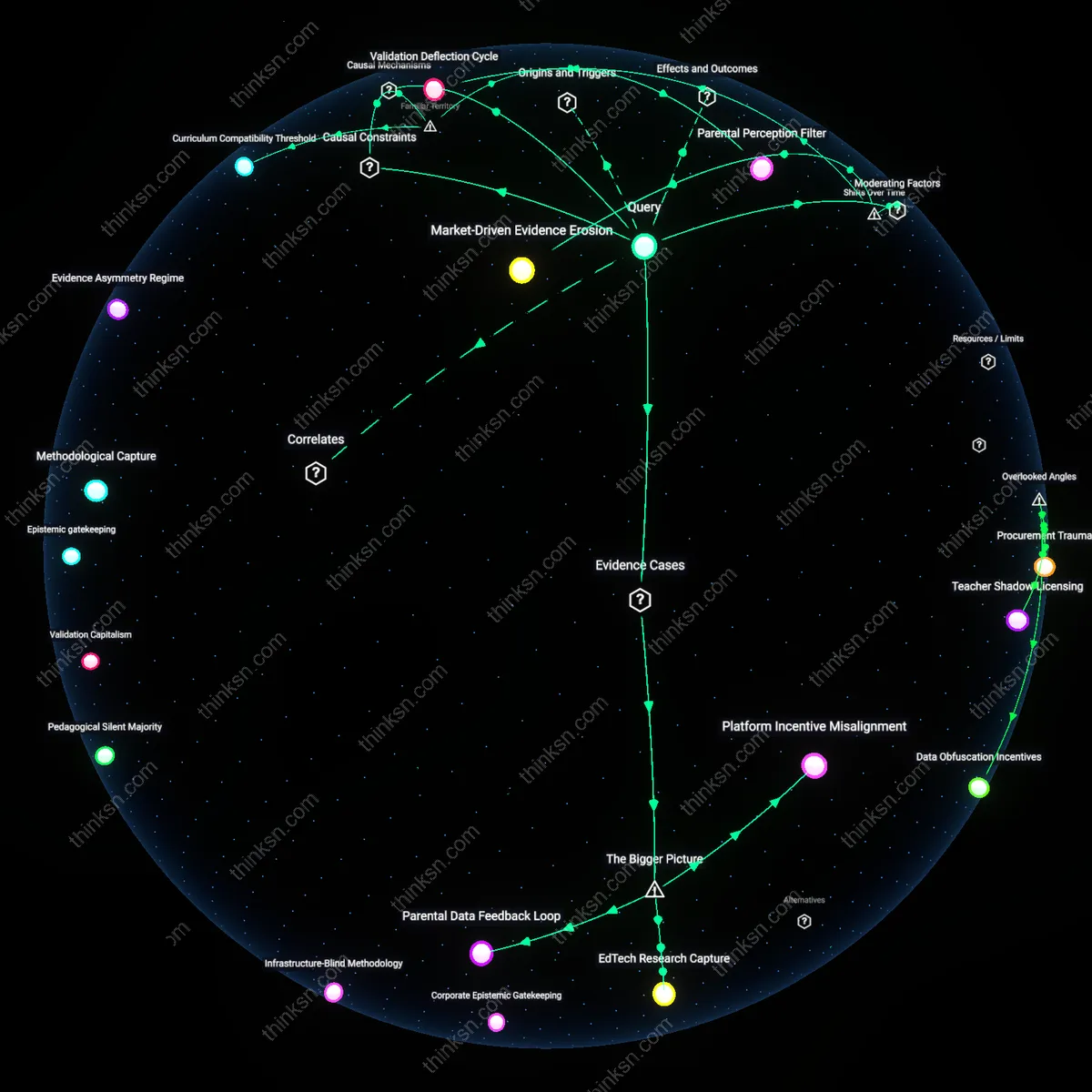

Developmental Data Fiduciary

Parents should refuse consent when an educational app lacks a legally designated developmental data fiduciary. In jurisdictions such as California, gaps in CCPA enforcement allow app developers such as Epic! Platforms Inc. to monetize behavioral biometrics, like reading fluency patterns or response-time variability, under the guise of ‘personalized learning.’ This practice covertly transforms health-adjacent signals into developmental risk profiles that influence future insurance or special education access. The dimension is overlooked because regulatory discourse focuses on consent rather than stewardship, obscuring how the absence of a mandated data guardian enables irreversible data exploitation during neuroplastic-sensitive childhood phases.

Curricular Data Drain

Parents must consider how an app’s integration into classroom routines creates a curricular data drain, as when Florida school districts adopted ClassDojo amid ambiguous FERPA interpretations. Teachers inadvertently reinforce data collection through reward systems tied to attention metrics, which makes refusal socially isolating for children and subtly pressures parents to consent even when privacy risks are high. This dynamic is typically ignored because oversight centers on corporate actors, not the pedagogical infrastructure that normalizes health-data extraction as part of learning engagement.

Playtime Data Leakage

Parents should recognize that health data extracted during gamified learning sessions can escape regulatory containment, as when the UK’s National Literacy Trust endorsed Lexplore Analytics’ eye-tracking apps used in after-school programs. Because ‘play behavior’ is not officially classified as medical observation, neurological indicators can accumulate unregulated under entertainment exemptions in data law. This loophole matters because classification determines oversight jurisdiction, and the failure to define certain interactions as diagnostic creates a blind spot where developmental surveillance occurs without clinical accountability.