Sleep Data for Science: Privacy vs. Progress?

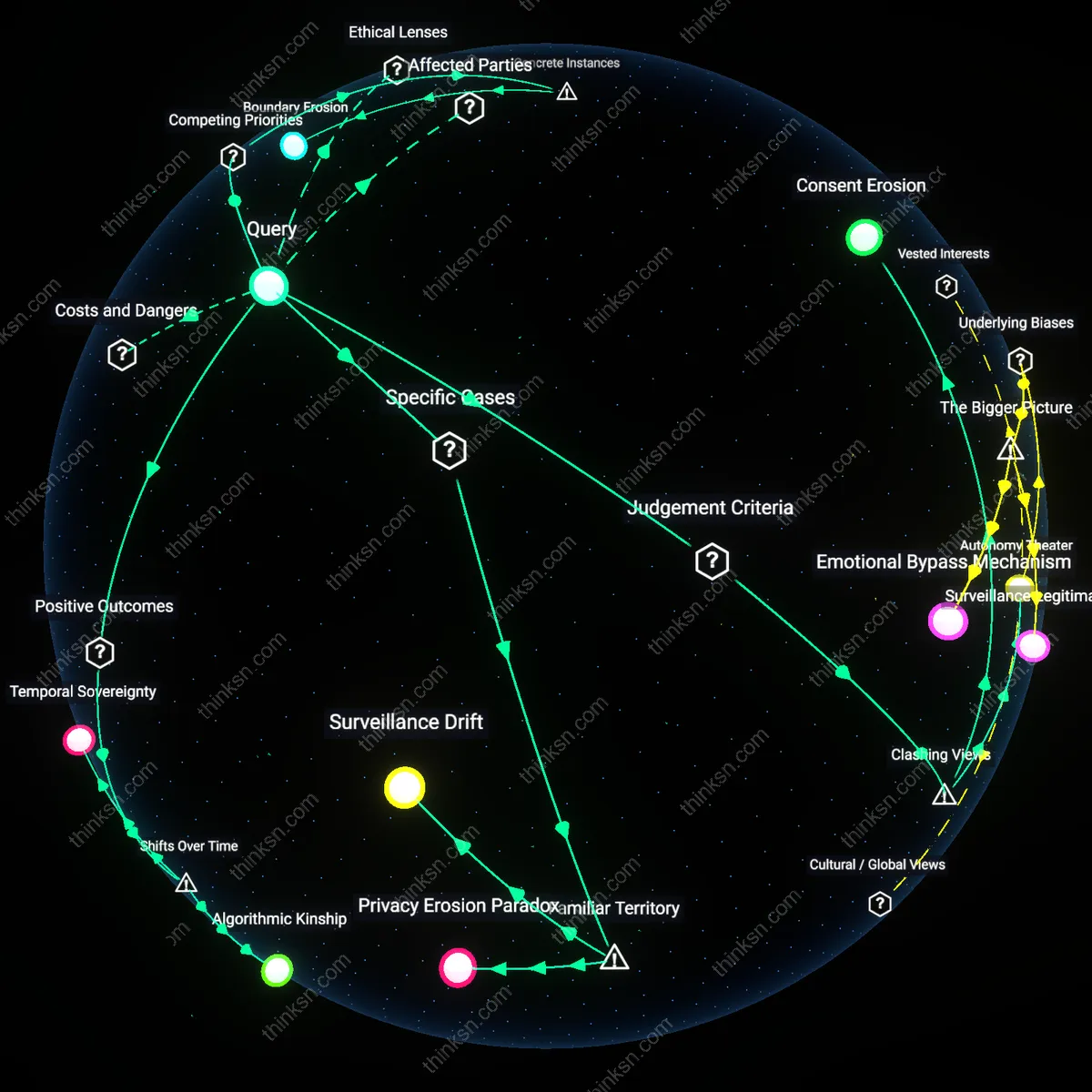

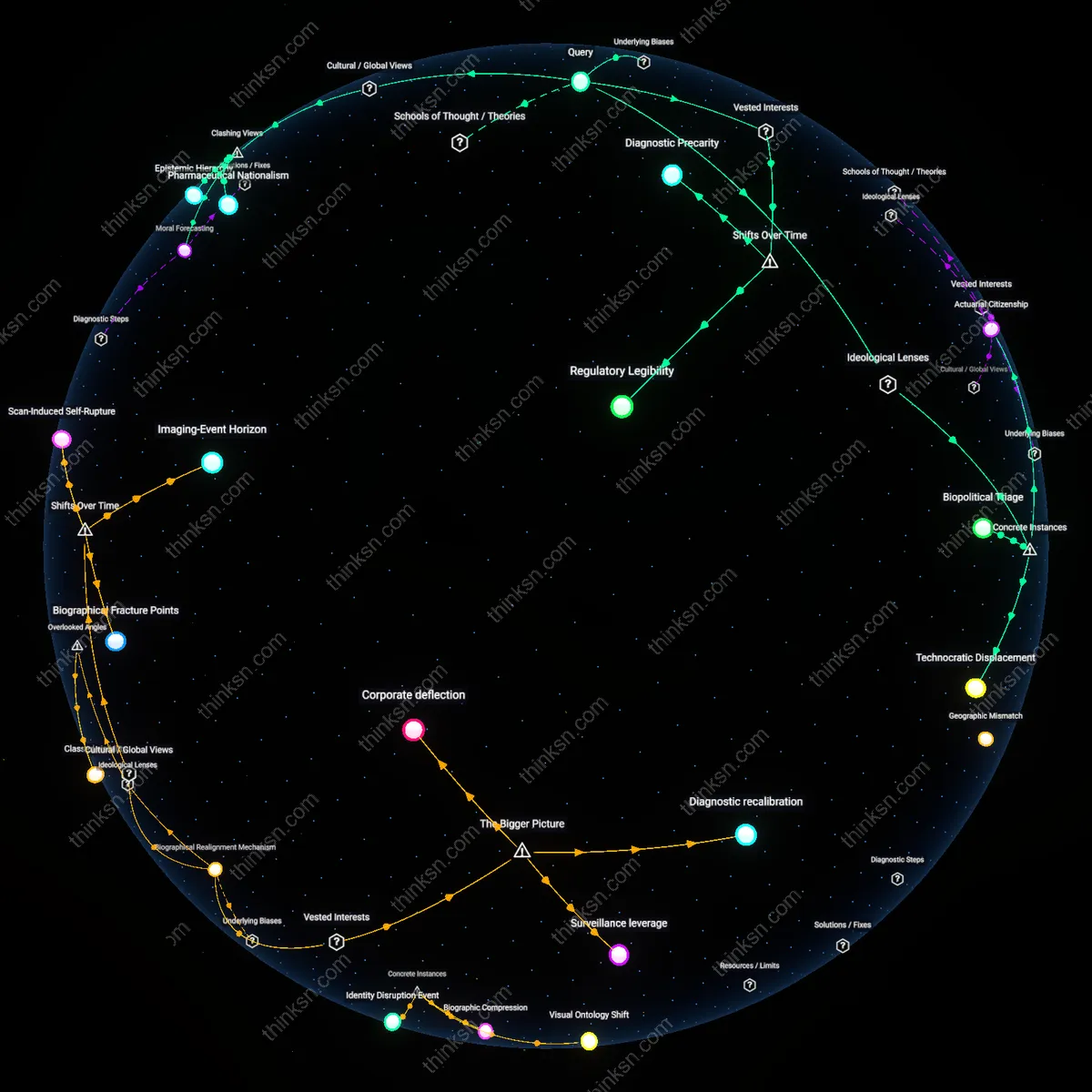

Analysis reveals 5 key thematic connections.

Key Findings

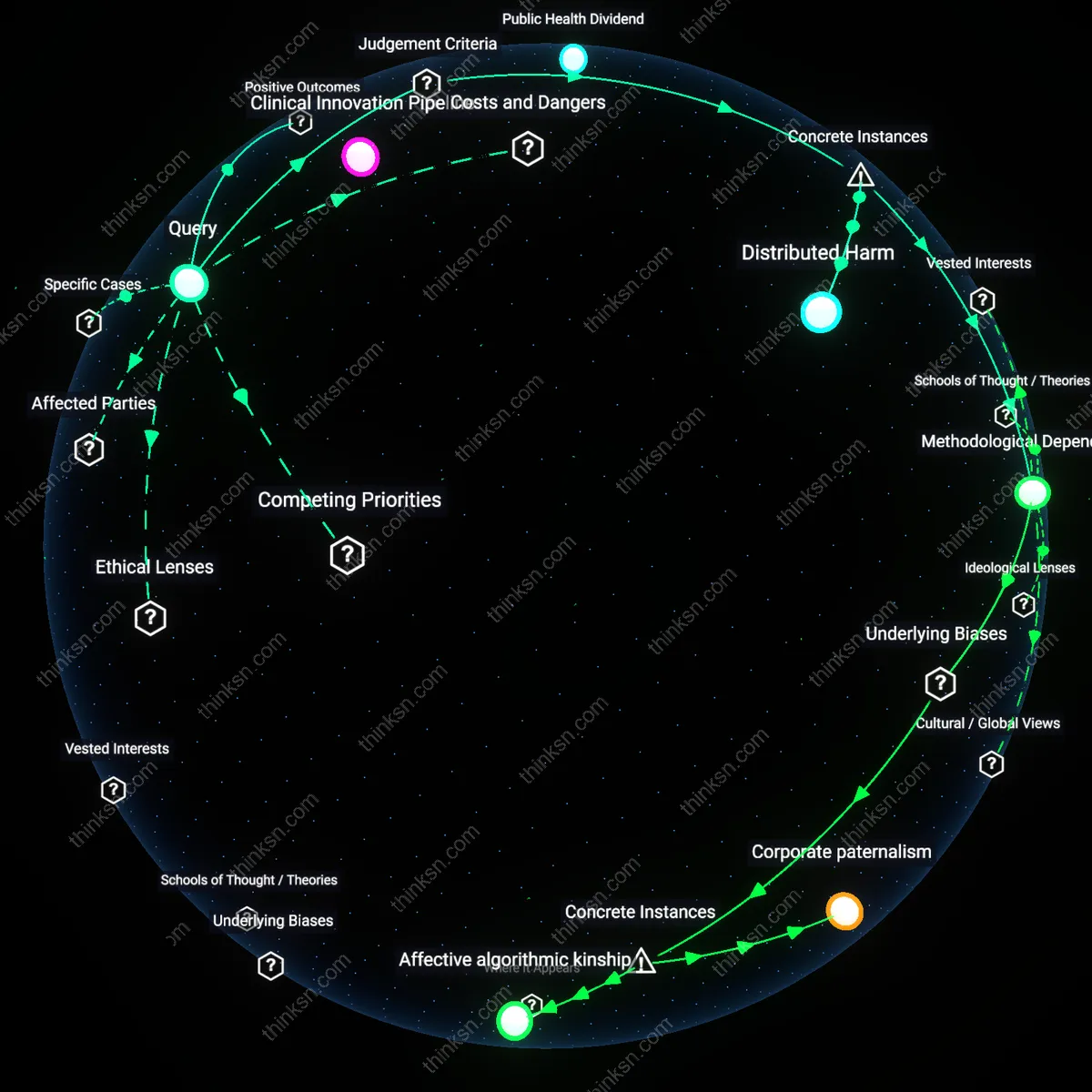

Distributed Harm

Aggregating sleep data without individual consent disproportionately harms marginalized communities. In 2018, a partnership between the University of California, San Francisco and a wearable tech company extracted nocturnal biometrics from low-income shift workers across Oakland’s public transit system. The data fed models predicting worker fatigue, while participants received no benefit and no opt-out. The mechanism was institutional data pooling under public health justifications, which obscured how systemic vulnerability amplified privacy loss without reciprocal gain. Harm is therefore distributed unevenly even when data is anonymized. Because aggregate benefits often ignore asymmetries in exposure and consent, justice, meaning the equitable distribution of risk and reward, becomes the decisive yardstick for evaluating the ethics of such research.

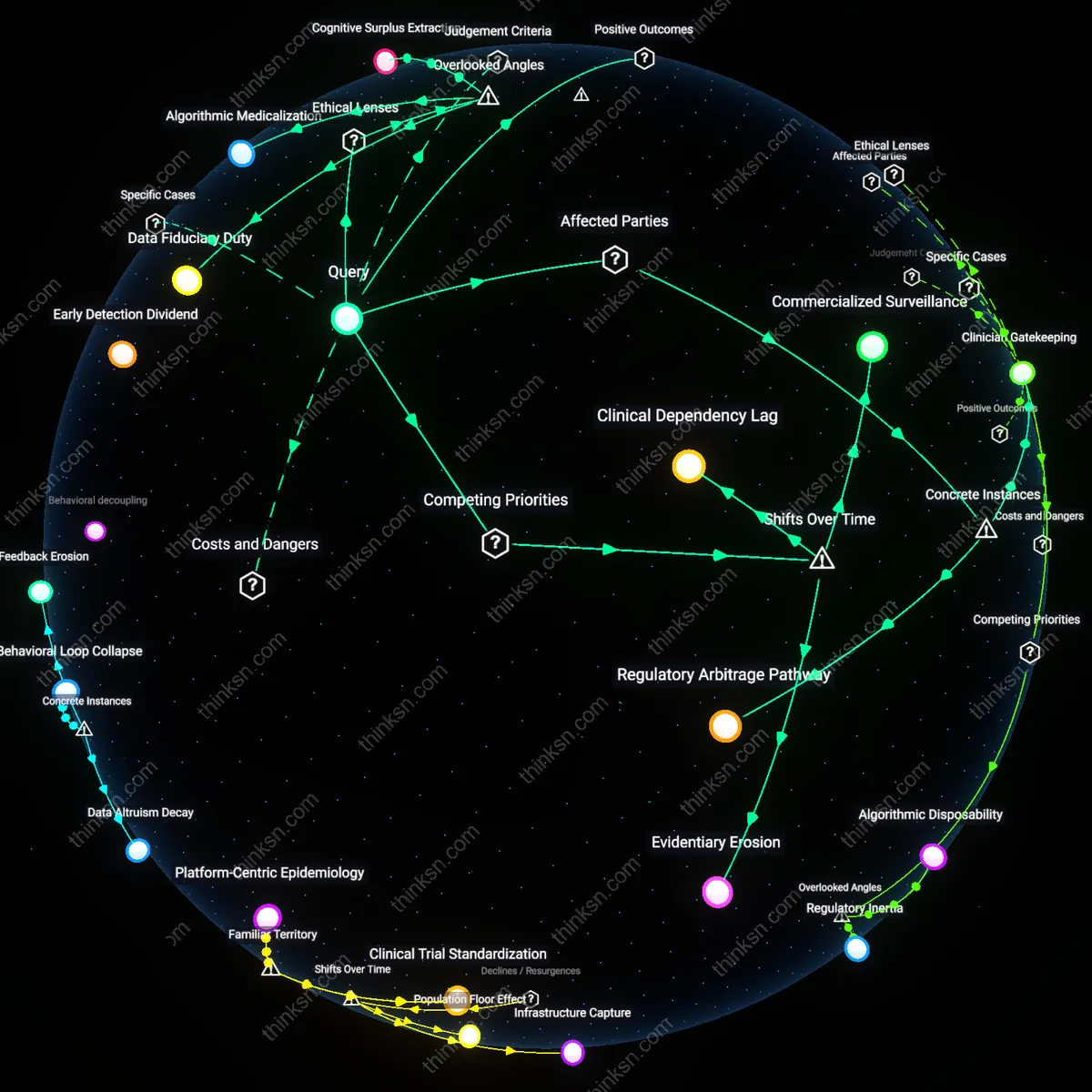

Methodological Dependence

Sleep data aggregation can be essential to epidemiological breakthroughs. The Netherlands Sleep Registry, for example, identified circadian disruptions linked to metabolic disease across 15,000 participants from 2012 to 2020, and its anonymized data enabled population-scale modeling that informed public health policy on shift work regulations. The system operated through opt-in anonymization with dynamic consent protocols, balancing privacy against public outcomes by embedding individual control within scalable research infrastructure. The non-obvious insight is that privacy loss is not inherent to aggregation but contingent on governance design. The guiding criterion here is efficiency, understood as maximizing health insight per unit of privacy given up, which allows research to scale ethically when safeguards are structurally embedded rather than retrofitted.
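To make the idea of opt-in aggregation with dynamic consent concrete, here is a minimal sketch. It does not describe how the Netherlands Sleep Registry works; the `SleepRecord` type, the `aggregate_with_consent` function, and the `min_group_size` threshold are all hypothetical names chosen for illustration. The sketch assumes that consent is a set of participant IDs that can change over time, and that groups with too few consenting participants are withheld to reduce re-identification risk.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class SleepRecord:
    """One night of sleep for one participant (hypothetical schema)."""
    participant_id: str
    group: str          # e.g. "day" or "night" shift
    hours_slept: float


def aggregate_with_consent(records, consented_ids, min_group_size=3):
    """Return mean hours slept per group, using only consenting participants.

    Groups with fewer than `min_group_size` consenting participants are
    omitted from the output (an assumed small-cell suppression rule).
    """
    # group -> participant_id -> list of nightly hours
    by_group = defaultdict(dict)
    for record in records:
        if record.participant_id in consented_ids:
            nights = by_group[record.group].setdefault(record.participant_id, [])
            nights.append(record.hours_slept)

    result = {}
    for group, per_person in by_group.items():
        if len(per_person) < min_group_size:
            continue  # too few people: withhold the aggregate
        # Average each person first so frequent reporters do not dominate.
        person_means = [mean(nights) for nights in per_person.values()]
        result[group] = round(mean(person_means), 2)
    return result


records = [
    SleepRecord("a", "day", 7.0),
    SleepRecord("b", "day", 8.0),
    SleepRecord("c", "day", 6.0),
    SleepRecord("d", "night", 5.0),
    SleepRecord("e", "night", 6.0),
]
consent = {"a", "b", "c", "d", "e"}

before = aggregate_with_consent(records, consent)
# Participant "c" withdraws consent; the next aggregate reflects that.
after = aggregate_with_consent(records, consent - {"c"})
```

In this toy run the night-shift group never appears because only two people consented, and the day-shift aggregate disappears once one participant withdraws. The point is that the safeguard sits inside the aggregation step itself rather than being applied afterwards.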

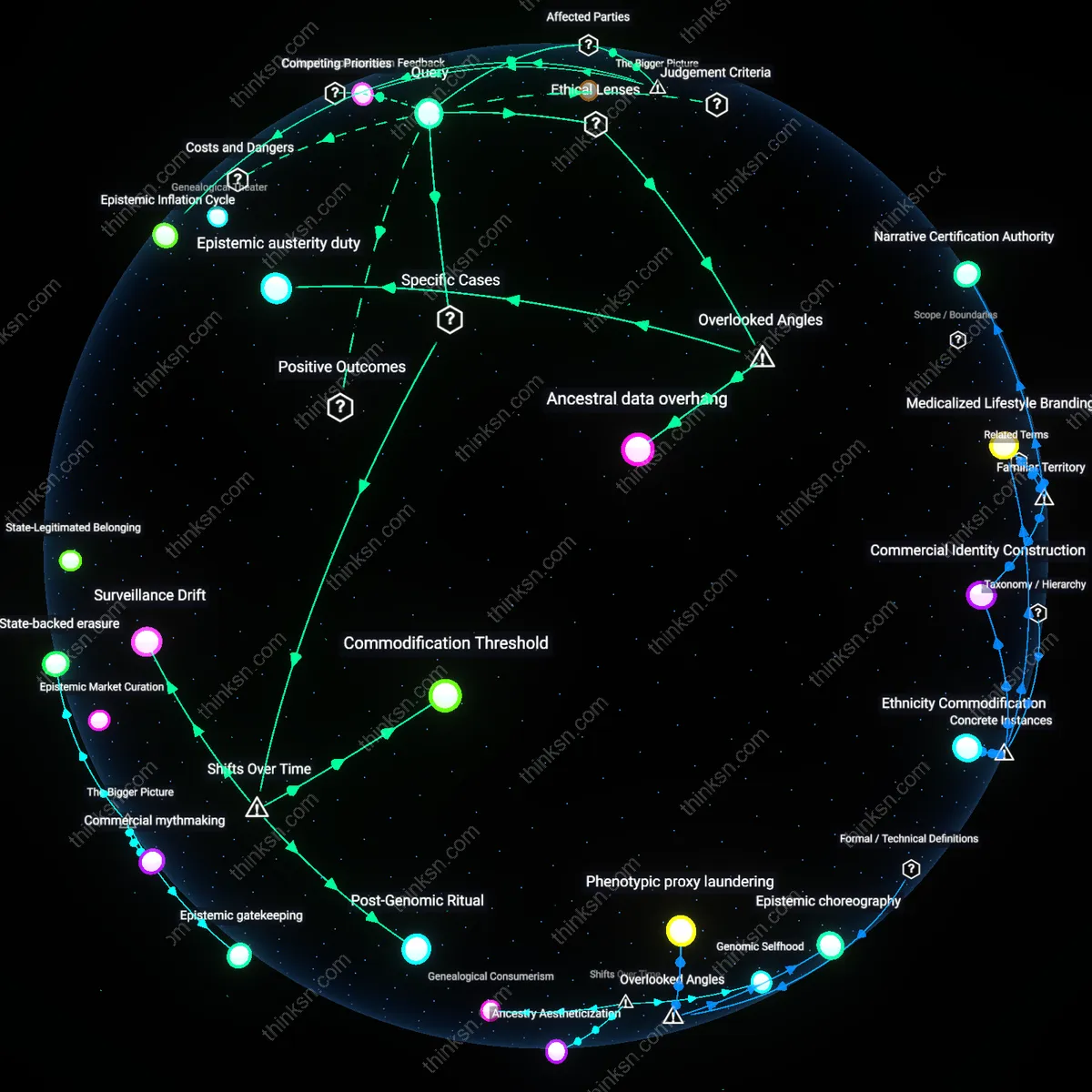

Public Health Dividend

Aggregating sleep data enables epidemiologists to identify population-level patterns in sleep disorders, leading to earlier public health interventions in urban clinics across regions like Los Angeles and Copenhagen. This systemic monitoring reveals hidden correlations—such as the link between shift work and metabolic disease—driving policy changes in workplace regulations and municipal health programs. What’s rarely acknowledged in everyday discussions about data sharing is how anonymized aggregates quietly reshape preventive care standards, not through individual insight but through silent recalibration of clinical guidelines.

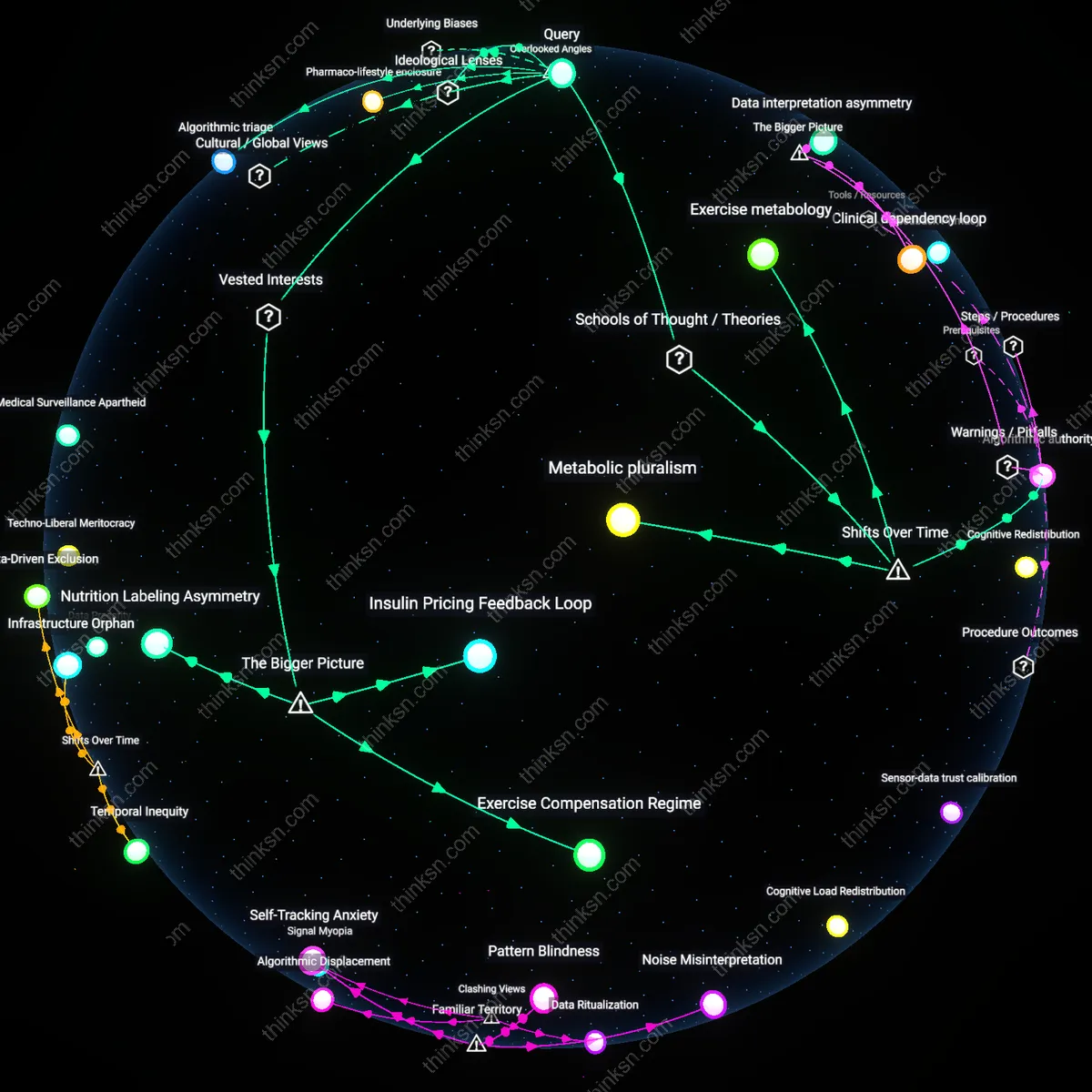

Clinical Innovation Pipeline

Medical device companies like Philips and ResMed use pooled sleep data to refine algorithms in diagnostic machines deployed in sleep labs throughout the U.S. and Europe, accelerating the development of more accurate apnea detection tools. This feedback loop between real-world data and product iteration makes diagnostics faster and more accessible—transforming what was once a niche specialty into routine outpatient screening. Surprisingly, the most familiar concern over personal privacy overlooks how the clinical tools we trust emerge directly from data that no single person could provide alone.

Behavioral Nudge Infrastructure

Wellness platforms such as Fitbit and Apple Health integrate aggregated sleep trends into personalized feedback systems, prompting users in cities like Austin and Berlin to adjust bedtime habits based on peer benchmarks. These nudges operate through mass data but feel personal, quietly normalizing healthier patterns across demographics that typically resist medical advice. Most people assume privacy loss matters only at the level of exposure or misuse, failing to recognize that the greatest impact may be how subtly the collective shapes the individual’s sense of normal.