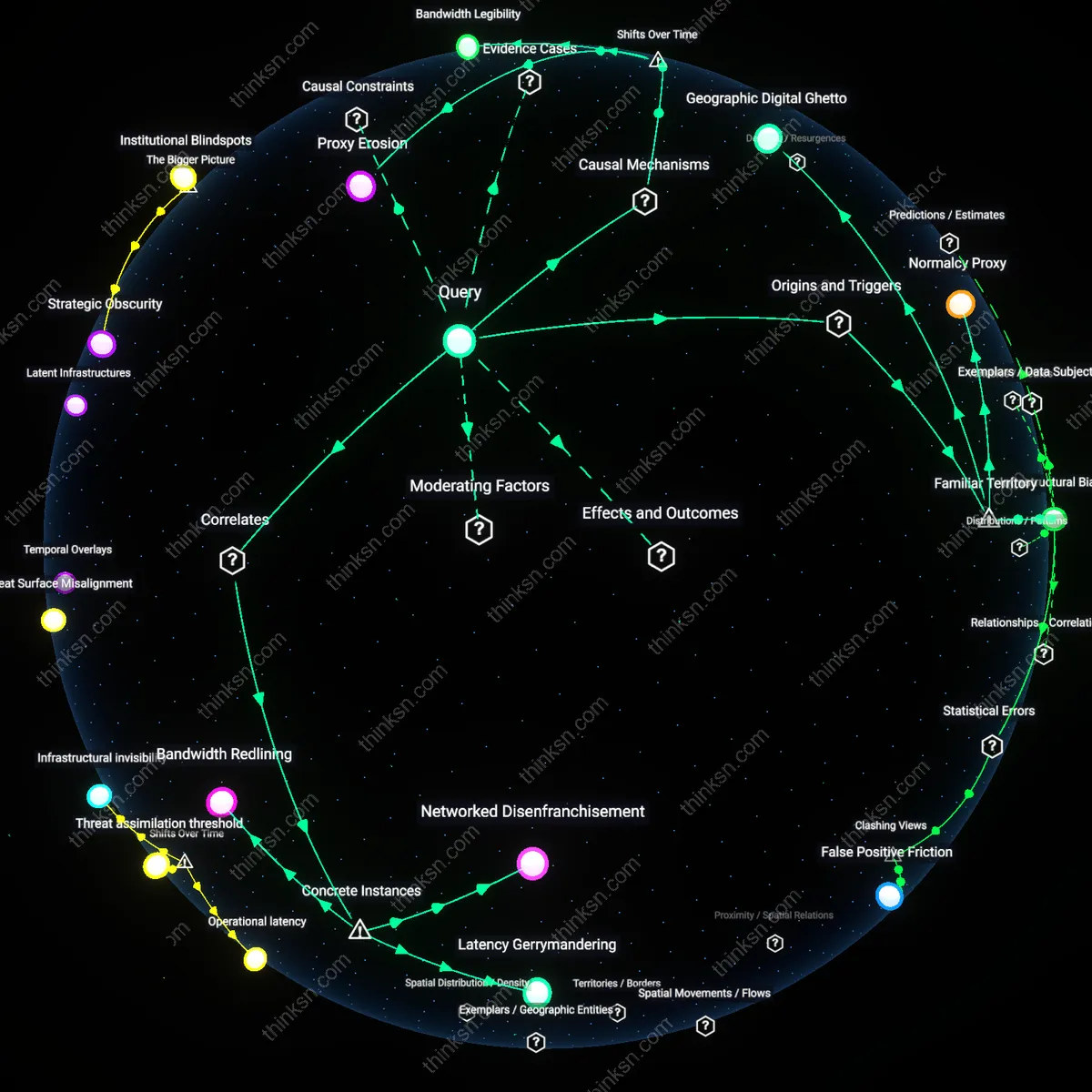

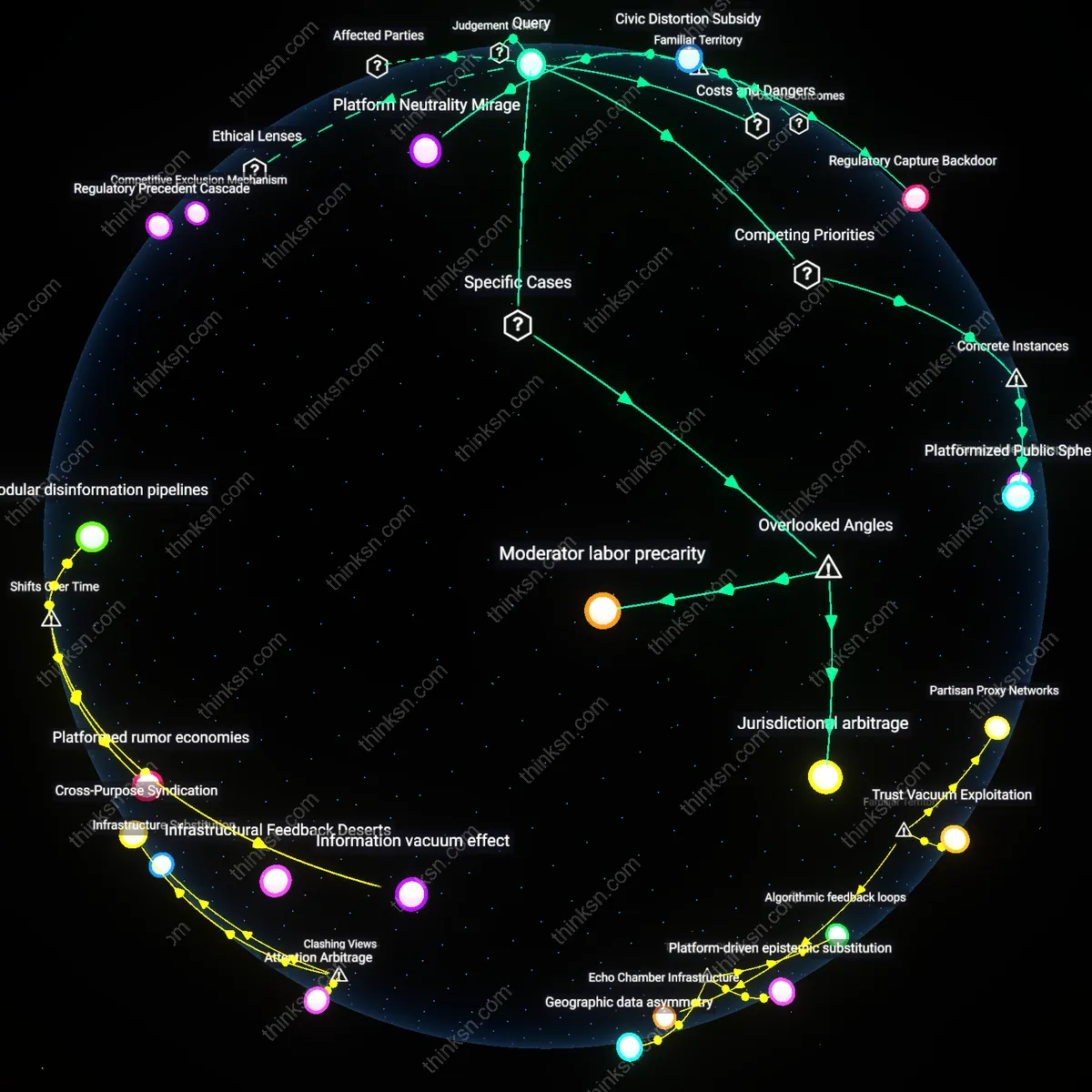

Is Monetizing Location Data for Ads Ethical on Free Social Networks?

Analysis reveals 11 key thematic connections.

Key Findings

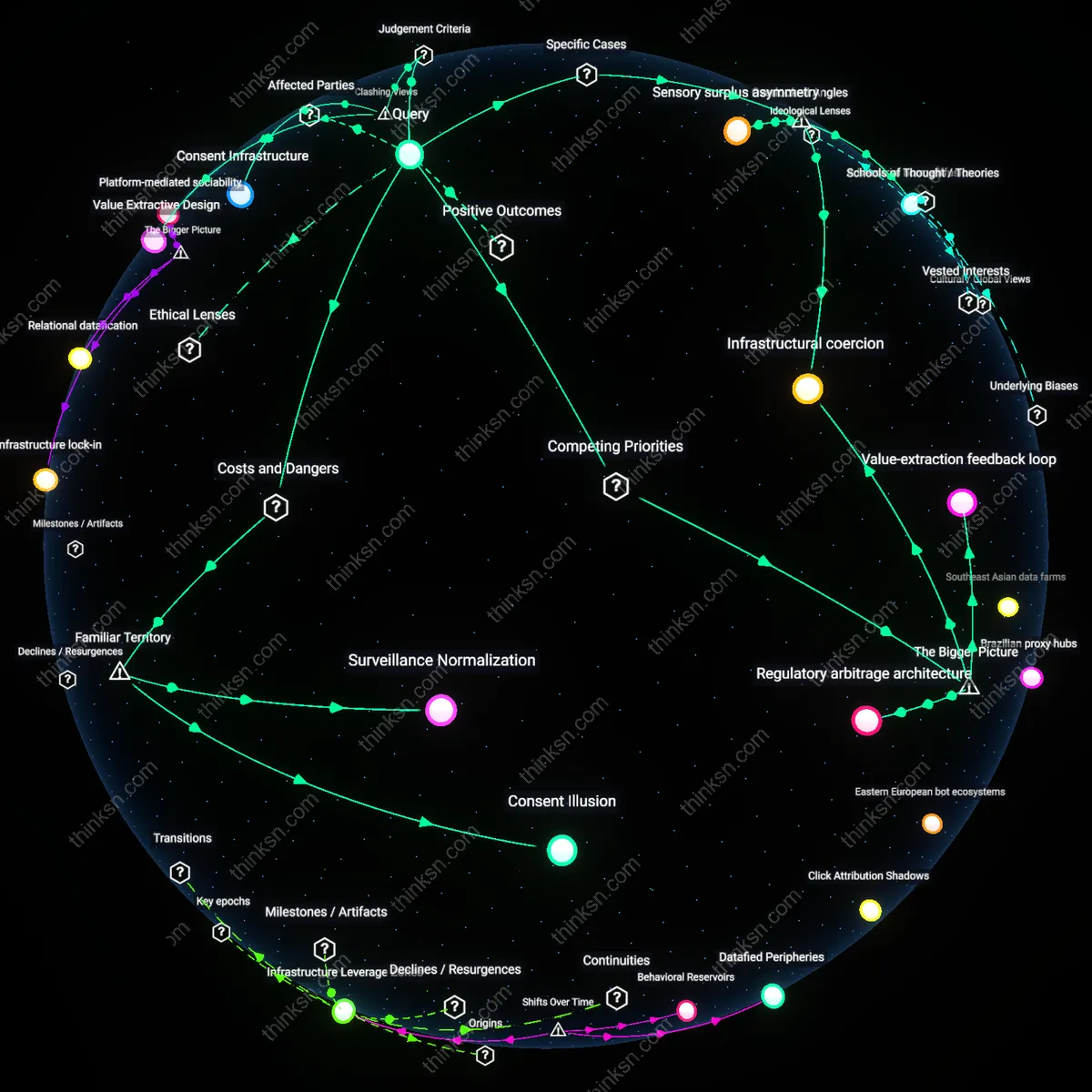

Consent Infrastructure

No, because the structural impossibility of opting out collapses informed consent into a procedural fiction, which makes the system ethically unjustifiable under autonomy-based frameworks. Data extraction operates not through user choice but through interface design that defaults to surrender, so the architecture itself becomes the ethical actor. The locus of moral failure is therefore not any individual company but the engineered inevitability of data leakage, a condition that stays hidden when analysis focuses solely on corporate intent.

Value Extractive Design

Yes, because in digital markets governed by venture capital logics, user data functions not as a commodity but as the primary revenue substrate, and the structural absence of opt-out channels is not a flaw but a feature that sustains platform viability. This efficiency in data capture enables the provision of 'free' service while externalizing privacy costs onto users. It also exposes a hidden exchange rate (attention and location in return for access) that undermines the moral primacy of autonomy when weighed against widespread service distribution.

Spatial Coercion Norm

No, because location data is qualitatively distinct from other personal data: it is inseparable from physical movement and real-world vulnerability. When opt-out is structurally blocked, users are subjected to continuous spatial surveillance without recourse. This turns public mobility into a monitored condition and normalizes a form of coercive presence in which freedom of movement becomes a privilege conditioned on digital invisibility. What emerges is not mere exploitation but the quiet institutionalization of geofenced social control.

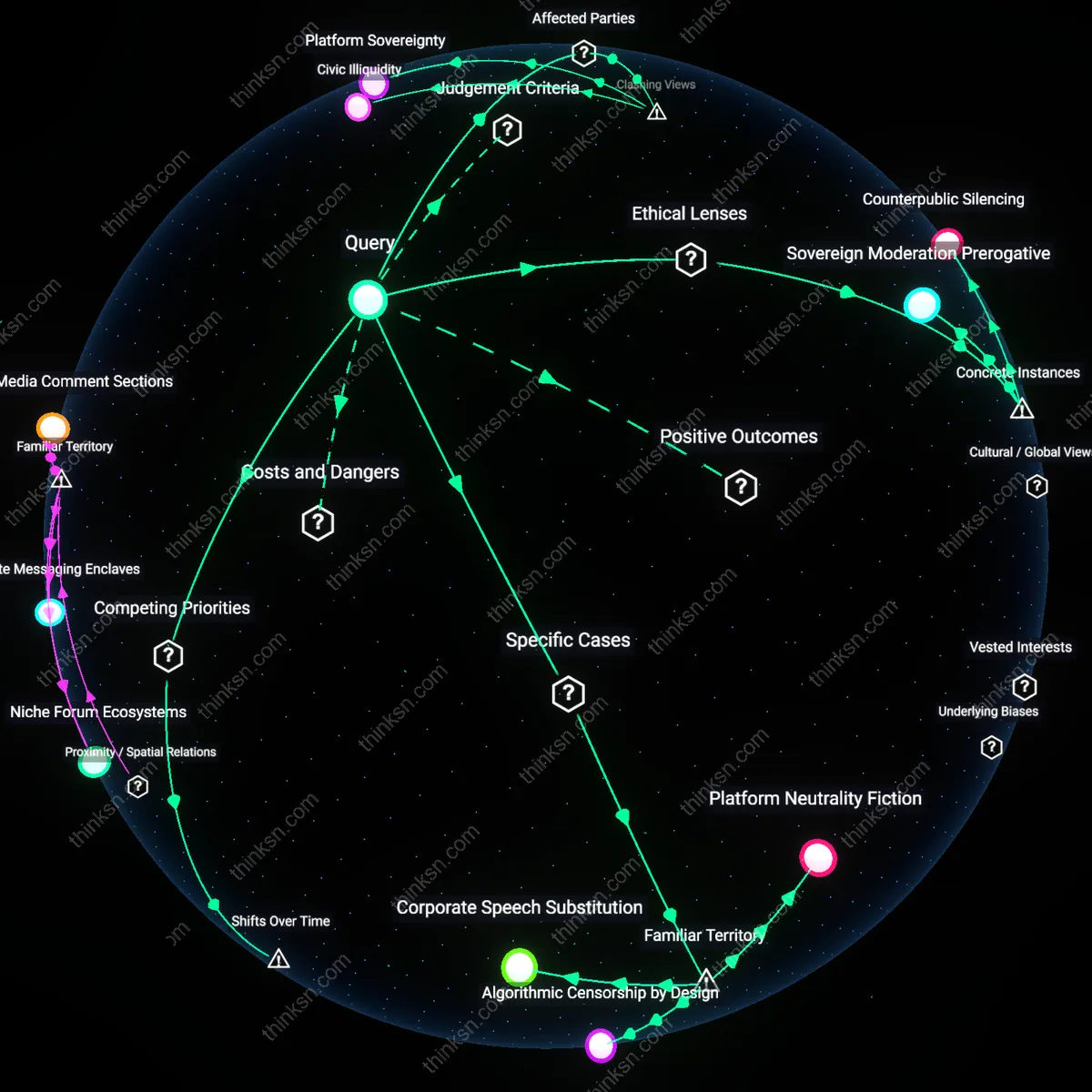

Consent Illusion

No, because the absence of a functional opt-out transforms consent into a procedural fiction maintained by design. Users interact with interfaces governed by corporate platforms whose default settings algorithmically suppress privacy control, making refusal practically inaccessible even when technically present. This mechanism leverages the gap between legal disclosure and behavioral reality, where most users navigate the system under the assumption of autonomy they do not possess. The non-obvious risk is that compliance theater—such as buried toggle menus or multi-step deactivation—mimics user agency while systematically eroding it.

Surveillance Normalization

No, because selling location data without meaningful opt-out conditions entrenches perpetual observation as an ambient feature of digital life. Advertising-driven business models depend on continuous data extraction, turning smartphones into tracking devices by default, not choice. The system operates through networked third-party brokers who aggregate and resell geospatial patterns to entities far removed from the original service, enabling re-identification and behavioral prediction. The underappreciated danger is that routine surveillance becomes culturally invisible, recasting historical fears of monitoring as passive resignation to 'how apps work now.'

Extraction Infrastructure

No, because the structural impossibility of opting out reveals the platform not as a service but as a data-capturing utility disguised as social interaction. The mechanism is the integration of location monitoring into core functionalities—check-ins, local recommendations, friend-finding features—so that disengagement requires abandoning core use, not adjusting a setting. This binds participation to exposure, transforming everyday mobility into a commodity routed through real-time bidding markets in advertising. What’s rarely acknowledged is that the cost isn’t just privacy loss, but the systemic conditioning of populations to accept data extraction as the price of belonging.

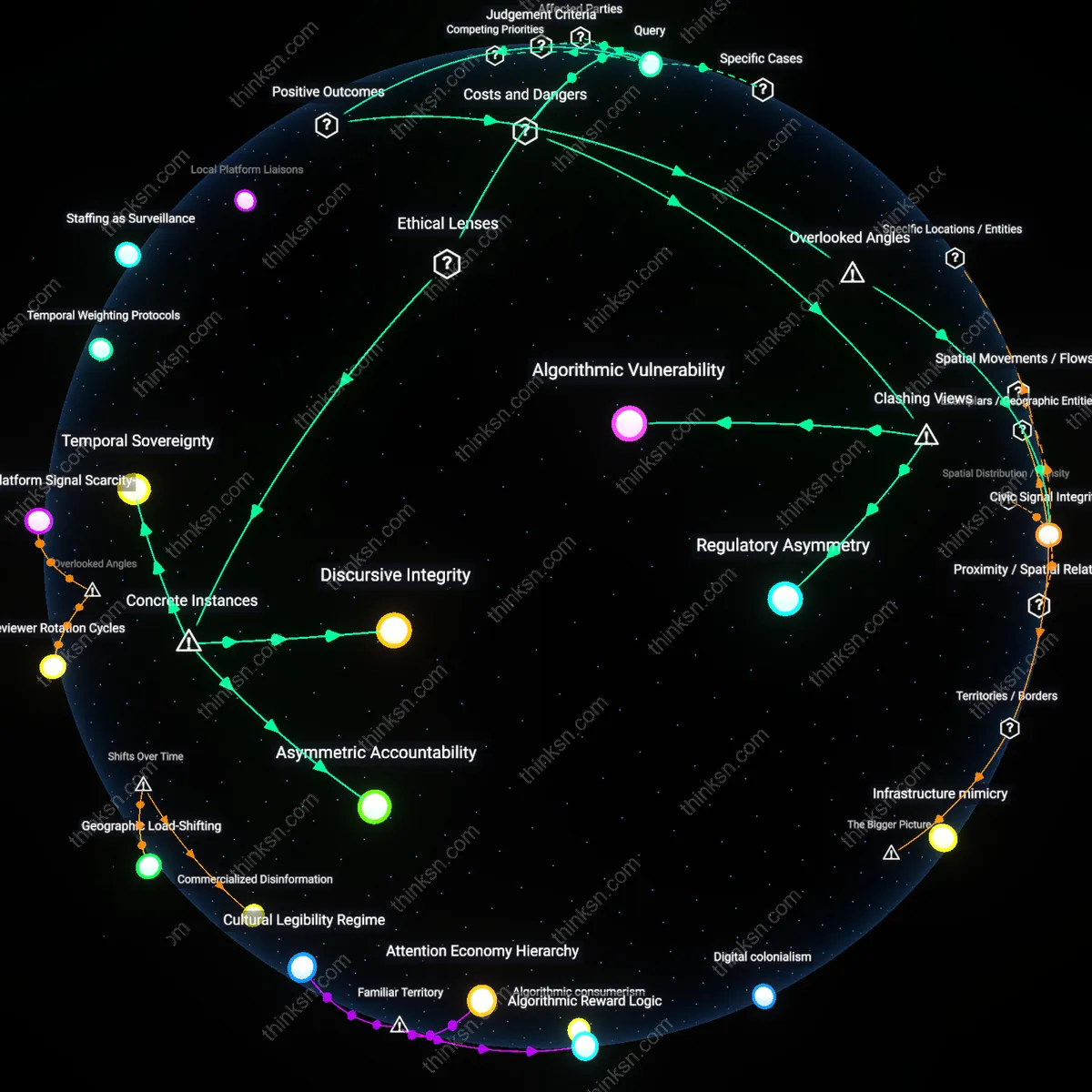

Infrastructural Coercion

Selling users' location data without feasible opt-out is ethically unjustifiable because the platform’s technical architecture actively disables user agency, making consent a procedural fiction. The network’s data collection protocols are embedded in low-level software design—such as background GPS polling and default-enabled permissions—that operate independently of user awareness or control, effectively automating exploitation. This structural determination reflects not user choice but the systemic prioritization of ad-tech integration over autonomy, revealing how technical defaults function as binding constraints rather than neutral tools. The non-obvious insight is that ethical failure lies not in the act of selling data, but in the preemptive elimination of exit mechanisms through engineered dependency.

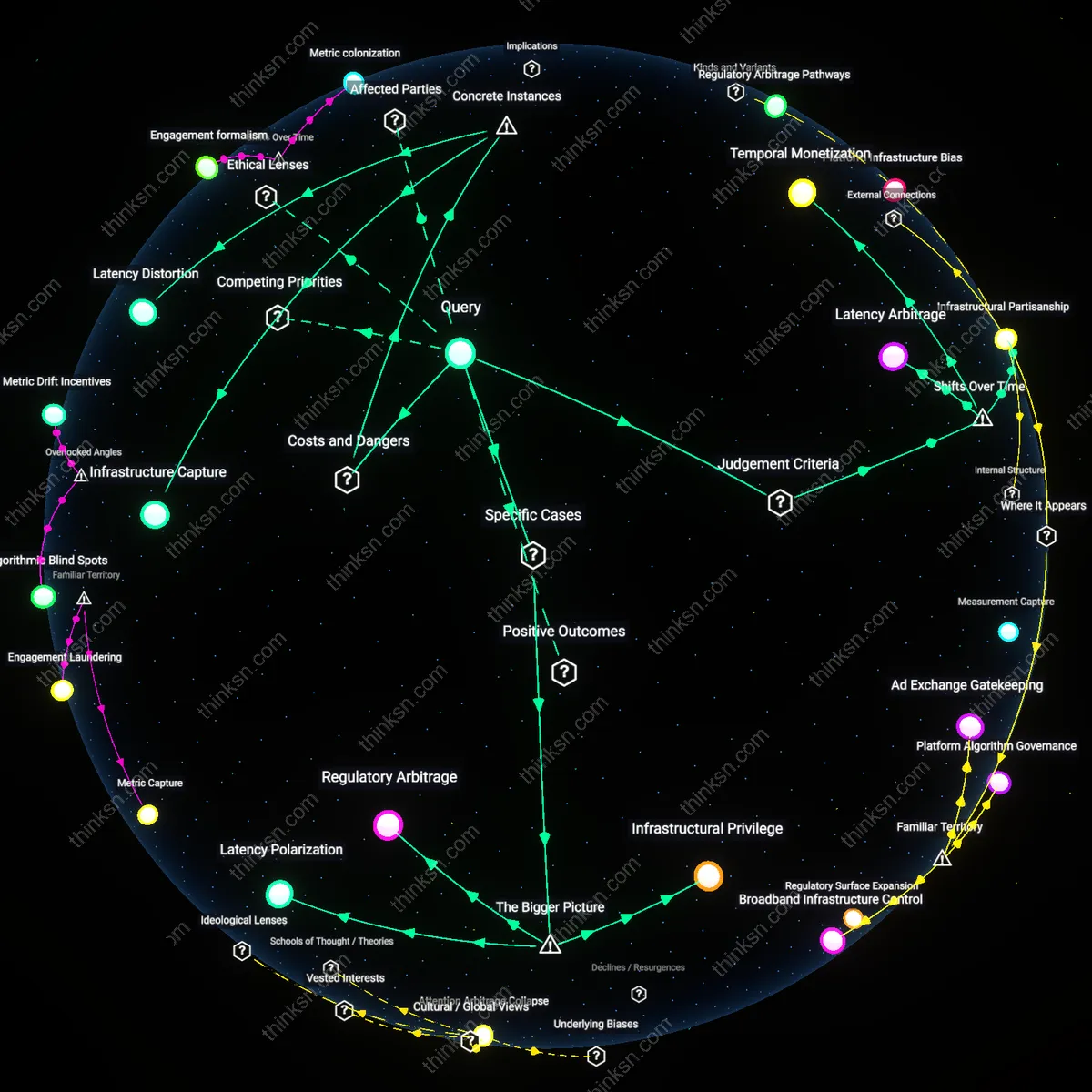

Value-Extraction Feedback Loop

The practice is ethically unjustifiable because it entrenches a self-reinforcing economic dependency on surveillance capitalism, where the platform’s survival hinges on escalating data commodification. Advertisers and data brokers create continuous demand for granular behavioral signals, pressuring the social network to maximize data throughput—and thus advertising yield—by minimizing friction in data harvesting, including eliminating functional opt-outs. This dynamic transforms user privacy into a cost center that must be suppressed for platform viability, illustrating how market logic reshapes design constraints. The underappreciated reality is that 'free' services are not merely monetized through data, but actively deteriorate as public goods in direct proportion to their commercial success.

Regulatory Arbitrage Architecture

It is ethically unjustifiable because the platform leverages jurisdictional fragmentation and weak enforcement regimes to externalize the social costs of surveillance while capturing private profits. By hosting servers in data havens and exploiting gaps in transnational privacy law, such as inconsistent interpretations of GDPR or the absence of federal privacy legislation in the U.S., the network creates a de facto permissionless environment for data exploitation. This legal indeterminacy allows the company to present the impossibility of opting out not as a violation but as a compliance workaround, shifting the ethical burden onto users and regulators. The systemic insight is that unethical data practices persist not because of ignorance or oversight but through deliberate design aligned with exploitable governance voids.

Adversarial Norm Diffusion

The sale of location data by ostensibly free platforms becomes ethically indefensible in humanitarian contexts such as refugee camps, where partnerships between UNHCR and mobile providers can inadvertently allow platforms such as Facebook to acquire mobility patterns through aggregated carrier data. In this scenario, data circulates through third-party intermediaries that obscure its lineage, allowing platforms to claim non-involvement while still profiting from displacement trajectories. The overlooked mechanism is norm collapse via data brokerage ecosystems: ethical constraints dissolve not through direct action but through the assimilation of vulnerable populations' metrics into advertising markets via ostensibly neutral intermediaries.

Sensory Surplus Asymmetry

Location data monetization by free social networks is ethically unjustifiable because platforms like Snapchat can draw on ambient sensor economies, such as continuous Wi-Fi triangulation and Bluetooth beaconing in urban malls, to extract behavioral baselines without requiring active participation. The sensor-laden smart-city plans that Sidewalk Labs proposed for Toronto, which were cancelled in 2020, illustrate the kind of environment in which AR filters could implicitly harvest pedestrian dwell times. The underappreciated condition is that ethical frameworks assume intentional data submission, whereas here value extraction operates through passive sensory excess: environmental data is treated as free input, which disembeds consent from action and anchors exploitation in backgrounded infrastructures.