Must-Carry for Social Media: Protecting Democracy or Entrenching Control?

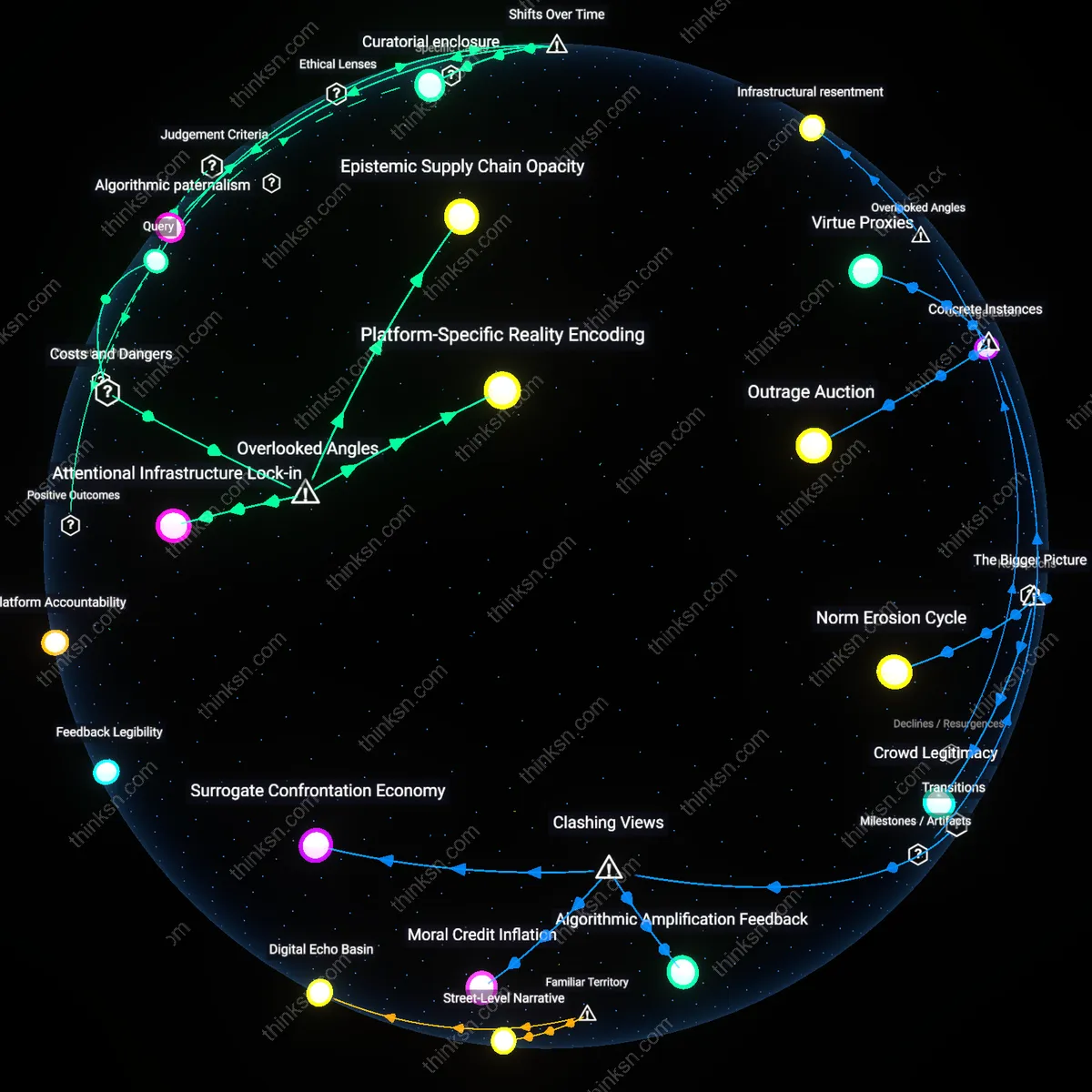

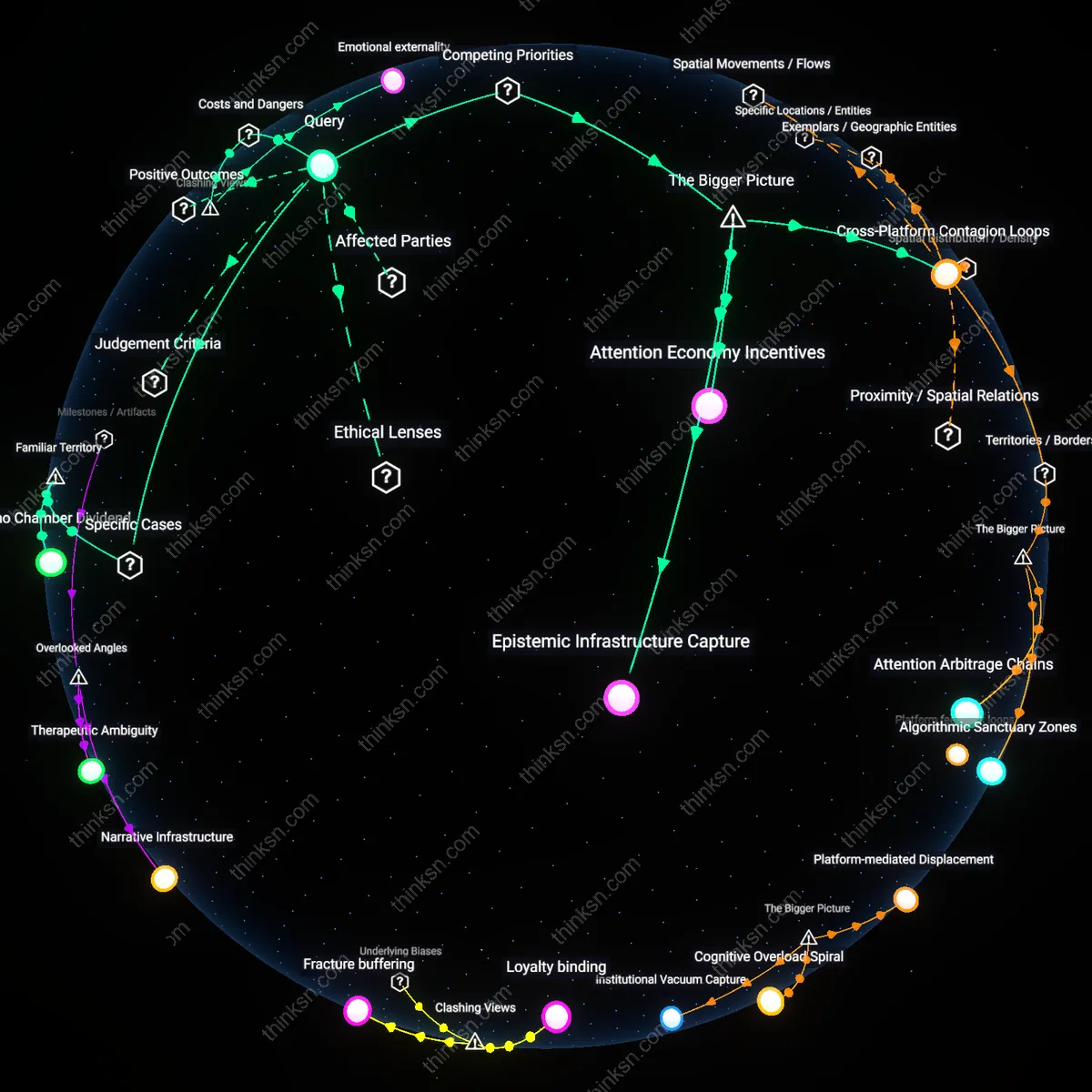

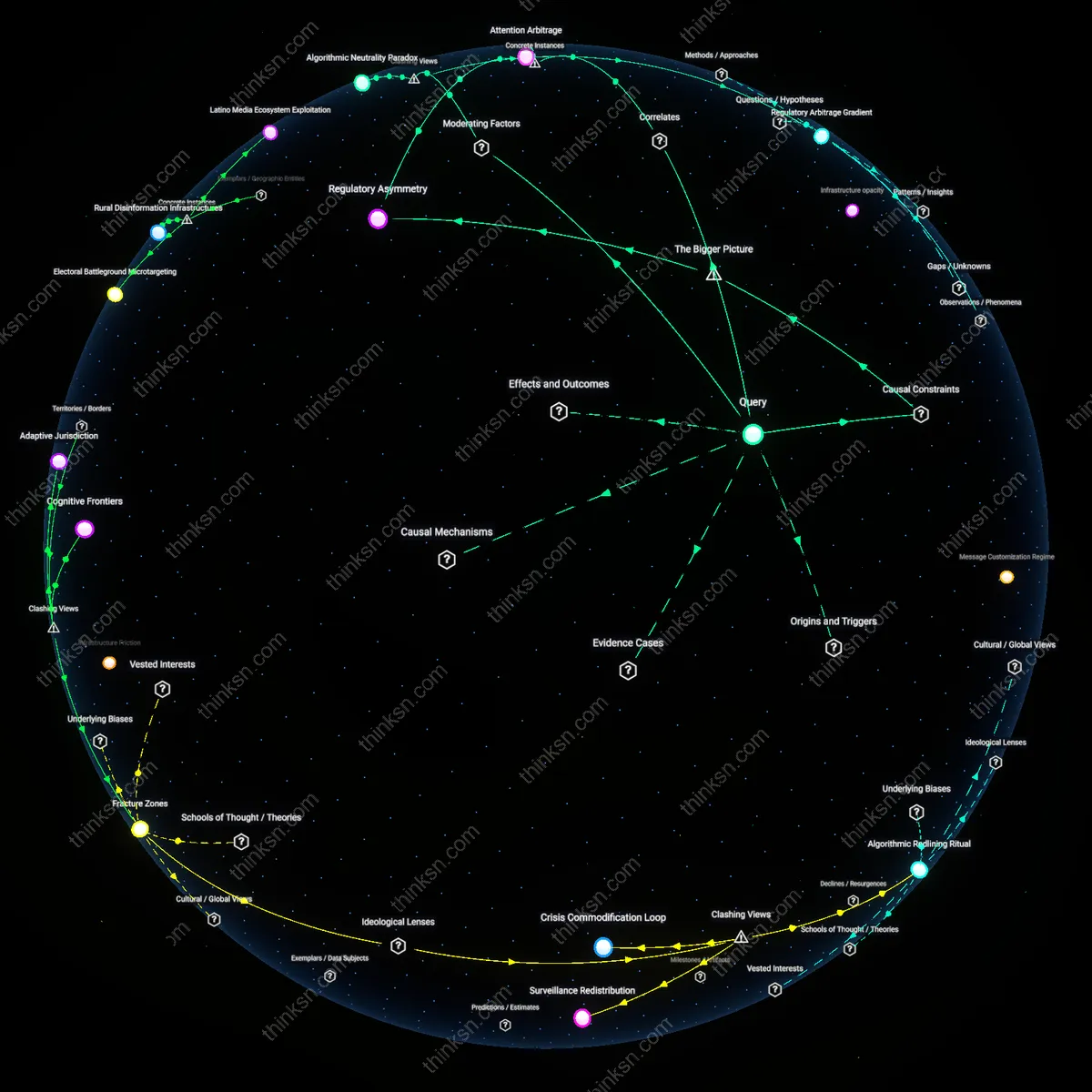

Analysis reveals 9 key thematic connections.

Key Findings

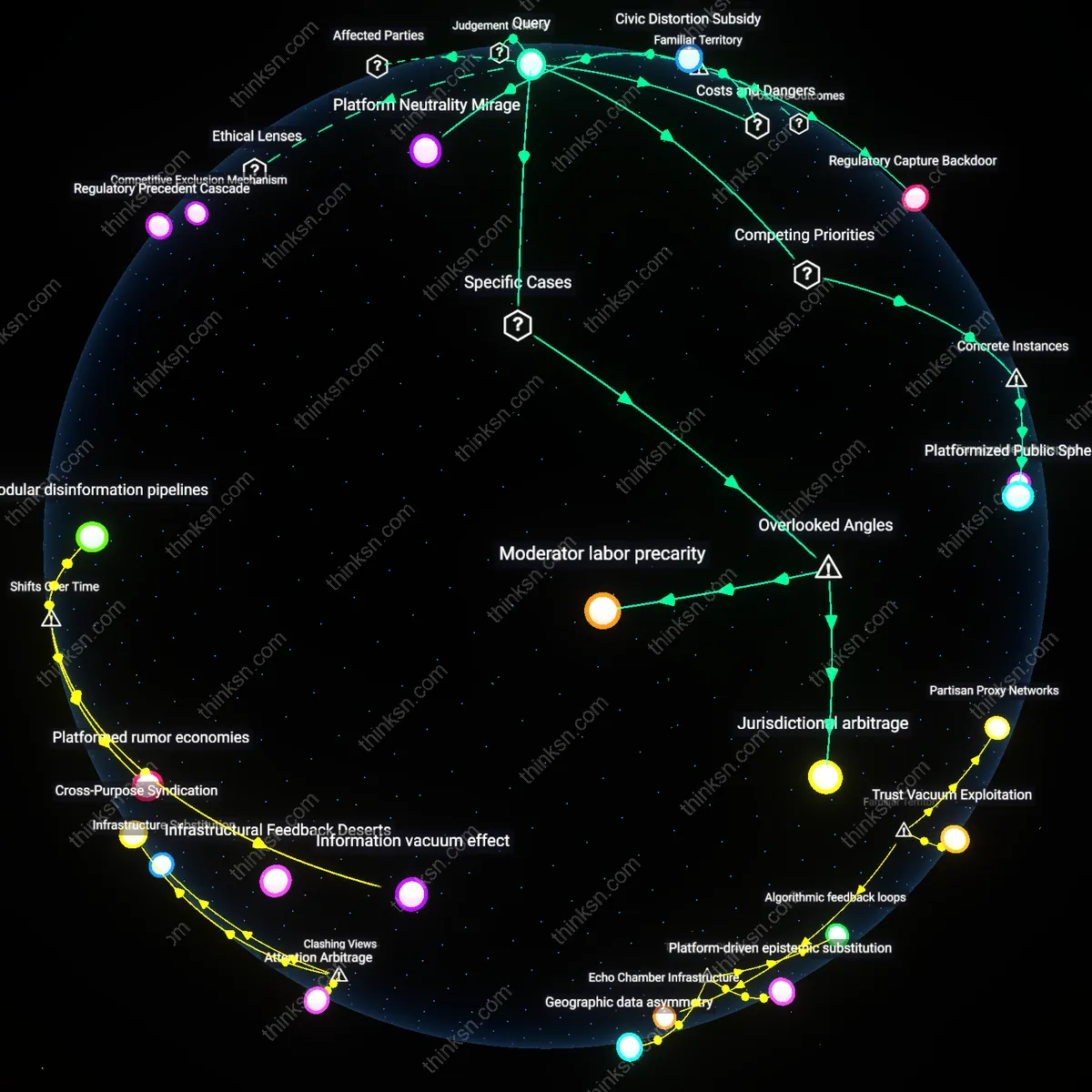

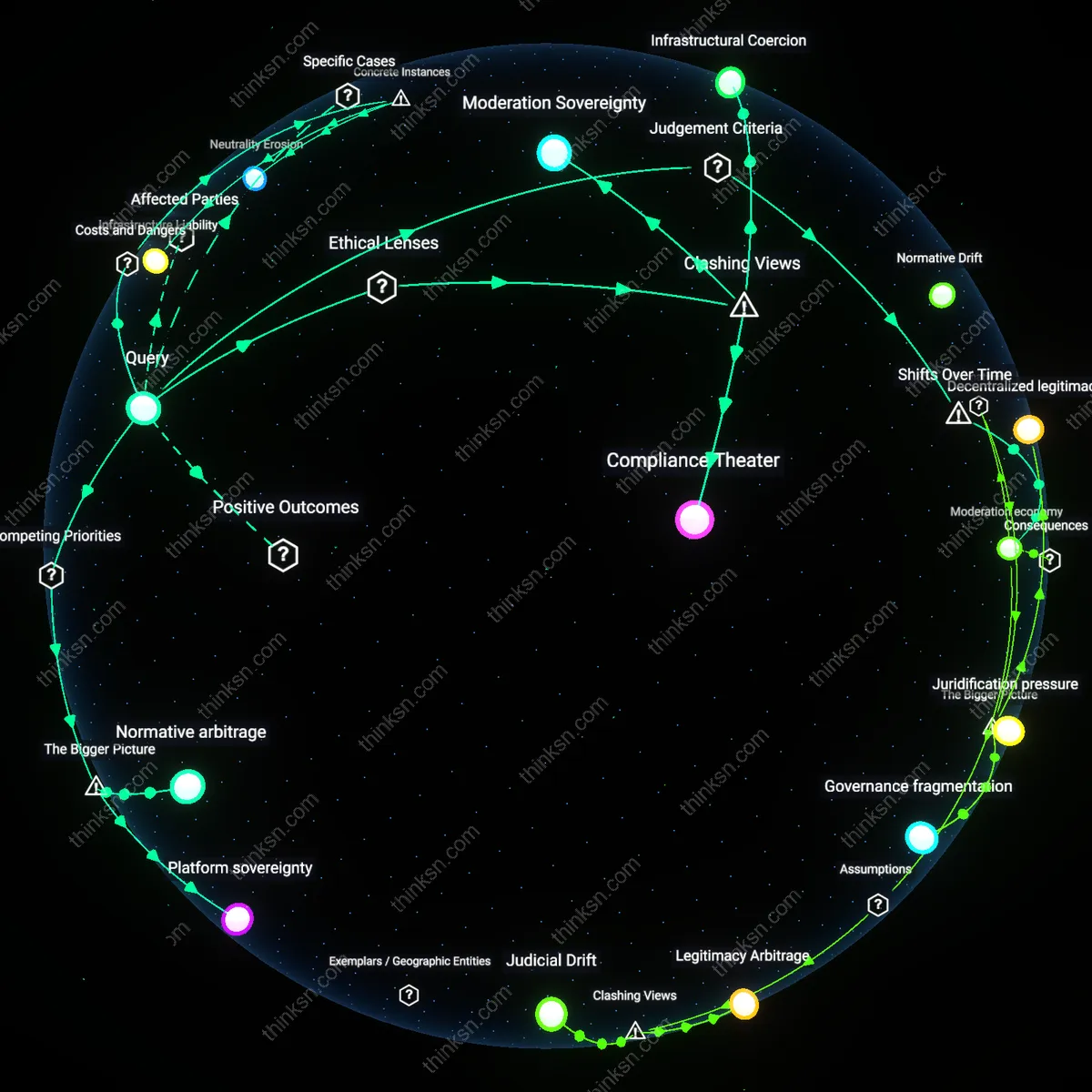

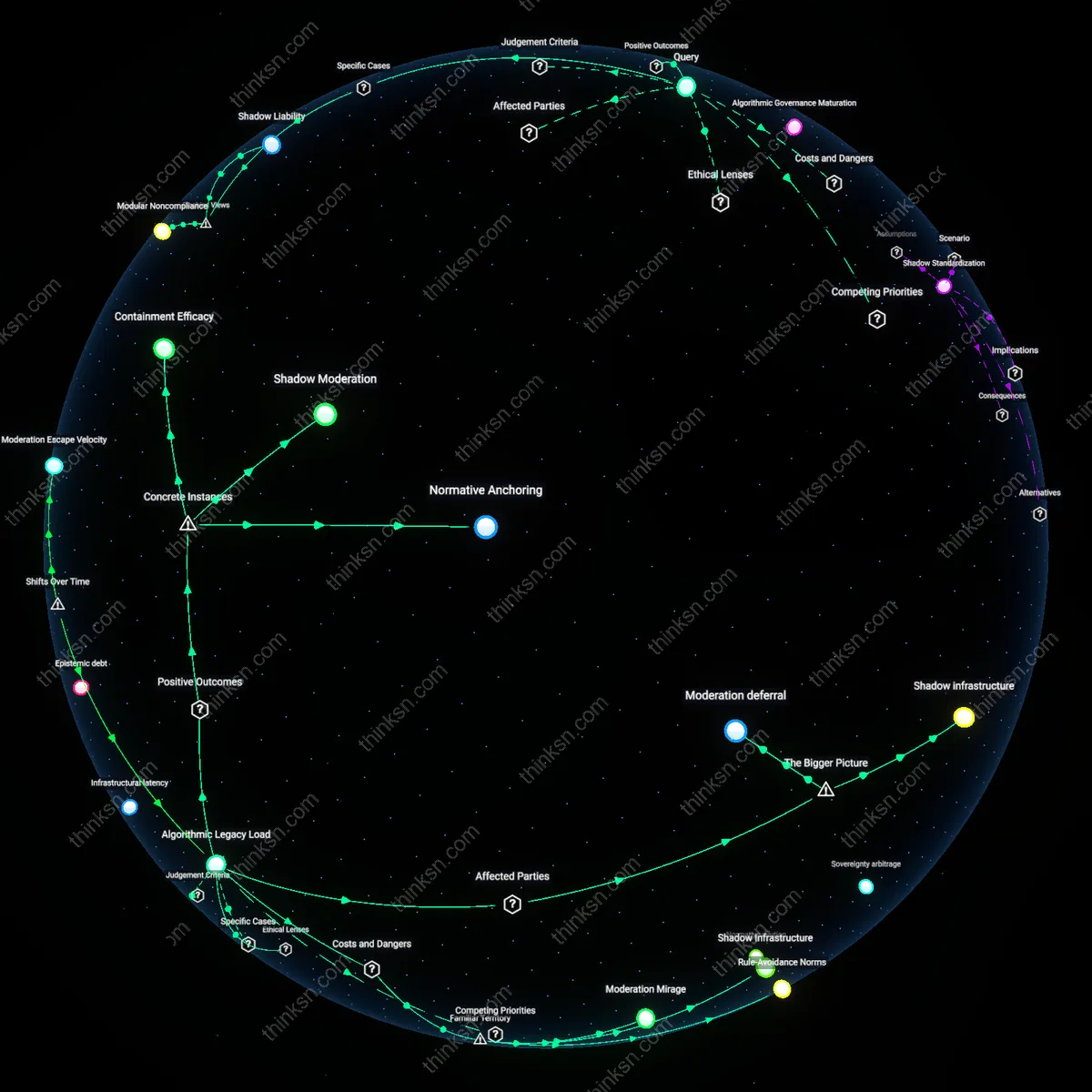

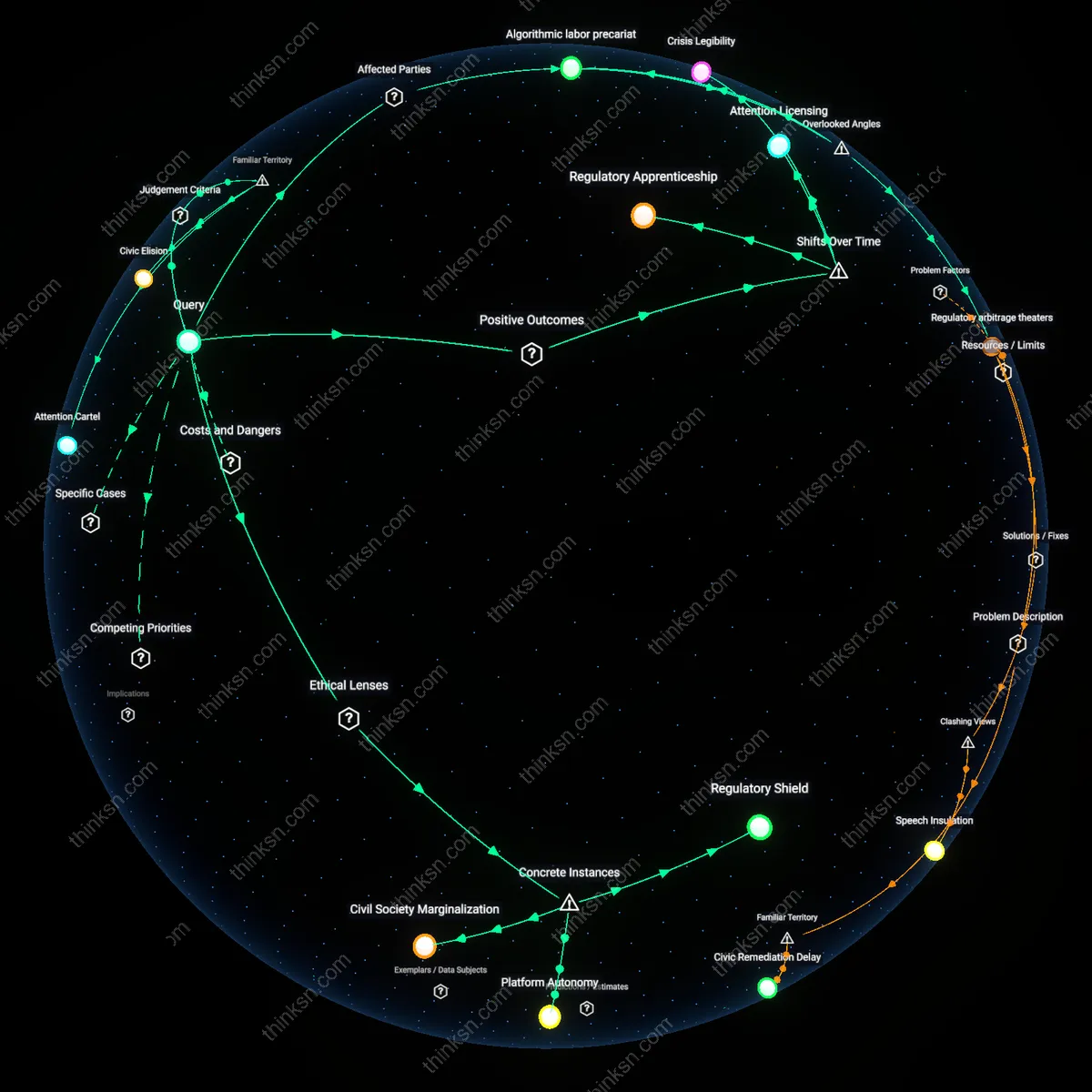

Attention Infrastructure

One should support must-carry regulations if they correct asymmetries in attention allocation that otherwise amplify algorithmically rewarded extremism. Social media platforms act as de facto editors not through editorial intent but through engagement-driven ranking systems, engineered by corporate product teams in Silicon Valley, that systematically elevate polarizing content because it increases user retention. This dynamic has been described in whistleblower disclosures about Meta and in criticism of YouTube's recommendation system. The mechanism turns platforms into attention gatekeepers whose design choices, not speech intentions, skew democratic discourse by privileging virality over veracity, an effect most pronounced during electoral cycles in fragmented media environments like the U.S. or India. The non-obvious insight is that content amplification is less a speech act than an infrastructural function: it operates through machine learning pipelines trained on user behavior, which makes the platform an unintentional architect of discursive distortion rather than a deliberate censor or advocate.

Regulatory Precedent Cascade

One should oppose must-carry regulations when they risk normalizing asymmetric state intervention that illiberal regimes could exploit to compel platform complicity in propaganda. When democratic governments such as Canada or the EU adopt must-carry rules to counter misinformation, they establish legal templates that governments with weaker speech protections, such as Turkey or Indonesia, can then cite to justify mandating the amplification of state-approved narratives under the same language of 'public interest access.' This cascade works through the globalized design of intermediary liability frameworks: regulatory innovations in liberal democracies are adapted by digital authoritarian states through policy transfer channels such as the Internet Governance Forum or bilateral tech diplomacy. The underappreciated dynamic is that platform regulation in open societies creates normative momentum that erodes the boundary between democratic transparency mandates and autocratic speech coercion, even when the initial intent differs radically.

Competitive Exclusion Mechanism

One should conditionally support must-carry obligations only if they are paired with interoperability mandates to prevent entrenchment of dominant platforms as speech arbiters. When large platforms like Facebook or X are required to carry certain voices—say, local news or public health agencies—it reinforces their position as indispensable distribution hubs, raising barriers for emerging competitors that cannot absorb the compliance costs of similar mandates. This exclusion operates through venture capital calculus, where investors deprioritize decentralized or niche social media startups knowing that regulatory burden scales with user base, effectively protecting incumbents under the guise of public service. The overlooked consequence is that speech pluralism is compromised not through censorship but through market foreclosure, turning regulation meant to democratize discourse into a tool of platform permanence.

Platform Neutrality Mirage

One should reject must-carry rules because they falsely promise neutrality while institutionalizing editorial rigidity in social media, where platforms are forced to amplify all legal speech regardless of context or community standards. This mechanizes content distribution through algorithmic amplification systems that were designed for engagement, not civic balance, turning platforms into passive transmitters that erode their own safety and coherence functions. The non-obvious risk is that public expectations of 'fair' speech distribution actually disable responsive moderation, transforming familiar corporate platforms into ungovernable public utilities by accident rather than design.

Civic Distortion Subsidy

One should oppose must-carry mandates because they subsidize the most inflammatory speech under the guise of free discourse, rewarding outrage and extremity in a democratic conversation where visibility equals legitimacy. Political actors and media figures can exploit these rules by flooding platforms with polarizing content, knowing that protection from takedown translates into guaranteed reach. This distorts civic attention through systemic incentives that resemble campaign finance excesses, but without disclosure requirements or limits. The underappreciated danger is that the familiar ideal of 'open access' becomes a loophole for strategic manipulation, making incivility the de facto norm in public debate.

Regulatory Capture Backdoor

One should resist must-carry regulations because they create a backdoor for state-mediated control over digital speech ecosystems under the pretense of protecting users from corporate bias. Governments, particularly in semi-authoritarian regimes or polarized democracies, can use legal mandates to compel platforms to carry state-aligned or legally ambiguous content, leveraging the rule to shield propaganda from deplatforming. The overlooked cost is that the familiar fear of Big Tech censorship ironically enables a more insidious form of capture: the remedy becomes the weapon, and the platform is neither a private actor nor a public trustee, but a coerced conduit.

Platformized Public Sphere

When Brazil’s Superior Electoral Court compelled platforms including YouTube and Telegram to retain and amplify official electoral information during the 2022 general election, it established a precedent where must-carry obligations displaced organic information ecosystems with court-mandated factual frameworks, prioritizing informational stability over pluralistic debate. This judicial intervention functioned through emergency arbitration powers rather than legislative process, illustrating how democratic legitimacy can be temporarily centralized in non-representative institutions to counter misinformation—yet doing so institutionalizes platforms as extensions of the judiciary's communicative reach. The overlooked consequence is that such precedents erode the boundary between judicial authority and platform governance, transforming social media into juridically administered speech environments where corporate actors become de facto enforcement arms of court-ordered epistemic order.

Jurisdictional Arbitrage

Support for must-carry rules should depend on whether a platform uses jurisdictional arbitrage to evade localized democratic oversight, as exemplified by Telegram’s refusal to comply with Brazilian electoral disinformation orders while hosting politically sensitive channels from within the EU. By strategically situating infrastructure and legal domiciles across regulatory gaps, such platforms exploit sovereignty fragmentation to resist public interest mandates, turning technical decentralization into a shield against accountability. The non-obvious mechanism is that must-carry regulations in one jurisdiction can inadvertently strengthen a platform’s ability to manipulate others by enabling 'compliance theater': superficial adherence that masks deeper evasion. This reframes corporate speech control not as a First Amendment issue but as a spatial maneuver, in which the geography of server placement and legal registration becomes a decisive factor in democratic resilience.

Moderator Labor Precarity

Must-carry mandates should be rejected when they exacerbate the precarity of content moderation labor. A comparable dynamic arguably appeared when YouTube enforced its 2019 hate speech policies amid pressure to maintain 'open discourse': contract moderators in Manila and Nairobi faced intensified exposure to traumatic content because of inconsistent guidelines and unrelenting volume, a consequence of the platform expanding carriage without proportionally resourcing human oversight. The hidden cost is that democratic ideals of inclusivity are externalized onto an invisible workforce whose psychological burden undermines consistent enforcement, producing erratic moderation patterns that weaken the very discourse these rules aim to protect. The overlooked dimension is that corporate speech control is exercised not only through policy but also through labor stratification, where the human infrastructure of moderation becomes the breaking point of regulatory design.