Restricting Politics on Social Media: Threat to Democracy?

Analysis reveals 10 key thematic connections.

Key Findings

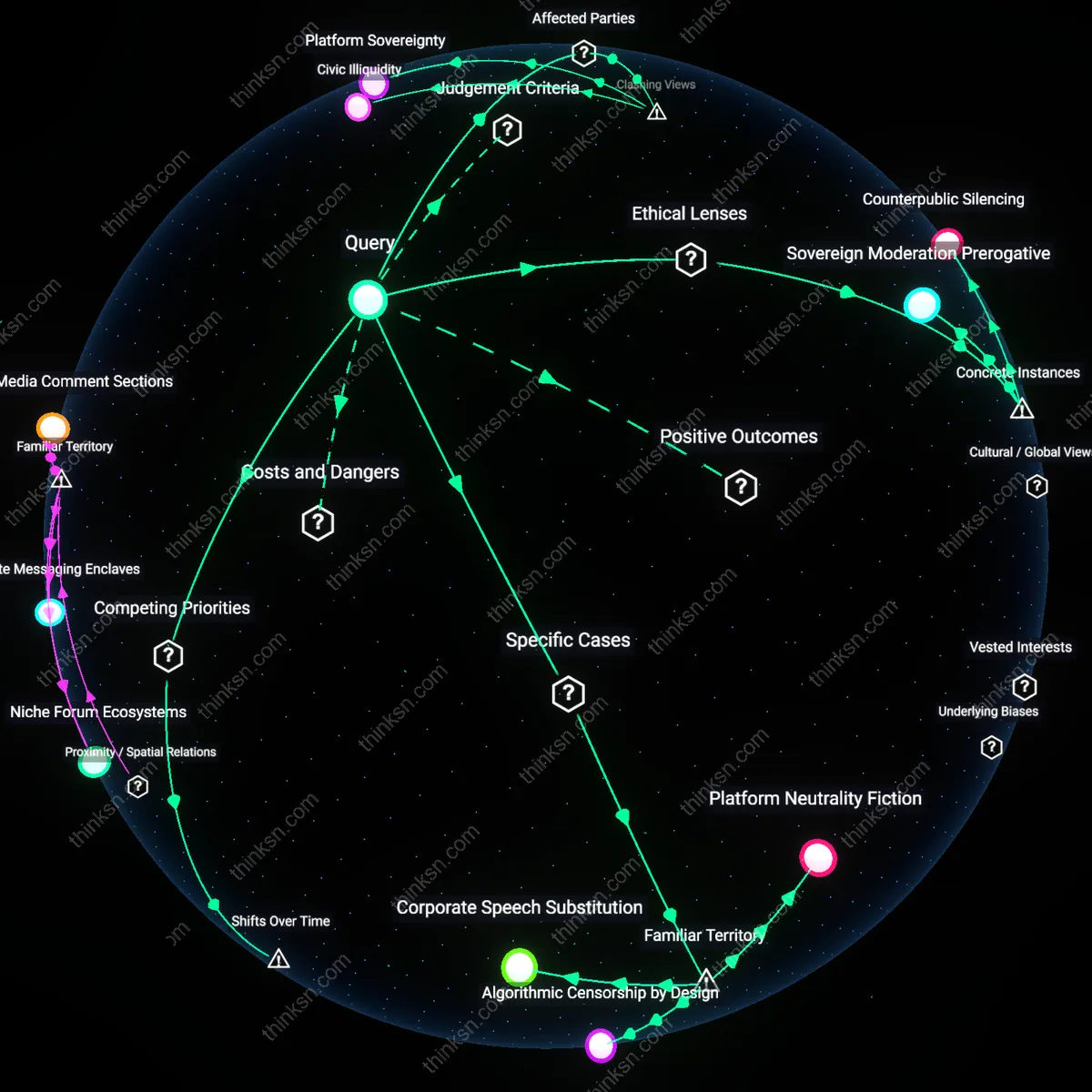

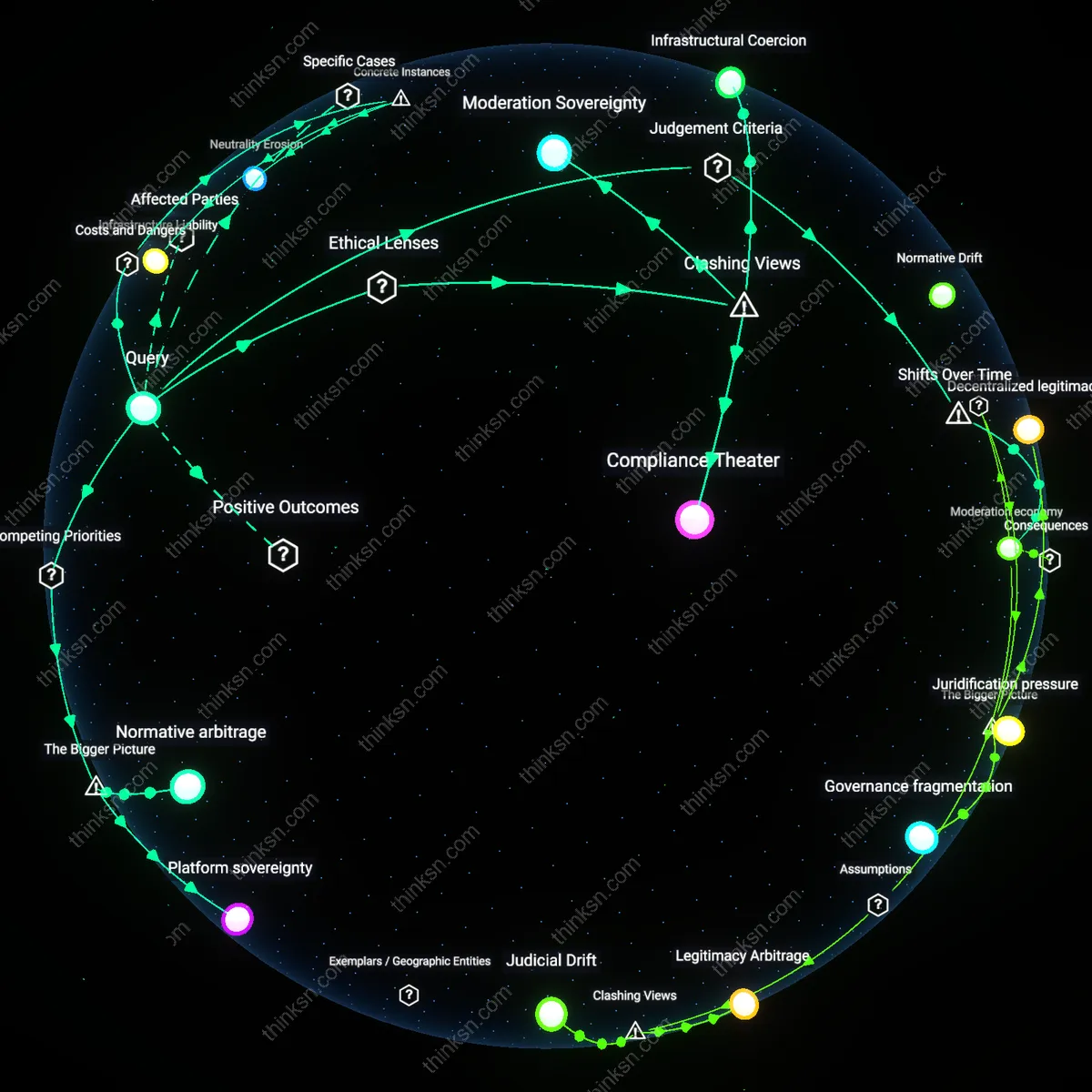

Platform Sovereignty

Prohibiting political persuasion in social media terms of service does not undermine democratic participation but institutionalizes a new form of governance where private platforms function as de facto public authorities regulating political speech. Major platforms like Facebook and Twitter enforce content policies that restrict coordinated influence operations, often under pressure from states to combat disinformation, yet they do so through opaque, unaccountable mechanisms that override local democratic norms in favor of global operational consistency. This shift empowers platform governance teams—staffed by technocrats in Silicon Valley—to define the boundaries of legitimate political expression for billions, particularly in fragile democracies where political mobilization occurs primarily online. The non-obvious reality is that these prohibitions are not neutral speech protections but exercises of sovereign-like power masked as contract enforcement, revealing that digital public spheres are now governed by corporate jurisdiction rather than civic deliberation.

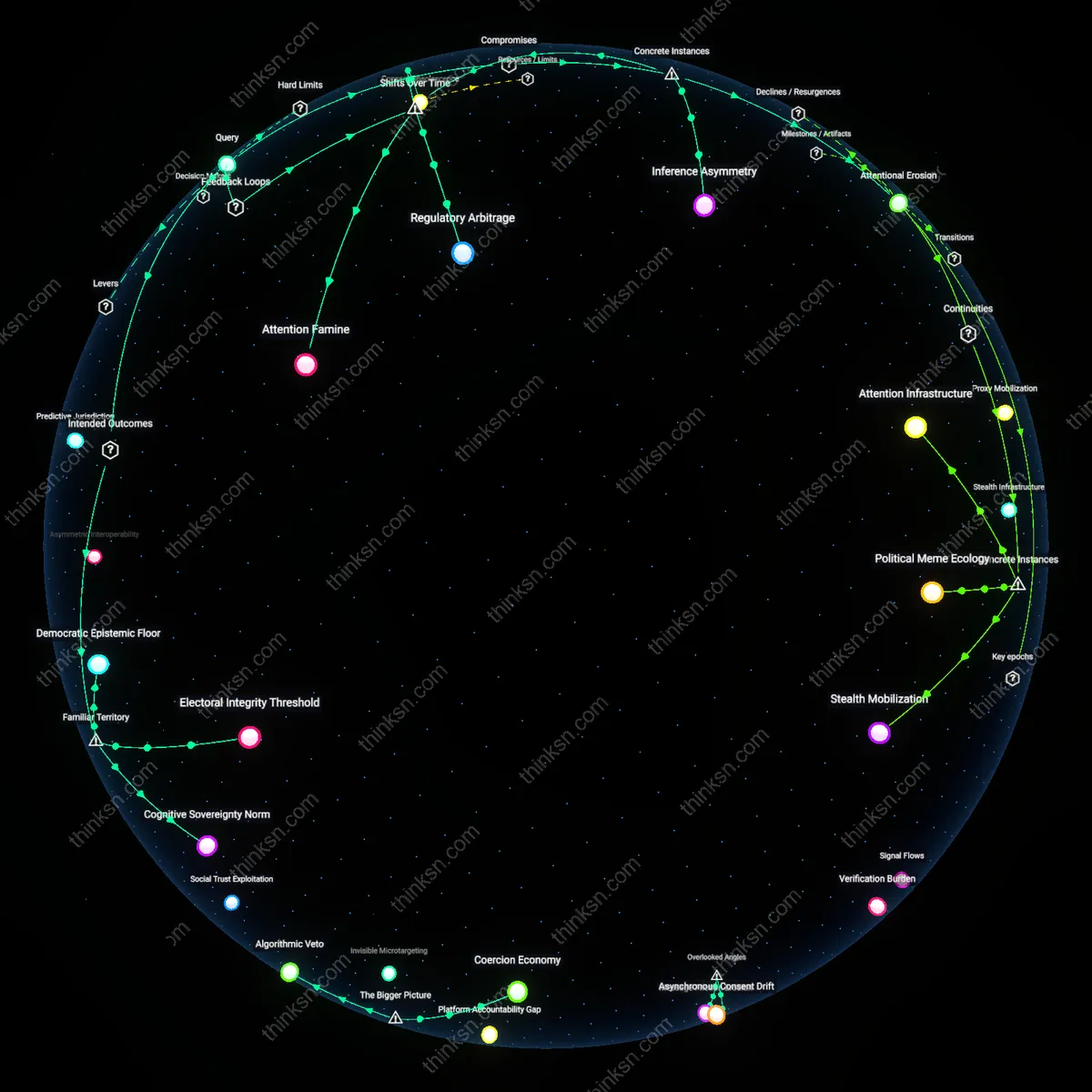

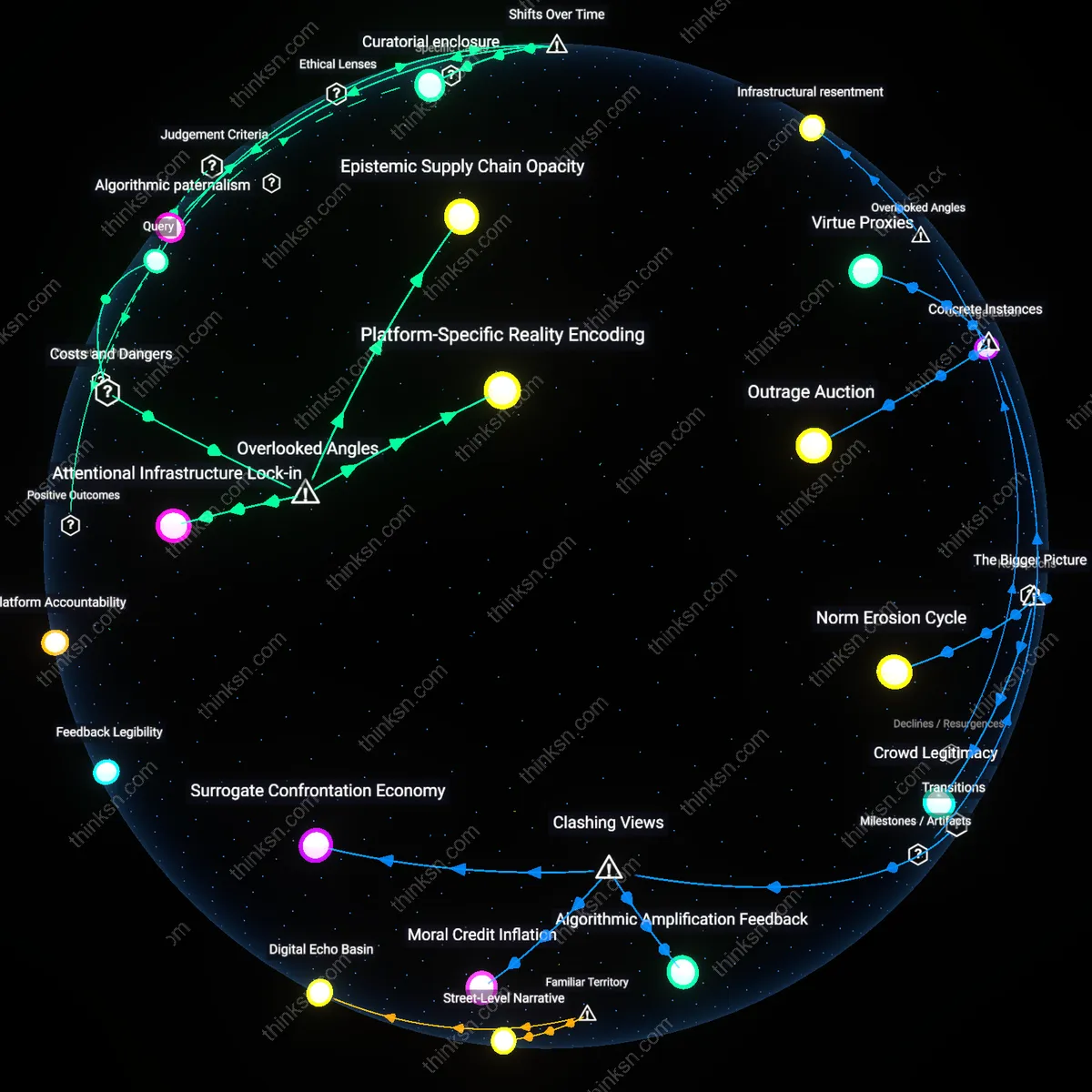

Participatory Asymmetry

Restricting political persuasion on social media benefits institutional actors with existing media access while disadvantaging grassroots movements that rely on viral engagement to overcome resource gaps. Established political parties and state-aligned media can bypass platform limitations through broadcast channels and paid advertising, but marginalized groups—such as youth-led climate activists in the Global South or anti-corruption organizers in authoritarian regimes—depend on organic persuasive tactics like meme campaigns or hashtag mobilization to enter the political arena. When platforms uniformly ban 'coordinated inauthentic behavior' or 'manipulative engagement,' they conflate harmful disinformation with legitimate bottom-up persuasion, effectively privileging top-down communication in democratic discourse. The counterintuitive result is that content neutrality intensifies participatory inequality, exposing how formally fair rules can reproduce structural exclusion under the guise of impartial moderation.

Civic Illiquidity

Prohibiting political persuasion fragments the public’s ability to form cohesive political will by casting deliberate civic mobilization itself as suspect or manipulative. When platforms label persuasive efforts, such as peer-to-peer voter outreach or religious community organizing, as violations of authenticity standards, they delegitimize traditional democratic practices that have moved into digital form, such as diaspora communities coordinating abroad to influence home-country elections. Political energy then cannot 'flow' freely, because ordinary civic behaviors are recategorized as policy breaches and enforced by automated detection systems whose assumptions reflect Western liberal individualism and therefore misread collective action as inauthentic. The overlooked consequence is not censorship per se but the systemic damping of civic momentum: democratic participation remains possible in isolated nodes but fails to coalesce into transformative pressure, so political agency erodes through procedural hygiene.

Attentional Commons

Prohibiting political persuasion in social media terms of service undermines democratic participation because it privatizes the attentional infrastructure that once sustained civic discourse, a shift accelerated after the 2016 election cycle when platforms redefined 'political persuasion' as behavioral manipulation rather than democratic engagement. This reclassification—driven by ad-tech ecosystems and regulatory avoidance—transformed public discourse into a modulated risk variable, where amplification is reserved for algorithmically compliant speech, not civic relevance. The non-obvious consequence is not censorship per se but the quiet substitution of democratic attention with behaviorally optimized engagement, a transition from open civic contestation to managed user states.

Civic Epistemic Exclusion

Banning political advocacy under Facebook’s Community Standards during the 2020 U.S. election suppressed democratic participation by designating certain speech as disruptive rather than deliberative. The mechanism is justified through neoliberal platform governance that prioritizes 'safety' over contestation. It reflects rule-consequentialist ethics, in which anticipated harms to platform stability override individual rights to persuasion. An example is the temporary suspension of civic organizers in Flint, Michigan, for urging voters to support water safety ballot measures, which reveals how content moderation systems systematically exclude marginalized political epistemologies under ostensibly neutral policies.

Sovereign Moderation Prerogative

Japan’s 2022 amendment to the Act on Regulation of Transmission, which enables private platforms like LINE to remove politically persuasive content if deemed likely to incite public disorder, is justified under communitarian ethics that subordinate individual expression to social harmony. The case of a Hokkaido city council candidate censored for criticizing national defense policy illustrates how the state outsources speech regulation to platforms under a shared normative framework. It exposes the normalization of private enforcement of public order objectives in consensual democratic systems.

Counterpublic Silencing

When Twitter (pre-2022) suspended Black Lives Matter activists for allegedly violating ‘manipulation’ policies while permitting institutional political advertising, it relied on a liberal pluralist justification that treats algorithmic neutrality as fairness while erasing asymmetries of power in discourse. The 2020 purge during the Minneapolis uprisings, where decentralized organizers were flagged for ‘coordinated behavior’, demonstrates how terms of service become doctrinal tools for depoliticizing dissent under the guise of impartial enforcement. It reveals the latent bias in treating all persuasion equally when access and legitimacy are structurally uneven.

Platform Neutrality Fiction

Banning political persuasion on platforms like Facebook or Twitter undermines democratic legitimacy because users expect these spaces to function as public forums despite corporate ownership. The mechanism lies in the mismatch between user perception—shaped by habitual use and public discourse—and the legal reality of private terms of service, where companies can restrict speech without First Amendment constraints. This gap sustains the illusion that social media operates like a town square, when in fact content moderation serves brand safety and regulatory avoidance, not civic health. The underappreciated point is that the very familiarity of these platforms as 'where debate happens' makes their political restrictions feel like civic suppression, even when legally permissible.

Algorithmic Censorship by Design

When YouTube’s recommendation system downranks politically persuasive content under policies labeled 'misinformation prevention,' it silently shapes political participation more than explicit bans do. The mechanism is not user-facing rules but backend algorithmic damping (demonetization, reduced reach, and shadow deprioritization) that discourages creators from engaging in advocacy. This operates through engagement-driven infrastructure that equates controversy with risk and so disincentivizes democratic contestation. The non-obvious truth is that people associate censorship with visible takedowns, but the real constraint today is invisibility engineered through opaque recommendation logic dressed as neutrality.

Corporate Speech Substitution

During elections, when platforms like Instagram prohibit grassroots organizing messages but allow sponsored political ads, they replace civic dialogue with transactional persuasion. The mechanism is the prioritization of monetizable, advertiser-friendly political speech over organic user advocacy, carried out by automated enforcement systems that flag peer-to-peer mobilization as 'coercion' while leaving paid content untouched. This dynamic plays out visibly in battleground states like Michigan or Georgia, where get-out-the-vote campaigns are shadow-banned while PAC ads proliferate. The underappreciated reality is that people assume equal rules apply online, but the platform economy structurally favors corporate political voice over democratic voice, even when both are 'persuasion.'