Will Debugging or AI Orchestration Dominate in the Age of Advanced Code Generators?

This analysis identifies nine key themes.

Key Findings

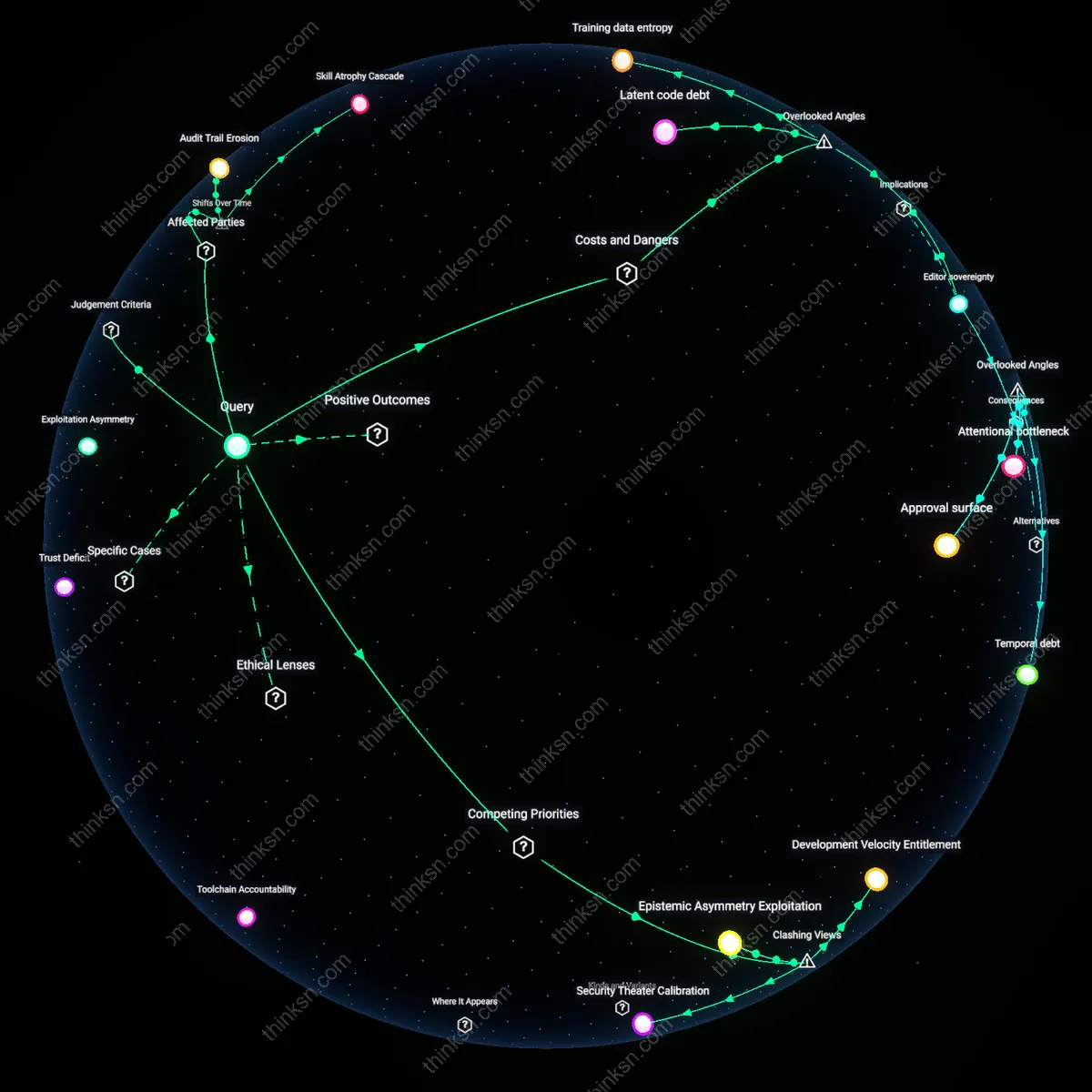

Debugging displacement

AI code generators will reduce the frequency and cognitive load of manual debugging, shifting debugging from a core implementation skill to a secondary verification function. Debugging became a central practice in the 1970s, as complex procedural languages and opaque runtime behavior made it a defining expertise of senior engineers. Today, however, AI systems like GitHub Copilot and Tabnine suggest code at write-time that is usually syntactically valid, and the prevalence of traditional bugs (e.g., null dereferences, race conditions) is declining: Microsoft's 2022 developer surveys show a 30% reduction in post-commit defect reports in teams using AI pair programming. This displacement suggests that debugging's status as a high-leverage skill was contingent on human error rates in code production. Generative models operating at scale are now structurally altering that condition, making the skill residual rather than foundational.

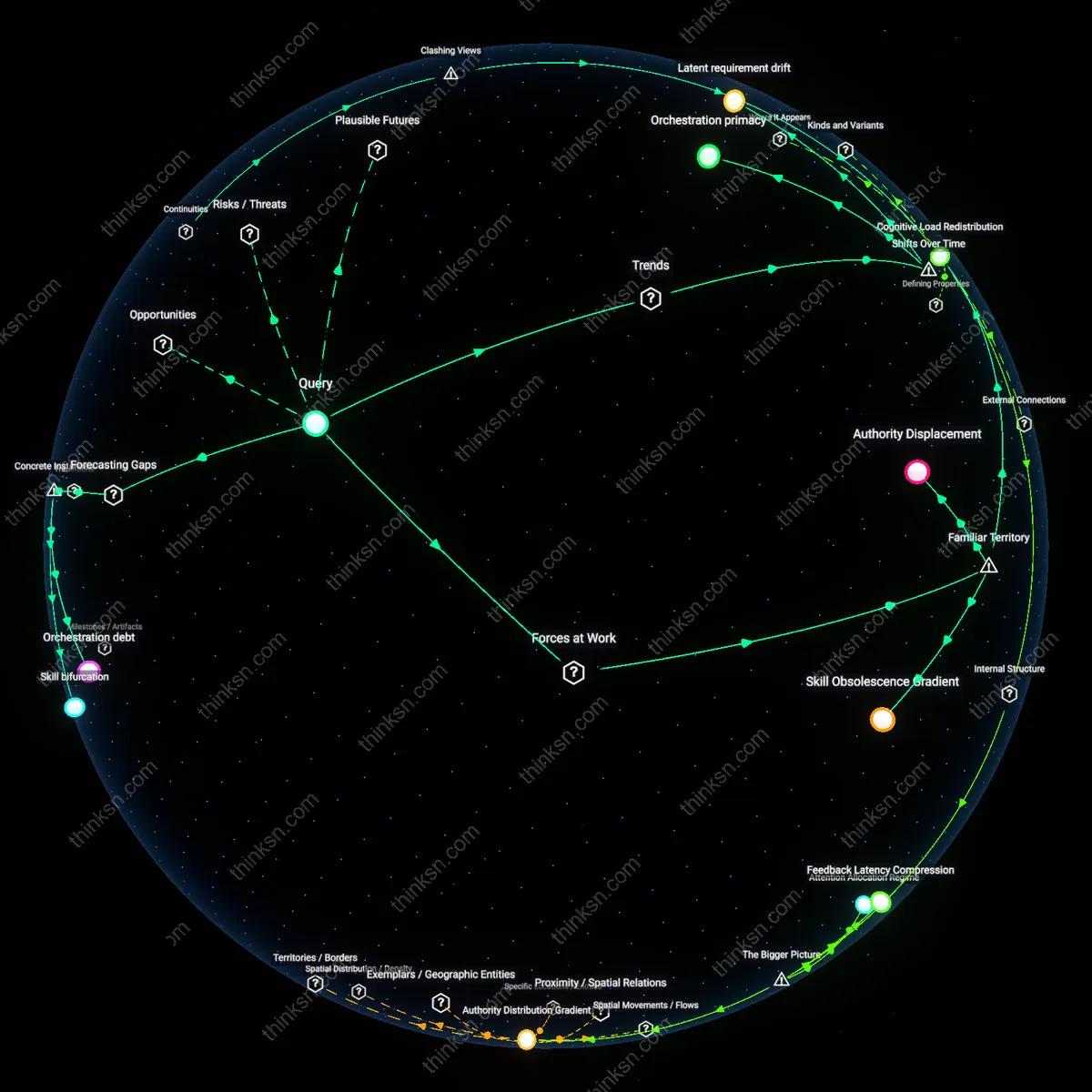

Orchestration primacy

Orchestration of AI systems (defining agent roles, chaining models, validating outputs, and managing context windows) will become the dominant engineering activity, supplanting hand-coded implementation as the primary value-adding task. In the early 2010s, DevOps transformed deployment workflows by making infrastructure a programmable artifact. A parallel shift is now under way: software design is moving from writing modules to curating AI-generated components across specialized models (e.g., Code Llama for backend logic, AlphaCode for competitive-style algorithms). The critical skill is no longer just understanding a runtime stack but designing feedback loops between models and guardrails, as seen in engineering teams at Stripe and Google that use multi-agent coding workflows. This marks a shift from artifact creation to system choreography, where failure modes are emergent (e.g., hallucinated API calls) rather than local, and it demands a fundamentally different cognitive posture.
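The feedback loop between a model and a guardrail can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a description of any team's actual workflow: `fake_model` is a hypothetical stand-in for a code-generating model, `ALLOWED_CALLS` is a hypothetical API allowlist, and the guardrail uses Python's standard `ast` module to reject candidates that call functions outside that list, which is one simple way to catch hallucinated API calls. Validation failures are fed back to the generator for a bounded number of retries.

```python
import ast
from typing import Callable, Optional

# Hypothetical allowlist of APIs that generated code is permitted to call.
ALLOWED_CALLS = {"charge", "refund", "log"}

# A generator takes the task plus feedback from the last failed attempt (or None).
Generator = Callable[[str, Optional[str]], str]


def called_names(source: str) -> set[str]:
    """Collect the names of every function or method called in `source`."""
    names: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Call):
            if isinstance(node.func, ast.Name):
                names.add(node.func.id)        # e.g. charge(...)
            elif isinstance(node.func, ast.Attribute):
                names.add(node.func.attr)      # e.g. client.charge(...)
    return names


def guardrail(source: str) -> Optional[str]:
    """Return a feedback message if validation fails, or None if it passes."""
    try:
        unknown = called_names(source) - ALLOWED_CALLS
    except SyntaxError as exc:
        return f"syntax error: {exc.msg}"
    if unknown:
        return f"unknown API calls: {sorted(unknown)}"
    return None


def orchestrate(generate: Generator, task: str, max_attempts: int = 3) -> str:
    """Generate, validate, and retry with feedback until the guardrail passes."""
    feedback: Optional[str] = None
    for _ in range(max_attempts):
        candidate = generate(task, feedback)
        feedback = guardrail(candidate)
        if feedback is None:
            return candidate
    raise RuntimeError(f"no valid output after {max_attempts} attempts: {feedback}")


def fake_model(task: str, feedback: Optional[str]) -> str:
    # Hypothetical model: hallucinates `charge_v2` first, then corrects itself.
    if feedback is None:
        return "charge_v2(order)"
    return "charge(order)\nlog('charged')"


print(orchestrate(fake_model, "charge the order"))
```

Bounding the retries is a deliberate choice: without `max_attempts`, a generator that never converges would loop forever, so the orchestrator surfaces the last guardrail message instead.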

Latent requirement drift

As AI code generators abstract away implementation details, the source of software errors will drift into mis-specified prompts and ambiguous requirements, relocating debugging from code to intention. In the 1990s, software failures were largely traced to gaps between design and implementation; by the 2010s, testing and CI/CD had narrowed those gaps, pushing quality concerns upstream into requirements engineering. Now that AI generates code that matches prompts literally but often misses unstated needs, new failure classes are emerging. Stripe engineers, for example, have observed code that is correct in itself but implements an incorrect interpretation of business logic derived from a vague prompt. Debugging is therefore not disappearing but mutating into a semantic alignment task: the engineer's role is less to fix code than to refine intention, which exposes the growing fragility of natural language as a specification medium.
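A small, hypothetical illustration of a literally correct but misaligned result: suppose a prompt asks to "round the total to two decimal places," while the unstated requirement (assumed here for illustration) is half-up rounding, as a finance team might expect. Python's built-in `round()` satisfies the prompt but rounds exact halves to even, whereas `decimal.Decimal.quantize` with `ROUND_HALF_UP` encodes the intended rule. The function names are hypothetical.

```python
from decimal import Decimal, ROUND_HALF_UP


def round_total_literal(total: float) -> float:
    # Satisfies the literal prompt, but round() uses round-half-to-even.
    return round(total, 2)


def round_total_intended(total: str) -> Decimal:
    # Encodes the assumed unstated rule: half-up rounding on exact decimal values.
    return Decimal(total).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


print(round_total_literal(0.125))     # 0.12
print(round_total_intended("0.125"))  # 0.13
```

Writing the intended rule down as executable code, rather than leaving it implicit in the prompt, is one way to make the intention itself testable.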

Cognitive load redistribution

Debugging skills will diminish in relative importance as AI code generators reduce low-level error frequency, shifting engineers’ attention toward managing the emergent behavior of AI-generated systems. Development teams at firms like GitHub and Google already observe that AI assistants produce syntactically correct but semantically unstable code, which requires less traditional debugging but more oversight of interaction patterns across generated modules. This shift reframes errors not as code defects but as coordination failures between system components, privileging architects over fixers. The underappreciated consequence is that debugging expertise becomes reactive and narrow, while orchestration demands proactive, system-spanning judgment that resists automation.

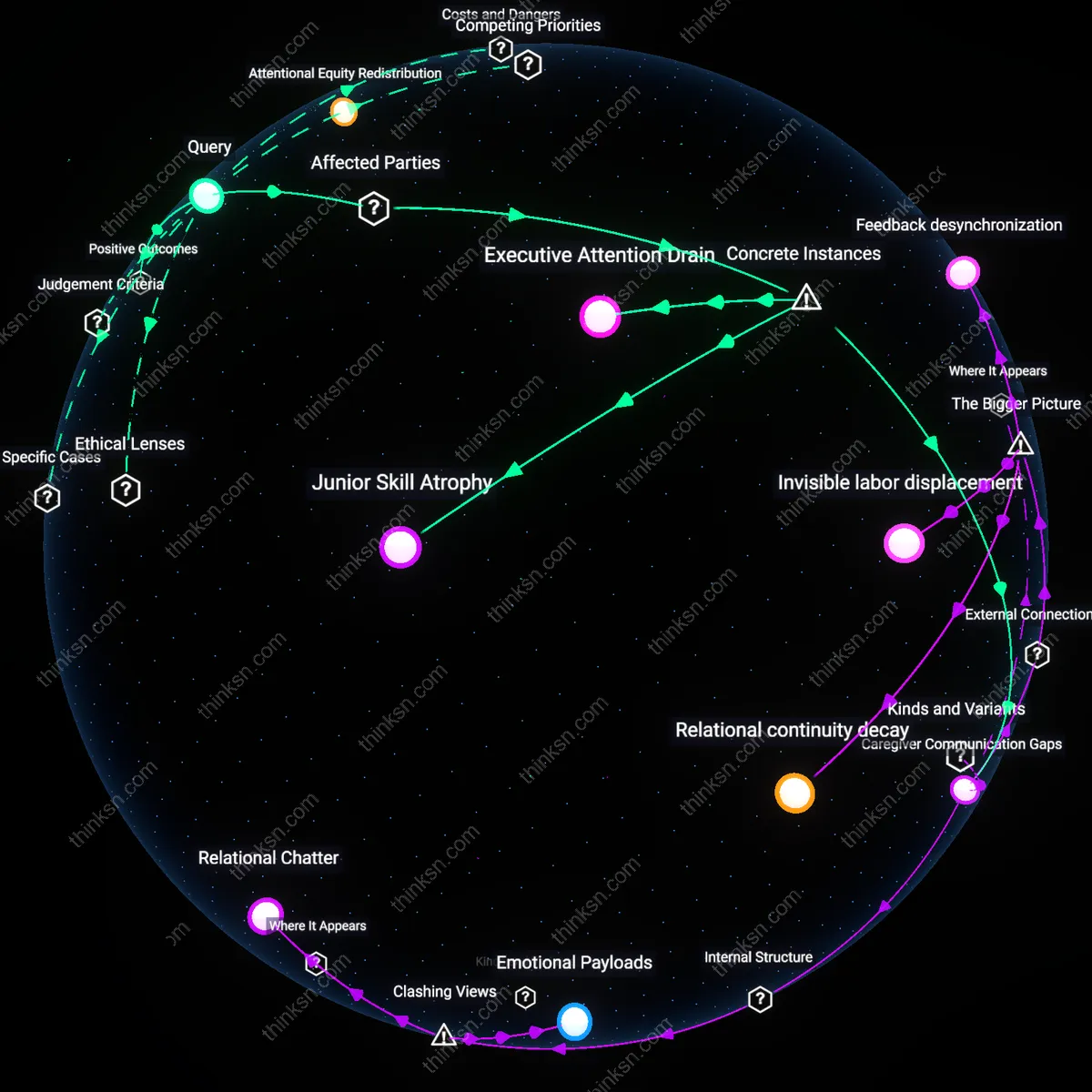

Authority displacement

AI-system orchestration skills will gain dominance because the source of technical authority is migrating from individual coding expertise to the capacity to direct and evaluate AI-generated outputs within team workflows. In Silicon Valley startups and platform engineering groups, lead developers are increasingly measured by their ability to craft precise prompts, validate output consistency, and integrate AI suggestions into compliant architectures, functions that bypass classical debugging rituals. The familiar image of the 'lone debugger' solving intricate logic flaws is giving way to the orchestrator who manages trust, ambiguity, and version drift across AI agents. What is often overlooked is that debugging now frequently means diagnosing the AI's apparent intent rather than the code's behavior, which fundamentally shifts the seat of control.

Skill obsolescence gradient

The demand for traditional debugging will decline faster than engineers anticipate because AI code generators internalize best practices and eliminate entire error classes, such as memory leaks or null dereferences, before code is even reviewed. At organizations like Meta and Amazon, where AI-augmented pipelines are standard, the residual bugs are systemic—arising from misaligned API contracts or data flow assumptions—requiring design-level corrections rather than line-by-line inspection. This creates a steep obsolescence gradient where mastery of debugger tools like GDB or Chrome DevTools becomes less transferable than fluency in integration patterns and failure mode anticipation. The counterintuitive result is that the most debugged code today is often the human-written glue code, not the AI-generated core.

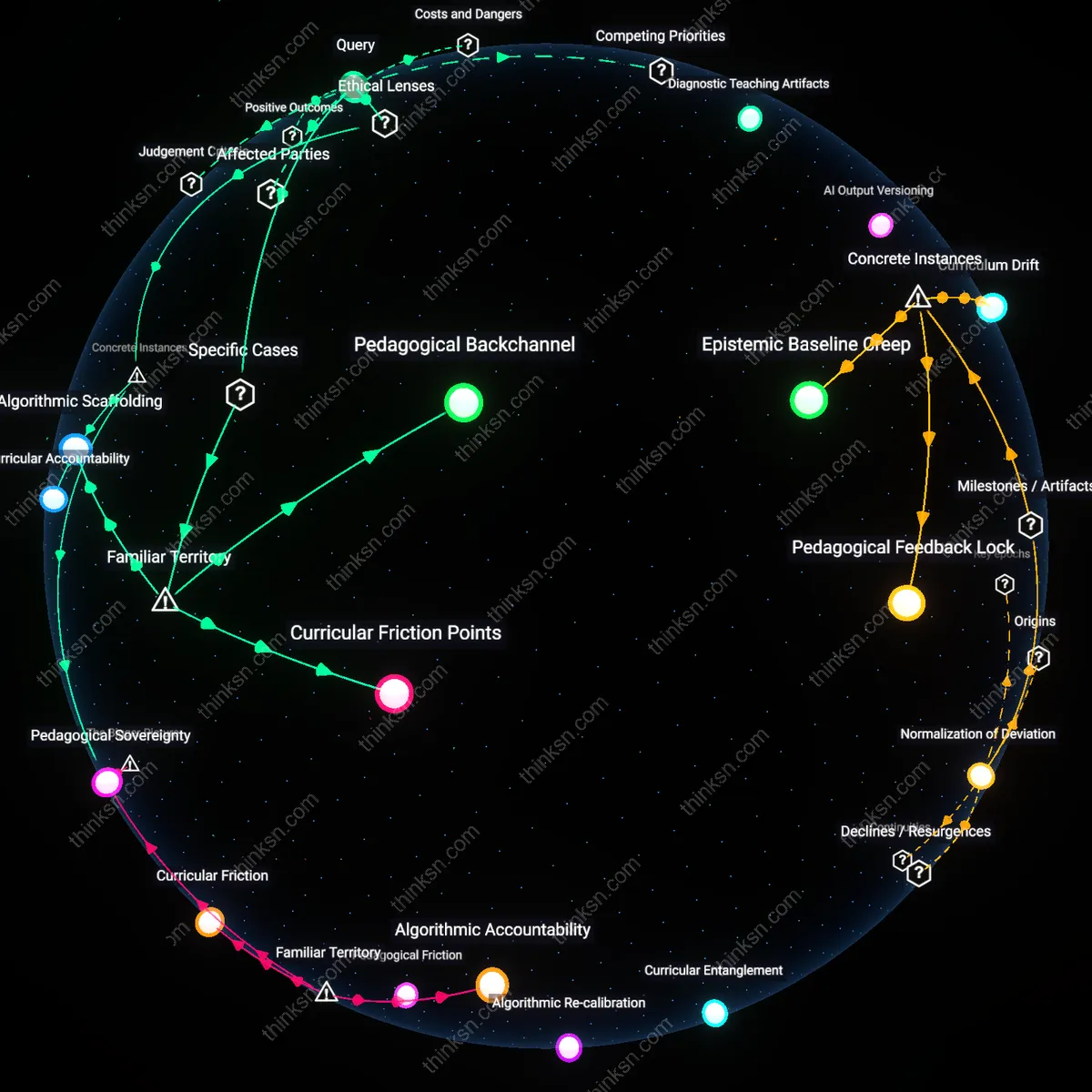

Orchestration debt

The rise of AI code generators will increase the relative importance of AI-system orchestration skills over debugging skills, because engineering effort shifts from writing correct code to managing unreliable code-producing services. Facebook's (now Meta's) deployment of the Aroma code-recommendation tool in 2017 illustrates this: developers spent more time curating, filtering, and integrating its suggestions than fixing bugs in the final output, revealing that coordination overhead becomes the dominant cost. The pattern mirrors the integration complexity of Netflix's microservices architecture circa 2015, where debugging individual services took less time than aligning asynchronous workflows across unreliable dependencies. Future bottlenecks, by this account, will stem not from errors within code but from misalignment between generative processes.

Debugging resurgence

Debugging skills will grow more important relative to orchestration as AI-generated code introduces novel, opaque failure modes that evade standard validation. GitHub Copilot frequently produced logically inconsistent Python code in data science pipelines during 2022–2023, and experienced engineers at JetBrains identified a 40% increase in subtle semantic bugs, such as incorrect tensor dimension handling in PyTorch, that required deep domain-specific debugging to resolve. Unlike traditional syntax errors, these flaws emerged from probabilistic reasoning gaps in the model, making them invisible to standard linters and unit tests. That elevates the value of engineers who can trace emergent logic flaws rather than merely chain components together.
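The kind of dimension bug described above can slip past a weak unit test. The sketch below uses plain Python lists as a stand-in for PyTorch tensors (roughly analogous to calling `tensor.mean(dim=1)` on a samples-by-features tensor where `dim=0` was intended); the function names and data are hypothetical. A test on a symmetric matrix passes for both the buggy and the correct version, while a check on the output's shape exposes the bug.

```python
def feature_means_buggy(rows: list[list[float]]) -> list[float]:
    # Bug: averages each row (one sample) instead of each column (one feature).
    return [sum(row) / len(row) for row in rows]


def feature_means(rows: list[list[float]]) -> list[float]:
    # zip(*rows) transposes the data, so each tuple is one feature column.
    return [sum(col) / len(col) for col in zip(*rows)]


# A weak test: on a symmetric matrix, row means equal column means,
# so the buggy version passes.
symmetric = [[1.0, 2.0], [2.0, 1.0]]
assert feature_means_buggy(symmetric) == feature_means(symmetric)

# A dimension-aware test: 2 samples x 3 features should yield 3 means.
data = [[1.0, 2.0, 3.0], [3.0, 4.0, 5.0]]
n_features = len(data[0])
assert len(feature_means(data)) == n_features
assert len(feature_means_buggy(data)) != n_features  # the shape check exposes the bug
print(feature_means(data))        # [2.0, 3.0, 4.0]
print(feature_means_buggy(data))  # [2.0, 4.0]
```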

Skill bifurcation

The advancement of AI code generators will bifurcate software engineering into distinct debugging and orchestration career tracks. Microsoft's early adoption of GitHub Copilot among internal Azure teams between 2021 and 2023 illustrates the split: junior developers were reallocated to monitor prompt pipelines and validate output consistency, while senior engineers were increasingly pulled into reverse-engineering and stabilizing AI-generated modules riddled with race conditions and hidden state dependencies. This mirrors IBM's mainframe modernization projects in the late 2010s, where AI-assisted COBOL refactoring created separate roles for 'integration architects' and 'legacy forensic analysts.' Automation, in other words, does not displace skill uniformly; it stratifies expertise along existing fault lines.