Do AI-graded essays stunt student reflection?

Analysis reveals 10 key thematic connections.

Key Findings

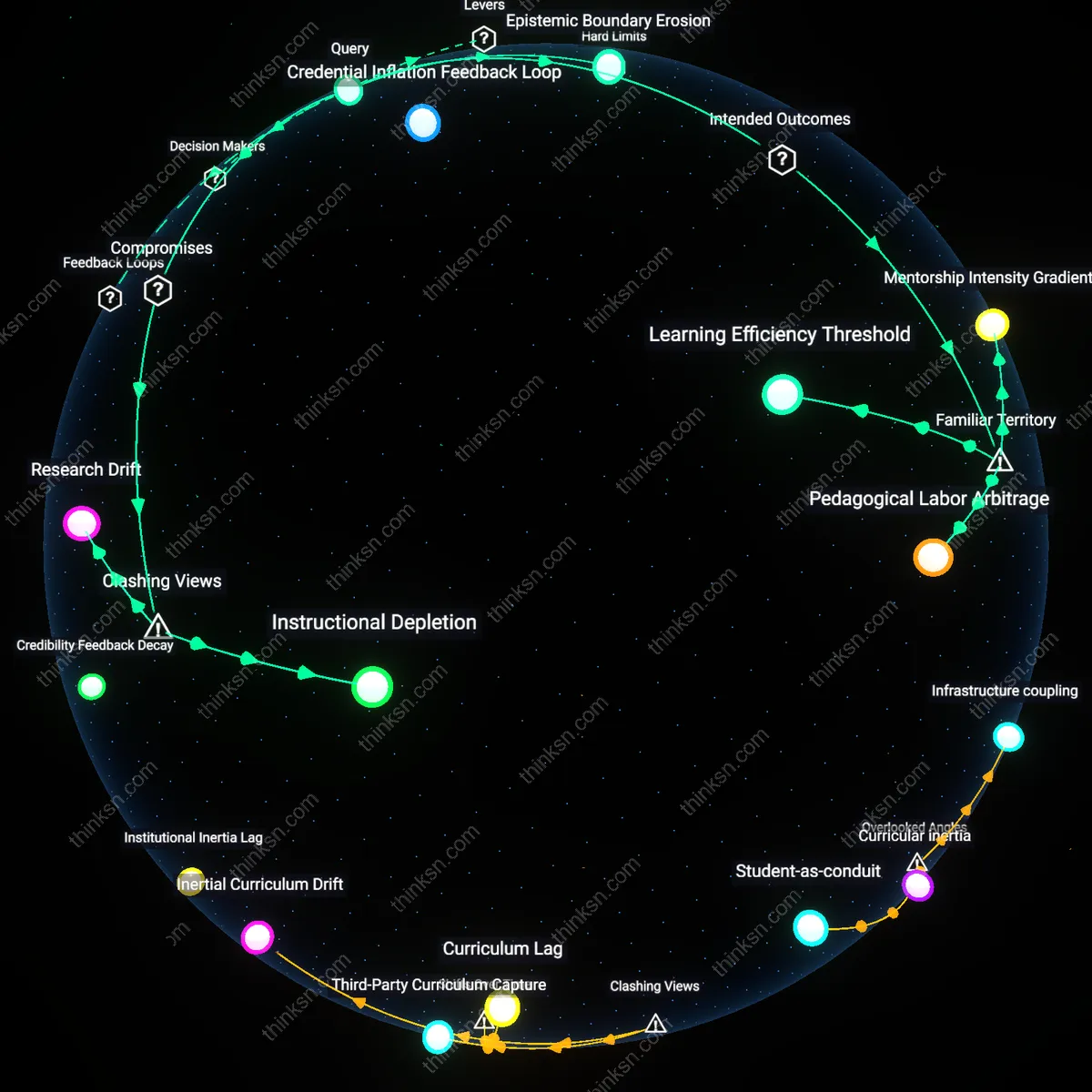

Automated Authority

Using AI to grade essays strengthens formative feedback by decoupling assessment from instructor bias, enabling more consistent and equitable critique that students are more likely to trust and act upon. In large public university writing programs, such as those in the California State system, AI graders calibrated to rubrics based on decades of scored student writing deliver feedback free of the socio-linguistic prejudice that often skews human evaluations, particularly against non-native English speakers. This mechanized objectivity, though depersonalized, increases compliance with revision because students perceive the feedback as impartial and repeatable; self-reflection is enhanced through procedural legitimacy rather than interpersonal rapport. This underappreciated dynamic reframes AI not as a feedback diminisher but as a credibility-enhancing medium whose lack of empathy paradoxically boosts pedagogical efficacy by installing an impersonal authority whose judgments are harder to contest.

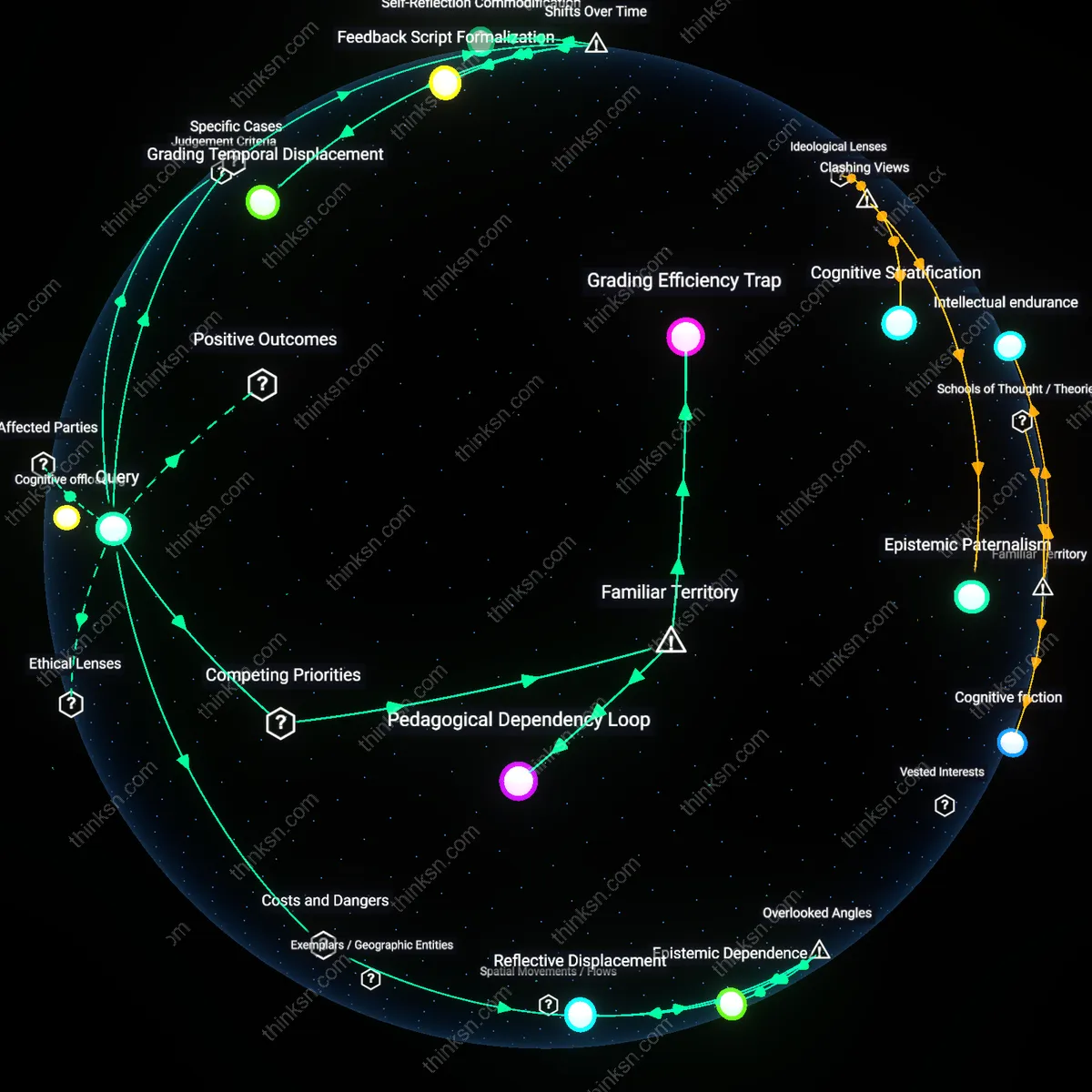

Cognitive Offloading

AI grading erodes self-reflection not through poor feedback quality but by shifting the cognitive labor of evaluation from student to system, making metacognition a residual habit rather than a practiced skill. In secondary schools using Turnitin’s Feedback Studio with AI-assisted scoring, teachers increasingly rely on automated comments—such as 'Needs stronger evidence'—which students accept as sufficient critique without engaging in deeper diagnosis of their own work; the immediacy and volume of AI-generated remarks displace the slower, dialogic process of instructor-student negotiation over writing quality that traditionally scaffolds autonomous judgment. This unnoticed mechanism reveals that even accurate, consistent AI feedback undermines formative goals not because it is wrong, but because its very efficiency removes the friction necessary for students to develop their own evaluative intelligence.

Feedback Occlusion

Using AI to grade essays systematically blocks students’ ability to engage with ambiguous or contested evaluations, because algorithmic grading prioritizes resolution over dialectic. Unlike human instructors, who might flag interpretive tension as part of intellectual development, AI systems eliminate uncertainty by design, replacing dialogic feedback with fixed verdicts. This occlusion stems not from a technological flaw but from structural purpose: automated systems optimize for consistency, thereby erasing the pedagogical value of interpretive friction that traditionally prompts self-reflection. The overlooked danger is not bias or inaccuracy but the silent removal of cognitive dissonance as a learning engine, which transforms feedback from a site of engagement into a terminal output.

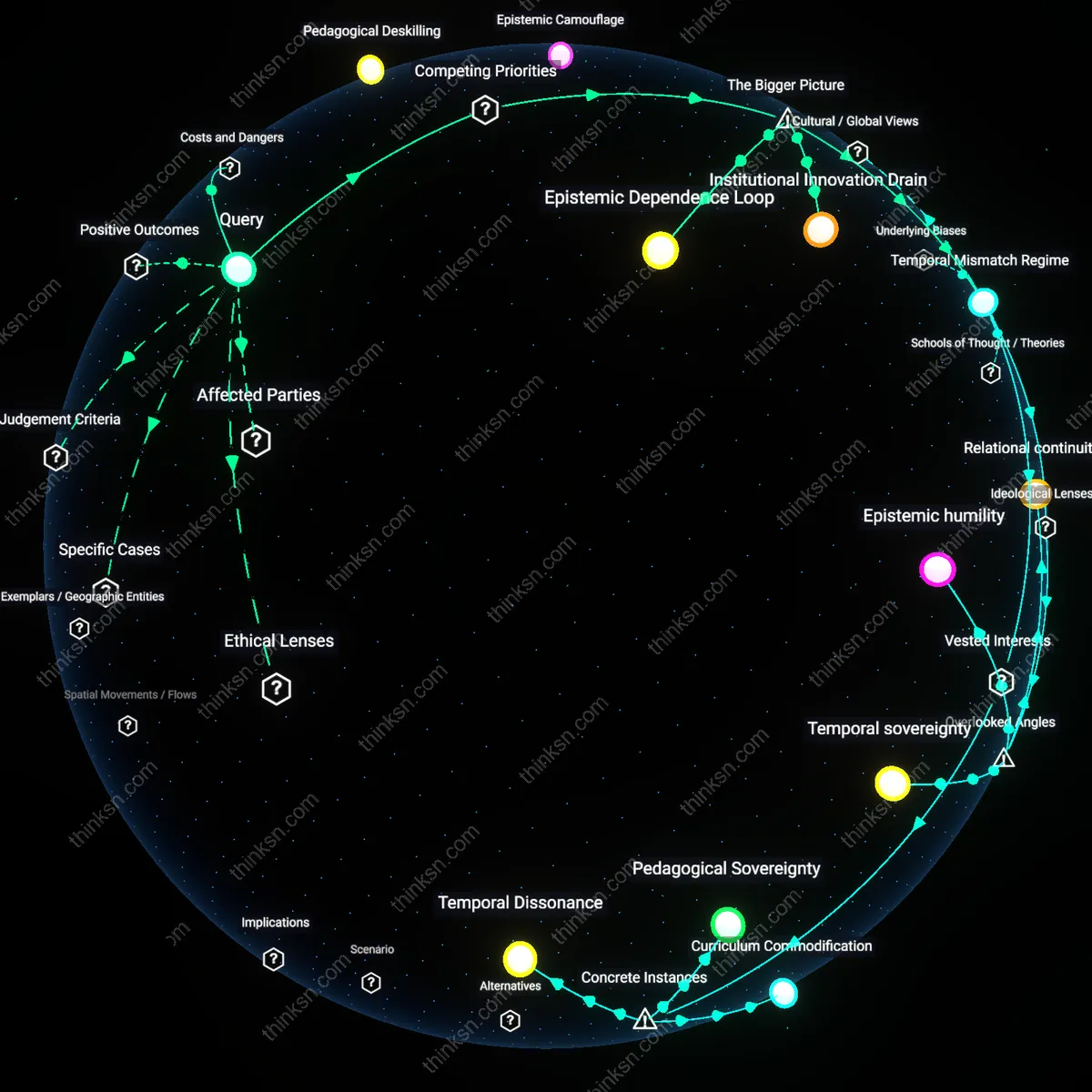

Epistemic Dependence

Deploying AI graders quietly binds educators to opaque epistemic frameworks that predefine what constitutes rhetorical quality, such as privileging syntactic conformity over narrative invention, thereby making teachers inadvertently enforce norms they did not author and cannot modify. Because these evaluative criteria are embedded in model training data—often drawn from standardized test corpora—teachers lose curricular agency not through top-down mandate but through technical necessity, as overriding AI feedback at scale is institutionally impractical. This dependence is rarely acknowledged because it does not appear as coercion but as workflow, yet it reshapes pedagogy by aligning classroom outcomes with the unacknowledged epistemology of legacy assessment datasets.

Reflective Displacement

AI grading shifts the locus of self-reflection from internal heuristic development to external validation calibration, because students learn to reverse-engineer algorithmic logic rather than cultivate personal writing values. For example, they revise phrases not because the phrases feel inauthentic but because similar phrases previously triggered low scores. This displacement occurs gradually through repeated interactions with automated feedback loops, which reward pattern compliance over introspective risk-taking; the danger lies in producing writers who are highly adaptive to machine assessment but alienated from their own authorial intuition. The underrecognized cost is not reduced critical thinking per se, but its redirection toward gaming the scoring model’s decision boundaries instead of exploring conceptual originality.

Grading Efficiency Trap

Using AI to grade essays prioritizes scalable, uniform evaluation at the expense of personalized feedback, directly weakening the pedagogical conditions for self-reflection. Teachers, pressured by standardized testing mandates and large class sizes, outsource evaluation to algorithms that deliver consistent scores but lack the capacity to engage with a student’s evolving voice or rhetorical intent. This mechanistic consistency reinforces the illusion of fairness while hollowing out the interpretive dialogue between reader and writer—the very core of formative growth. The non-obvious cost is that efficiency doesn’t just sideline feedback quality; it redefines what counts as valid assessment in classrooms, making rich commentary feel like a luxury rather than a necessity.

Pedagogical Dependency Loop

Schools adopting AI grading become structurally reliant on its output for timely reporting, curriculum pacing, and accountability metrics, reducing teachers’ time and institutional permission to offer slower, reflective feedback. As educators align their instruction with AI-evaluable criteria—clear thesis, paragraph structure, keyword use—curricula gradually exclude exploratory writing where reflection develops through ambiguity and revision. The shift isn’t driven by teacher preference but by administrative demands to demonstrate consistent, defensible assessment across classrooms. The hidden dynamic is that AI doesn’t merely assist grading; it reshapes teaching priorities by making certain kinds of learning visible and rewarded while rendering introspective growth invisible and thus irrelevant.

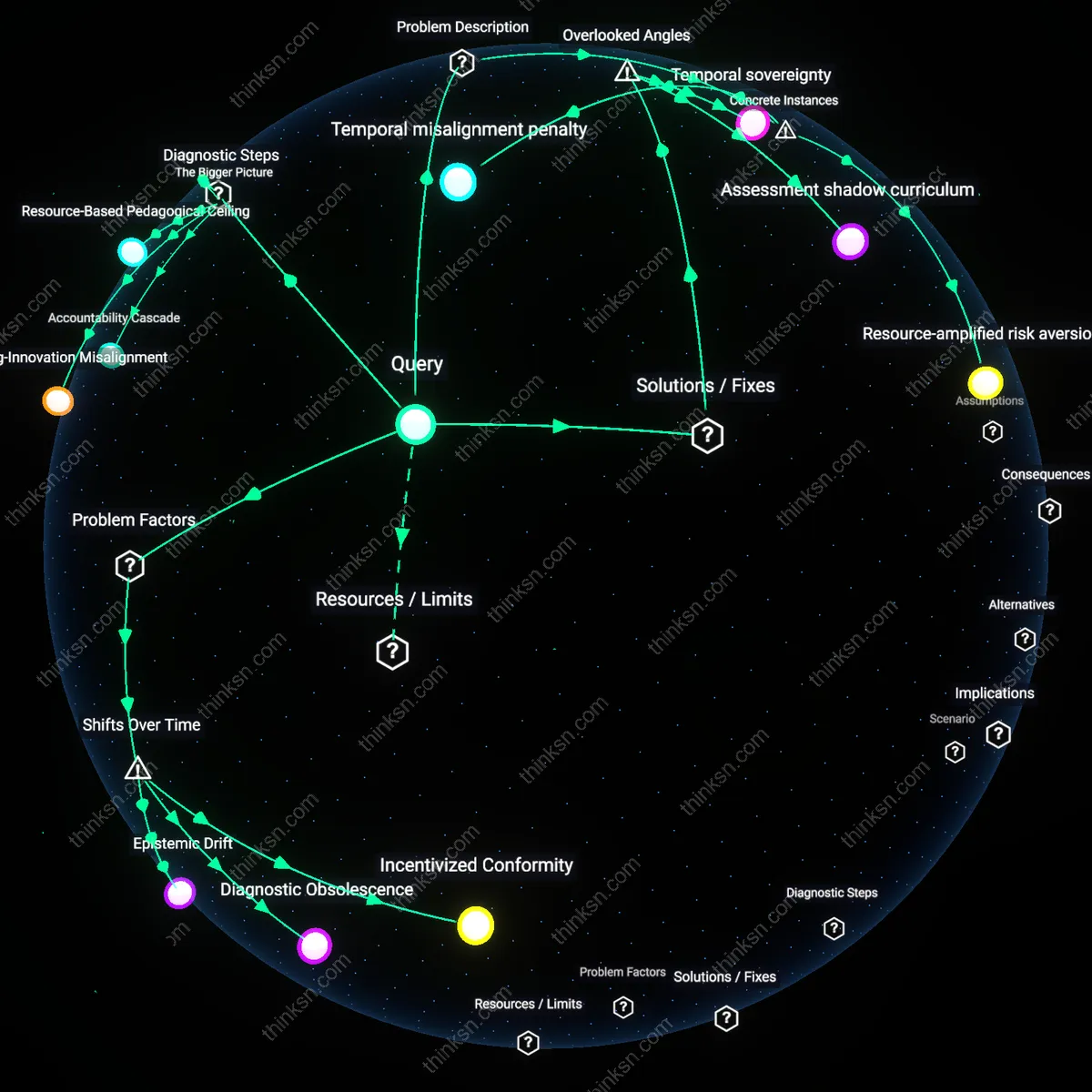

Grading Temporal Displacement

Using AI to grade essays decouples feedback timing from pedagogical rhythm, delaying actionable responses beyond the cognitive window when students engage most critically with their work. At public universities like Arizona State, where automated essay scoring was scaled after 2015 to handle large first-year composition courses, instructors report feedback arriving 48–72 hours after submission—double the prior turnaround—because AI pipelines batch-process submissions overnight and require manual overrides for borderline cases. This shift from immediate, classroom-embedded feedback cycles to backend, system-coordinated timing disrupts the developmental moment when students are most receptive to revising their thinking, revealing how automation reorders educational temporality not by design but by infrastructural necessity.

Feedback Script Formalization

Automated essay scoring in the UK’s GCSE exam system since 2020 has progressively narrowed the range of acceptable feedback language by encoding assessor rubrics into static AI-generated comments, reducing the contingent, dialogic nature of formative input. Exam boards like AQA now deploy natural language generation tools that map student errors to pre-approved correction scripts, which teachers then relay without modification due to accountability constraints. This marks a departure from the 2000s-era practice of teacher-authored, context-sensitive feedback, exposing how AI entrenches bureaucratic standardization in pedagogy by freezing feedback into repeatable, auditable statements that privilege consistency over insight.

Self-Reflection Commodification

In the post-2022 expansion of AI grading on platforms like Turnitin’s Feedback Studio, student self-reflection is increasingly treated as a measurable textual artifact rather than an internal process, with algorithms flagging ‘reflection depth’ based on keyword density and syntactic complexity. Institutions such as the University of Melbourne have adopted these metrics to assess reflective journals in capstone courses, shifting expectations from introspective authenticity to performative compliance. This transition, accelerated by AI’s need for quantifiable inputs, transforms self-reflection into a genre optimized for algorithmic recognition, revealing how formative development is redefined as output conformity within automated assessment regimes.
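To make the concern about keyword density and syntactic complexity concrete, here is a minimal sketch of how a surface-level "reflection depth" score could behave. Everything in it is an assumption made for illustration: the keyword list, the weights, the use of average sentence length as a stand-in for syntactic complexity, and the function names are all hypothetical and do not describe how Turnitin or any other product actually scores reflective writing.

```python
import re

# Hypothetical keyword list, invented for this sketch only.
REFLECTION_KEYWORDS = {"realized", "learned", "felt", "because", "reflect", "changed"}


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def keyword_density(text):
    """Share of tokens that appear in the hypothetical keyword list."""
    tokens = tokenize(text)
    if not tokens:
        return 0.0
    hits = sum(1 for token in tokens if token in REFLECTION_KEYWORDS)
    return hits / len(tokens)


def mean_sentence_length(text):
    """Crude proxy for syntactic complexity: average words per sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    if not sentences:
        return 0.0
    return sum(len(tokenize(s)) for s in sentences) / len(sentences)


def reflection_depth(text, w_density=0.7, w_length=0.3, length_cap=30):
    """Weighted mix of keyword density and capped sentence length (assumed weights)."""
    length_score = min(mean_sentence_length(text), length_cap) / length_cap
    return w_density * keyword_density(text) + w_length * length_score


# A candid but keyword-free entry versus a keyword-stuffed one.
honest = "I kept the second paragraph. It still seems weak to me, and I am not sure why."
stuffed = "I realized I learned because I felt I changed, and I reflect because I learned."

print(reflection_depth(honest))
print(reflection_depth(stuffed))
```

Under these assumed weights, the keyword-stuffed entry scores higher than the candid one even though it says less. That is the kind of performative compliance the paragraph above describes.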