Does Faster Screening with AI Marginalize Innovative Research?

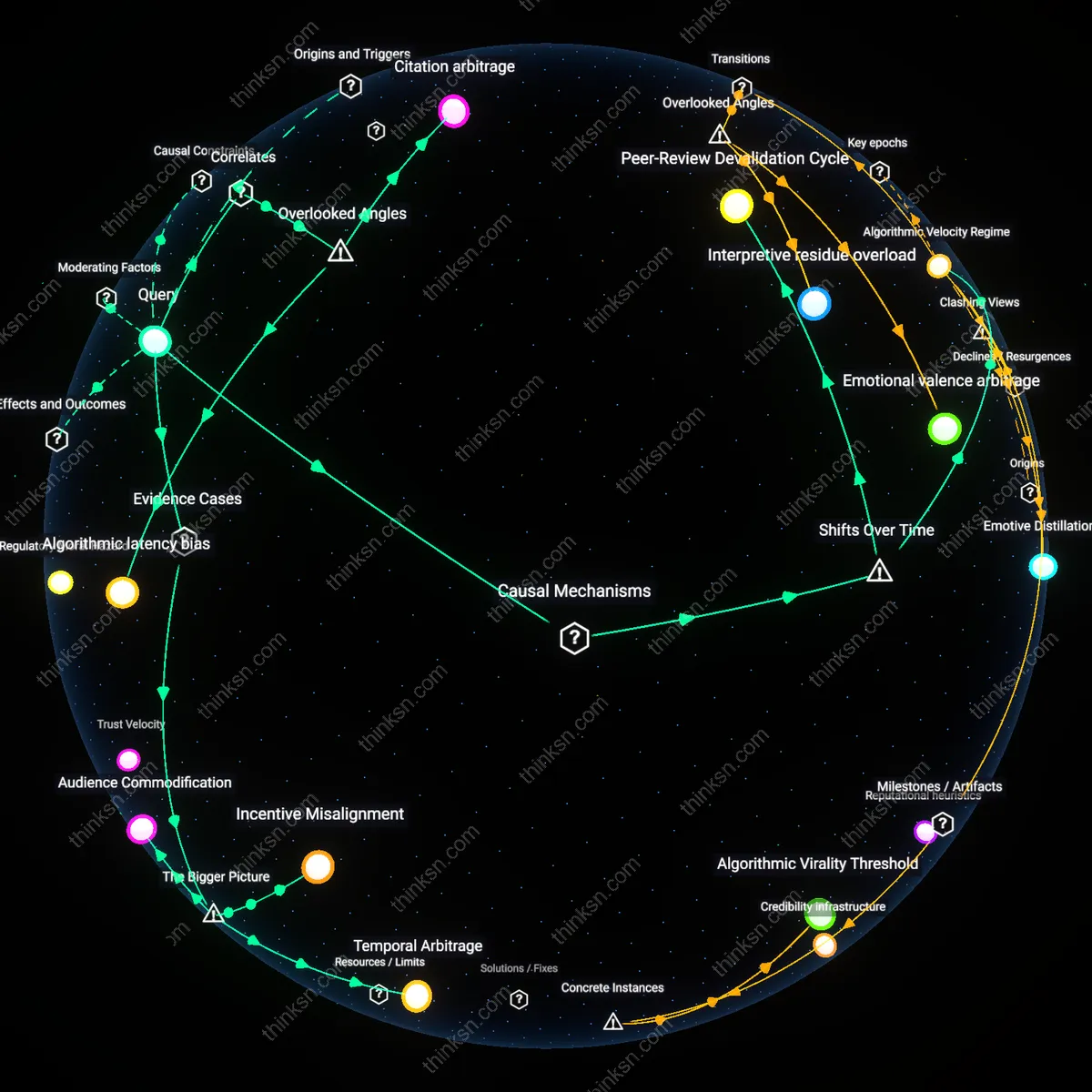

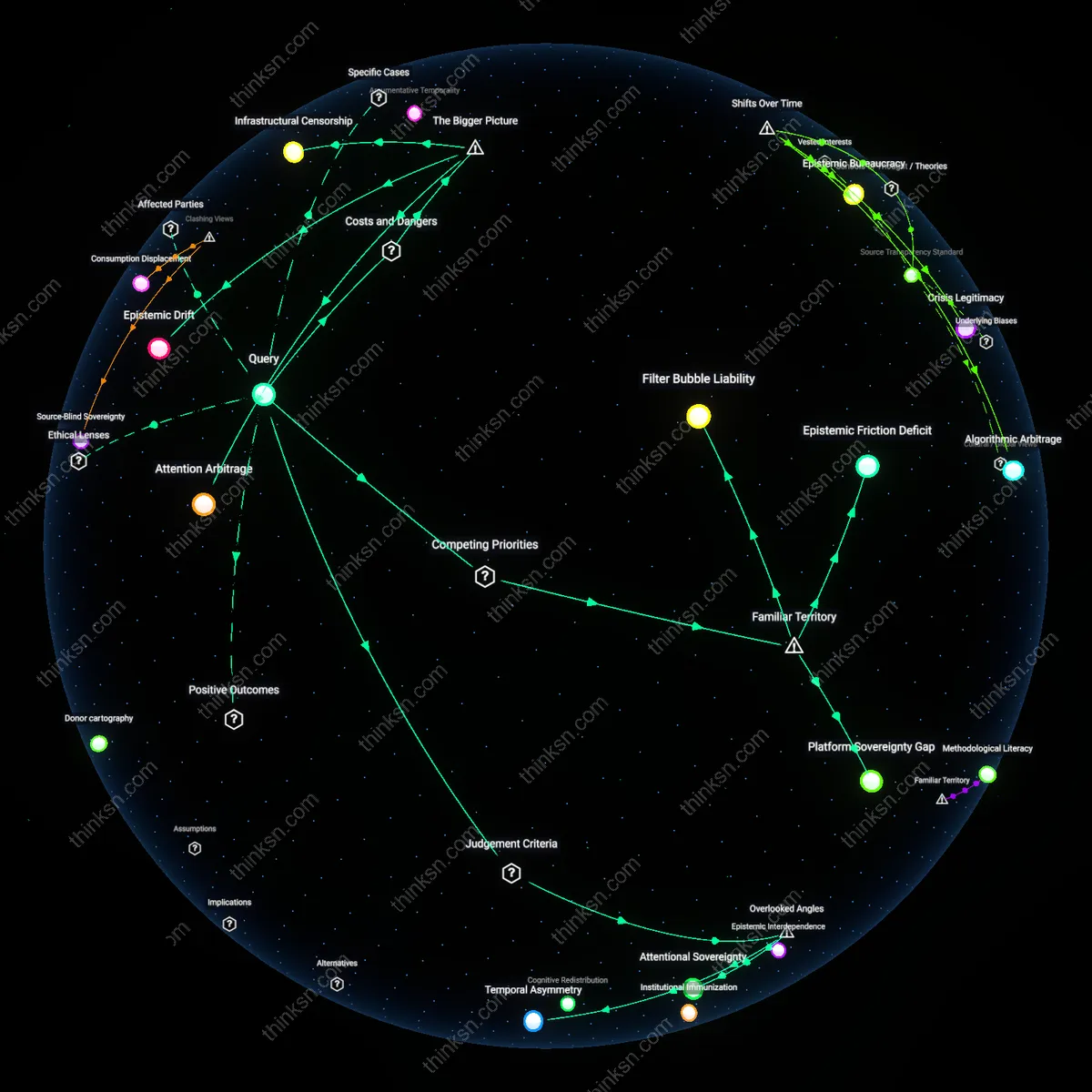

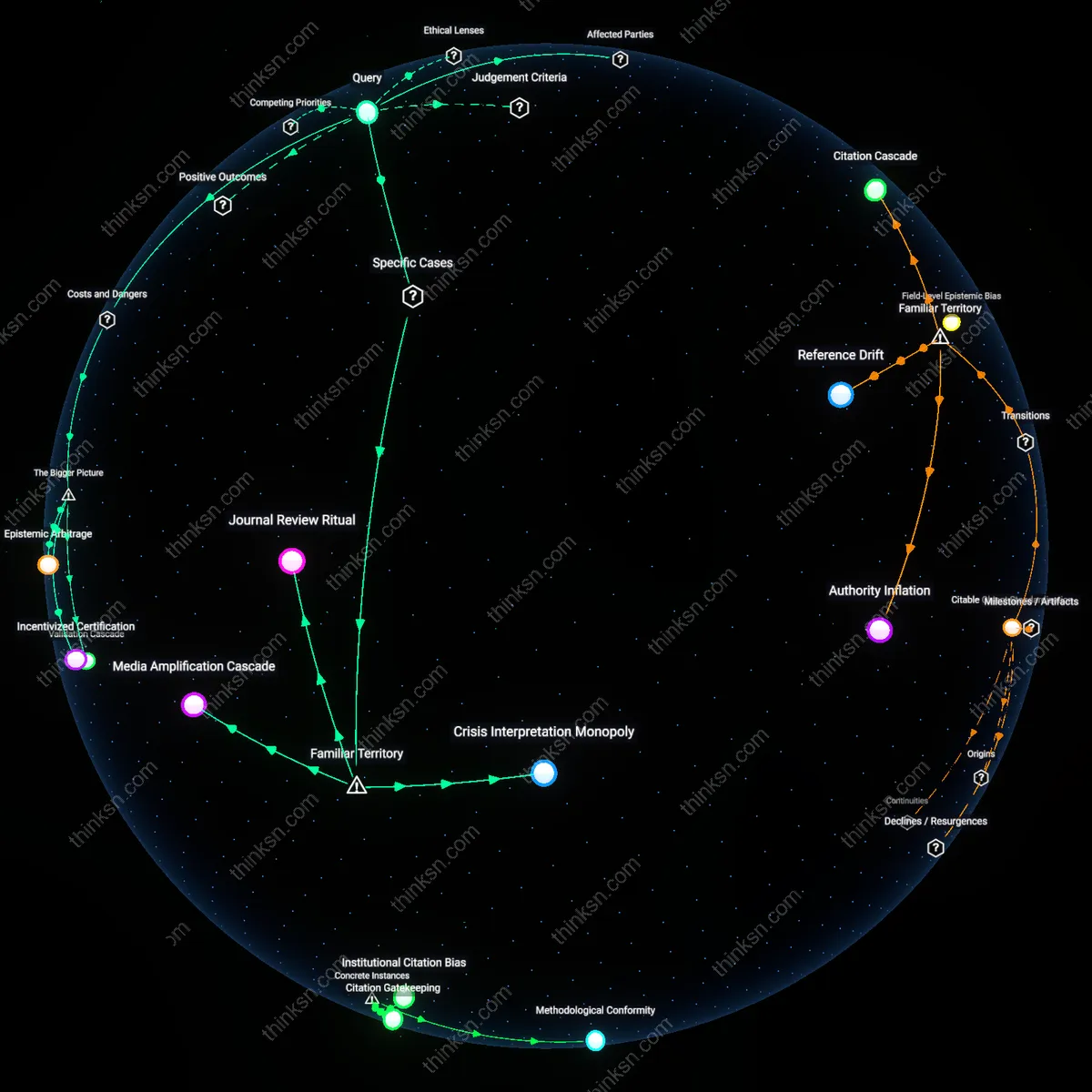

Analysis reveals 5 key thematic connections.

Key Findings

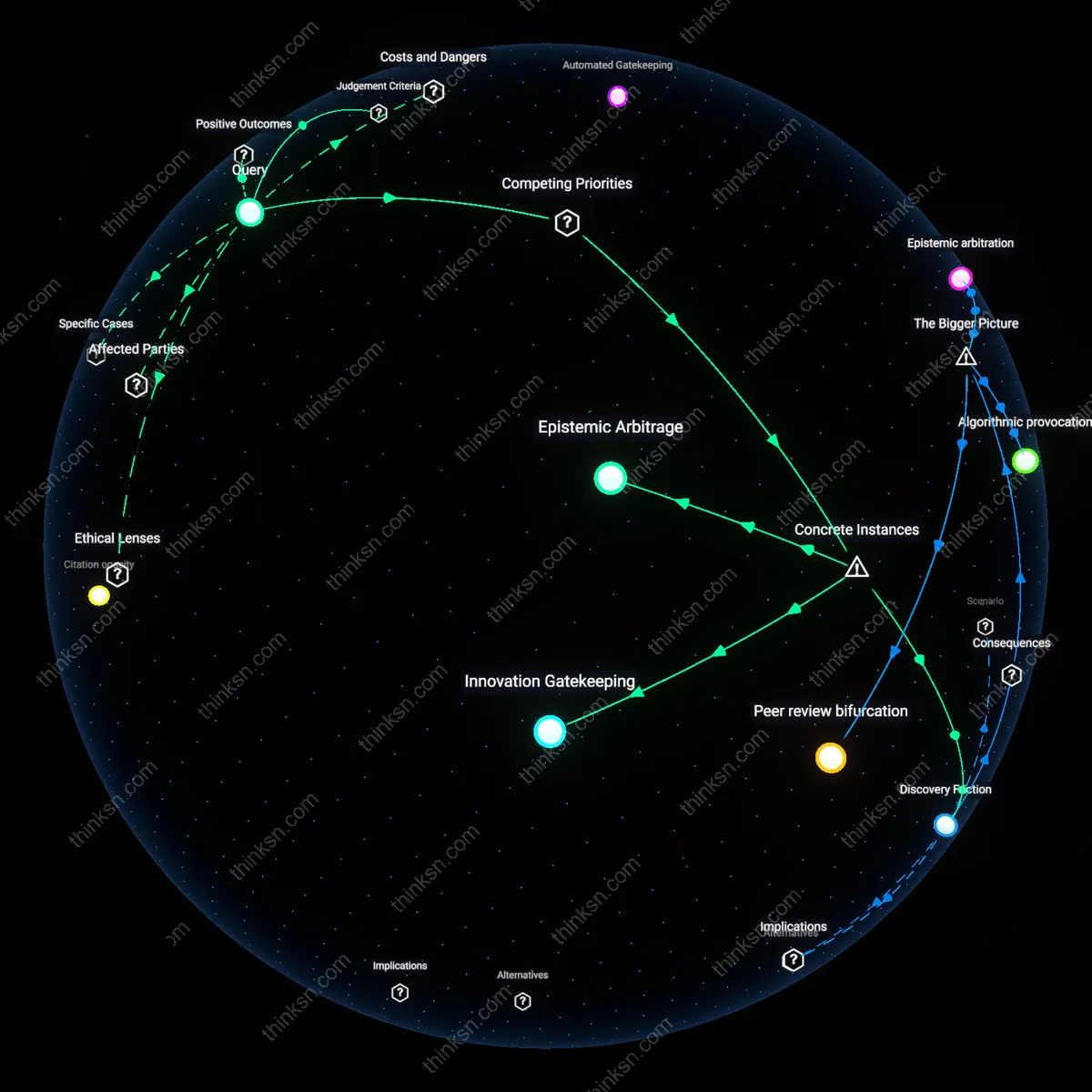

Automated Gatekeeping

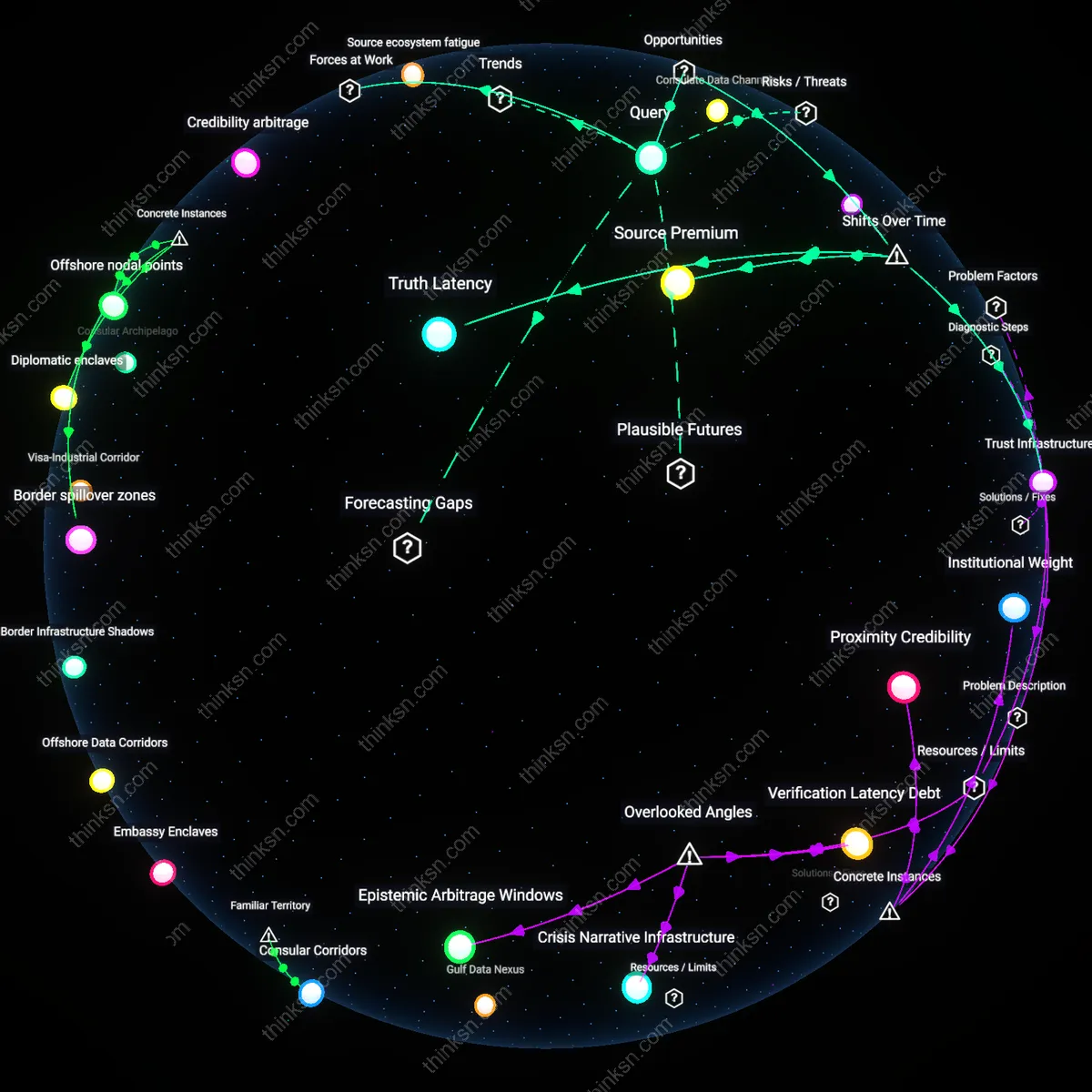

The speed benefits of AI screening do not outweigh its ethical costs. Since the 2010s, the shift from peer-based evaluation to algorithmic triage has reconfigured academic legitimacy around computational efficiency, privileging pattern-recognizable novelty over disruptive heterodoxy. Journal editorial systems in fields like computational biology now deploy AI to filter submissions before human review, using citation vectors and lexical similarity metrics that systematically disadvantage research departing from established paradigms. This mechanism, driven by the expansion of commercial publishing platforms such as Elsevier’s Article Transfer Service, shows how the historical transition from disciplinary self-regulation to industrialized publication has produced automated gatekeeping: not merely a tool, but a structural logic that recasts epistemic risk as processing cost.
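
To make the lexical-similarity mechanism concrete, here is a minimal sketch of how such a filter could behave. It is an illustration under stated assumptions, not a description of any journal's or publisher's actual system: the function names, the toy corpus, the two example abstracts, and the `threshold` value are all hypothetical. It scores a submission by the cosine similarity between its word counts and the pooled word counts of previously accepted papers, then filters out anything below the threshold.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphabetic word tokens."""
    return re.findall(r"[a-z]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items() if term in b)
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def corpus_profile(documents):
    """Pool term counts over the reference corpus (the 'established paradigm')."""
    profile = Counter()
    for doc in documents:
        profile.update(tokenize(doc))
    return profile


def triage(submission, profile, threshold=0.3):
    """Return (score, decision). The threshold is an arbitrary illustrative value."""
    score = cosine(Counter(tokenize(submission)), profile)
    decision = "forward to human review" if score >= threshold else "desk-filter"
    return score, decision


# Toy reference corpus standing in for previously accepted papers (invented text).
accepted = [
    "deep learning model predicts protein structure from sequence data",
    "neural network model improves gene expression prediction from sequence data",
    "machine learning model for protein function prediction using sequence features",
]
profile = corpus_profile(accepted)

# Two invented abstracts: one echoes the corpus vocabulary, one does not.
conventional = "a neural network model for protein structure prediction from sequence data"
heterodox = "ethnographic fieldwork questions how laboratories decide what counts as a fold"

conv_score, conv_decision = triage(conventional, profile)
het_score, het_decision = triage(heterodox, profile)
print(f"conventional: {conv_score:.2f} -> {conv_decision}")
print(f"heterodox:    {het_score:.2f} -> {het_decision}")
```

In this toy setup the conventional abstract is forwarded and the heterodox one is filtered, even though nothing in the score measures the quality of either argument. The filter only measures vocabulary overlap with what has already been published, which is the sense in which similarity-based triage can penalize work that departs from an established paradigm.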

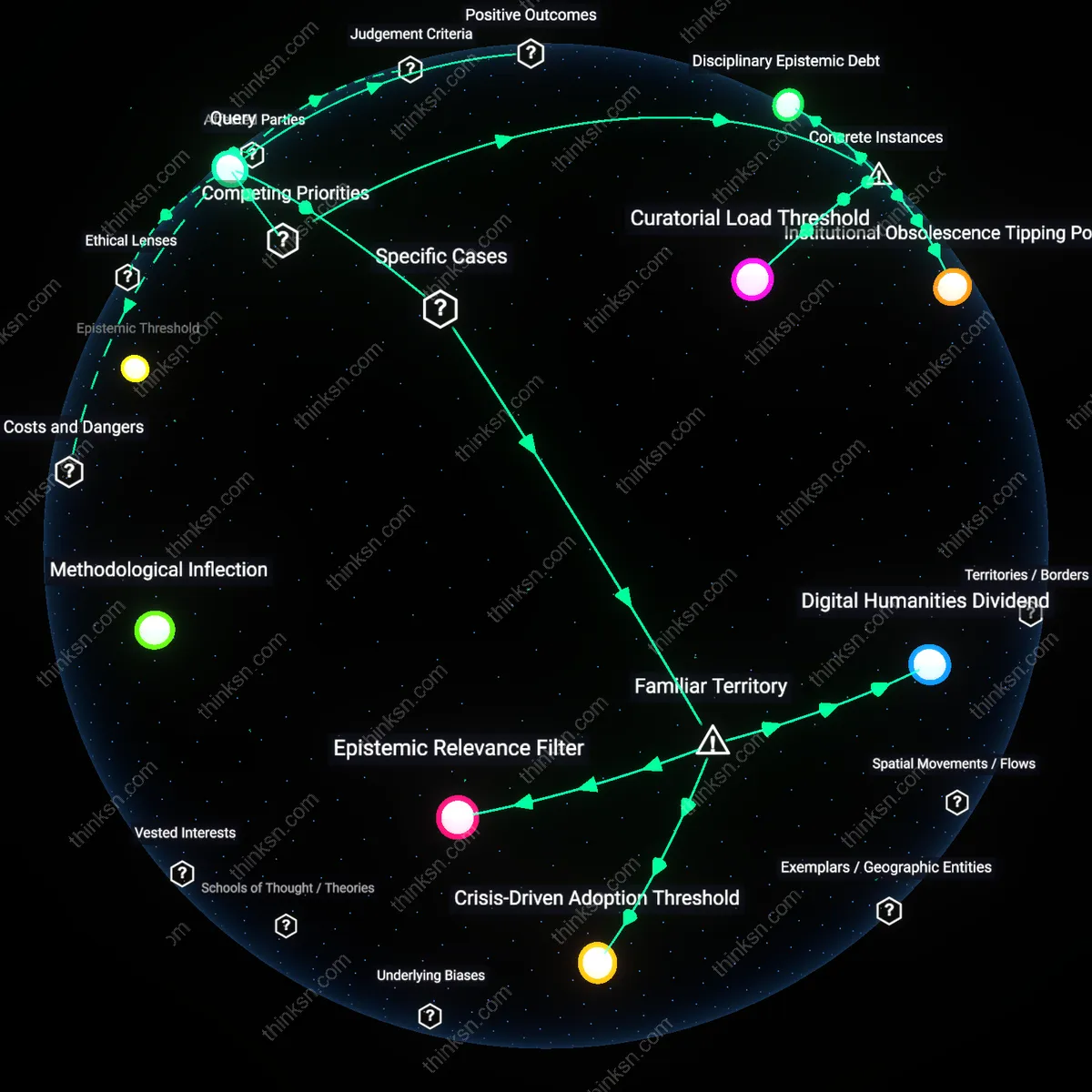

Temporal Compression

AI screening’s speed outweighs the ethical risk of undermining unconventional research only when judged against post-2008 academic temporality, in which grant cycles, tenure clocks, and global rankings compress knowledge production into measurable throughput. As national assessment frameworks like the UK’s REF increasingly emphasize output velocity and citation immediacy, AI tools embedded in submission portals at journals such as *Nature Communications* accelerate review by predicting novelty from training data drawn from the prior decade’s high-impact papers. This shift from cumulative, generational knowledge building to real-time innovation signaling has produced temporal compression: a condition in which the past ten years become the ontological boundary of the thinkable, rendering radical departures epistemically invisible not by intention but by temporal myopia.

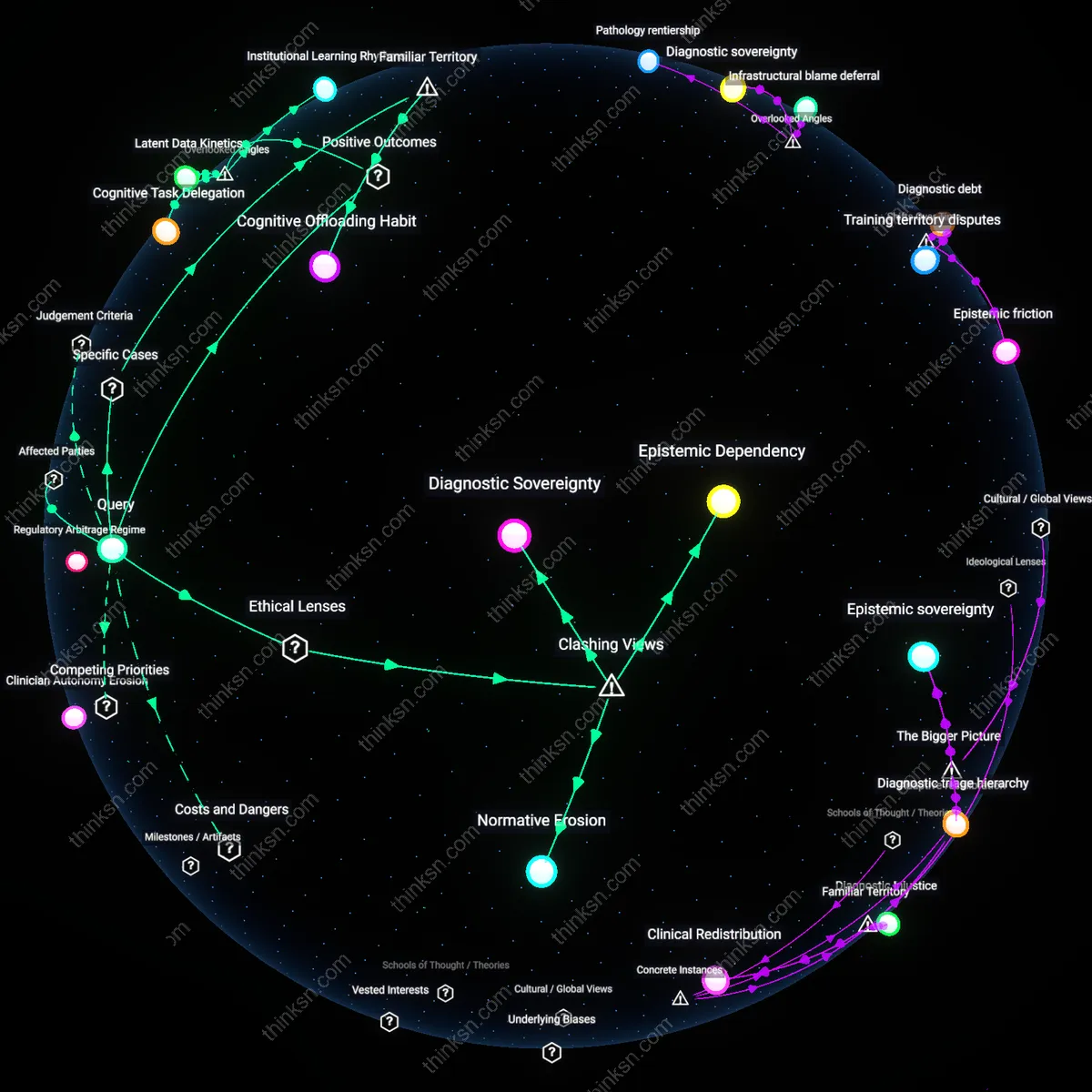

Innovation Gatekeeping

The implementation of AI-driven plagiarism and originality screening in China's academic publishing system systematically disadvantages interdisciplinary and non-positivist research. One example is the 2022 exclusion of Yunnan University anthropologists whose ethnographic methods produced low algorithmic novelty scores. This occurs because machine learning models trained on citation-based metrics privilege statistical divergence over conceptual innovation. Speed in detecting superficial novelty thus enforces methodological orthodoxy.

Epistemic Arbitrage

When the U.S. National Science Foundation piloted AI triage for grant applications in 2021, proposals from historically Black colleges were disproportionately flagged as low-priority because of atypical keyword usage and non-standard framing. The episode exposes how rapid novelty assessment uses linguistic predictability as a proxy for intellectual value. This mechanism rewards research that mimics dominant discourse patterns, so the efficiency of AI screening depends on epistemic assimilation.

Discovery Friction

The 2018 retraction of a novel protein folding hypothesis from *Nature Communications*, later validated as correct, occurred because editorial AI tools dismissed its structural claims as implausible anomalies. The case demonstrates that automated screening prioritizes consensus alignment over high-risk insight. The cost of accelerated review is the suppression of findings that contradict established models, which exposes friction between discovery and validation as a hidden price of speed.