When Does AI Aid Exceed the Cost of Lifelong Learning for Humanities Scholars?

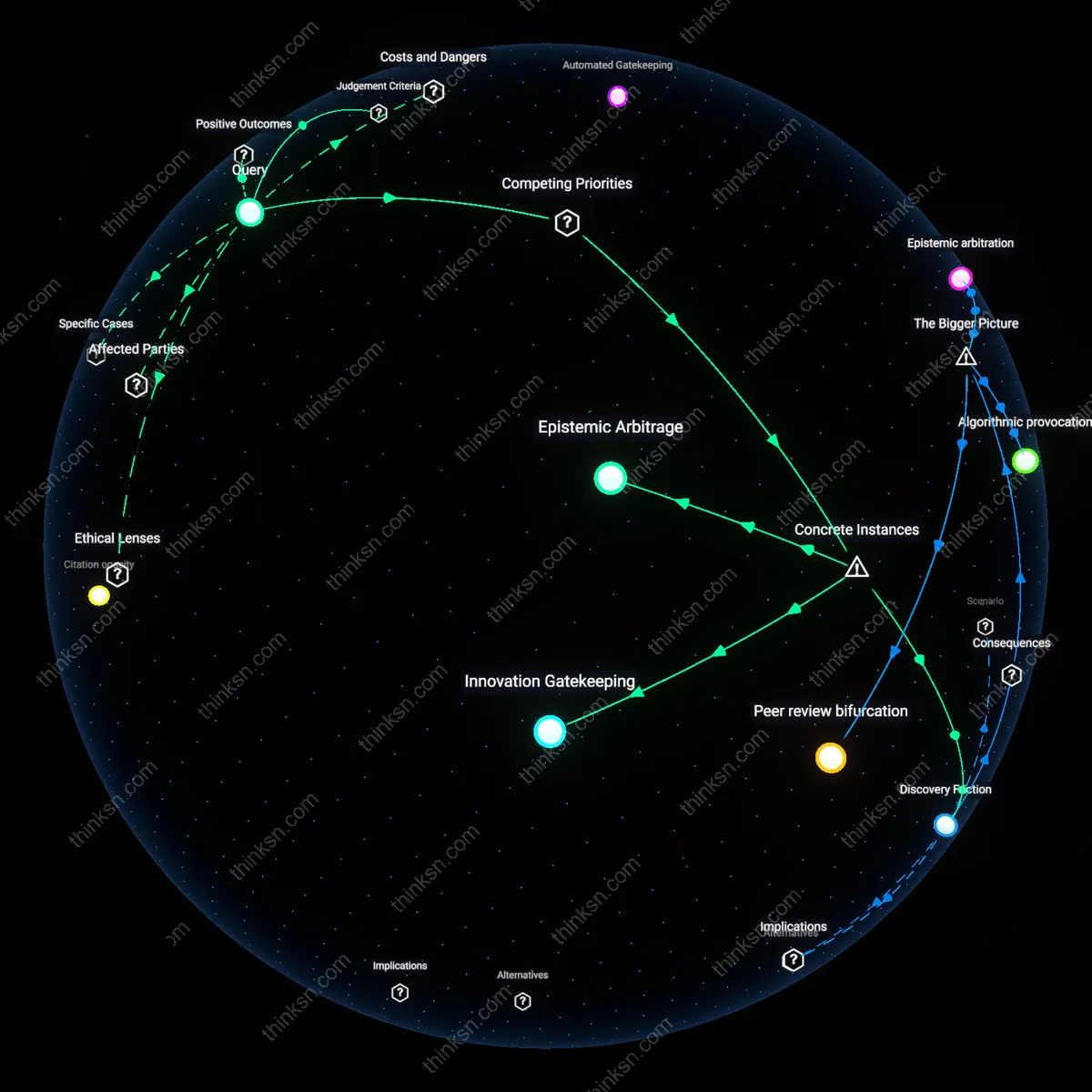

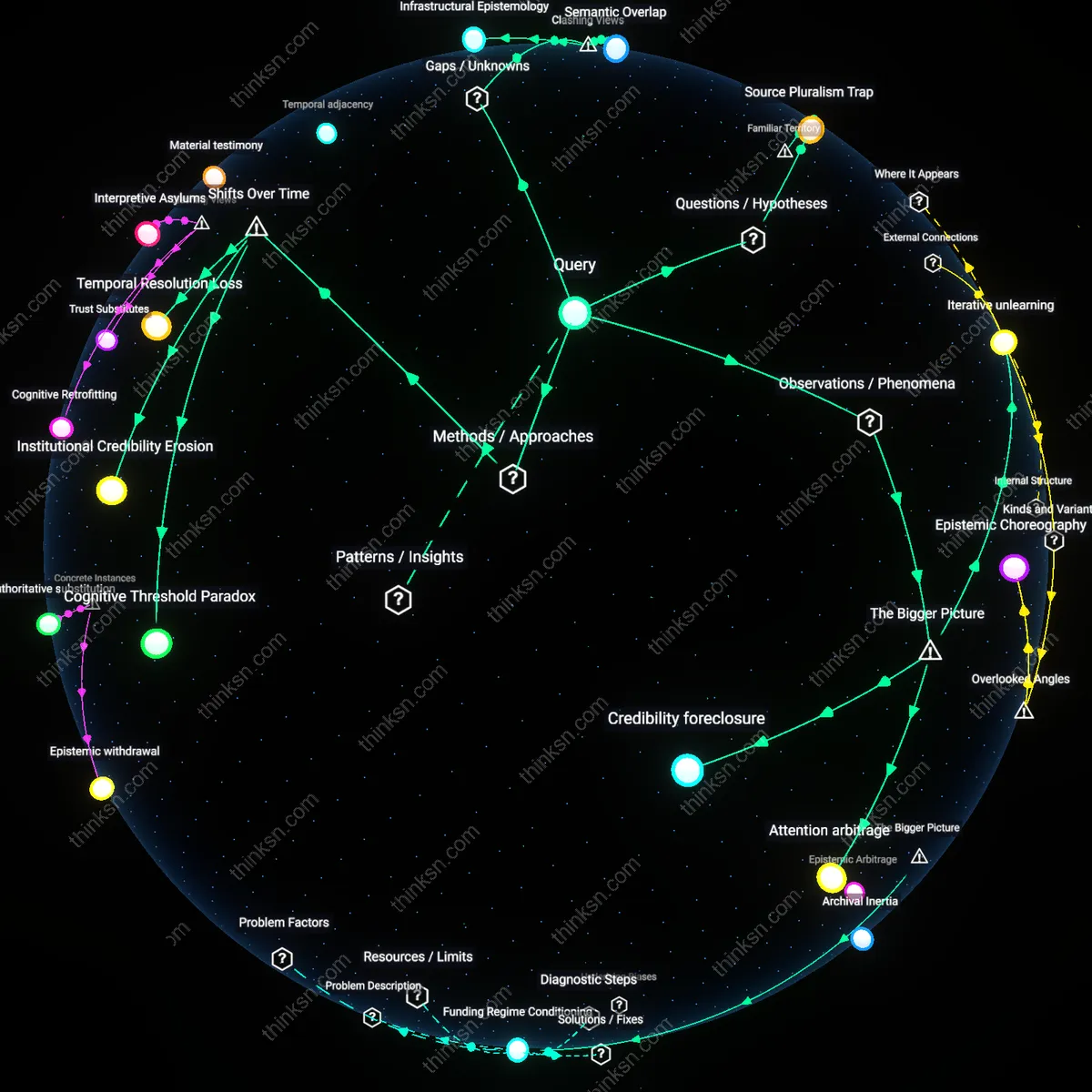

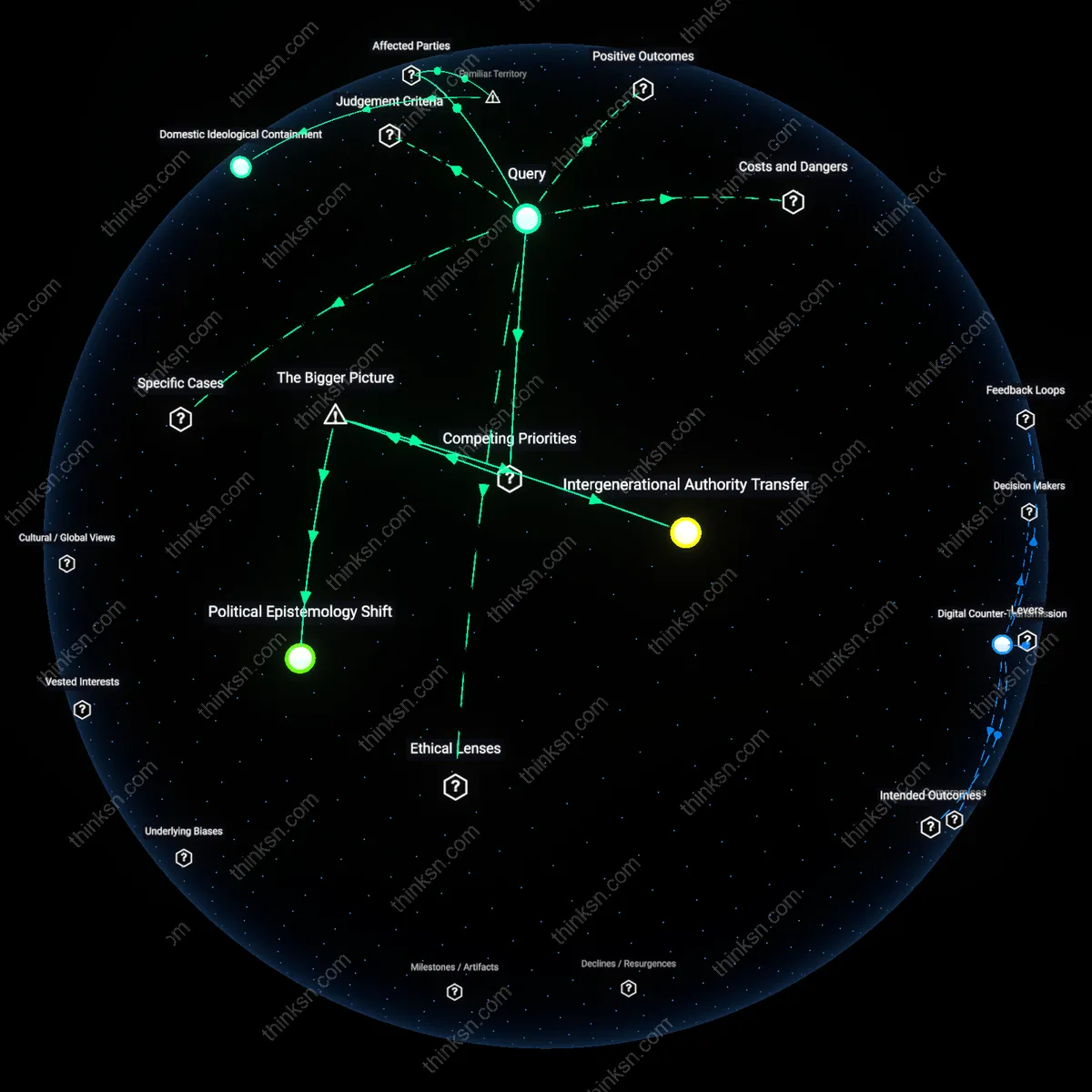

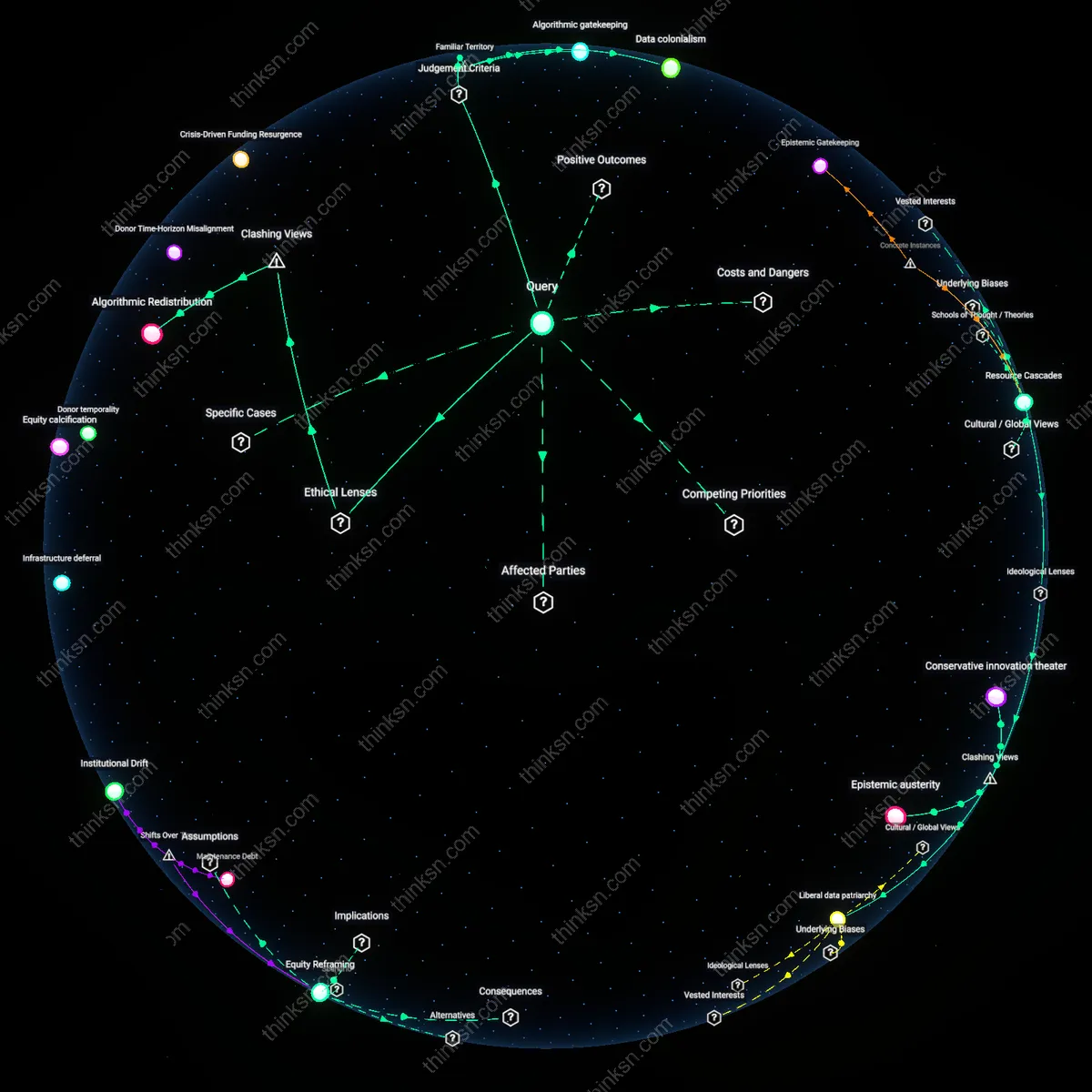

Analysis reveals 9 key thematic connections.

Key Findings

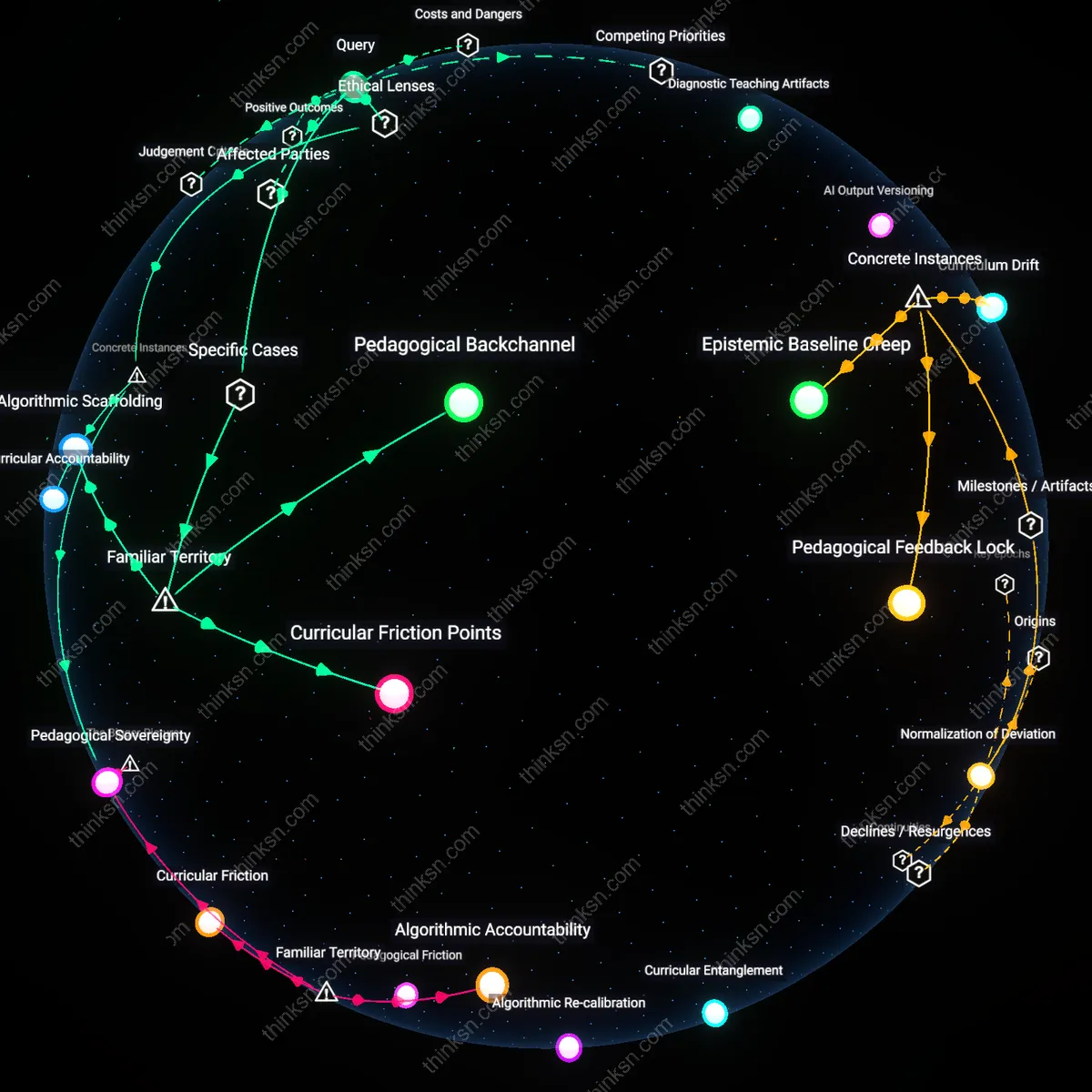

Methodological Inflection

The benefit of AI-enhanced research surpasses retraining costs for senior humanities academics when AI tools begin to reconfigure archival access around pattern discovery in post-2010 digital repositories, shifting their function from annotation aids to generative research partners. This shift occurs as institutions like the Stanford Literary Lab and the British Library integrate machine learning into corpus analysis, making previously intractable inquiries, such as transnational thematic diffusion in 19th-century print culture, empirically tractable. The non-obvious insight is that the value ceiling is set not by individual faculty adaptation but by infrastructural alignment between AI systems and long-form humanistic questions, which transforms retraining from a personal burden into a collective epistemological pivot.

Epistemic Threshold

The advantage of AI-enhanced research overtakes retraining costs in the mid-2020s, when longitudinal AI literacy programs, such as those piloted at the University of Chicago’s Humanities Research Center, reach a critical mass of participation among tenured faculty, enabling intergenerational research teams to co-produce scholarship using natural language processing on multilingual datasets. This transition marks a shift from ad hoc tool adoption to sustained methodological hybridization, in which the real mechanism is not skill mastery per se but the institutionalization of shared cognitive frameworks between computer scientists and humanities scholars. What is underappreciated is that the retraining cost curve flattens not through individual proficiency but through the emergence of bilingual mediating roles, such as digital curators and algorithmic ethnographers, who absorb translation work previously forced onto senior academics.

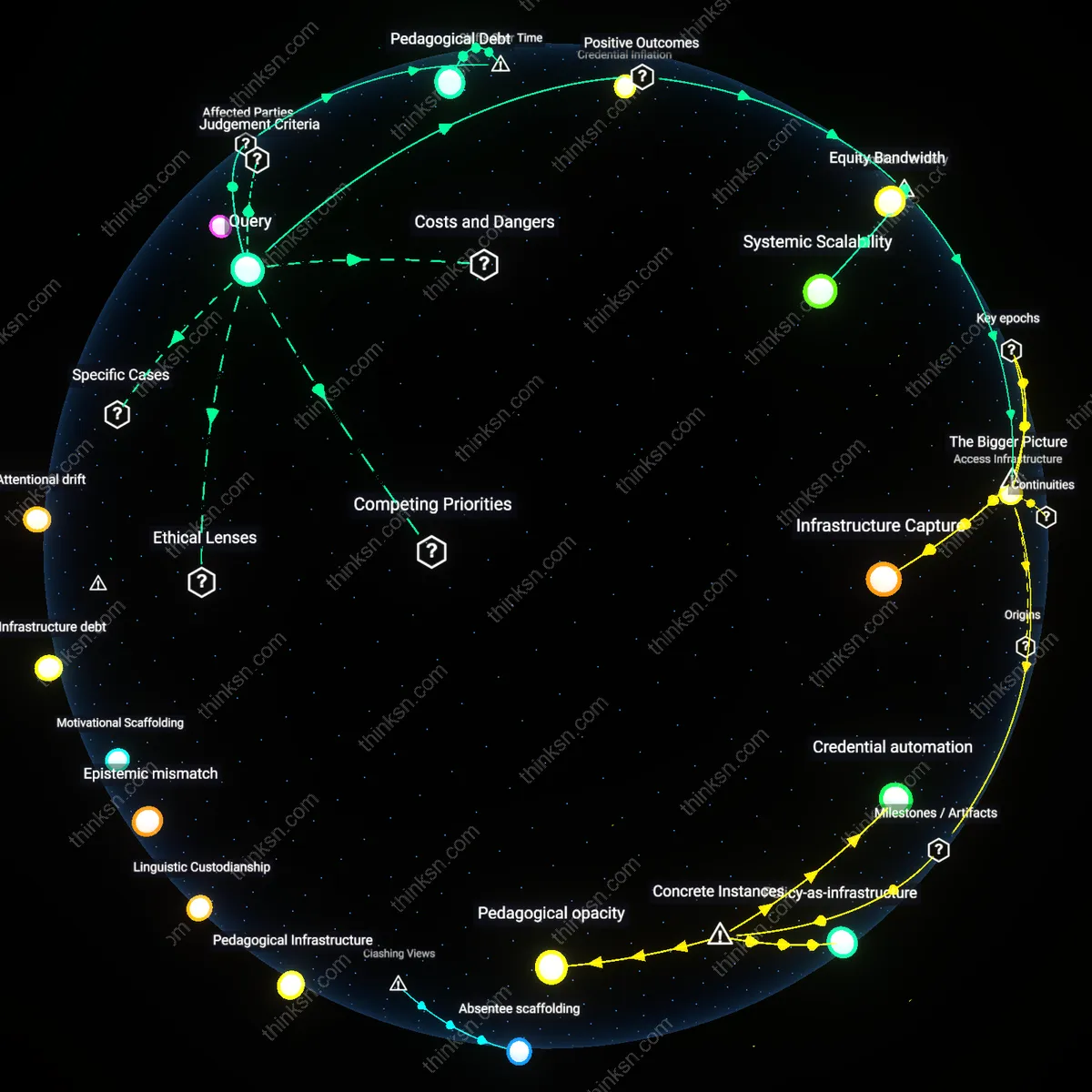

Generational Asymptote

The return on AI-enhanced research exceeds retraining costs around 2030, when the final cohort of pre-digital-era humanities scholars retires. Their departure removes the systemic resistance to AI integration that had previously inflated training expenditures through remedial onboarding and tool customization. This turning point is marked not by technological advancement alone but by the demographic exhaustion of scholars trained in exclusively print-based hermeneutics, which allows institutions such as the Modern Language Association to standardize AI-embedded workflows across conferences, peer review, and tenure criteria. The overlooked dynamic is that declining retraining costs are less a function of better pedagogy than of generational replacement, revealing retraining not as a continuous investment but as a one-time transitional friction amortized over time.

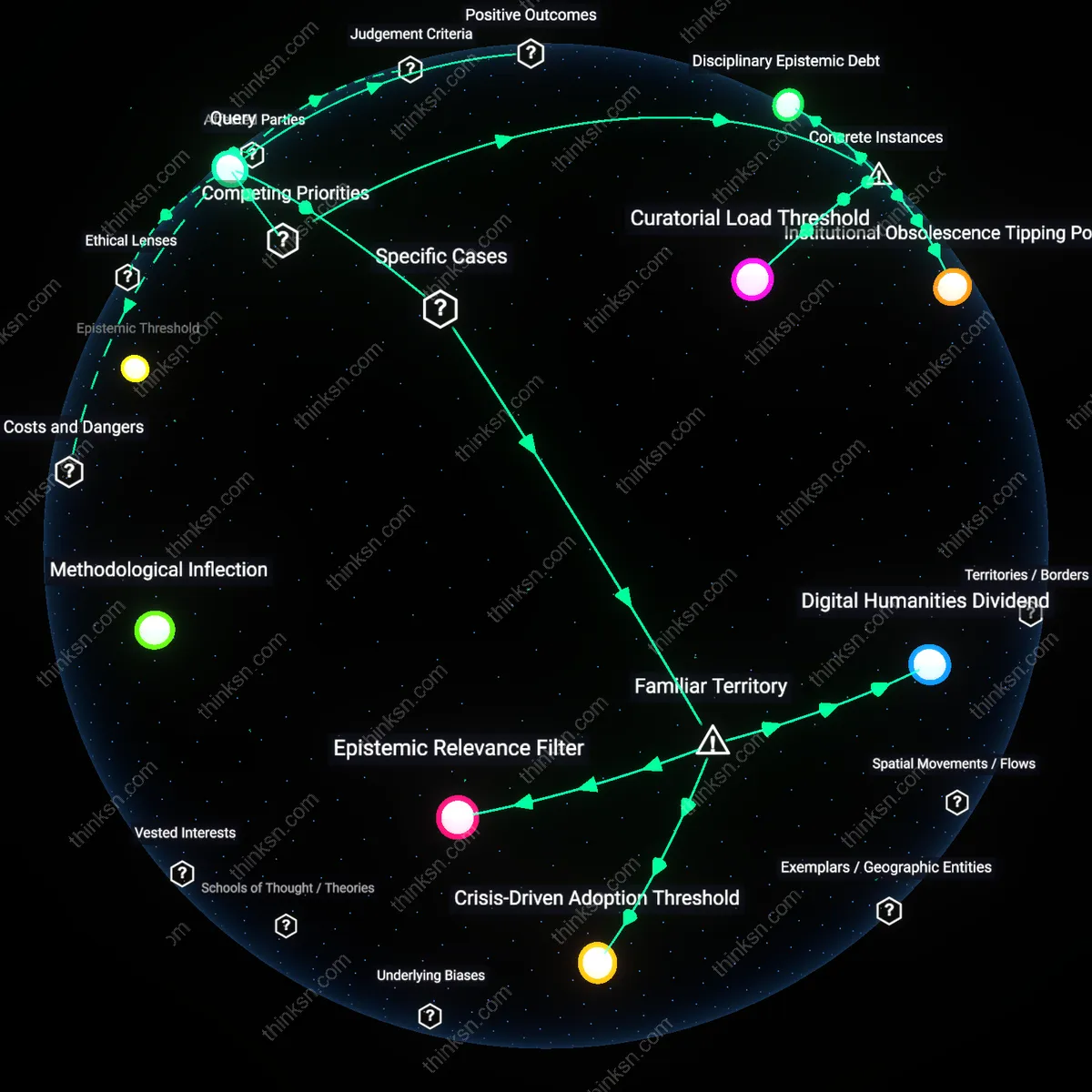

Curatorial Load Threshold

The benefit of AI-enhanced research exceeds retraining costs for senior humanities scholars when AI tools begin to reduce the curatorial load of managing vast, unstructured archives. The shift at the Herzog August Bibliothek in Wolfenbüttel illustrates this: early adoption of text-mining algorithms for 17th-century manuscript collections allowed retired philologists to contribute meaningfully without mastering query syntax, because they could delegate complex searches to curated pipelines. This mechanism works through institutional mediation, in which digital humanities staff translate scholarly intent into machine-executable tasks, making AI useful without requiring individual skill overhauls. The real cost barrier, on this account, is not technical learning but the time required to maintain dual competency in both source material and tooling.

Disciplinary Epistemic Debt

The benefit of AI-enhanced research surpasses retraining costs only when accumulated disciplinary obsolescence threatens a field’s legitimacy, as occurred in classical philology during the 2015 rollout of the Tesserae project at the University at Buffalo, where scholars unable to manually trace intertextual echoes across Latin and Greek corpora faced diminished publication leverage against quantitatively fluent peers. The project introduced accessible similarity-mapping tools that reduced the epistemic debt of older academics by offloading pattern recognition to algorithms while preserving interpretive authority. This demonstrates that retraining becomes justifiable not when tools improve but when non-adoption risks erasure from scholarly conversation.

Institutional Obsolescence Tipping Point

The inflection occurs when university departments face measurable enrollment collapse and administrative withdrawal, as exemplified by the closure of the University of Leicester’s Centre for New Literatures in 2021 amid pressure to rebrand into digital culture studies. There, senior faculty adopted AI-driven discourse analysis tools not to enhance research but to justify curricular continuity. The cost of retraining was absorbed as a survival imperative, with administrators mandating tool use in exchange for preserved FTE lines. This reveals that individual skill investment only outweighs cost when institutional extinction, not intellectual enhancement, is the proximate threat.

Digital Humanities Dividend

The benefit of AI-enhanced research exceeds retraining costs for senior humanities academics when their institutions centralize AI support within established digital humanities labs, such as at the University of Virginia’s Scholars’ Lab, where AI tools are embedded into existing workflows for textual analysis and historical mapping. This integration reduces individual skill acquisition pressure by shifting labor to shared technical staff and curated platforms, allowing senior scholars to leverage machine learning for pattern discovery in archival materials without mastering coding or algorithms. The non-obvious insight is that the value does not come from broad faculty retraining, but from institutionalizing AI as infrastructure within familiar collaborative units—units the humanities already trust.

Crisis-Driven Adoption Threshold

Senior humanities academics experience AI research benefits outweighing retraining costs only after a field-defining crisis, such as the sudden digitization of rare manuscript collections during the pandemic, forces reliance on automated transcription and metadata generation tools like those deployed by the Bibliothèque nationale de France. In such moments, the immediate necessity of accessing otherwise unusable materials overrides resistance to technical learning, and short-term training becomes justified by urgent scholarly survival. The overlooked mechanism is that habitual aversion to skill renewal reverses when the alternative is total exclusion from research participation, making crisis a covert enabler of sustainable AI integration.

Epistemic Relevance Filter

The benefit of AI-enhanced research surpasses retraining costs for senior humanities scholars at institutions like the University of Chicago’s Humanities Division when AI applications directly amplify interpretive debate, for example by using natural language processing to trace ideological shifts in 19th-century political tracts, thereby aligning technical gains with core disciplinary values of hermeneutic depth. Here, the determining condition is not tool sophistication but whether outputs feed recognizable scholarly conversation, allowing senior scholars to validate results through existing critical frameworks rather than new technical metrics. The underappreciated point is that skill retraining becomes cognitively affordable not when it is simplified, but when its outcomes are immediately contestable and meaningful within traditional academic discourse.