Should Regulators Tame YouTube’s Sensationalist Algorithm?

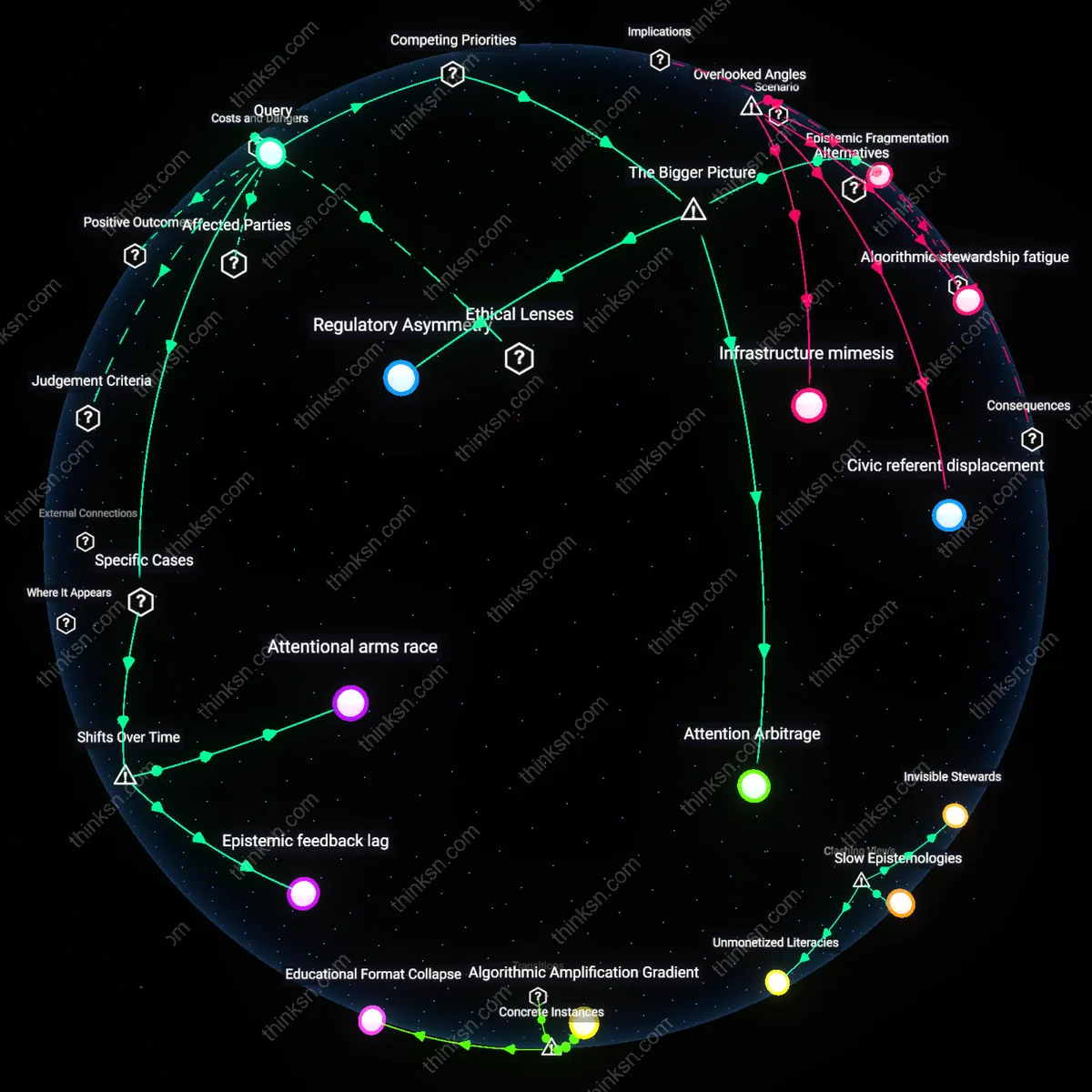

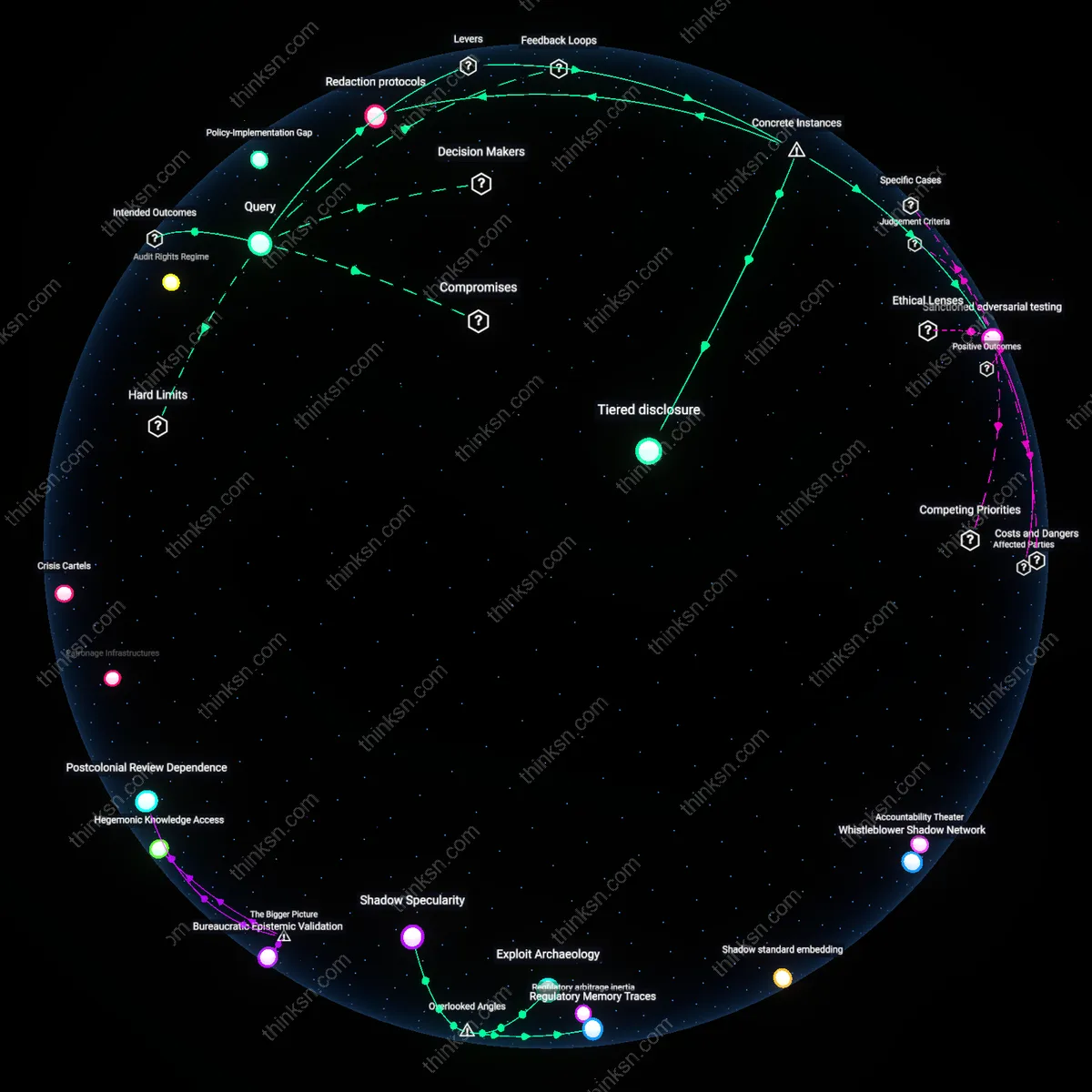

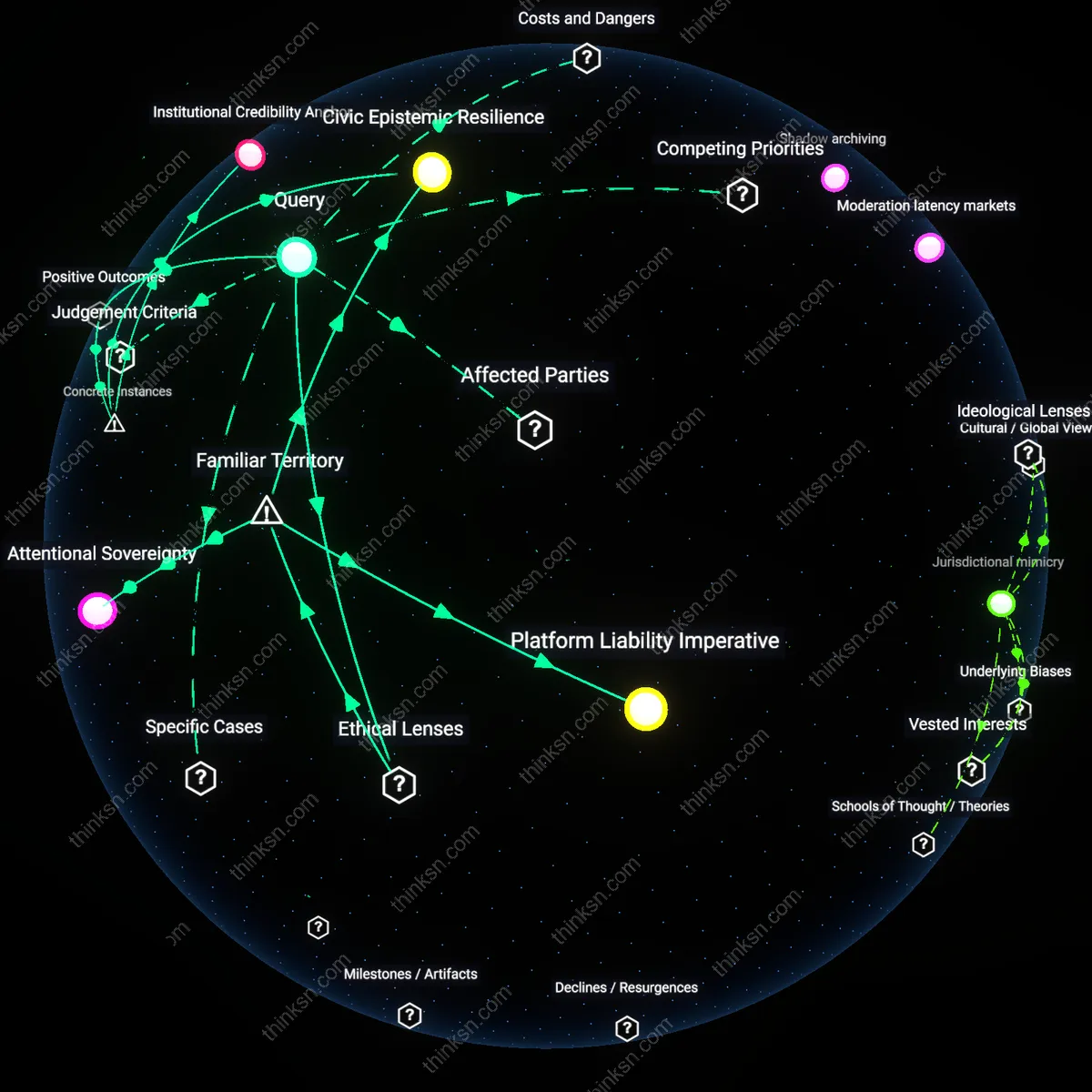

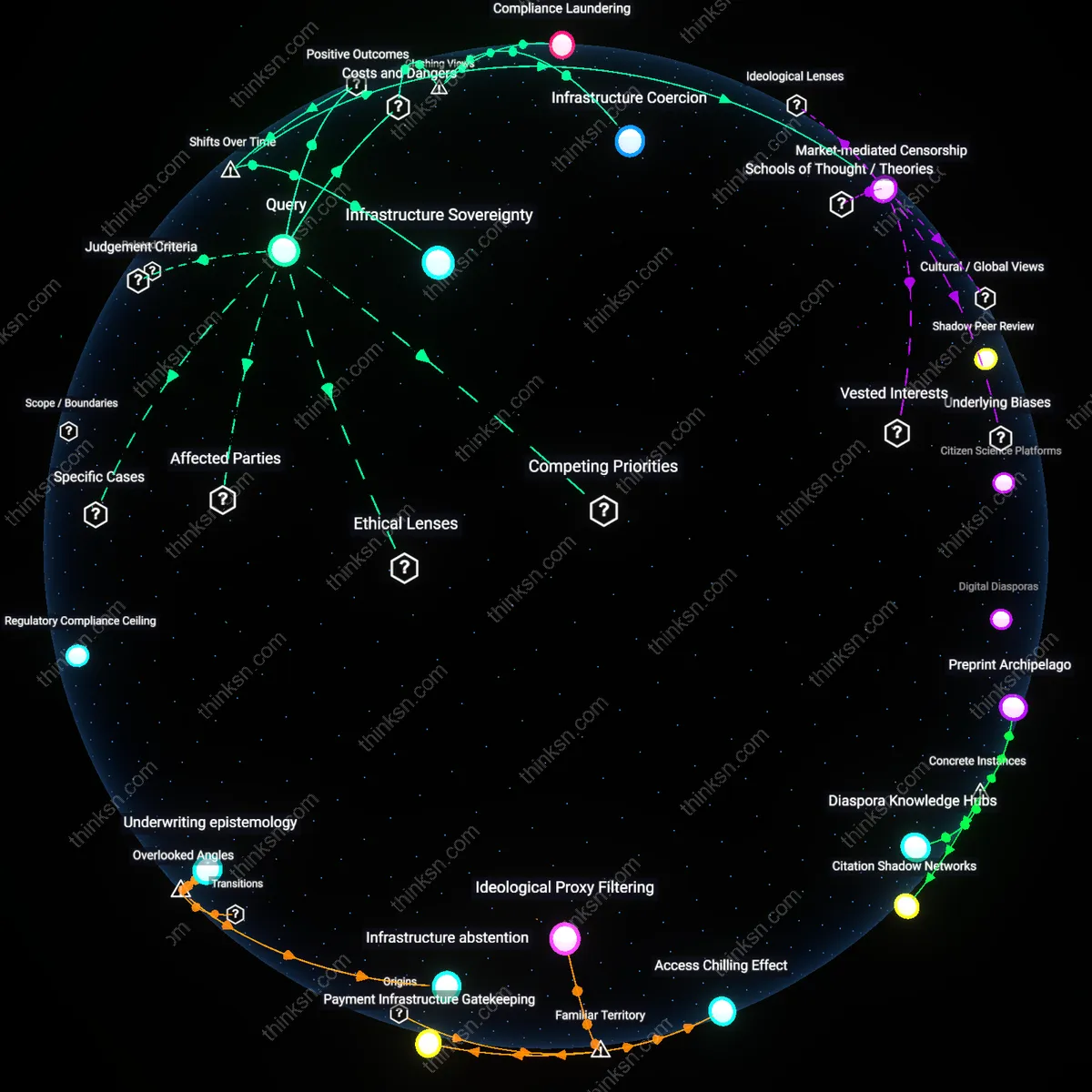

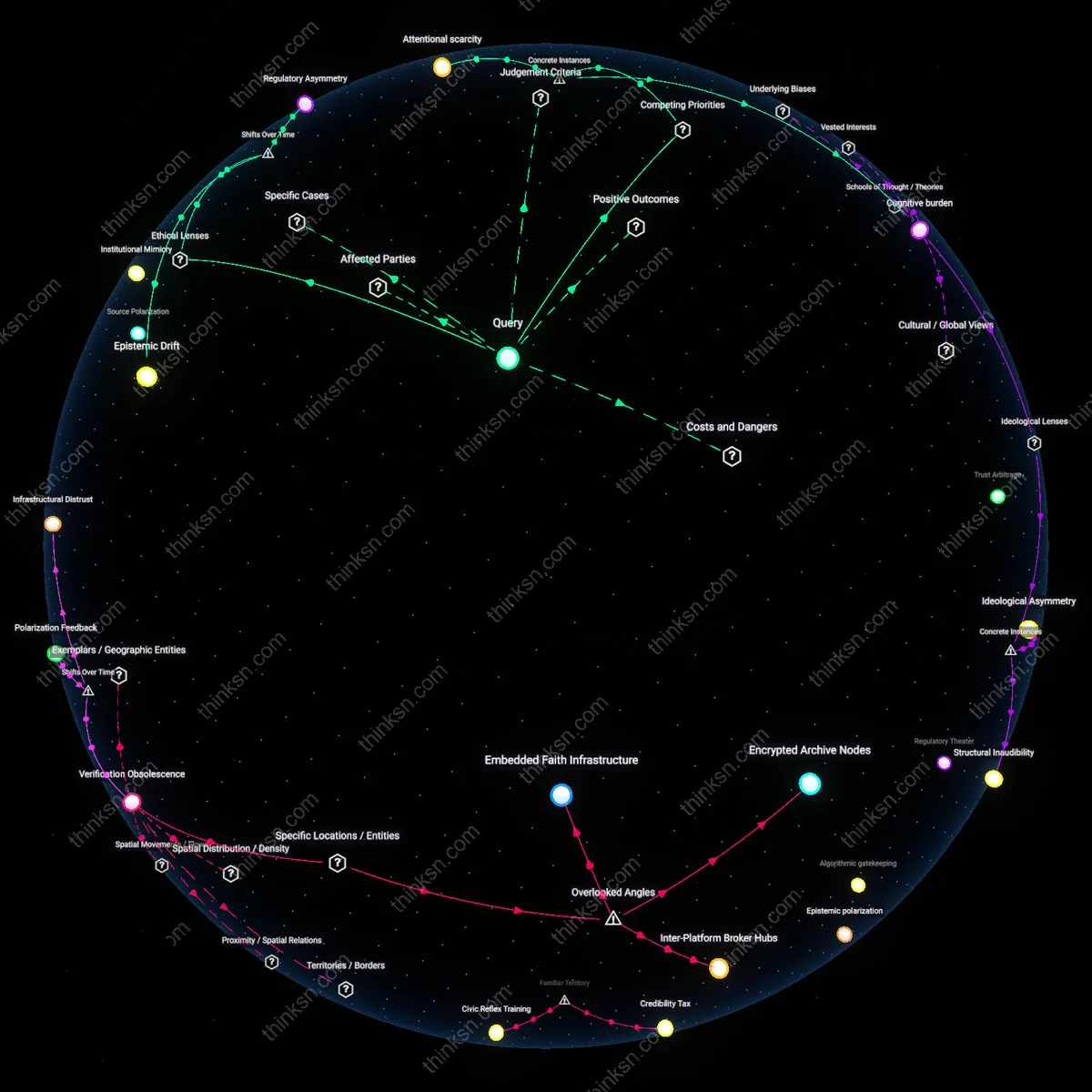

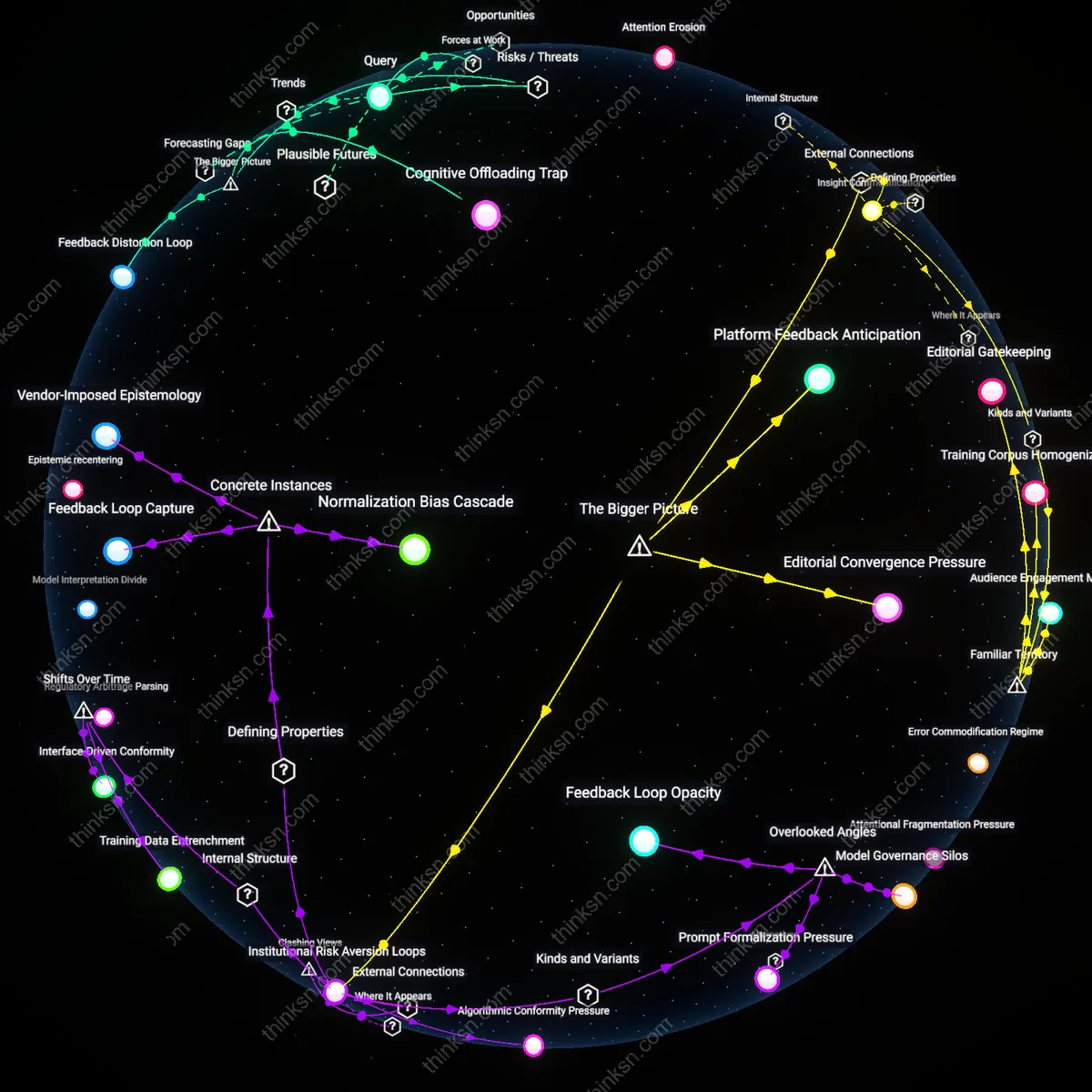

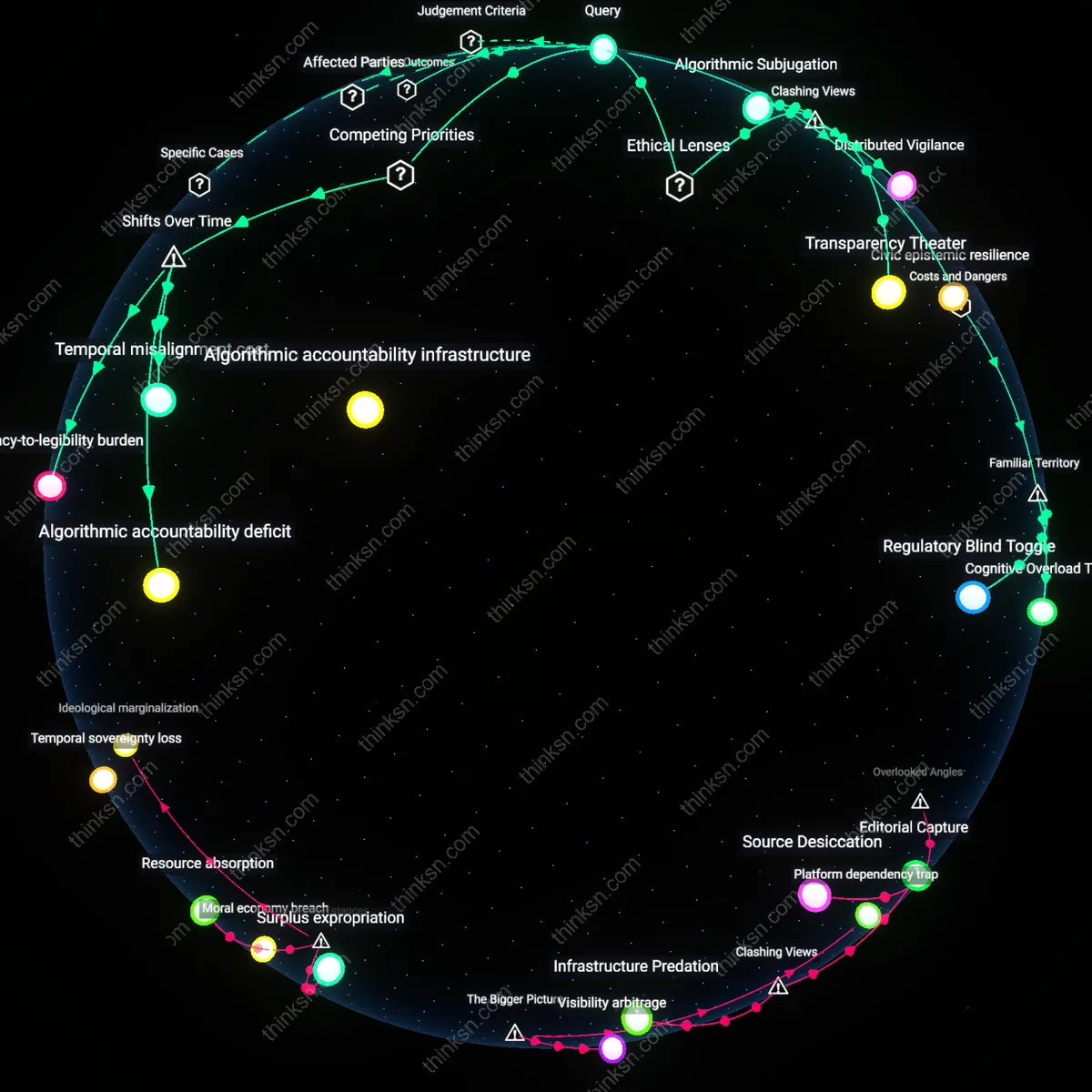

Analysis reveals 6 key thematic connections.

Key Findings

Epistemic Privatization

Regulators should intervene because YouTube’s recommendation engine functions as a privatized epistemic authority, displacing traditional institutions of knowledge validation without assuming their civic responsibilities. Unlike universities, media, or scientific bodies, the algorithm has no mandate to prioritize truth, coherence, or social utility—it selects based on retention metrics that favor emotionally charged, contestable claims, thereby privatizing the formation of public belief. This occurs through a distributed infrastructure of content ranking that mimics editorial judgment while disavowing accountability, replacing pluralistic discourse with a single, closed-loop logic trained on user exhaustion and dopamine cycles. The non-obvious implication is that the danger isn’t sensationalism per se, but the quiet substitution of a public epistemic commons with a corporate-controlled inference engine that operates without transparency or redress—transforming what we know into a byproduct of engagement optimization.

Attention Arbitrage

Regulators must refrain from intervening because YouTube’s business model is structurally dependent on maximizing user engagement through algorithmic amplification of emotionally charged content, and any regulatory constraint on recommendation logic directly undermines its competitive valuation in digital advertising markets. The platform’s reliance on real-time A/B testing to optimize for watch time creates a feedback loop in which marginal increases in sensationalism yield disproportionate gains in ad revenue, locking engineers into a design paradigm that prioritizes reactivity over accuracy. The absence of enforceable harm thresholds in Section 230 jurisprudence leaves that loop unchecked. This dynamic reveals the non-obvious reality that intervention is not just a technical fix but a structural challenge to platform economics.

Epistemic Fragmentation

Regulators should intervene because YouTube’s recommendation engine functions as a de facto public knowledge infrastructure, and its unregulated amplification of contested claims accelerates the systemic divergence of belief clusters across demographic and geographic lines. The algorithm’s tendency to prioritize novelty and outrage—operating through user-specific retention models trained on billions of interactions—enables politically salient misinformation to propagate faster than community-level verification mechanisms can respond, especially in non-urban, low-media-diversity regions of the U.S. South and Midwest. The underappreciated consequence is that platform-driven epistemic drift weakens shared civic epistemologies, making democratic consensus a casualty of engagement-optimized AI systems.

Regulatory Asymmetry

Regulators cannot effectively intervene because the speed and opacity of YouTube’s recommendation algorithms outpace the procedural capacity of institutions designed for rule-based oversight, creating a persistent lag in accountability that advantages platform autonomy. The Federal Trade Commission and similar bodies rely on post-hoc investigations and retrospective harm assessments, while recommendation models, driven by decentralized teams in Mountain View and Dublin, retrain continuously on behavioral datasets that evolve faster than legislative cycles can track. The overlooked systemic truth is that algorithmic scale and bureaucratic inertia are asymmetric forces, making harm containment structurally elusive even when political will exists.

Attentional Arms Race

Regulatory intervention is necessary because YouTube’s recommendation engine became, after 2016, a systemic inducement to escalating sensationalism. The shift is observable in the trajectory of channels like Logan Paul’s vlogs or Shane Dawson’s docu-series, where narrative complexity was rewarded only insofar as it retained viewer attention through emotional exaggeration. As watch time became the dominant metric for algorithmic promotion, creators and their production teams adapted by embedding cliffhangers, moral ambiguity, and speculative claims within ostensibly factual content, shifting the platform’s epistemic baseline toward performative controversy. The pivotal change, from user upload as self-expression to content as algorithmic negotiation, reveals that the engine does not merely reflect user preference but produces it through competitive feedback loops, making harm an emergent property of the attentional market itself, not of individual intent.

Epistemic Feedback Lag

Regulators should intervene because YouTube’s recommendation system operates on a timescale that outpaces institutional verification. This was particularly evident during the 2020 U.S. election cycle and the subsequent proliferation of false claims about voting machines linked to figures like Rudy Giuliani: the machinery of fact-checking and democratic consensus cannot react within the seven-to-fourteen-day window in which recommended content peaks in visibility. This temporal misalignment, in which algorithmic velocity exceeds epistemic validation, was not inherent in YouTube’s early architecture (pre-2015), when recommendation played a minor role relative to direct search and subscription traffic; it emerged as the platform centralized discovery around machine learning models trained on short-term engagement signals. The underappreciated consequence is that contested evidence is no longer merely spread but structurally favored, because verification inherently requires delay and the system interprets such delay as disinterest.
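The timing argument can be made concrete with a toy calculation. The sketch below is illustrative only: the daily view counts are invented numbers chosen so that visibility peaks in the second week, and the function name `share_of_views_before_check` is hypothetical. It does not model YouTube’s actual system; it only shows how much of a video’s audience could already have been reached by the time a fact-check arrives.

```python
def share_of_views_before_check(daily_views, check_day):
    """Return the fraction of all views accrued before `check_day`.

    `daily_views` is a list of view counts per day (day 0 first).
    `check_day` is the day index on which a fact-check is published.
    """
    total = sum(daily_views)
    if total == 0:
        return 0.0
    return sum(daily_views[:check_day]) / total


# Hypothetical view curve (arbitrary units), peaking around days 7-8.
daily_views = [1, 2, 4, 6, 8, 10, 12, 14, 14, 12,
               10, 8, 6, 4, 3, 2, 1, 1, 1, 1]

for day in (7, 14):
    share = share_of_views_before_check(daily_views, day)
    print(f"fact-check on day {day}: {share:.1%} of views already served")
```

Under these assumed numbers, a fact-check published on day 14 arrives after more than nine-tenths of the views have been served, while one published on day 7 would still precede most of them. The real shape of the curve is an empirical question the source does not quantify.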