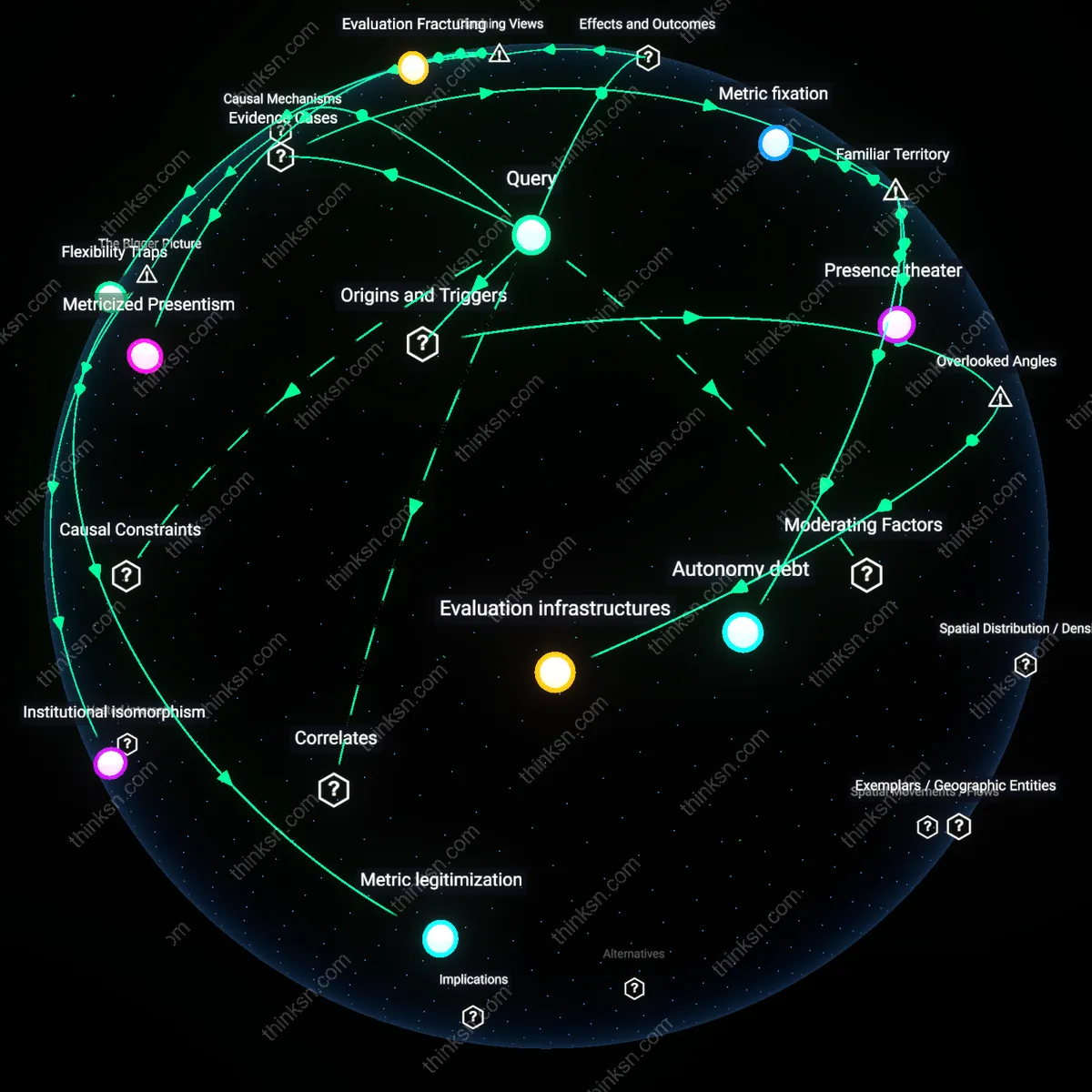

Is Workplace Efficiency Worth the Cost of Privacy?

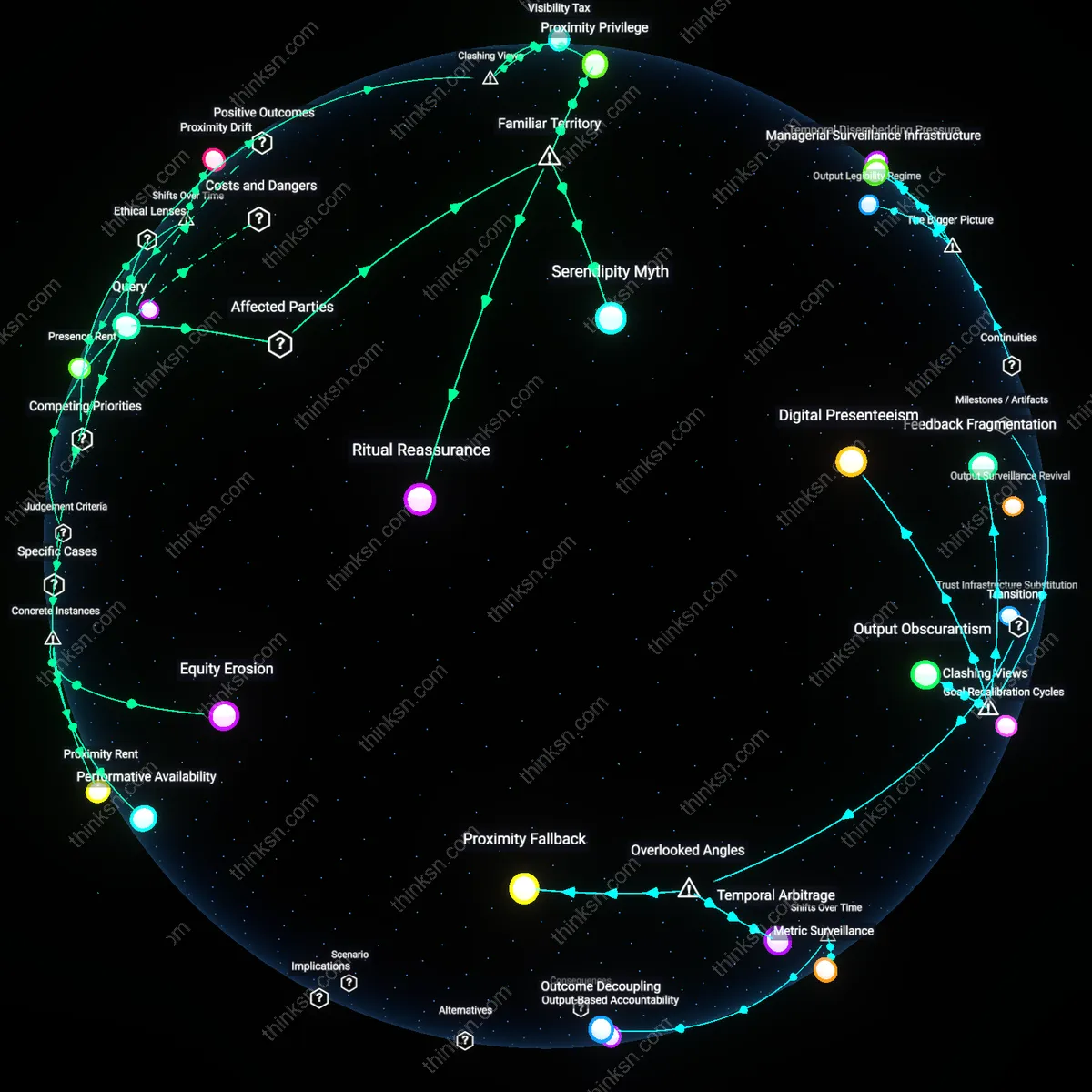

Analysis reveals 4 key thematic connections.

Key Findings

Surveillance Meritocracy

Yes, workplace productivity tracking through keystroke monitoring justifies the loss of employee privacy because it operationalizes a utilitarian ethic in which collective organizational efficiency outweighs individual privacy interests. In corporate environments governed by performance metrics, particularly in digital labor sectors like customer support or software development, keystroke data enables management to allocate resources, identify bottlenecks, and optimize workflows with a precision unattainable through observational methods. This system aligns with rule utilitarianism, where the consistent application of monitoring rules produces better long-term outcomes for productivity and economic output, thereby morally justifying intrusions into personal autonomy. The non-obvious insight is that privacy is not eradicated but renegotiated as a conditional privilege tied to measurable contribution, revealing a shift from rights-based to output-based ethical recognition.

Metric fixation

Keystroke monitoring at companies like Crossover for Work justifies privacy erosion by equating measurable input with productivity, despite the flawed assumption that typing frequency correlates with output quality. This practice is driven by investors and platform managers who demand quantifiable performance indicators, replacing professional judgment with algorithmic oversight. The systemic pressure to represent labor as auditable data in global remote-work platforms privileges visibility over value, making measurable activity — however superficial — the basis for retention and pay. What is underappreciated is that the justification for privacy loss does not stem from operational necessity but from financial demands for standardized, real-time performance metrics across dispersed teams.

Surveillance infrastructure

At the Indian IT services firm Wipro, keystroke tracking was integrated into employee performance dashboards during the pandemic to manage remote work, normalizing continuous behavioral surveillance under the banner of productivity assurance. This expansion was made possible by pre-existing procurement agreements with monitoring software providers like Teramind, turning temporary remote-work adaptations into permanent surveillance infrastructure. The deeper systemic issue is that once surveillance is embedded in enterprise IT ecosystems, it outlives its initial rationale due to integration costs and administrative inertia, shifting the balance of power toward automated oversight regardless of actual productivity impact.

Trust deflation

The implementation of keystroke logging at remote-first U.S. startups such as Automattic subsidiaries signals a structural decline in managerial trust, where productivity is no longer presumed but must be continuously proven through digital traces. This deflation of trust is driven by venture capital expectations to scale quickly while minimizing human resource risks, transforming employees into data streams subject to constant validation. In this system, the act of monitoring becomes a substitute for institutional trust, reshaping workplace culture around suspicion rather than autonomy. The critical insight is that surveillance in high-growth tech environments functions primarily as a ritual of accountability to investors, not as a response to actual productivity deficits.

Deeper Analysis

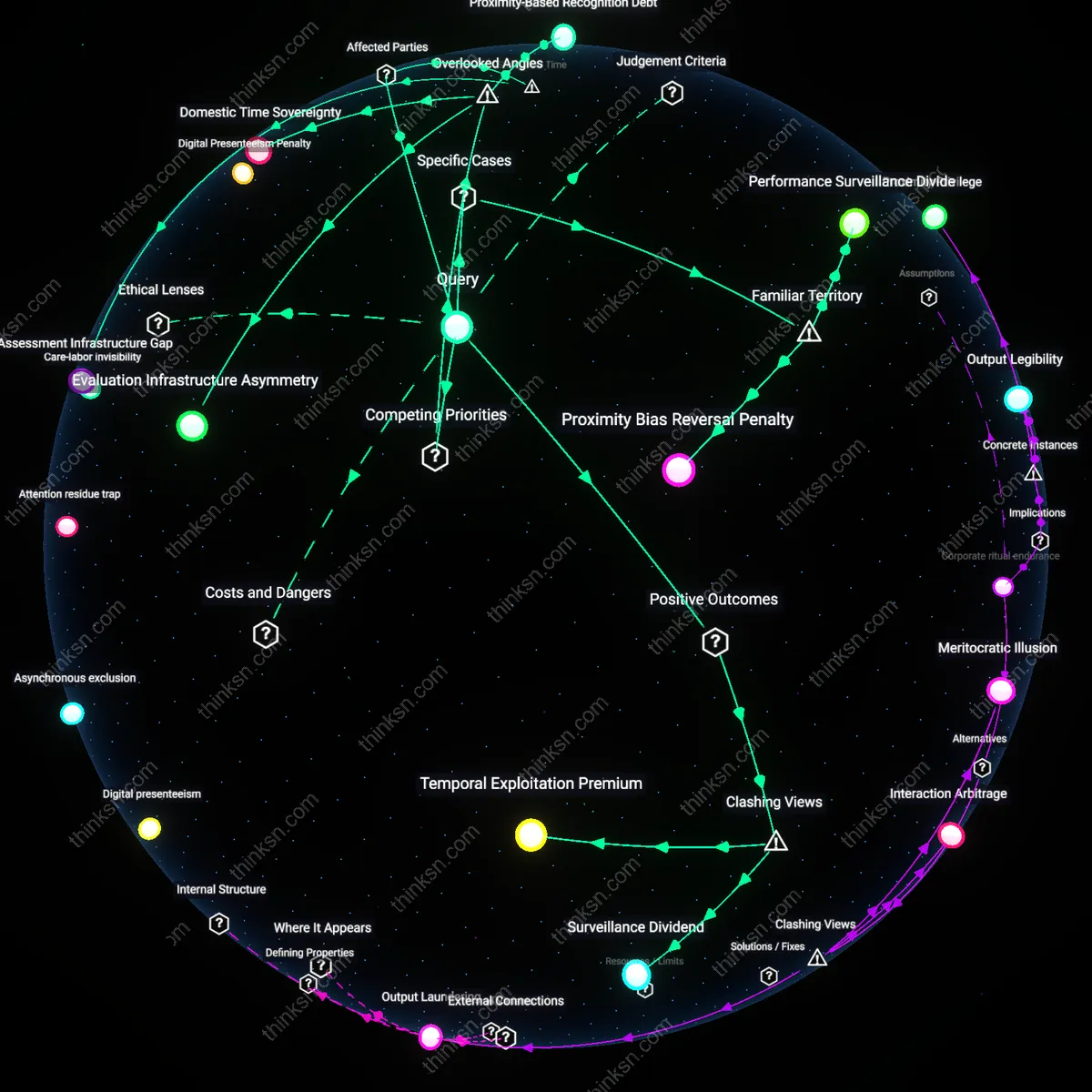

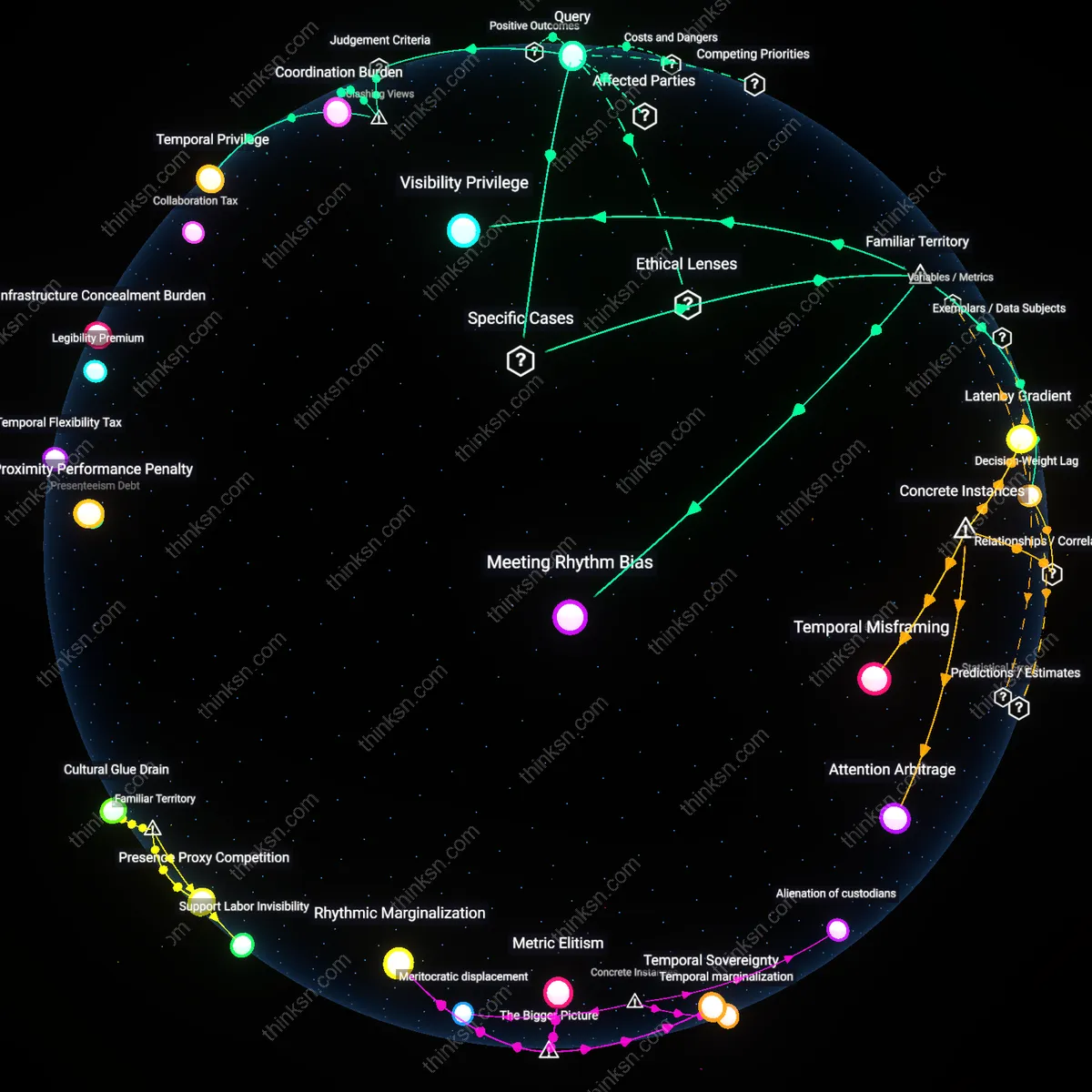

If privacy now depends on how much you produce, what happens to workers whose roles can't be measured by keystrokes?

Temporal opacity

Workers in crisis response roles, such as school social workers or emergency nurses, maintain privacy not through data minimization but through the inherent unpredictability of their time-use, which resists algorithmic scheduling and output tracking. Because their value emerges in rare, high-stakes interventions that cannot be averaged into daily metrics, their unmeasurable rhythms create a form of protective ambiguity that deflects constant surveillance. The overlooked dynamic is that irregular temporal patterns function as a stealth privacy shield—systems avoid monitoring what they cannot benchmark, privileging predictable, measurable labor for both oversight and intrusion.

Infrastructural legibility

In municipal school districts using centralized HR analytics platforms, roles involving physical mobility—like maintenance supervisors or cafeteria managers—are increasingly subjected to GPS-tagged check-ins and task logging, not because their productivity demands it, but because the software’s design assumes legibility as a prerequisite for recognition. When institutional systems equate traceability with validity, untracked labor becomes administratively invisible and thus vulnerable to budget cuts or surveillance upgrades that reframe oversight as inclusion. The hidden dependency is that privacy is eroded not by malicious intent but by software architecture that treats measurable behavior as the price of organizational belonging.

Digital Labor Precariat

Workers whose value is reduced to measurable digital outputs are discarded from the privacy economy when their roles resist quantification. This exclusion operates through corporate data regimes that privilege algorithmic visibility, rendering teachers, nurses, and other qualitative laborers invisible to privacy-as-credit systems. The non-obvious truth here, the lie beneath familiar meritocratic narratives, is that privacy has become a dividend of data productivity, not a right, and the quiet erasure of unquantified work frames a new underclass.

Surveillance Meritocracy

Liberal technocracies reward measurable output with privacy concessions, framing data transparency as the price of participation. In this system, software engineers gain encrypted sanctuaries while warehouse packers are subjected to biometric surveillance, revealing a hierarchy where privacy is earned through data-generating behaviors. What feels familiar—the idea of earning rights through work—is weaponized here, turning workplace surveillance into a ladder one climbs by becoming more machine-readable.

Labor Dignity Divide

The erosion of unquantifiable labor’s moral worth in Western industrial cultures after the 1970s transformed privacy from a universal right into a privilege of productive visibility. As managerial capitalism adopted digital surveillance to monetize output metrics, clerical and service workers in North America and Western Europe found their privacy eroded not through overt coercion but through exclusion from recognition—those whose labor could not be rendered in data streams were treated as idle by default, a shift starkly visible in the post-Fordist restructuring of office work. This reframing obscured the cultural assumption that only measurable contribution merits autonomy, revealing how Protestant-derived work ethics merged with neoliberal governance to delegitimize non-instrumental human presence in the workplace.

Soultime Residue

In contrast to Western productivity regimes, East Asian Confucian-influenced workplaces in Japan and South Korea preserved collective privacy norms into the early 2000s by anchoring dignity to duration and devotion rather than discrete output. The implicit social contract of *service as loyalty*—whereby workers exchanged long hours not for tracked performance but for lifetime employment and group harmony—delayed the penetration of keystroke-style monitoring even as digital tools spread. This continuity, ruptured only during the 1997 Asian Financial Crisis and subsequent IMF-led corporatization, exposed how moral economies of obligation once absorbed surveillance pressures by treating time itself as a form of production invisible to Western metrics.

Spiritual Data Debt

The rise of platform economies in post-1990s India revealed a stratified spiritual economy of privacy, where gig workers serving Western digital markets entered a karmic deficit by surrendering personal data without reciprocal recognition. Unlike agrarian Jain or Gandhian traditions that equated self-restraint with spiritual sovereignty, new tech-driven labor patterns reframed invisibility not as virtue but as vulnerability—drivers, coders, and moderators in Bangalore or Hyderabad found their unmeasured hours treated as disposable, a condition accelerated not by local values but by global data feudalism. This disjuncture exposed how colonial patterns of extraction re-emerged in algorithmic form, where moral worth became hostage to traceable output, severing labor from lineage-based dignity systems.

Metrics Resistance Underground

Labor activists in sectors like home health care and adjunct education are reframing privacy as a collective weapon by refusing to produce trackable data, deliberately underutilizing monitoring tools or falsifying inputs to destabilize algorithmic oversight, thus asserting autonomy through strategic invisibility; this subversion challenges the assumption that privacy loss is inevitable for low-measurability workers by showing that opacity can be organized, not accidental. The non-obvious insight is that unmeasurable labor does not passively lose privacy—it can actively weaponize its elusiveness, constructing privacy not as withdrawal but as sabotage of the measurement economy itself.

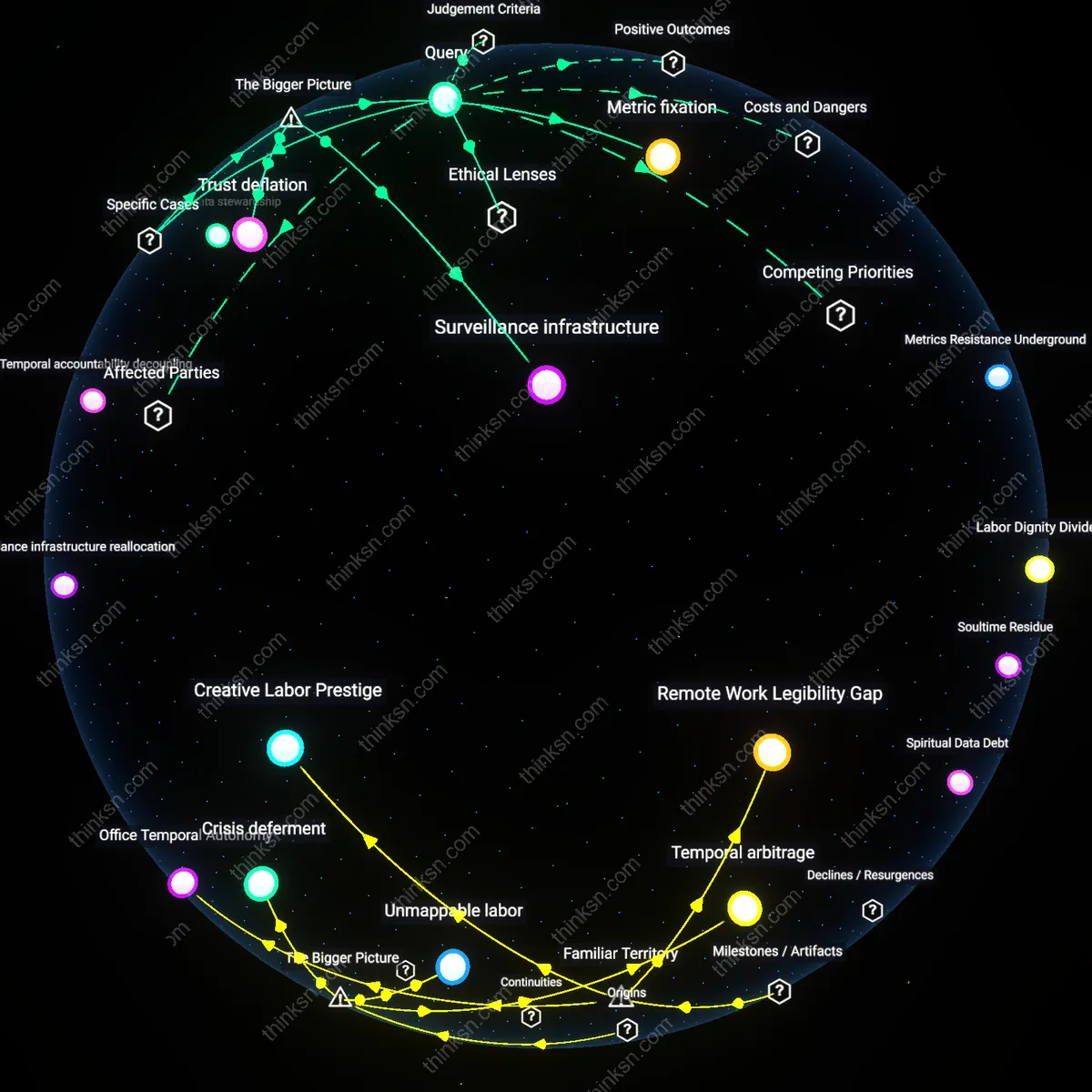

How did unpredictable work patterns come to shield certain jobs from being monitored over time?

Temporal arbitrage

Unpredictable work patterns emerged in post-industrial service economies during the 1980s in cities like London and New York, where deregulation and the decline of fixed-hour employment in finance and consulting created roles requiring irregular, on-call availability. High-value professionals such as mergers-and-acquisitions advisors or crisis PR consultants structured their time around volatile client demands rather than fixed schedules, making standardized performance metrics irrelevant—not because oversight was absent, but because productivity was measured in crisis resolution rather than observable labor. This exception to time-based monitoring was accepted as efficiency, institutionalizing temporal unpredictability as a proxy for elite status, a move obscured by equating visibility with value. The underappreciated dynamic here is that irregular hours were not loopholes but deliberate markers of indispensability, enabling selective autonomy under capitalism. This normalization of erratic schedules in high-stakes sectors created a legitimizing model later adopted by gig platforms to justify opaque algorithmic management—thus originating the residual concept of temporal arbitrage.

Unmappable labor

The rise of unpredictable work patterns as shields against monitoring began in remote maritime and extractive industries in the 20th century, where geographically isolated crews, such as North Sea oil rig workers or Pacific tuna longliners, operated beyond real-time supervision due to communication lags and harsh conditions. Management responded not with rigid schedules but with outcome-based evaluations, emphasizing results like barrels extracted or tons caught, allowing irregular rhythms to become institutionally entrenched as necessary adaptations rather than deviations. This created a precedent where spatial inaccessibility legitimized temporal unpredictability, redefining the absence of oversight as operational necessity rather than resistance. The underappreciated consequence is that systemic remoteness became a blueprint for later justifications of unmonitored labor in digital nomadism and decentralized tech teams, revealing how physical disconnection from command centers historically enabled autonomy in time use, thus producing the concept of unmappable labor.

Crisis deferment

Unpredictable work patterns gained protective status in Cold War–era scientific and intelligence communities, particularly in U.S. nuclear weapons labs like Los Alamos and NSA research divisions, where the imperative to solve unforeseeable technical failures or decode emergent threats rendered standard time tracking counterproductive. Scientists and cryptographers were granted temporal autonomy not as privilege but as operational necessity, since breakthroughs could not be scheduled and monitoring was seen as disruptive to cognitive flow, thus embedding unpredictability into the professional ethic of critical innovation roles. This carved out an exception where output legitimacy overruled process scrutiny, justified by existential risk—if a physicist missed a deadline but averted a reactor meltdown, oversight was retrospectively invalidated. The underappreciated insight is that the state’s tolerance for erratic rhythms in these domains was not indifference but strategic investment in delay-resistant problem-solving, establishing crisis deferment as a systemic alibi for shielding cognitive labor from routine monitoring.

Office Temporal Autonomy

The rise of salaried professional roles in mid-20th century corporate offices allowed employees to escape time-based oversight by normalizing output-based evaluation. Managers in firms like IBM and General Motors shifted from industrial-era punch-clock discipline to trusting white-collar workers to regulate their own schedules, as long as deliverables were met—this created a cultural precedent where unpredictable hours became a badge of responsibility rather than evasion. The non-obvious consequence was that temporal unpredictability, once a sign of low oversight in manual labor, was revalued as a marker of elite work status, insulating knowledge jobs from later surveillance trends.

Creative Labor Prestige

The institutionalization of ‘creative genius’ as a cultural ideal in postwar advertising and media industries excused erratic work patterns by framing them as essential to innovation. Creatives at Doyle Dane Bernbach and, later, Steve Jobs at Apple popularized the image of the visionary working outside routine schedules, making irregular hours a legitimizing trait rather than a monitoring red flag. This association seeped into public consciousness through biographies, corporate mythmaking, and television, normalizing unpredictability as a necessary condition for high-value ideation and thus shielding design, tech, and marketing roles from standardized time audits.

Remote Work Legibility Gap

The rapid adoption of home-based work during the 2020 pandemic lockdowns severed physical surveillance mechanisms, forcing companies to rely on self-reported logs and digital activity proxies that were easily gamed or obscured. Tools like Zoom and Slack became artifacts of presence rather than productivity, privileging workers who could simulate availability while maintaining opaque, irregular rhythms—especially in roles like software development or academic research. The overlooked reality is that the sudden collapse of spatial oversight made unpredictability a structural advantage, not just a personal habit, allowing certain knowledge workers to evade temporal scrutiny under the guise of technical necessity.

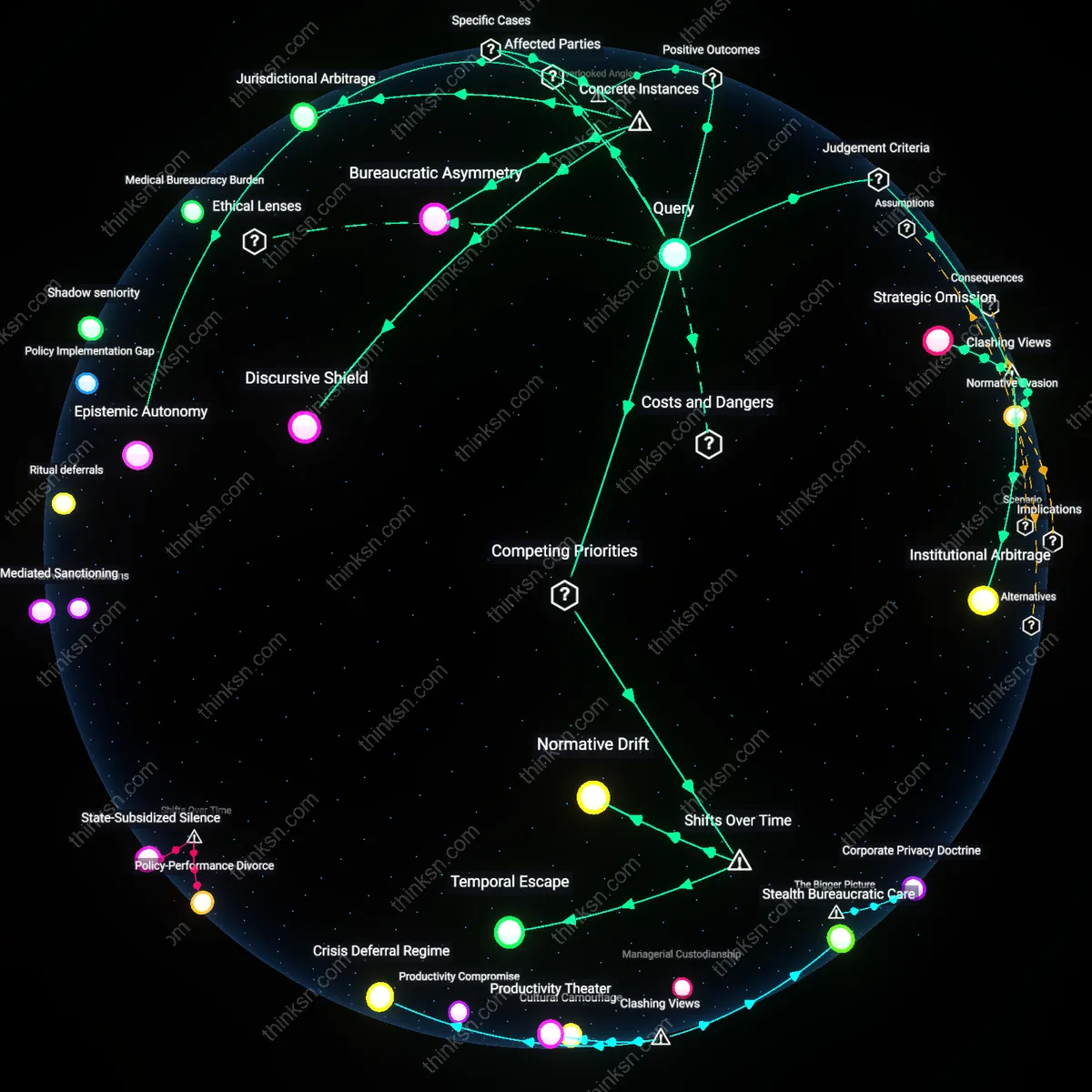

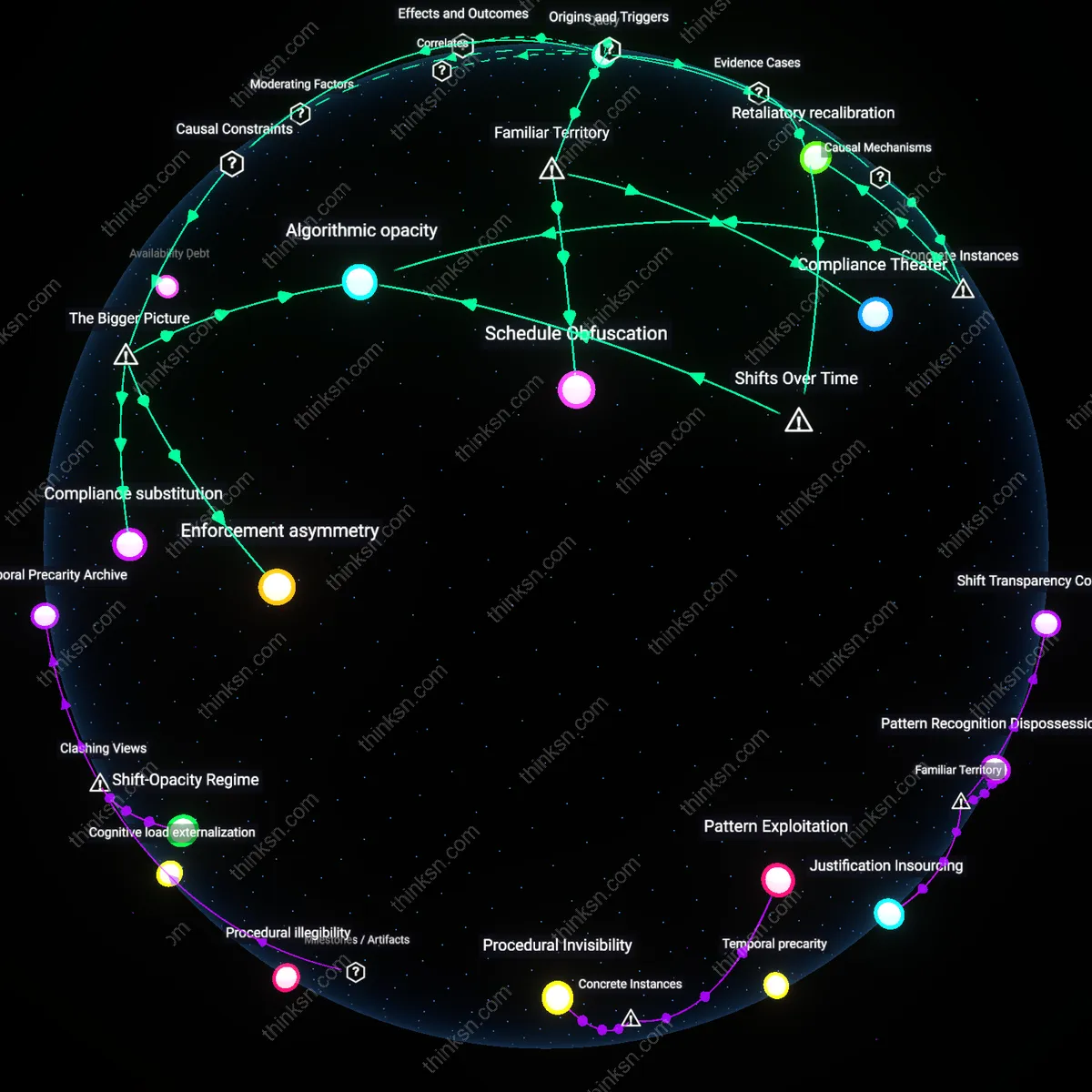

What would happen if warehouse workers had the same privacy protections as software engineers, and how would work processes adapt?

Disciplinary Convergence

Warehouse labor would collapse into the same architectural surveillance that defines modern software engineering, exposing the fiction of privacy as a professional tier rather than a technical condition. While software engineers' privacy is routinely compromised through code audits, keystroke logging, and mandatory collaboration tools like Slack or Jira—disguised as productivity protocols—extending these same practices retroactively to warehouse workers means accepting constant monitoring as the normalized substrate of cognitively demanding work; the shift from physical time-motion studies in the early 20th century to algorithmic management in Amazon fulfillment centers marks a continuity in control, but the assumption that privacy protections could be 'equalized' obscures how such protections were never really withheld from engineers, only differently justified. This reveals that the residual category of ‘privacy’ in tech work is not a shield but a calibrated disclosure regime specific to data-intensive management eras.

Task Entanglement

Work processes would reorganize around granular data entitlements, forcing logistics operations to mimic software development’s permissioned access hierarchies. As software engineers operate under the premise of differential visibility—where repositories, credentials, and error logs are segmented by role and clearance—extending this model to warehouse workers implies access to inventory maps, restocking alerts, or scheduling algorithms could become rights rather than de facto exposures; the historical shift from Taylorist observation in the 1910s to SAP-based warehouse management systems in the 2000s centralized decision-making in software, but treating warehouse workers’ visibility as a privacy concern flips the power dynamic by recognizing their labor produces the data that feeds the system. This exposes how operational transparency was once unidirectional—from worker to system—but now becomes a negotiable flow subject to access governance.

Labor Abstraction

Management would shift toward outcome-based metrics indistinguishable from agile software sprints, collapsing bodily presence into performance tokens. In the transformation from industrial-era productivity tracking—where foremen timed pallet movements with stopwatches—to today’s AI-driven forecasting in warehouses like those run by DHL or FedEx, work has already become abstracted from the body; yet granting warehouse workers the same privacy norms as software engineers means their biometric rhythms, location trails, and equipment interactions can no longer be mined as ambient data, forcing companies to treat labor not as observable behavior but as contractual output, mirroring the way engineers are evaluated through deploy frequency and bug resolution rather than keystrokes. The non-obvious consequence is that this does not empower workers but accelerates the erasure of bodily labor from managerial visibility, producing a new species of invisible work.

Surveillance infrastructure reallocation

Warehouse operations would repurpose existing monitoring hardware for worker autonomy rather than compliance enforcement, as unified privacy standards compel firms like Amazon to decommission line-of-sight AI tracking systems that detect micro-movements in fulfillment centers. This shift redirects IT investment toward encrypted personal device networks for logistics staff, paralleling how software engineers use virtual private environments to shield coding activity—exposing how surveillance is not inherent to productivity but a configurable layer shaped by labor classification. The non-obvious insight is that the physical architecture of monitoring—cameras, RFID tags, motion sensors—is underutilized as a plastic resource that can be reprogrammed for privacy, not just control, when labor parity demands it.

Temporal accountability decoupling

Performance evaluations in distribution hubs would disconnect exact timing data from disciplinary consequences, because synchronized timestamping of tasks—like pallet scanning or cart replenishment—would become personally identifiable information if granted engineer-level privacy. This forces managers at companies like FedEx Ground to replace real-time productivity dashboards with aggregated weekly throughput metrics, weakening the link between instant behavioral feedback and job security. The overlooked mechanism is that granular time tracking in warehouses operates as a form of continuous performance adjudication, which collapses when time-series data can no longer be attributed to individuals—revealing that efficiency in logistics relies on temporal transparency, not just physical output.

Union data stewardship

Labor unions would emerge as custodians of operational data collected from warehouse personnel, mirroring how engineering guilds informally govern access to proprietary codebases within tech firms. With workers legally entitled to withhold personal workflow data from employer algorithms, unions like the ILWU could negotiate data trusts that aggregate anonymized labor patterns to optimize shift design without enabling surveillance. This surfaces the latent function of privacy regimes as institutional power brokers—where control over data flow determines who shapes working conditions, not just who monitors them—shifting the balance of influence from corporate analytics teams to collective labor bodies.

Surveillance Friction

Granting warehouse workers the same privacy protections as software engineers would immediately disrupt logistics giants’ capacity to monitor productivity via biometric tracking and algorithmic management, because Amazon’s Fulfillment by Algorithm system in facilities like Phoenix AZ-9 now depends on constant location pinging and time-motion analysis to enforce work quotas. What makes this significant is that privacy becomes a material disruptor of efficiency engineering, revealing how worker visibility, not just wage or skill, is the hidden architecture of operational control in physical supply chains.

Document Class War

If warehouse workers had the same documentation boundaries as software engineers, managers at distribution hubs would lose their unilateral access to behavioral logs, forcing middle supervision to negotiate work instructions through formal channels rather than real-time punitive alerts, because Salesforce’s field service modules assume paperless, auditable workflows for knowledge workers while disallowing them for laborers. This exposes how documentation asymmetry, not just pay grade, structures managerial dominance by treating labor as legible only through correction, not authorship.

Abstraction Reversal

Extending software engineers’ privacy norms to warehouse workers would collapse the assumed hierarchy between cognitive and manual labor by requiring companies like Flexport to anonymize motion data the way GitHub anonymizes code contributor metadata in internal repositories, thereby forcing logistics firms to stop treating physical movement as inherently public while mental work is protected. This inverts the myth of abstraction as progress, showing instead that privacy functions as a prestige good rationed by occupational caste.