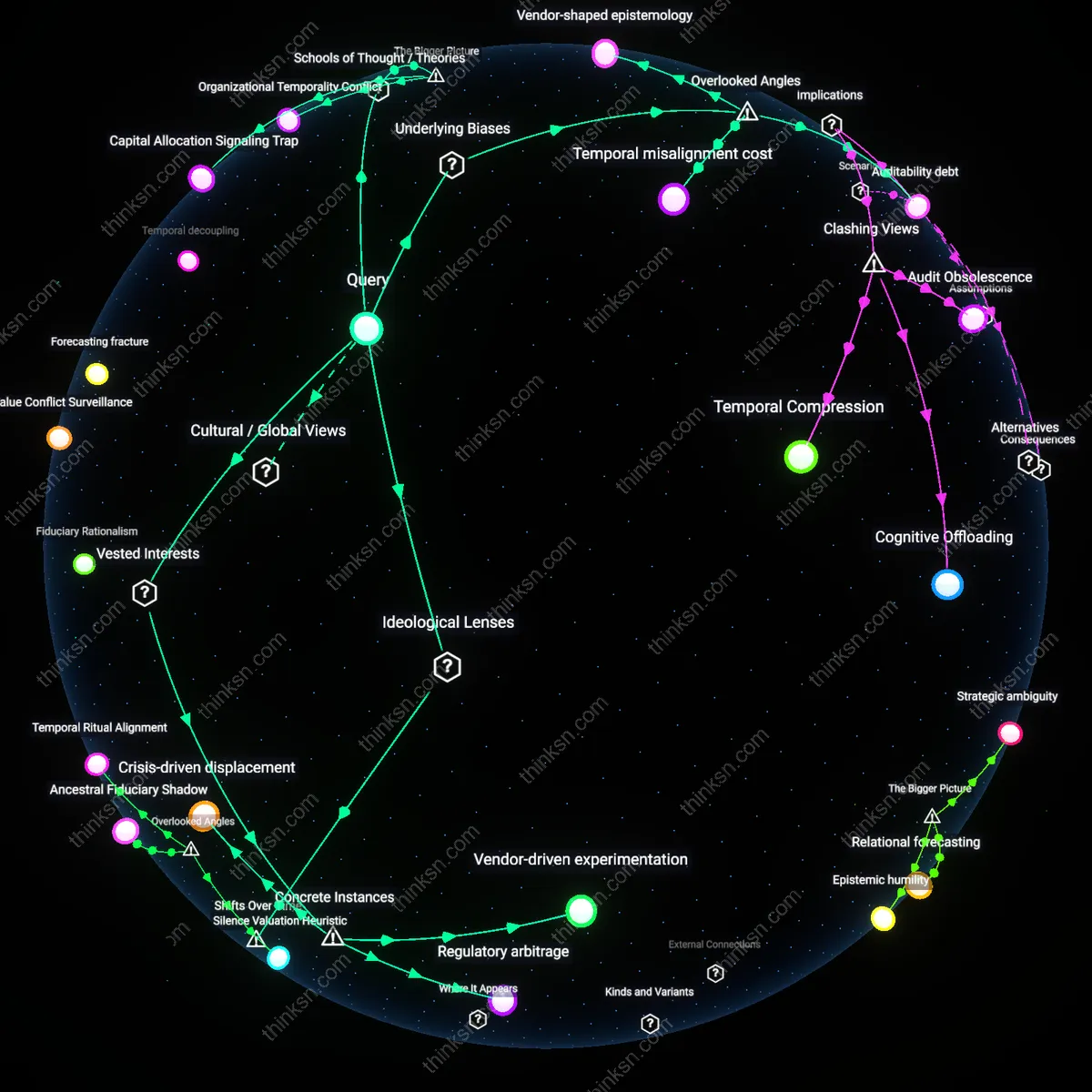

Are AI Default Investments Worth the Loss of User Control?

The analysis reveals four key thematic connections.

Key Findings

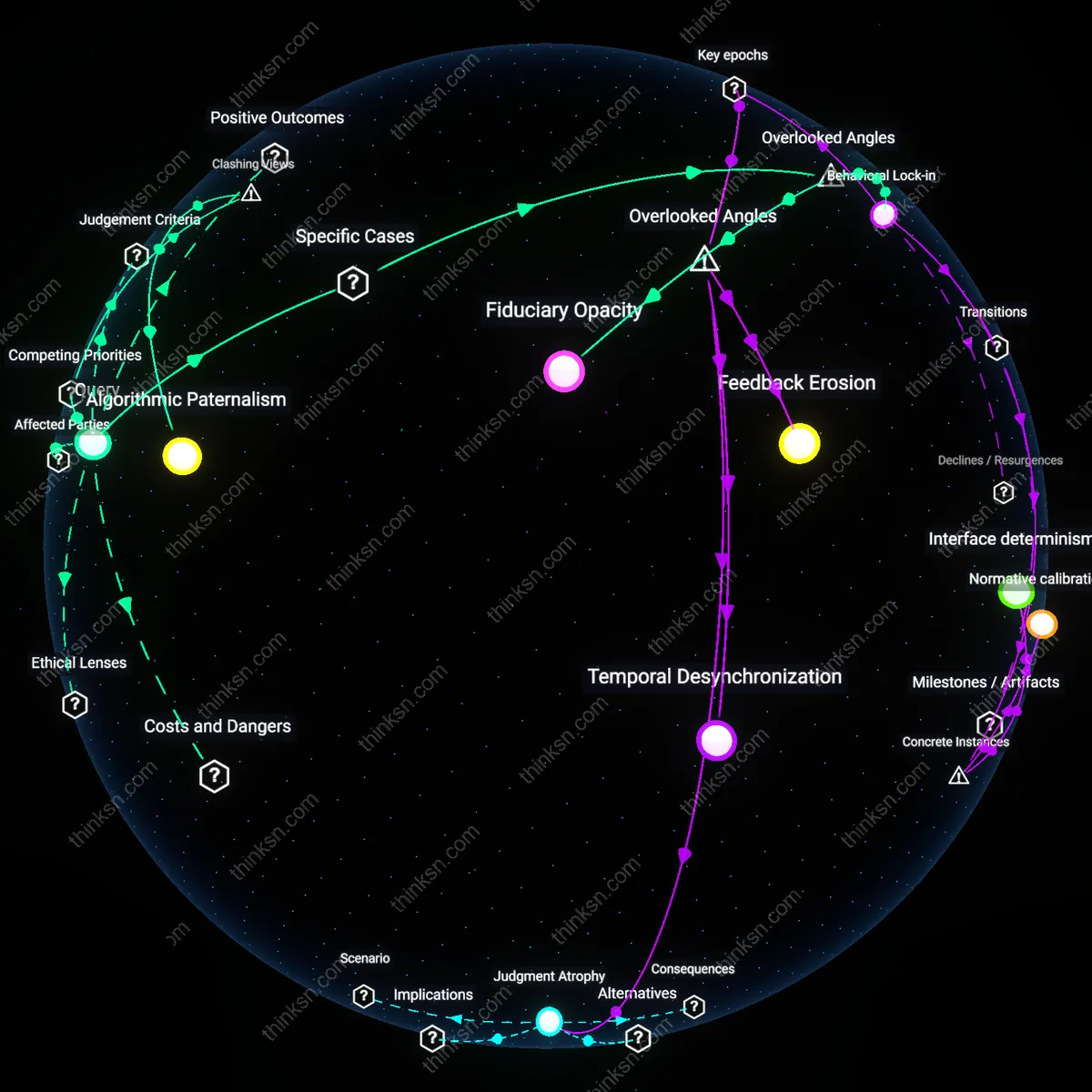

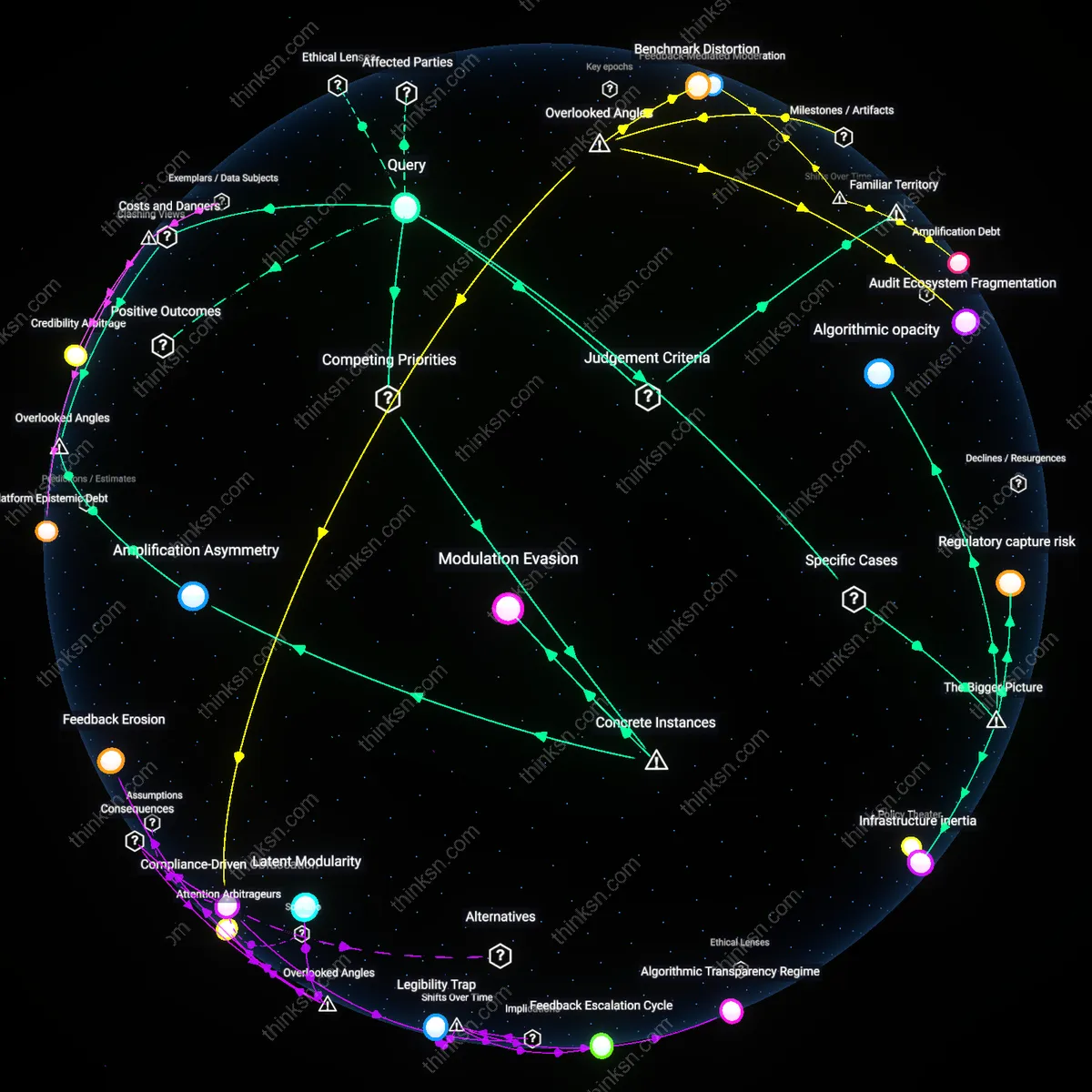

Algorithmic Paternalism

It is unreasonable for a personal finance app to override user autonomy with AI-suggested allocations. When long-term returns are uncertain, doing so turns provisional recommendations into de facto financial mandates under the guise of optimization, privileging systemic efficiency and platform accountability over individual agency. The mechanism is behavioral nudging embedded in interface design: default allocations and simplified risk profiles condition users to defer to algorithmic judgment, even though historical backtesting cannot guarantee future performance. The result is an institutionalized form of algorithmic paternalism. This dynamic is underappreciated because it reframes financial guidance not as empowerment but as a quiet displacement of decision rights, and it reveals how platforms manage liability by shifting responsibility onto users while engineering their compliance.

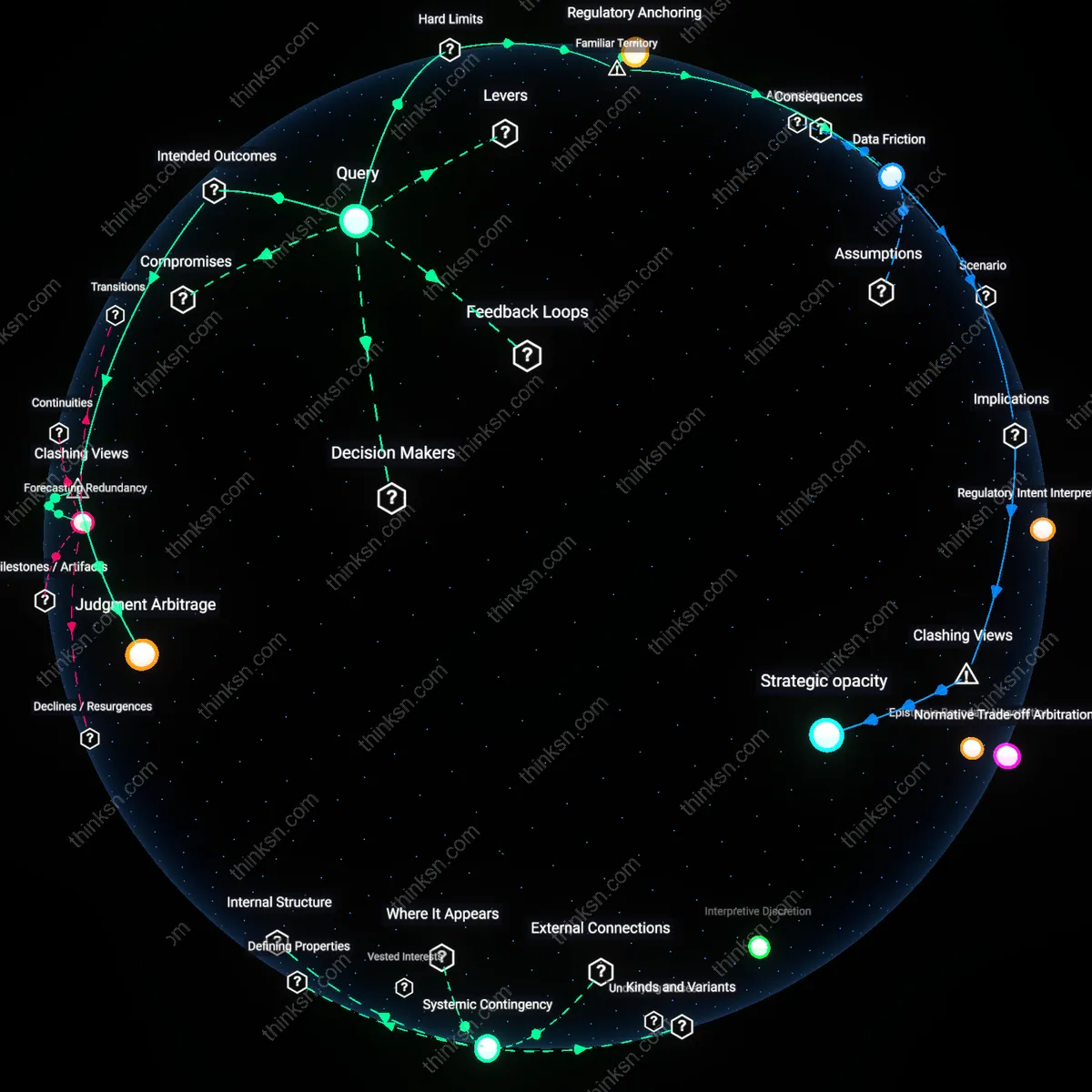

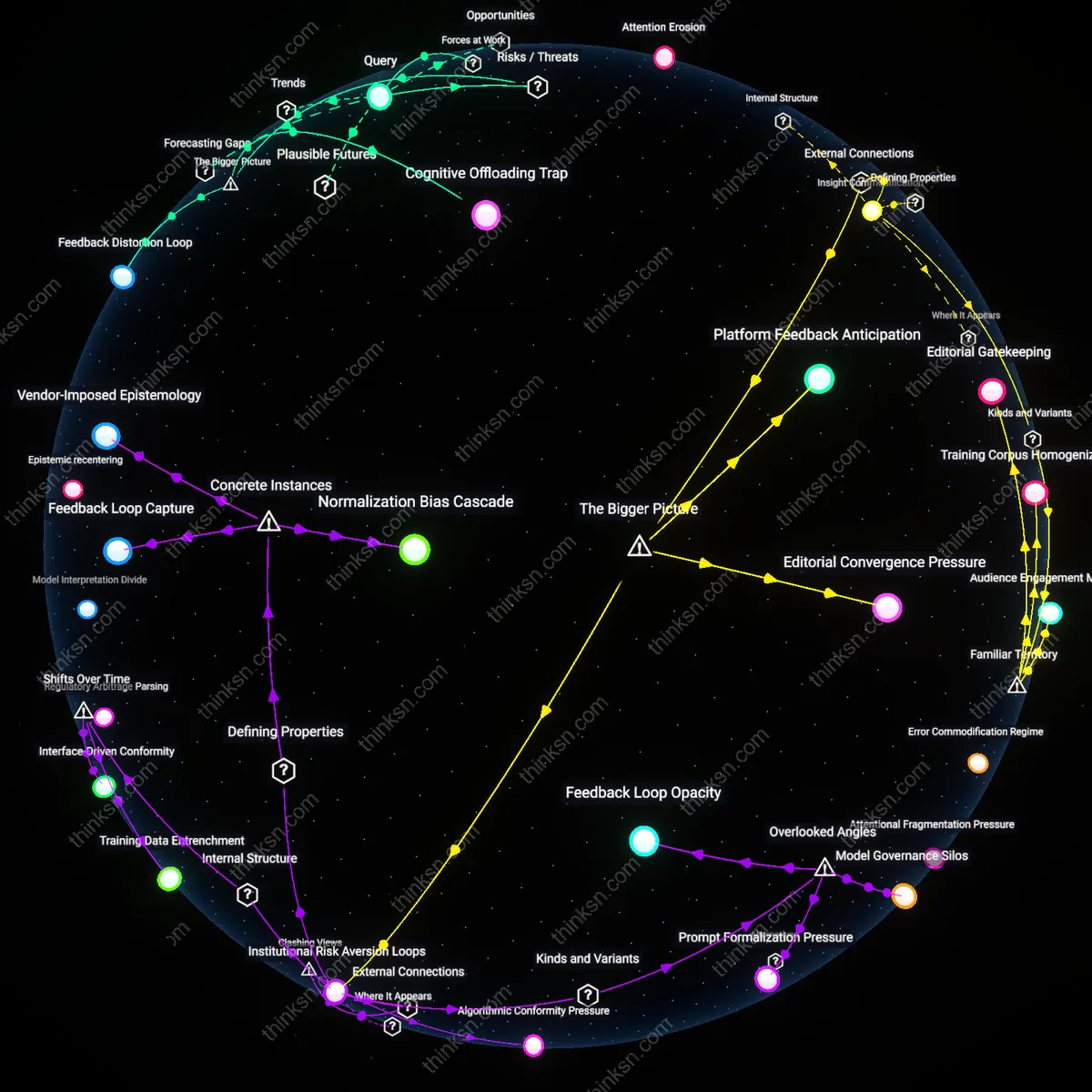

Return Obfuscation

It is reasonable for a personal finance app to prioritize AI-suggested allocations because long-term return uncertainty renders user autonomy a misleading ideal, one that privileges subjective confidence over systematically modeled outcomes. That framing allows platforms to present their own internally generated data as investment authority. By constructing forecasts from market correlations, volatility measures, and macroeconomic proxies that users cannot independently verify, the AI positions itself not as a tool but as the epistemic limit of financial judgment, displacing retail intuition with calibrated uncertainty. This challenges the dominant view that user sovereignty should trump algorithmic input. It also exposes how 'uncertainty' itself becomes a rationale for centralized prediction, which in turn legitimizes platform control under the banner of rational stewardship.

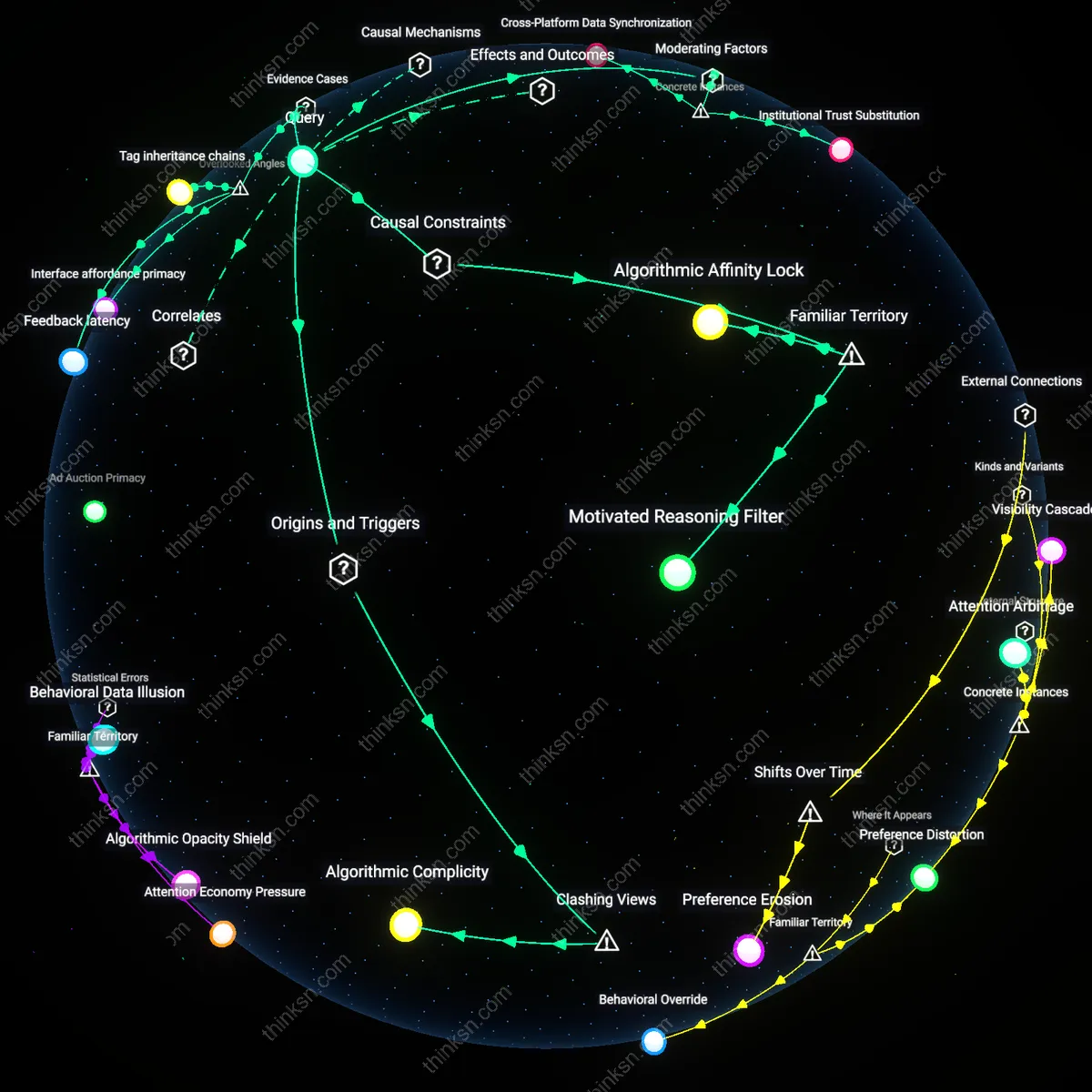

Behavioral Lock-in

A personal finance app that prioritizes AI-suggested allocations over user input risks creating behavioral lock-in, as seen in Betterment's early auto-rebalance defaults, where users who accepted algorithmic prescriptions disengaged from financial learning and lost long-term agency. This occurs because repeated deferral to opaque recommendations trains users to treat financial decisions as outsourced cognitive labor, especially when outcomes are delayed and uncertain. The mechanism is interface-driven habituation, a feedback loop in which ease of use suppresses critical scrutiny. What is overlooked is that the erosion of decision muscle, not the investment outcome itself, becomes the dominant risk. That shifts the ethical focus from the accuracy of recommendations to developmental stagnation in financial literacy.

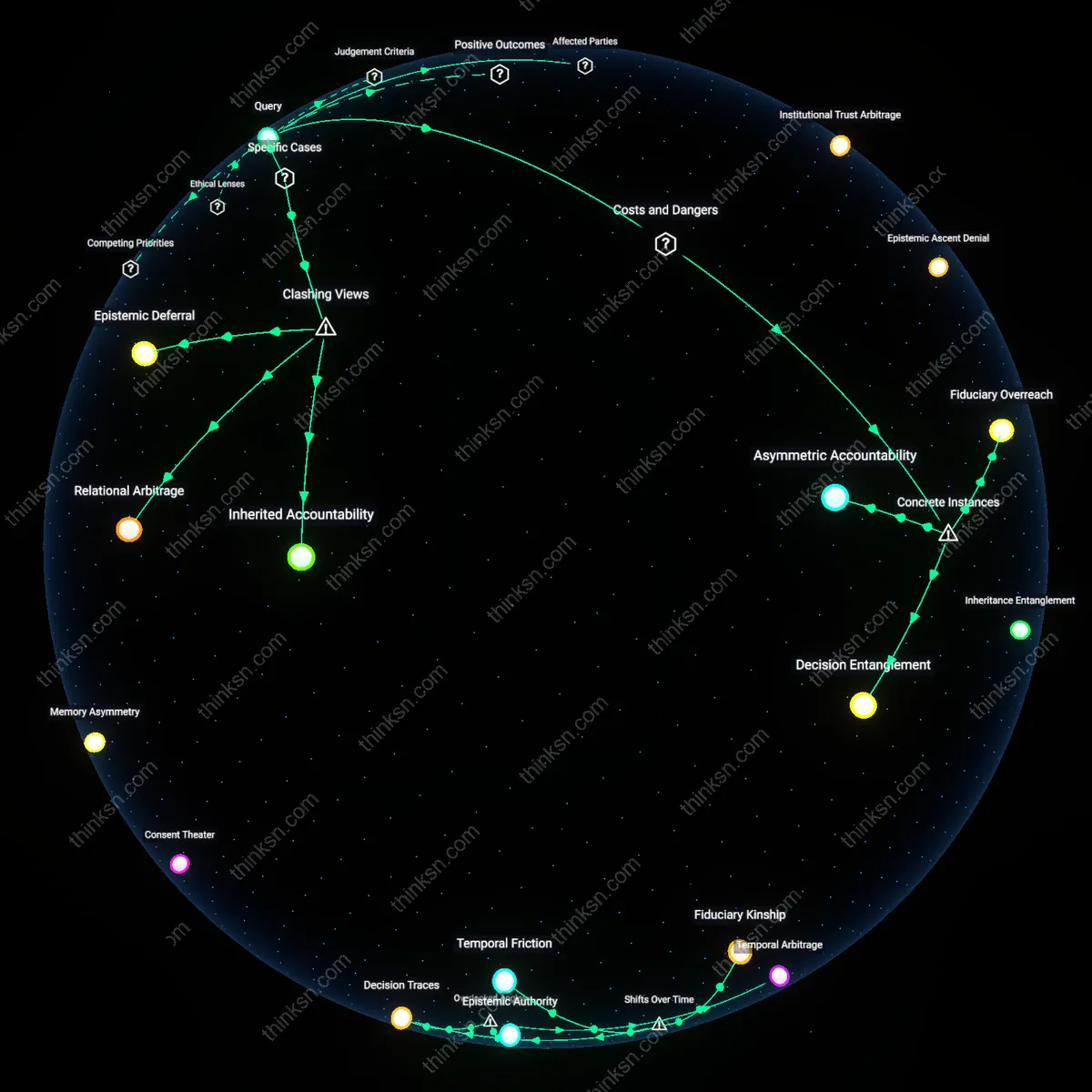

Fiduciary Opacity

In Robinhood's 'Wealth Transfer' IRAs, AI-driven allocation defaults are embedded in a structure where fiduciary duty is diluted across third-party fund providers and black-box models. With the accountability chain this fragmented, overriding user autonomy is unreasonable. The app's suggestions are shaped not by pure return optimization but by revenue-sharing agreements with asset managers whose funds are algorithmically favored, creating a hidden incentive layer in which AI outputs reflect embedded kickbacks masked as personalization. The overlooked dynamic is that uncertainty in returns is compounded by uncertainty in intent: users cannot challenge suggestions they do not know are commercially biased, which turns autonomy suppression into a systemic feature rather than a design flaw.