CFO Dilemma: AI Analytics or Fiduciary Judgment in Financial Planning?

Analysis reveals 10 key thematic connections.

Key Findings

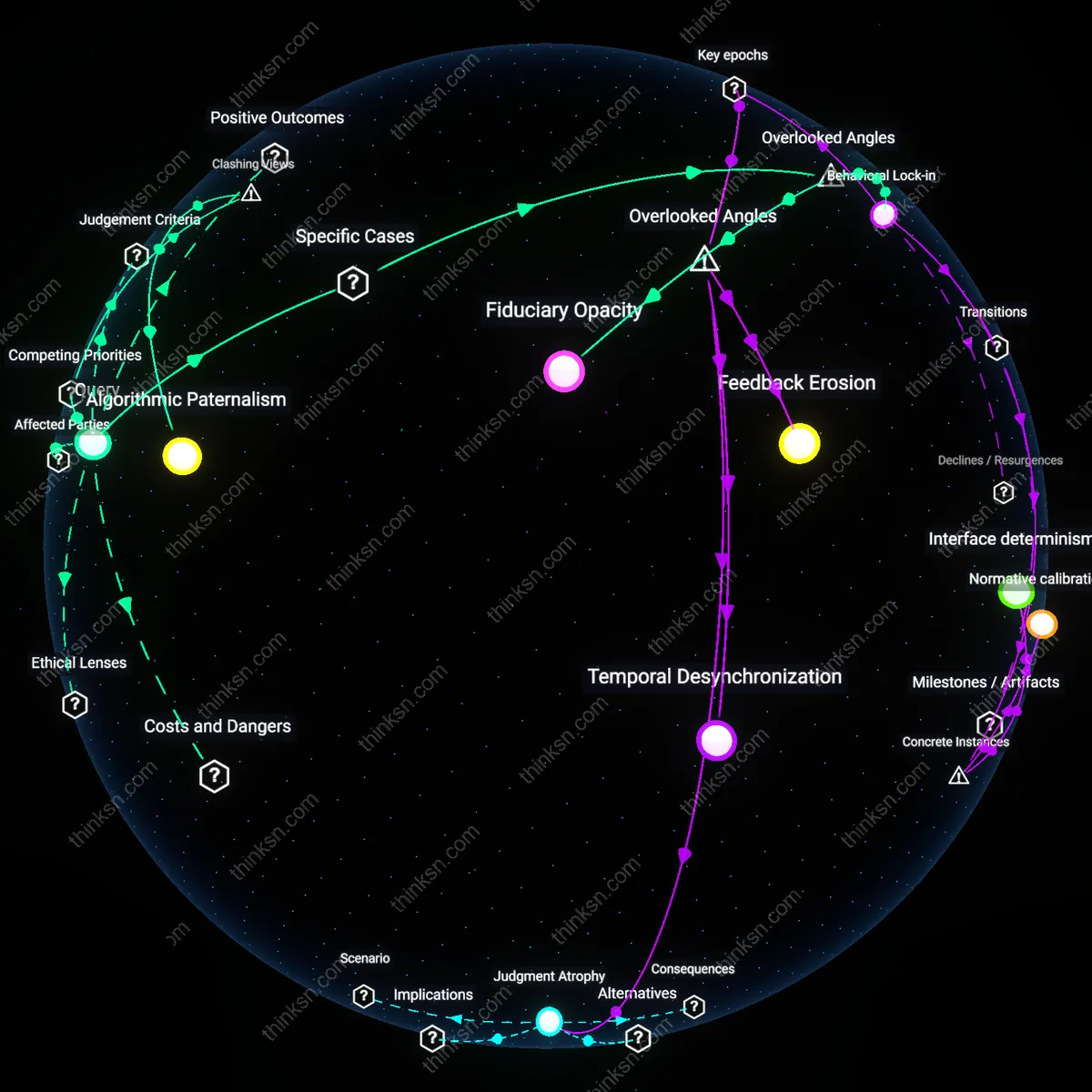

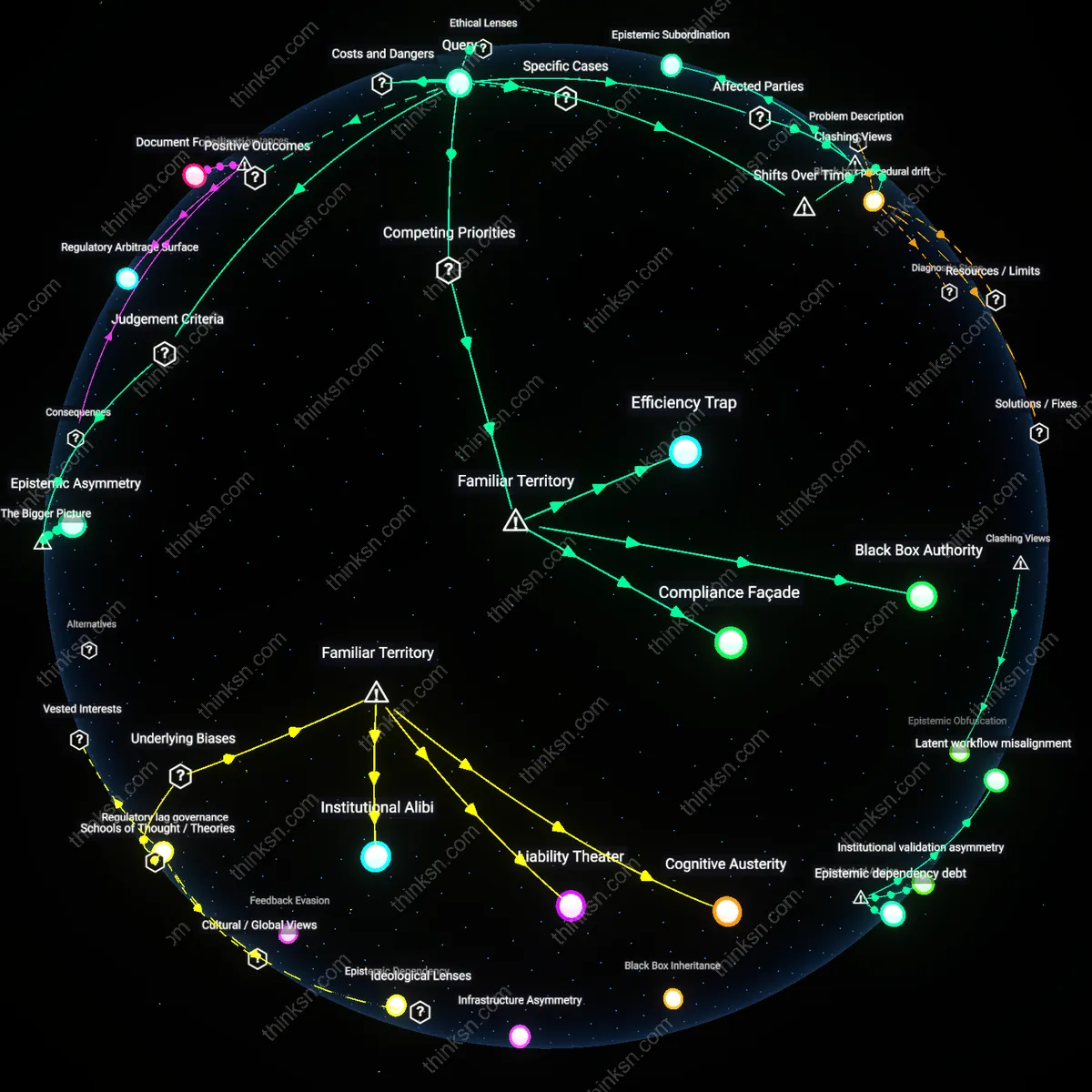

Epistemic Uncertainty Regime

CFOs hesitate to commit capital decisively to either AI analytics or human judgment because financial management lacks a consensus framework for evaluating AI's strategic reliability. Practitioners are left in an uncertainty regime in which the evidence is neither conclusive nor dismissible. Two competing methodological paradigms sustain this condition: the positivist, data-driven modeling that dominates AI research, and the interpretive, context-sensitive reasoning central to traditional financial leadership. Each sets a different standard for what counts as credible evidence, and the tension is institutionalized in both accounting academia and corporate governance structures. As a result, CFOs of S&P 500 firms operate under a de facto procedural rationality that treats AI adoption as experimental compliance rather than strategic transformation, reinforcing a feedback loop in which inconclusive outcomes perpetuate ambiguity. The non-obvious implication is that the dilemma is not primarily financial or technical; it is rooted in irreconcilable theories of knowledge that govern how financial executives validate decisions.

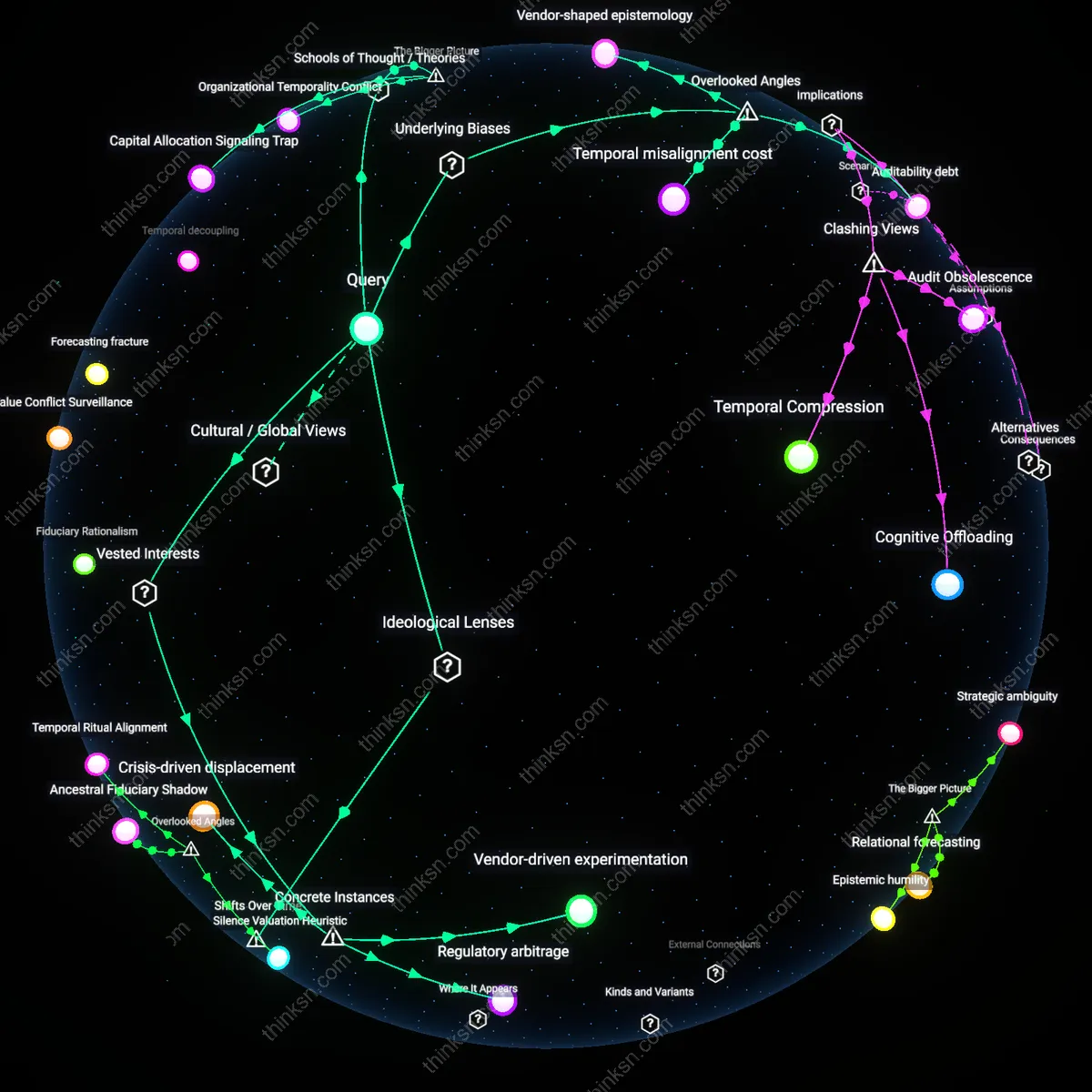

Capital Allocation Signaling Trap

CFOs face a binding trade-off between AI investment and human capital enhancement because equity markets penalize ambiguity in strategic narratives. Financial leaders must signal decisive resource commitment even while AI's planning efficacy remains context-dependent and unproven at scale. In the current earnings-call-driven accountability regime, investors and analysts read any balanced or iterative approach, such as co-developing AI tools alongside judgment refinement, as indecisiveness, and sell-side models that reward visible, quantifiable modernization spending amplify that perception. The dynamic is institutionalized in capital allocation signaling practices: AI software licenses and platform contracts serve as tangible proof of innovation, whereas investments in judgment frameworks, such as scenario planning workshops or behavioral finance training, lack comparable visibility. The dilemma therefore emerges not from technological limitations but from the need to project strategic clarity in a market discourse that conflates visibility with capability.

Organizational Temporality Conflict

The CFO's dilemma stems from a structural misalignment between the long development cycles of human judgment and the rapid deployment timelines expected of AI analytics systems, which creates incompatible temporal rhythms within financial planning functions. AI vendors and internal IT departments deliver predictive models on quarterly sprint schedules, but mastery of strategic foresight, such as capital structure intuition or crisis anticipation, depends on multi-year experiential learning through rotational assignments, mentorship, and post-mortem reviews. The tension is sharper in multinational corporations, where shared services centralize and fast-track AI implementations while judgment-building remains decentralized and culturally embedded in regional finance teams. The underappreciated reality is that this temporal dissonance undermines hybrid strategies: CFOs are forced into false binaries not by cost constraints but because organizational time itself becomes a scarce and contested resource.

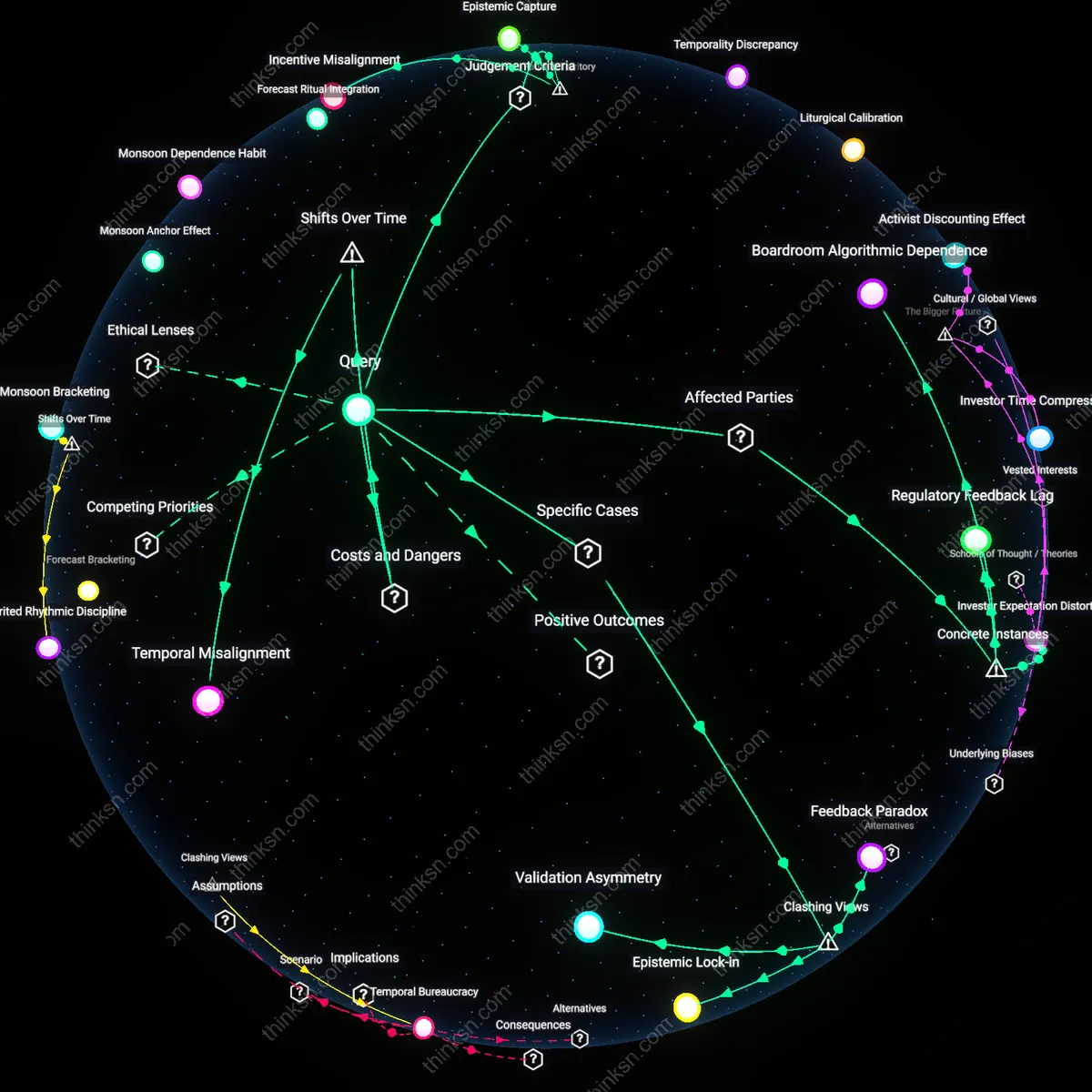

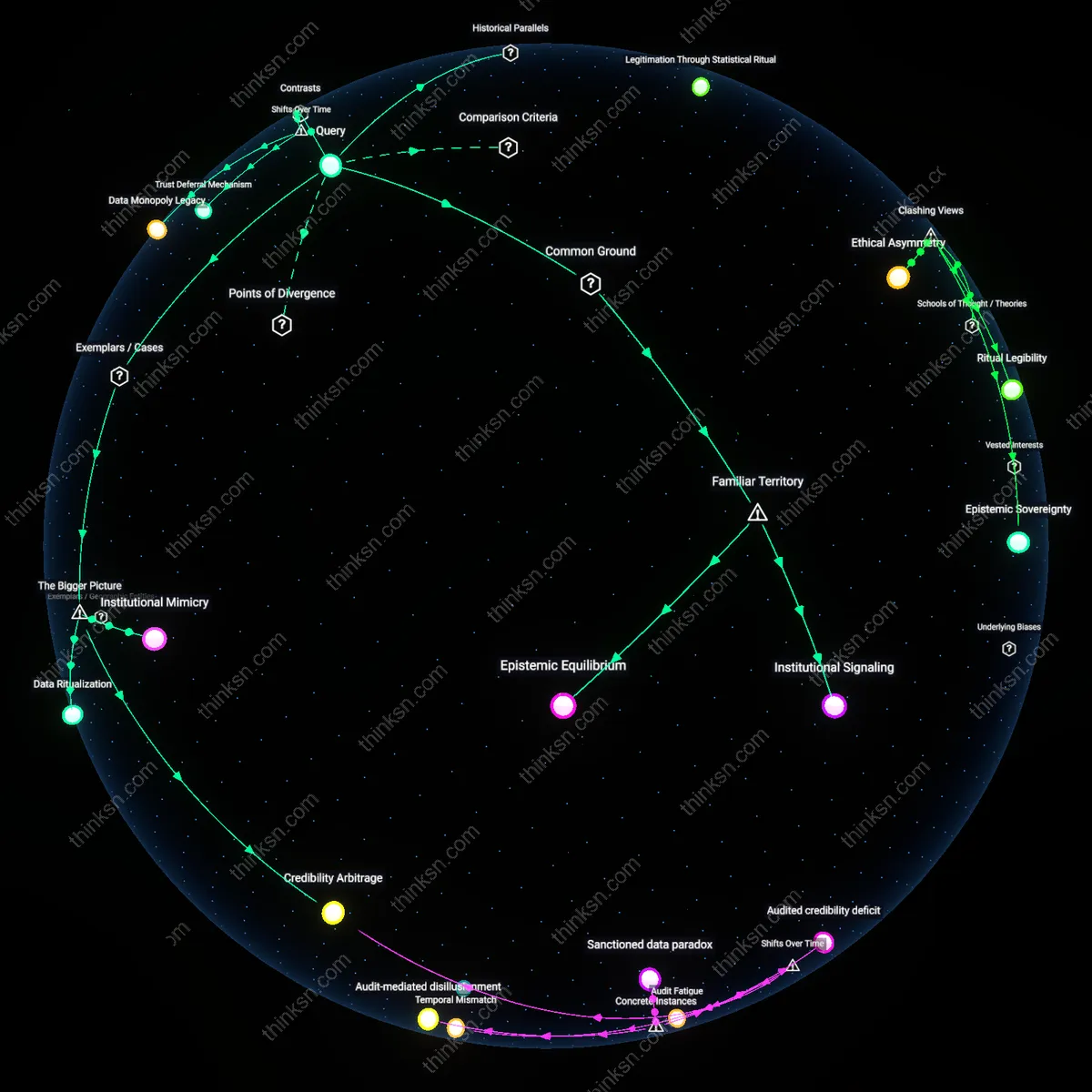

Epistemic Fragmentation

From the 1990s through the early 2010s, strategic financial planning relied on integrated forecasting models grounded in macroeconomic theory and organizational memory. The post-2015 rise of real-time AI analytics dissolved these shared epistemic frameworks into competing data streams, and CFOs now navigate irreconcilable visions of the future produced by machine learning models trained on divergent datasets. This fragmentation breaks the historical alignment between financial foresight and executive consensus, forcing leaders to choose between adopting opaque AI insights and defending subjective judgment in forums that increasingly demand quantifiable justification. The overlooked consequence is that the dilemma is not about the ROI of AI but about the collapse of the common epistemic ground that strategic coherence in corporate finance requires.

Vendor-Shaped Epistemology

CFOs face the AI investment dilemma because financial software vendors actively shape the definition of "strategic capability" to favor tool adoption over human development. Enterprise software vendors such as SAP and Oracle embed assumptions about decision-making efficacy into their analytics platforms and position algorithmic outputs as inherently superior to judgment, which makes underinvestment in human reasoning appear rational even when the evidence is weak. This framing supports the vendors' recurring revenue models and perpetuates reliance on proprietary data architectures. The dynamic goes unnoticed because internal finance teams rarely audit the epistemic design of vendor-driven roadmaps. The real conflict is not between AI and humans but between autonomous organizational learning and externally defined technical rationality.

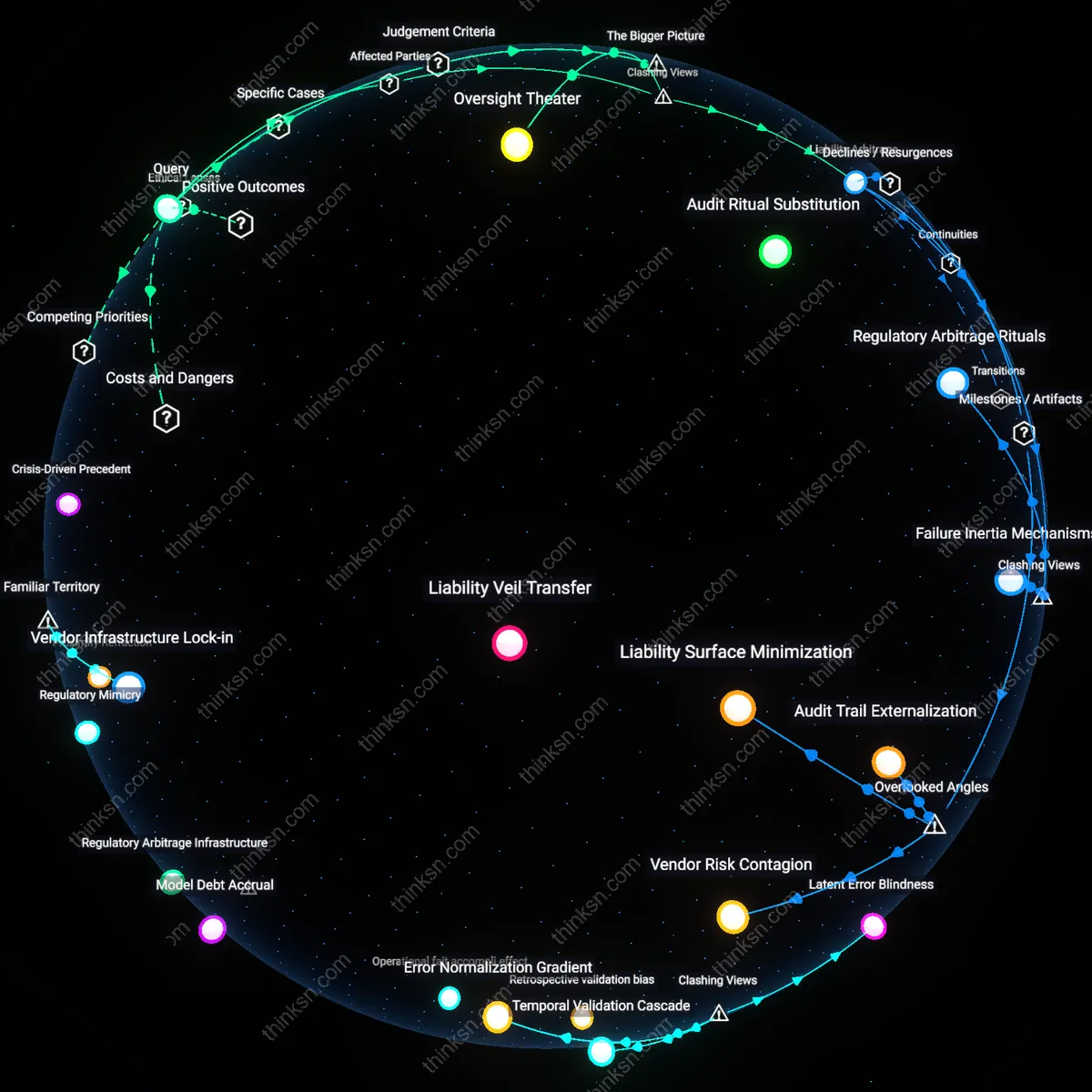

Auditability Debt

CFOs hesitate to commit to AI analytics because opaque machine learning models create long-term auditability liabilities that undermine financial governance, a risk that human judgment systems do not carry to the same degree. Human decisions leave traceable rationales in memos, meeting records, and approvals. AI-driven forecasts often lack the standardized interpretability documentation that SOX internal-control reviews and external auditors expect, which introduces hidden regulatory exposure. This dimension is rarely discussed in ROI comparisons of AI and human investment, yet it fundamentally shifts the risk calculus for finance leaders in highly regulated environments. The overlooked dependency is that financial legitimacy rests not only on accuracy but also on the ability to justify decisions retrospectively to auditors and regulators.
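
One way to picture the traceability gap is to ask what a memo-like record for an AI forecast would have to contain. The following is a minimal Python sketch under stated assumptions, not a compliance template: the class `ForecastAuditRecord`, its fields, and the helpers `digest_inputs` and `make_record` are all hypothetical names chosen for illustration, and nothing here is drawn from SOX text or from any vendor's API.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class ForecastAuditRecord:
    """Hypothetical audit-trail entry for one AI-generated forecast."""
    model_version: str     # identifier of the model that produced the forecast
    input_digest: str      # SHA-256 of the inputs, so they can be re-verified later
    forecast_value: float  # the figure that was relied upon
    rationale: str         # human-written reason the forecast was accepted
    approver: str          # person accountable for relying on the forecast


def digest_inputs(inputs: dict) -> str:
    """Hash inputs in a canonical form so identical data yields an identical digest."""
    canonical = json.dumps(inputs, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def make_record(model_version: str, inputs: dict, forecast_value: float,
                rationale: str, approver: str) -> ForecastAuditRecord:
    """Build a record, refusing forecasts that carry no written rationale."""
    if not rationale.strip():
        raise ValueError("a forecast without a written rationale is not auditable")
    return ForecastAuditRecord(
        model_version=model_version,
        input_digest=digest_inputs(inputs),
        forecast_value=forecast_value,
        rationale=rationale,
        approver=approver,
    )
```

The design choice worth noting is that the record forces a human rationale and a named approver alongside the model output, which is the part of the trail that memos and approvals supply for human decisions.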

Temporal Misalignment Cost

The CFO's dilemma arises because AI analytics deliver value on algorithmic timescales, which require continuous data ingestion and model retraining, while strategic financial planning runs on fiscal and board-reporting cycles. The result is a mismatch in feedback loops. AI investments decay silently when models stagnate between budget cycles, whereas human judgment compounds through institutional memory and iterative sensemaking during quarterly reviews. Because of this misalignment, AI capabilities often underperform at exactly the moments when strategic decisions are made, a dynamic ignored by most capability maturity models, which assume that technological and organizational rhythms are synchronized. The hidden cost lies not in implementation but in the desynchronization of decision-relevant insight production.
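
The mismatch can be made concrete with a toy calculation. The sketch below is illustrative only: it assumes, purely for the sake of the example, that a model's relative quality resets to 1.0 at each retraining and then decays geometrically each month, and that retraining happens only on a fixed budget cycle. The function names, the decay rate, and the cycle length are hypothetical parameters, not empirical estimates.

```python
def model_quality(month: int, retrain_every: int, monthly_decay: float) -> float:
    """Relative model quality: 1.0 right after retraining, then geometric decay.

    Assumption for illustration: retraining occurs at months 0, retrain_every,
    2 * retrain_every, and so on, and nothing is refreshed in between.
    """
    months_stale = month % retrain_every
    return (1.0 - monthly_decay) ** months_stale


def quality_at_decisions(decision_months, retrain_every: int, monthly_decay: float) -> dict:
    """Map each decision month to the model quality available at that moment."""
    return {m: model_quality(m, retrain_every, monthly_decay) for m in decision_months}


if __name__ == "__main__":
    # Hypothetical setup: annual retraining, quarterly reviews, 5% monthly decay.
    for month, quality in quality_at_decisions([3, 6, 9, 11], 12, 0.05).items():
        print(f"month {month:2d}: relative quality {quality:.2f}")
```

Under these assumptions, the later a decision falls within the retraining cycle, the staler the model it relies on, which is the desynchronization described above.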

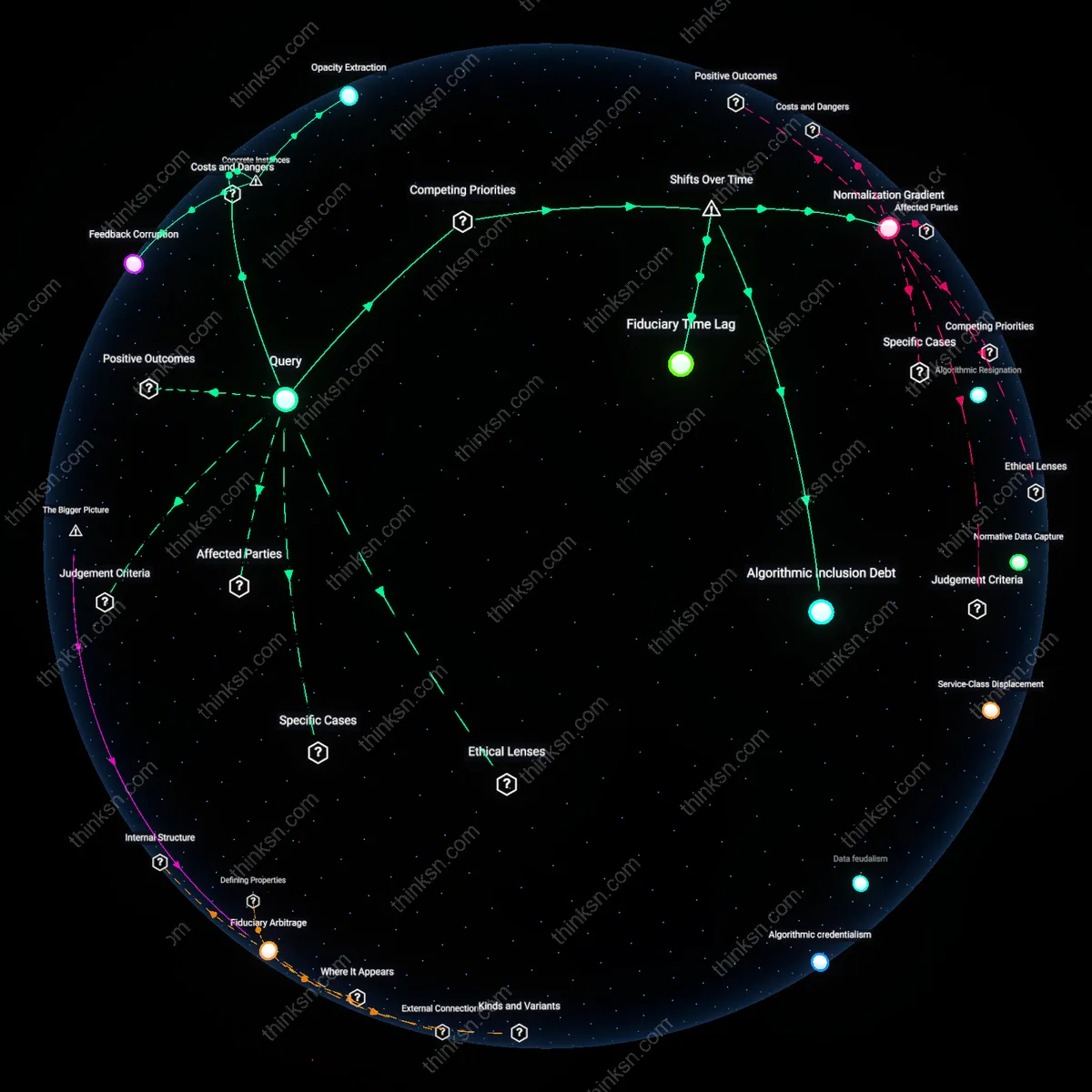

Vendor-Driven Experimentation

Finance leadership at General Electric in 2017 prioritized AI analytics investments under pressure from vendor partners such as IBM Watson, which framed early adoption as a strategic necessity despite internal audits showing no measurable improvement in capital allocation. The effect was to shift financial judgment authority from experienced planners to algorithmic interfaces structured around commercial AI roadmaps rather than proven planning efficacy. This dynamic reveals how corporate procurement logic, sustained by vendor ecosystems, converts technological promise into de facto investment mandates even amid evidentiary gaps. Experimental AI integration is normalized as risk mitigation when it may instead obscure accountability through complexity. The non-obvious effect is that CFOs appear to strengthen analytical rigor while actually outsourcing judgment to systems designed by third-party vendors with a vested interest in platform dependency.

Regulatory Arbitrage

When Shell's finance leadership expanded AI-driven forecasting tools in 2020 to meet UK Listing Rules on forward-looking disclosures, it did so not because AI improved accuracy but because algorithmic justifications gave regulators defensible, audit-ready narratives. The company could frame strategic uncertainty as quantitatively managed, even though internal memos acknowledged higher error rates than human-led models. This demonstrates how financial governance regimes reward the appearance of analytical rigor over its actual impact, enabling CFOs to use AI as a compliance instrument that strengthens perceived accountability while weakening autonomous human oversight. The underappreciated insight is that AI is often adopted not for performance gains but as a shield against regulatory scrutiny, where its opacity becomes an asset in constructing plausible financial narratives.

Crisis-Driven Displacement

During the 2008 financial restructuring of Citigroup, senior finance executives sidelined veteran risk assessors in favor of nascent algorithmic stress-testing models promoted by McKinsey & Company. They bet that externally validated automation would restore investor confidence faster than internal deliberation, even though the models failed to anticipate liquidity cascades. The episode shows how existential organizational threats amplify belief in technological signaling over experiential judgment: CFOs adopt AI less for its analytical superiority than for its symbolic power to reassure capital markets during instability. The overlooked mechanism is that AI substitutes for human judgment not through proven utility but through its perceived modernity, at moments when credibility rather than accuracy becomes the primary currency of financial leadership.