Is Public Utility Status Better than Antitrust for Search Engine Diversity?

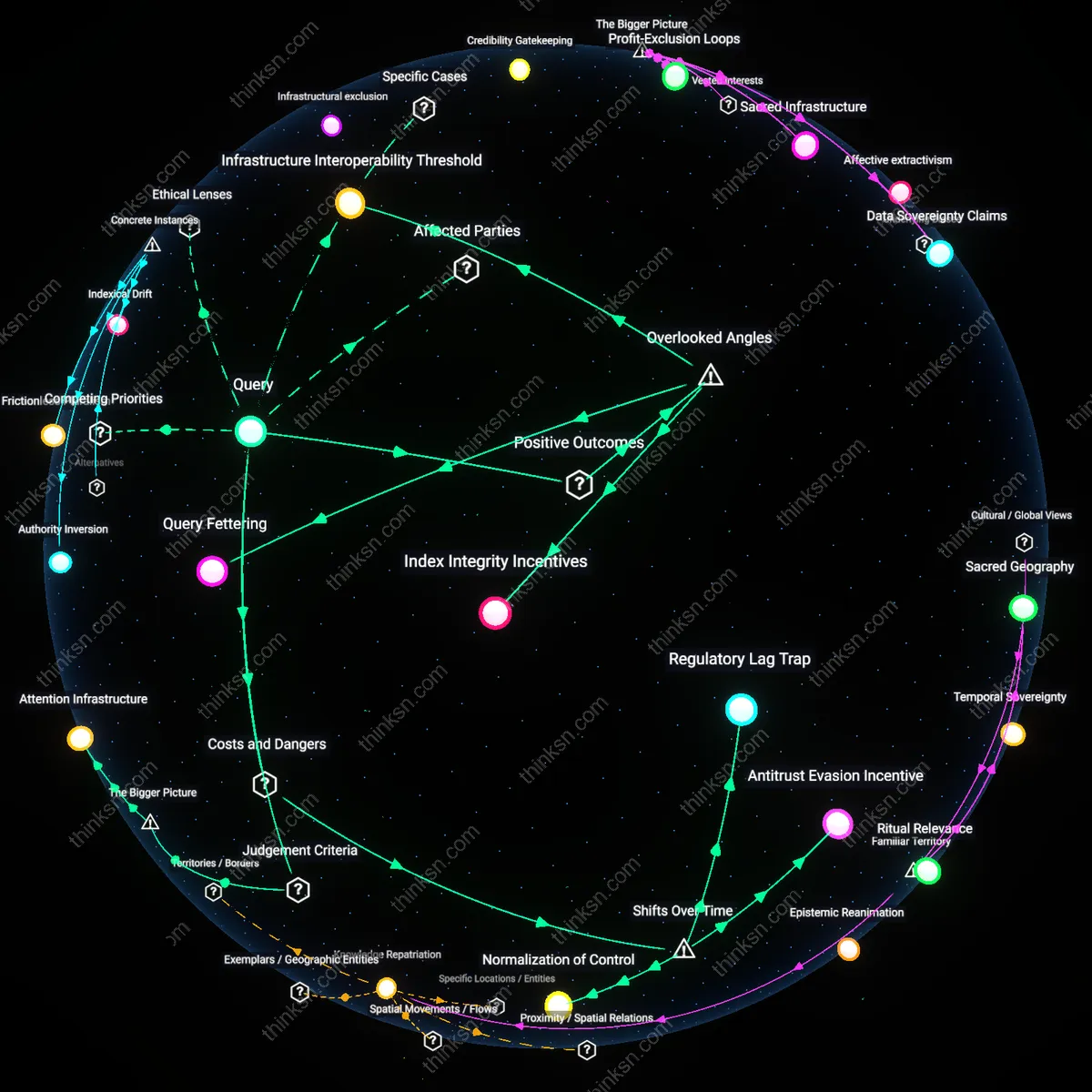

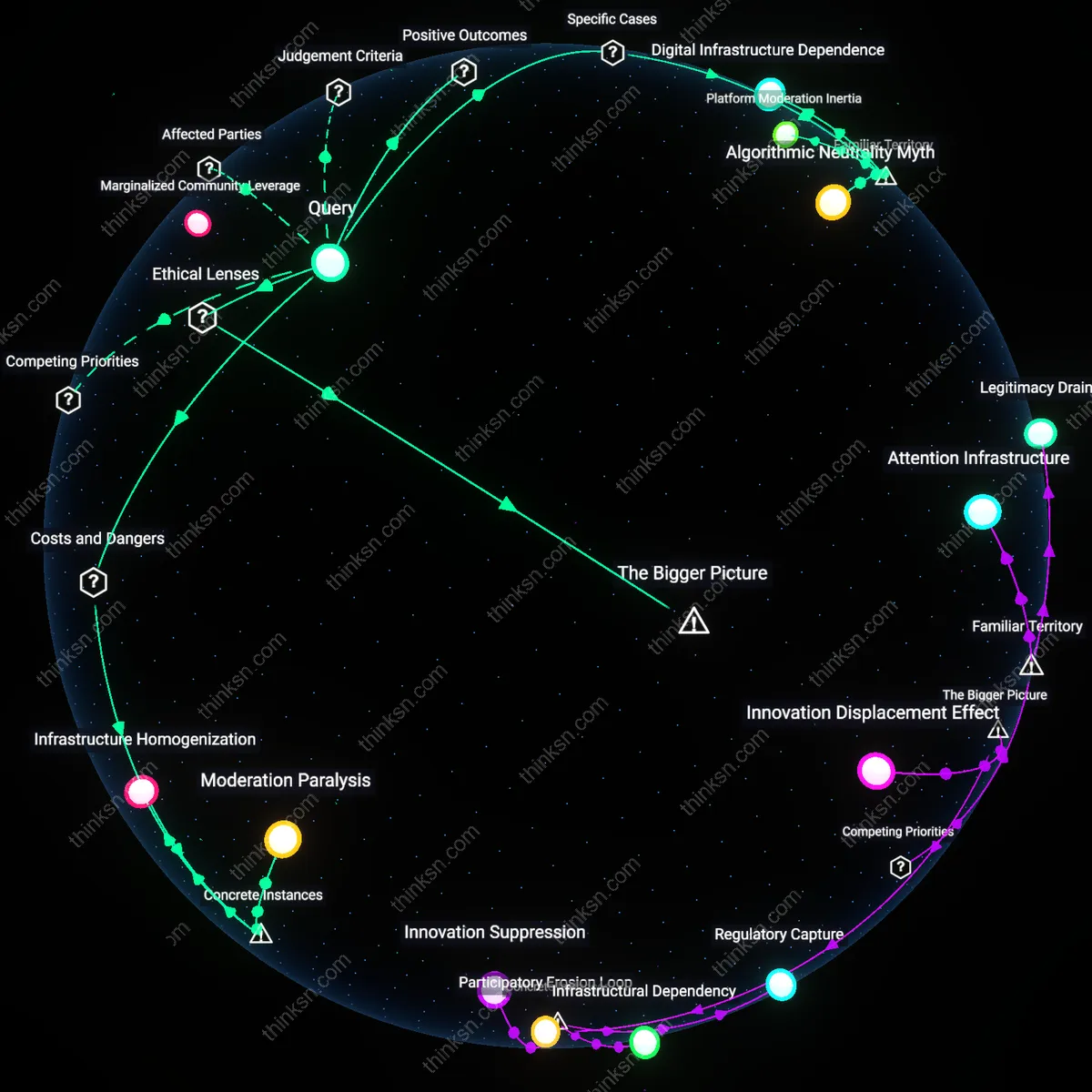

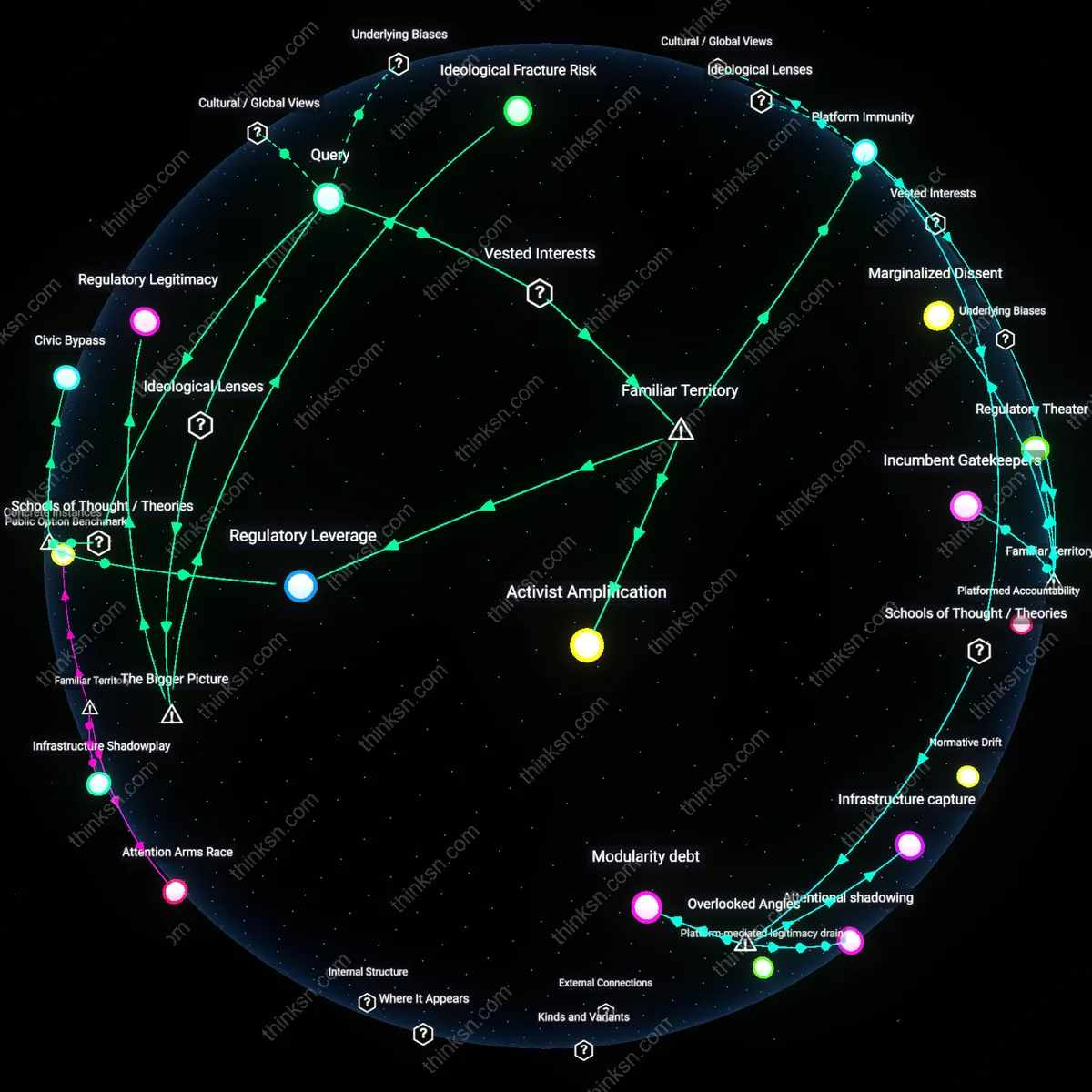

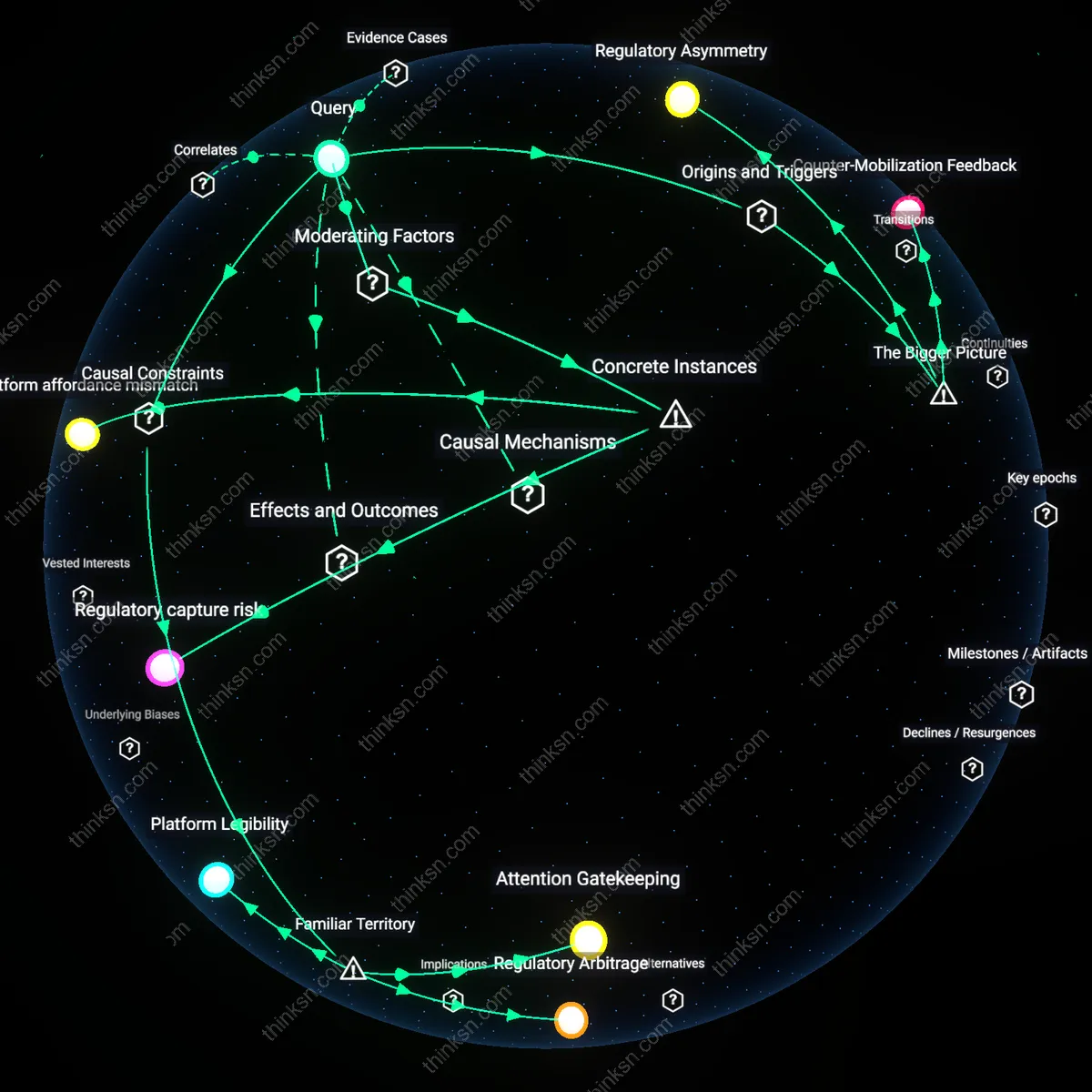

Analysis reveals 8 key thematic connections.

Key Findings

Regulatory Latch

Classifying a major search engine as a public utility would better preserve informational diversity than antitrust remedies by locking in baseline access and non-discrimination rules that survive market consolidation. Unlike antitrust, which acts retroactively to dismantle monopolies after they distort markets, utility regulation establishes forward-looking, asymmetric obligations—such as mandatory indexing and neutral ranking of public-interest content—that persist regardless of market structure. This works through persistent oversight by public utility commissions, which can enforce technical standards on algorithmic dissemination, preventing dominant platforms from systematically downgrading marginalized or non-commercial voices. The non-obvious consequence is that regulatory designation creates a durable institutional anchor—a 'latch'—that resists the political and legal reversals common in antitrust enforcement, thereby stabilizing access conditions over time.

Attention Infrastructure

Treating search engines as public utilities better preserves informational diversity because it reframes the core societal harm not as market foreclosure but as the privatized control of attentional pathways. Antitrust focuses on pricing and competition, missing how search algorithms shape what information users encounter by curating visibility—a function more akin to a city’s water system than a product market. The shift to utility status activates regulatory mechanisms capable of governing non-price dimensions like representational fairness, query transparency, and equitable impression distribution. This matters because it recognizes that informational diversity depends on structural access to user attention, which is currently governed by opaque optimization routines serving ad revenue, not public discourse; treating search as infrastructure disrupts the silent capture of democratic attention by private performance metrics.
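One way to make "equitable impression distribution" concrete is to measure how concentrated impressions are across sources. The following is a minimal sketch, not a method from this analysis: the function names, the impression log format (one source label per impression), and the choice of normalized Shannon entropy and a Herfindahl-style index as metrics are all illustrative assumptions.

```python
import math
from collections import Counter


def impression_shares(impressions):
    """Return each source's share of total impressions.

    `impressions` is a hypothetical log: one source label per impression shown.
    """
    counts = Counter(impressions)
    total = sum(counts.values())
    return {source: n / total for source, n in counts.items()}


def normalized_entropy(shares):
    """Shannon entropy of the shares, scaled to [0, 1].

    1.0 means impressions are spread evenly across all observed sources;
    values near 0 mean a few sources capture almost all attention.
    """
    k = len(shares)
    if k <= 1:
        return 0.0
    h = -sum(p * math.log(p) for p in shares.values() if p > 0)
    return h / math.log(k)


def concentration_index(shares):
    """Herfindahl-style index: sum of squared shares (higher = more concentrated)."""
    return sum(p * p for p in shares.values())


# Illustrative data only: labels are made up for the example.
log = ["big_portal"] * 8 + ["local_paper"] + ["university_repo"]
shares = impression_shares(log)
print(normalized_entropy(shares), concentration_index(shares))
```

A regulator could in principle track such a metric over time per query category. Which metric and threshold would count as "equitable" is a policy choice that this sketch does not settle.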

Index Integrity Incentives

Classifying a major search engine as a public utility would strengthen informational diversity by obligating neutral indexing practices that prioritize content verifiability over engagement metrics. Unlike antitrust actions, which break up market concentration without reforming algorithmic incentives, utility designation would install a regulatory oversight body, such as a digital public service commission, with jurisdiction over ranking parameters, ensuring that underrepresented but high-credibility sources (e.g., local investigative journals, academic repositories) retain crawl visibility and placement weight. This shifts the governance of information access from relying on competition to produce equitable outcomes to guaranteeing continued inclusion directly. The change is rarely considered because most debates focus on ownership structure rather than the hidden economy of web-crawling priorities and index de-duplication thresholds, which silently filter diversity before ranking even occurs.

Query Fettering

Public utility status would curb search engines' latent power to shape public discourse through predictive query suppression, the practice of omitting certain search terms from autocomplete suggestions or demoting them based on advertiser influence or platform-aligned content strategies. Antitrust remedies do not address this pre-input manipulation. By establishing mandated transparency logs for query suggestion algorithms and requiring proportional representation of sociopolitically sensitive terms (e.g., 'climate change' versus 'global warming hoax'), utility regulation would preserve cognitive pathways into diverse epistemic domains before results are even generated. This dimension is routinely ignored because analysis typically begins at the results page, missing how the framing of inquiry itself is a contested, manipulable frontier of informational control.

Infrastructure Interoperability Threshold

Designating search as a public utility creates enforceable technical mandates for API-level access to real-time indexing data, enabling decentralized archives, nonprofit aggregators, and municipal knowledge hubs to mirror and re-rank core search outputs—thereby increasing systemic redundancy and reducing reliance on a single interpretive lens. Antitrust measures, by contrast, may split monopolies but leave their data silos intact, reinforcing informational bottlenecks even in a fragmented market. The overlooked mechanism here is not ownership concentration but the opacity of indexing infrastructure, which functions as a de facto epistemic chokepoint; utility rules would expose it to pluralistic curation, transforming search from a proprietary product into a shared cognitive scaffold.
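To illustrate what "mirror and re-rank" could look like in practice, here is a minimal sketch under stated assumptions. No such mandated API exists in the source. The `IndexRecord` fields, the `rerank` function, and the source-type labels are hypothetical, standing in for whatever records a regulated index feed might expose.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IndexRecord:
    """Hypothetical record from an assumed index-access feed."""
    url: str
    source_type: str   # e.g. "commercial", "local_news", "academic" (made-up labels)
    base_score: float  # relevance score as supplied by the upstream engine


def rerank(records, boosts):
    """Re-order records by base_score times a curator-chosen boost per source type.

    Source types absent from `boosts` keep their upstream score (boost of 1.0).
    """
    def adjusted(record):
        return record.base_score * boosts.get(record.source_type, 1.0)

    return sorted(records, key=adjusted, reverse=True)


# Illustrative data only: URLs and scores are invented for the example.
upstream = [
    IndexRecord("https://example.com/shop", "commercial", 0.9),
    IndexRecord("https://example.org/council-report", "local_news", 0.6),
    IndexRecord("https://example.edu/paper", "academic", 0.5),
]

# A municipal knowledge hub might choose to weight local reporting more heavily.
for record in rerank(upstream, {"local_news": 2.0}):
    print(record.url)
```

The design point is that each curator supplies its own `boosts`, so the same underlying index can support several interpretive lenses rather than one proprietary ranking.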

Regulatory Lag Trap

Classifying search engines as public utilities would entrench centralized control over information filtering at a moment when algorithmic curation has replaced mere access as the critical bottleneck, locking in a static regulatory model ill-suited to the dynamic, learning-based nature of contemporary platforms. The shift from directory-based information retrieval in the 1990s to AI-driven personalization after 2010 means that the real power lies not in providing access but in continuously shaping what is seen—and utility regulation, designed for stable infrastructure like electricity, cannot adapt to this fluidity. This mismatch creates a regulatory lag trap, where oversight fails to constrain evolving manipulation mechanisms while legitimizing the very entities it aims to discipline, thereby freezing a dangerous status quo under the guise of neutrality.

Antitrust Evasion Incentive

Treating search engines as public utilities now, after the pivot toward platform dominance that followed the 2008 financial crisis, risks incentivizing structural evasion: companies reorganize into vertically integrated ecosystems that fall outside utility definitions while retaining information gatekeeping power. Unlike antitrust, which targets anti-competitive behavior over time, utility classification fixes jurisdiction on a narrow function, search, allowing firms like Google to retain control through adjacent services (e.g., Android, YouTube) that evolved as regulatory blind spots after the 2010 consolidation phase. This produces an antitrust evasion incentive, where the regulated core becomes a loss leader for a broader, unregulated surveillance infrastructure that consolidates informational control beyond the reach of either utility commissions or traditional competition law.

Normalization of Control

Declaring search a public utility in the post-2016 era of disinformation and algorithmic radicalization risks normalizing state-sanctioned information curation, where the perceived need for stability and fairness leads to codified oversight that legitimizes top-down editorial authority under the banner of diversity preservation. The historical shift from viewing search as a neutral index (pre-2005) to recognizing its role as a de facto editor (post-2012) reveals that regulation may not decentralize but instead institutionalize a single, bureaucratically approved version of neutrality, vulnerable to regulatory capture by both incumbents and political actors. This normalization of control turns what appears to be a democratic safeguard into a mechanism for suppressing emergent, noncompliant forms of expression that challenge algorithmic consensus—precisely the diversity such remedies claim to protect.