Prioritizing Predictive Policing: Fairness vs. Effectiveness?

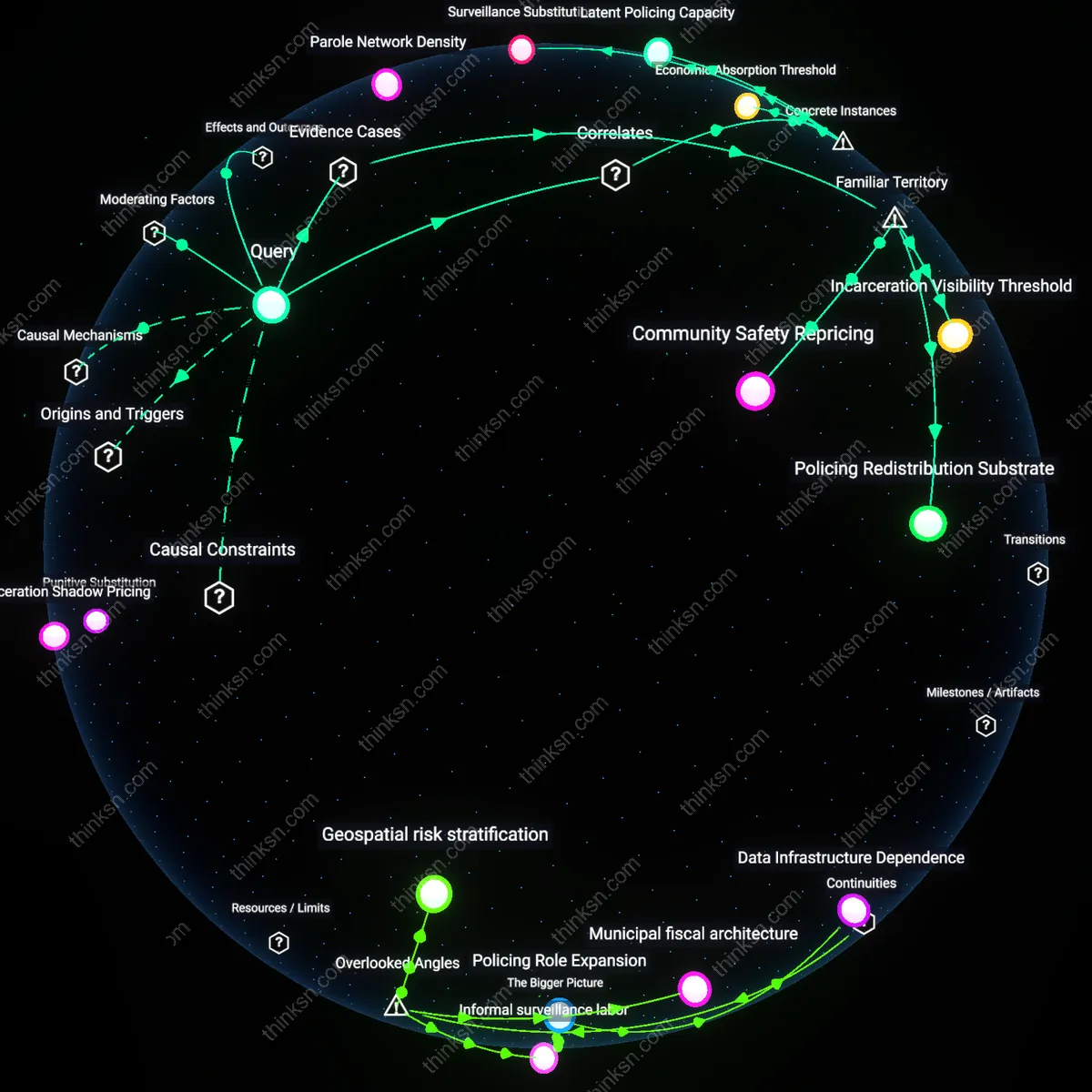

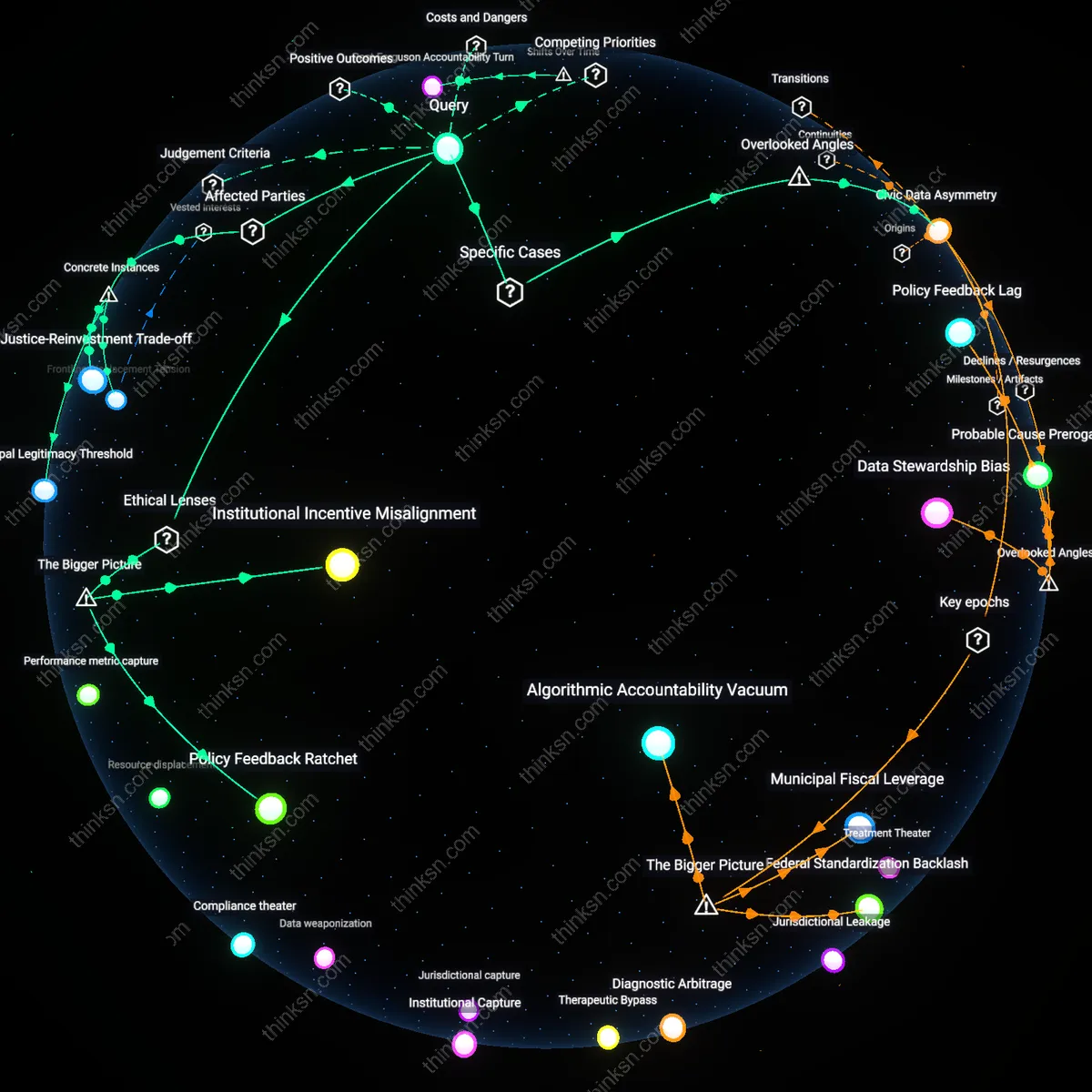

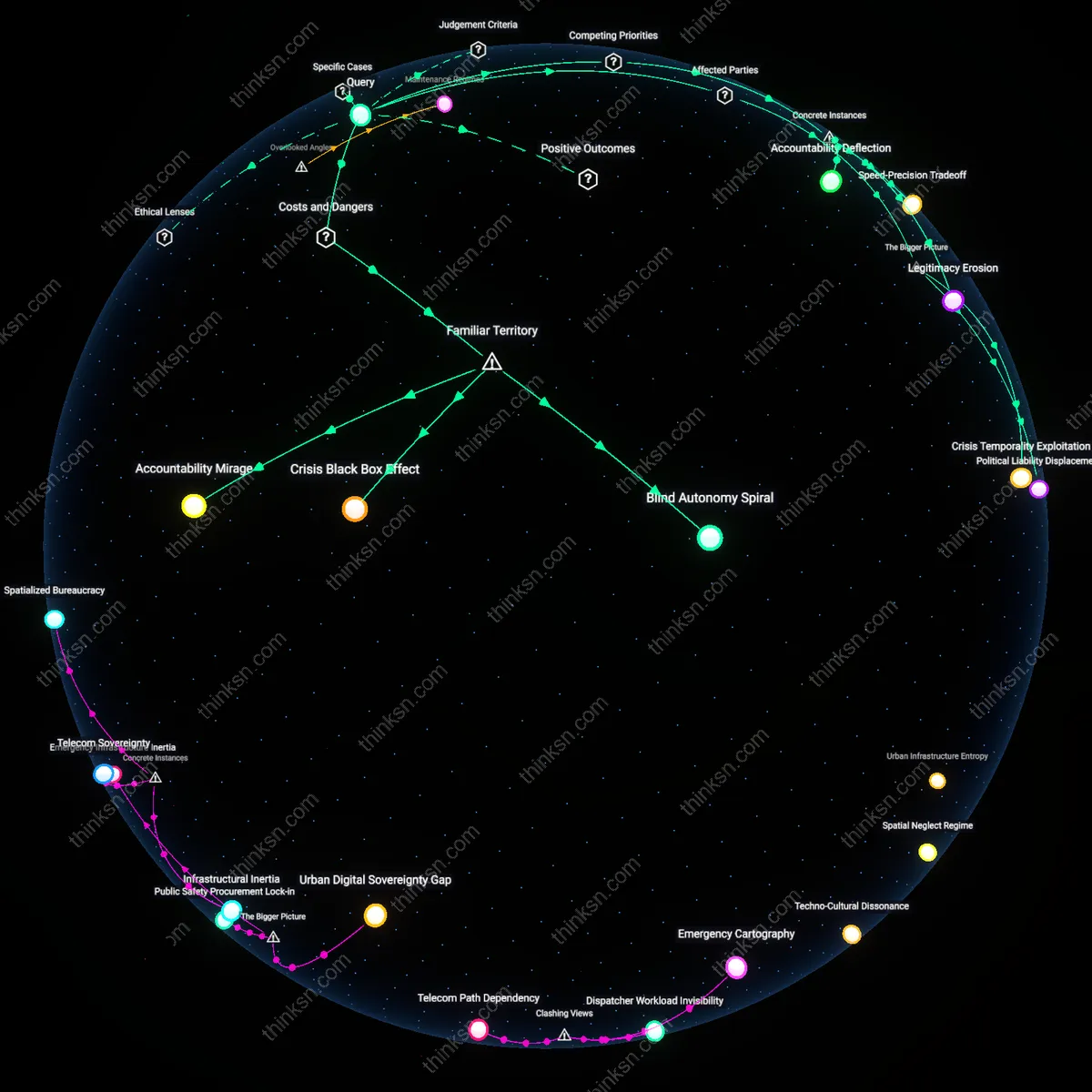

Analysis reveals 10 key thematic connections.

Key Findings

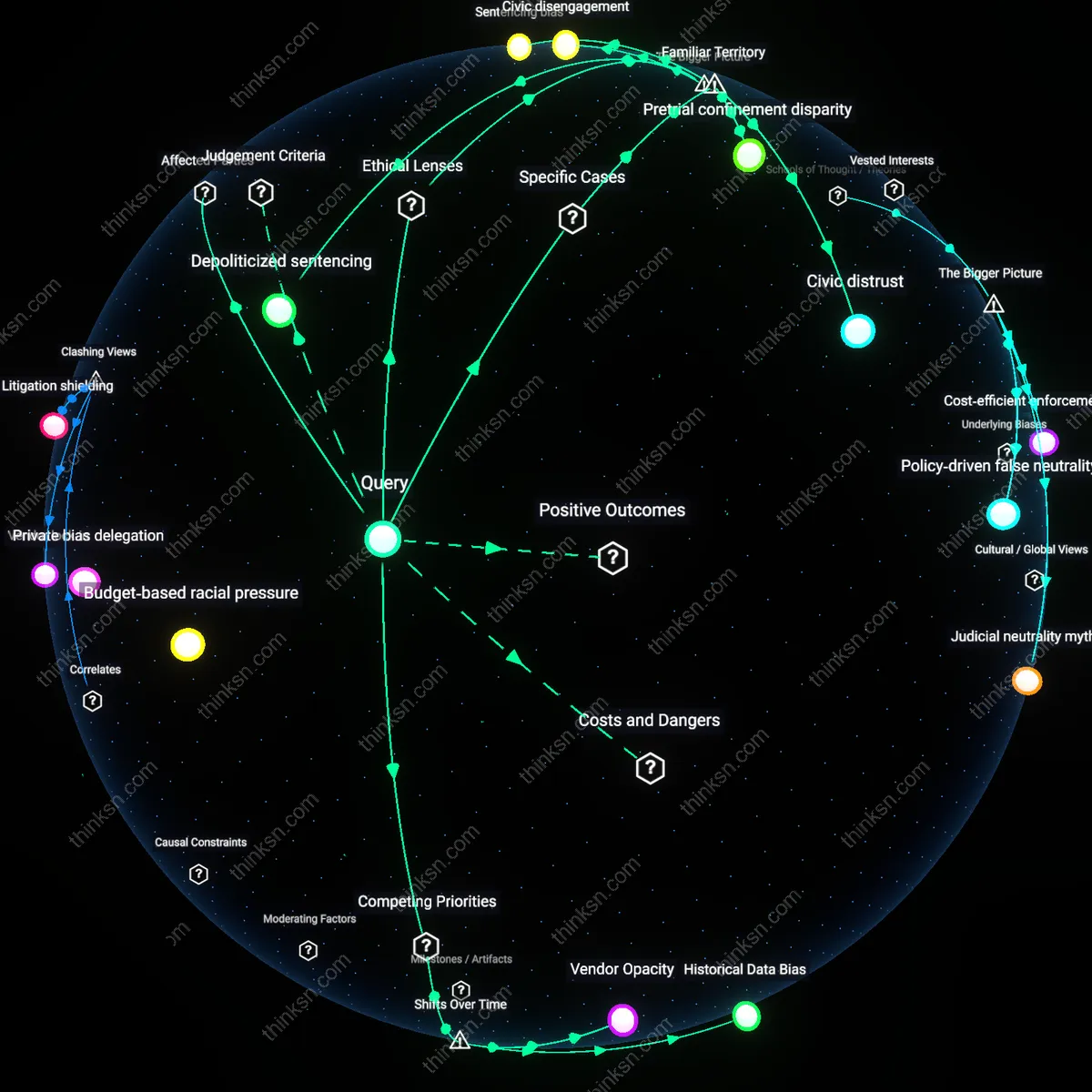

Crisis-Driven Data Legitimacy

Predictive policing gained institutional priority not through demonstrated fairness but through its alignment with crisis-response paradigms after the 2008 recession, which reframed public safety as a fiscal optimization challenge in cities like Chicago and Detroit that faced budget shortfalls and rising violent crime. In this context, algorithms were legitimized as fiscally rational tools for allocating shrinking police budgets, displacing community policing with targeted suppression even when outcomes were uneven. The underappreciated shift is that algorithmic deployment became less about preventing crime and more about demonstrating governmental action during visible crime spikes. Statistical correlation became political cover, and long-term surveillance infrastructure was normalized under the banner of temporary emergency utility.

Automated Marginalization

Predictive policing algorithms should not be prioritized because they reinforce cycles of over-surveillance in minority neighborhoods through feedback loops in historical arrest data. Law enforcement uses these algorithms to allocate patrols, but because past arrests reflect prior discriminatory practices rather than the actual distribution of crime, the system targets the same communities repeatedly, deepening distrust and justifying further intervention. This creates a self-fulfilling prophecy: increased policing produces more arrests, which in turn appear to validate the algorithm's biased predictions. Most people associate this dynamic with systemic racism in policing but underestimate its computational form.

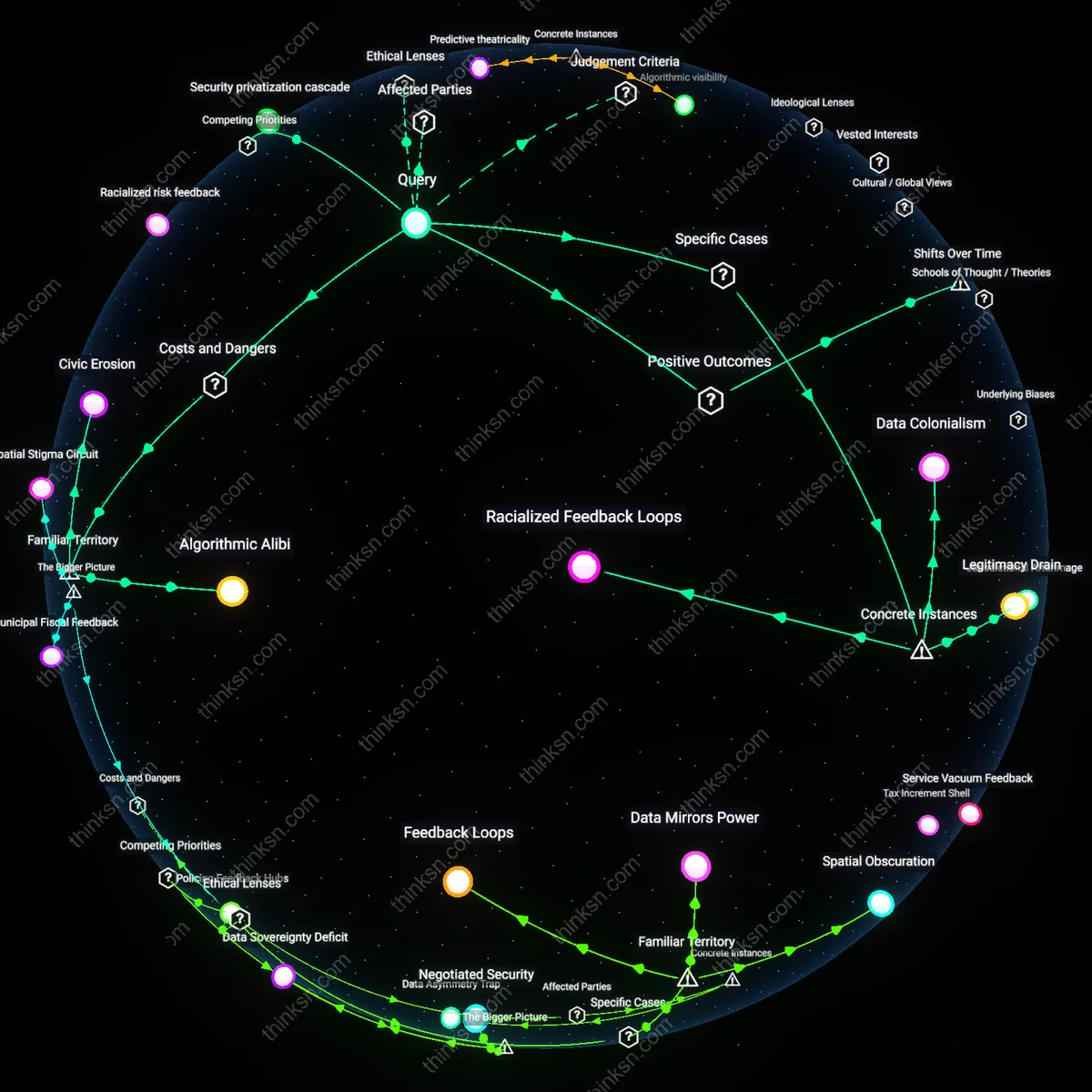

Algorithmic Alibi

Predictive policing should be deprioritized because it provides political cover for maintaining high policing budgets and practices under the guise of scientific neutrality. City officials and police departments use algorithmic tools to claim they are modernizing and reducing bias, but these systems often codify existing disparities while deflecting accountability onto 'objective' technology. This resonates with public skepticism toward 'techno-fixes' in governance, but it also adds a familiar layer of bureaucratic deflection, with machines serving as scapegoats, while the status quo is preserved.

Civic Erosion

Prioritizing predictive policing damages community cohesion by transforming minority neighborhoods into data extraction zones where surveillance is normalized and consent is absent. Residents, already subject to higher scrutiny, become less likely to engage with law enforcement or public institutions, weakening collective efficacy and cooperation during crises. While people commonly link policing to tension, they often overlook how algorithmic systems institutionalize disengagement, not through overt violence but through the quiet erosion of civic participation.

Racialized Risk Feedback

Predictive policing algorithms should not be prioritized because they amplify historical surveillance biases in urban law enforcement: over-patrolling in minority neighborhoods generates more arrest data, which the system then interprets as higher inherent risk, creating a self-reinforcing loop. This mechanism operates through data dependence. Algorithmic models rely on historical crime reports that reflect policing intensity rather than the actual distribution of crime, so the system misreads disparity as risk. The non-obvious insight is that the algorithm does not merely reflect bias but actively reconstructs it through real-time data feedback, institutionalizing racialized patterns under the guise of statistical neutrality.
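The loop described above can be illustrated with a deliberately simplified toy model. This is a sketch under stated assumptions, not a reconstruction of any vendor's or department's system: the function name, the two-district setup, the starting counts, and the rule that patrols go to whichever district has the most recorded arrests are all hypothetical. Both districts are given the same true crime rate, so any gap in the output comes from the allocation rule and the biased starting data alone.

```python
def simulate_hotspot_patrols(initial_arrests, true_crime_rate, rounds):
    """Toy feedback loop: patrols follow recorded arrests, and arrests
    can only be recorded where a patrol is present. All inputs are
    hypothetical illustration values, not real data."""
    arrests = list(initial_arrests)
    shares = []  # district 0's share of all recorded arrests, per round
    for _ in range(rounds):
        # The "model" flags the district with the most recorded arrests.
        target = max(range(len(arrests)), key=lambda i: arrests[i])
        # Crime in the unpatrolled district goes unobserved and unrecorded.
        arrests[target] += true_crime_rate[target]
        shares.append(arrests[0] / sum(arrests))
    return arrests, shares


# District 0 starts with more recorded arrests (60 vs. 40) because of
# heavier past patrolling; the true crime rate is identical (5 per round).
arrests, shares = simulate_hotspot_patrols([60, 40], [5, 5], rounds=20)
print(arrests, round(shares[-1], 2))  # prints: [160, 40] 0.8
```

In this sketch, district 0's share of recorded arrests rises from 60% to 80% after 20 rounds even though underlying crime is equal in both districts, because only patrolled crime is ever recorded. Real systems use softer allocation rules and noisier data, so the size of the effect here should not be read as an empirical estimate.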

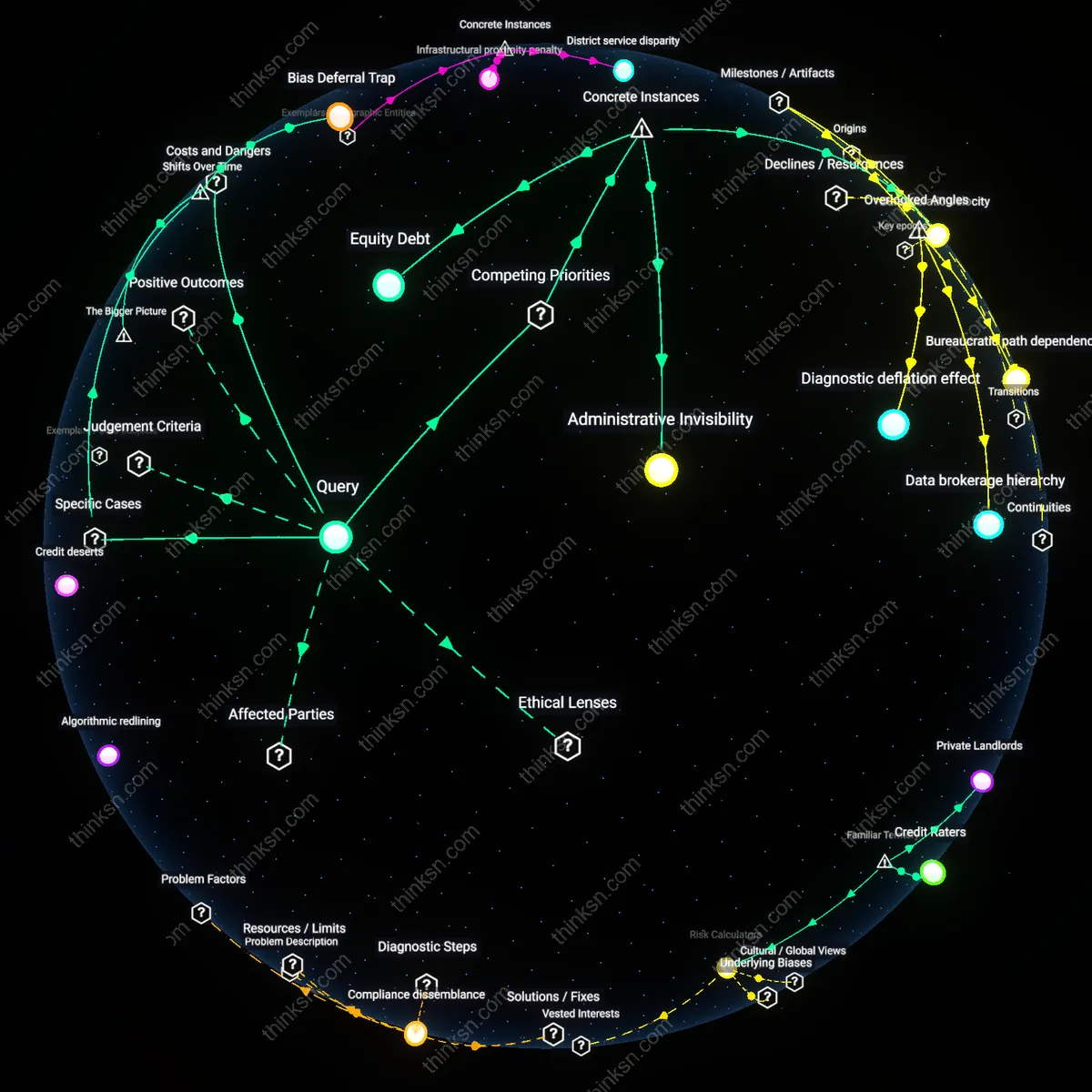

Security Privatization Cascade

Prioritizing predictive policing undermines community-based safety infrastructures by redirecting municipal budgets toward surveillance technologies, enabling private tech firms to shape public safety policy through contract-based influence over police departments in cities like Chicago and Los Angeles. As public resources shift from social services to algorithm-driven enforcement, the state effectively outsources risk management to proprietary systems whose efficacy and fairness are shielded by trade secrecy, weakening democratic oversight. The underappreciated consequence is that crime reduction becomes contingent on corporate-controlled tools, eroding public accountability and deepening dependency on vendors who profit from sustained urban insecurity.

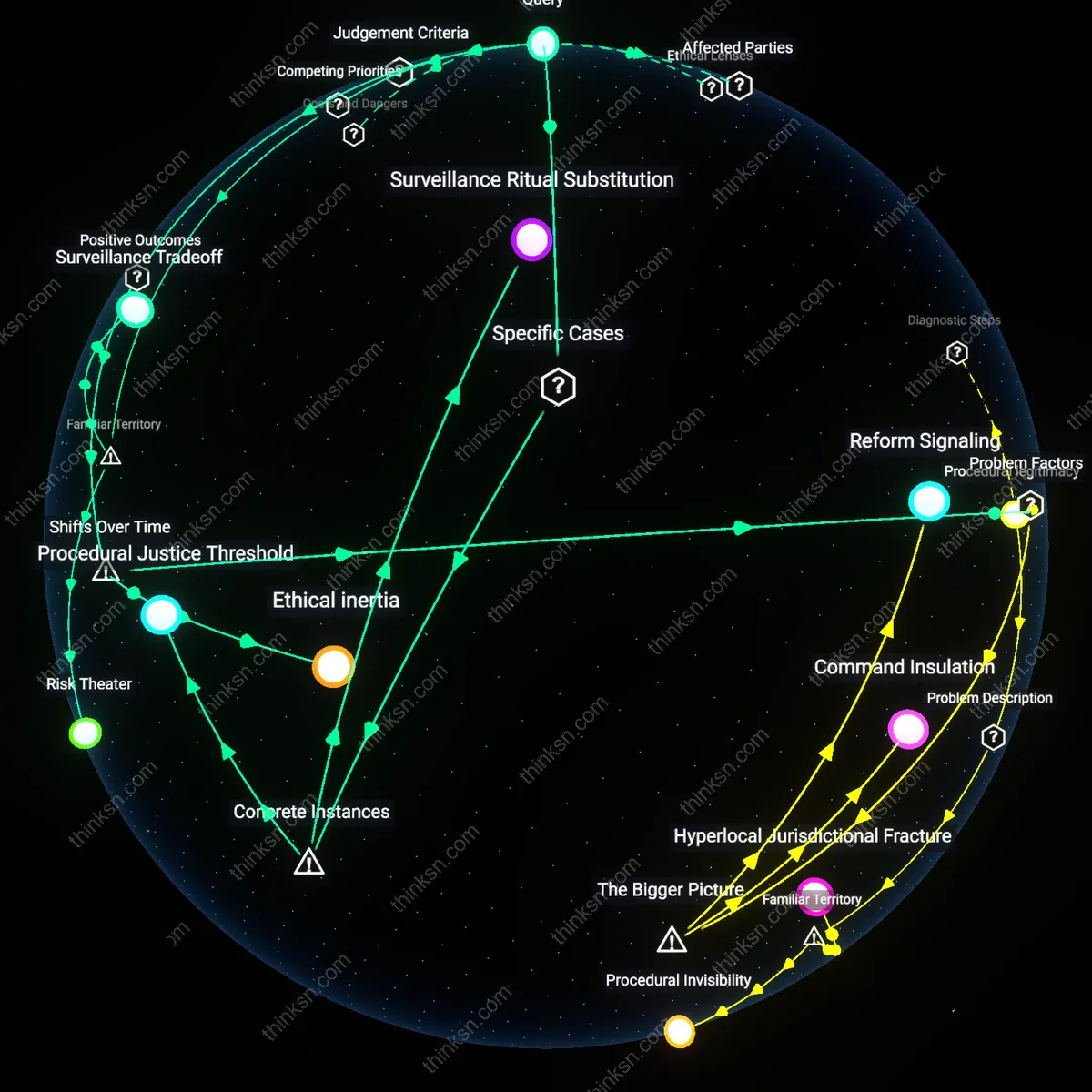

Legitimacy Erosion Threshold

Even if predictive policing reduces crime, it should not be prioritized because it risks crossing a threshold of community trust beyond which compliance with the law diminishes, particularly in historically overpoliced neighborhoods where residents interpret algorithmic targeting as institutionalized discrimination. This dynamic operates through legitimacy, meaning the willingness of communities to accept state authority as fair, which erodes when enforcement mechanisms appear procedurally unjust, regardless of how effective they are. The crucial insight is that short-term crime drops may trigger long-term instability if the perceived fairness of the system deteriorates, ultimately undermining the very cooperation necessary for sustainable public safety.

Racialized Feedback Loops

Predictive policing algorithms should not be prioritized because they amplify historical biases in places like Chicago, where the Strategic Subject List used arrest data from over-policed Black neighborhoods to flag individuals as 'likely offenders,' thereby justifying more surveillance in those same areas and reinforcing criminalization cycles without addressing root causes. The mechanism, which uses biased input data to produce 'risk' scores, creates a self-fulfilling prophecy in which over-surveillance begets more data that justifies further intervention. It shows that algorithmic systems can institutionalize racial disparities under a veneer of statistical neutrality.

Data Colonialism

Predictive policing should be rejected in contexts like Oakland, where the Domain Awareness System collected vast quantities of surveillance data primarily from minority communities through partnerships between police and federal agencies, treating urban neighborhoods as data extraction sites without community consent or benefit. This dynamic, in which data is harvested from marginalized populations to train systems that regulate them, mirrors colonial patterns of resource exploitation and exposes how algorithmic governance can reproduce structural domination by converting social vulnerability into predictive capital.

Legitimacy Drain

Predictive policing undermines long-term public safety in cities like Baltimore, where, after the 2015 Freddie Gray protests, increased reliance on algorithmic surveillance eroded trust between law enforcement and Black residents, who perceived these tools as weapons of control rather than protection. By prioritizing technological intervention over community engagement, the police weakened their own legitimacy. The case demonstrates that even if crime metrics temporarily decline, the sustained cooperation necessary for effective policing evaporates when communities feel targeted by opaque, unaccountable systems.