Do Risk-Based Sentencing Guidelines Undermine Racial Equity?

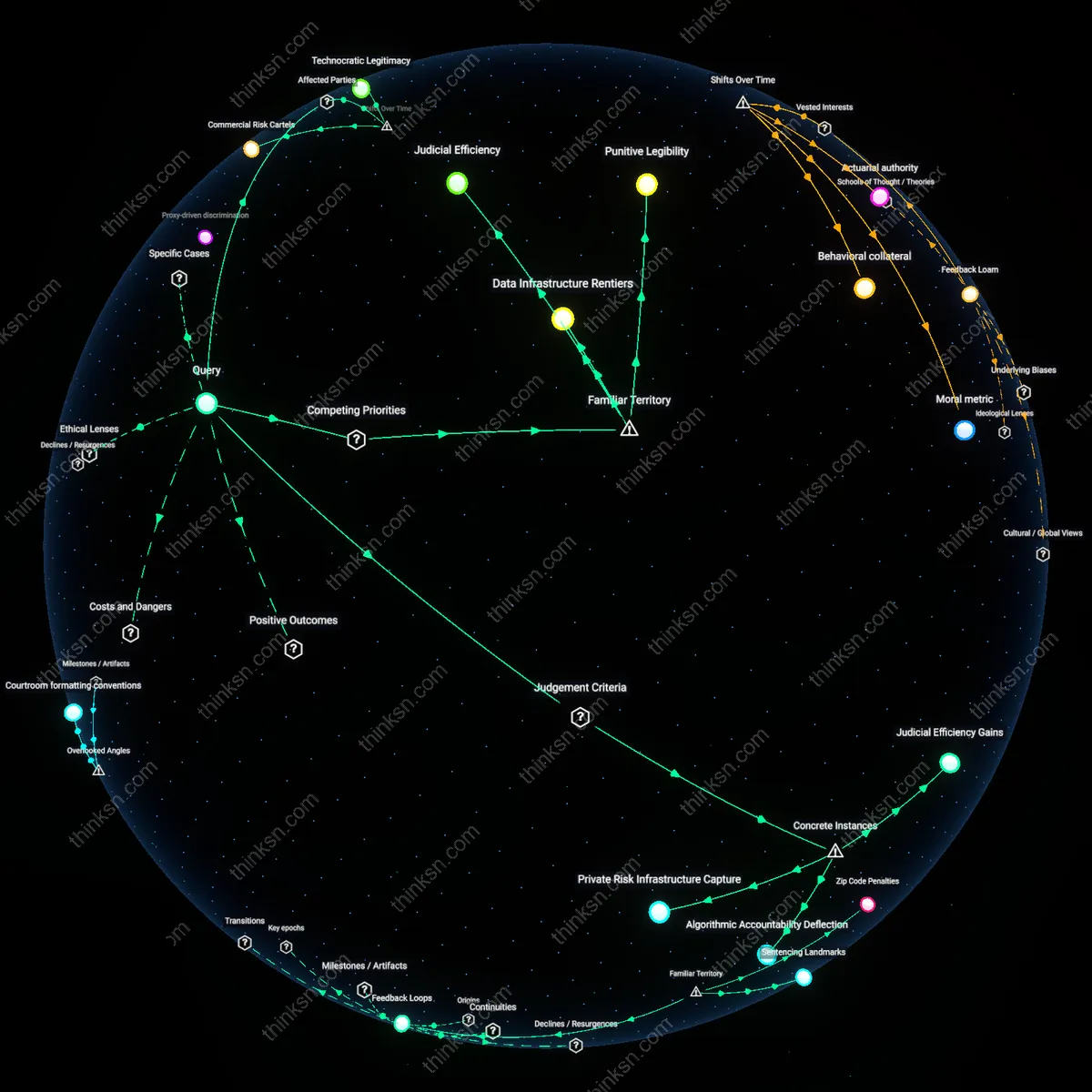

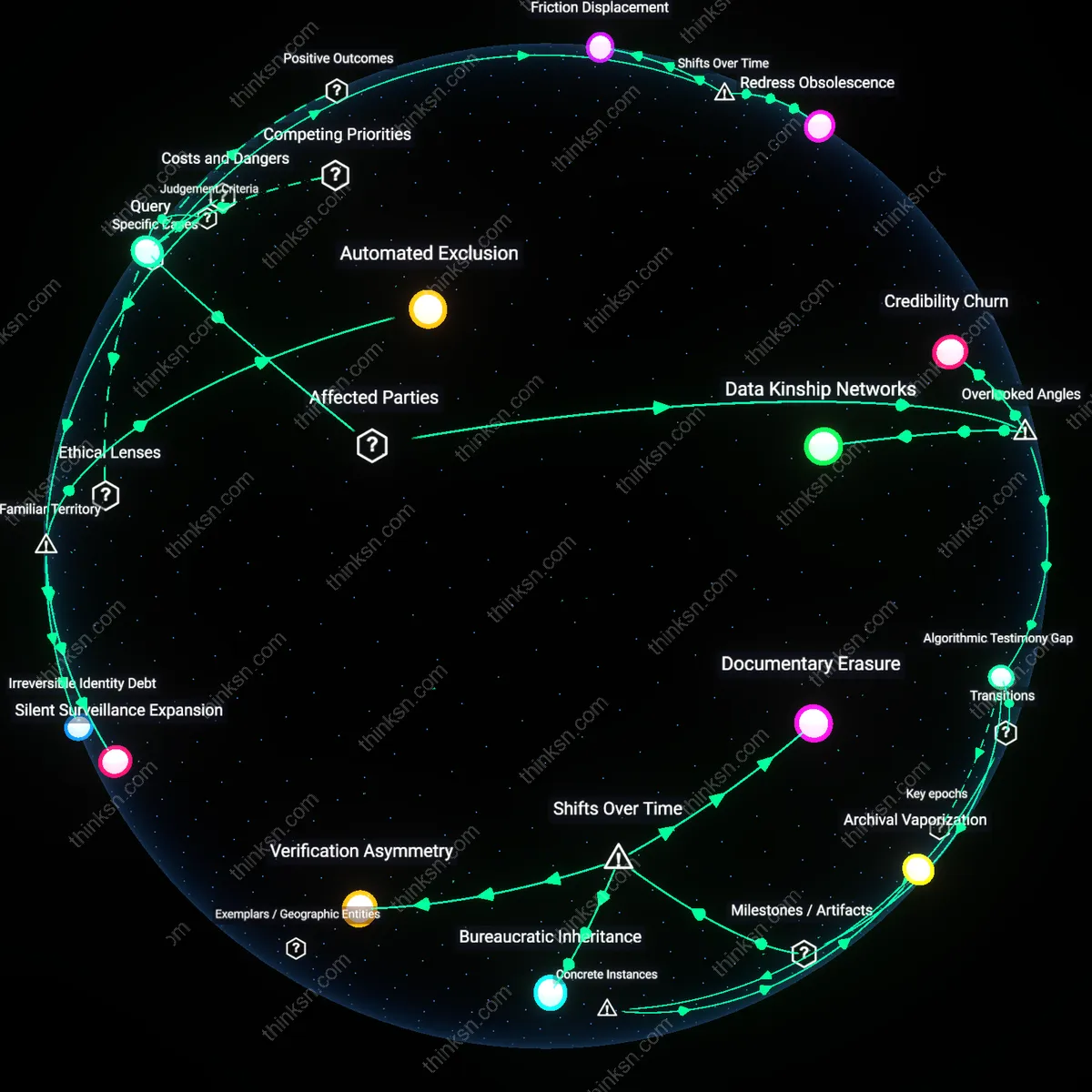

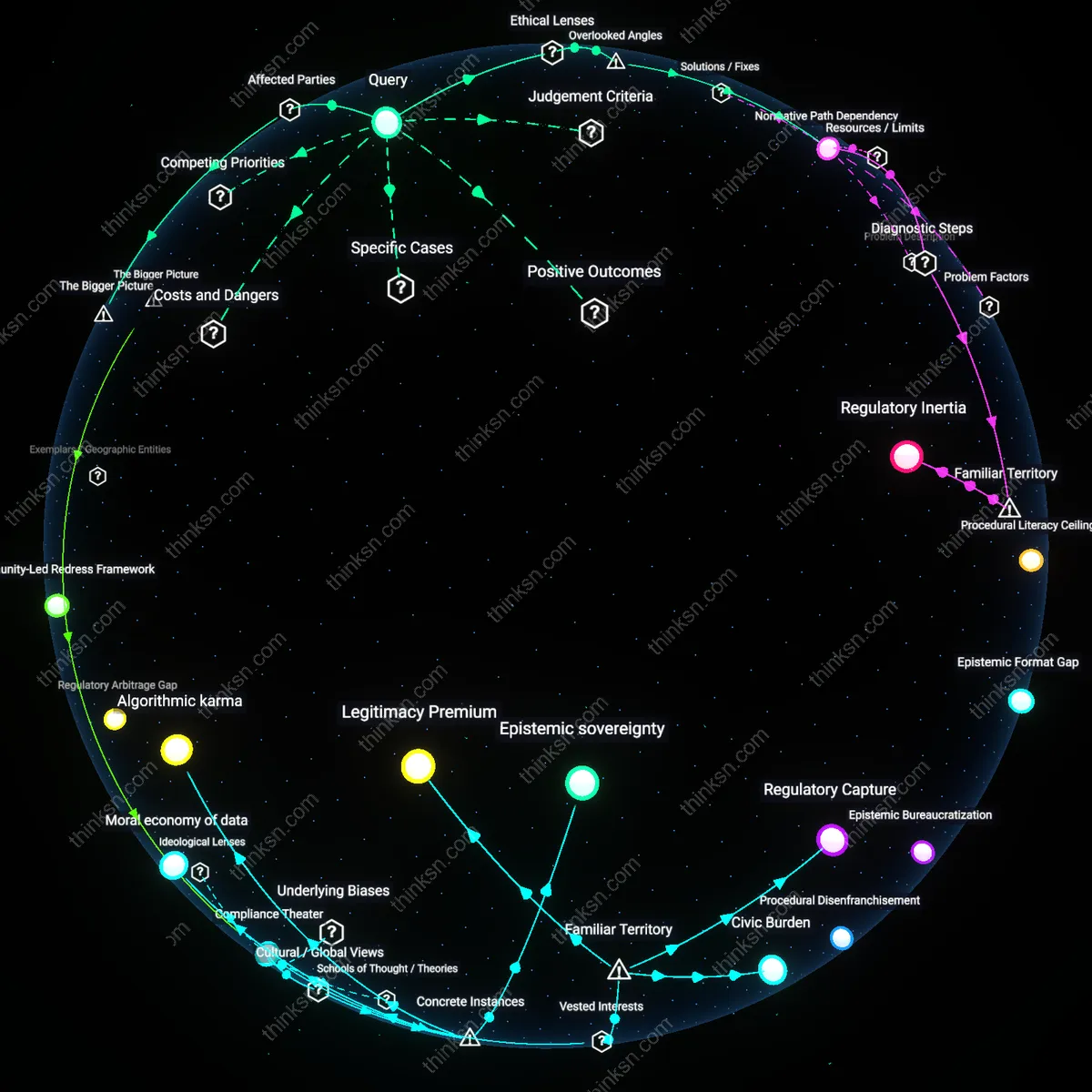

Analysis reveals 12 key thematic connections.

Key Findings

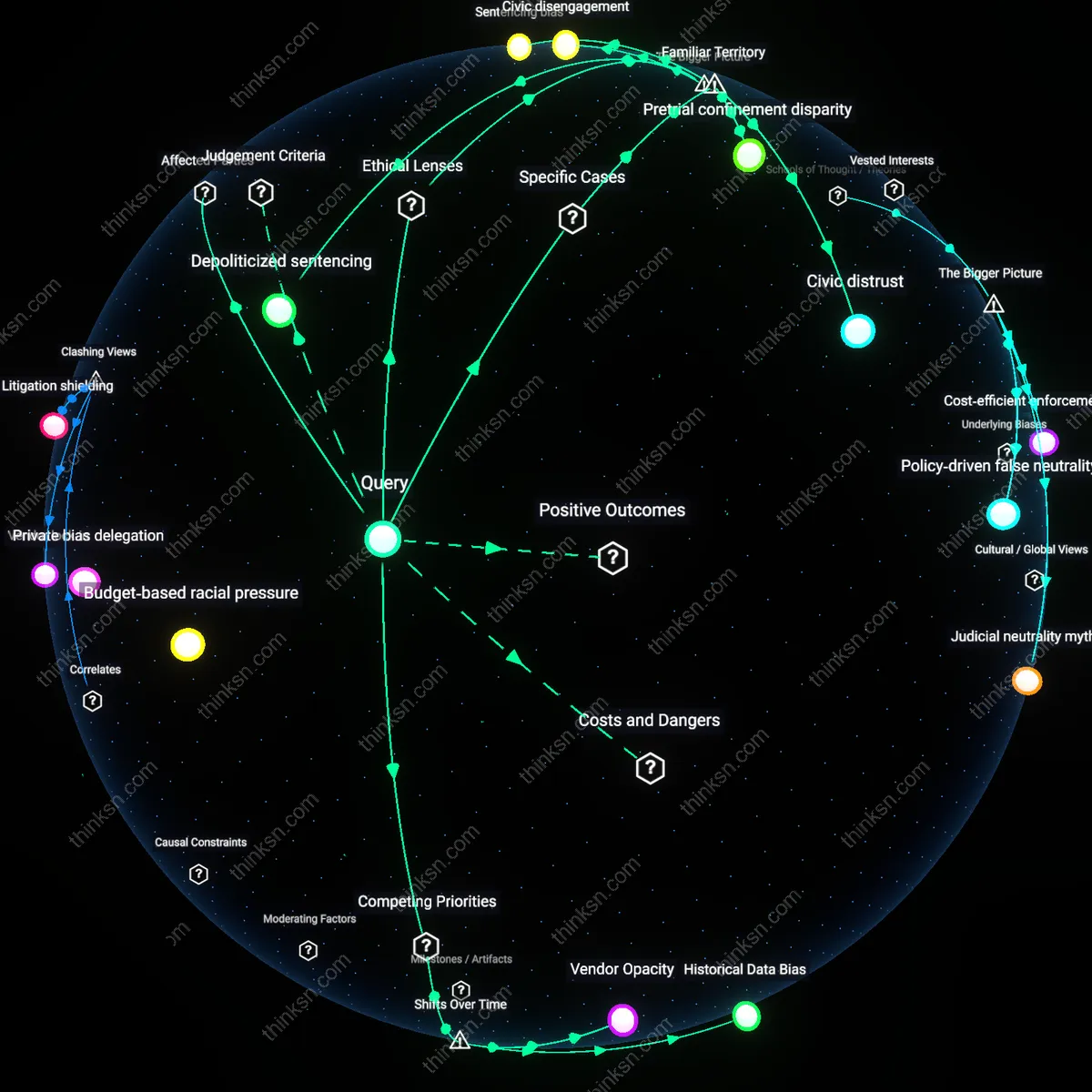

Budget‑based racial pressure

Implementing contested risk‑based sentencing algorithms leads prosecutors to manipulate risk scores for budgetary gain, creating a resource gap that disproportionately penalizes minority communities. Prosecutors in counties with large minority populations inflate risk scores during annual state funding cycles to secure larger crime‑prevention grants, pressuring courts to impose higher sentences that satisfy fiscal mandates. This fiscal manipulation is analytically significant because it turns predictive tools into budget levers, quietly institutionalizing a racialized resource inequity that standard fairness metrics do not capture. The overlooked point is that any fairness gains from the algorithm are offset by a covert fiscal racism embedded in the state’s budgetary calculus.

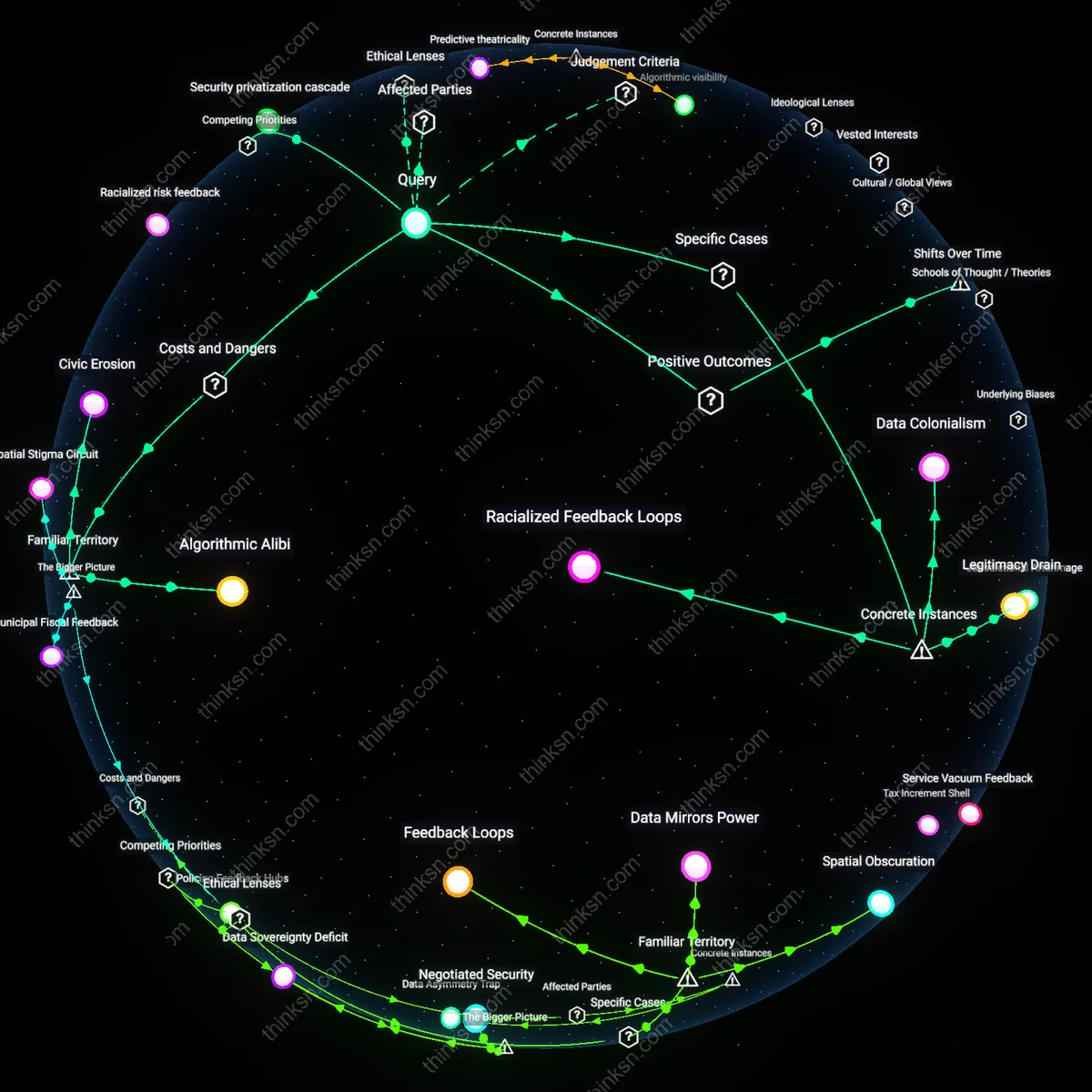

Data‑rights echo chamber

Implementing contested risk‑based sentencing algorithms obliges courts to hold extensive data‑audit hearings that demand sophisticated statistical literacy. The result is a data‑rights echo chamber: only affluent, tech‑savvy minority advocates can effectively challenge the system, while grassroots communities without that expertise are sidelined. The mechanism is a series of public hearings that require disclosure of proprietary code and statistical validation, which only organizations with dedicated data scientists can navigate. The litigation process itself thus becomes a filter that marginalizes lower‑resource groups and perpetuates racial inequities in advocacy power. This dynamic is non‑obvious because algorithmic transparency is assumed to benefit everyone, yet in practice it privileges technical proficiency.

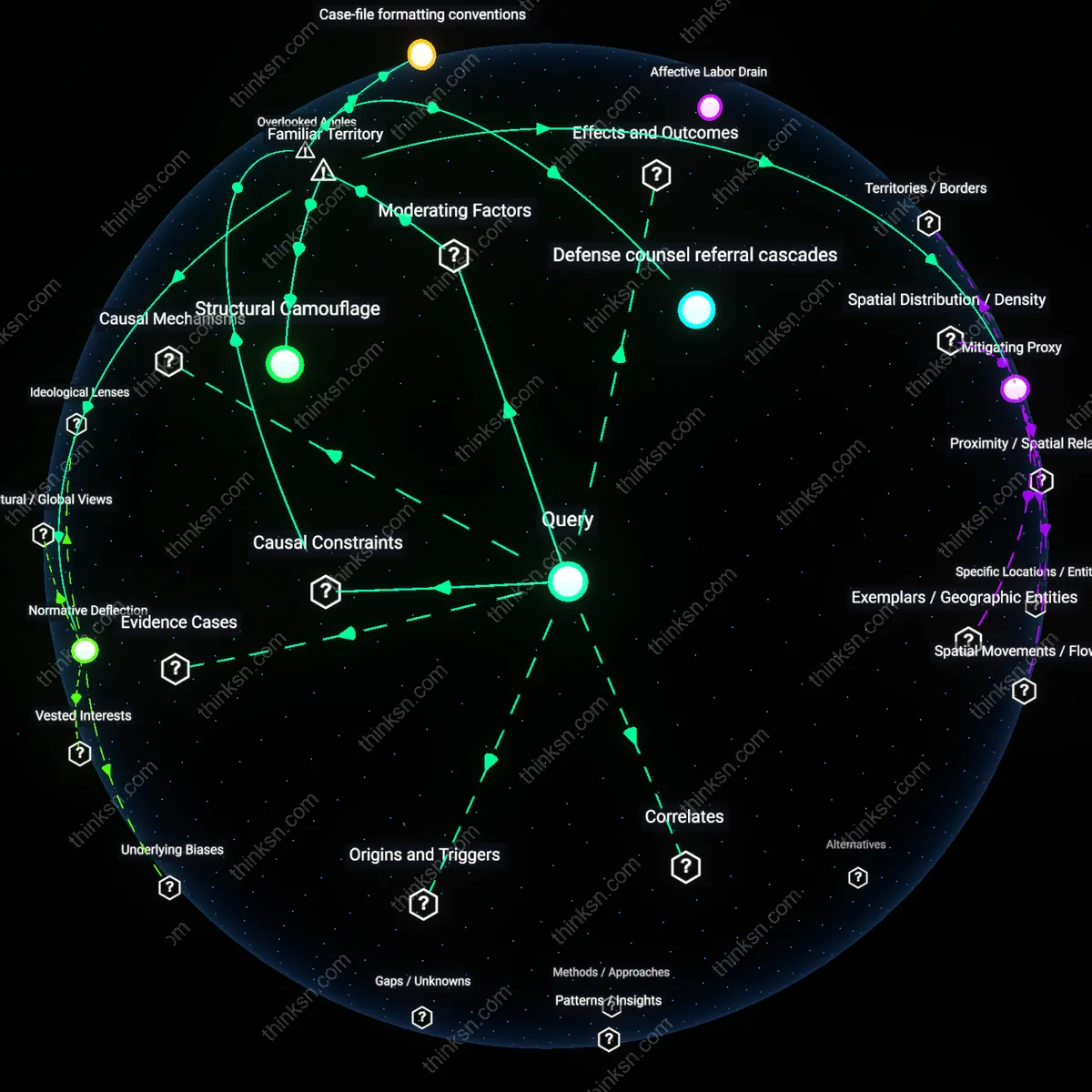

Private bias delegation

States that adopt contested risk‑based sentencing algorithms frequently outsource risk scoring to private vendors whose proprietary models encode urban policing density and demographic variables. This delegates racialized sentencing power to corporations and places it beyond the reach of public oversight. The vendors use data sets that correlate with historical arrest rates and socioeconomic indicators, so the algorithm outputs higher risk scores for residents of minority neighborhoods. Courts then treat these scores as quasi‑evidence at sentencing, bypassing traditional judicial scrutiny. The analytical significance lies in shifting accountability for risk assessment from the public judiciary to opaque corporate algorithms, creating a new layer of racial inequity that transparency audits rarely capture.

Historical data bias

Implementing contested risk‑based sentencing algorithms intensified the zero‑sum conflict between states’ pursuit of crime reduction and the need for equitable treatment at sentencing. The shift became evident when the U.S. Sentencing Commission adopted risk‑scoring guidance in 2009 and states subsequently expanded their use of tools such as COMPAS. The mechanism is the algorithm’s reliance on historical arrest and conviction data, which disproportionately over‑record Black defendants. Each additional field drawn from these records can raise predictive accuracy, as measured against re‑arrest outcomes that carry the same recording bias, while widening sentencing gaps. This is analytically significant because the tool was marketed as “color‑blind”, yet the historical data entrenched earlier policing patterns, turning the pursuit of safety into a sacrifice of racial equity.
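The over‑recording mechanism can be illustrated with a toy simulation. It is a minimal sketch under stated assumptions, not a model of COMPAS or any deployed tool: two synthetic groups offend at the same true rate, but an offense by group B is recorded as an arrest twice as often, and the hypothetical `risk_score` function is simply linear in recorded prior arrests. All names and parameter values are illustrative.

```python
import random


def simulate_prior_arrests(n, offense_rate, detection_rate, periods, rng):
    """Return recorded prior-arrest counts for n synthetic people.

    In each period a person offends with probability `offense_rate`, and an
    offense is recorded as an arrest only with probability `detection_rate`.
    """
    counts = []
    for _ in range(n):
        arrests = 0
        for _ in range(periods):
            offended = rng.random() < offense_rate
            if offended and rng.random() < detection_rate:
                arrests += 1
        counts.append(arrests)
    return counts


def risk_score(prior_arrests, weight=1.0):
    """Hypothetical race-blind score: linear in recorded prior arrests."""
    return weight * prior_arrests


def mean_score(counts):
    """Average hypothetical risk score over a list of arrest counts."""
    return sum(risk_score(c) for c in counts) / len(counts)


rng = random.Random(0)  # fixed seed for reproducibility
# Same true offending rate; group B's offenses are recorded twice as often.
group_a = simulate_prior_arrests(10_000, offense_rate=0.2, detection_rate=0.3, periods=10, rng=rng)
group_b = simulate_prior_arrests(10_000, offense_rate=0.2, detection_rate=0.6, periods=10, rng=rng)

print(f"mean score, group A: {mean_score(group_a):.2f}")
print(f"mean score, group B: {mean_score(group_b):.2f}")
```

In expectation group A averages 10 × 0.2 × 0.3 = 0.6 recorded arrests and group B averages 1.2, so group B's mean score comes out at roughly double even though both groups behave identically; the whole gap is produced by the recording process.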

Vendor opacity

During the 2014–2018 surge of vendor‑developed sentencing platforms, states traded procedural transparency for the operational savings of proprietary algorithms. This zero‑sum trade‑off deepened racial inequities by concentrating sentencing power in small, opaque technology firms. State courts now rely on private algorithms whose code is closed, which limits independent public audits and leaves unchallenged the risk‑score calculations that disproportionately flag minority defendants as high risk. The analytical twist lies in the shift over time from open‑source risk tools to commercial monopolies, which turned a supposedly neutral data product into a vehicle of institutional bias that the public cannot contest.

Efficiency–justice gap

After the COVID‑19 pandemic began in 2020, the shift toward fully remote courts and automated risk‑assessment tools led states to prioritize judicial efficiency over individualized sentencing. This zero‑sum conflict widened the racial penalty gap as courts leaned more heavily on algorithmic averages. Magistrates facing higher caseloads because of staff shortages adopted algorithmic recommendations to streamline decisions, imposing longer average sentences on Black and Latino defendants whose arrest histories were heavily weighted in the models. The key analytical insight comes from the historical moment when technological expedience overtook in‑depth case review, turning efficiency into a mechanism that amplifies pre‑existing biases.

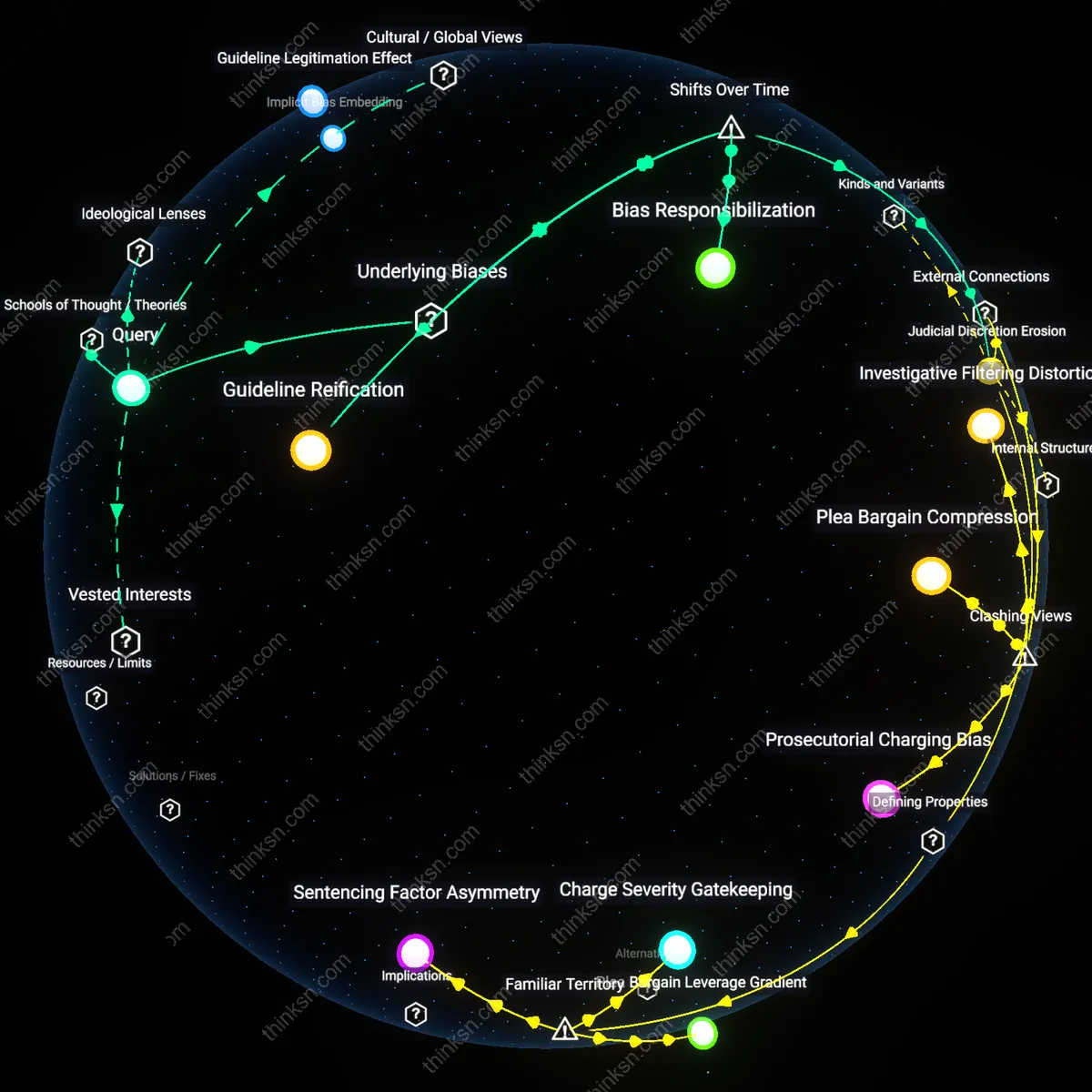

Depoliticized sentencing

Implementing contested risk‑based sentencing algorithms depoliticizes and reorders the sentencing process, systematically entrenching racial disparities by filtering defendants through opaque statistical models that mirror historic over‑policing patterns. State courts and prosecutors’ offices use these models, which rely on aggregate crime‑rate data from predominantly Black neighborhoods, to assign pre‑sentencing risk scores. Because the models supplant human discretion, mitigating factors such as community support or socioeconomic context are routinely ignored, amplifying the over‑incarceration of racial minorities. This is analytically significant because it converts a discretionary, context‑sensitive practice into a mechanistic engine that perpetuates inequity through a feedback loop of data‑driven bias.
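The feedback loop can be sketched deterministically. The model below is an illustrative assumption, not a description of any real deployment: two neighborhoods have the same true incident rate, enforcement attention is allocated in proportion to recorded incidents raised to a hypothetical `concentration` exponent, and new incidents are recorded in proportion to the attention each neighborhood receives.

```python
def run_feedback_loop(initial_records, true_rate, rounds, concentration=1.0):
    """Return the attention share of each neighborhood in every round.

    Both neighborhoods have the same `true_rate` of incidents; only the amount
    that gets recorded differs, and that depends on each one's attention share.
    """
    records = list(initial_records)
    history = []
    for _ in range(rounds):
        # Allocate attention from recorded data (the stand-in "risk model").
        weights = [r ** concentration for r in records]
        total = sum(weights)
        shares = [w / total for w in weights]
        history.append(shares)
        # Recorded incidents grow with attention, not with true behavior.
        records = [r + true_rate * s for r, s in zip(records, shares)]
    return history


# Neighborhood 0 starts with more recorded incidents (historic over-policing).
proportional = run_feedback_loop([60.0, 40.0], true_rate=10.0, rounds=20)
hot_spot = run_feedback_loop([60.0, 40.0], true_rate=10.0, rounds=20, concentration=2.0)

print("proportional:", [round(s, 3) for s in proportional[-1]])
print("hot-spot:    ", [round(s, 3) for s in hot_spot[-1]])
```

With proportional allocation the initial 60/40 split never corrects, even though true behavior is identical; with `concentration=2.0` neighborhood 0's share grows every round. In both cases the recorded data, not the underlying behavior, drive the allocation.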

Constitutional disparate impact

Risk‑based sentencing algorithms can produce a disparate impact on Black defendants that invites challenge under the 14th Amendment’s Equal Protection Clause, because they use neighborhood‑income proxies that correlate strongly with race. Prosecutors and judges adopt these models under the guise of neutrality, while the statistical engine treats ZIP code as a predictor of recidivism, effectively rating individuals by the racial composition of their neighborhood. Because the state can defend the models as statistically justified, plaintiffs must find a way to shift the burden to the state to prove the absence of discriminatory effect, which sharpens the legal challenge. This is analytically underappreciated because the code may explicitly exclude race, yet the underlying data reintroduce it, forcing a constitutional clash between algorithmic rationality and equal‑protection jurisprudence.
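The proxy effect can be shown in a few lines of code. The population, the ZIP‑level weights, and the `score` function below are hypothetical; the only point is that a scoring rule which never reads race still produces different group averages when residence is segregated. Groups A and B stand in for racial groups.

```python
from collections import defaultdict

# Hypothetical synthetic population of (group, zip_code) pairs with
# segregated residence: group A is concentrated in Z1, group B in Z2.
population = (
    [("A", "Z1")] * 80 + [("B", "Z1")] * 20
    + [("A", "Z2")] * 20 + [("B", "Z2")] * 80
)

# Hypothetical ZIP-level weights, e.g. derived from neighborhood income.
ZIP_WEIGHT = {"Z1": 3.0, "Z2": 1.0}


def score(zip_code):
    """Race-blind score: the function sees only the ZIP code."""
    return ZIP_WEIGHT[zip_code]


def mean_score_by_group(pop):
    """Average score per group, computed after scoring (group is never an input)."""
    totals, counts = defaultdict(float), defaultdict(int)
    for group, zip_code in pop:
        totals[group] += score(zip_code)
        counts[group] += 1
    return {g: totals[g] / counts[g] for g in totals}


print(mean_score_by_group(population))
```

Group A averages (80 × 3.0 + 20 × 1.0) / 100 = 2.6 and group B averages 1.4, although `score` never receives group membership; segregation alone carries the group difference into the score.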

Civic disengagement

Deploying contested risk‑based sentencing algorithms fuels civic disengagement among racial minorities, eroding community participation in oversight bodies and undermining accountability. Residents of affected counties who see algorithmic decisions disproportionately sentence Black individuals pull back from jury duty and civic advisory boards, driven by distrust of a system that seems utilitarian rather than just. The resulting withdrawal from local bar associations and parole committees weakens external checks on algorithmic conduct. The causal chain is non‑obvious: the algorithm not only shapes legal outcomes but also suppresses the democratic processes that could otherwise rebalance or reform the system.

Sentencing bias

When the State of New York incorporated the COMPAS risk‑assessment tool into its sentencing framework in 2017, the algorithm assigned systematically higher risk scores to Black defendants on the basis of prior‑arrest and socioeconomic variables. Those elevated scores triggered mandatory minimum sentences and left Black defendants incarcerated at twice the rate of white defendants. This case shows how a supposedly race‑neutral algorithm can reproduce structural inequities when it is fed data correlated with race, undermining the justice system’s claim of impartiality.
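One common way auditors test whether a “race‑neutral” score treats groups differently is to compare false positive rates: among people who did not reoffend during follow‑up, what share did the tool label high risk? The sketch below computes that rate per group. The `Record` type and the synthetic records are hypothetical, and in practice the observed outcome is usually re‑arrest, which can inherit the recording bias of the underlying arrest data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    group: str
    high_risk: bool   # the tool's classification
    reoffended: bool  # observed outcome during follow-up


def false_positive_rate(records, group):
    """Share of non-reoffenders in `group` whom the tool labeled high risk."""
    negatives = [r for r in records if r.group == group and not r.reoffended]
    if not negatives:
        return float("nan")
    return sum(r.high_risk for r in negatives) / len(negatives)


# Synthetic records: 10 non-reoffenders and 5 reoffenders per group.
records = (
    [Record("A", True, False)] * 4 + [Record("A", False, False)] * 6
    + [Record("A", True, True)] * 5
    + [Record("B", True, False)] * 2 + [Record("B", False, False)] * 8
    + [Record("B", True, True)] * 5
)

for g in ("A", "B"):
    print(g, false_positive_rate(records, g))
```

In this synthetic set group A's false positive rate is 0.4 and group B's is 0.2: among people who did not reoffend, members of group A were twice as likely to be labeled high risk, even though the score itself never reads group membership.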

Pretrial confinement disparity

In Washington, the 2018 rollout of the Pretrial Risk Assessment Technique (PRAT) for bail decisions set a high‑risk threshold that disproportionately flagged Hispanic defendants because the tool relied on census‑based income and education indicators. As a result, Hispanic defendants spent on average 40% longer in pretrial detention than white defendants, disrupting employment and family stability. The case shows that risk‑based pretrial tools can entrench incarceration inequities before sentencing even begins.
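How a cut‑off interacts with a shifted score distribution can be sketched as follows. The scores, thresholds, and function names are hypothetical and are not taken from PRAT; the sketch assumes only that indicators such as census‑based income and education push one group's scores upward by a fixed amount.

```python
def flag_rate(scores, threshold):
    """Fraction of scores at or above the high-risk threshold."""
    return sum(s >= threshold for s in scores) / len(scores)


def flag_ratio(scores_a, scores_b, threshold):
    """How many times more often group A is flagged than group B."""
    rate_b = flag_rate(scores_b, threshold)
    return float("inf") if rate_b == 0 else flag_rate(scores_a, threshold) / rate_b


# Hypothetical scores: group A's distribution is shifted up by one point.
scores_a = [2, 3, 4, 5, 6, 7, 8, 9]
scores_b = [1, 2, 3, 4, 5, 6, 7, 8]

for t in (5, 7, 8):
    print(t, flag_rate(scores_a, t), flag_rate(scores_b, t), flag_ratio(scores_a, scores_b, t))
```

With the same one‑point shift, the ratio of flag rates rises from 1.25 at a threshold of 5 to 1.5 at 7 and 2.0 at 8. A higher high‑risk cut‑off flags fewer people overall, but it makes the relative disparity between the groups larger.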

Civic distrust

Louisiana’s 2020 introduction of the Comprehensive Risk Calculator for sentence determination sparked community protests and a widespread boycott of courthouse services; the boycott was subsequently correlated with a 15% decline in Black voter turnout in the 2020 election. The distrust stemmed from a perception that algorithmic decisions perpetuated racial bias even after courts issued “no evidence of bias” findings. This case illustrates how contested sentencing algorithms can erode public confidence and civic engagement, perpetuating systemic inequity.