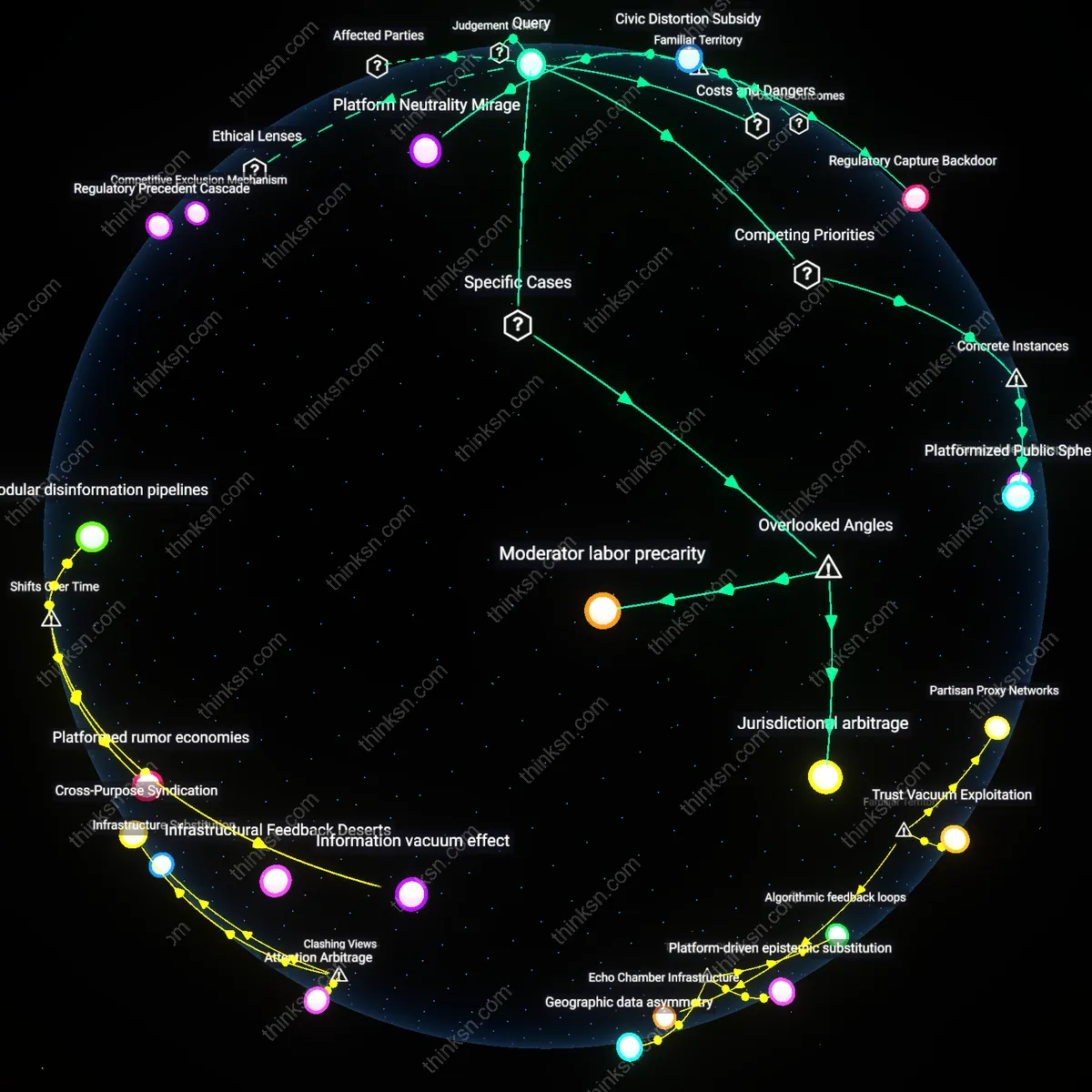

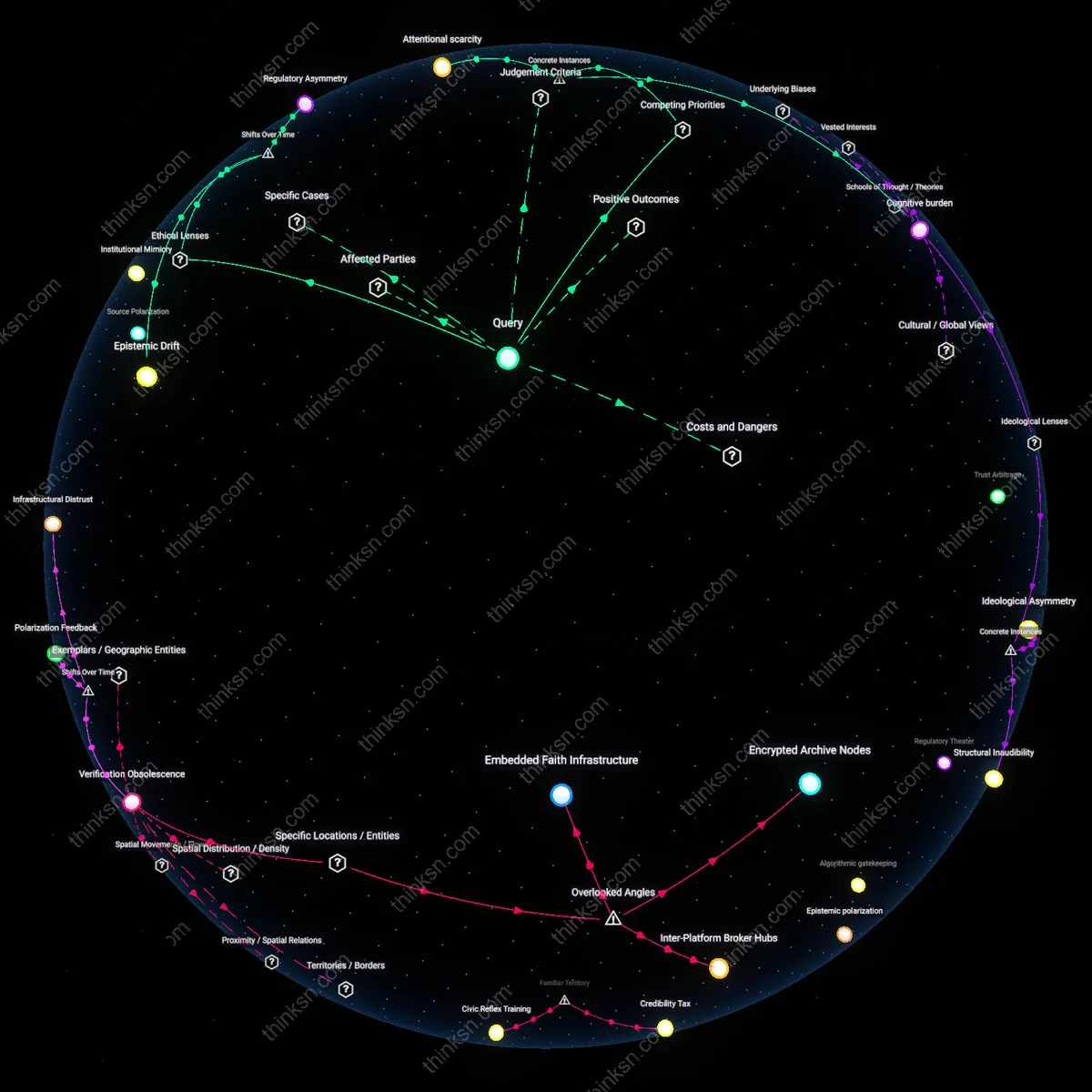

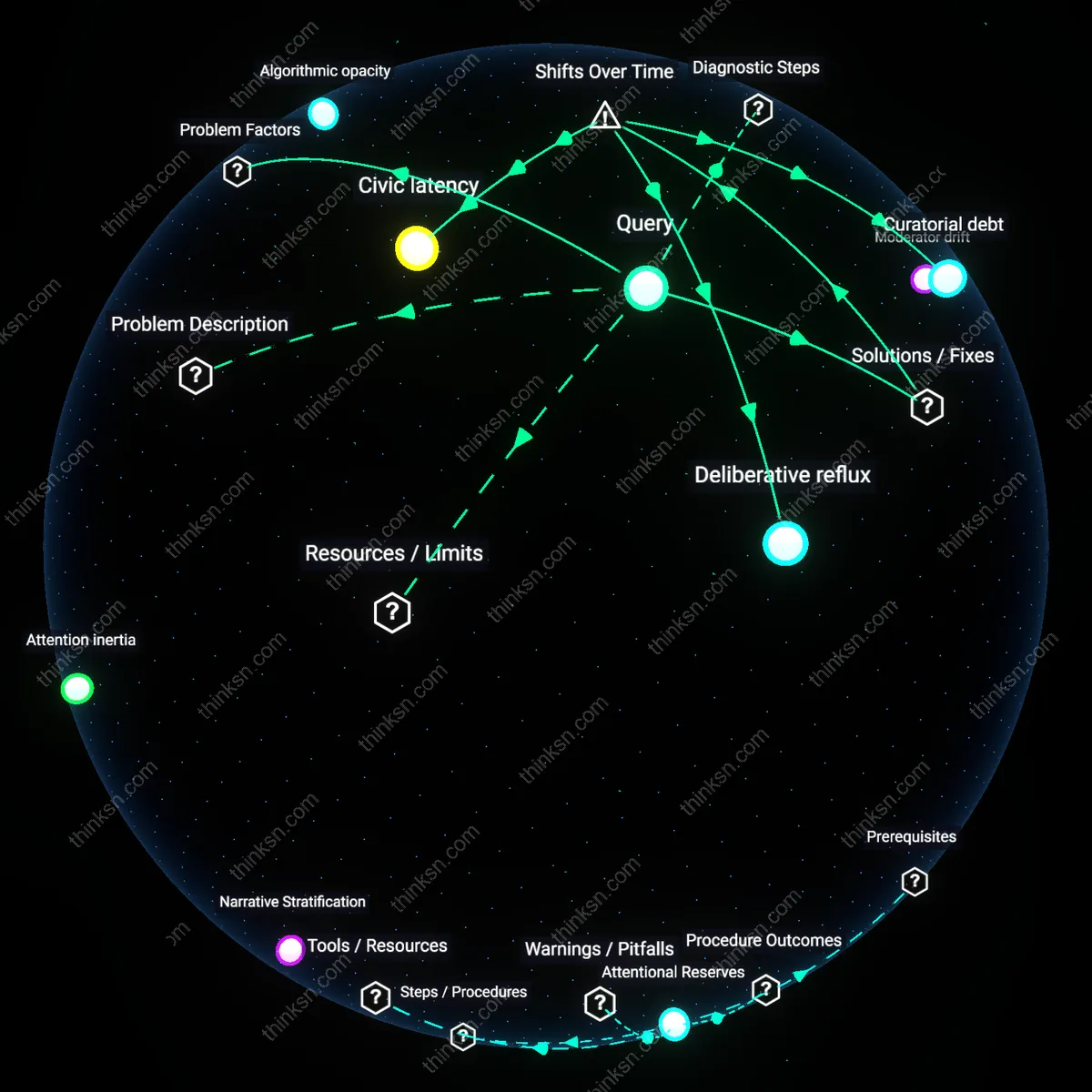

Does Ad Data Monopoly Fuel Political Divides?

Analysis reveals 6 key thematic connections.

Key Findings

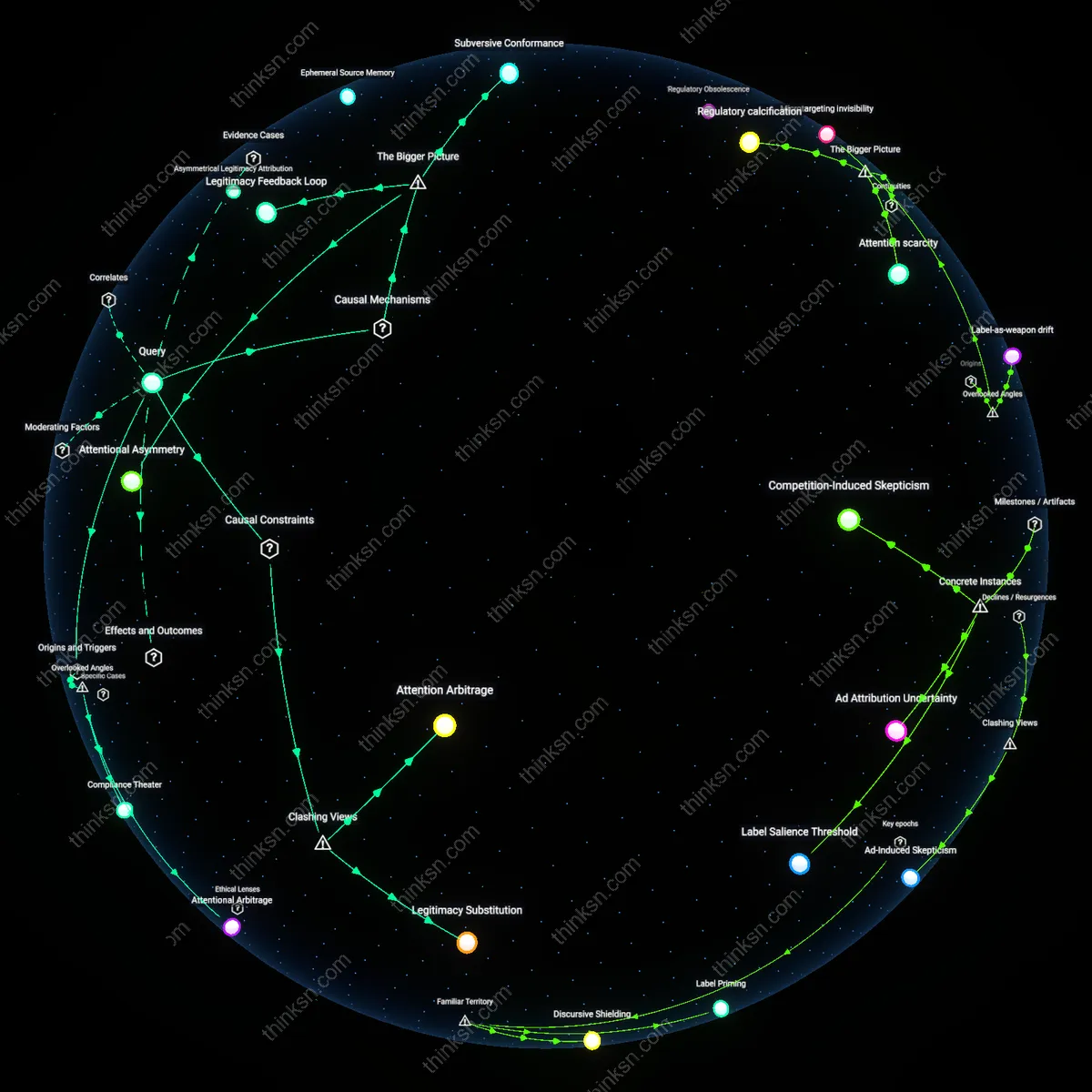

Algorithmic Neutrality Paradox

Concentrated advertising data increases political polarization not because platforms push extreme views, but because regulatory pressure to appear neutral gives platforms an incentive to amplify controversial content by default. Algorithmic systems optimized for engagement treat neutrality as passively surfacing divisive material without correction, which intensifies ideological segregation in places like Facebook’s News Feed in swing states during election cycles. This mechanism is non-obvious because the standard critique assumes active bias, whereas the real driver is a defensive operational posture that avoids editorial judgment and, in effect, rewards outrage under the guise of fairness.

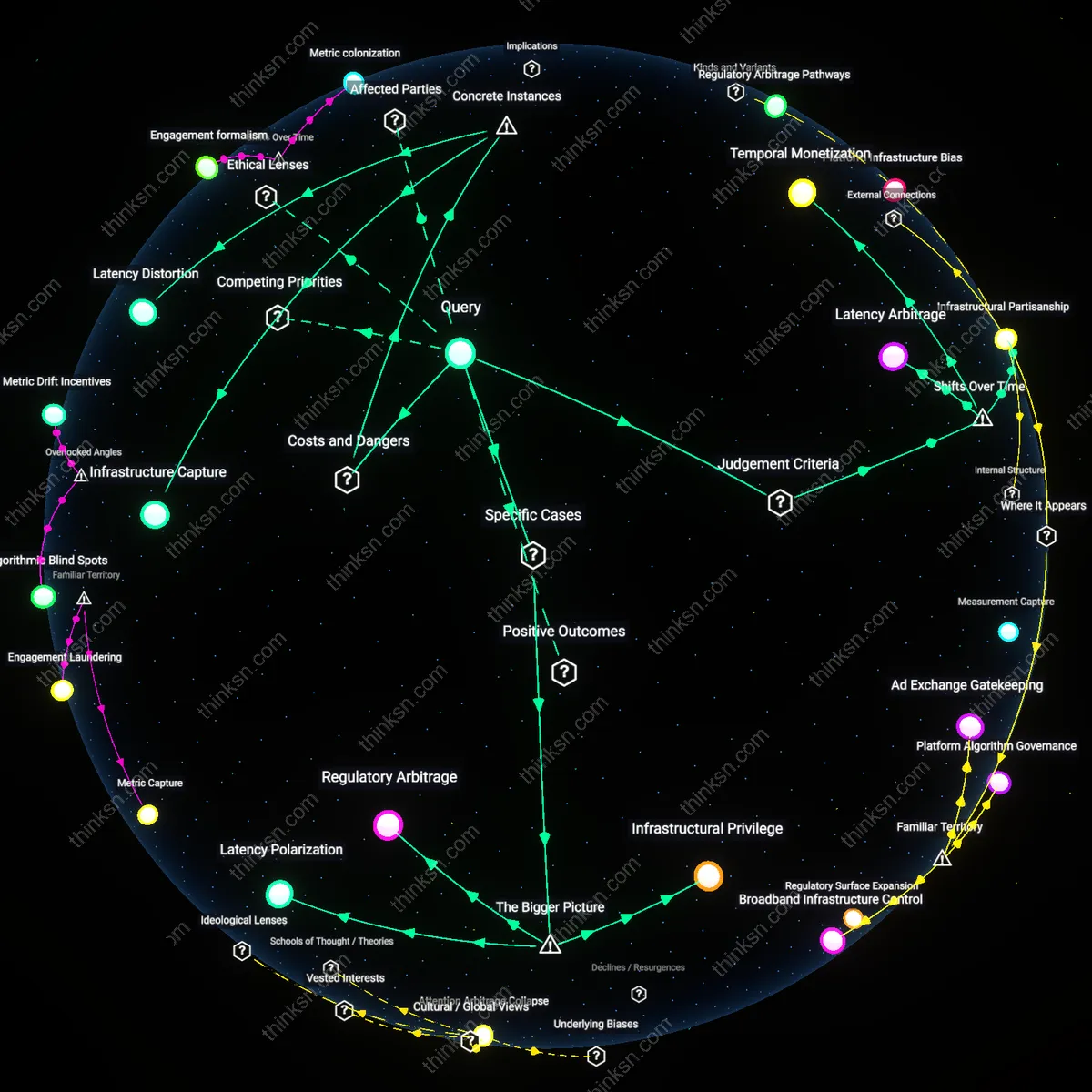

Regulatory Arbitrage Gradient

Sectoral regulation reduces polarization only when it imposes uniform constraints across digital and traditional media. When it does not, it creates a gradient along which political advertisers migrate from regulated TV broadcasting to unregulated social media microtargeting, as seen in the 2020 U.S. election when PACs shifted dark money into Instagram story ads. This underappreciated outcome shows that uneven regulation does not suppress manipulative advertising but redirects it into less visible, more personalized channels, making polarization less detectable but more behaviorally entrenched.

Audience Fracture Feedback Loop

Concentrated advertising data polarizes not by changing beliefs but by enabling campaigns to speak exclusively to politically volatile subgroups, such as Latino voters in Arizona receiving conflicting Spanish and English ads from the same candidate. This deepens fractures that the platform then interprets as organic divergence, which reinforces segmentation in future targeting. The feedback loop is invisible to conventional analyses that treat polarization as belief change rather than as a structural outcome of fragmented messaging sustained by data infrastructure.

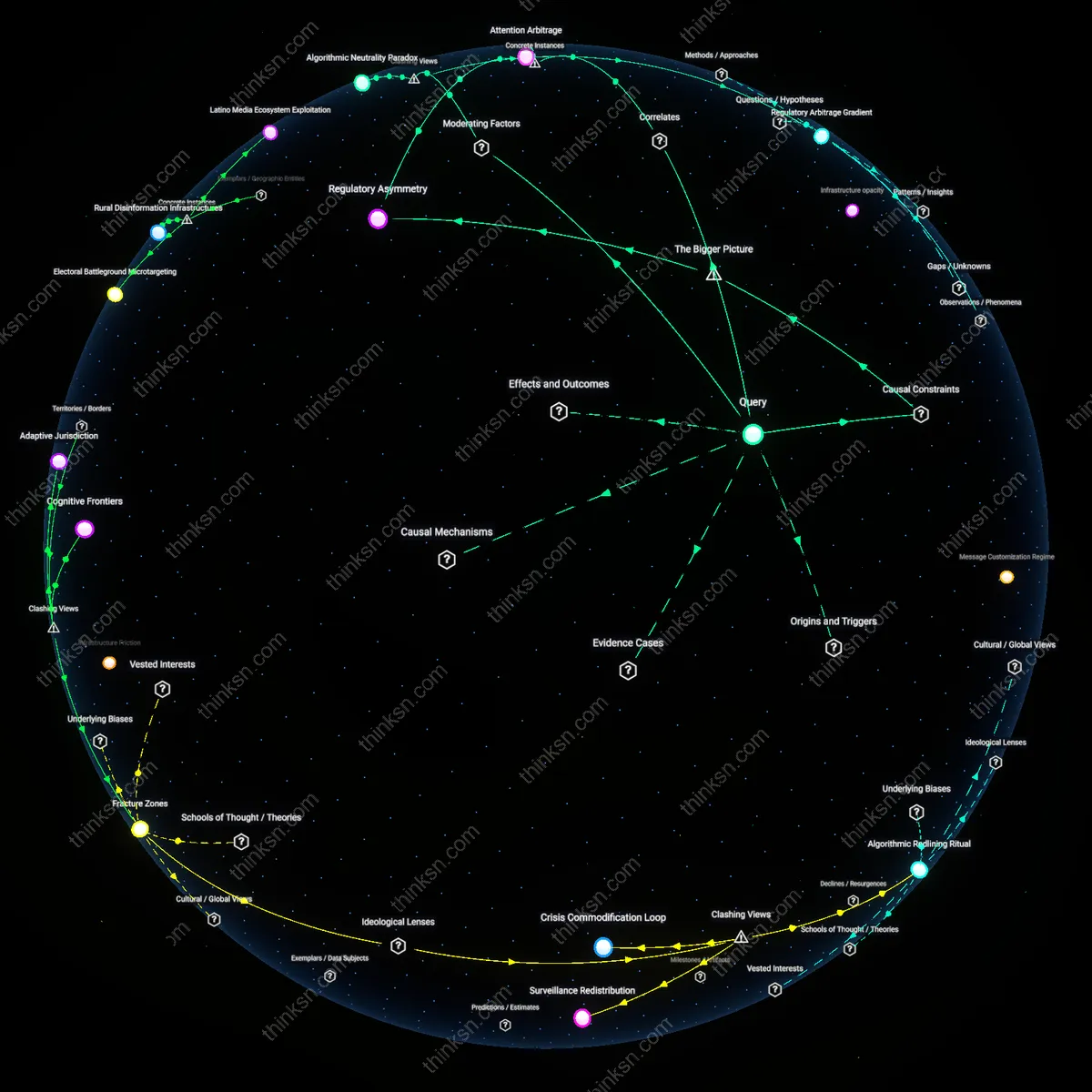

Attention Arbitrage

Facebook’s targeted advertising infrastructure during the 2016 U.S. presidential election enabled political actors to isolate and exploit ideologically extreme segments by delivering contradictory messages to opposing groups, thereby amplifying in-group reinforcement and out-group distrust. This mechanism operated through microtargeting algorithms that prioritized engagement over content coherence, allowing divergent narratives to coexist without public scrutiny. The significance lies in how platform architecture, rather than message content, enables polarization by economically rewarding divisiveness, a dynamic obscured when the focus is only on misinformation. The case shows that polarization is driven not solely by false beliefs but also by the profitable fragmentation of audience attention.

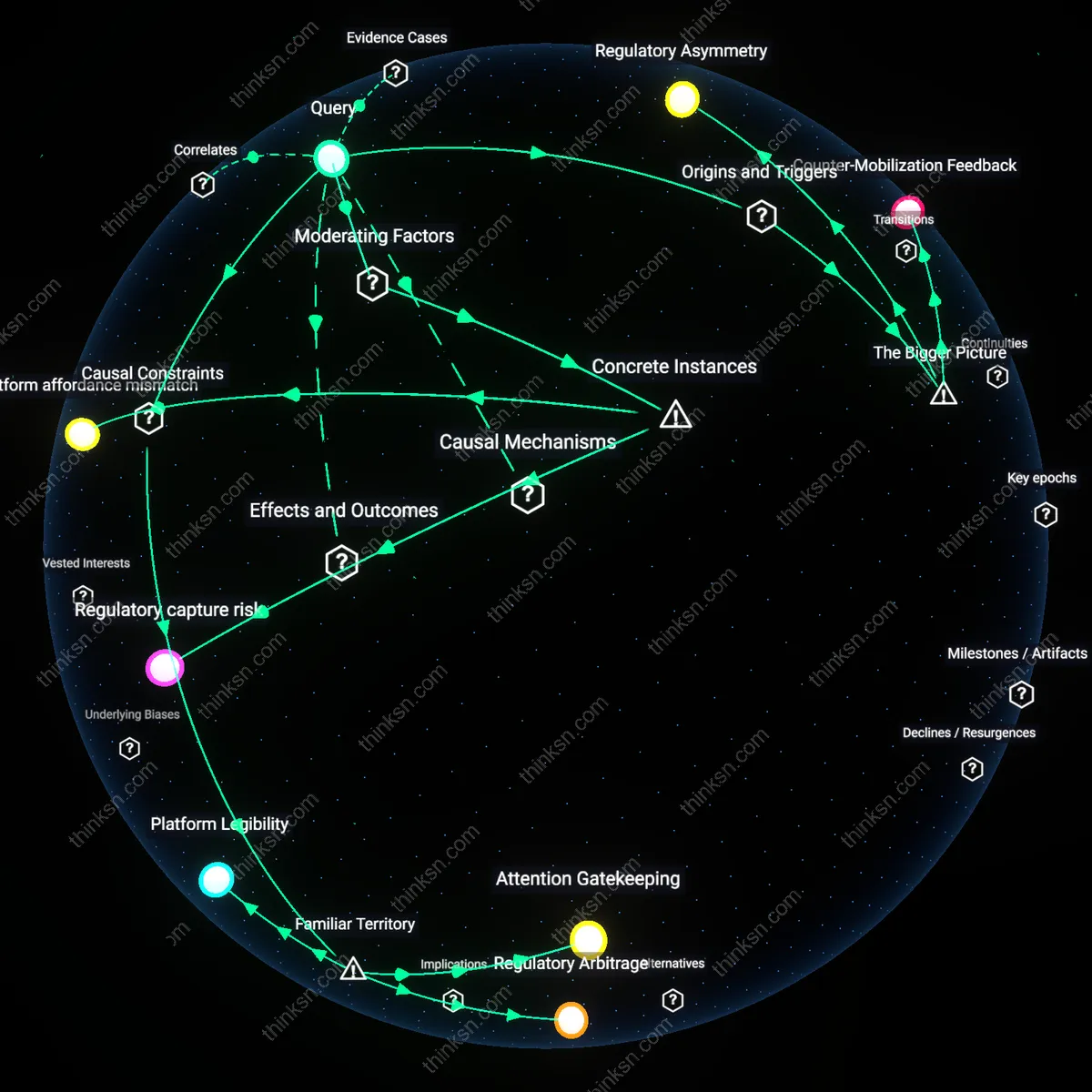

Regulatory Asymmetry

Cambridge Analytica’s reported use of psychographic profiling on Facebook in the Brexit referendum campaign correlated with increased regional affective polarization, not because the models were uniquely accurate, but because they exposed a gap between data-intensive campaign practices and outdated electoral communication regulations. The U.K.’s Electoral Commission lacked jurisdiction over platform data flows, allowing targeted messaging to evade traditional campaign finance and transparency rules. This misalignment enabled actors to scale divisive content without equivalent accountability, which suggests that regulation focused on disclosures rather than data infrastructure perpetuates an enforcement lag. The case underscores that sectoral rules fail not through absence but through temporal and technical mismatch with digital advertising systems.

Feedback Entrenchment

In India’s 2019 general election, the BJP’s deployment of WhatsApp broadcast lists to disseminate Hindu nationalist content in rural Uttar Pradesh correlated with increased communal hostility between religious groups, not because the messages were novel, but because end-to-end encrypted messaging that is hard to trace back to its original sender created echo networks that reinforced preexisting tensions through repeated forwarding. Since messages circulated beyond public view, fact-checking and regulatory oversight could not interrupt the feedback cycle, allowing emotionally charged content to deepen identity divisions. This illustrates how data concentration on semi-closed platforms entrenches polarization not through reach but through unobservable repetition, a mechanism distinct from public disinformation campaigns.