Does Relying on AI Forecasting Risk Corporate Myopia?

Analysis reveals 10 key thematic connections.

Key Findings

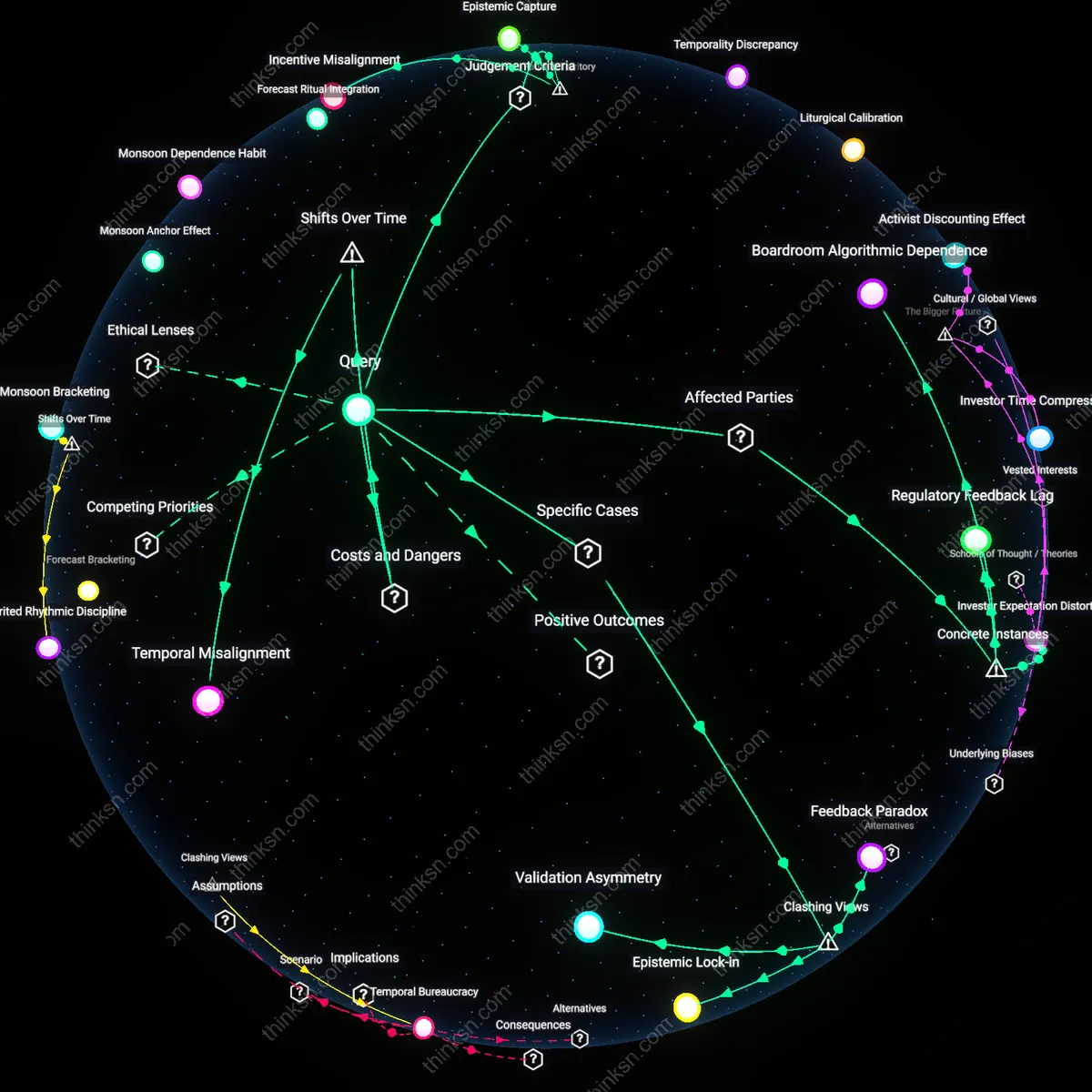

Boardroom Algorithmic Dependence

Corporate boards at Enron increasingly deferred to proprietary AI-adjacent risk models developed by Enron Analytics. These models prioritized smoothing short-term price volatility over measuring long-term systemic risk exposure, entrenching strategic myopia under the guise of data sophistication. This dependence neutralized whistleblower inputs and reduced scenario planning to model-fitting exercises. It shows that reliance on proprietary forecasting systems can institutionalize a form of cognitive capture in which data validates preapproved outcomes rather than challenging them.

Investor Expectation Distortion

When BlackRock’s Aladdin platform began integrating AI-driven market forecasts into its asset allocation recommendations for corporate clients in 2018, portfolio managers at firms like General Electric aligned capital spending with Aladdin’s short-cycle predictions, sacrificing R&D investment to meet algorithmically projected earnings trajectories. This shift illustrates how third-party AI forecasting tools, designed for liquidity management rather than strategic vision, can distort intertemporal trade-offs: they bind corporate strategy to externally generated time horizons that the board does not control.

Regulatory Feedback Lag

Following the 2010 Flash Crash, in which automated trading algorithms exacerbated market instability, the SEC adopted Regulation SCI in 2014 to strengthen oversight of automated market systems. Corporate boards nonetheless continued adopting similar predictive models for internal forecasting without equivalent oversight mechanisms. AIG’s post-2015 use of machine learning to project credit risk is one example: the board lacked the technical literacy to interrogate model assumptions. This gap shows that inconsistent predictive performance becomes invisible to regulators once AI is repurposed from trading to strategy, allowing flawed systems to persist in decision-making within regulatory blind spots.

Incentive Misalignment

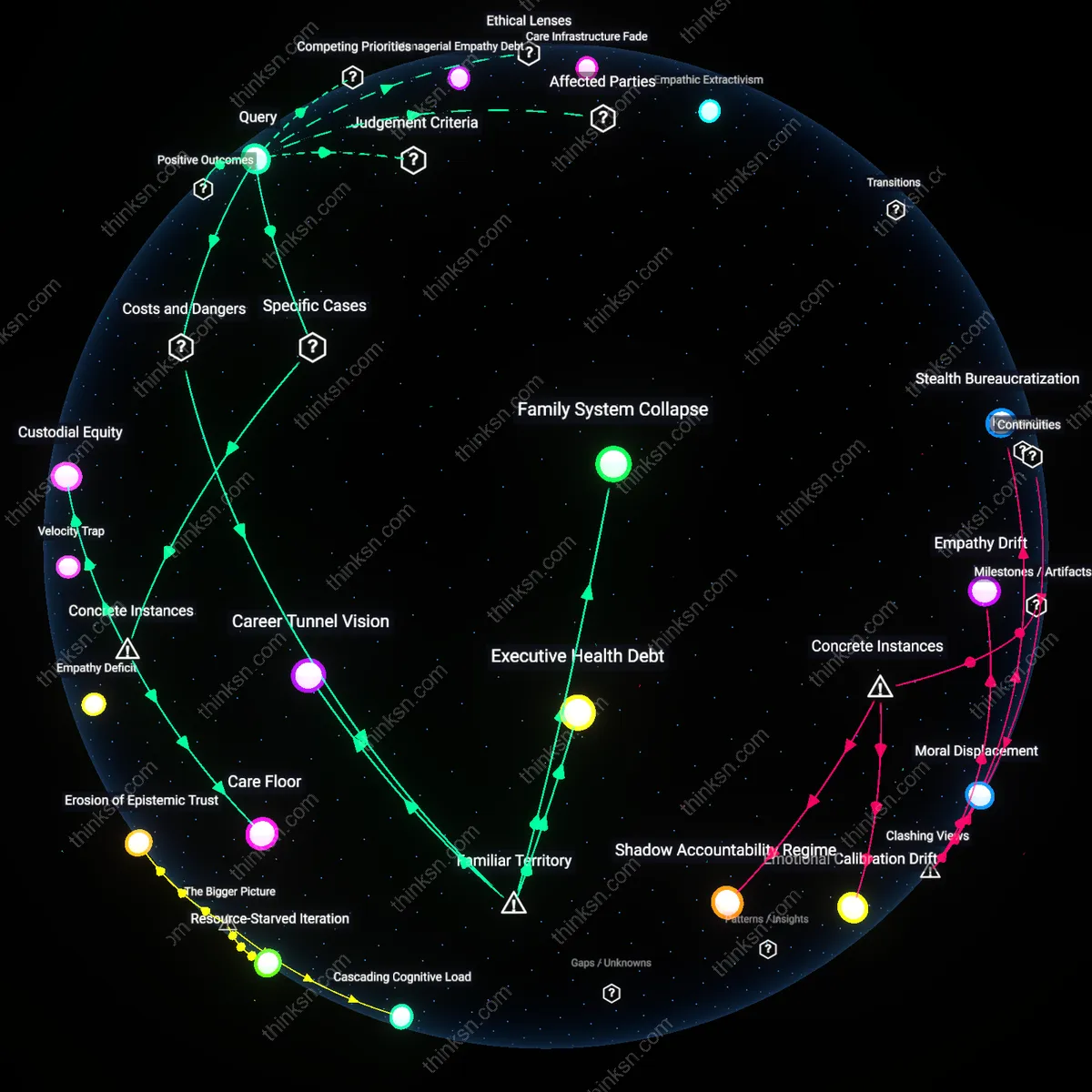

Yes, reliance on AI for market forecasting creates strategic myopia because corporate boards are incentivized to prioritize quarterly earnings over long-term resilience, and AI's apparent precision legitimizes short-term bets under the guise of data objectivity. Executives and board members, judged by near-term financial metrics and shareholder returns, exploit AI-generated forecasts as justification for cost-cutting or market positioning that sacrifices adaptive capacity. This mechanism operates through the alignment of AI outputs with existing performance review systems, where algorithmic confidence is mistaken for strategic certainty. The non-obvious insight is that the conflict does not stem from AI’s technical flaws but from its functional integration into incentive structures that already favor short-termism.

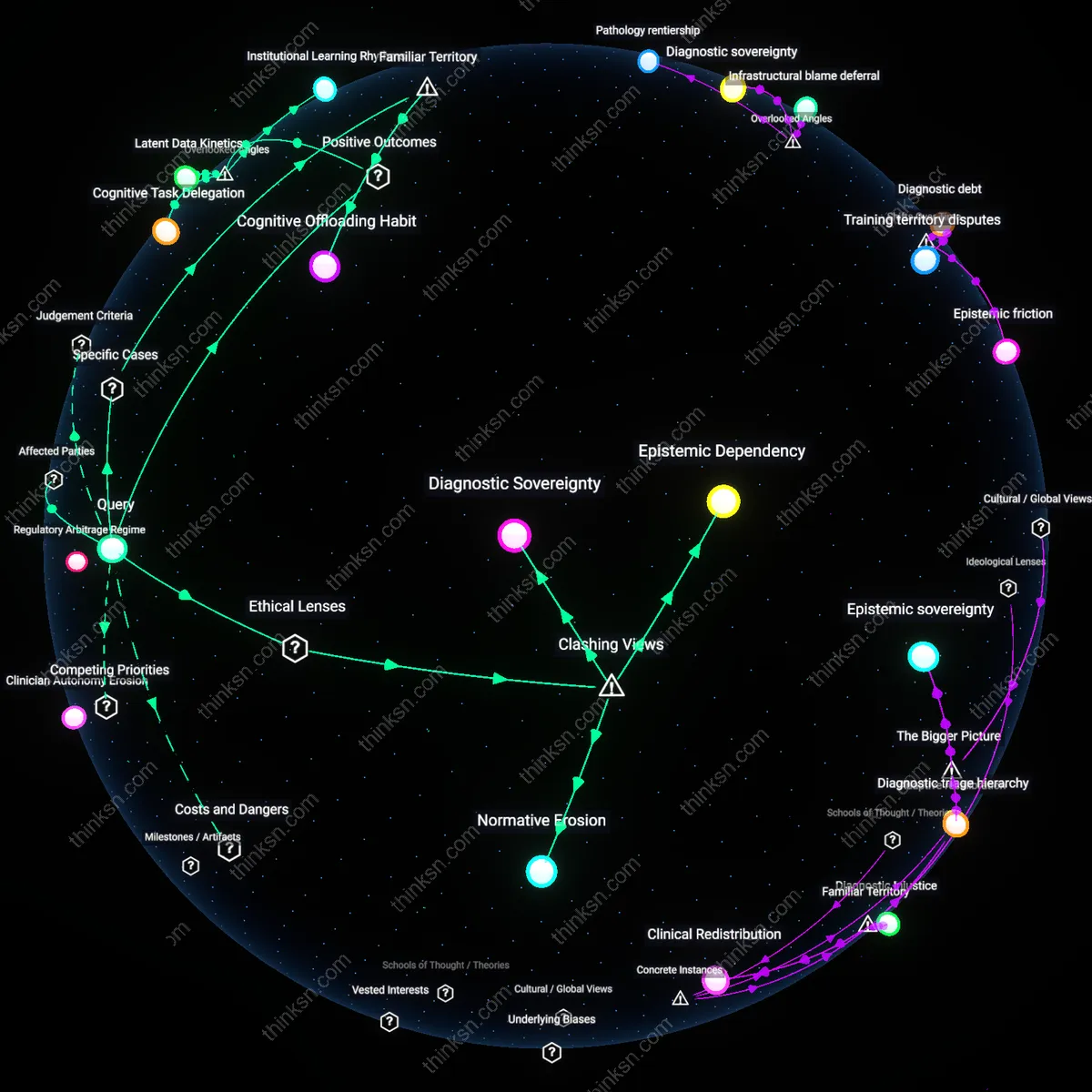

Epistemic Capture

Yes, AI dependence fosters strategic myopia by concentrating interpretive authority in technical teams and black-box models, displacing board-level deliberation grounded in experiential judgment or ethical foresight. Corporate boards, accustomed to deferring to specialized expertise, increasingly accept AI forecasts as neutral truth rather than contingent projections shaped by historical data and model design choices. This dynamic functions through a cognitive shift—where data-driven decisions are equated with rigor, marginalizing dissenting perspectives that lack quantitative backing. The underappreciated risk is that the board’s role as a forum for pluralistic sensemaking erodes, not because AI is inaccurate, but because it alters the very criteria for what counts as a valid strategic argument.

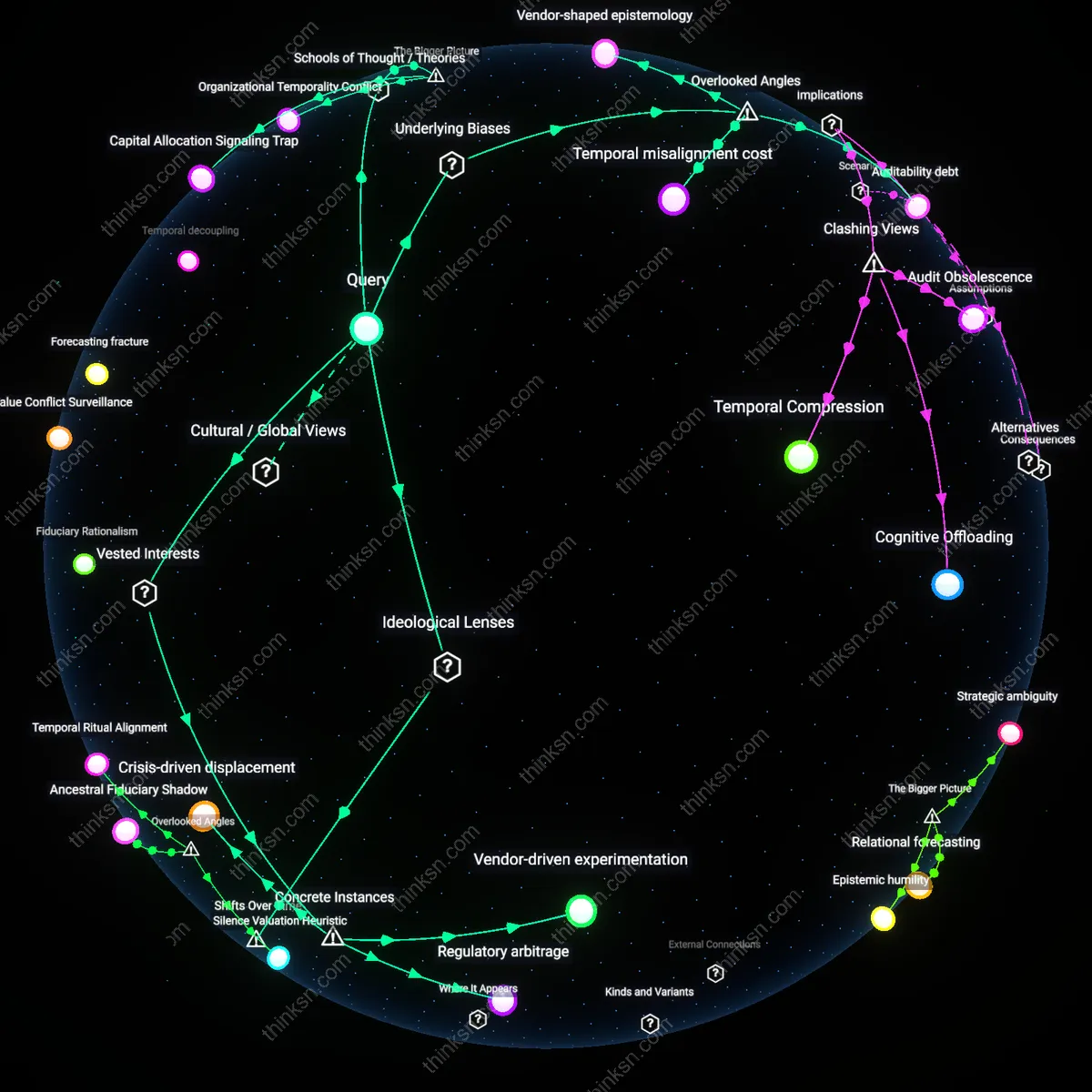

Temporal Dissonance

Yes, the conflict arises because AI forecasting systems are trained on backward-looking data while strategic foresight requires navigating emergent, unprecedented conditions, creating a misalignment in temporal orientation between model logic and board responsibility. Boards use AI to project trends but face decisions about climate transitions, geopolitical ruptures, or disruptive innovations, domains where past patterns fail to predict future states. This dissonance shows up in energy and retail firms that optimize supply chains using AI while being blindsided by regulatory or cultural shifts. The overlooked reality is that AI’s failure in these domains is not random error but structural lag, a feature rather than a bug, and that the consistency of its output leads boards to confuse stability of output with validity of insight.

Temporal Misalignment

The integration of AI forecasting tools into board-level decisions after the 2008 financial crisis created a structural mismatch between the temporal rhythms of capital markets and the lagged adaptability of machine learning systems. High-frequency trading and earnings cycles demand immediacy, but AI models trained on quarterly financials cannot adjust to geopolitical shocks or technological inflection points until the patterns are retrospectively confirmed. Unlike the 1990s, when expert panels could reframe risks around emerging digital threats, today’s model governance is locked into regulatory and computational cycles that delay strategic recognition until disruption is already priced in. The underappreciated danger is not model error but systemic synchronization: when all major firms rely on similar training windows and data vendors, they generate collective blind spots that shift risk across time rather than neutralizing it.

Feedback Paradox

AI-driven market forecasting entrenches strategic myopia at Walmart’s corporate board because predictive models prioritize short-term supply chain efficiencies validated by recent sales data, and so systematically discount disruptive shifts in consumer behavior that appear first in social sentiment. The result is false confidence in data-driven rigor and insular decision cycles that mistake precision for foresight. The underappreciated mechanism is that algorithmic consistency itself undermines strategic adaptability.

Epistemic Lock-in

The board of Siemens Energy deepens strategic myopia by adopting AI forecasts that align with engineering-centric performance metrics and by marginalizing geopolitical risk inputs from emerging markets because they disrupt predictive stability. This reflects a non-obvious reconfiguration of data-driven decision making into a gatekeeping function that privileges model coherence over environmental complexity, and it challenges the assumption that more data inherently improves strategic vision.

Validation Asymmetry

Nestlé’s reliance on AI for demand forecasting in emerging markets produces a conflict in which only high-frequency, formal-sector sales data are deemed ‘actionable,’ causing the board to ignore informal-economy dynamics despite their long-term strategic implications. This selective validation reveals a hidden hierarchy of evidence in data-driven decision making, where the appearance of objectivity masks the exclusion of structurally relevant but statistically noisy inputs.