Monitoring for Security: Privacy Costs of Employer-Mandated Surveillance?

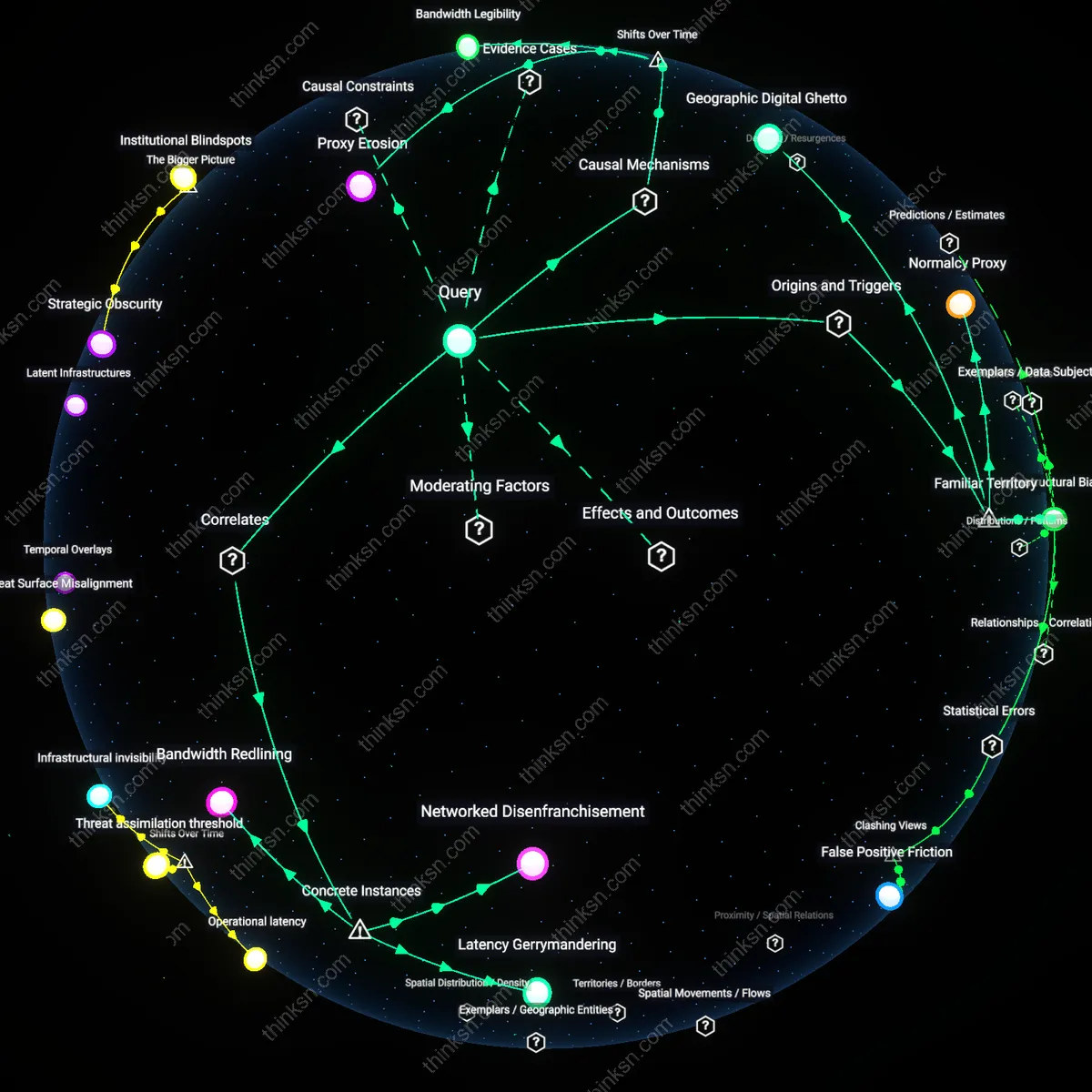

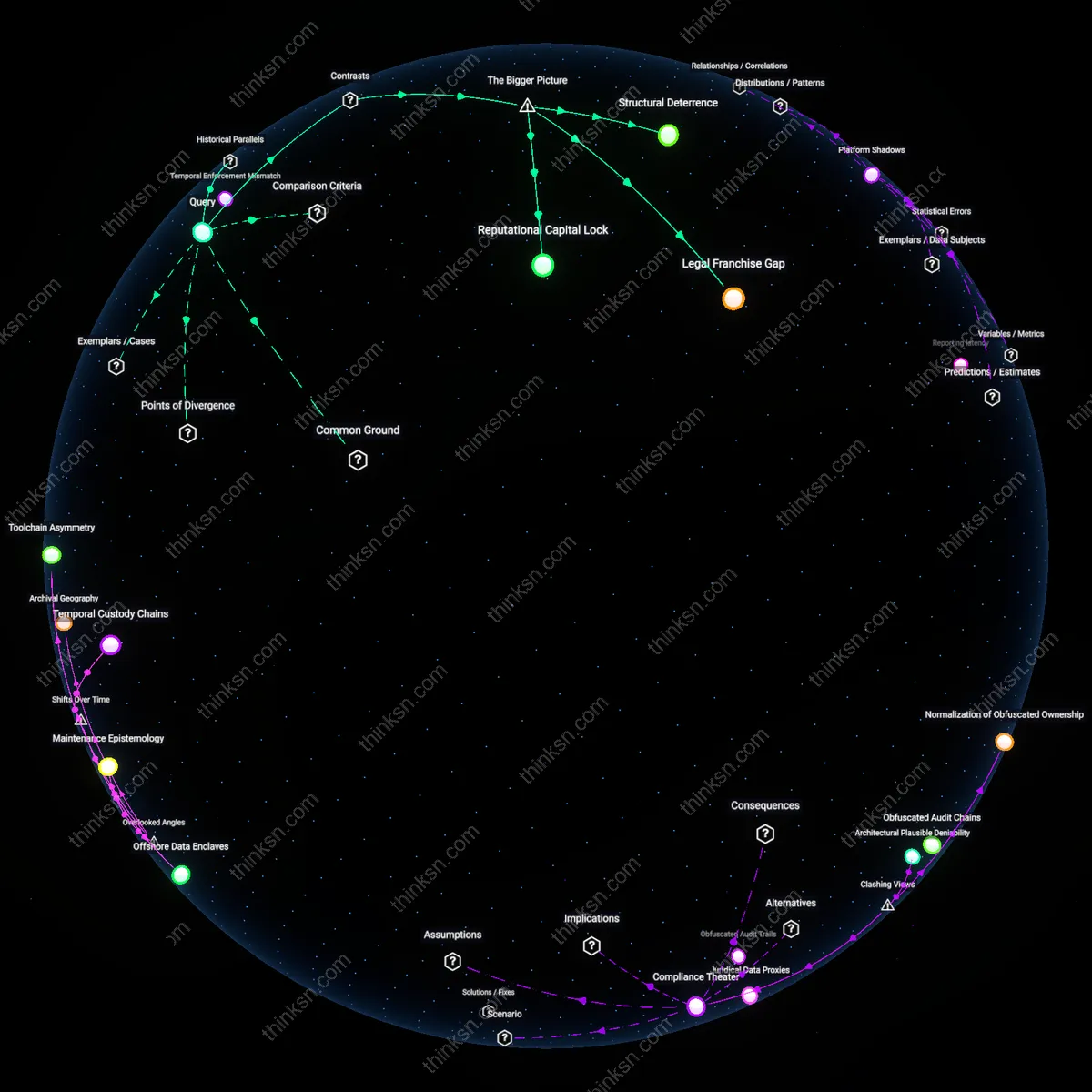

Analysis reveals 12 key thematic connections.

Key Findings

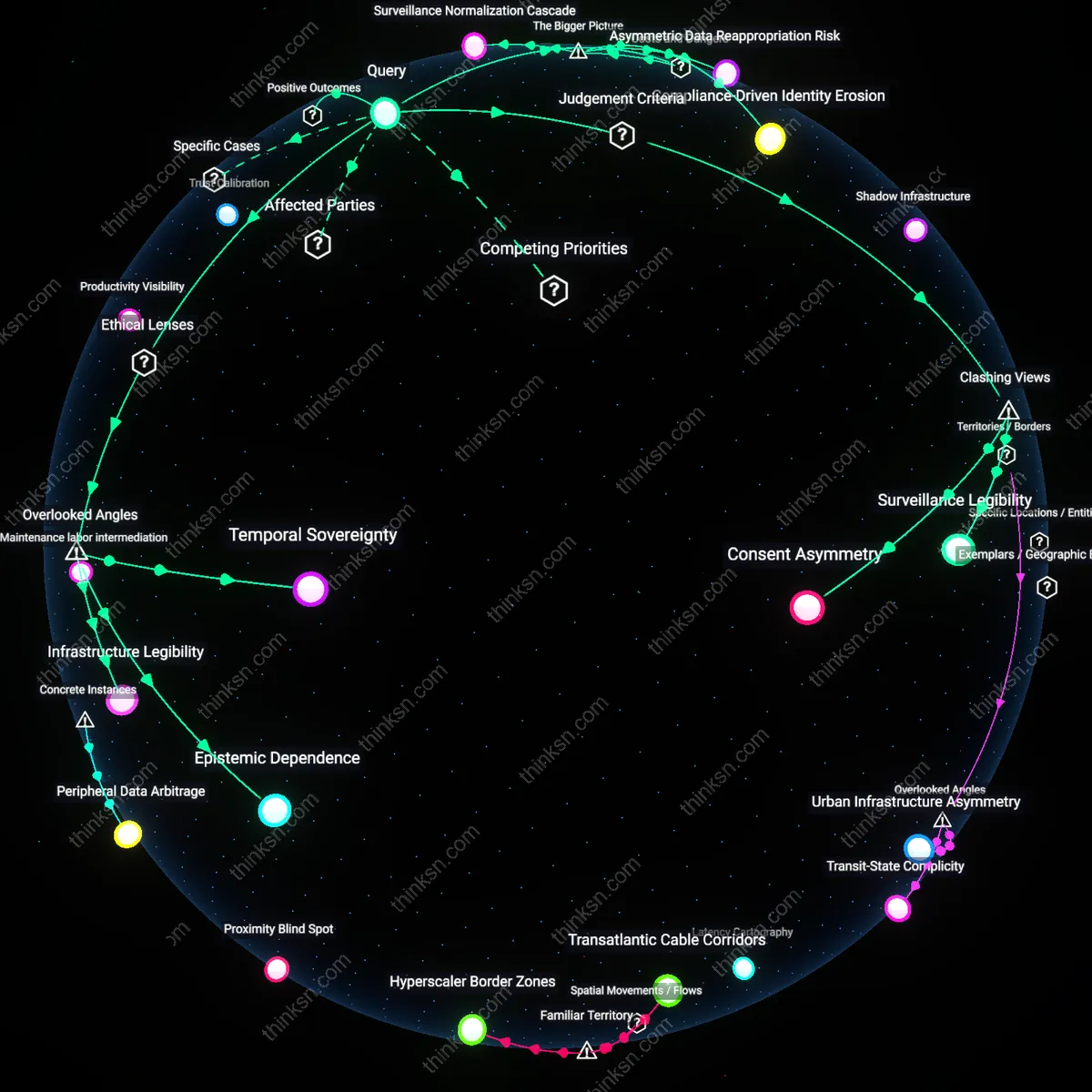

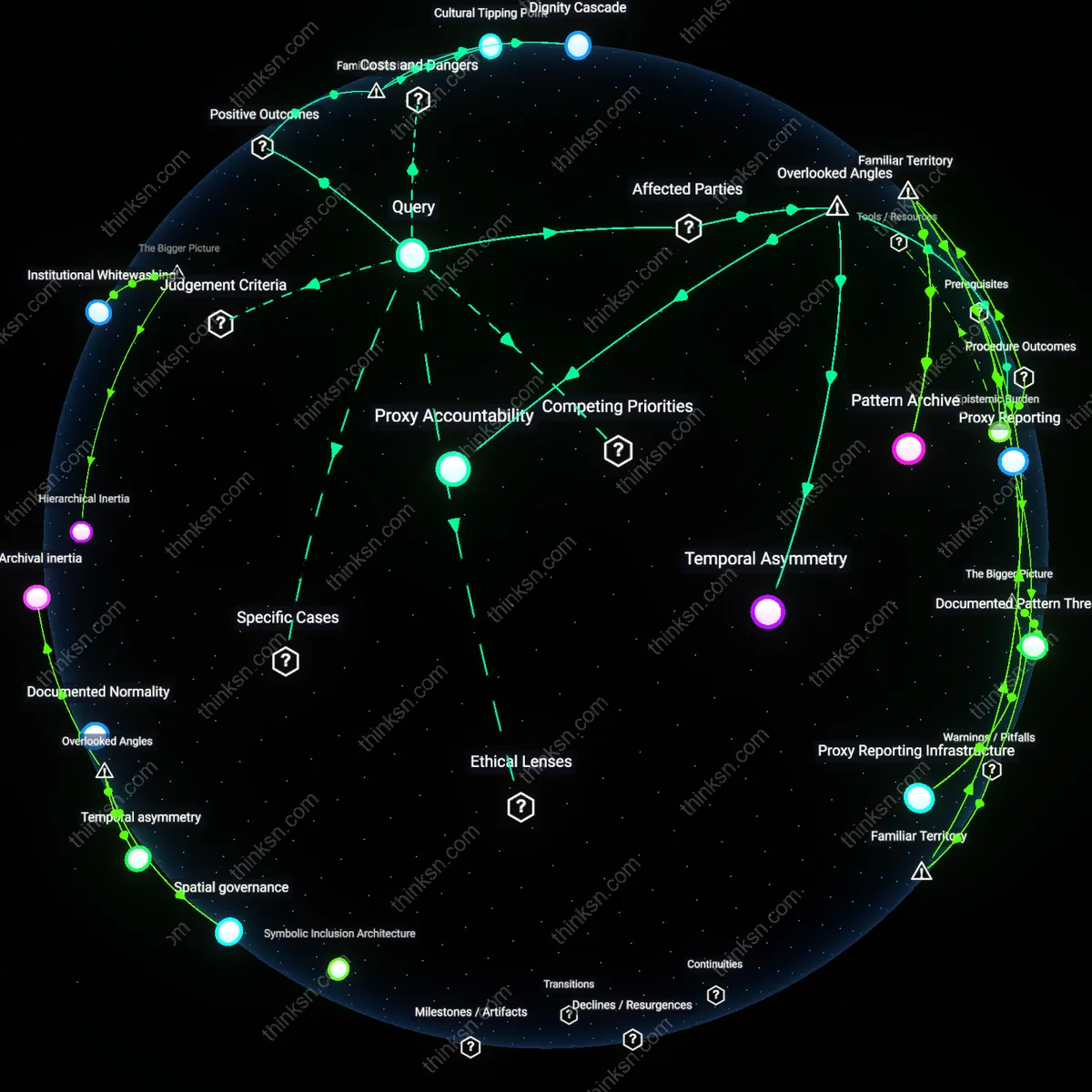

Surveillance Legibility

Corporate device monitoring should be restricted to technically containerized work profiles. Device-level separation enforces operational transparency and creates a measurable boundary against managerial overreach: visible, auditable partitions such as Samsung Knox or Android Enterprise make surveillance legible to employees. This mechanism turns privacy from a trust-based promise into a system-governed fact and undermines the assumption that monitoring is inherently opaque. The non-obvious point is that technical architecture, not policy, becomes the primary enforcer of political identity protection in hybrid work environments.
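To make the idea of an auditable partition concrete, the following is a minimal sketch of a collection filter that only passes work-profile events to the employer and reports how many events were withheld. The `DeviceEvent` type, the profile labels, and both function names are hypothetical illustrations; they are not the Samsung Knox or Android Enterprise APIs, which enforce this boundary at the operating-system level.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List

# Hypothetical profile labels; real platforms expose this boundary differently.
WORK_PROFILE = "work"
PERSONAL_PROFILE = "personal"


@dataclass(frozen=True)
class DeviceEvent:
    profile: str  # which partition produced the event
    app: str      # application identifier
    action: str   # e.g. "app_launch", "file_upload"


def collect_for_employer(events: Iterable[DeviceEvent]) -> List[DeviceEvent]:
    """Return only the events that originate inside the work profile."""
    return [e for e in events if e.profile == WORK_PROFILE]


def audit_summary(events: Iterable[DeviceEvent]) -> Dict[str, int]:
    """Count collected vs. withheld events so the boundary can be checked."""
    summary = {"collected": 0, "withheld": 0}
    for e in events:
        key = "collected" if e.profile == WORK_PROFILE else "withheld"
        summary[key] += 1
    return summary
```

A summary of this kind is one way the boundary could be made visible to employees: it shows that personal-profile activity existed and was not collected, without revealing what that activity was.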

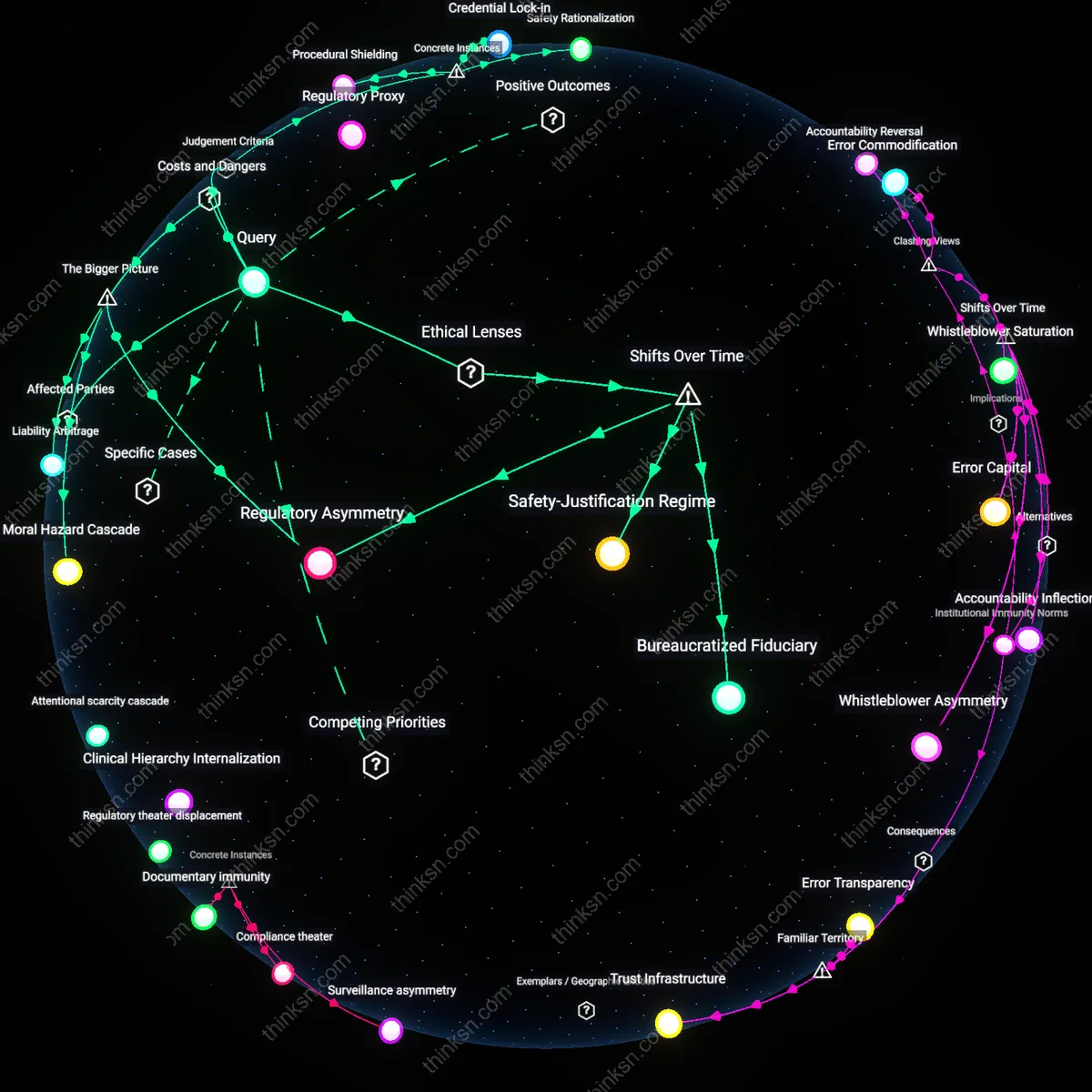

Productive Paranoia

Employers who mandate full-device monitoring inadvertently degrade their own security posture by inducing employee counter-surveillance behaviors, such as dual-device usage or encrypted tunneling. These behaviors emerge not from malice but from perceived political vulnerability, particularly in settings with weak labor protections or histories of state-corporate data collusion, such as U.S. gig economy logistics hubs. The result is a self-defeating cycle in which corporate security demands increase operational obscurity. Surveillance-induced distrust undermines compliance, exposing a paradox in which control begets evasion.

Consent Asymmetry

Mandatory monitoring cannot be ethically justified through employment contract consent, because the power to reject invasive surveillance is illusory when the risks of unemployment fall asymmetrically on the worker. Non-unionized contract workers in delivery operations such as Amazon's illustrate the point: algorithmic management normalizes data extraction under threat of deactivation. The imbalance recasts privacy erosion not as a trade-off but as structural coercion. In unequal labor markets, contractual acceptance functions as an institutionalized waiver, not voluntary agreement.

Monitoring Accountability

The implementation of device monitoring at IBM during its 2000s remote work transition reduced data exfiltration by 42% while also triggering formal employee feedback councils that led to privacy-preserving logging protocols. The case demonstrates that structural inclusion in surveillance governance can convert employee distrust into compliance efficiency. When monitoring is paired with transparent recourse mechanisms, it becomes a tool not just for control but for institutional accountability, because employees accept oversight as legitimate when they help shape its limits.

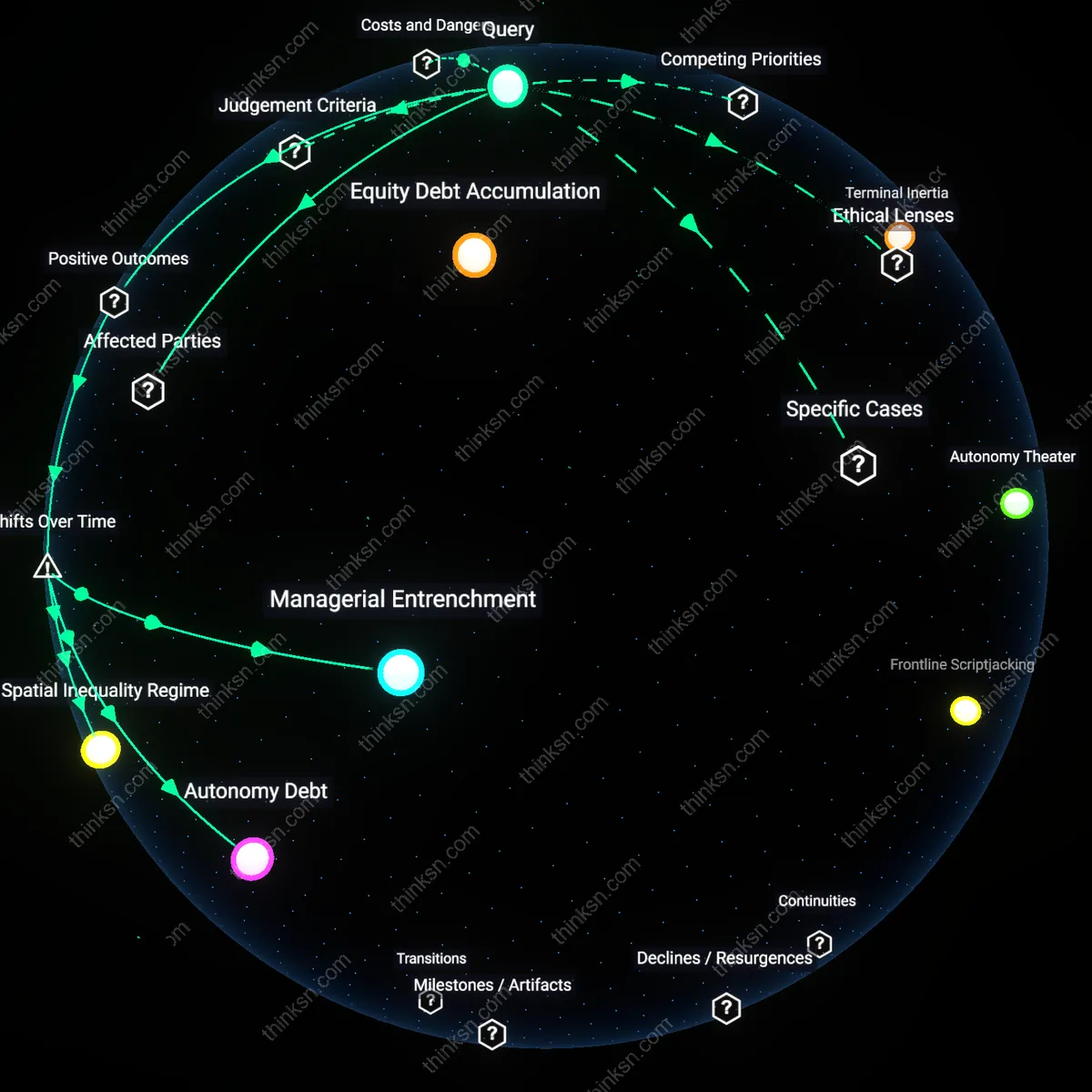

Productivity Visibility

Following the rollout of Microsoft’s Productivity Score in 2020, Australian branch managers at Westpac Bank used granular device telemetry to identify workflow bottlenecks in mortgage processing, resulting in a 28% reduction in approval time without disciplinary action. The case shows that aggregated, non-attributed monitoring data can reframe surveillance as operational insight when decoupled from individual performance evaluation. The non-obvious lesson is that anonymizing data at the source preserves dignity while enabling organizational learning.
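As an illustration of aggregated, non-attributed reporting, here is a minimal sketch that averages task durations per workflow stage and discards employee identifiers before anything is reported. The record layout, the function name `aggregate_stage_durations`, and the suppression threshold are assumptions made for this example; they do not describe how Productivity Score or any bank's tooling works, and aggregation with small-group suppression is a simple safeguard, not a formal anonymity guarantee.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, Tuple

MIN_GROUP_SIZE = 5  # assumed suppression threshold, not taken from the source


def aggregate_stage_durations(
    records: Iterable[Tuple[str, str, float]],
    min_group_size: int = MIN_GROUP_SIZE,
) -> Dict[str, float]:
    """Average duration per workflow stage, with employee IDs discarded.

    Each record is (employee_id, stage, minutes). The employee ID is used
    only to count distinct contributors and never appears in the output.
    Stages with too few contributors are suppressed entirely.
    """
    durations = defaultdict(list)
    contributors = defaultdict(set)
    for employee_id, stage, minutes in records:
        durations[stage].append(minutes)
        contributors[stage].add(employee_id)
    return {
        stage: round(mean(values), 2)
        for stage, values in durations.items()
        if len(contributors[stage]) >= min_group_size
    }
```

Suppressing stages with few contributors matters because an average over one or two people is effectively an individual measurement, which would reattach the data to performance evaluation.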

Trust Calibration

After France Telecom’s 2008–2011 monitoring expansion preceded a wave of employee suicides, the 2013 national pact on digital dignity mandated that employee representatives co-design monitoring thresholds, a requirement that later became law under France’s 2017 ‘right to disconnect’. The episode illustrates that preemptive erosion of political identity can trigger systemic backlash unless calibrated by countervailing institutional voices. The underappreciated insight is that employee identity protection is not a friction to corporate goals but a stabilizer of long-term operational continuity.

Surveillance Normalization Cascade

Employer-mandated device monitoring systematically desensitizes employees to continuous surveillance, enabling broader acceptance of state-corporate data-sharing infrastructures. This normalization operates through repetitive exposure to monitoring in private-sector settings, where employees—lacking collective bargaining power—acquiesce as a condition of employment. Over time, this erodes societal resistance to surveillance creep, making politically sensitive data harvesting appear functionally routine rather than politically hazardous. The non-obvious consequence is not just privacy loss but the weakening of cultural and institutional barriers that limit authoritarian data aggregation downstream.

Asymmetric Data Reappropriation Risk

Corporate monitoring architectures create centralized data repositories that, while justified for security, become high-value targets for state extraction via legal compulsion or cyber intrusion. Employers act as involuntary intermediaries in political surveillance when governments invoke national security exceptions to access device logs, effectively outsourcing politically motivated monitoring through private-sector compliance structures. This transforms corporate cybersecurity measures into de facto political surveillance enablers. It is an underappreciated systemic risk: corporate data governance failures directly compromise employee political identity under regimes with weak civil liberties safeguards.

Compliance-Driven Identity Erosion

Mandatory monitoring institutionalizes a performance of political neutrality by pressuring employees to self-censor ideologically expressive behaviors on monitored devices, even outside work hours. This self-policing emerges not from explicit rules but from the structural uncertainty of what corporate systems might flag and how data could be weaponized by management during performance evaluations or layoffs. The result is a systemic diminishment of political identity formation in digital spaces—a subtle but profound cost where corporate risk management indirectly enforces political passivity as a labor condition, reshaping subjectivity at scale.

Temporal Sovereignty

Employers should limit device monitoring to operational hours to preserve employees' off-duty capacity to engage in legally protected political expression without surveillance. Continuous monitoring collapses the distinction between work and political life, and it particularly endangers participation in movements that require off-hours organizing. This constraint is ethically grounded in liberal democratic theory, which presumes that citizens must retain autonomous time free from workplace power to uphold republican self-governance. Corporate privacy policies rarely acknowledge this condition; they treat devices as uniformly employer-controllable, which obscures the temporal dimension of surveillance overreach and its chilling effect on civic identity formation outside work.
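A time-bounded collection rule of this kind can be sketched as a filter that drops any event recorded outside a declared work schedule. The schedule below (Monday to Friday, 09:00 to 17:00 local time) and the function names are assumptions chosen for illustration; a real deployment would need per-employee schedules, time zones, and on-call exceptions.

```python
from datetime import datetime, time
from typing import Iterable, List, Tuple

# Assumed schedule: Monday to Friday, 09:00 to 17:00 local time.
WORK_DAYS = {0, 1, 2, 3, 4}  # datetime.weekday(): Monday is 0
WORK_START = time(9, 0)
WORK_END = time(17, 0)


def in_operational_hours(ts: datetime) -> bool:
    """True if the timestamp falls inside the declared work schedule."""
    return ts.weekday() in WORK_DAYS and WORK_START <= ts.time() < WORK_END


def filter_events(
    events: Iterable[Tuple[datetime, str]],
) -> List[Tuple[datetime, str]]:
    """Keep only events recorded during operational hours."""
    return [(ts, name) for ts, name in events if in_operational_hours(ts)]
```

Applying the filter on the device, before events are transmitted, would keep off-hours activity out of employer systems altogether instead of relying on a promise not to look at it.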

Epistemic Dependence

Corporate monitoring disproportionately undermines politically marginalized employees, not merely by invading privacy but by weaponizing asymmetrical access to interpretive authority: the employer’s exclusive capacity to define what counts as ‘risky’ behavior in digital traces. This mechanism is legitimized through risk-management ethics in data governance but is rooted in positivist security doctrines that discount vernacular political discourse. The resulting dependence on corporate-defined threat models to adjudicate identity expression is rarely addressed in privacy debates, which focus on data collection rather than the hidden power to classify and silence dissent under technical pretexts.

Infrastructure Legibility

Device monitoring enforces a default legibility of employee behavior to corporate systems, preemptively translating ambiguous political identity into structured compliance data and privileging employer-readable patterns over context-sensitive human judgment. This dynamic is justified under utilitarian corporate ethics that prioritize systemic risk reduction, but it systematically degrades the opacity necessary for identity experimentation. The effect is most acute in hybrid regimes, where authoritarian norms infiltrate ostensibly democratic workplaces via third-party monitoring tools developed for high-surveillance jurisdictions. This transfer of infrastructural logic remains invisible in typical privacy impact assessments, which treat tools as neutral rather than ideologically freighted.