Why Do Retaliation Claims Succeed Less Internally Than Legally in Tech?

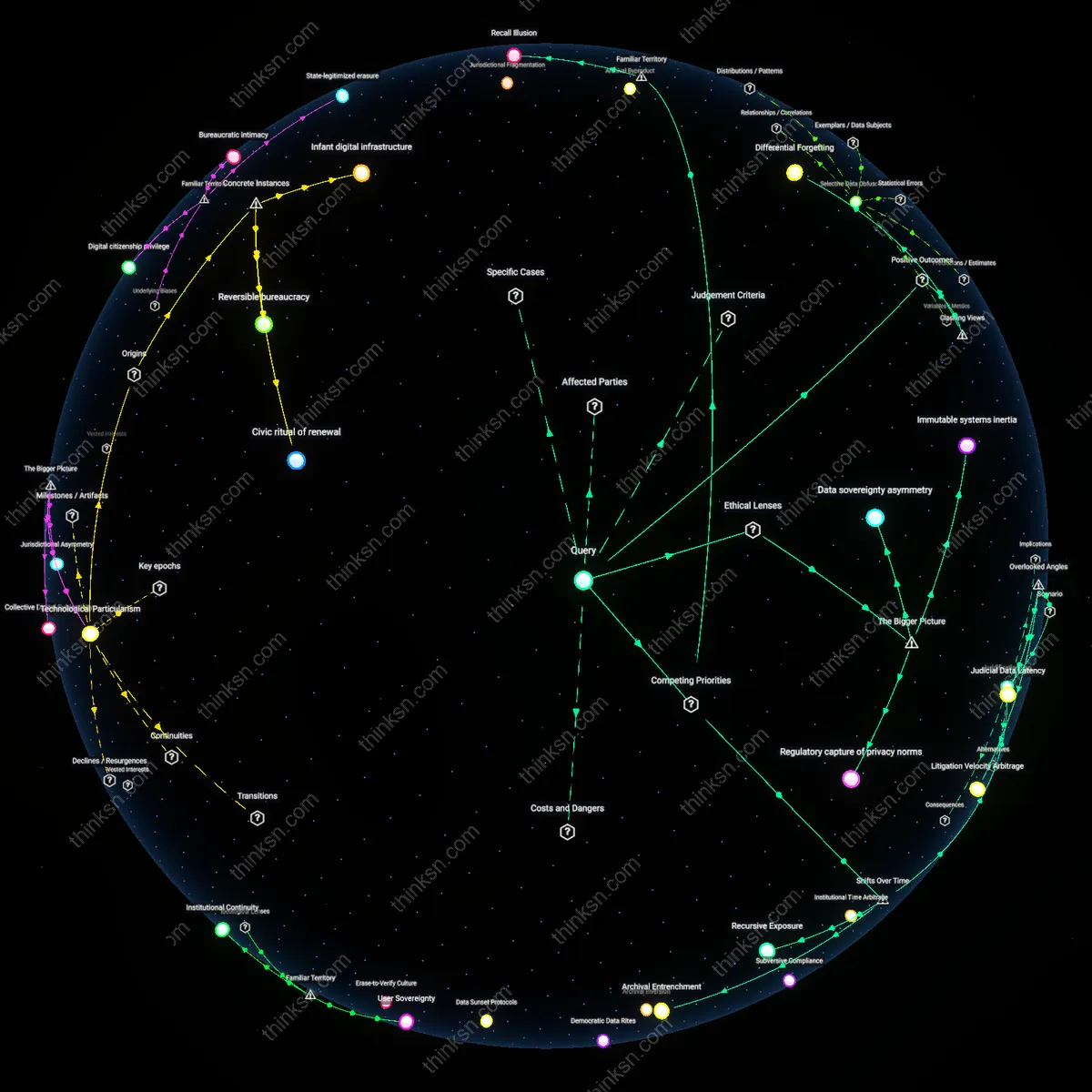

Analysis reveals 4 key thematic connections.

Key Findings

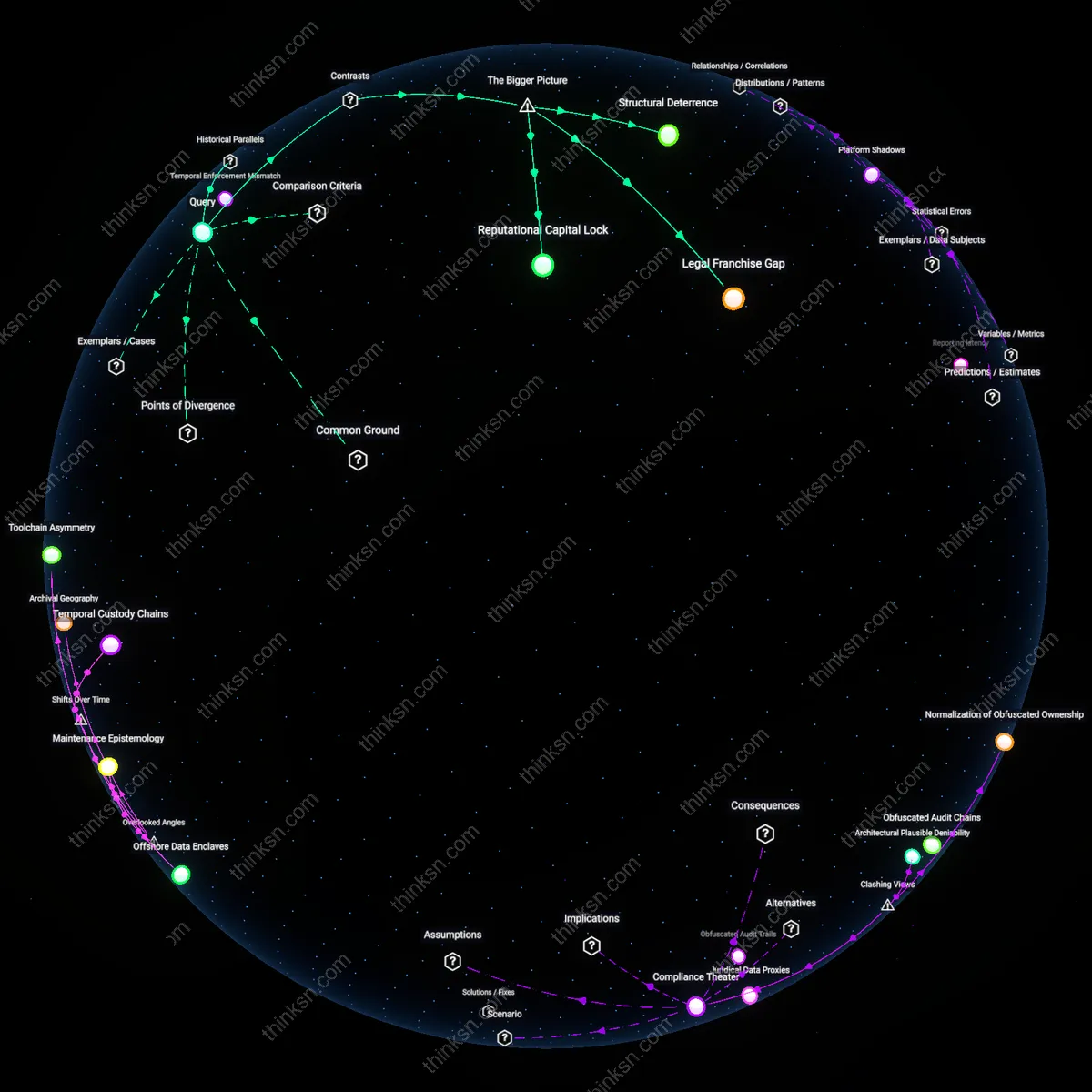

Whistleblower Temporal Misalignment

External legal action is structurally disadvantaged in tech because whistleblower protections were designed for industrial-era timelines, not the compressed innovation cycles of software development, a whistleblower temporal misalignment. By the time a legal case resolves, often 2–5 years later, the product in question may have been deprecated, the team disbanded, and the wrongdoing absorbed into legacy systems no longer under scrutiny. The overlooked dynamic is that tech-specific obsolescence erodes evidentiary relevance and public interest simultaneously, making legal victories feel anachronistic. Unlike manufacturing or finance, where harm tends to unfold slowly and linearly, tech retaliation claims can expire not through dismissal but through technological irrelevance, a factor absent from most labor law analyses.

Structural Deterrence

Internal reporting in tech companies suppresses retaliation claims not because it resolves wrongdoing, but because its design prioritizes organizational insulation over employee protection. Compliance systems are staffed by HR and legal teams whose professional obligations run to the corporation, not the individual, creating a built-in conflict of interest that discourages escalation and reclassifies grievances as performance issues. The result is fewer retaliation claims through preemptive neutralization rather than resolution: what appears as effectiveness is actually a systemic disincentive to challenge authority, a mechanism rarely acknowledged because it mimics functional governance while serving containment.

Legal Franchise Gap

External legal action remains inaccessible to most tech employees not for lack of merit, but because the economic calculus of litigation favors capital over labor in high-skill sectors. Firms operate in hubs like Silicon Valley, where non-disclosure agreements, mandatory arbitration clauses, and asymmetric legal resources are institutionalized, making individual lawsuits prohibitively risky and likely to end in forced settlement. The scarcity of successful retaliation claims thus reflects not the superiority of internal channels, but the deliberate erosion of employees’ practical access to legal remedies, a dynamic obscured by the sector’s veneer of progressive workplace culture.

Reputational Capital Lock

Tech workers avoid external reporting because their career mobility depends on unstated industry-wide reputation networks that penalize public dissent regardless of justification. Unlike regulated sectors with third-party oversight, the tech labor market operates on trust capital accrued through perceived loyalty and discretion, enforced by tight-knit networks of investors, recruiters, and peer technologists. This creates a silent enforcement regime where even valid claims are suppressed not by formal rules but by the fear of exclusion from future opportunities—revealing that the apparent efficiency of internal mechanisms is actually a shadow effect of market-based social control.

Deeper Analysis

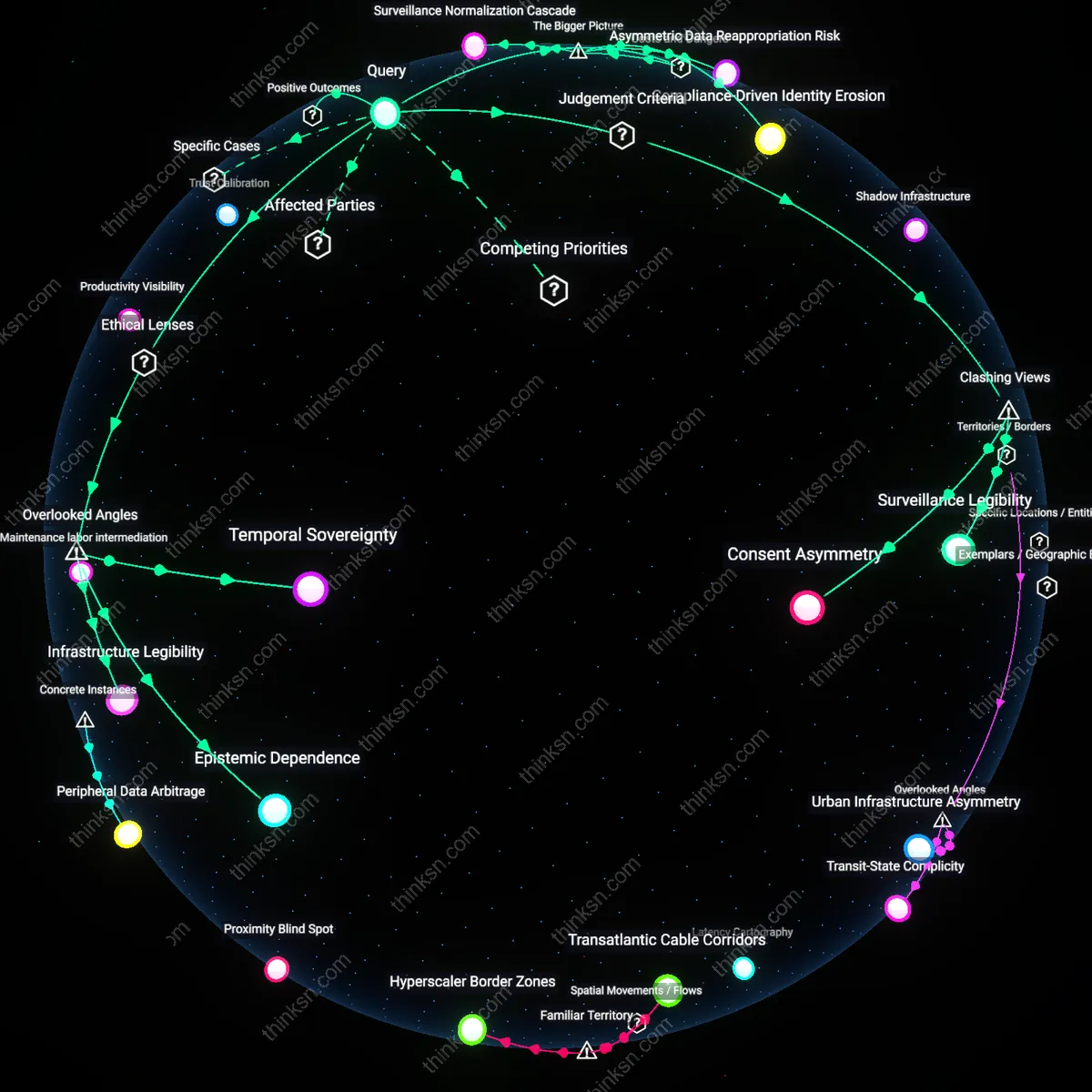

How did the speed of software development shift over time change the way whistleblowers experience the outcomes of their reports?

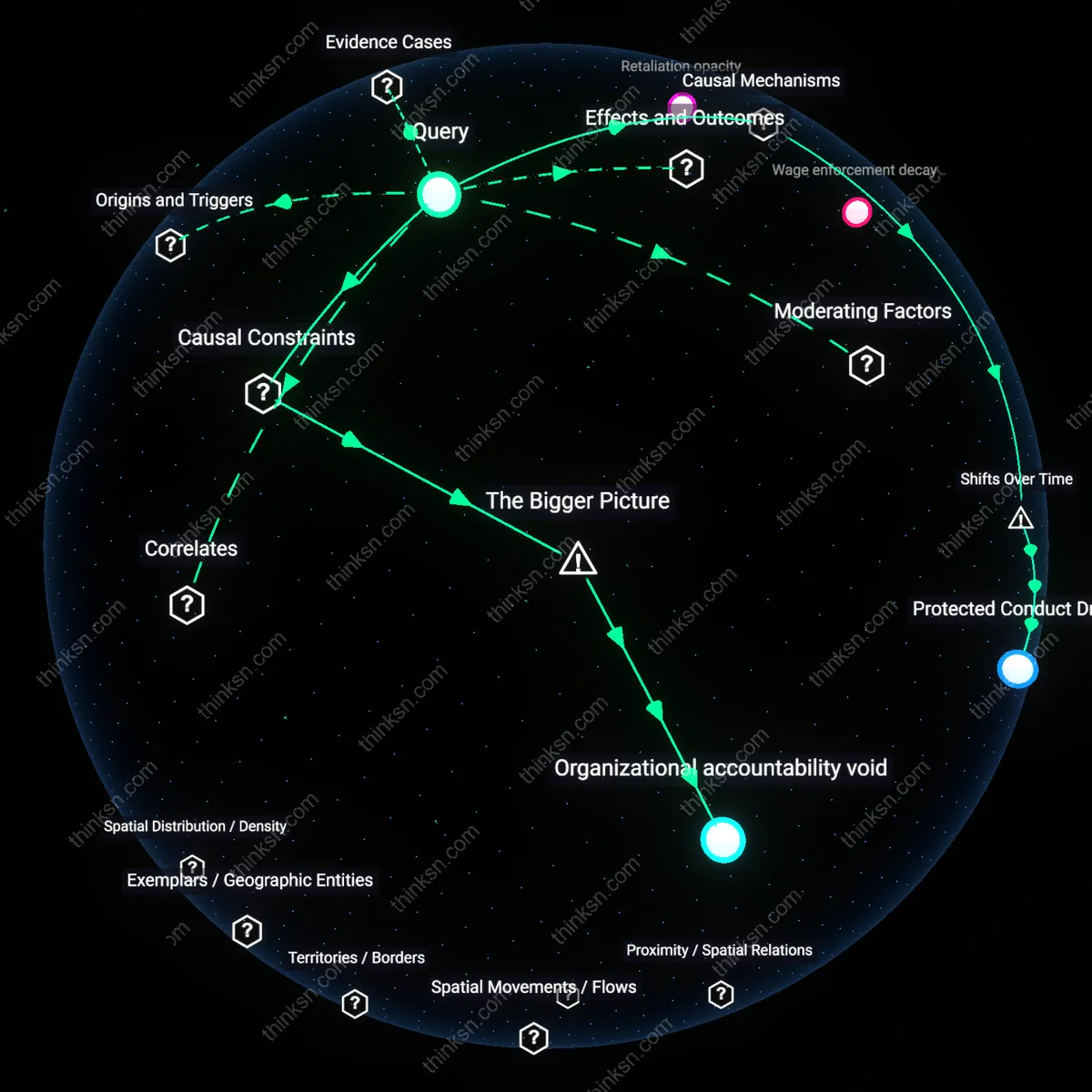

Temporal Mismatch

The acceleration of software development cycles compresses the window between whistleblower disclosure and public visibility of harms, leaving whistleblowers stranded in procedural delays while their warnings are overtaken by technical obsolescence. As continuous integration and deployment systems—like those at major cloud providers or social media platforms—push code updates hourly, whistleblower reports routed through legal or compliance channels (designed for slower industrial-era timelines) emerge too late to influence active engineering decisions, rendering their interventions invisible in the system’s operational memory. This misalignment between rapid technical iteration and sluggish institutional response means whistleblowers often engage with systems that have already evolved beyond the reported flaw, diluting accountability and amplifying their sense of futility—a dynamic rarely acknowledged in discussions that focus on courage or retaliation rather than temporal dissonance.

Artifact Ephemeralization

As software development shifted from long release cycles to ephemeral infrastructure, such as serverless functions and containerized microservices, whistleblowers lost access to the stable, persistent evidence bases necessary for credible reporting. In the 1990s, internal memos or source code snapshots could be preserved as static proof; modern systems dynamically generate, mutate, and delete components within minutes, making it far harder to document systemic misconduct with verifiable artifacts. This erosion of evidentiary stability disrupts the foundational assumption of whistleblower frameworks, namely that evidence of wrongdoing can be held still long enough to be scrutinized. It also subtly shifts the burden onto individuals to prove events to which they may be the only human witness, a critical risk that escapes most legal and ethical analyses of disclosure.

Architecture of Deniability

The rise of distributed software development across global teams using modular, opaque components enables plausible deniability at scale, insulating decision-makers from whistleblower claims by fragmenting knowledge across technical domains. For example, in platforms using third-party SDKs or outsourced AI training pipelines, no single engineer may have full visibility into how user data is exploited, allowing leadership to credibly claim ignorance even when systemic abuse is reported. This architectural compartmentalization—driven by agile efficiency and cloud outsourcing—transforms organizational structure into a covert shield against accountability, a mechanistic enabler of silence that is rarely examined in favor of more visible factors like corporate culture or individual ethics.

Temporal Fragility

Accelerated software development compresses the lifespan of evidence, making digital whistleblowing artifacts ephemeral and harder to verify over time. As continuous deployment and ephemeral infrastructure dominate post-2015 cloud-native environments, logs, API states, and algorithmic behaviors that substantiate whistleblower claims vanish within hours or days, undermining long-term accountability. This challenges the intuitive belief that digital systems preserve truth inherently, revealing instead how speed erodes evidentiary stability—a dynamic particularly acute in platforms like AWS Lambda or Kubernetes where audit trails are fragmented by design. The non-obvious insight is that faster development doesn't just obscure wrongdoing temporarily; it structurally dissolves the conditions for proof.
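The gap between how long an artifact is retained and how long a review takes to begin can be made concrete with a small sketch. The artifact names and retention windows below are hypothetical illustrations, not the defaults of any real platform; the point is only that a review starting weeks after an incident sees a much smaller evidence set than one starting hours after it.

```python
from datetime import datetime, timedelta

# Hypothetical retention windows per artifact type (illustrative values only,
# not the defaults of any real cloud service).
RETENTION = {
    "function_invocation_log": timedelta(hours=24),
    "container_stdout": timedelta(days=3),
    "audit_export": timedelta(days=90),
}


def surviving_artifacts(created_at, review_starts_at, retention=RETENTION):
    """Return the artifact types still retained when a review begins."""
    age = review_starts_at - created_at
    return sorted(name for name, window in retention.items() if age <= window)


incident = datetime(2024, 1, 1, 12, 0)

# A review that starts six hours later still sees every artifact type.
print(surviving_artifacts(incident, incident + timedelta(hours=6)))
# ['audit_export', 'container_stdout', 'function_invocation_log']

# A review that starts thirty days later sees only the long-lived export.
print(surviving_artifacts(incident, incident + timedelta(days=30)))
# ['audit_export']
```

Under these assumed windows, the short-lived artifacts that capture the most operational detail are the first to disappear.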

Epistemic Asynchrony

Rapid software development creates an irreconcilable gap between the temporal rhythm of engineering teams and the deliberative pace of legal or ethical review, leaving whistleblowers stranded in a procedural lag. At major AI labs after 2020, where models and the systems around them are revised far faster than review boards convene, internal ethics boards cannot assess risks before systems evolve, so whistleblower concerns are dismissed as based on outdated architectures. This defies the expectation that faster development demands faster oversight, revealing instead that speed induces institutional disbelief: wrongdoing is doubted not because the claim lacks merit, but because it references a version that no longer exists. The critical insight is that real-time development invalidates retrospective accountability by design.

Velocity Traps

Faster software release cycles compress feedback timelines, causing whistleblowers to be seen not as early warners but as last-minute disruptors within operational workflows. When a reporter surfaces flaws in code that rapid sprints have already pushed to production, the disclosure interrupts momentum rather than guiding design, shifting organizational perception from appreciation to blame. This reframing happens not because the risk is smaller but because the velocity of deployment turns the latency between coding and consequence into a moral alibi: 'we didn’t have time' is invoked to justify silence or inaction. What familiar narratives of corporate retaliation miss is that speed itself becomes a structural deflector of accountability, embedding complicity in tempo.

Platform Shadows

As software development migrated from outsourced projects to in-house cloud-native stacks, whistleblowers lost access to neutral third parties who once served as validation buffers. In the era of monolithic contractors like Lockheed or GM, external auditors were part of the feedback chain; today’s internal DevOps pipelines cut out these stabilizing intermediaries, so disclosures now originate and terminate within the same corporate envelope. This shift turns platform-scale secrecy into a silent enforcer—those who report issues become statistically invisible because logging and escalation systems are self-monitored. The common discourse around Silicon Valley leakers overlooks that it’s not paranoia but architecture that isolates dissent, folding ethical reporting into the blind spots of continuous integration.

Reporting Latency

The acceleration of software development compressed the window between whistleblower disclosure and public or regulatory consequence, as seen in the 2018 Facebook–Cambridge Analytica scandal where rapid deployment of data-mining tools left whistleblowers like Christopher Wylie with diminished control over narrative timing; because the software lifecycle moved faster than institutional response, the outcome was effectively determined before formal investigations began. This illustrates how the speed of iteration shifts leverage from deliberative accountability to real-time exposure, making delayed reporting functionally obsolete. The underappreciated reality is that the value of a whistleblower’s evidence now degrades exponentially, not linearly, in high-velocity dev environments.
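The contrast between exponential and linear degradation can be illustrated numerically. This is only a sketch of the two decay shapes: the 365-day horizon and 30-day half-life are hypothetical parameters chosen for illustration, not measurements of how evidence actually loses value.

```python
def linear_value(t_days, horizon_days):
    """Value falls by a constant amount per day and reaches zero at the horizon."""
    return max(0.0, 1.0 - t_days / horizon_days)


def exponential_value(t_days, half_life_days):
    """Value halves every `half_life_days`."""
    return 0.5 ** (t_days / half_life_days)


# Hypothetical parameters: a one-year linear horizon versus a 30-day half-life.
print(round(linear_value(90, 365), 3))  # 0.753
print(exponential_value(90, 30))        # 0.125
```

Under these assumed parameters, a report that surfaces after 90 days retains about three quarters of its value on the linear model but only one eighth on the exponential one, which is the shape of loss the paragraph above describes.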

Ephemeral Evidence

The shift to continuous integration and ephemeral infrastructure in platforms like Uber’s early microservices architecture meant that by the time internal reports were filed, the offending code could already have been altered or deleted. Susan Fowler’s 2017 account of Uber concerned HR’s handling of harassment complaints rather than vanished code, but it illustrates how heavily a claim can rest on the reporter’s own records; where evidence exists only transiently in production systems, the act of whistleblowing becomes untethered from verifiable proof. This dynamic shifts the basis of credibility from documentation to memory, privileging narrative over artifact in adjudicating claims. The non-obvious effect is that rapid software cycles don’t just obscure wrongdoing; they structurally invalidate retrospective verification.

Platform Dependency

Whistleblowers at Palantir in the mid-2010s discovered their ethical objections to ICE data systems were undermined by the company’s integration into locked-down government ecosystems where software updates bypassed internal review, making post-report corrections impossible; because their reports targeted static policies while the software evolved continuously, outcomes were pre-determined by deployment inertia. This reveals that speed entrenches decisions through technical momentum, not secrecy. The overlooked mechanism is that fast-moving software doesn’t merely outpace oversight — it replaces policy with irreversible technical fact.

Feedback Delay Collapse

Accelerated software deployment cycles reduced the time between a whistleblower's report and observable organizational response, compressing the feedback loop in which disclosures are assessed and acted upon. As continuous integration and rapid patching became standard—evidenced by GitHub commit logs from Mozilla and Signal showing resolution of reported vulnerabilities within hours—whistleblowers now experience outcomes faster, often bypassing traditional bureaucratic mediation. This shift amplifies psychological and professional consequences in near real time, altering risk perception and strategic timing in disclosure decisions. The non-obvious implication is that speed transforms whistleblowing from a calculated, long-term ethical act into a high-velocity interaction governed by software tempo rather than institutional deliberation.

Infrastructure Visibility Gradient

The migration from monolithic, on-premise software systems to distributed, cloud-based architectures—marked by artifacts like AWS API logs and Kubernetes deployment records—has increased the technical specificity required to substantiate a whistleblower's claim. Modern software’s modularity means misconduct often resides in narrowly scoped services or configuration files, making evidence harder to trace without access to internal tooling. As a result, whistleblowers must possess deeper technical context to produce credible reports, privileging engineers over administrative insiders and shifting the locus of exposure to those embedded in development workflows. This creates a visibility gradient where only those with operational access to infrastructure can effectively document wrongdoing, reshaping who can credibly blow the whistle.

Temporal Enforcement Mismatch

Automated compliance systems, such as those embedded in financial messaging networks like SWIFT or payment processors like Stripe, execute regulatory rules in milliseconds, while human-led whistleblower investigation processes remain bound by legal and organizational timelines spanning months. This misalignment means that even valid reports often arrive after algorithmic systems have already amplified or contained the harm, reducing the perceived agency of the whistleblower. The core dynamic is that software speed decouples operational impact from accountability cycles, rendering traditional disclosure mechanisms reactive rather than preventive. The underappreciated consequence is that whistleblowers increasingly experience their interventions as symbolically meaningful but temporally irrelevant.

Explore further:

- How did software development practices evolve over the past two decades to make it harder for whistleblowers to trace misuse of user data?

- Where do whistleblower claims in the tech sector tend to emerge from, and how does the location of teams relate to where evidence is stored and preserved?

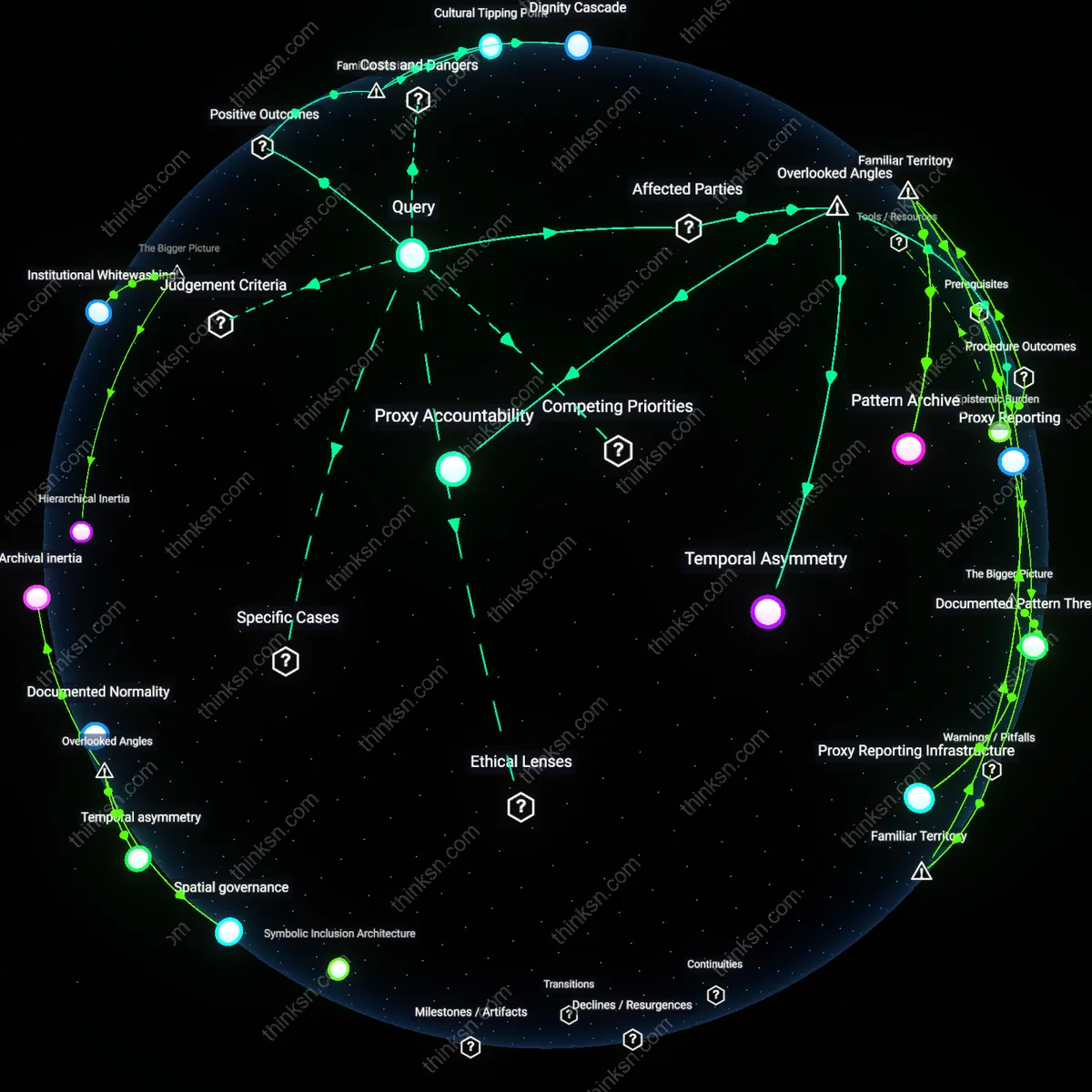

- If internal reporting systems are now isolated within companies, how often do reported issues actually lead to fixes compared to cases that go public?

How did software development practices evolve over the past two decades to make it harder for whistleblowers to trace misuse of user data?

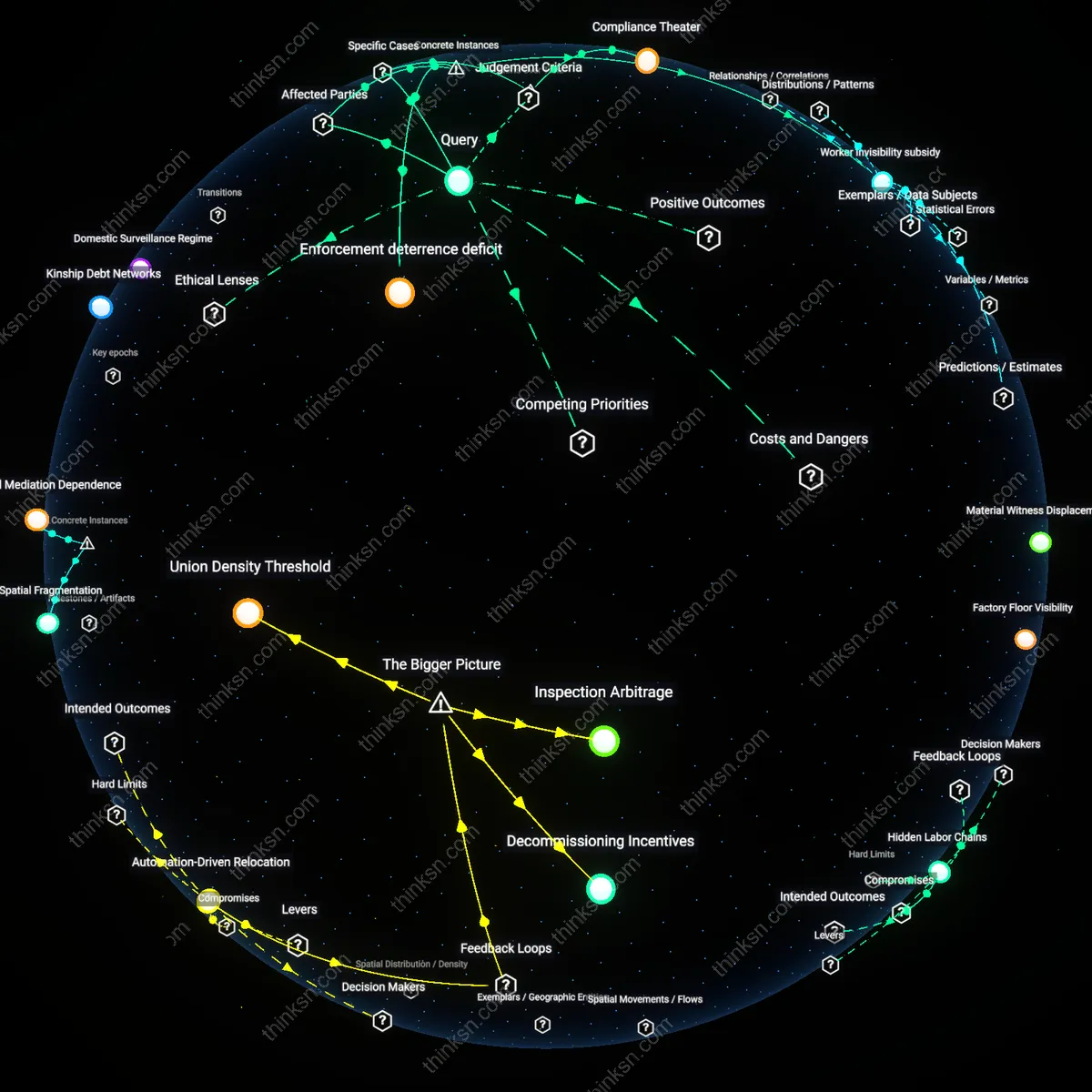

Compliance Theater

Software development practices evolved over the past two decades to obscure data misuse by prioritizing audit-ready documentation over functional transparency, particularly after the adoption of GDPR and HIPAA safe harbor provisions. Engineering teams began designing systems where access logs, consent flows, and data lineage were structured to satisfy regulatory checklists rather than enable investigative clarity, so that while everything appears compliant on paper, reconstructing actual data use trajectories requires navigating contradictory or redundant metadata. This shift, institutionalized first in U.S. healthcare and EU fintech firms around 2016, privileged performative accountability—where the appearance of oversight replaced its substance—making it harder for whistleblowers to isolate anomalies without being dismissed as misunderstanding protocol. The non-obvious consequence is that regulatory alignment became a shield against scrutiny, not a scaffold for it.

Architectural Plausible Deniability

The modularization of software systems through microservices and serverless computing, accelerated by Amazon Web Services’ dominance post-2014, dispersed data processing across ephemeral, regionally isolated nodes that lack coherent audit trails, fragmenting the chain of custody for user data. Developers optimized for scalability and fault tolerance, but the side effect was that no single actor or service retains full context of a data transaction, so even internal investigators cannot trace misuse without coordinating across jurisdictions and across automated systems not designed to communicate forensically. This design paradigm, championed by platform engineers at large cloud-native firms, embeds structural ignorance, where opacity is not a failure but a feature of resilience, and it directly contradicts the assumption that better technology yields better oversight. The result is a system where plausible deniability is baked into infrastructure, not just policy.

Normalization of Obfuscated Ownership

The widespread outsourcing of core data-handling functions to third-party software libraries and SDKs—such as tracking pixels from Meta, Google Analytics, or Segment—since the early 2010s has dissolved clear lines of agency, so that developers integrating these tools often do not know, or cannot control, what data is copied or where it flows. These components operate as black boxes within apps and websites, governed by opaque terms of service and dynamically updated code, rendering whistleblower claims about data misuse legally ambiguous because responsibility is technically diffused across multiple vendors. This ecosystem, centered in Silicon Valley–driven SaaS markets, normalizes dependency on unaccountable code, challenging the intuitive belief that software creators retain moral or technical authority over their products. The obscured chain of ownership becomes an institutionalized barrier to both detection and credible reporting.

Obfuscated Audit Chains

Microservices at Facebook between 2014 and 2018 fragmented user data flows across independently managed services, making end-to-end tracking of data misuse dependent on cross-team coordination that was systematically restricted during privacy investigations. This architecture, while touted for scalability, left internal tracing of unauthorized data access, such as the Cambridge Analytica harvesting, dependent on metadata logs deliberately siloed between teams, concealing systemic overreach under operational complexity. The non-obvious consequence was not just inefficiency but intentional jurisdictional friction, in which no single team had both the authority and the data access to reconstruct full data misuse pathways.

Automated Compliance Theater

Google’s deployment of automated data use controls within its Ads Personalization system after 2016 created audit-ready logs that simulated transparency while masking actual data routing through obfuscated machine learning pipelines. These systems generated regulatory-compliant reports, as seen during the 2019 French CNIL inquiry, but excluded runtime data shadow copies used for behavioral modeling—meaning whistleblowers could verify policy adherence only at surface interfaces, not in backend training loops. The critical underappreciated mechanism was that compliance automation didn’t reduce opacity but weaponized it, substituting symbolic audit trails for functional traceability.

Juridical Data Proxies

In Uber’s 2017 ‘Ripley’ program, engineers reclassified rider location pings as ‘service reliability metrics’ rather than personal data, enabling continuous tracking under permissible use categories defined in user contracts and internal data governance charts. This repurposing of data categories bypassed internal whistleblower triggers tied to ‘personal data access logs,’ allowing stealth surveillance that evaded both employee alerts and regulatory scrutiny until exposed by external journalists in 2018. The overlooked insight was not mere mislabeling but a structural exploitation of policy-category gaps, where data's legal identity—not its use—determined traceability.
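The policy-category gap described above can be sketched as the difference between an alert that keys on a record's declared label and one that inspects what the record actually contains. Everything in this example is hypothetical: the category names, field names, and both alert functions are illustrative and do not describe any company's real tooling.

```python
# Hypothetical policy: only records labelled with these categories raise alerts.
PERSONAL_CATEGORIES = {"personal_data"}

# Hypothetical fields that together identify a person at a location.
SENSITIVE_FIELDS = {"rider_id", "lat", "lon"}


def label_based_alert(record):
    """Fires only when the record's declared category is on the flagged list."""
    return record["category"] in PERSONAL_CATEGORIES


def content_based_alert(record):
    """Fires when the payload carries an identifier plus coordinates,
    regardless of how the record is labelled."""
    return SENSITIVE_FIELDS.issubset(record["payload"])


# The same location ping, relabelled as an operational metric.
ping = {
    "category": "service_reliability_metric",
    "payload": {"rider_id": "r-1", "lat": 37.77, "lon": -122.42},
}

print(label_based_alert(ping))    # False: the label sidesteps the trigger
print(content_based_alert(ping))  # True: the contents are unchanged
```

A trigger of the first kind tracks a record's legal identity, while the second tracks its use, which is the distinction the paragraph draws.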

Obfuscated Audit Trails

Standardization of microservices architectures in cloud platforms after 2015 made data flows inherently fragmented, preventing linear tracing of user data misuse. Engineers at companies like Amazon and Google decomposed monolithic systems into ephemeral, distributed services that log data asymmetrically and often discard audit metadata within hours. This shift, while improving scalability and deployability, systematically erased the chronological paper trails whistleblowers once relied on in pre-2010 enterprise systems. The non-obvious consequence is not just complexity, but intentional log fragmentation normalized under the banner of technical efficiency, making internal exposure reliant on access to multiple disjointed systems simultaneously.
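To show why tracing depends on simultaneous access to several disjointed systems, the sketch below tries to reconstruct one request's path from per-service logs using a shared trace identifier. The service names, the `trace_id` field, and the log layout are assumptions made for illustration; real tracing systems differ in detail.

```python
def reconstruct_path(trace_id, service_logs, expected_hops):
    """Return (hops_found, hops_missing) for one trace across per-service logs.

    `service_logs` maps a service name to the log entries that service still
    retains; a service that has already discarded its entries has an empty list.
    """
    found = [
        svc
        for svc in expected_hops
        if any(entry["trace_id"] == trace_id for entry in service_logs.get(svc, []))
    ]
    missing = [svc for svc in expected_hops if svc not in found]
    return found, missing


# Hypothetical three-hop request; the middle service has already purged its logs.
logs = {
    "gateway": [{"trace_id": "t1"}],
    "profile": [],
    "export": [{"trace_id": "t1"}],
}

print(reconstruct_path("t1", logs, ["gateway", "profile", "export"]))
# (['gateway', 'export'], ['profile'])
```

In this sketch, a single service with a short retention window is enough to break the chain, even though the first and last hops remain on record.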

Privileged Abstraction Layers

Rise of third-party data infrastructure providers like Segment and Snowflake after 2012 introduced contractual and technical barriers that isolate developers from end-use visibility. These platforms centralize data pipelines behind APIs and access controls governed by legal agreements that explicitly prohibit reverse engineering or metadata retention by client-side engineers. As a result, even insiders are barred from observing how data is combined, enriched, or monetized downstream. The neglected insight is that abstraction—often celebrated as modular design—has become a governance mechanism, where the most meaningful data manipulations occur in legally protected, technically opaque vendor environments beyond any single employee’s investigative reach.

Explore further:

- What would happen if regulators required engineering teams to design audit trails for investigative clarity instead of compliance checklists?

- If automated compliance logs can look transparent but hide critical data flows, how often do whistleblower reports fail because they’re limited to reviewing only what these systems choose to reveal?

Where do whistleblower claims in the tech sector tend to emerge from, and how does the location of teams relate to where evidence is stored and preserved?

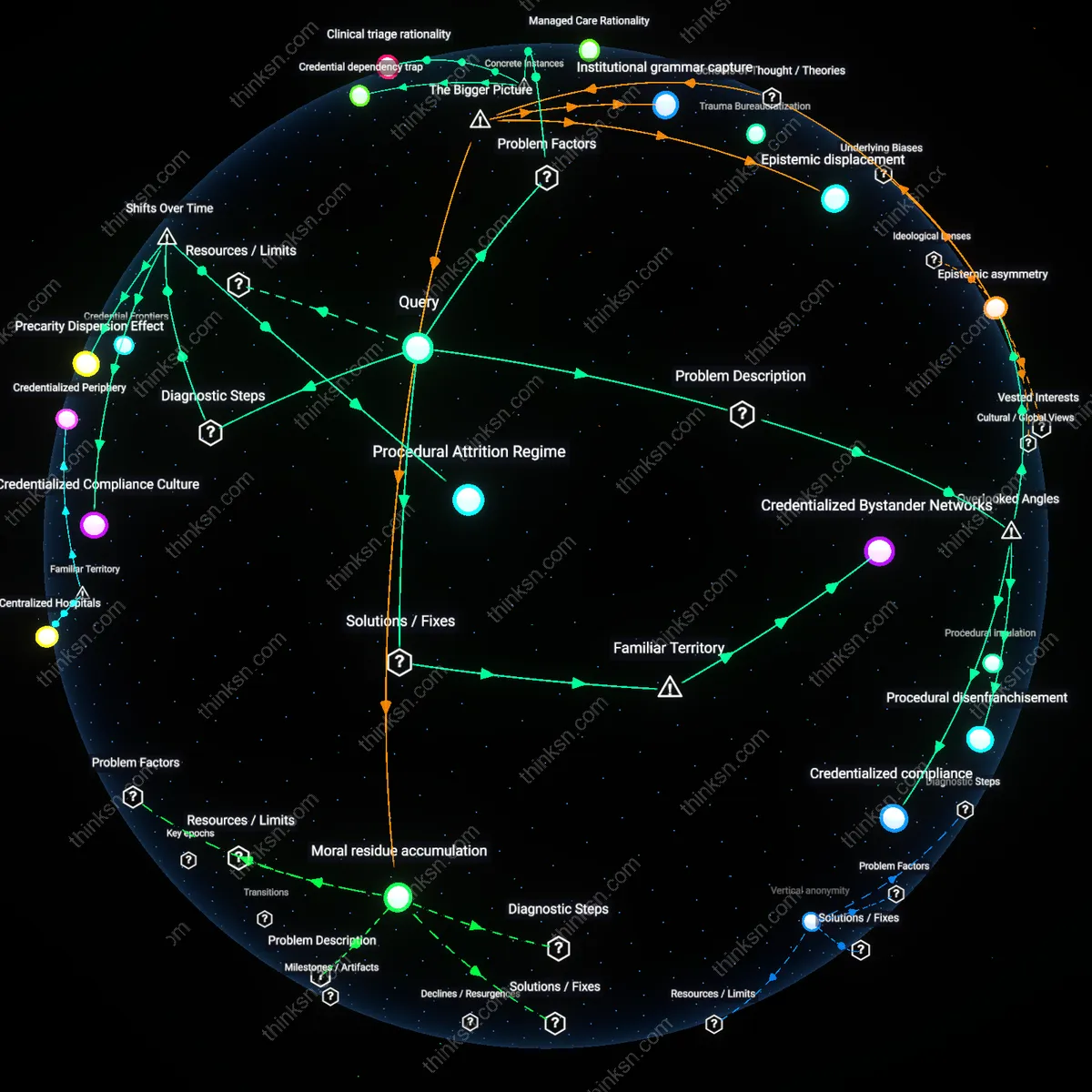

Offshore Data Enclaves

Whistleblower claims in the tech sector now originate most frequently from engineering teams based in Northern California, a shift from the early 2000s, when such disclosures emerged from isolated data center operators in the Midwest and Pacific Northwest. This transition reflects the consolidation of technical control and data storage under centralized cloud infrastructures managed from Silicon Valley corporate campuses, where engineers with cross-system access accumulate de facto authority over geographically dispersed server farms. The legal and physical separation between decision-making hubs in California and data storage nodes across international borders, such as AWS facilities in Ireland or Singapore, creates a fragmented evidentiary landscape in which proof of misconduct is stored in jurisdictions different from that of the team that first observed it. What remains underappreciated is how this spatial decoupling, cemented after the 2013 Snowden revelations, has made internal disclosures contingent not on proximity to servers but on access to orchestration platforms, producing new forms of technical enclosure.

Temporal Custody Chains

The origin of whistleblower claims has shifted from discrete physical locations to ephemeral data states following the industry-wide adoption of end-to-end encryption and auto-deletion protocols after 2016, meaning evidence is now preserved not in static repositories but across transient custody chains governed by cryptographic key rotations and multi-jurisdictional compliance algorithms. Teams working on encrypted messaging platforms, like those at Signal or within WhatsApp’s infrastructure, now generate most disclosures not from where data is stored, but from where decryption events occur, such as in monitored corporate offices in Dublin or Vancouver that serve as legal compliance chokepoints. Because these moments of data revival are time-bound and jurisdictionally constrained, the ‘location’ of evidence shifts from territorial servers to procedural thresholds, such as audit windows or lawful intercept handovers. The underappreciated outcome is that whistleblower viability now hinges on timing access during narrow decryption intervals, revealing a custodial temporality that supersedes geography.

Archival Geography

Whistleblower claims in the tech sector most frequently originate from engineering teams embedded in satellite data centers in jurisdictions far from headquarters, such as Google’s Dublin hub or AWS’s data centers in Bahrain, because these sites are tasked with maintaining real-time backups and regulatory compliance across multiple regimes, leading to unintended accumulation of incriminating metadata trails that persist beyond corporate purge cycles. The proximity of local engineers to both operational systems and legal gray zones enables them to retain forensic access to version logs and access records that are officially classified as ephemeral, revealing patterns of data misuse that headquarters teams assume are erased. This dynamic is overlooked because standard narratives focus on Silicon Valley executives as the epicenter of both decision-making and exposure, obscuring how physical data storage decentralization creates durable evidentiary footholds in peripheral nodes.

Toolchain Asymmetry

The most actionable whistleblower evidence emerges not from executive oversight teams but from DevOps engineers in mid-tier cloud infrastructure firms like Fastly or Cloudflare, who maintain cross-client logging tools that capture unredacted API call patterns, including unauthorized data scraping by privileged internal teams at client companies. These engineers retain access to immutable audit trails embedded in debugging and billing pipelines—tools built for internal reliability but which inadvertently archive evidence of external abuse—because billing compliance requires data retention longer than privacy policies allow deletion. This source is systematically ignored because public discourse treats whistleblowing as a top-down moral decision by informed insiders, not a byproduct of asymmetric tool access in service-layer technicians who are technically outside the offending organization yet possess irreplaceable evidence.

Maintenance Epistemology

Whistleblower claims crystallize most reliably from immigrant contract workers in facilities-management divisions at companies like Meta’s Singapore operations or Apple’s Manila support centers, who perform physical server maintenance and thus develop informal knowledge of shadow partitions and offline storage drives used to bypass automated compliance checks, because firmware-level backups are routinely retained in non-networked storage for disaster recovery and remain invisible to centralized audits. These workers, often excluded from formal reporting channels and non-disclosure agreements, become custodians of material proof through hands-on interaction with decommissioned hardware, creating a parallel, embodied archive disconnected from digital retention policies. This pathway is rarely acknowledged because standard investigations prioritize digital footprints over tacit, site-specific knowledge held by precarious laborers without institutional authority.