Does FCC Fragmentation Stunt AI Regulation?

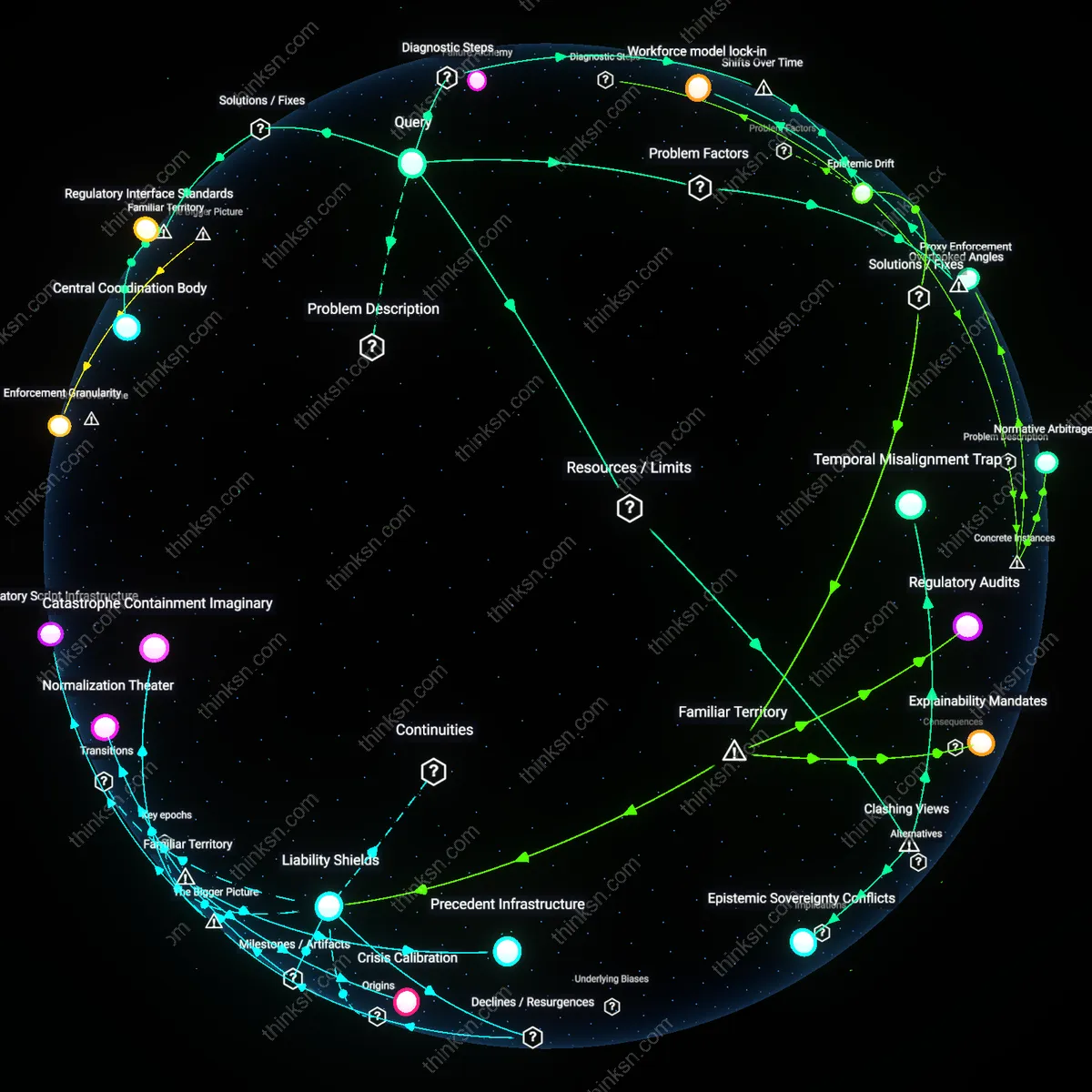

Analysis reveals 8 key thematic connections.

Key Findings

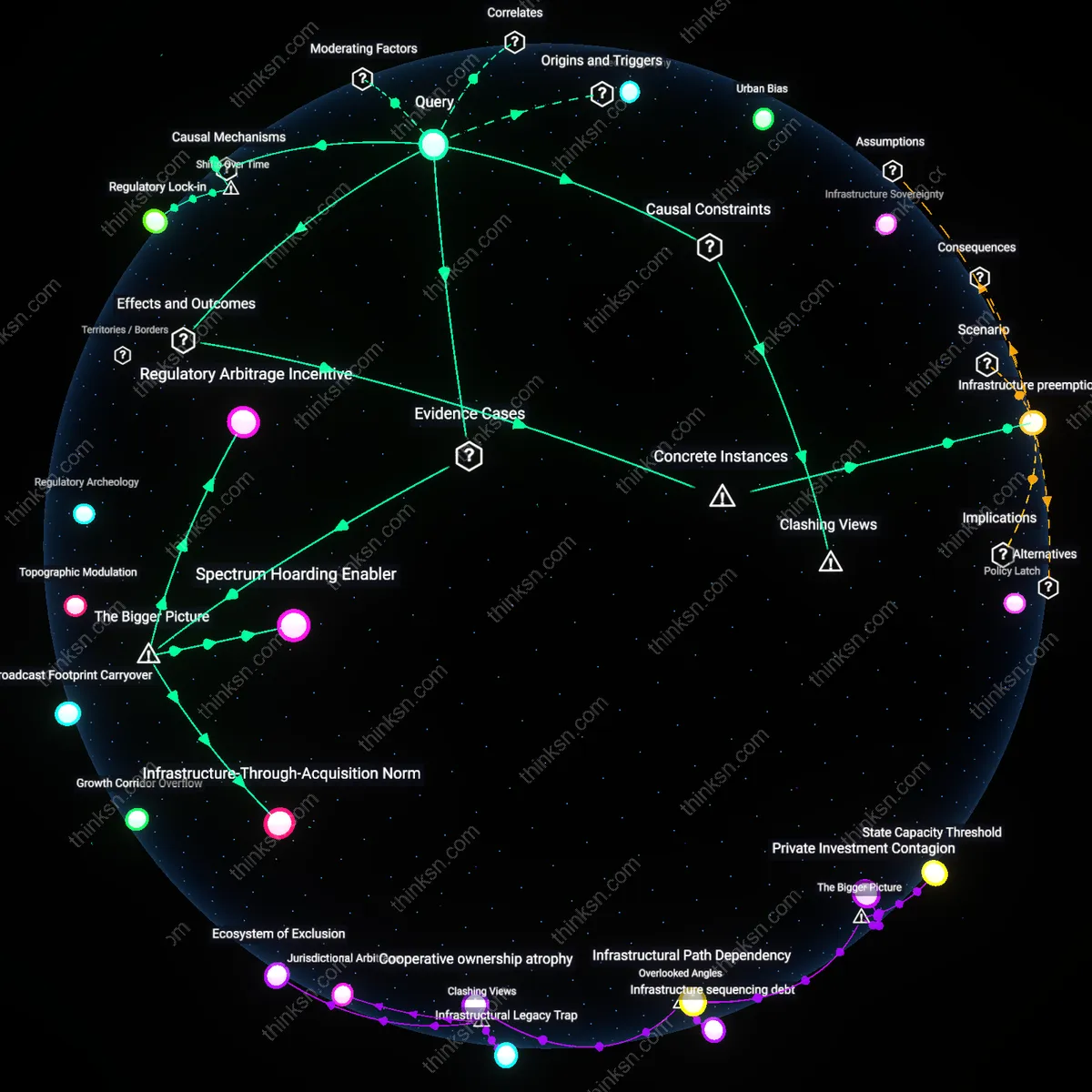

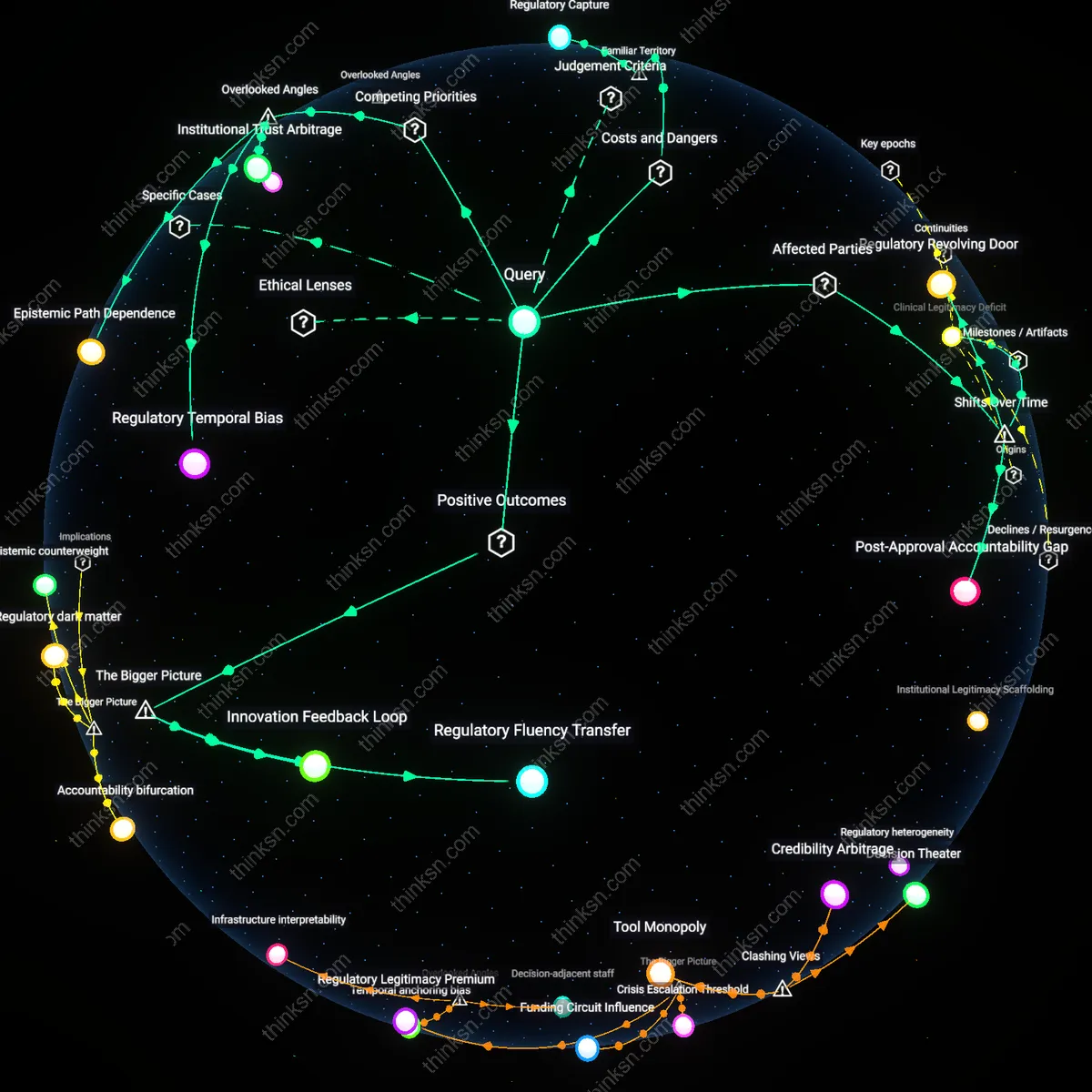

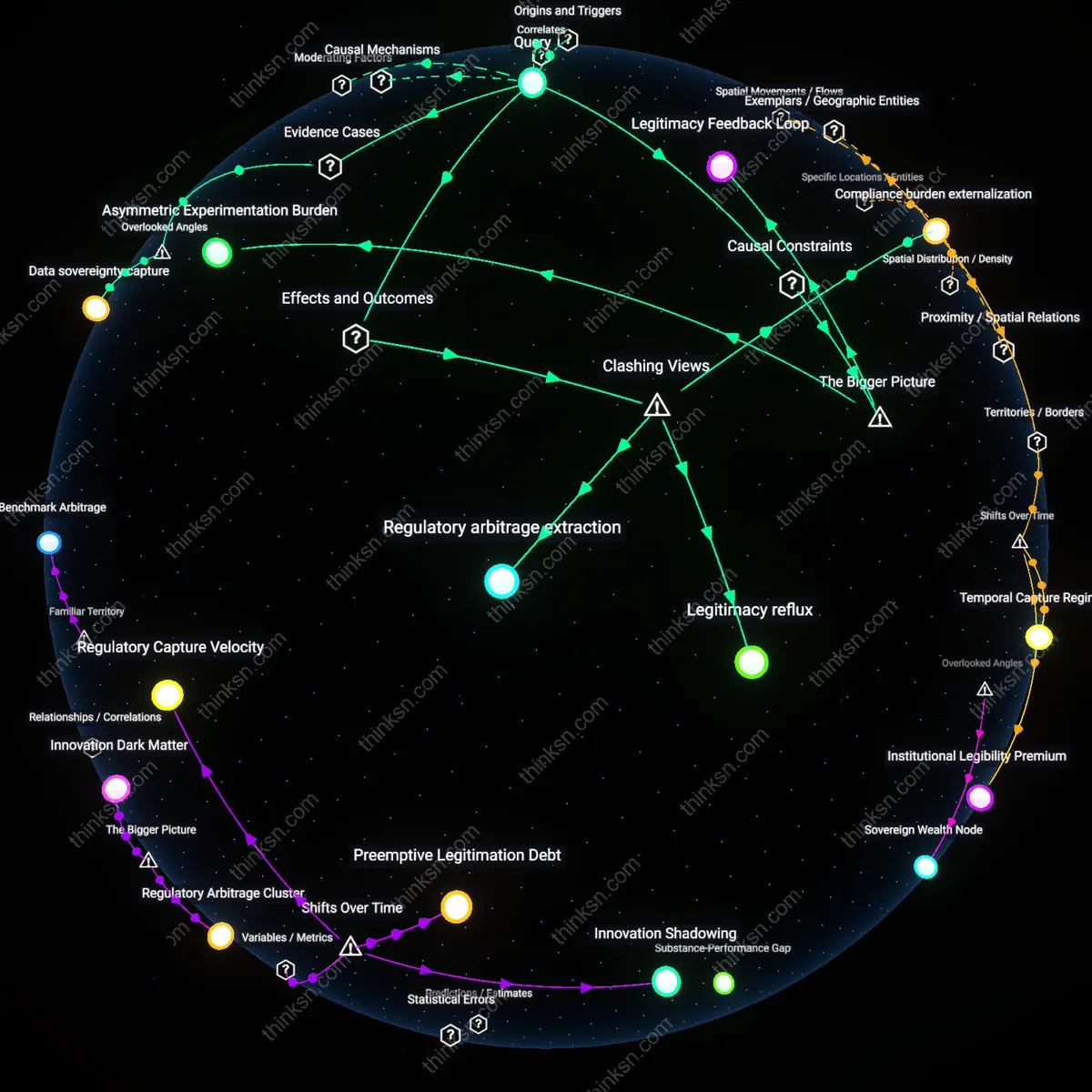

Infrastructure Jurisdictional Drift

Sectoral regulatory boundaries fail to align with the material pathways through which AI systems are provisioned, particularly the cloud computing infrastructures that host AI workloads for healthcare, finance, and defense under uniform conditions. AWS, Azure, and Google Cloud operate vast data centers that serve regulated sectors, yet no agency has clear authority over the shared computational substrate (its energy sourcing, hardware optimization priorities, and API governance), which implicitly shapes how AI models are trained, scaled, and constrained. The FCC does not regulate compute allocation, the EPA does not assess the load profiles of AI workloads, and the FTC lacks visibility into backend orchestration, yet these backend decisions determine the de facto compliance environment for AI across sectors. The overlooked dynamic is that jurisdiction follows policy domains, not infrastructure layers. Core technological decisions with cross-cutting consequences therefore escape oversight entirely, not because regulators are negligent, but because those decisions sit below the level at which any sectoral regulator looks.

Workforce Model Lock-In

The entrenched career pipelines within sectoral agencies reproduce siloed thinking: they reward domain-specific legal and technical expertise, and in doing so discourage cross-agency fluency in AI governance. Career civil servants at the SEC, FAA, or HHS advance by mastering narrow regulatory canons such as securities law, aviation safety, or clinical trial protocols, without structural incentives or rotational programs to develop shared mental models of AI risk that transcend domains. As a result, even when interagency task forces convene, participants interpret AI through the lens of their home agency’s enforcement culture and statutory language, which prevents a common regulatory vocabulary from emerging. The overlooked factor is that bureaucratic human capital, not just statutory authority, determines regulatory cohesion. Agency staffing models quietly entrench fragmentation by treating multidisciplinary competence as peripheral rather than central to modern technological governance.

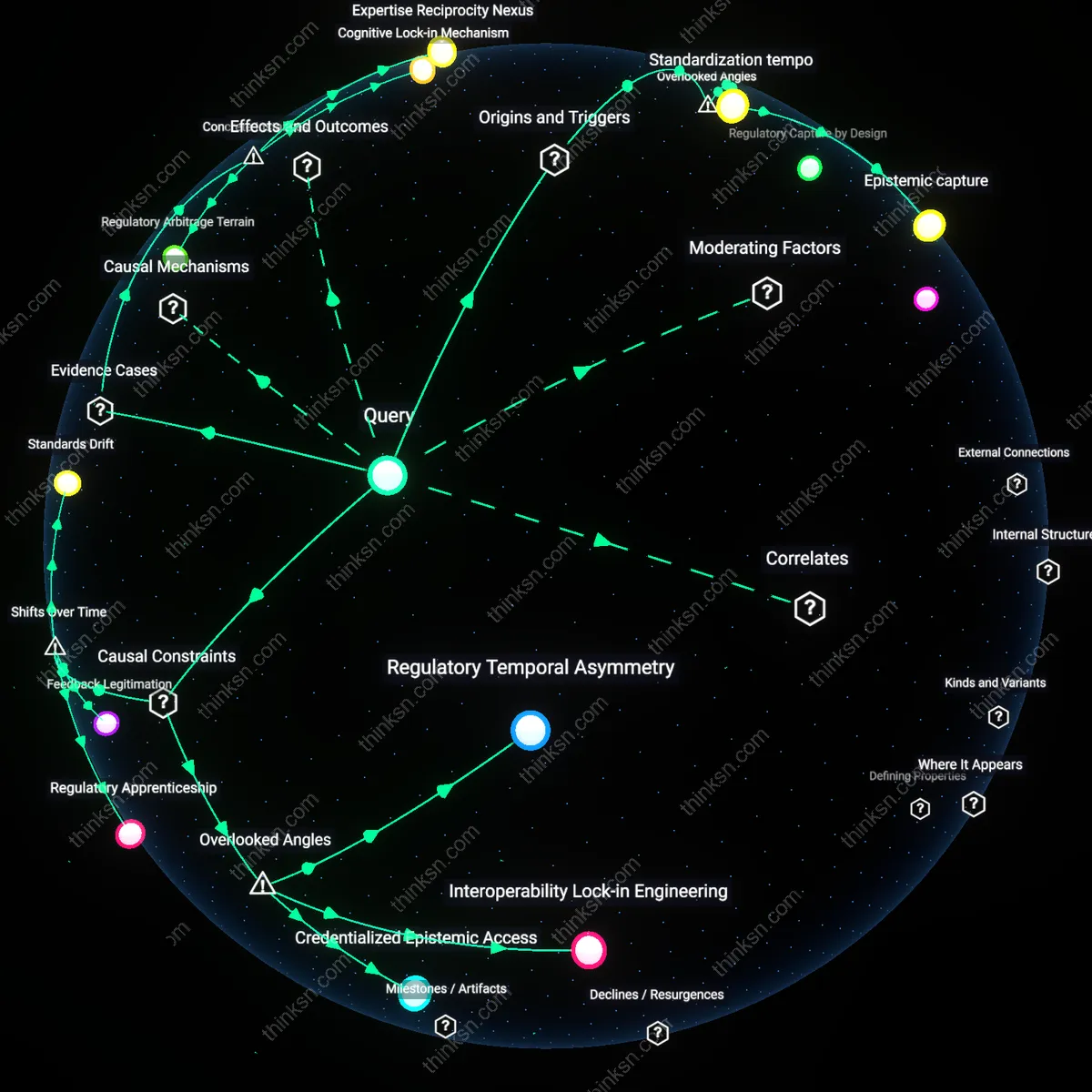

Epistemic Drift

Since the early 2010s, the accelerating pace of AI development has outstripped the slow, precedent-based way sectoral agencies accumulate knowledge, creating a growing epistemic gap between regulatory understanding and technological capability. In the 1990s, FCC engineers could grasp evolving telecom infrastructure incrementally. Today’s machine learning systems involve opaque, adaptive architectures that resist traditional risk modeling, so agencies like the FDA or NHTSA rely on external consultants or industry self-assessment, which hollows out independent oversight capacity. This marks a shift from regulatory lag to systemic comprehension failure: agencies no longer just react slowly but operate without coherent mental models of the technologies they oversee. The overlooked consequence is that sectoral specialization, once a source of expertise, now isolates agencies from the cross-domain literacy needed to interpret AI’s cascading societal effects.

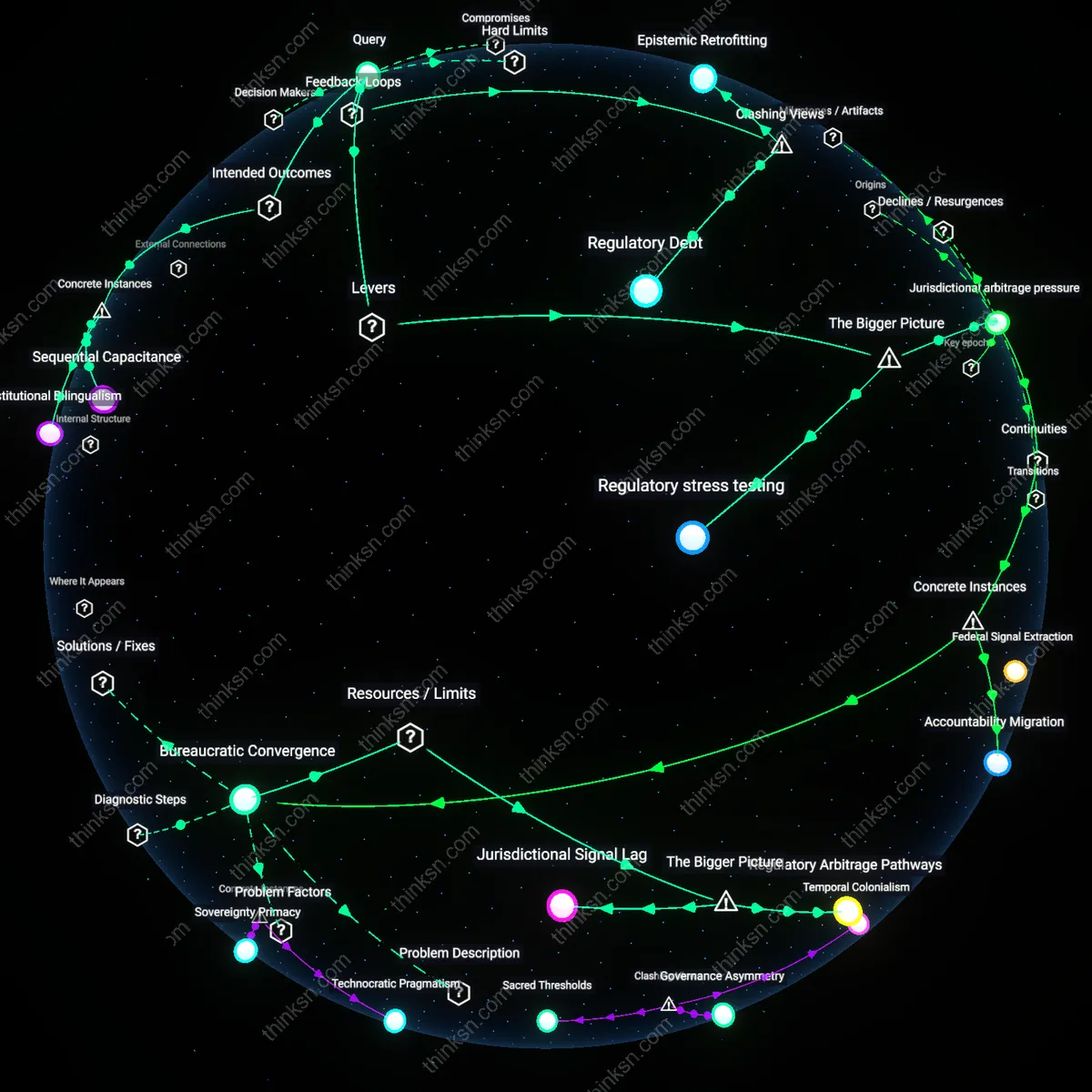

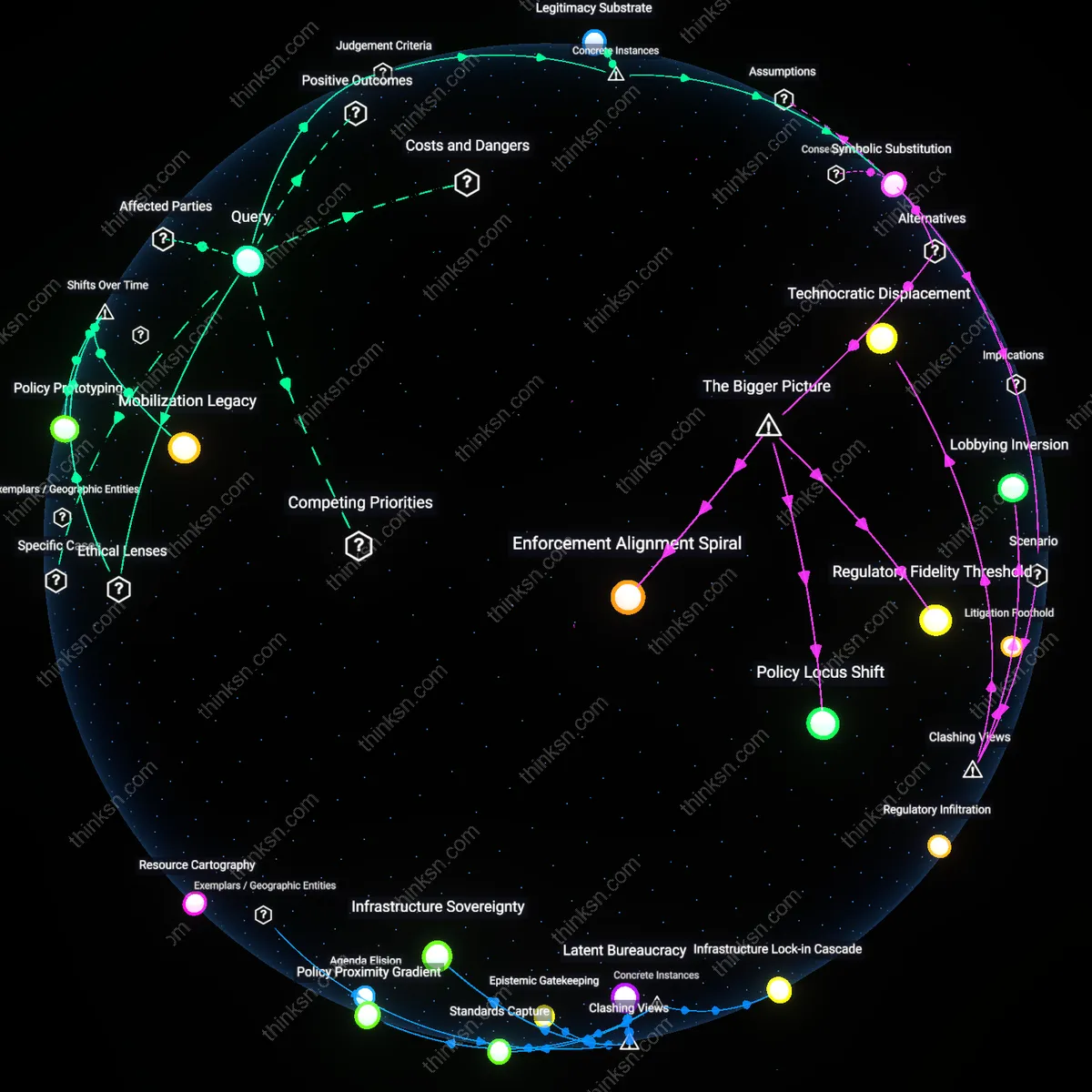

Central Coordination Body

Establish a White House–level council with binding authority over sectoral agencies to align AI regulation. This council would include the heads of the FCC, FTC, FDA, and DHS, and would operate under a mandate established by executive order to resolve jurisdictional conflicts and set cross-agency technical standards. What’s underappreciated is that the current fragmentation stems not from a lack of expertise but from the absence of any mechanism to enforce coordination. Agencies comply with consultation requests only voluntarily, which leaves interagency task forces symbolic rather than operational.

Regulatory Interface Standards

Mandate interoperable compliance templates for AI systems that all federal agencies must adopt when issuing rules. These templates—developed by NIST in coordination with OMB—would define common data disclosure, impact assessment, and audit requirements regardless of sector. The overlooked reality is that regulatory incoherence often stems not from policy goals but from incompatible procedural demands, such that a healthcare AI and a financial AI face structurally different reporting burdens even when posing similar risks.
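As a rough illustration of what a shared template could look like in practice, the sketch below checks a filing against one set of required sections regardless of sector. The class name, field names, and example systems are hypothetical, chosen only to mirror the three requirement types named above; they do not describe any existing NIST or OMB format.

```python
from dataclasses import dataclass

# Hypothetical section names, mirroring the three requirement types named above.
REQUIRED_SECTIONS = ("data_disclosure", "impact_assessment", "audit_requirements")


@dataclass
class ComplianceFiling:
    """One filing for one AI system; the same shape applies in every sector."""
    system_name: str
    sector: str
    data_disclosure: str = ""
    impact_assessment: str = ""
    audit_requirements: str = ""


def missing_sections(filing: ComplianceFiling) -> list[str]:
    """Return the required sections left blank; the sector plays no role in the check."""
    return [name for name in REQUIRED_SECTIONS if not getattr(filing, name).strip()]


# Two illustrative filings from different sectors with the same gaps.
health = ComplianceFiling("triage-model", "healthcare", data_disclosure="training data summary")
finance = ComplianceFiling("credit-model", "finance", data_disclosure="training data summary")

# Both filings are checked against the same template, so they report the same gaps.
print(missing_sections(health))
print(missing_sections(finance))
```

The point of the sketch is only that the check never branches on `sector`: a healthcare AI and a financial AI posing similar risks would face the same reporting structure.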

Jurisdictional Triggers

Replace agency jurisdiction based on static sectoral boundaries with dynamic triggers tied to AI system capabilities, such as autonomous decision-making at scale or real-time behavioral manipulation. When an AI system crosses such a threshold, regulatory authority would automatically shift to a designated lead agency regardless of industry context. The non-obvious insight is that domain-specific oversight ignores how AI can function identically across sectors once its behavior reaches a systemic risk level. Current frameworks treat a recommendation engine in social media and one in retail as entirely separate, despite identical algorithmic architectures and comparable societal impacts.
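The trigger mechanism described above can be sketched as a small rule table. Everything specific here is an assumption made for illustration: the numeric threshold, the attribute names, and the placeholder lead agencies (`LEAD_AGENCY_A`, `LEAD_AGENCY_B`) are hypothetical and are not drawn from any statute or proposal.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class AISystem:
    name: str
    sector: str                          # industry context, e.g. "retail"
    autonomous_decisions_per_day: int
    real_time_behavioral_manipulation: bool


@dataclass(frozen=True)
class Trigger:
    label: str
    lead_agency: str                     # hypothetical placeholder designation
    fires: Callable[[AISystem], bool]


# Hypothetical threshold for "at scale"; a real rule would have to define this.
SCALE_THRESHOLD = 1_000_000

TRIGGERS = [
    Trigger("real-time behavioral manipulation", "LEAD_AGENCY_A",
            lambda s: s.real_time_behavioral_manipulation),
    Trigger("autonomous decision-making at scale", "LEAD_AGENCY_B",
            lambda s: s.autonomous_decisions_per_day >= SCALE_THRESHOLD),
]


def assign_regulator(system: AISystem) -> str:
    """Return the lead agency for the first trigger that fires, else the sector default."""
    for trigger in TRIGGERS:
        if trigger.fires(system):
            return trigger.lead_agency
    return f"sector regulator ({system.sector})"


social = AISystem("feed-ranker", "social_media", 5_000_000, False)
retail = AISystem("product-recommender", "retail", 5_000_000, False)
small = AISystem("pilot-recommender", "retail", 2_000, False)

for system in (social, retail, small):
    print(system.name, "->", assign_regulator(system))
```

Under these assumed rules, the two large recommendation engines land with the same lead agency even though they sit in different industries, while the small pilot stays with its sector regulator. Ordering the trigger list is itself a design choice, since the first matching trigger wins.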

Temporal Misalignment Trap

Yes, the reliance on legacy agencies impedes cohesive AI regulation because their budget cycles, hiring authorities, and rulemaking timelines are mismatched with AI’s development pace. The FCC, for example, needed roughly five years to reclassify broadband under Title II, while generative AI models iterate in months, and its legal and technical staff cannot be rapidly scaled to assess real-time deployment risks. This creates a systemic lag in which regulatory tools are obsolete before they are finalized. Contrary to the assumption that agencies can 'adapt,' the real constraint is temporal immobility: emerging technologies don’t wait for public comment periods.

Epistemic Sovereignty Conflicts

Yes, sectoral agencies resist cohesive AI governance not due to incapacity but to protect their domain-specific knowledge authority. The FDA guards its validation protocols for AI in medical devices, rejecting NHTSA-style frameworks from transportation, or comparable ones from finance, even when interoperability would improve safety. These are not bureaucratic inefficiencies but deliberate assertions of epistemic control: agencies treat AI not as a shared technological layer but as a tool subordinate to their existing mandates. The underappreciated reality is that cohesion fails not from a lack of coordination but from competing regimes of expertise that treat integration as a threat to legitimacy.