Does More Media Mean Less Truth?

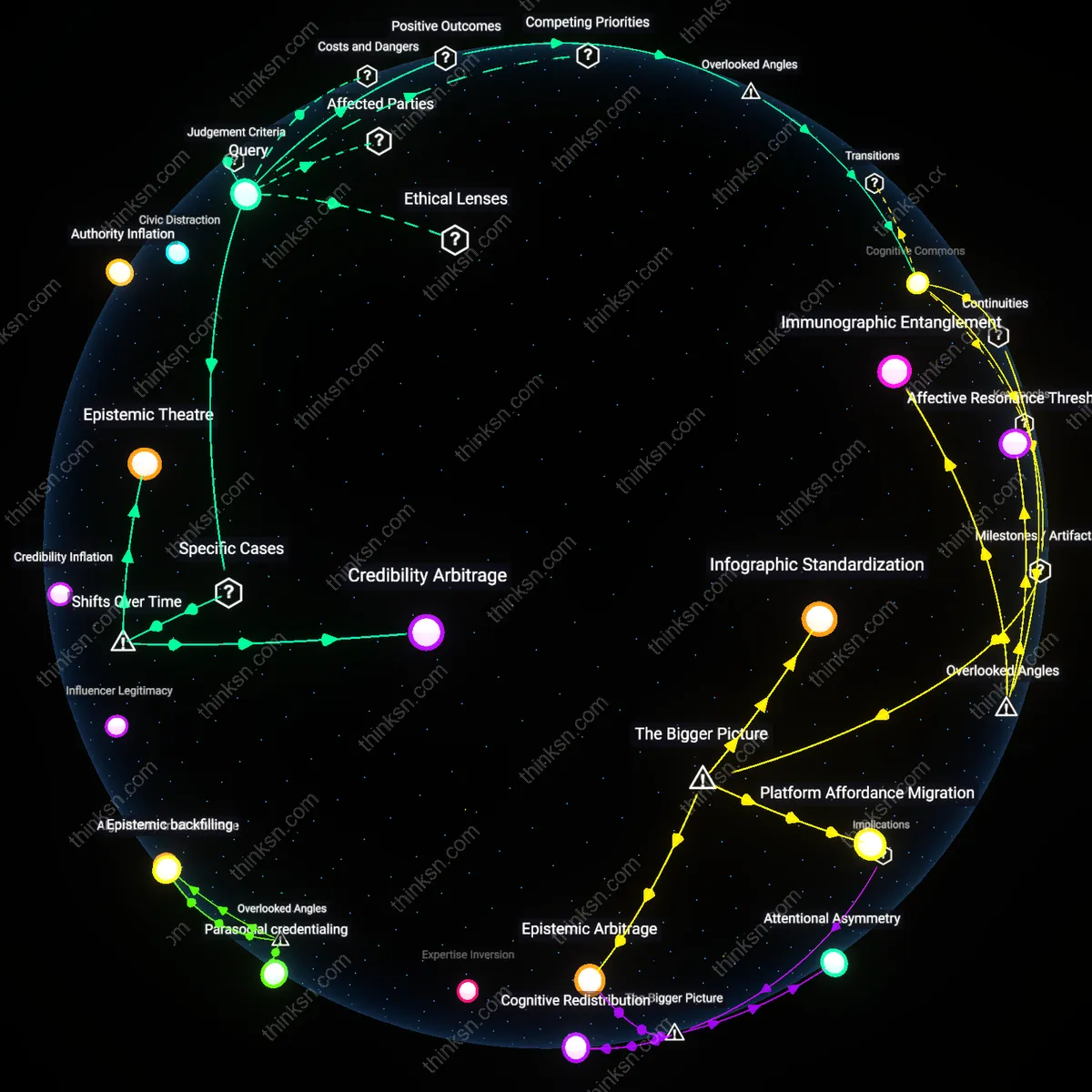

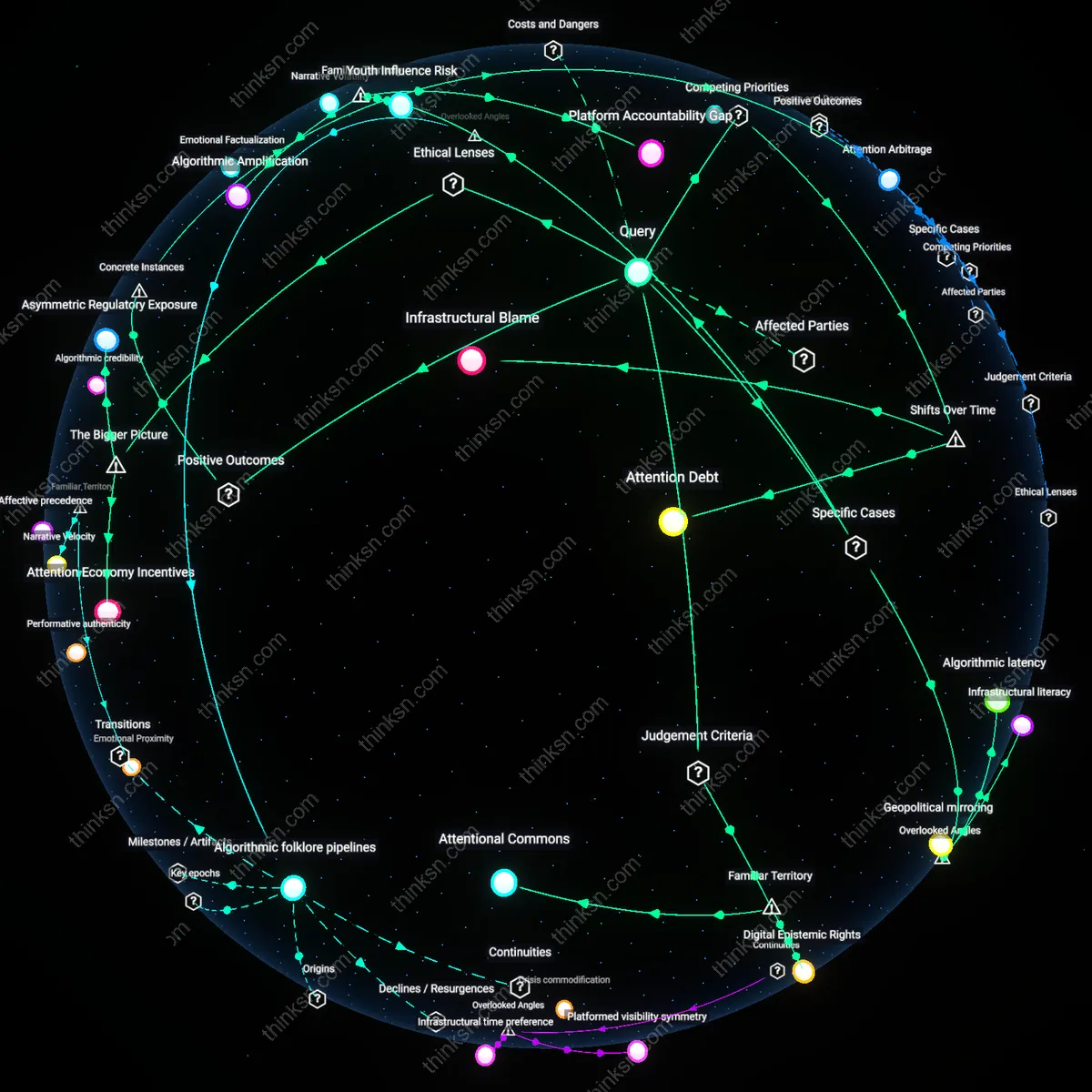

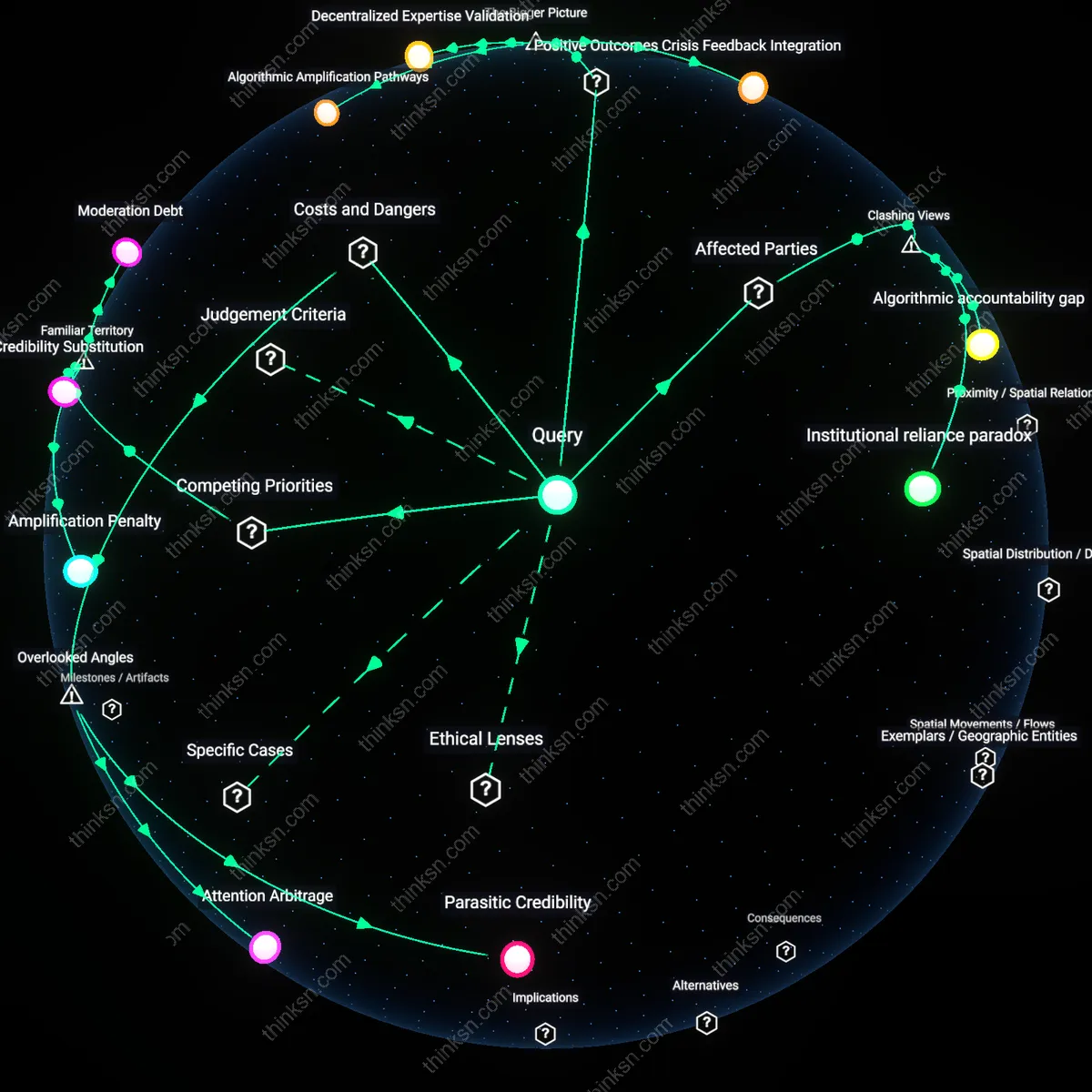

Analysis reveals 11 key thematic connections.

Key Findings

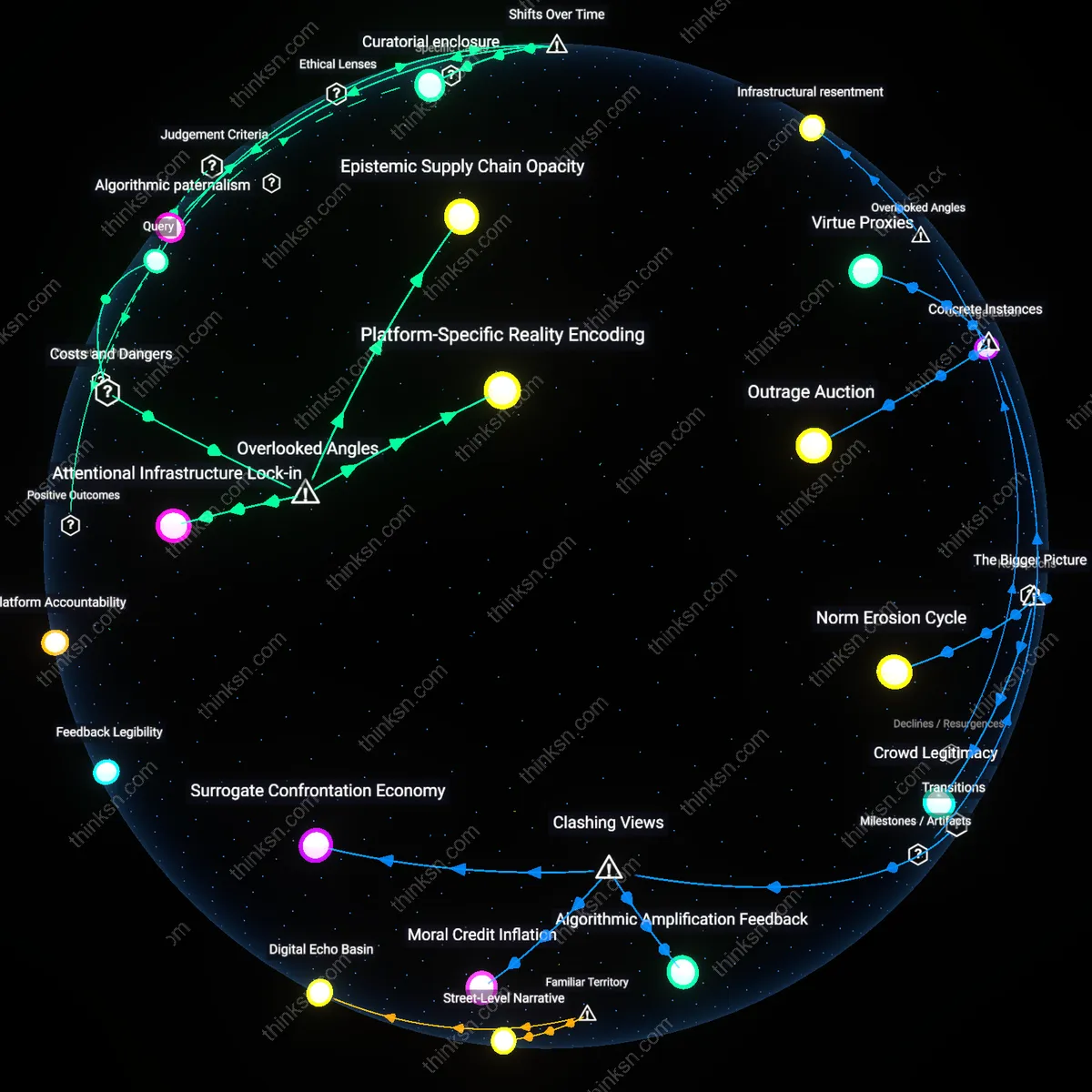

Epistemic asymmetry

Accessing more sources in a fragmented media environment can enhance truth acquisition among expert institutions precisely because they are better equipped to decode and contextualize coordinated misinformation as a signal of adversarial intent. Intelligence agencies, scientific bodies, and investigative journalists exploit the abundance of fringe content to map disinformation networks, trace source provenance, and anticipate manipulation patterns—turning noise into intelligence. This reframes misinformation not as a contaminant of truth but as a byproduct that reveals the operational footprint of bad actors, a dynamic systematically underappreciated when focusing only on public vulnerability. Most analyses assume increased exposure uniformly degrades understanding, but for institutional stewards of knowledge, the proliferation of sources serves as a distributed sensor array.

Credulity infrastructure

Expanding source access undermines truth not primarily through content saturation but by reinforcing preexisting cognitive dependencies among economically marginalized demographics who rely on algorithmically amplified platforms as their sole information pipeline. Rural populations, under-resourced seniors, and under-schooled communities experience not choice but coercion in their media consumption, where algorithmic curation mimics familiarity and trust while quietly funneling them into disinformation ecosystems. The destructive mechanism isn't misinformation itself but the erosion of external reference points that would otherwise enable cross-verification—making the illusion of abundance a trap rather than a liberation. Contrary to the liberal assumption that pluralism of sources fosters resilience, for these groups, fragmentation dismantles the very infrastructure of discernment.

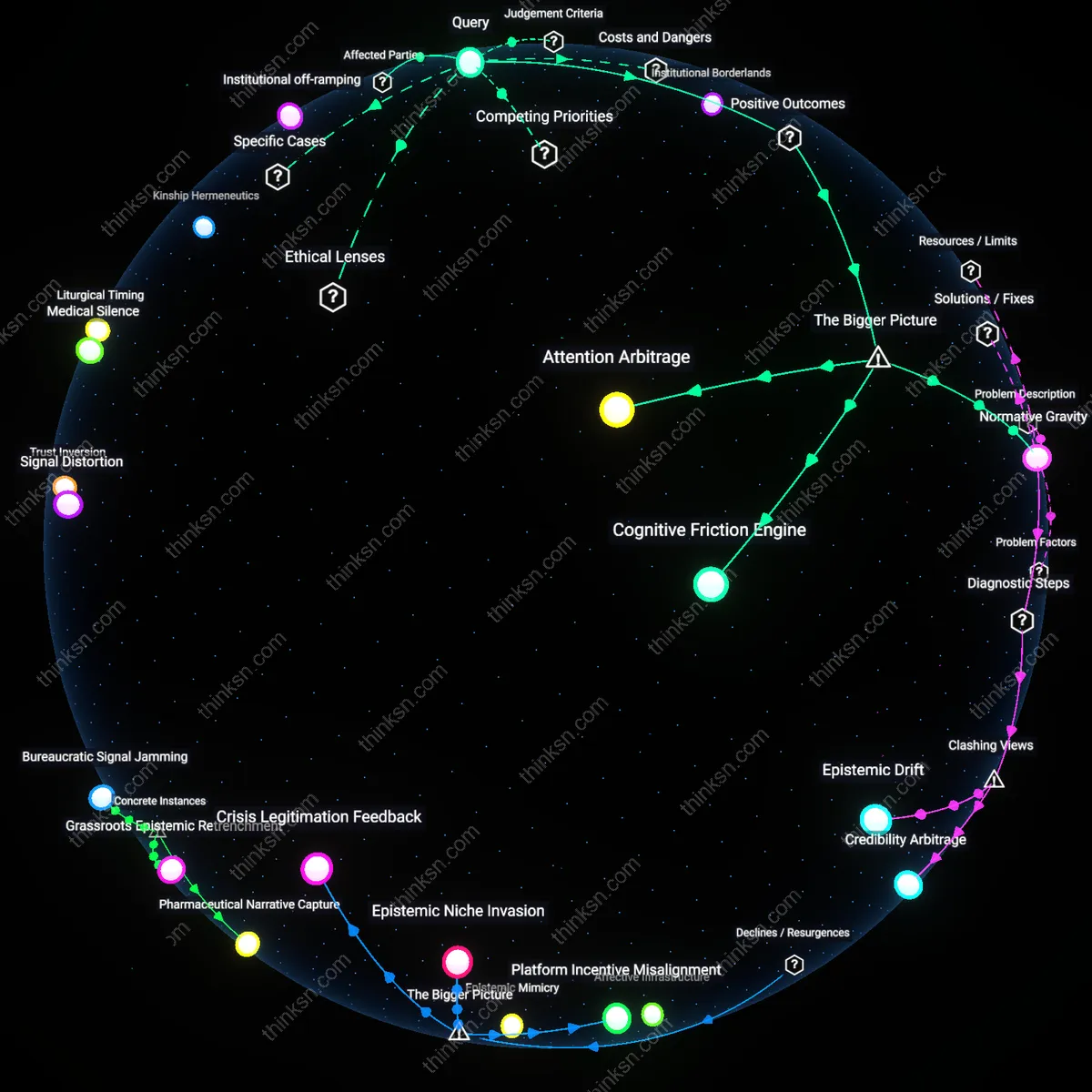

Narrative arbitrage

Coordinated misinformation thrives not by winning belief but by forcing reactive clarification from mainstream institutions, thus shifting the burden of truth maintenance onto public authorities while fringe actors extract legitimacy through controversy. Universities, regulatory agencies, and fact-checking consortia must expend resources to debunk claims that gain traction solely because they circulate widely across fragmented platforms, thereby granting them the appearance of relevance. This creates a perverse incentive structure where bad-faith actors profit from institutional attention, not public gullibility, transforming the pursuit of truth into a reactive performance. Unlike conventional wisdom that views misinformation as a battle for minds, the real damage lies in the exhaustion of truth-preserving institutions through engineered epistemic debt.

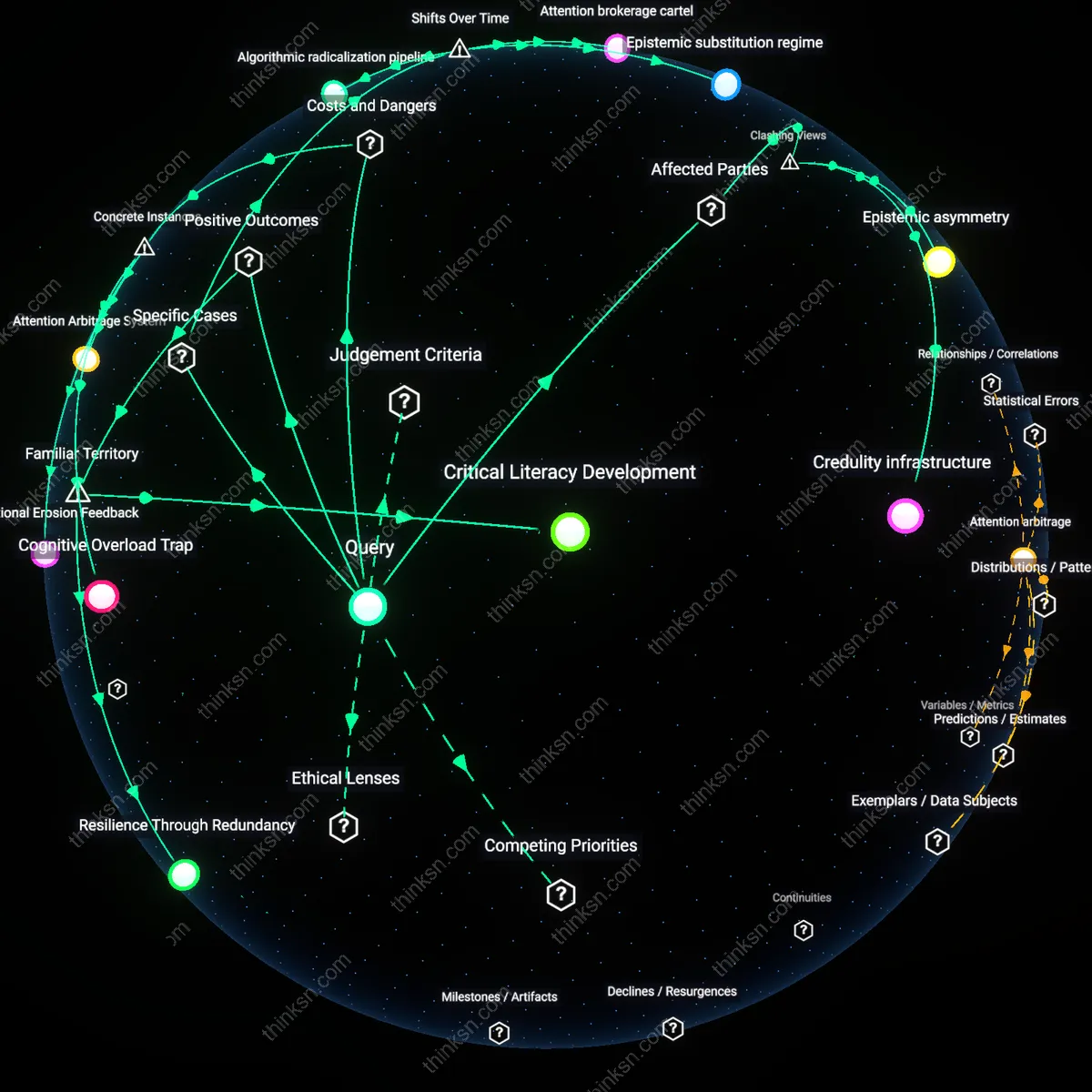

Critical Literacy Development

Accessing more sources in a fragmented media environment strengthens public critical literacy by forcing audiences to compare conflicting accounts of events, which activates cognitive verification behaviors. Individuals who encounter contradictory reports from outlets like Fox News and MSNBC, and from independent fact-checking platforms such as PolitiFact, begin to scrutinize evidence, question sourcing, and assess bias, turning passive consumption into active evaluation. This shift is most evident during high-stakes events like elections or pandemics, where mismatched narratives push even non-expert users to adopt techniques resembling media forensics. The underappreciated outcome is that fragmentation, often criticized for driving polarization, functions as an epistemic gymnasium where truth-testing becomes a daily habit rather than an academic exercise.

Resilience Through Redundancy

Exposure to multiple sources, even unreliable ones, builds societal resilience to misinformation by ensuring that factual corrections emerge from within the same channels where falsehoods spread. For example, when false claims about vaccine safety proliferate on social media, public health advocates, independent scientists, and algorithm-aided correction networks like Meta’s fact-checking partners respond directly in the same feeds, creating corrective friction in real time. This dynamic transforms misinformation spikes into teachable moments where audiences see truth-testing in action. The underappreciated reality is that a fragmented system allows truth to compete on the same terrain as lies, making resistance visible and participatory rather than dependent on top-down censorship.

Cognitive Overload Trap

During the 2016 U.S. presidential election, access to more sources amplified exposure to coordinated misinformation: voters encountered conflicting narratives from outlets like RT, Breitbart, and fringe forums, which overwhelmed their ability to verify claims. The mechanism was not deception alone but the erosion of epistemic thresholds under information surplus. The episode shows how pluralism without verification infrastructure pushes audiences toward heuristic-based consumption rather than critical evaluation.

Institutional Erosion Feedback

During the 2019 measles outbreak in Clark County, Washington, the proliferation of alternative health media undermined public trust in CDC guidelines. Parents consulted vaccine-critical communities on Facebook and YouTube, where emotionally resonant anecdotes displaced epidemiological data. This dynamic recast medical authority as a negotiable preference, demonstrating how decentralized sourcing entrenches belief polarization even when factual consensus exists.

Attention Arbitrage System

During Brazil’s 2018 election, coordinated WhatsApp groups disseminated edited audio clips and doctored images of candidate Fernando Haddad. The material spread through encrypted networks faster than fact-checkers could respond. The episode illustrates how fragmentation lets bad actors exploit attention economies by weaponizing source multiplicity itself: within closed, high-trust chains, veracity becomes secondary to shareability.

Algorithmic radicalization pipeline

The rise of recommendation algorithms on YouTube after 2012 transformed misinformation exposure from passive consumption to active cultivation, where users are systematically guided from mainstream content to extremist conspiracies. This shift, marked by the platform’s pivot to engagement-based ranking, turned isolated users into embedded audiences within coordinated networks like the anti-vaccine or flat Earth movements, effectively replacing editorial curation with behavioral optimization. The non-obvious consequence is that truth erosion is no longer driven by false content alone but by the temporal trajectory of algorithmic navigation, which rewards escalating extremity over time.

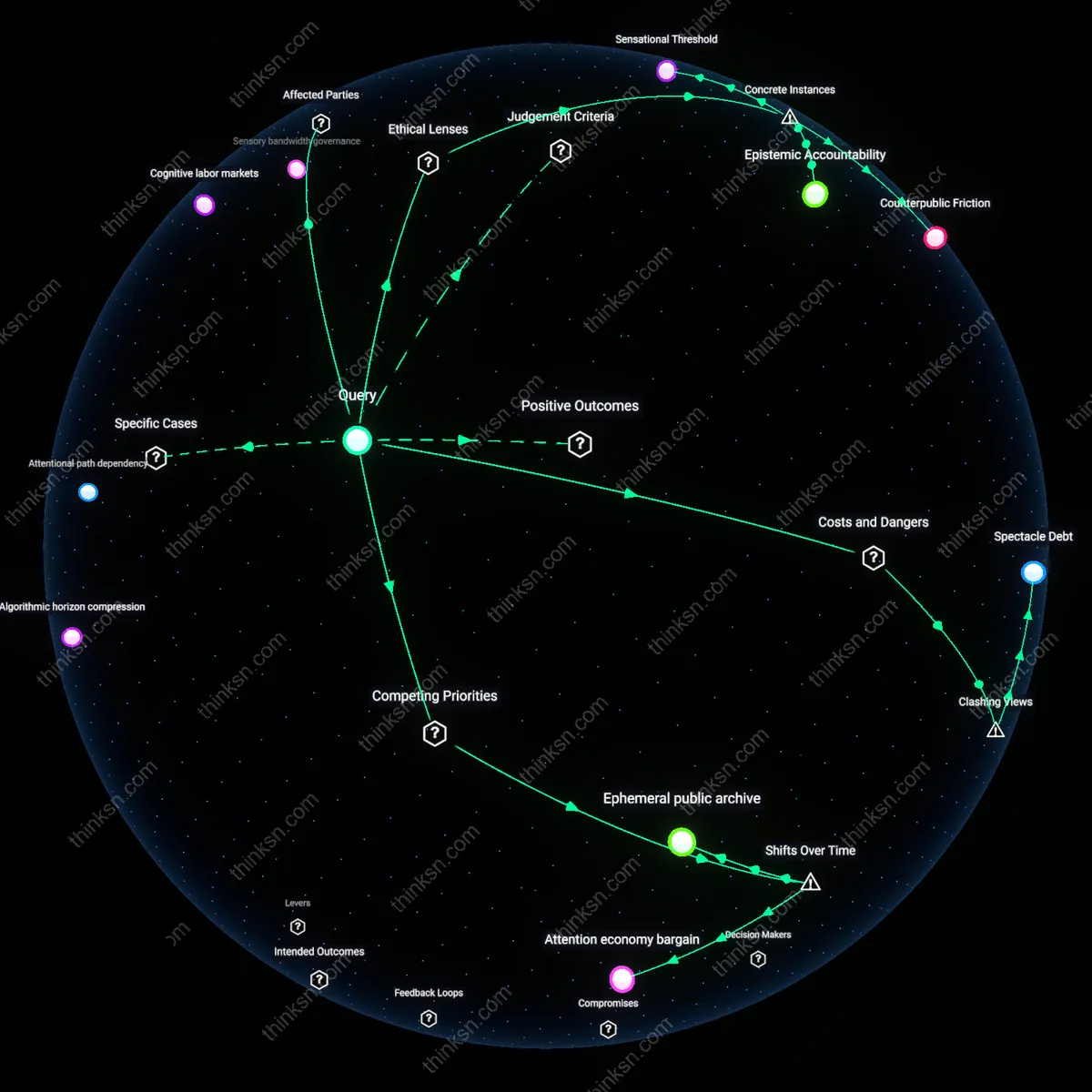

Attention brokerage cartel

The 2016 U.S. election revealed that coordinated misinformation operations by actors such as the Internet Research Agency exploited media fragmentation not by flooding all outlets equally, but by targeting niche platforms—particularly Facebook groups and alternative news sites—where trust-based communities could be weaponized. As legacy media lost audience cohesion, these actors inserted wedge issues that evolved from disinformation into identity-defining beliefs, shifting the mechanism from deception to social realignment. The critical but overlooked transformation is that misinformation ceased to be a content problem and became a structural feature of decentralized attention economies.

Epistemic substitution regime

Since the early 2020s, the proliferation of AI-generated media across messaging platforms like Telegram and WhatsApp in countries such as India and Brazil has replaced the verification of sources with the reinforcement of social identity, where fabricated audio, images, and news are treated as evidence within closed networks. This evolution marks a shift from misinformation as distortion to misinformation as functional replacement—where fabricated content performs the social role of truth, especially during electoral or communal crises. The key insight is that the fragmentation of media access now enables not just divergent narratives but entirely disjointed epistemologies that cohere internally without requiring external validation.