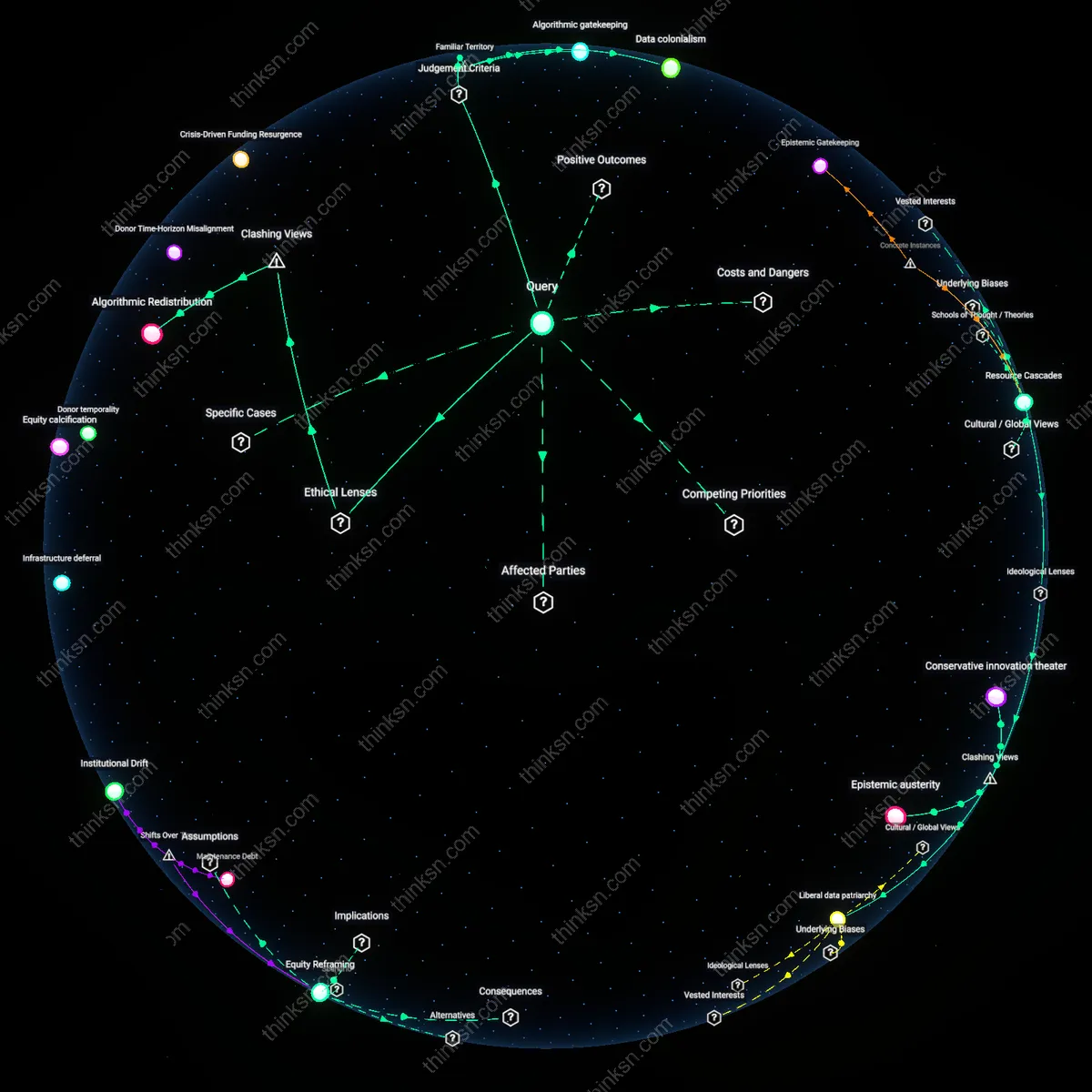

Does AI Efficiency in Grants Harm Underrepresented Applicants?

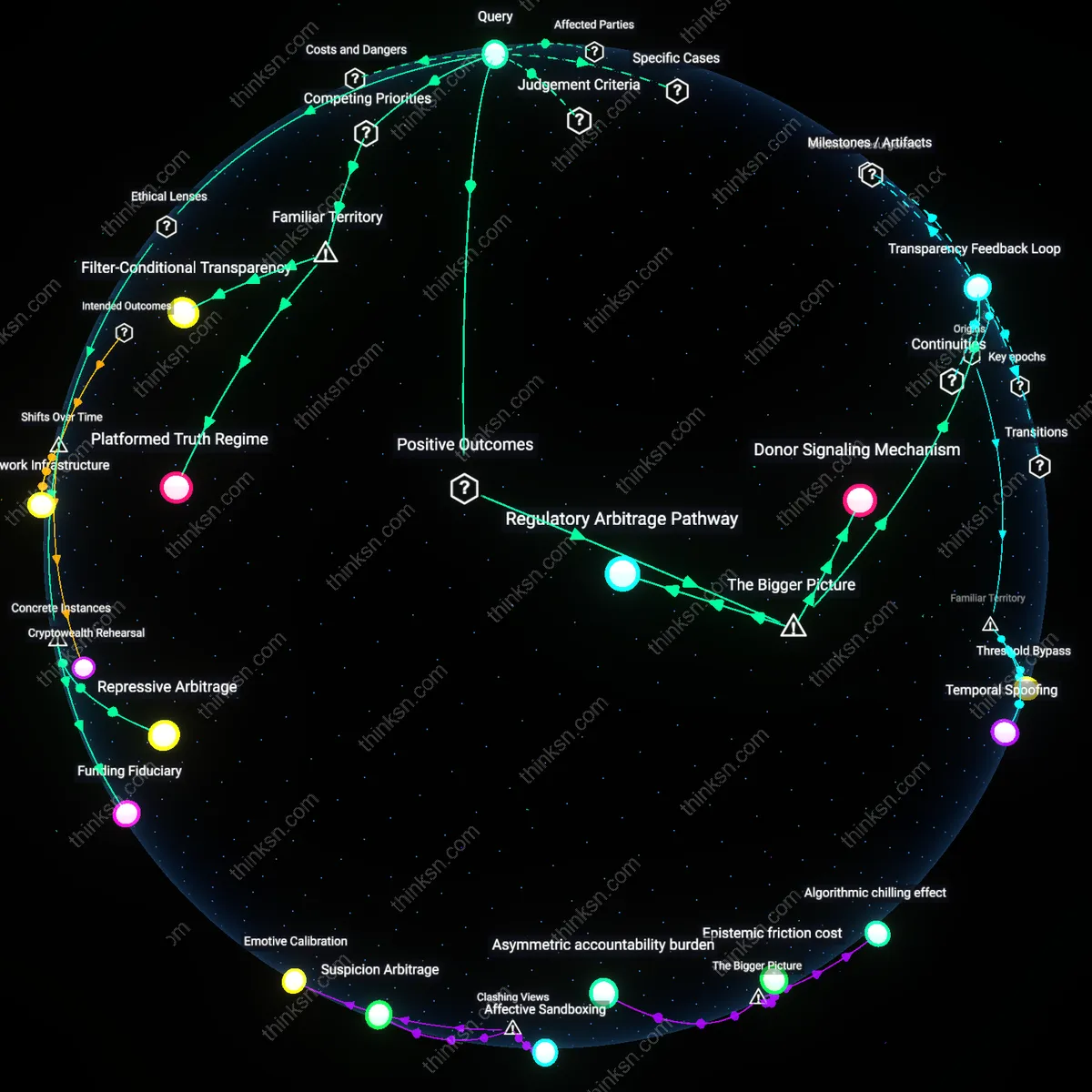

Analysis reveals 4 key thematic connections.

Key Findings

Algorithmic gatekeeping

Yes, AI in nonprofit grant allocation intensifies algorithmic gatekeeping because automated systems prioritize quantifiable metrics—like past funding history or organizational size—over qualitative indicators of community trust or cultural relevance, which underrepresented applicants often possess. This mechanism advantages larger, historically funded organizations by reinforcing existing patterns in the data, thereby replicating systemic exclusions under the guise of neutrality. What is underappreciated is that efficiency in processing applications becomes indistinguishable from structural exclusion when the criteria themselves are shaped by legacy inequities.
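The gatekeeping mechanism described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a description of any real grant platform: the `Applicant` fields, the weights in `legacy_score`, and both example organizations are hypothetical. It shows how a score built only from quantifiable history ranks a large, previously funded organization first, while the grassroots applicant's main strength (community trust) never enters the calculation.

```python
from dataclasses import dataclass


@dataclass
class Applicant:
    name: str
    past_grants: int        # prior awards: easy to quantify
    staff_size: int         # organizational size: easy to quantify
    community_trust: float  # 0..1 qualitative rating (hypothetical field)


def legacy_score(a: Applicant) -> float:
    """Score using only quantifiable history; weights are illustrative assumptions."""
    # community_trust is deliberately absent: the scorer cannot see it.
    return 2.0 * a.past_grants + 0.1 * a.staff_size


applicants = [
    Applicant("Large Established Org", past_grants=12, staff_size=80, community_trust=0.40),
    Applicant("Grassroots Collective", past_grants=1, staff_size=5, community_trust=0.95),
]

# Rank from highest to lowest score.
ranked = sorted(applicants, key=legacy_score, reverse=True)
for a in ranked:
    print(f"{a.name}: score={legacy_score(a):.1f}, trust={a.community_trust}")
```

In this sketch the ordering is driven entirely by funding history and size, so the exclusion is built into the criteria rather than into any single decision.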

Equity debt

Yes, the use of AI creates accumulating equity debt by optimizing for administrative speed and cost reduction at the expense of corrective fairness measures that require intentional design, such as weighted scoring for marginalized communities. Unlike human reviewers who can apply contextual discretion, AI systems generalize from historical data, thus locking in previous disparities as baseline assumptions. The non-obvious insight is that efficiency gains are not neutral improvements but represent deferred investments in equity that compound exclusion over time.
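One way to picture the "weighted scoring" mentioned above is a simple multiplicative uplift. The sketch below is an assumption-laden illustration rather than an established method: the function name `equity_weighted_score`, the default `equity_weight` of 1.25, and the example base scores are all hypothetical, and a real program would need to choose and justify its own weighting.

```python
def equity_weighted_score(base_score: float,
                          serves_marginalized: bool,
                          equity_weight: float = 1.25) -> float:
    """Apply a multiplicative uplift for applicants serving marginalized communities."""
    if equity_weight < 1.0:
        raise ValueError("equity_weight below 1.0 would penalize rather than correct")
    return base_score * equity_weight if serves_marginalized else base_score


# Hypothetical base scores, e.g. from a model trained on historical awards.
applications = {
    "Established Org": (70.0, False),
    "Community-Led Org": (60.0, True),
}

# Top-ranked applicant without and with the corrective weight.
unweighted = max(applications, key=lambda name: applications[name][0])
weighted = max(applications, key=lambda name: equity_weighted_score(*applications[name]))

print("Top without weighting:", unweighted)
print("Top with weighting:", weighted)
```

The point of the sketch is that the corrective step has to be designed in deliberately; leaving it out is the "deferred investment" the paragraph describes.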

Data colonialism

Yes, AI deployment reproduces data colonialism by extracting application patterns from marginalized groups to train models that ultimately serve decision-makers in centralized philanthropic institutions, often located in high-income countries or urban hubs. The system assumes epistemic authority over what constitutes 'merit' or 'impact,' overriding locally grounded knowledge and alternative success metrics. What most overlook is that the very act of digitizing and standardizing diverse grassroots efforts into uniform data fields becomes a form of extractive control, masked as technological progress.

Algorithmic Redistribution

Yes, AI in nonprofit grant allocation can enhance equitable outcomes by systematically prioritizing underrepresented applicants through counter-majoritarian design, challenging the assumption that automation inherently favors efficiency over equity. When trained on disaggregated socioeconomic indicators and calibrated to over-sample marginalized geographies—such as rural Black farming cooperatives in the Mississippi Delta or Indigenous water sovereignty initiatives in the Southwest—AI systems can function as instruments of redistributive justice under a Rawlsian difference principle framework, where fairness is measured by advantages to the least well-off. This repositions efficiency not as speed or cost reduction but as precision in targeting structural disadvantage, subverting the liberal neutrality presumed in most algorithmic governance critiques. The non-obvious insight is that AI, when embedded in emancipatory administrative traditions like those of the National Council of Churches’ civil rights–era benevolence networks, can encode equity as operational logic rather than external constraint.

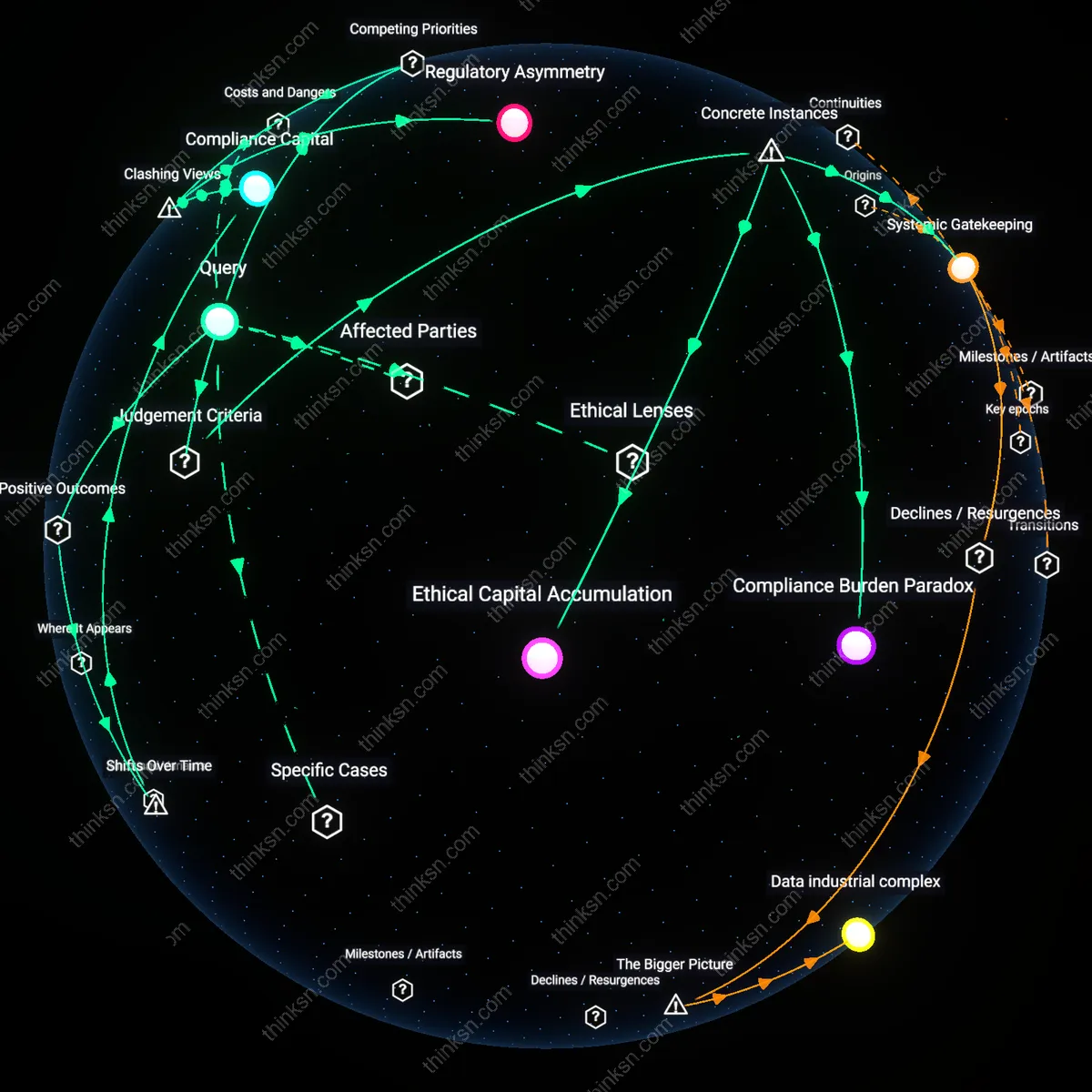

Deeper Analysis

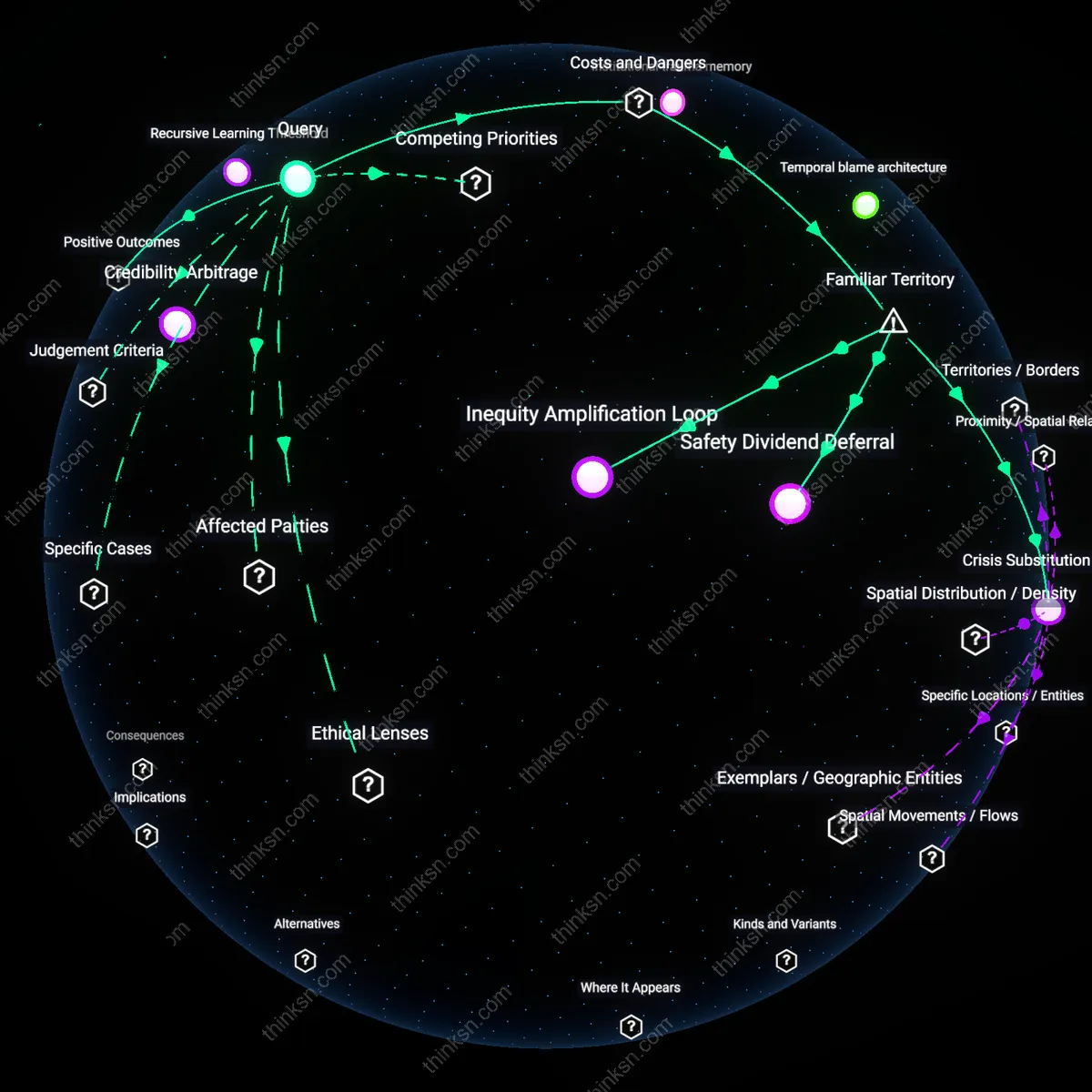

How do the everyday decisions made by grantmakers today shape whether AI systems widen or narrow the gap for underrepresented communities over time?

Infrastructural Lock-in

The Ford Foundation’s 2018 decision to fund proprietary data governance frameworks in Indian digital identity systems reinforced centralized, exclusionary AI dependencies by privileging interoperability with state-built Aadhaar infrastructure, which systematically disenfranchised rural, low-literacy populations during biometric authentication. This funding pathway embedded technical standards that could not adapt to local modalities of identity, thereby freezing out alternative community-led data architectures at scale. The non-obvious consequence was not bias mitigation but the cementing of a technical regime that made equitable adaptation structurally costlier over time.

Epistemic Gatekeeping

The Open Society Foundations’ 2020 AI grant portfolio prioritized algorithmic fairness research from Global North institutions like MIT and Stanford while excluding grassroots knowledge producers in Nairobi and Medellín who were developing context-specific models for algorithmic redress. By institutionalizing a narrow technical epistemology—quantitative, legal, and elite—the funding displaced lived-experience-based innovation that could challenge dominant AI designs. What remains underappreciated is how these choices reframed 'expertise' itself, making it harder for later funders to recognize alternative forms of valid knowledge.

Resource Cascades

The Rockefeller Foundation’s 2016 investment in AI-driven precision agriculture in sub-Saharan Africa funneled follow-on capital disproportionately toward startups in Kenya and Nigeria, accelerating infrastructure development in those hubs while bypassing Burkina Faso and Malawi despite comparable need. This created a self-reinforcing cycle where subsequent funders mimicked the geographic and technical priorities set by the initial grant, consolidating AI benefit accumulation in already-connected nodes. The overlooked dynamic is how early grants act as signal flares, shaping not just one project but entire investment topologies across regions.

Infrastructural Path Lock-in

Grantmakers’ preference for AI systems that integrate with legacy administrative infrastructures reinforces technical and procedural dependencies that exclude underrepresented communities. Most funding prioritizes AI tools compatible with existing public-sector data systems, such as county welfare rolls or public school databases, which were historically designed for majority populations and often omit marginalized groups due to decades of under-enrollment or surveillance-driven exclusion. This entrenches a path dependency where AI applications amplify gaps not through overt bias but by rendering invisible those outside the original infrastructural footprint. The overlooked dynamic is not algorithmic design but the inertial force of inherited data architectures, which silently determine who counts and who is counted.

Epistemic Access Churn

Funding cycles that reward novelty over continuity in AI for social good erode the knowledge sovereignty of underrepresented communities by disrupting long-term, place-based learning. Grantmakers’ emphasis on scalable, measurable outcomes incentivizes short-term pilot projects led by external technologists rather than sustained collaboration with local knowledge keepers, leading to repeated extraction and abandonment. This churn prevents the accumulation of community-owned AI literacy and contextual refinement, ensuring that systems remain alien even when well-intentioned. The overlooked dimension is temporal sovereignty—the right to develop understanding and control at a community-determined pace—which is silently sacrificed for the appearance of rapid impact.

Maintenance Allocation Blindspot

Grantmakers systematically underfund the routine maintenance of AI systems in community-led initiatives, privileging initial deployment over sustained operation, which disproportionately destabilizes services for underrepresented populations. Unlike corporate AI, where backend support is embedded in operational budgets, grant-funded AI tools often collapse after the project period due to unglamorous needs like data reannotation, server updates, or user feedback loops. This creates a 'deploy-and-decay' pattern where marginalized communities experience broken tools as the norm, deepening distrust. The overlooked factor is the invisible labor of technological care—the maintenance work that sustains equity over time but is structurally rendered invisible in funding architectures.

Funding Path Dependence

Grantmakers' early 2010s bets on scalable AI prototypes over community-coupled design entrenched technical trajectories that marginalized context-specific needs of underrepresented groups. By privileging speed and generalization in NLP and computer vision, foundations like MacArthur and Sloan enabled dominant AI paradigms to treat 'fairness' as a downstream retrofit rather than a core architecture, cementing a path where inclusive outcomes require costly reengineering. This shift—from participatory pilot grants in the 2000s to efficiency-driven automation funding—revealed how philanthropic timing shapes technological lock-in, making equity an add-on rather than a default.

Institutional Memory Erosion

As grantmaking shifted post-2016 from supporting standalone ethics initiatives to embedding AI oversight within corporate-led consortia, earlier community accountability mechanisms—like those piloted by the Ford Foundation with Indigenous data sovereignty groups—were sidelined or fragmented. The migration of funding toward scalable governance frameworks diluted localized knowledge that had been cultivated since the 1990s in digital inclusion programs, breaking continuity between past equity experiments and current AI deployment. This erosion was non-obvious because the new structures appeared more 'mature,' yet they systematically excluded longitudinal learning from marginalized testbeds.

Crisis-Responsive Prioritization

Following the 2020 racial justice uprisings, major funders including the Gates Foundation and the Open Society Foundations redirected AI grants toward bias detection tools, marking a sharp pivot from prior infrastructural investments in community data literacy. This crisis-responsive mode privileged reactive technical fixes over sustained capacity-building, transforming equity work into episodic sprints rather than generational knowledge transfer. The shift revealed how external political rhythms, rather than community-defined timelines, now govern the resurgence of inclusion agendas, reviving old concerns in forms that lack roots in ongoing local practice.

Explore further:

- What kinds of organizations or communities are most likely to be overlooked when early AI funding sets the agenda for entire regions?

- How did the pattern of funding AI projects while neglecting their long-term upkeep begin, and what has changed over time in how grantmakers view ongoing support for these tools in marginalized communities?

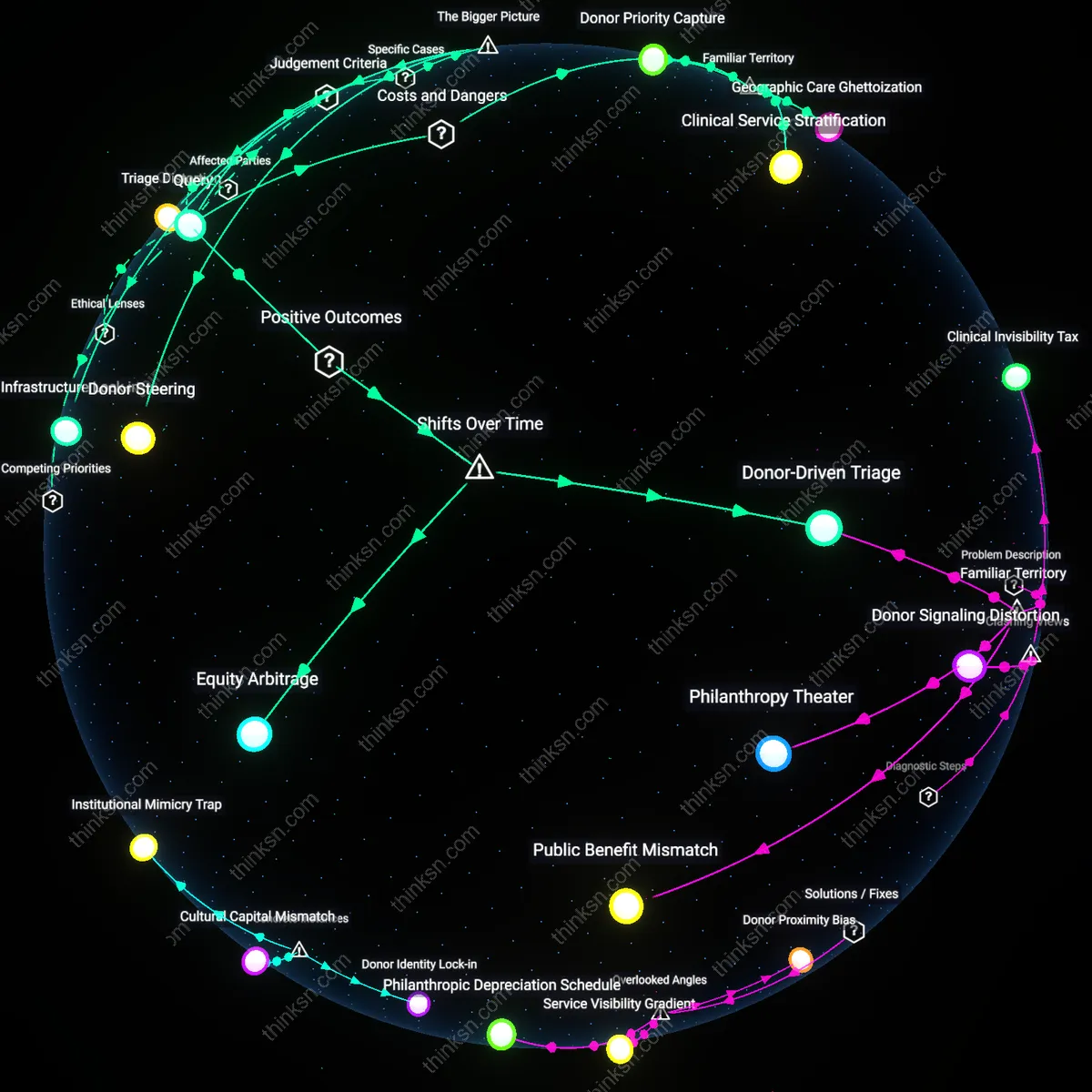

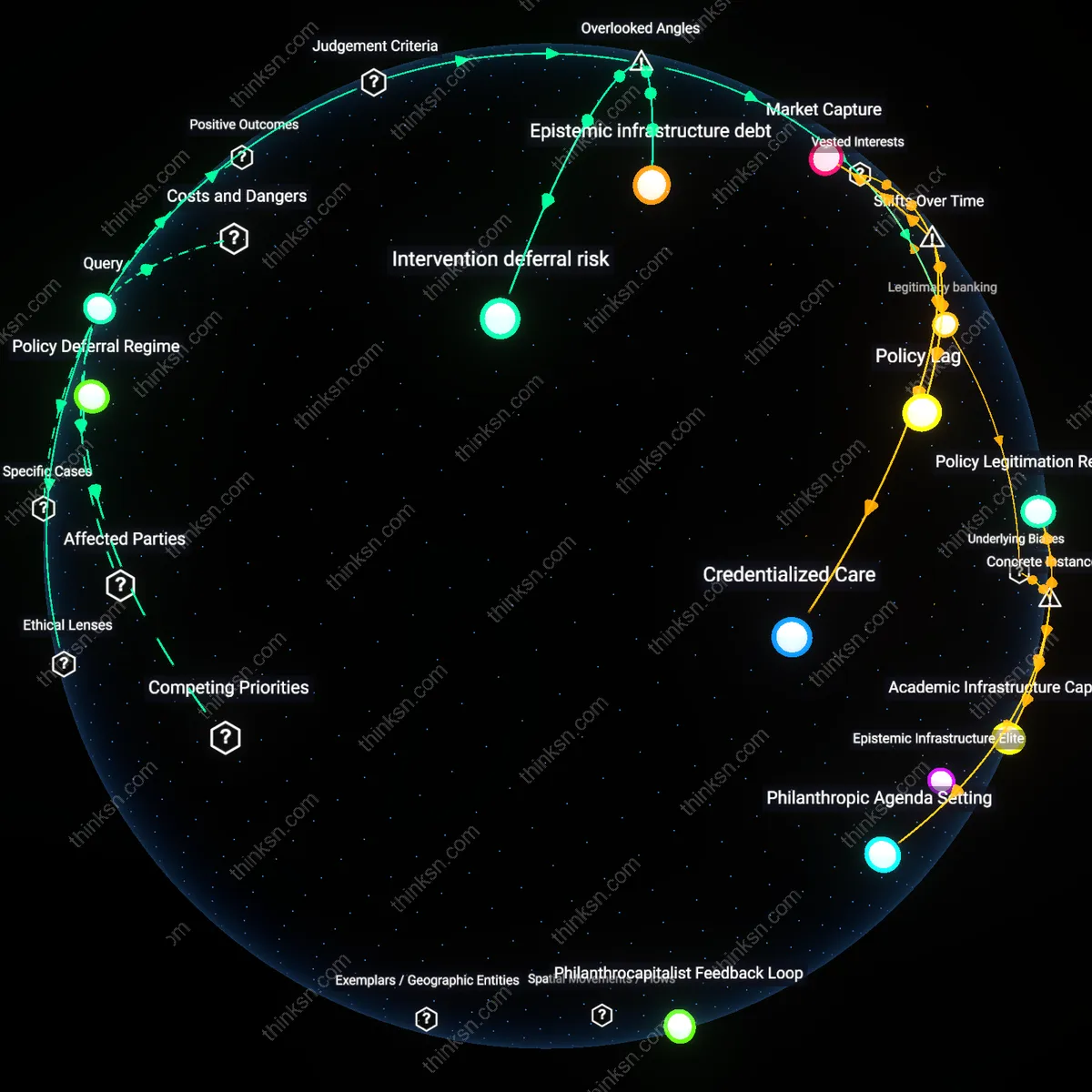

What kinds of organizations or communities are most likely to be overlooked when early AI funding sets the agenda for entire regions?

Epistemic austerity

A Marxist analysis reveals that rural collectives in post-industrial regions, such as deindustrialized Appalachia or the Ruhr Valley, are systematically marginalized not because they lack technical capacity but because AI funding reproduces capital’s spatial logic, prioritizing nodes of financialized innovation over sites of labor decommissioning. This mechanism operates through venture-state assemblages that equate developmental legitimacy with market-scalable outputs, erasing forms of social knowledge rooted in care, subsistence, and non-commodified labor. The non-obvious insight is that underdevelopment is not a failure of inclusion but an active byproduct of AI’s alignment with capital valorization, which renders collectively managed, low-data subsistence economies epistemically illegible.

Liberal data patriarchy

Liberal frameworks, despite their rhetoric of inclusion, structurally overlook Indigenous language communities, such as the Māori in Aotearoa or the Sámi in northern Scandinavia, because AI funding prioritizes interoperability and universalized data standards, which flatten linguistic diversity into extractable tokens optimized for dominant language models. This occurs through institutional grant architectures that treat language as infrastructure rather than sovereignty, privileging computational legibility over ontological self-determination. The dissonance is that liberal inclusion efforts deepen epistemic dependency by framing linguistic preservation as a technical problem solvable by AI, rather than confronting the settler-colonial erasures embedded in data governance.

Conservative innovation theater

Conservative governance paradigms, particularly in centralized states like Hungary or India, deliberately sideline independent academic consortia and open-source research cooperatives. They do so not through overt censorship but by channeling AI funding into national-champion tech firms aligned with state ideology, leveraging the rhetoric of technological sovereignty to conflate innovation with political loyalty. This operates through public procurement algorithms and 'patriotic AI' grants that redefine scientific autonomy as a threat to national unity. The overlooked contradiction is that these regimes weaponize anti-elitism to delegitimize pluralistic knowledge ecosystems, transforming AI investment into a performative display of ideological conformity rather than material progress.

How did the pattern of funding AI projects while neglecting their long-term upkeep begin, and what has changed over time in how grantmakers view ongoing support for these tools in marginalized communities?

Infrastructural amnesia

Grantmakers initially funded AI projects in marginalized communities as discrete pilot interventions tied to academic publication cycles, treating deployment as an endpoint rather than a process. This mechanism, driven by university-based research teams optimizing for novelty over continuity, systematically erased maintenance labor from funding models because upkeep does not yield citable breakthroughs. The non-obvious dimension is that the tenure and promotion system in U.S. research universities acts as a hidden selector against long-term engagement, making abandonment an institutional default. This reframes 'underfunding maintenance' not as oversight but as structural incentive alignment, revealing how academic reward systems silently shape the lifespan of AI tools in community settings.

Epistemic precarity

Early AI funding in marginalized communities relied on quantifiable short-term outcomes to satisfy donor impact metrics, which privileged algorithmic development over the slow, context-sensitive work of community-based adaptation. This dynamic, enforced by Northern-based foundations applying corporate CSR logics to grassroots initiatives, rendered invisible the local interpretive labor needed to sustain AI tools amid shifting social conditions. The overlooked factor is that maintenance requires ongoing epistemic negotiation between technologists and communities, a process that does not fit into outcome-based reporting frameworks. This shifts the narrative from simple 'funding gaps' to the erasure of local knowledge infrastructures that are essential for tool relevance and survival.

Temporal colonialism

International development funders imposed linear, milestone-driven timelines on AI projects in marginalized communities, compressing open-ended sociotechnical adaptation into fixed implementation phases that expired before feedback loops could mature. This pattern, institutionalized through USAID and World Bank procurement cycles, treated time as a uniformly measurable resource rather than a socially structured dimension, privileging donor scheduling over local rhythm. The underappreciated reality is that maintenance fails not because of cost but because external funders disallow the recursive temporality that community-led stewardship requires. This exposes how the sequencing of support, not just its amount, becomes a form of power that determines which sociotechnical futures get to endure.

Maintenance Debt

Grantmakers initially funded AI projects as discrete innovation cycles, treating deployment as the endpoint rather than the beginning of operational life. This logic, dominant in the early 2010s through U.S. federal tech-forward grants like those from the National Science Foundation, prioritized novelty metrics and prototype demonstration over sustainability planning, effectively externalizing the costs of upkeep to under-resourced community organizations. The non-obvious consequence was not just tool obsolescence but the institutionalization of maintenance as an afterthought, producing a backlog of unsupported systems in public-serving sectors—especially in marginalized geographies where replacement cycles were unaffordable.

Equity Reframing

After 2018, a shift occurred when AI accountability researchers and advocates, such as the research institute Data & Society and local civic tech coalitions, began documenting failures of AI tools in marginalized communities, treating them not as isolated bugs but as systemic outcomes of short-term philanthropy. Their reports reframed underfunded upkeep as an equity issue, linking unreliable tools to eroded community trust and algorithmic harm. This pivot repositioned maintenance from a technical burden to a moral obligation and altered how foundations like Ford and MacArthur evaluated downstream impacts. It marked the moment when sustainability became entangled with justice in AI funding rhetoric.

Institutional Drift

Between 2020 and 2023, major grantmaking institutions like the Chan Zuckerberg Initiative and the Rockefeller Foundation began embedding multi-year operations budgets within AI grants, signaling a structural drift away from project-based funding models. This change emerged not from top-down policy but from repeated failure cycles in community health and education AI tools, where grantee attrition and data decay exposed the fragility of non-maintained systems. The shift revealed that sustainability was less a financial adjustment than a slow institutional reorientation—one where upkeep was no longer outsourced to goodwill but absorbed, unevenly, into core funding logic.

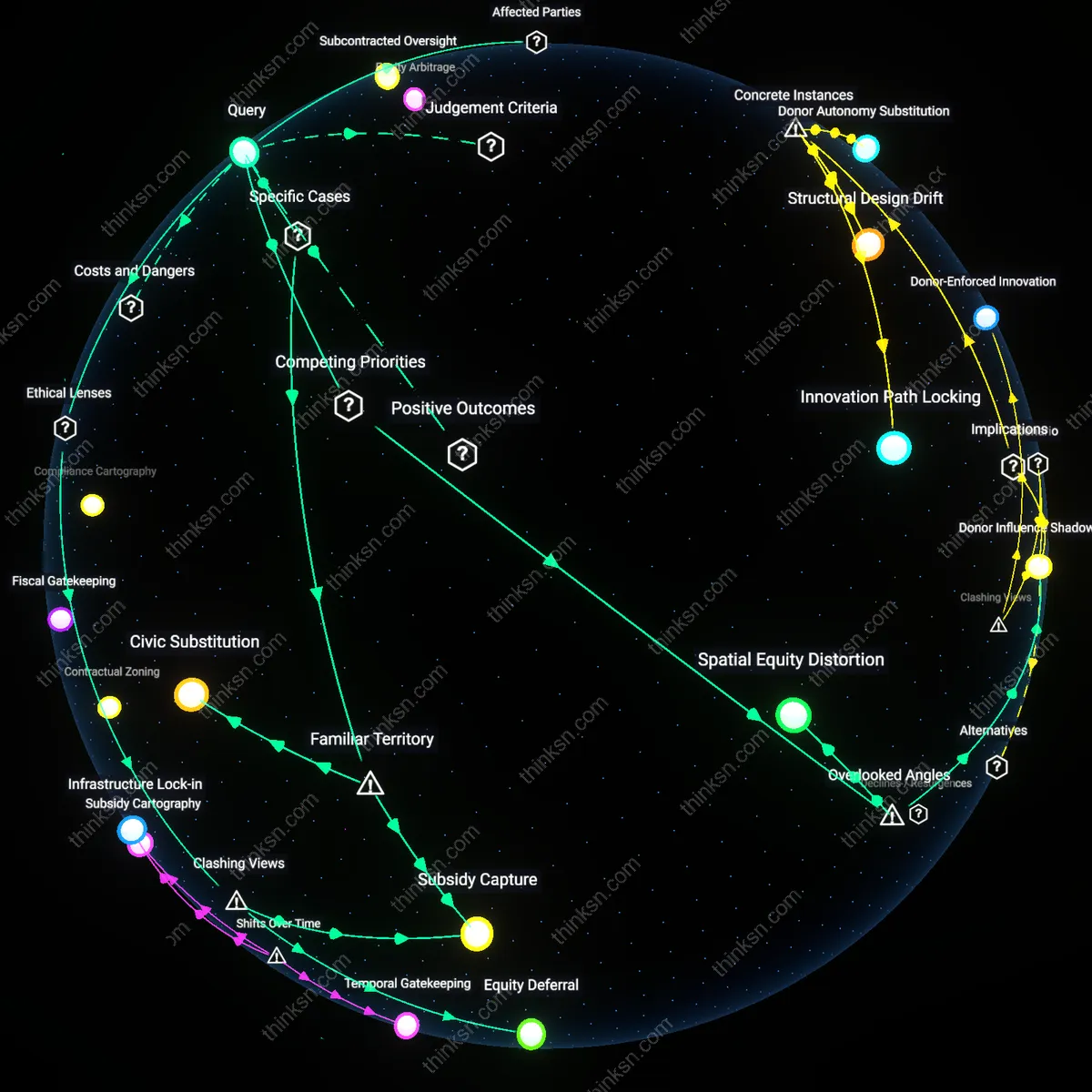

Donor temporality

The Ford Foundation’s 2015 Data & Society initiative funded the development of algorithmic equity tools for housing justice advocates in Baltimore but imposed a strict three-year grant cycle that prohibited funding for maintenance, server costs, or community training beyond prototype deployment. The restriction reveals that foundation timelines treat sustainability as external to innovation, even though funders know these tools degrade without updates, community feedback, and technical debt management. Upkeep thus becomes an unfunded afterthought by design rather than by oversight.

Infrastructure deferral

When the Rockefeller Foundation backed the creation of the Ushahidi crisis-mapping platform during the 2008 post-election violence in Kenya, it celebrated rapid deployment for real-time reporting but refused subsequent appeals to fund Swahili-language interface updates or local server hosting, forcing reliance on volunteer coders in the Global North. The episode exposes how donor priorities treat technological infrastructure as a one-time build rather than an evolving sociotechnical system embedded in linguistic, legal, and political contexts that require adaptive reinvestment.

Equity calcification

The Gates Foundation’s investment in AI-driven diagnostic tools for rural maternal health in Zambia through the 2017 Digital Health Initiative explicitly excluded funding for continuous data recalibration, despite evidence that models trained on urban Lusaka clinics failed in remote Tonga Districts due to differing symptom presentations. This shows that risk-averse grantmaking treats algorithmic fairness as a static achievement at launch rather than an ongoing process of retraining, community audit, and local epistemic inclusion. The result is that equity is frozen at initial design and disparities are reinforced over time.

Donor Time-Horizon Misalignment

Grantmakers initially funded AI pilot projects in marginalized communities through short-term innovation grants because success metrics were tied to immediate outputs like prototype development, not sustained utility, which privileged visibility over longevity and embedded a systemic bias against maintenance in funding architectures. This misalignment was reinforced by competitive funding cycles that rewarded novelty over refinement, causing tools to decay once external support ended, an outcome rarely accounted for in evaluation frameworks. The underappreciated consequence is that the very design of donor reporting timelines—often 12 to 18 months—structurally disqualified long-term stewardship from being a viable strategy for grantees seeking renewal or scaling.

Infrastructure Invisibility Regime

Early AI initiatives in marginalized settings were celebrated for their technological breakthroughs while the labor-intensive, unglamorous work of data curation, model recalibration, and community trust-building was systematically deprioritized in budgets, treated as incidental rather than infrastructural. This invisibility was sustained by a donor ecosystem that classified upkeep as operational overhead—a category frequently minimized or capped—thus starving projects of the recurrent investments needed to adapt to shifting local conditions. The critical dynamic is that funders outsourced sustainability risks to understaffed community organizations without modifying incentive structures to reward durability, effectively treating AI tools as one-off interventions rather than living systems.

Crisis-Driven Funding Resurgence

High-profile failures of AI tools in marginalized communities—such as flawed predictive policing systems or biased welfare allocation algorithms—spurred a resurgence in donor interest in long-term support, not as a routine practice but as a reactive measure to reputational and ethical crises that threatened the legitimacy of tech philanthropy. This shift was catalyzed by advocacy from community watchdogs and whistleblower researchers who exposed how neglect bred harm, forcing grantmakers to adopt maintenance clauses and participatory audits in new funding rounds. The overlooked mechanism is that ongoing support only gained traction when abandonment posed a liability to funders, revealing that ethical sustainability becomes actionable only when it intersects with institutional self-preservation.