Are AI Firms Lobbying for Transparency Really Seeking Accountability?

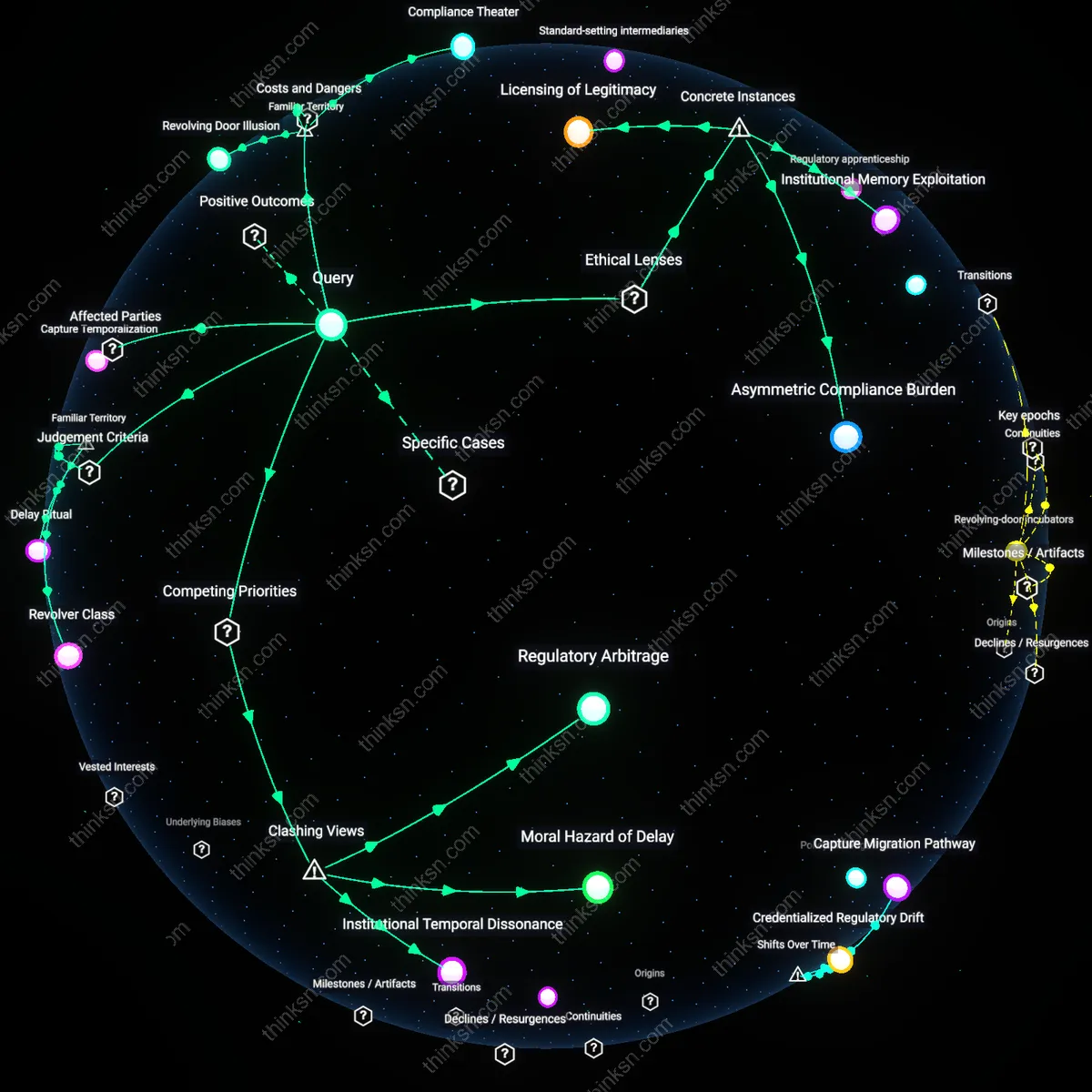

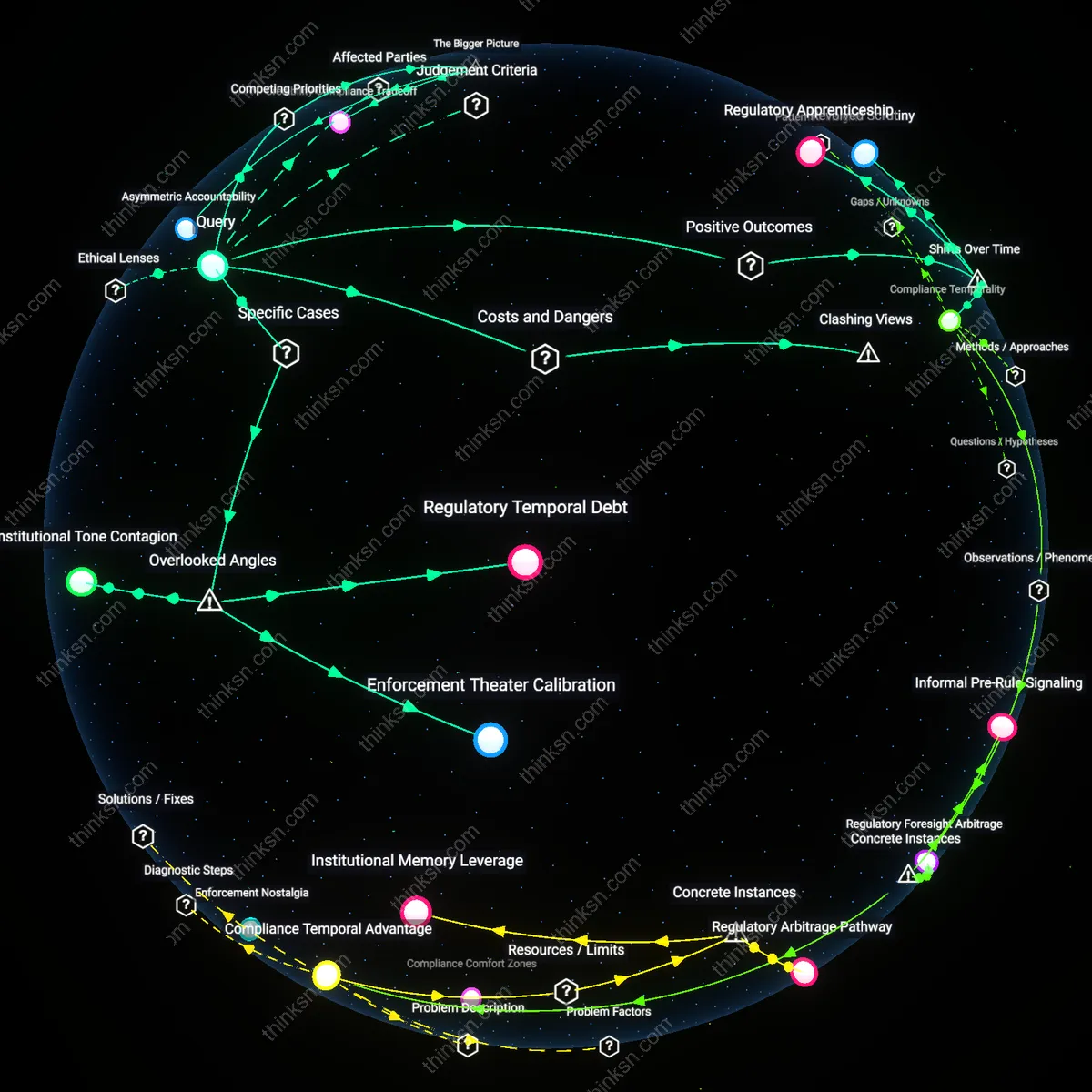

Analysis reveals 8 key thematic connections.

Key Findings

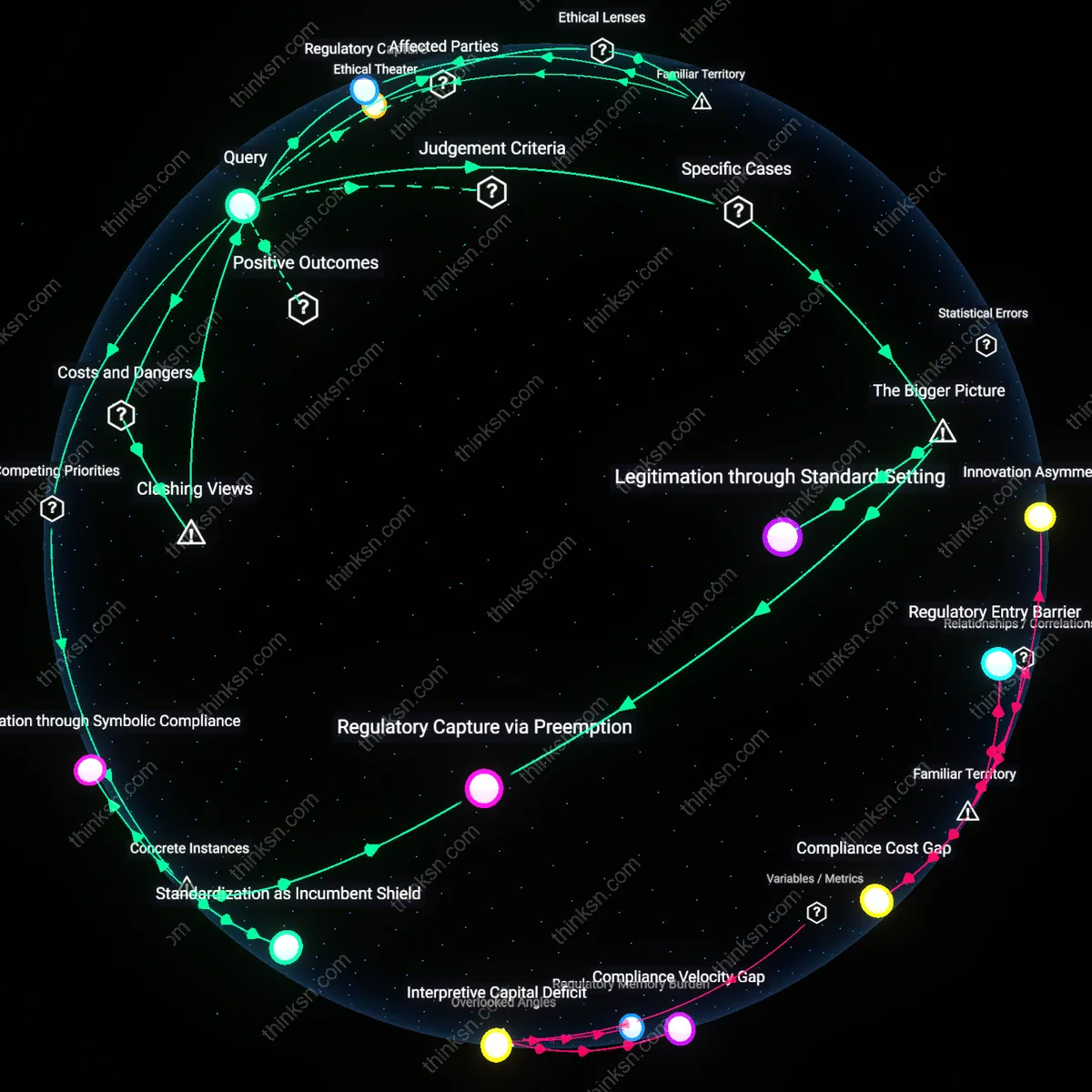

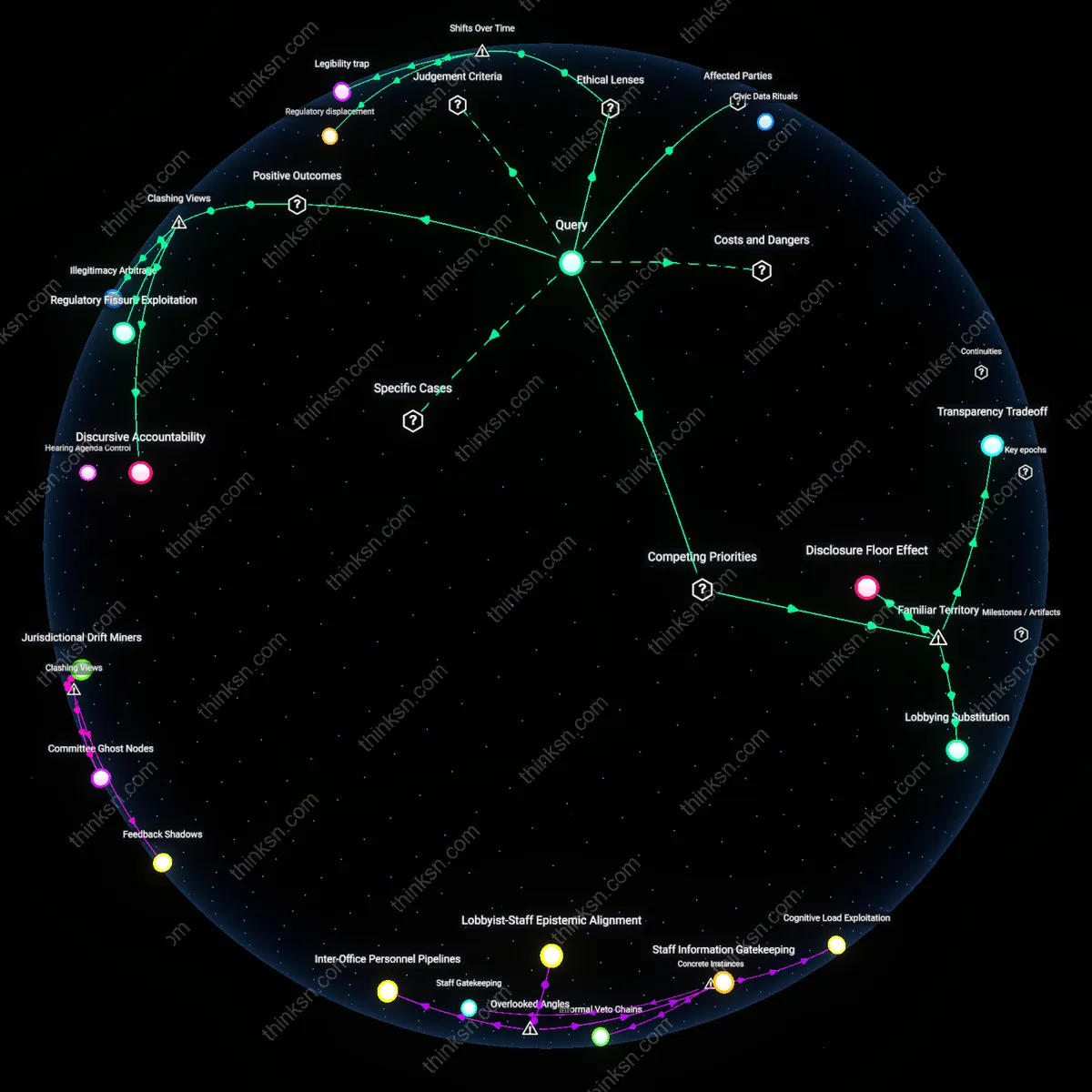

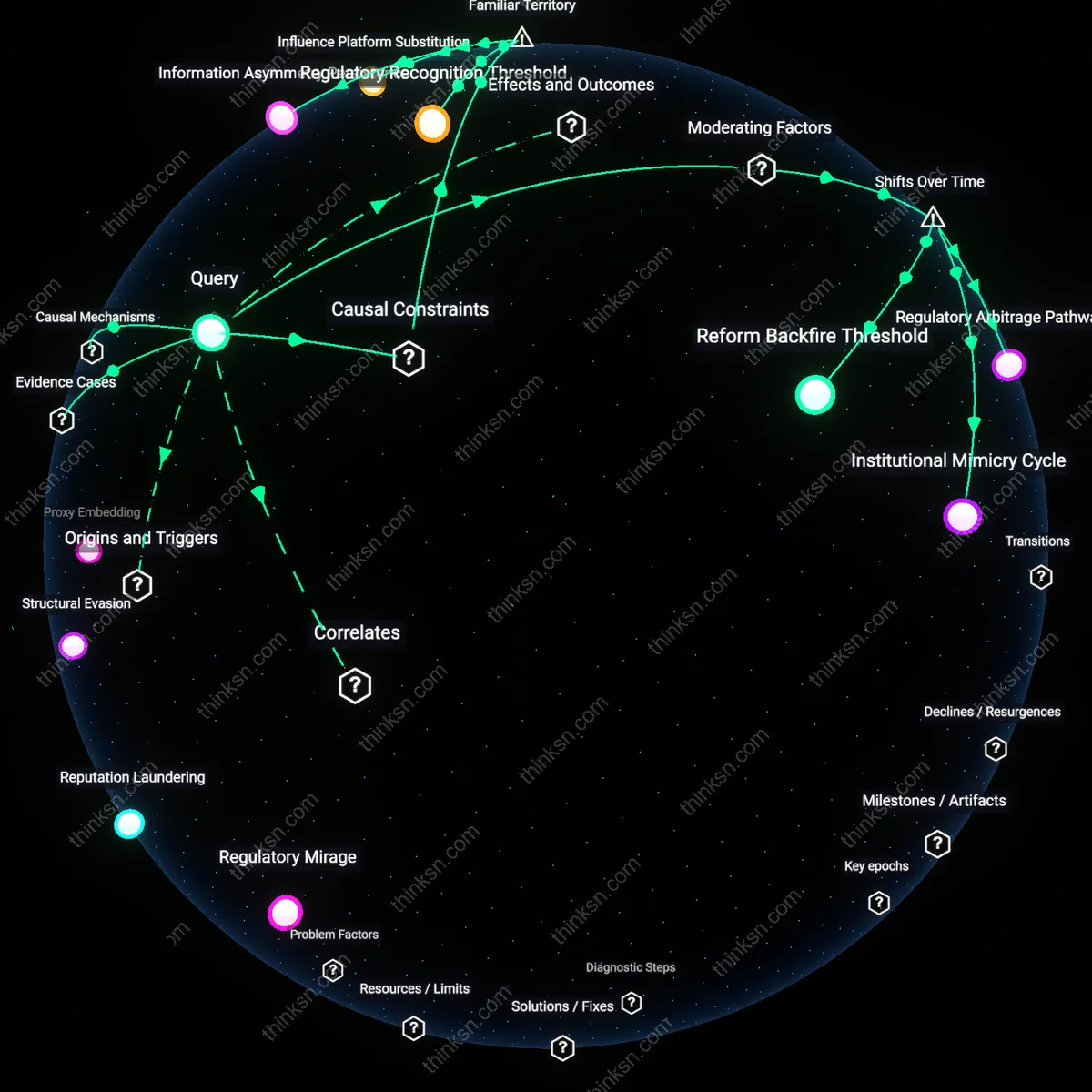

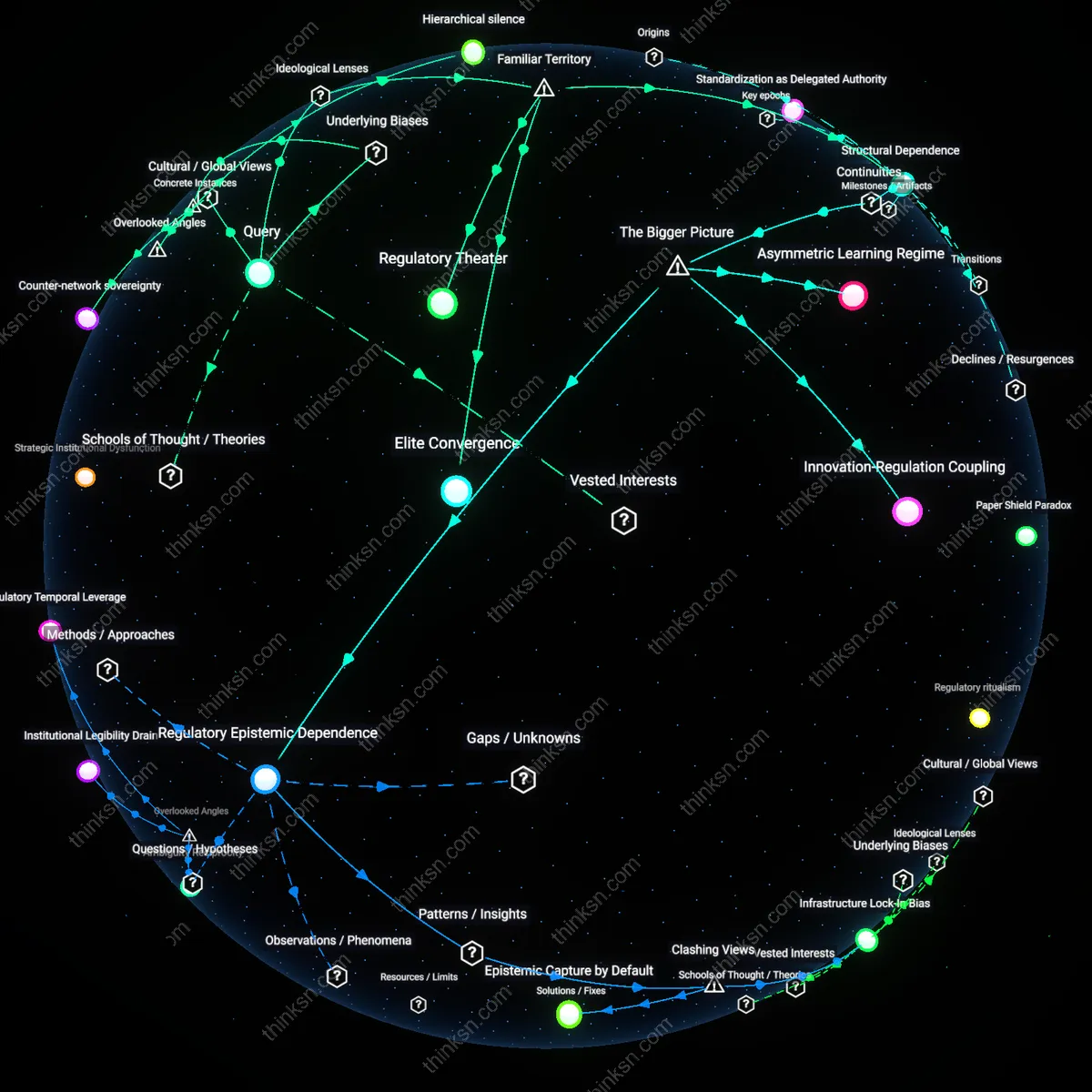

Regulatory Capture

AI companies lobbying for algorithmic transparency legislation are not seeking accountability; they are using transparency reform to embed their existing data infrastructure as the regulatory baseline, locking competitors out through compliance complexity. By advocating for highly technical, resource-intensive disclosure standards, such as mandatory model impact assessments or granular data provenance trails, they shape rules that only well-resourced firms can meet, turning transparency into a barrier to entry. The move appears progressive but functions as containment, showing how corporate actors can recast accountability mechanisms as tools of market consolidation under the guise of ethical stewardship.

Regulatory Capture via Preemption

When Google advocated for federal AI transparency rules in the 2022 Algorithmic Accountability Act discussions, it simultaneously lobbied to override stricter state-level privacy laws like California’s CCPA, thereby using transparency as a mechanism to centralize regulatory control under weaker federal standards. This maneuver reveals how algorithmic transparency initiatives can serve not to enhance public oversight but to preempt more rigorous local regulations that might constrain data harvesting practices. The non-obvious insight is that transparency becomes a strategic cover for weakening substantive privacy protections, allowing companies to appear responsible while consolidating favorable legal conditions.

Standardization as Incumbent Shield

Meta’s active participation in the EU’s AI Act negotiations—particularly its support for risk-based classification that exempts most content recommendation systems from stringent auditing—demonstrates how dominant platforms use transparency discourse to shape standards that protect their core business models. By framing transparency as compliance with internal, auditable risk assessments rather than public disclosure, firms ensure accountability remains bounded within self-defined parameters. This case uncovers how standardization processes can be weaponized to convert accountability into a barrier for smaller competitors who lack resources to meet complex, customized transparency reporting.

Legitimation through Symbolic Compliance

In 2019, IBM publicly supported algorithmic transparency legislation following criticism of facial recognition bias, while quietly maintaining licensing agreements with law enforcement agencies that bypassed those proposed transparency requirements. By championing visible but non-binding transparency principles—like publishing model documentation without enabling independent verification—IBM leveraged legislative dialogue to legitimize its systems without altering deployment practices. This illustrates how symbolic gestures toward transparency can function as performative accountability, absorbing regulatory pressure without surrendering operational discretion.

Ethical Theater

AI companies lobbying for algorithmic transparency legislation are performing accountability to preempt binding regulation. This gesture leverages the public’s association of disclosure with responsibility, particularly within liberal democratic frameworks where transparency is enshrined in statutes like the Freedom of Information Act and justified through deontological ethics, in which the act of revealing is conflated with moral duty. The mechanism is strategic alignment with familiar democratic norms, allowing firms to shape audit standards that favor proprietary data architectures under the guise of openness. What’s underappreciated is not that they resist scrutiny, but that they institutionalize a form of disclosure that masks power rather than redistributes it.

Legibility Power

AI firms advocate for transparency regimes that make algorithms legible on corporate terms, exploiting a Habermasian public sphere ideal where clarity equals fairness, while ensuring the underlying data infrastructures remain opaque. They deploy formats like model cards or API disclosures that simulate accountability but operate within neoliberal governance, where self-reporting substitutes for external enforcement. This functions through standardization bodies like IEEE or ISO, where corporate participation guarantees that transparency means traceability for users, not contestability for communities. The overlooked truth is that rendering systems ‘understandable’ on technical terms actively disarms sociopolitical critique by reframing justice as informational access.

Legitimation through Standard Setting

Major AI developers like OpenAI and Microsoft advocate for transparency initiatives that align with their internal auditing protocols, effectively positioning their proprietary systems as the de facto benchmark for regulatory compliance. By promoting frameworks such as the EU AI Act’s tiered risk classification, which mirrors existing industry impact assessments, they embed their operational logic into public policy, gaining legitimacy without structural change. This works through the institutional coupling of corporate governance models with public regulatory design, where the appearance of cooperation masks the entrenchment of self-defined accountability. The underappreciated effect is that transparency becomes a branding mechanism, allowing dominant firms to gatekeep what counts as responsible AI, thereby marginalizing more stringent or alternative regulatory visions.

Asymmetric Compliance Advantage

Large AI firms push for transparency requirements that impose high fixed costs in documentation, impact assessments, and data provenance tracking, which they can absorb due to economies of scale but which disproportionately burden smaller competitors and open-source projects. For example, provisions in the proposed U.S. Algorithmic Accountability Act requiring comprehensive bias audits favor companies like Amazon and IBM that already have dedicated AI ethics teams and legal infrastructure. This operates through the political economy of regulation, where ostensibly neutral rules function as barriers to entry by weaponizing transparency as a financial and administrative hurdle. The overlooked outcome is that accountability measures become a form of quiet protectionism, consolidating market power under the moral cover of openness.