When Does Curbing Hate Speech Violate Free Expression?

Analysis reveals 6 key thematic connections.

Key Findings

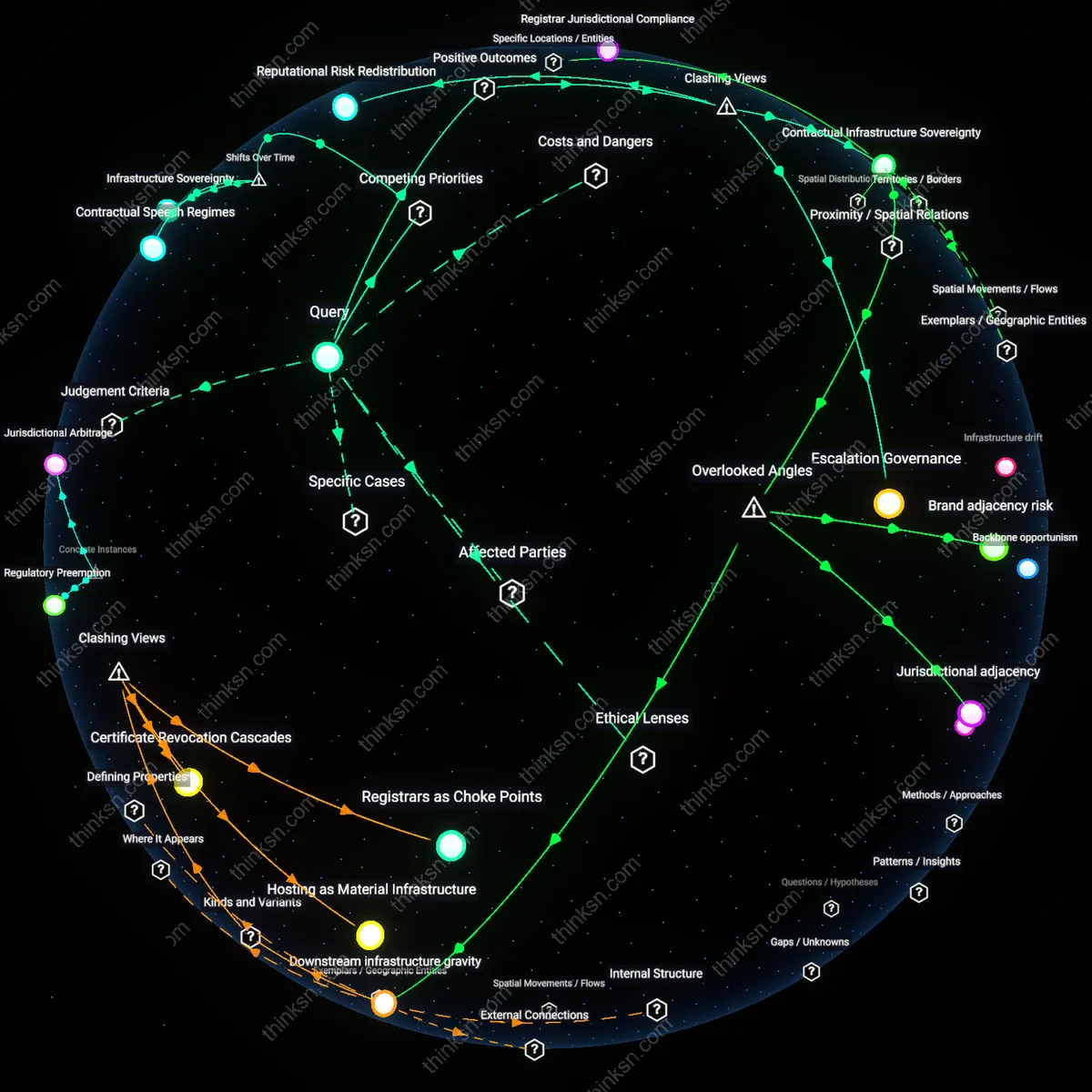

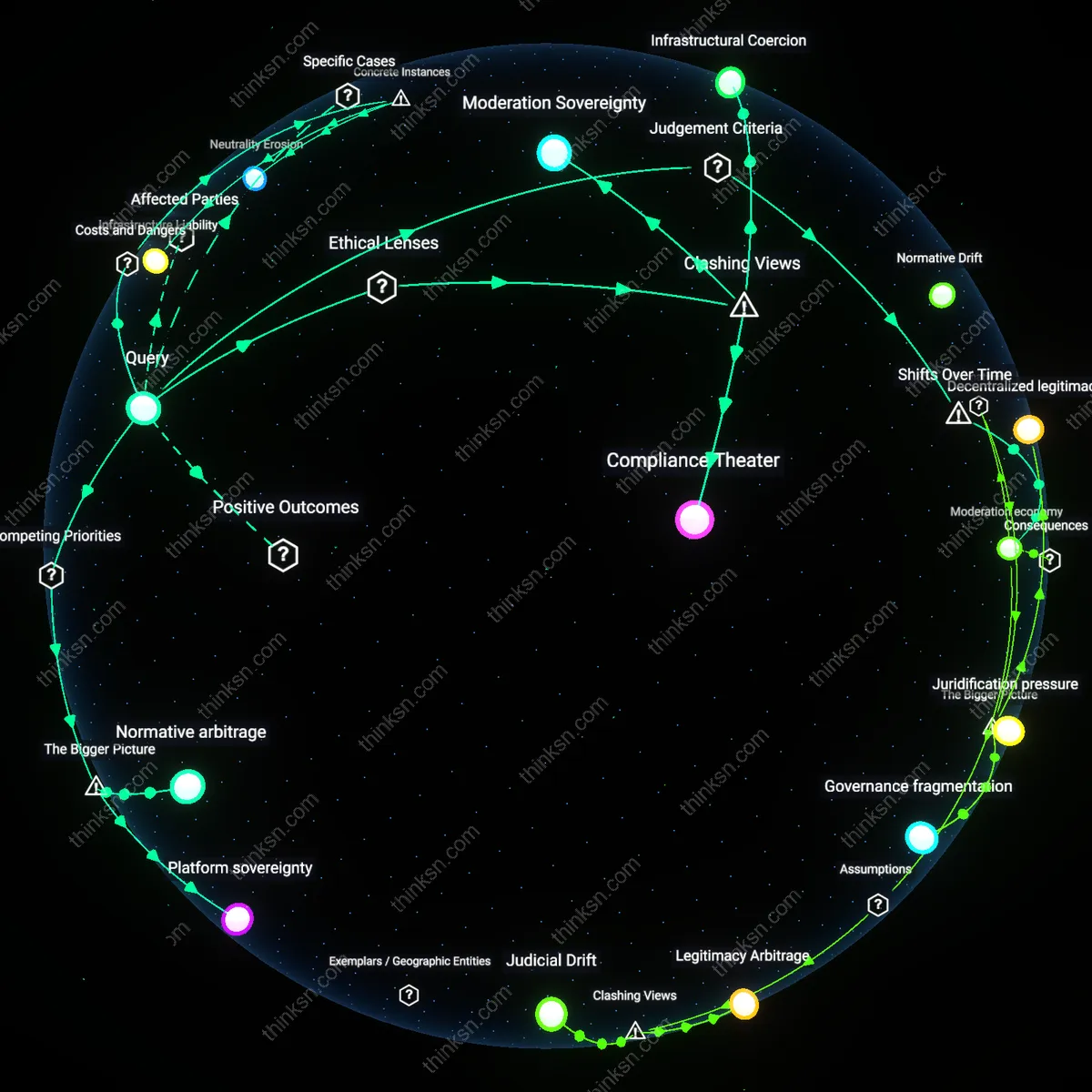

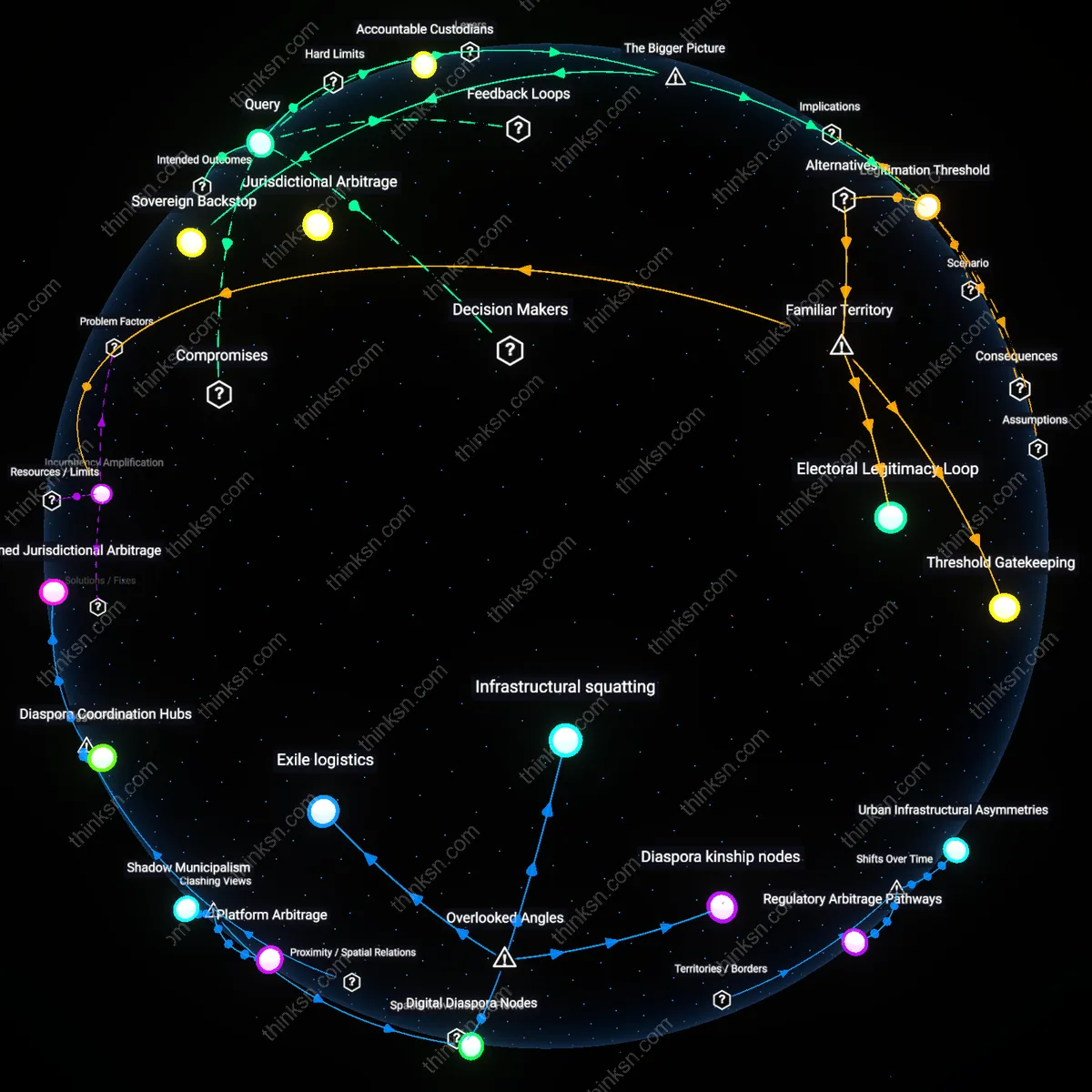

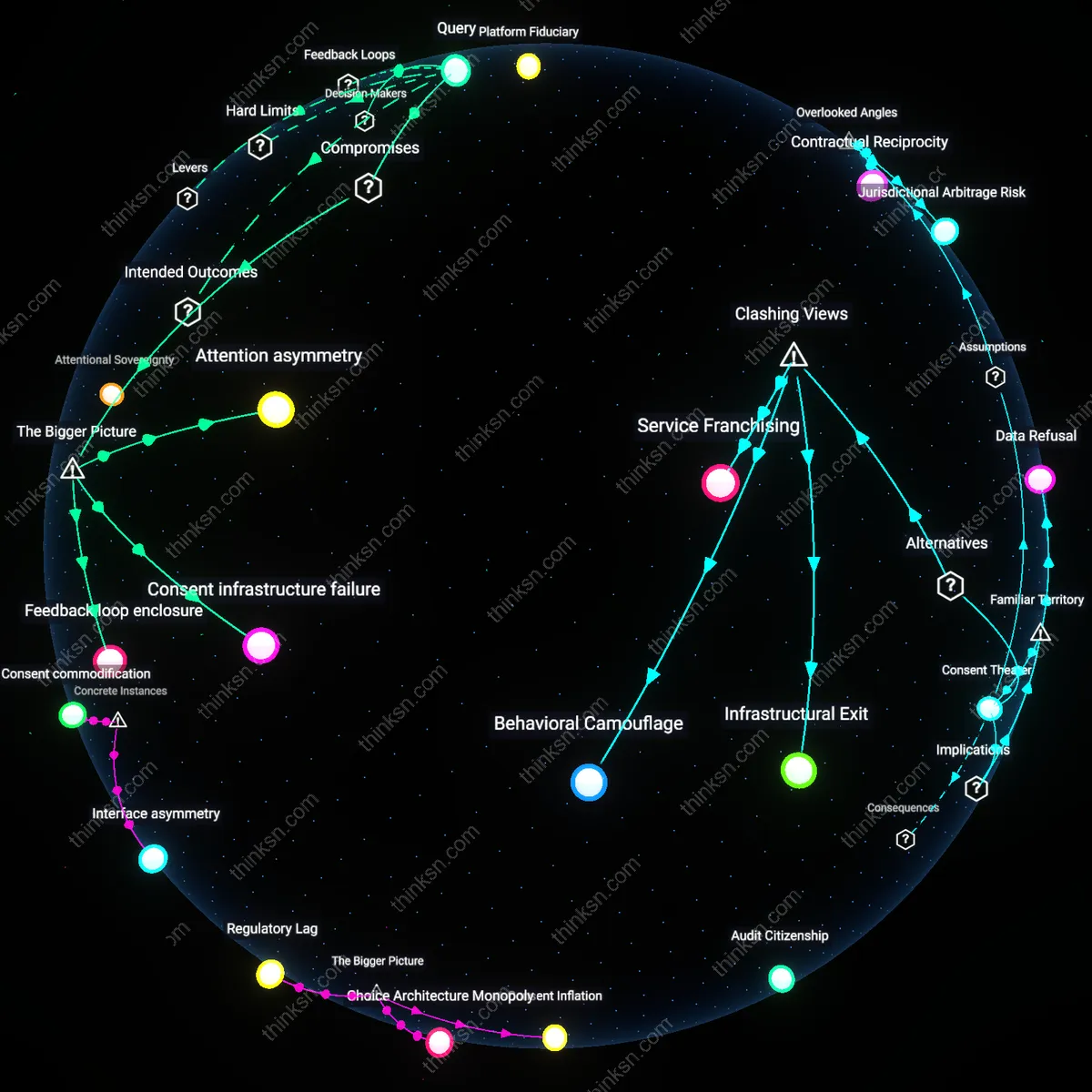

Contractual Infrastructure Sovereignty

A domain registrar’s refusal to host hate speech can enhance public expression by establishing predictable, enforceable boundaries for digital speech infrastructure, which allows civil society and marginalized communities to participate online with greater safety. Registrars function as de facto governors of technical access rather than as content moderators, and consistent enforcement of their terms prevents platform neutrality from being weaponized to enable targeted harassment, as in the deplatforming of Gab following the 2018 Pittsburgh synagogue shooting. The non-obvious outcome is that private contractual discretion, when transparently and uniformly applied, strengthens free expression by keeping the underlying architecture of the internet hospitable to pluralistic discourse, which directly challenges the assumption that such refusals amount to censorship.

Escalation Governance

Refusing to host hate speech raises the threshold for amplification, pushing extremist actors into fragmented, less visible infrastructural niches and reducing recruitment efficiency and coordination among radical networks. The mechanism is cost imposition: registrar denials compel such groups to invest in obscure alternatives such as self-hosting or foreign registries, which diminishes their reach and credibility, as seen when sites that moved to Epik, a registrar willing to accept extremist clients, found themselves isolated from mainstream traffic analytics and search indexing. This challenges the narrative of free speech absolutism: selective access to commercial infrastructure functions less as suppression than as a societal immune response, one that de-escalates ideological threats without state intervention.

Reputational Risk Redistribution

Domain registrars that reject hate speech shift accountability from individual speakers to institutional stewards of digital legitimacy, empowering third-party auditors, certifiers, and standards bodies to define ethical baselines for network participation. By treating hosting as a privilege contingent on adherence to behavioral norms, much as registrars such as Google Domains already condition service on ICANN mechanisms like the Uniform Rapid Suspension system (a procedure designed for trademark disputes rather than content), registrars convert abstract principles of free expression into operational reputational consequences, which in turn gives platforms an incentive to compete on safety and trust. This reframes censorship concerns: private refusals do not diminish expression but distribute normative authority across a broader governance ecology, contradicting the individualist assumption that speech rights degrade whenever intermediaries exercise gatekeeping discretion.

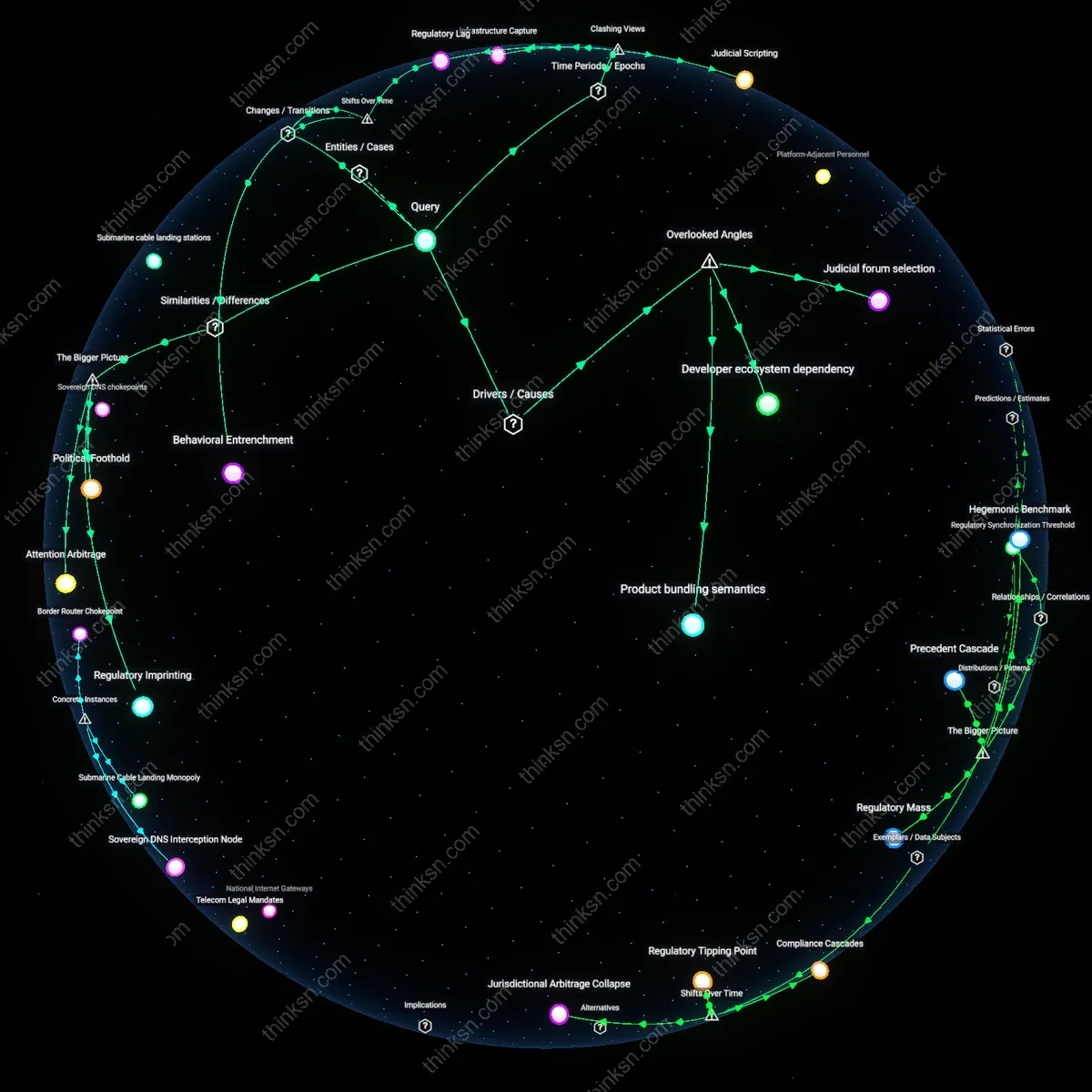

Infrastructure Sovereignty

A domain registrar's refusal to host hate speech crosses into contested free expression territory when privatized internet infrastructure consolidates control over public discourse, a shift that crystallized with the 2017 deplatforming of The Daily Stormer after the violence at the Charlottesville rally. Before then, registrars like GoDaddy framed takedowns as rare exceptions under acceptable use policies, but the coordinated withdrawal of services by multiple providers marked a turning point at which technical gatekeeping became a systemic expressive filter. It revealed that the decentralization ethos of early internet governance has been overtaken by a few dominant intermediaries whose operational policies now function as de facto speech regulation. The non-obvious implication is that free expression is constrained not only by state censorship but also by the accumulated technical authority of firms managing the internet’s foundational layers.

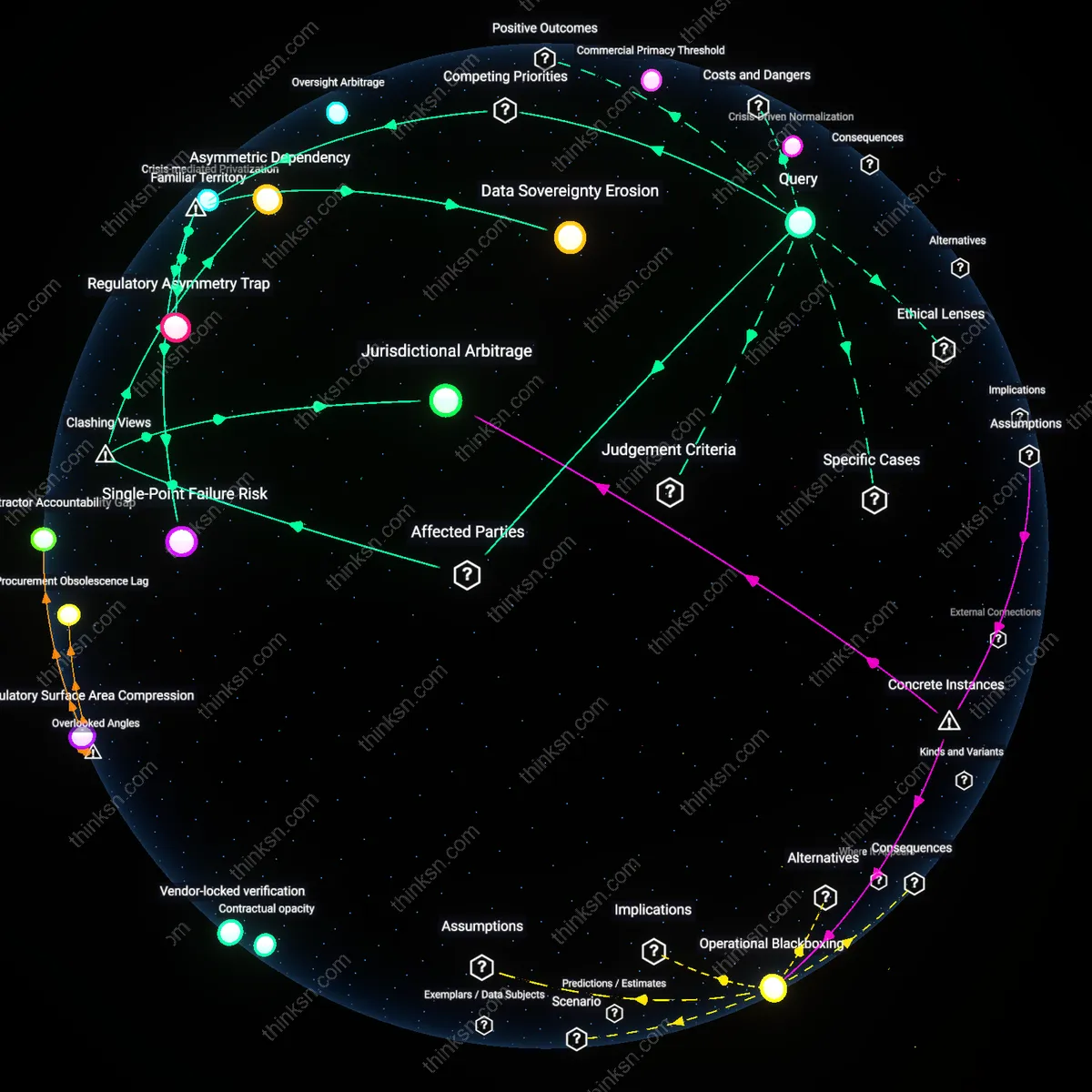

Contractual Speech Regimes

The line is crossed when domain registrars enforce speech norms through standardized contractual agreements rather than case-by-case discretion, a transformation that accelerated during the mid-2010s as cloud infrastructure providers adopted uniform content policies in response to public pressure after viral hate incidents. Previously, registrars enforced selectively and with opaque reasoning; providers such as Cloudflare and Amazon Route 53 then began codifying hate speech prohibitions in their terms of service, turning free expression into a revocable privilege contingent on compliance. This shift embeds moral judgments within legalistic templates, making speech rights subject to the evolution of corporate contract design rather than democratic deliberation. The underappreciated consequence is that expression is now filtered less by the removal of content than by the preemptive structuring of eligibility within agreements most users never read.

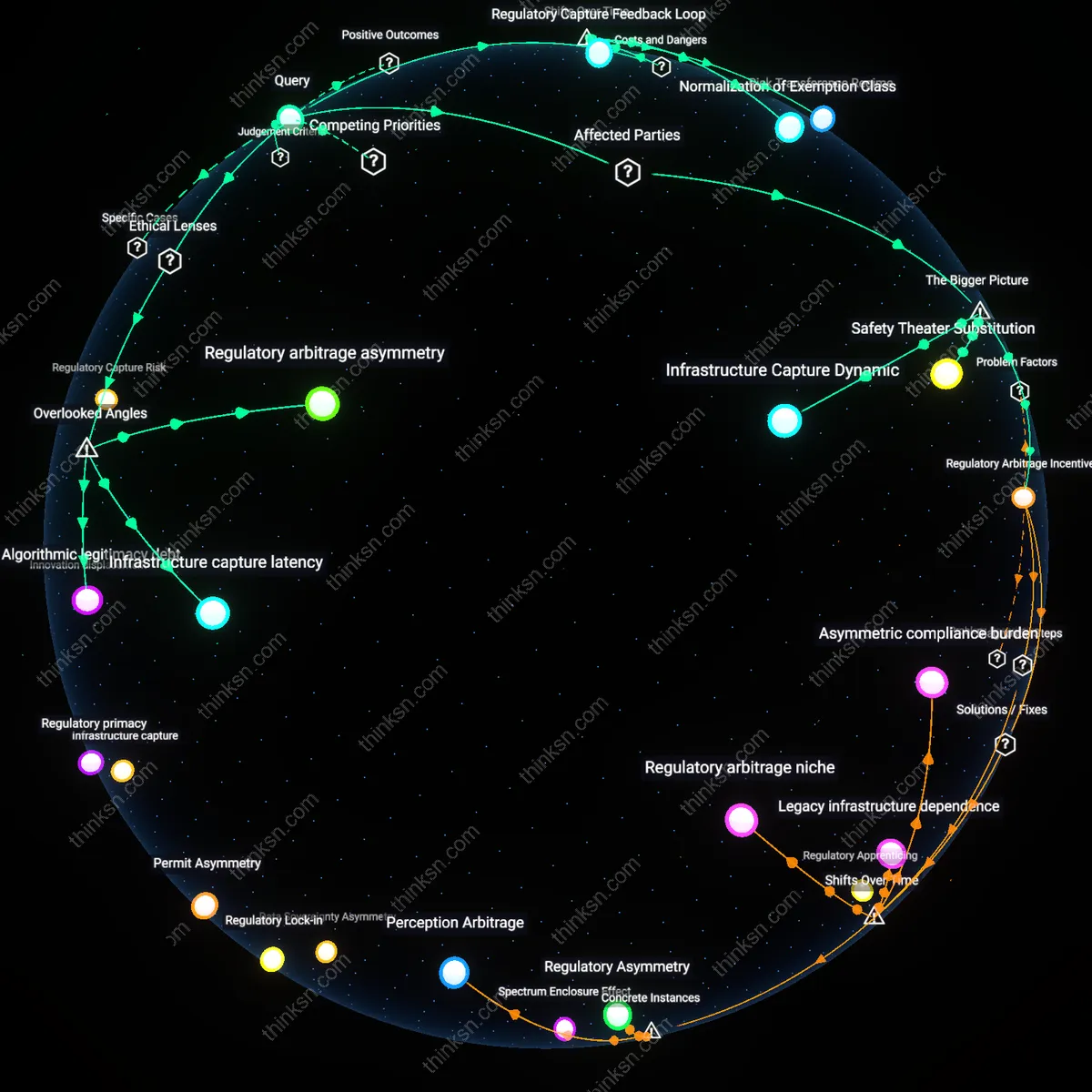

Escalation Thresholds

Refusal becomes expressive infringement when domain registrars act preemptively on anticipated violence rather than adjudicated harm. That threshold was crossed notably after 2018, when registrars began denying service to extremist groups by citing potential real-world consequences, as with the denial of domains to Proud Boys affiliates ahead of political rallies. Historically, registrars responded to existing illegal content; now they assess risk profiles using threat intelligence from third-party monitors and law enforcement partnerships, shifting from reactive compliance to anticipatory governance. This temporal pivot turns registrars into predictive speech arbiters, for whom the calculus of public safety overrides the principle of presumptive openness. The overlooked dynamic is that the measurement of 'dangerous speech' has become detached from speech itself and attached to the social identity of speakers, creating a new vector for exclusion masked as risk management.